Merge branch 'dygraph' into dygraph

File moved

File moved

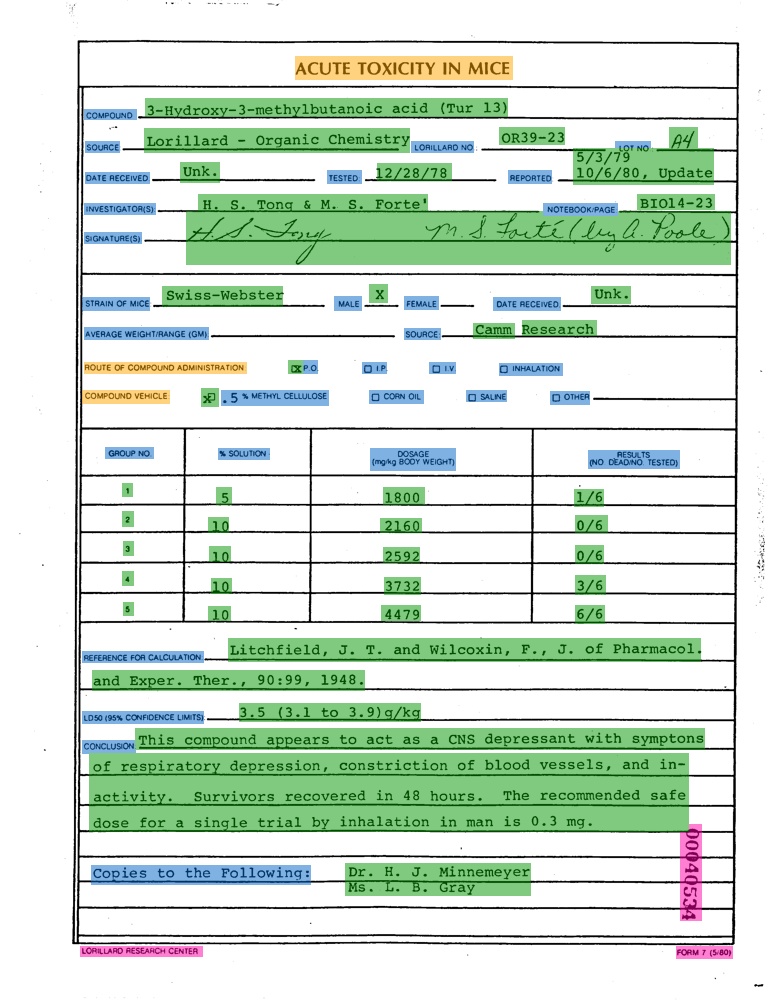

236.7 KB

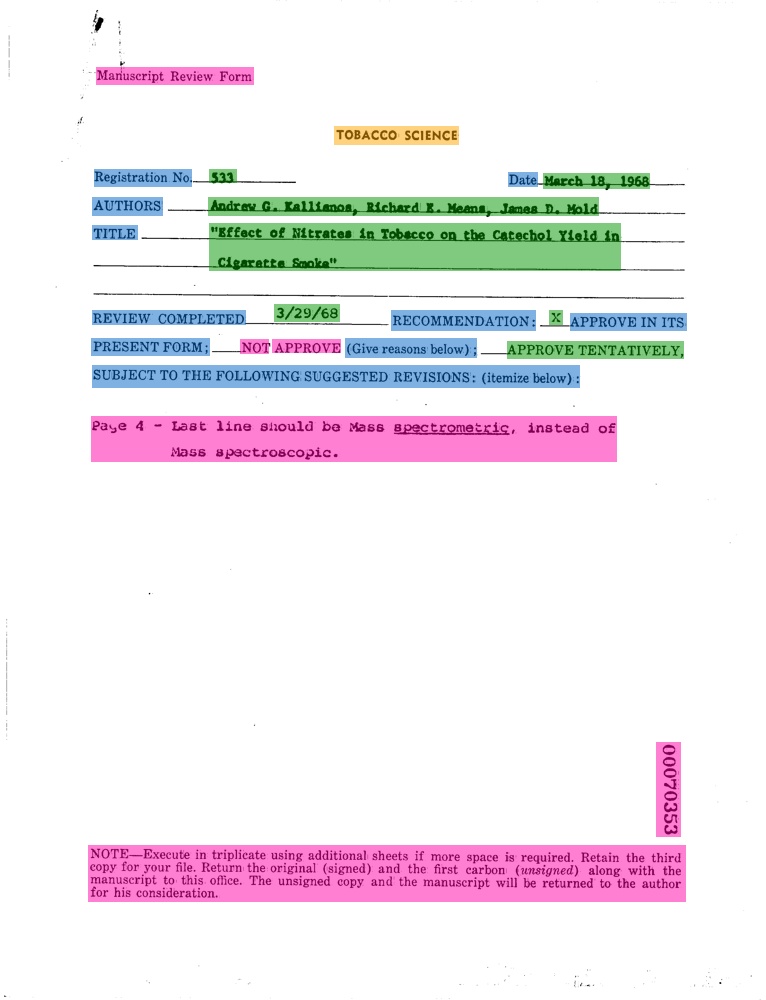

110.2 KB

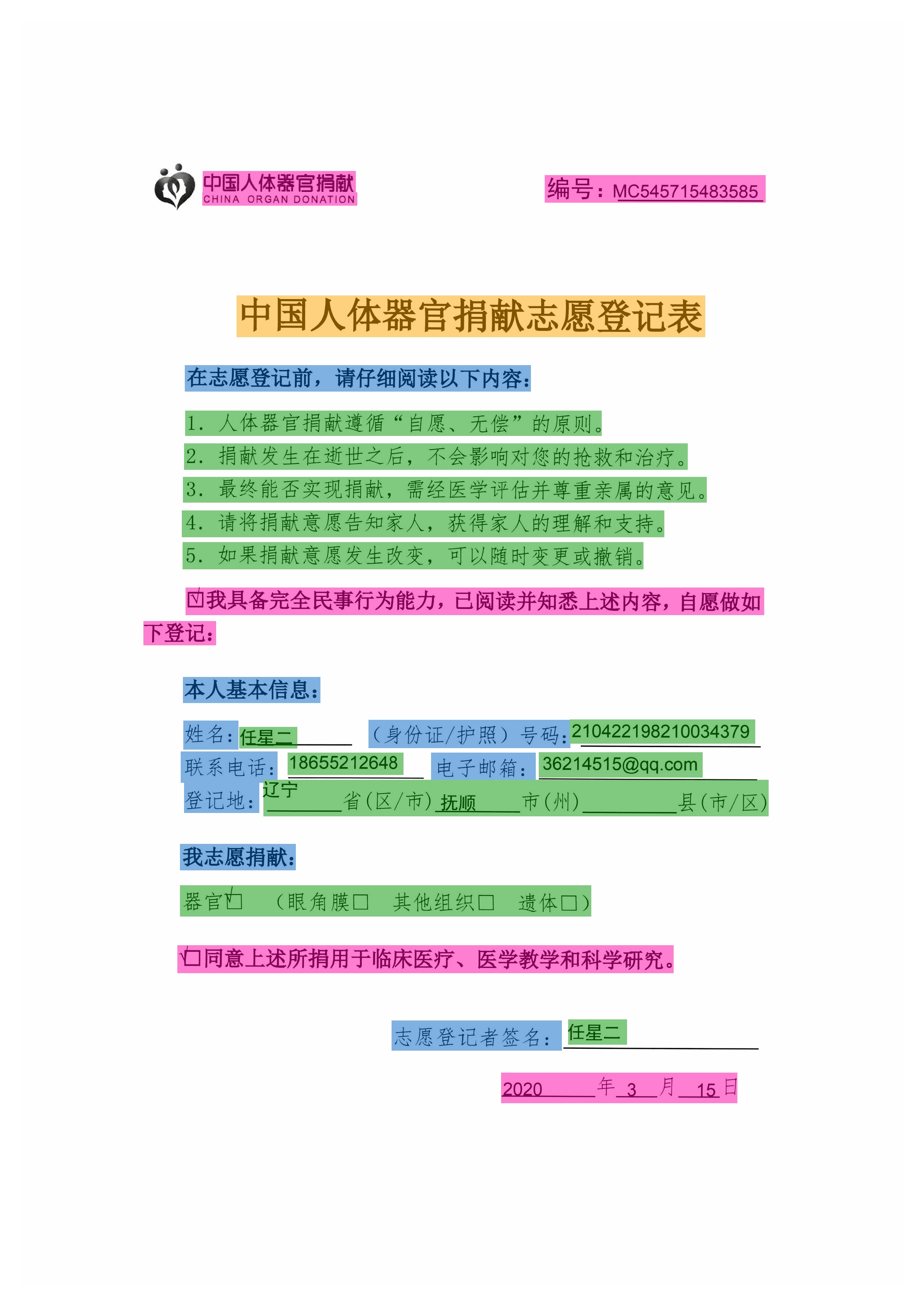

1.3 MB

756.1 KB

doc/doc_ch/algorithm_det_sast.md

0 → 100644

doc/doc_ch/algorithm_rec_sar.md

0 → 100644

doc/doc_ch/algorithm_rec_srn.md

0 → 100644