merge update

doc/design/cpp_data_feeding.md

0 → 100644

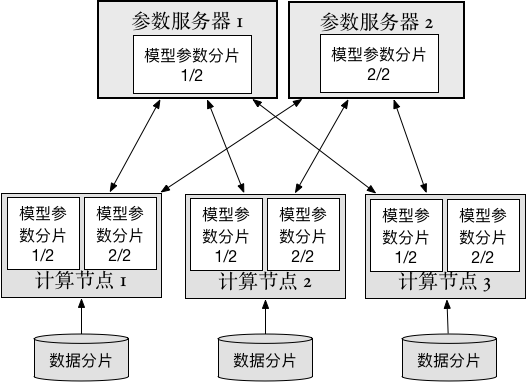

doc/howto/cluster/src/ps_cn.png

0 → 100644

33.1 KB

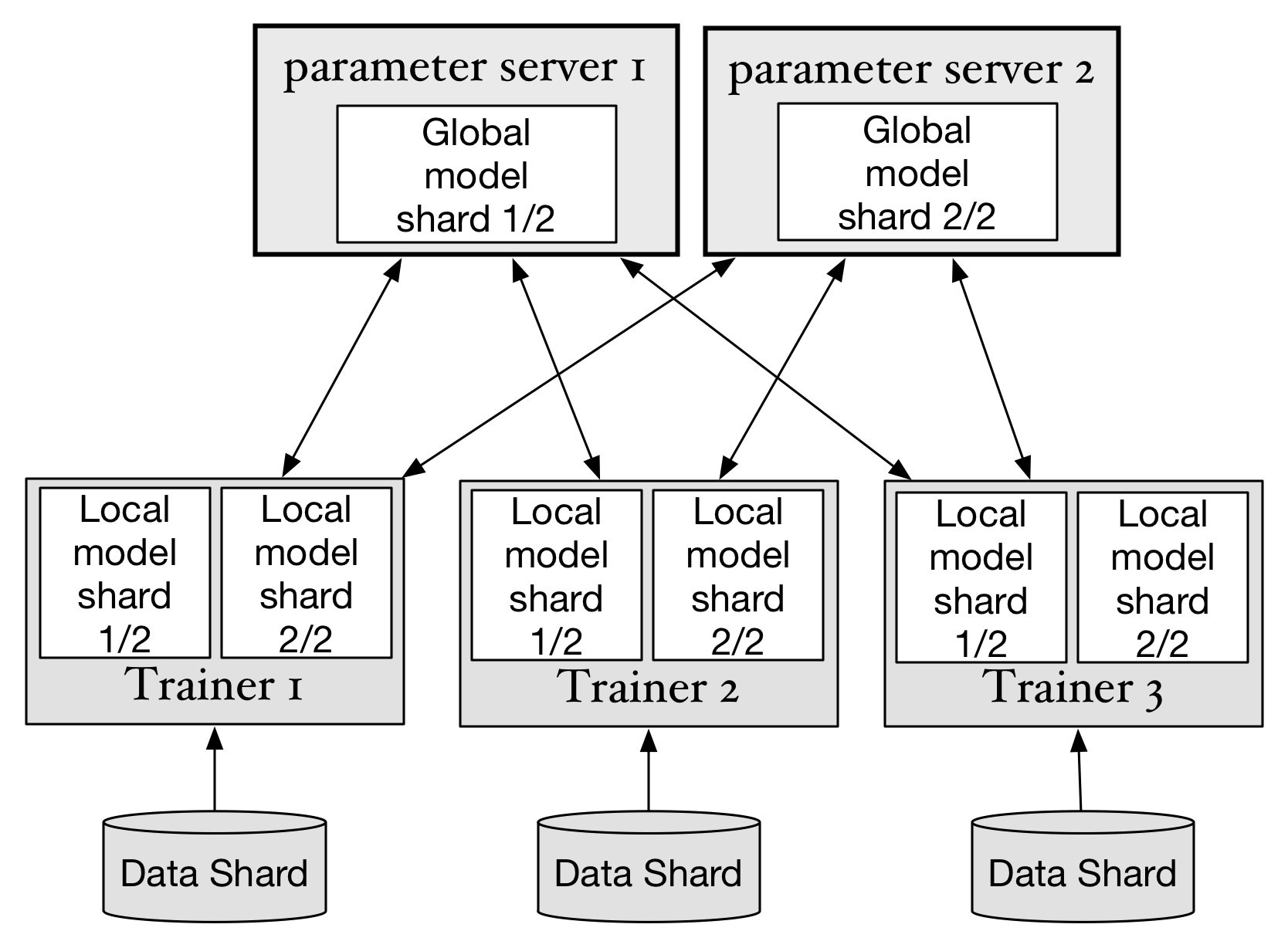

doc/howto/cluster/src/ps_en.png

0 → 100644

141.7 KB

File moved
