Updated the code for the web crawler section

Day66-75/Scrapy的应用03.md

Deleted

100644 → 0

Day66-75/code/douban/scrapy.cfg

0 → 100644

Day66-75/code/example07.py

0 → 100644

Day66-75/code/example08.py

0 → 100644

Day66-75/code/example09.py

0 → 100644

Day66-75/code/example11.py

0 → 100644

Day66-75/code/example12.py

0 → 100644

Day66-75/code/guido.jpg

0 → 100644

59.0 KB

Day66-75/code/tesseract.png

0 → 100644

75.2 KB

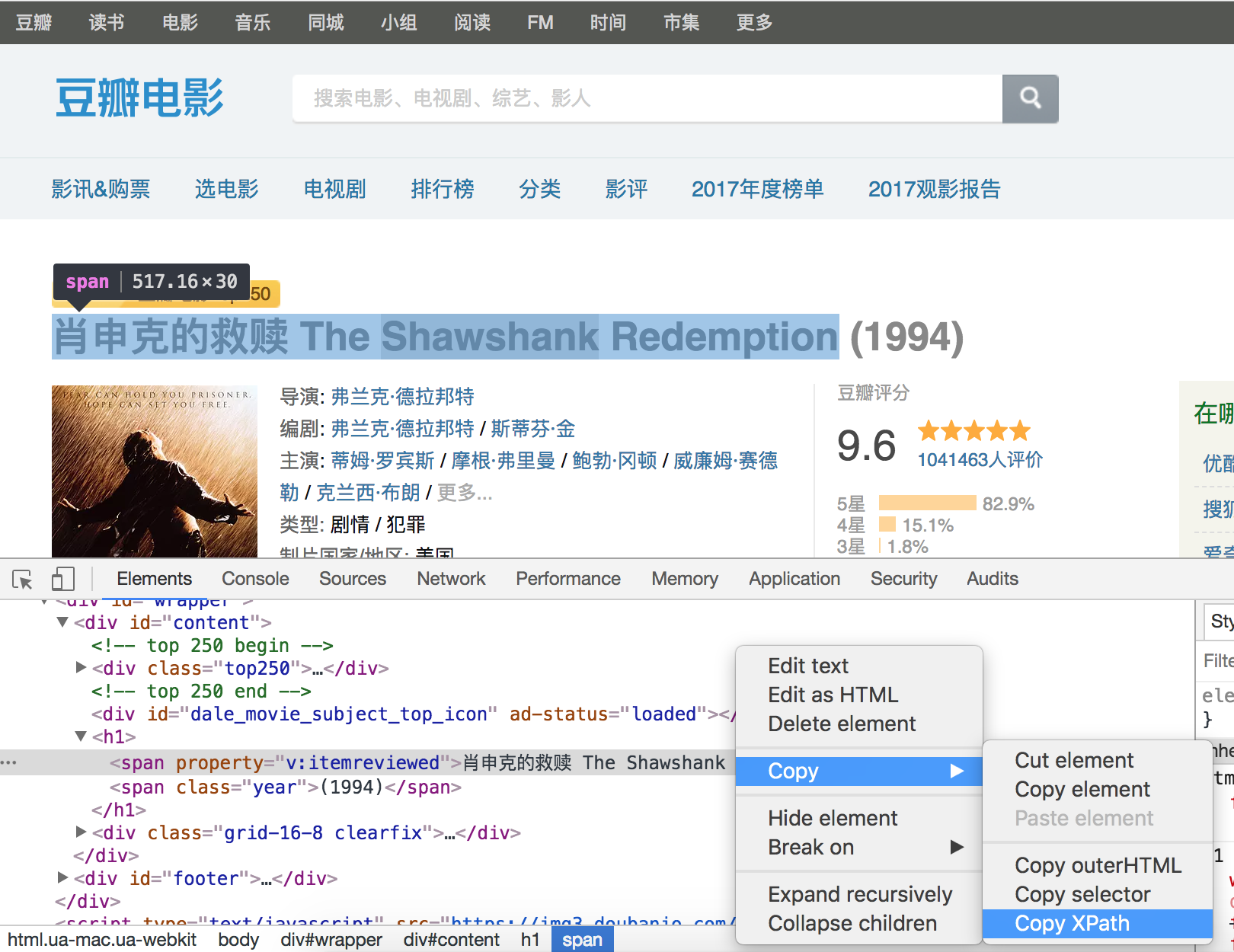

Day66-75/res/douban-xpath.png

0 → 100644

585.2 KB