Merge pull request #2 from PaddlePaddle/develop

update

Showing

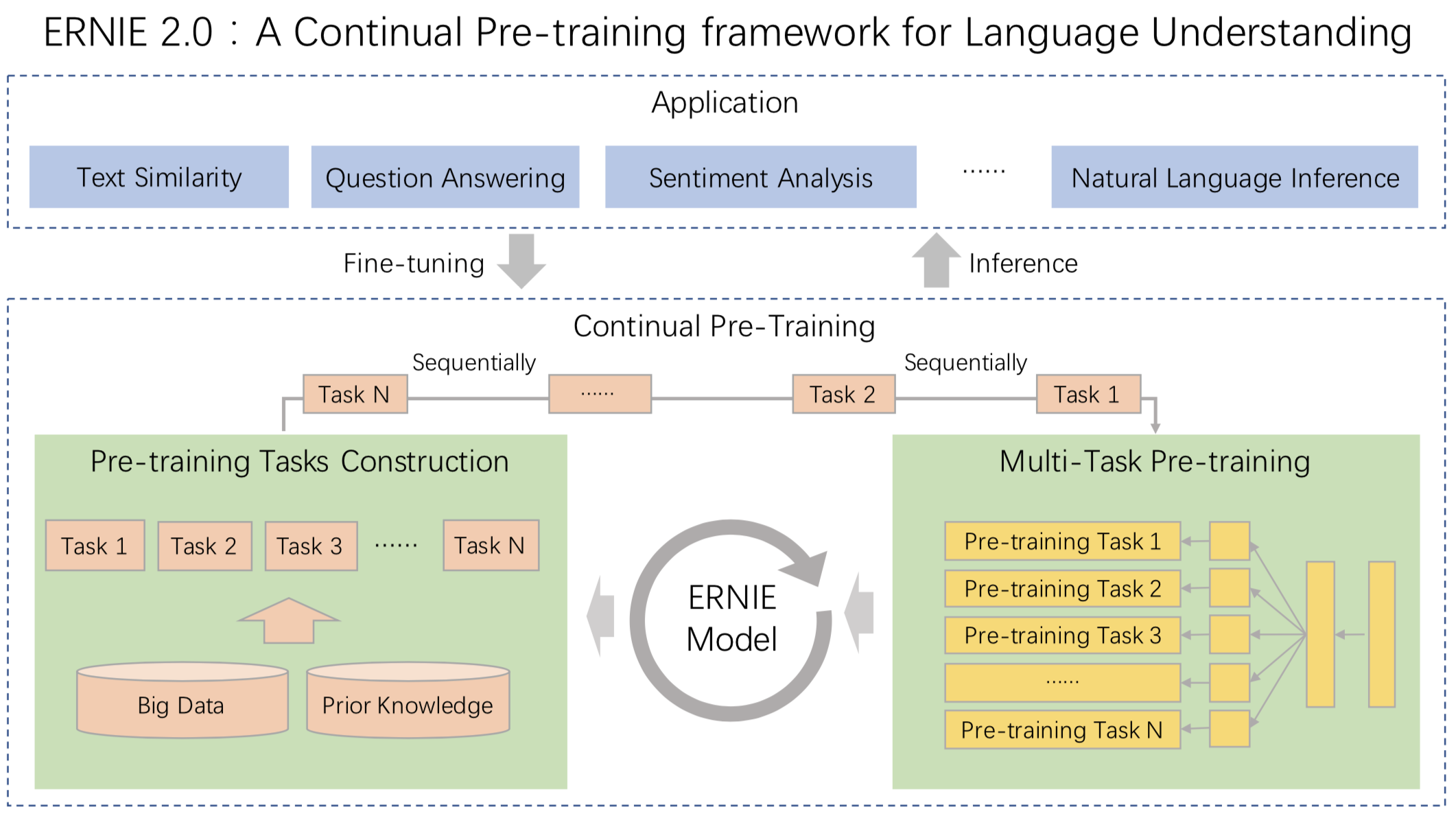

.metas/ernie2.0_arch.png

0 → 100644

303.4 KB

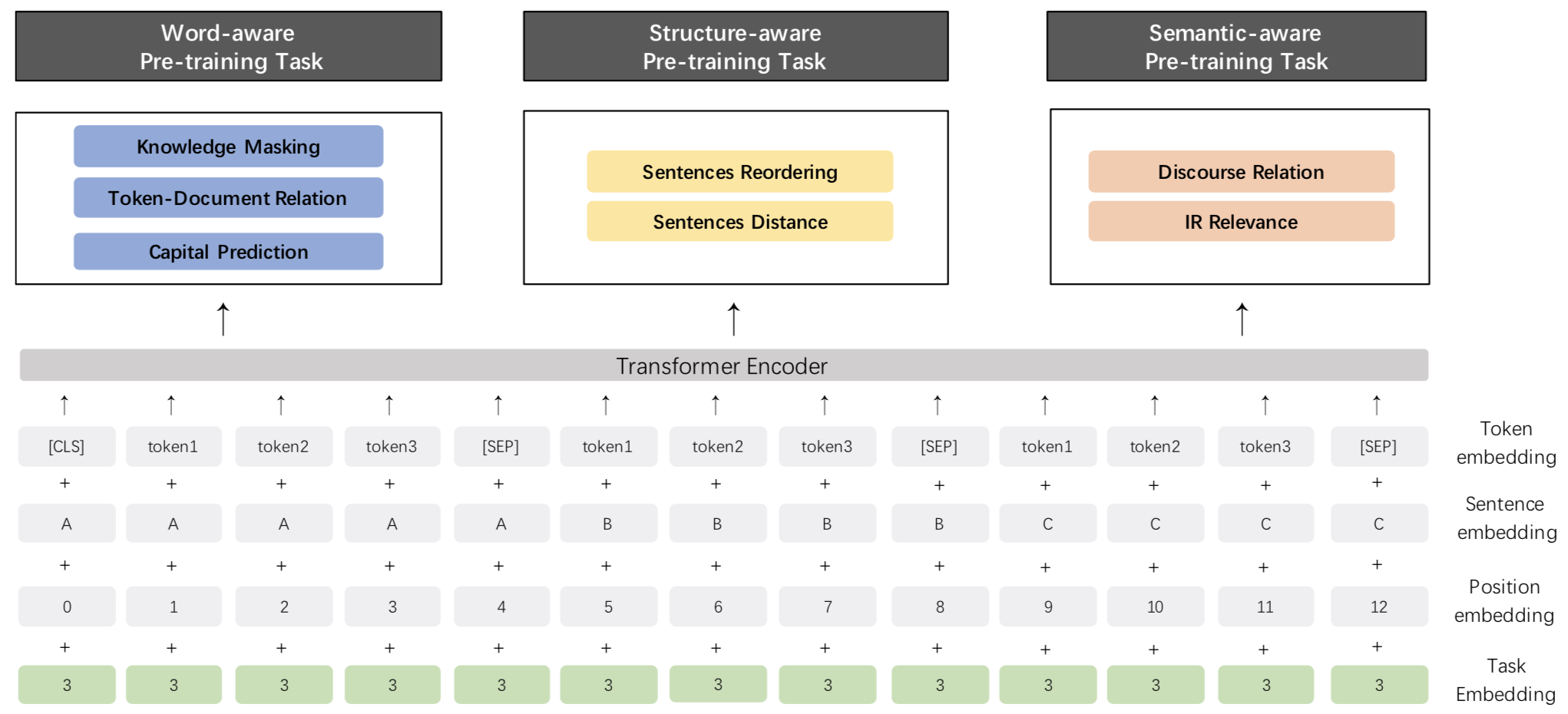

.metas/ernie2.0_model.png

0 → 100644

169.8 KB

此差异已折叠。

BERT/_ce.py

已删除

100644 → 0

BERT/batching.py

已删除

100644 → 0

BERT/convert_params.py

已删除

100644 → 0

此差异已折叠。

此差异已折叠。

文件已删除

文件已删除

BERT/dist_utils.py

已删除

100644 → 0

BERT/inference/CMakeLists.txt

已删除

100644 → 0

BERT/inference/README.md

已删除

100644 → 0

BERT/inference/inference.cc

已删除

100644 → 0

BERT/model/classifier.py

已删除

100644 → 0

BERT/optimization.py

已删除

100644 → 0

BERT/reader/cls.py

已删除

100644 → 0

此差异已折叠。

BERT/reader/pretraining.py

已删除

100644 → 0

BERT/reader/squad.py

已删除

100644 → 0

此差异已折叠。

BERT/run_squad.py

已删除

100644 → 0

BERT/test_local_dist.sh

已删除

100755 → 0

BERT/tokenization.py

已删除

100644 → 0

BERT/train.py

已删除

100644 → 0

BERT/train.sh

已删除

100755 → 0

BERT/utils/args.py

已删除

100644 → 0

此差异已折叠。

BERT/utils/cards.py

已删除

100644 → 0

此差异已折叠。

ELMo/LAC_demo/bilm.py

已删除

100755 → 0

此差异已折叠。

ELMo/LAC_demo/conf/q2b.dic

已删除

100755 → 0

此差异已折叠。

此差异已折叠。

ELMo/LAC_demo/data/tag.dic

已删除

100755 → 0

此差异已折叠。

此差异已折叠。

ELMo/LAC_demo/network.py

已删除

100755 → 0

此差异已折叠。

ELMo/LAC_demo/reader.py

已删除

100755 → 0

此差异已折叠。

ELMo/LAC_demo/run.sh

已删除

100755 → 0

此差异已折叠。

ELMo/LAC_demo/train.py

已删除

100755 → 0

此差异已折叠。

ELMo/README.md

100755 → 100644

此差异已折叠。

ELMo/args.py

已删除

100755 → 0

此差异已折叠。

ELMo/data.py

已删除

100755 → 0

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

ELMo/lm_model.py

已删除

100755 → 0

此差异已折叠。

ELMo/train.py

已删除

100755 → 0

此差异已折叠。

此差异已折叠。

ERNIE/finetune/__init__.py

已删除

100644 → 0

ERNIE/model/__init__.py

已删除

100644 → 0

ERNIE/reader/__init__.py

已删除

100644 → 0

ERNIE/utils/__init__.py

已删除

100644 → 0

ERNIE/utils/fp16.py

已删除

100644 → 0

此差异已折叠。

ERNIE/utils/init.py

已删除

100644 → 0

此差异已折叠。

此差异已折叠。

README.zh.md

0 → 100644

此差异已折叠。

此差异已折叠。

config/vocab_en.txt

0 → 100644

此差异已折叠。

finetune/mrc.py

0 → 100644

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

script/en_glue/preprocess/cvt.sh

0 → 100644

此差异已折叠。

script/en_glue/preprocess/mnli.py

0 → 100644

此差异已折叠。

script/en_glue/preprocess/qnli.py

0 → 100644

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

此差异已折叠。

utils/cmrc2018_eval.py

0 → 100644

此差异已折叠。