Merge branch 'dygraph' into dygraph

4.1 KB

4.1 KB

configs/table/table_mv3.yml

0 → 100755

deploy/cpp_infer/tools/run.sh

Deleted

100755 → 0

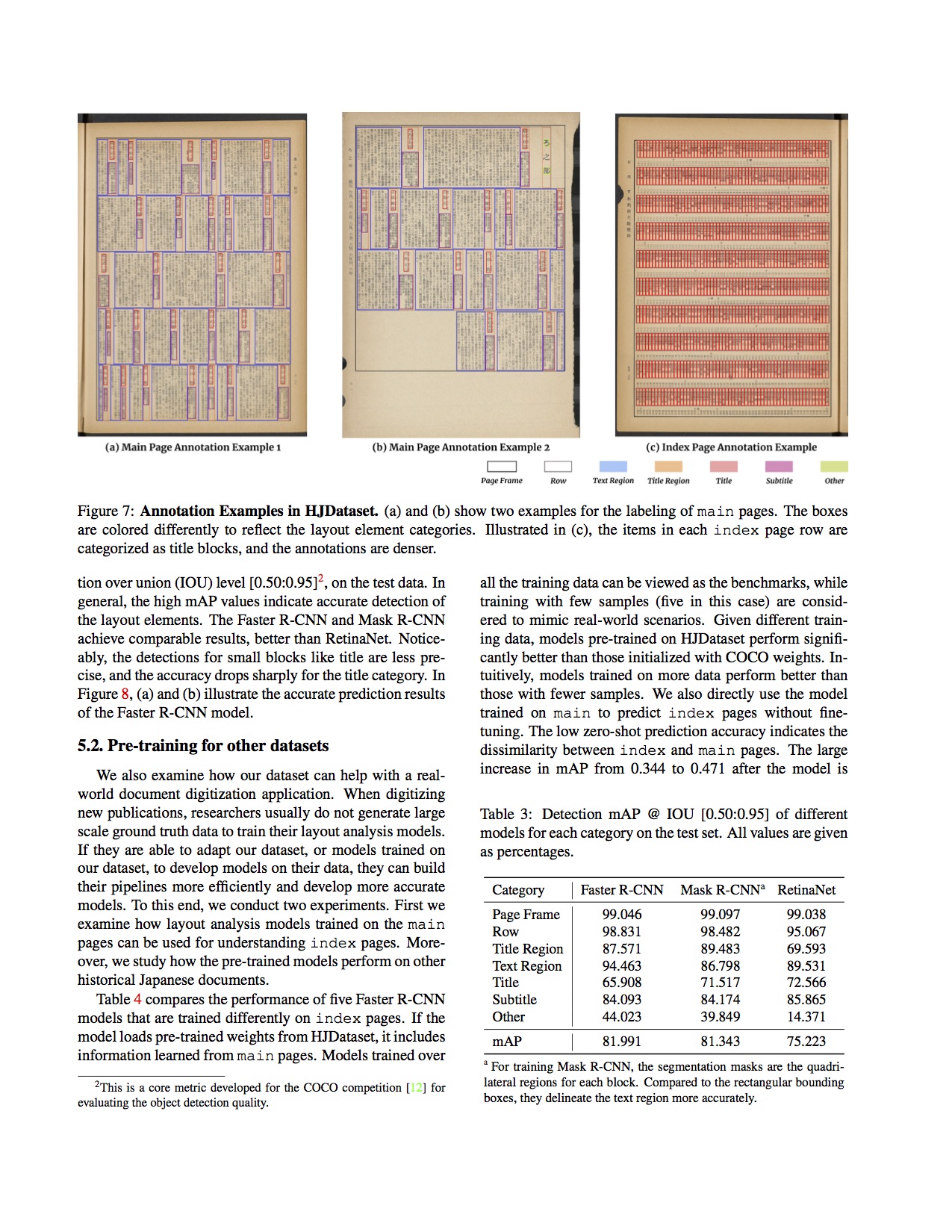

doc/table/1.png

0 → 100644

262.8 KB

doc/table/layout.jpg

0 → 100644

671.5 KB

doc/table/paper-image.jpg

0 → 100644

671.5 KB

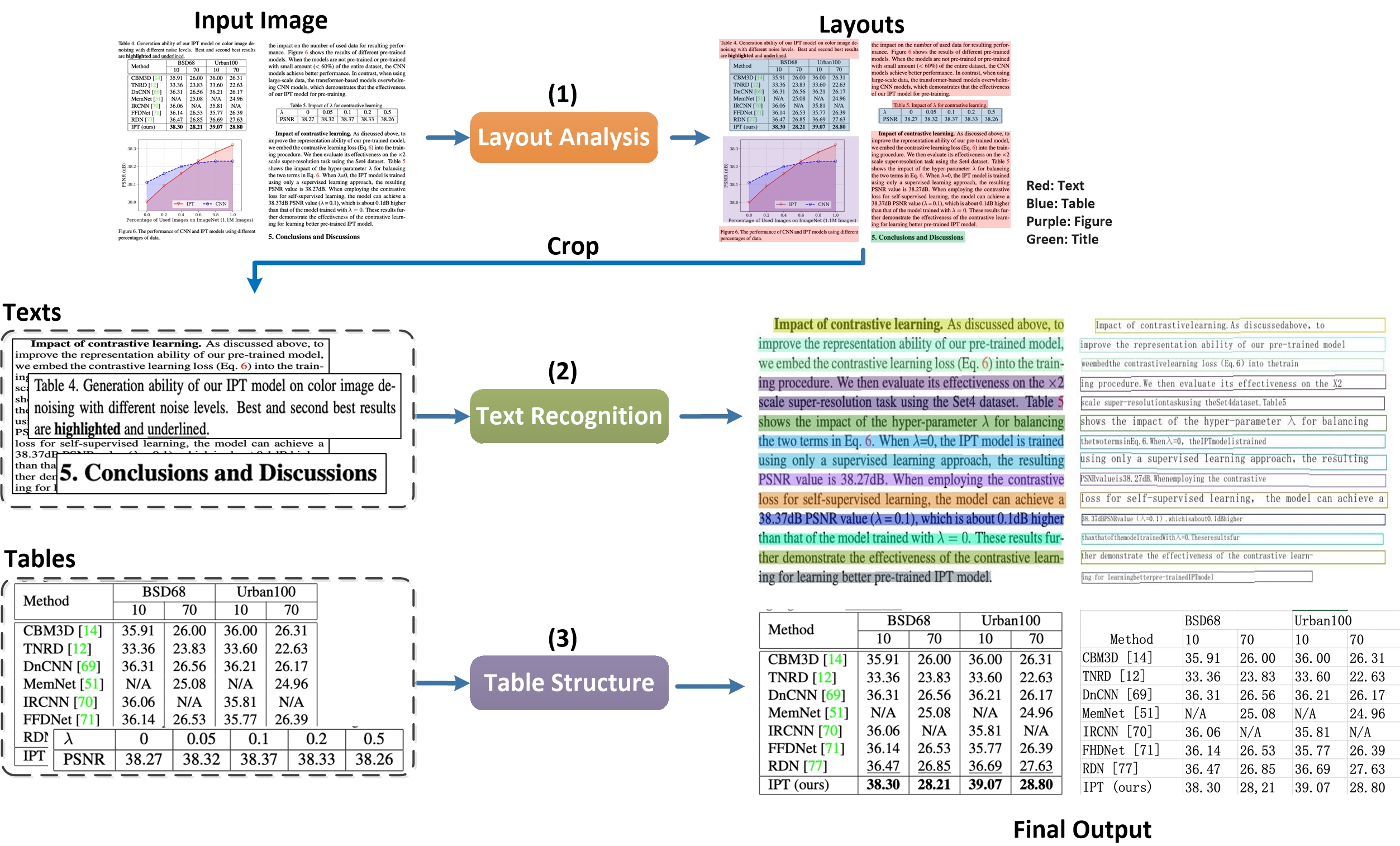

doc/table/pipeline.jpg

0 → 100644

1.5 MB

doc/table/pipeline_en.jpg

0 → 100644

1.4 MB

doc/table/ppstructure.GIF

0 → 100644

2.5 MB

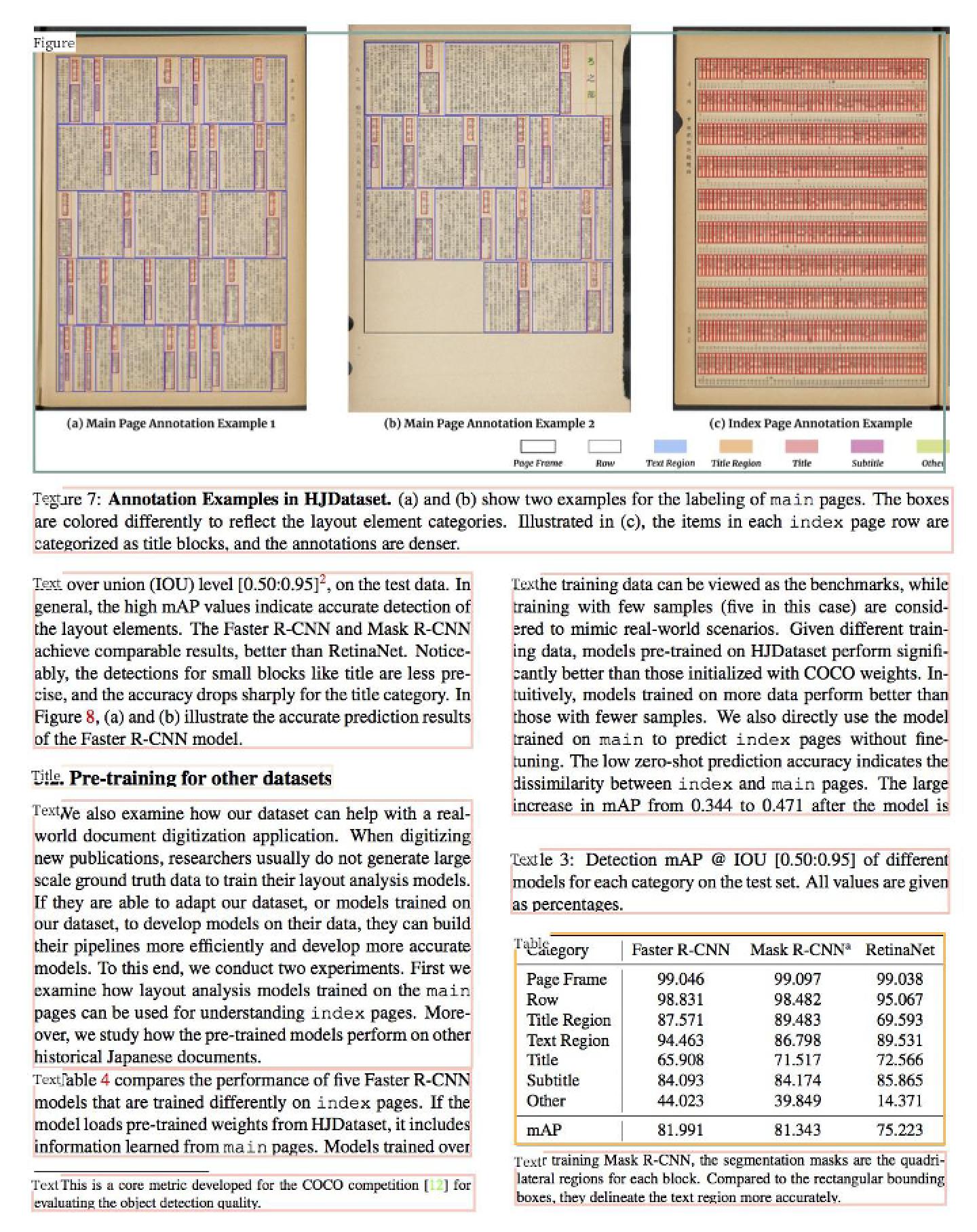

doc/table/result_all.jpg

0 → 100644

521.0 KB

doc/table/result_text.jpg

0 → 100644

146.3 KB

doc/table/table.jpg

0 → 100644

24.1 KB

doc/table/tableocr_pipeline.jpg

0 → 100644

551.7 KB

415.7 KB

ppocr/data/imaug/copy_paste.py

0 → 100644

ppocr/data/pubtab_dataset.py

0 → 100644

This diff is collapsed.

ppocr/losses/table_att_loss.py

0 → 100644

This diff is collapsed.

ppocr/metrics/table_metric.py

0 → 100644

This diff is collapsed.

ppocr/modeling/necks/table_fpn.py

0 → 100644

This diff is collapsed.

ppocr/utils/dict/table_dict.txt

0 → 100644

This diff is collapsed.

ppocr/utils/network.py

0 → 100644

This diff is collapsed.

ppstructure/__init__.py

0 → 100644

This diff is collapsed.

ppstructure/table/__init__.py

0 → 100644

This diff is collapsed.

ppstructure/table/eval_table.py

0 → 100755

This diff is collapsed.

ppstructure/table/matcher.py

0 → 100755

This diff is collapsed.

ppstructure/utility.py

0 → 100644

This diff is collapsed.

tests/ocr_det_params.txt

0 → 100644

This diff is collapsed.

tests/ocr_rec_params.txt

0 → 100644

This diff is collapsed.

tests/prepare.sh

0 → 100644

This diff is collapsed.

tests/readme.md

0 → 100644

This diff is collapsed.

tests/test.sh

0 → 100644

This diff is collapsed.

tools/infer_table.py

0 → 100644

This diff is collapsed.