Merge pull request #5776 from typhoonzero/update_refactor_dist_train_doc

Update design of dist train refactor

File moved

File moved

File moved

File moved

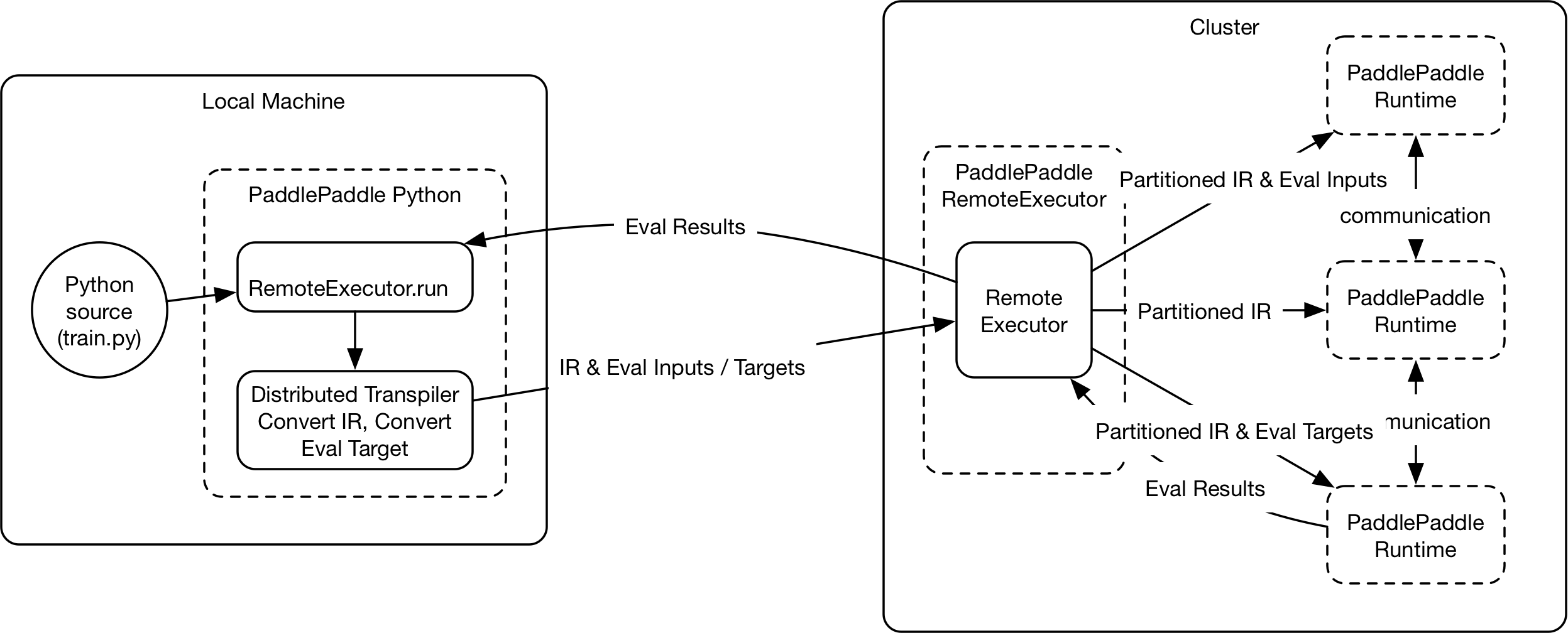

189.2 KB

File moved

File moved

File added

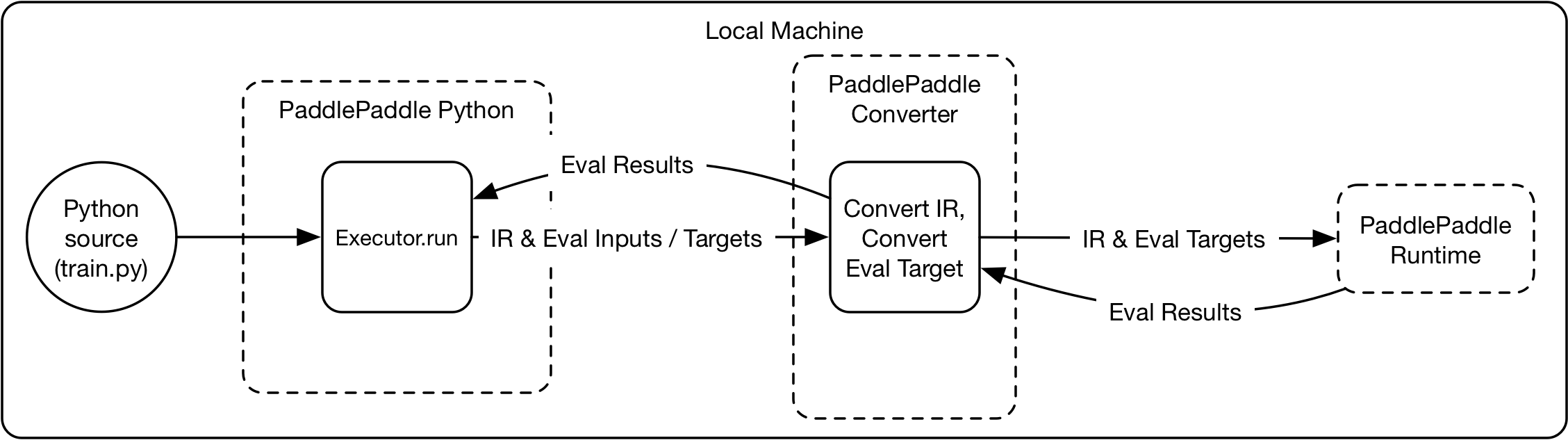

102.5 KB

File moved

File moved

File moved

File moved

File moved

File added

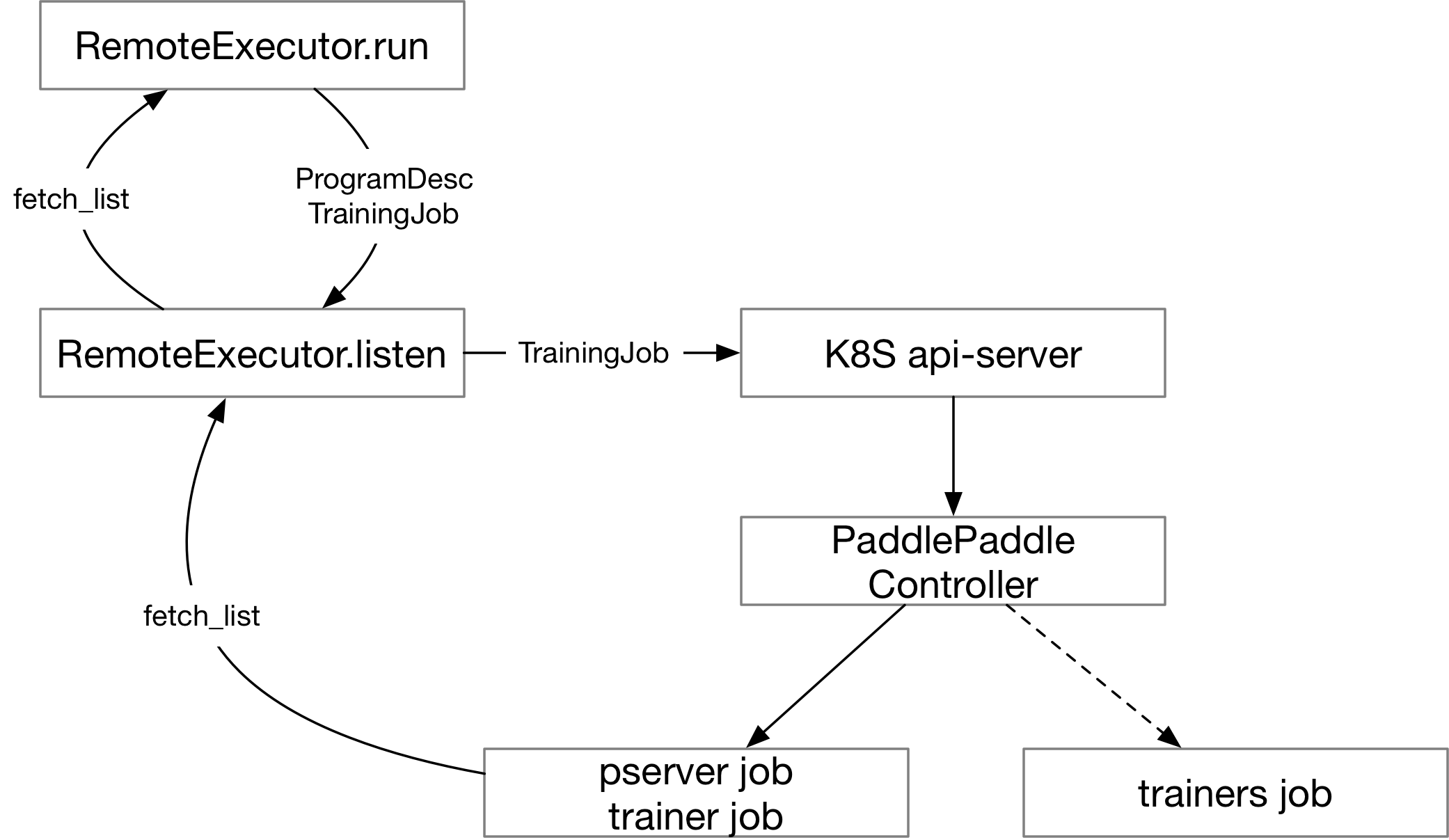

134.5 KB

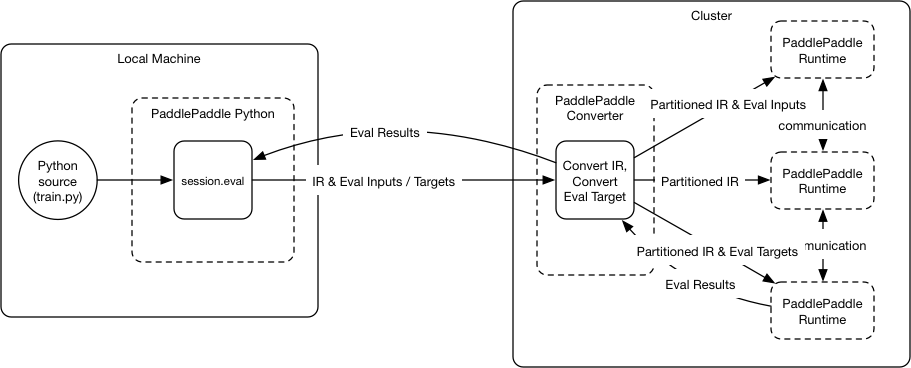

46.5 KB

File deleted

28.3 KB