Merge remote-tracking branch 'upstream/develop' into prune_impl


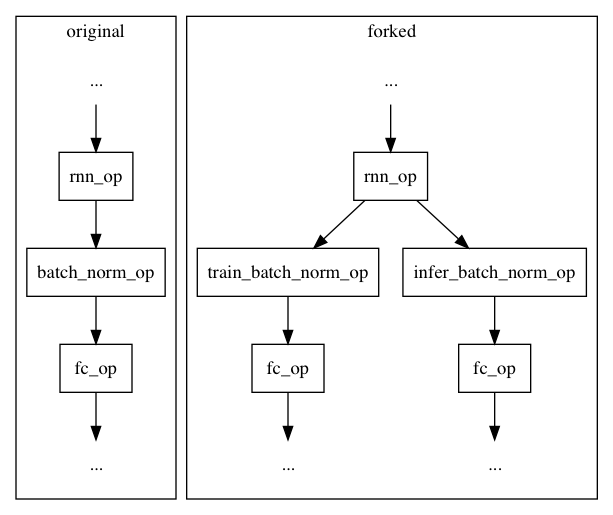

doc/design/executor.md

0 → 100644

31.5 KB

45.0 KB

1.1 KB

989 bytes

1.6 KB

doc/design/infer_var_type.md

0 → 100644

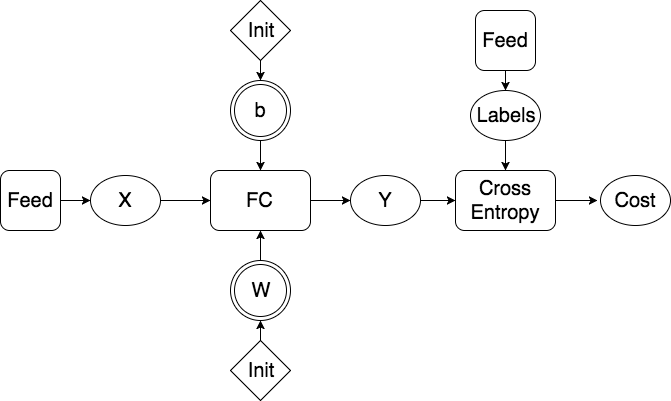

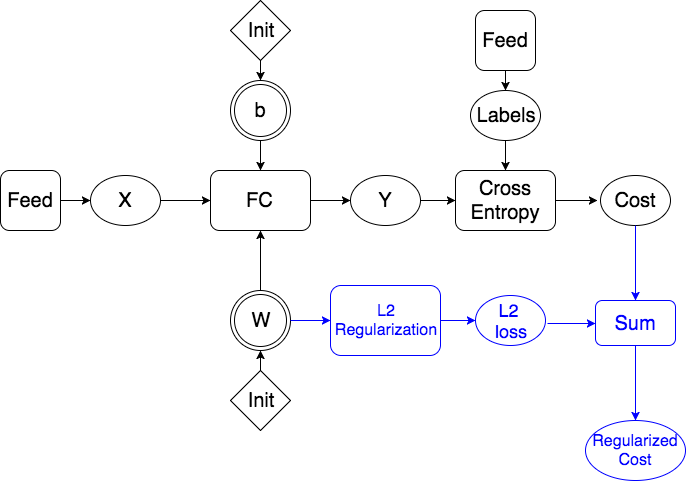

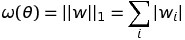

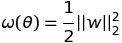

doc/design/regularization.md

0 → 100644

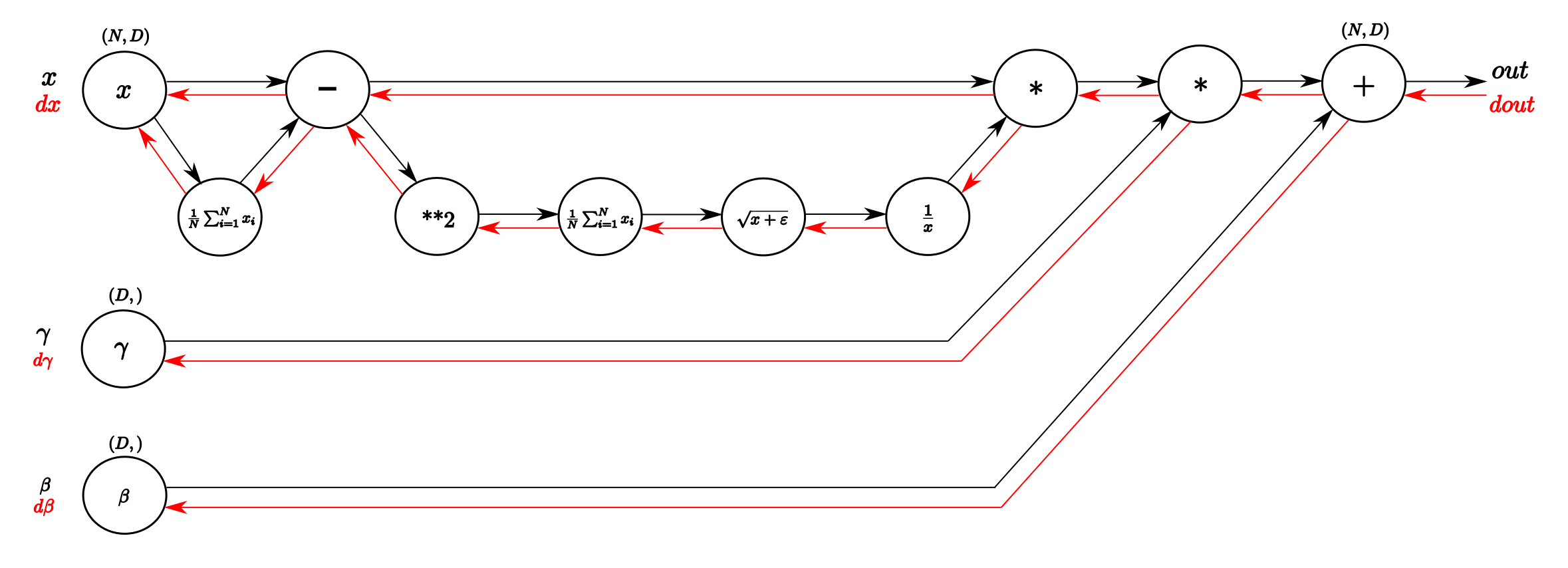

paddle/operators/batch_norm_op.md

0 → 100644

23.3 KB

161.3 KB

paddle/operators/math/matmul.h

0 → 100644

paddle/operators/matmul_op.cc

0 → 100644

paddle/operators/matmul_op.cu

0 → 100644

paddle/operators/matmul_op.h

0 → 100644

paddle/operators/momentum_op.cc

0 → 100644

This diff is collapsed.

paddle/operators/momentum_op.cu

0 → 100644

This diff is collapsed.

paddle/operators/momentum_op.h

0 → 100644

This diff is collapsed.

paddle/operators/proximal_gd_op.h

0 → 100644

This diff is collapsed.
