Merge pull request #3862 from wangkuiyi/update_graph_construction_design_doc

Update graph construction design doc

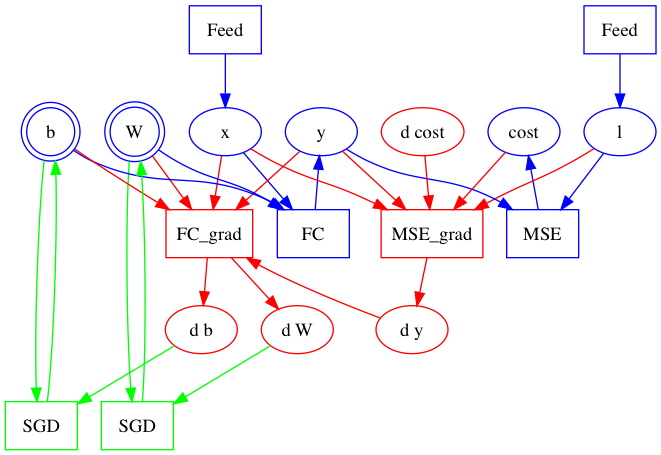

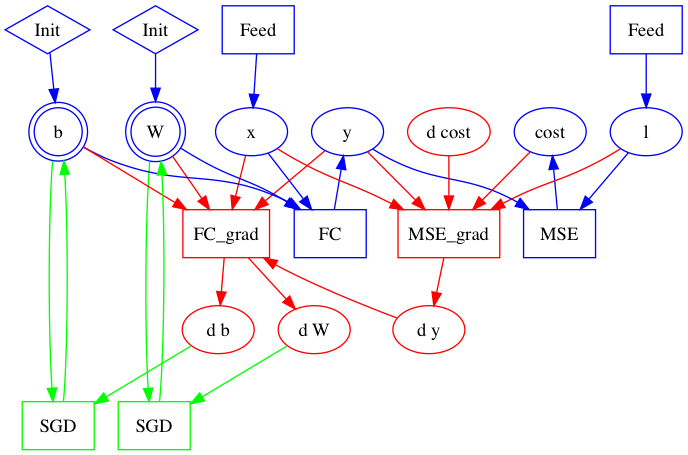

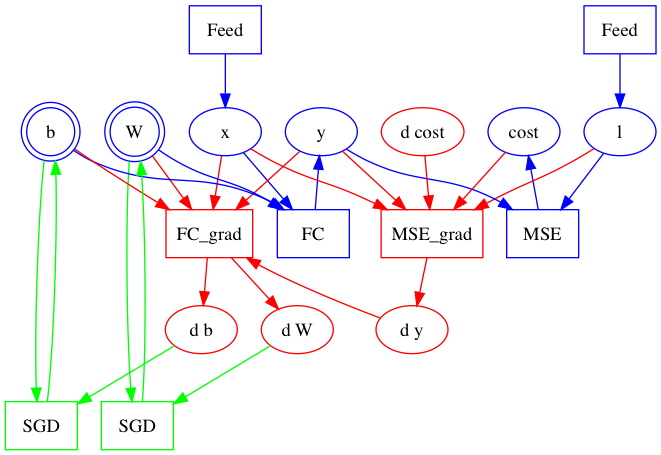

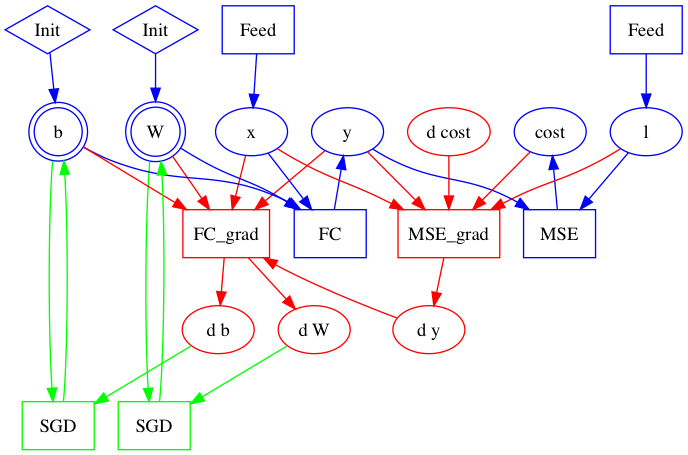

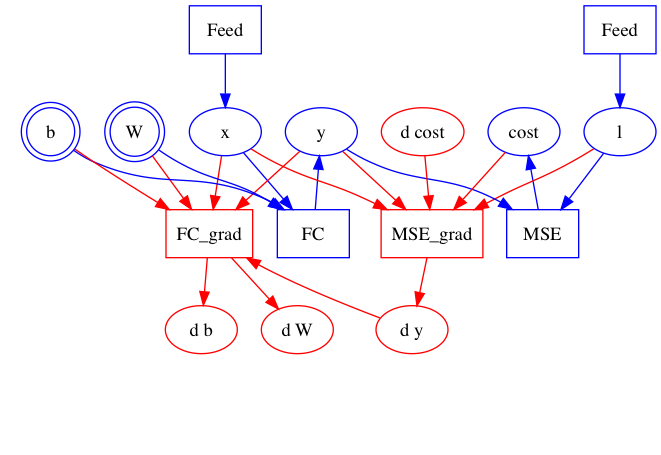

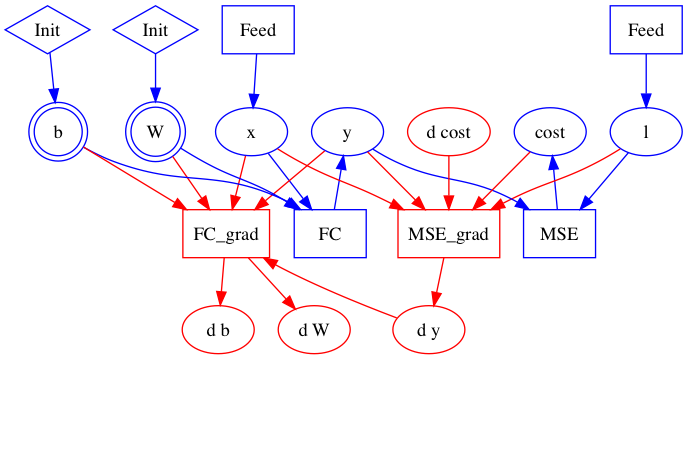

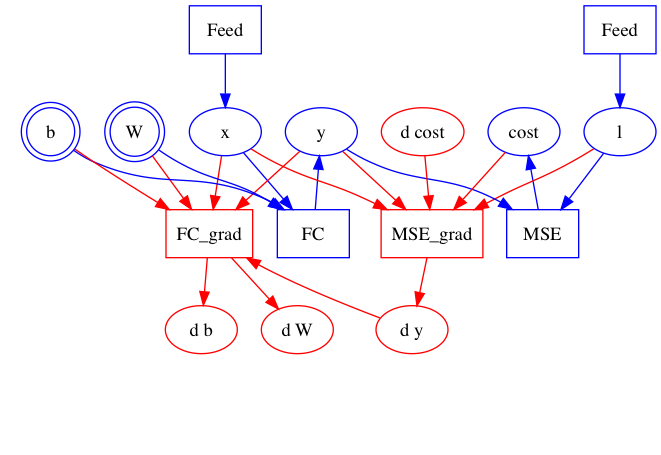

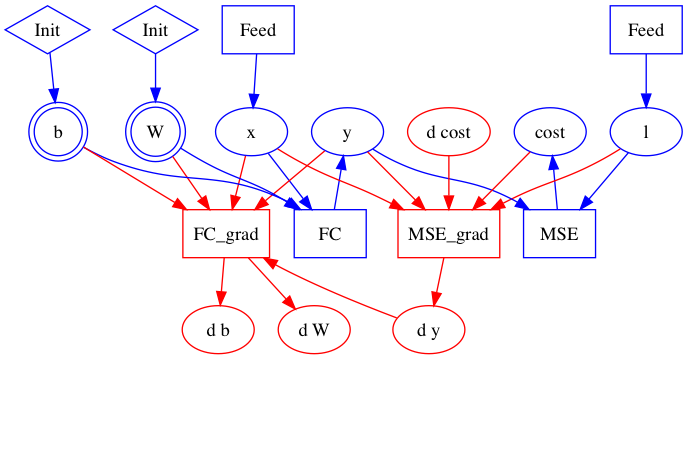

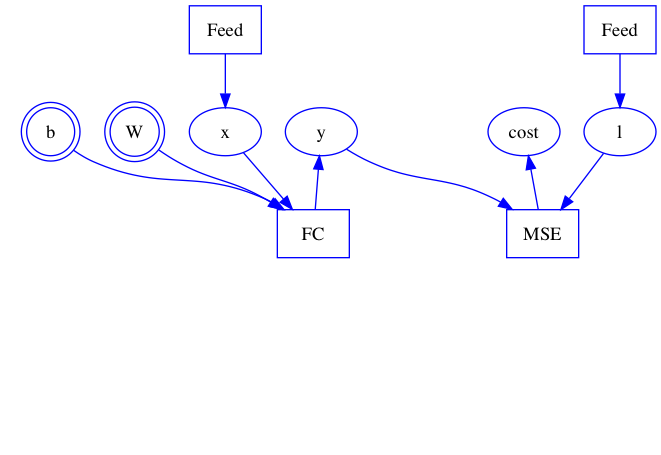

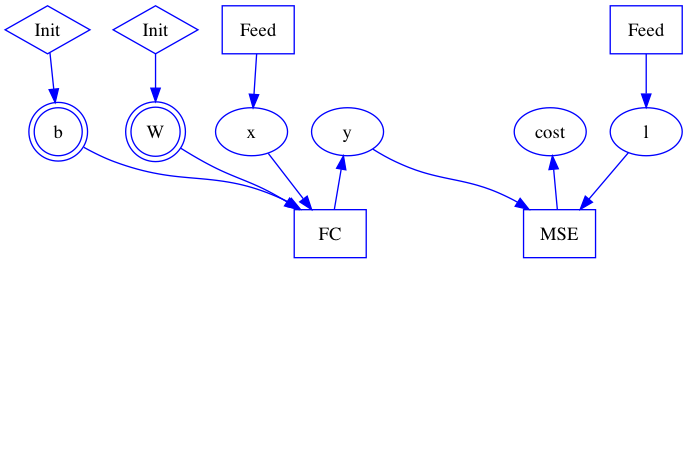

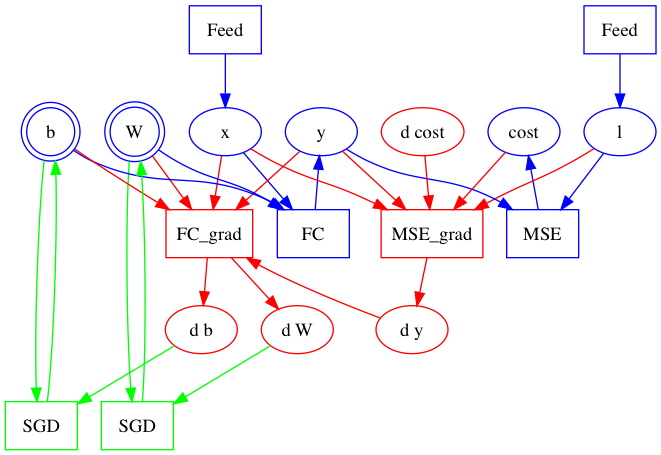

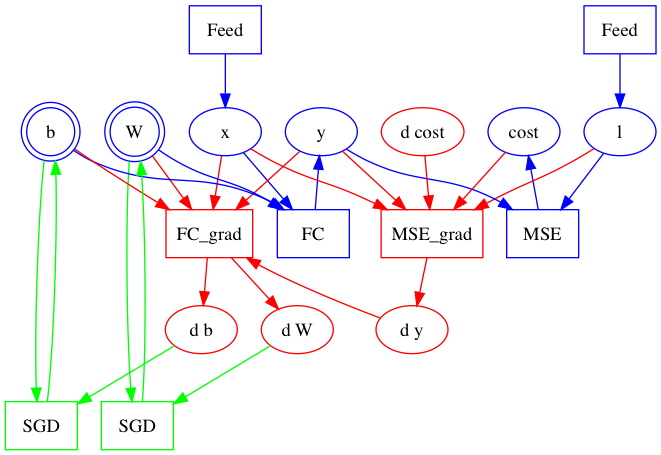

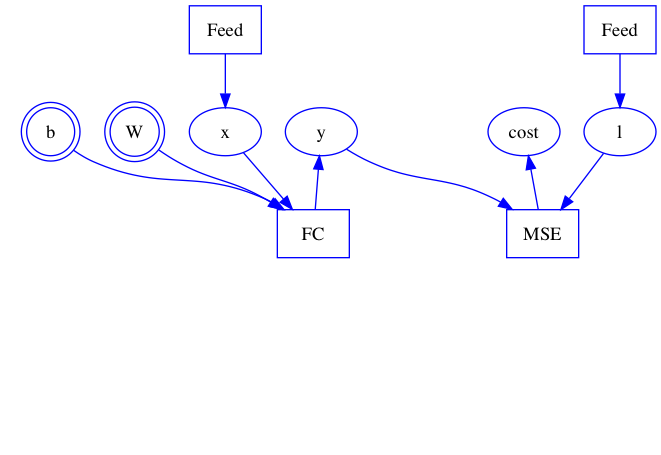

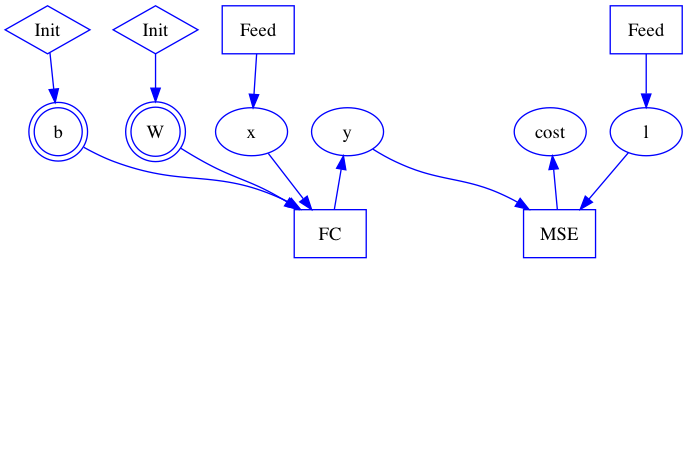

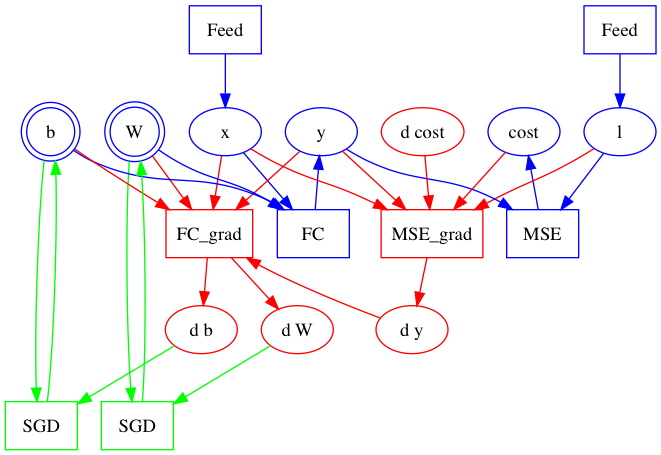

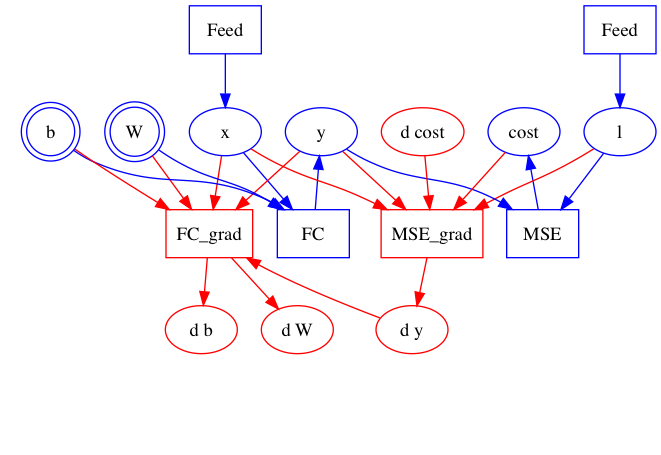
