Revise docs (#2566)

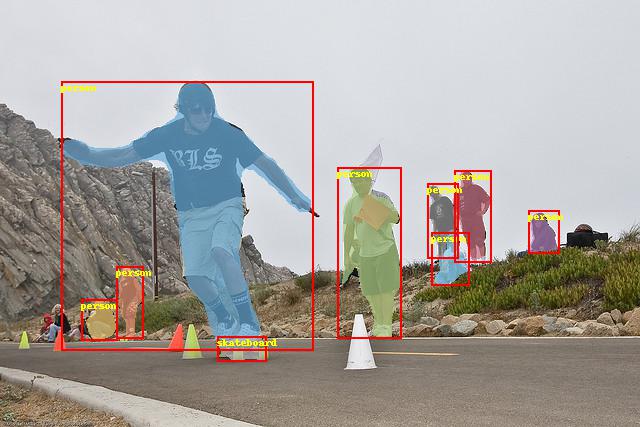

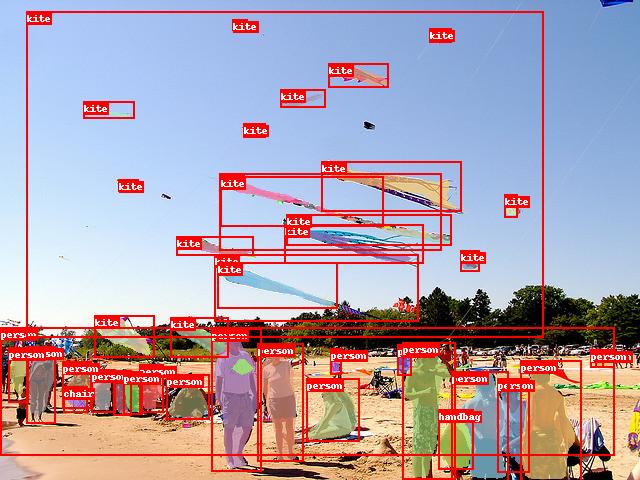

* Revise `README.md`
* Revise `GETTING_STARTED.md`
* Revise `INSTALL.md`
* Add `CONFIG.md`
* Fix RetinaNet backbones in README
* Fix cascade backbones in README
* Fix capitalization
* Replace demo images and update visualize funcall
* Revise `DATA.md`
* Fix link to `DATA.md`
* Fix capitalization
