Merge pull request #141 from Superjom/dssm

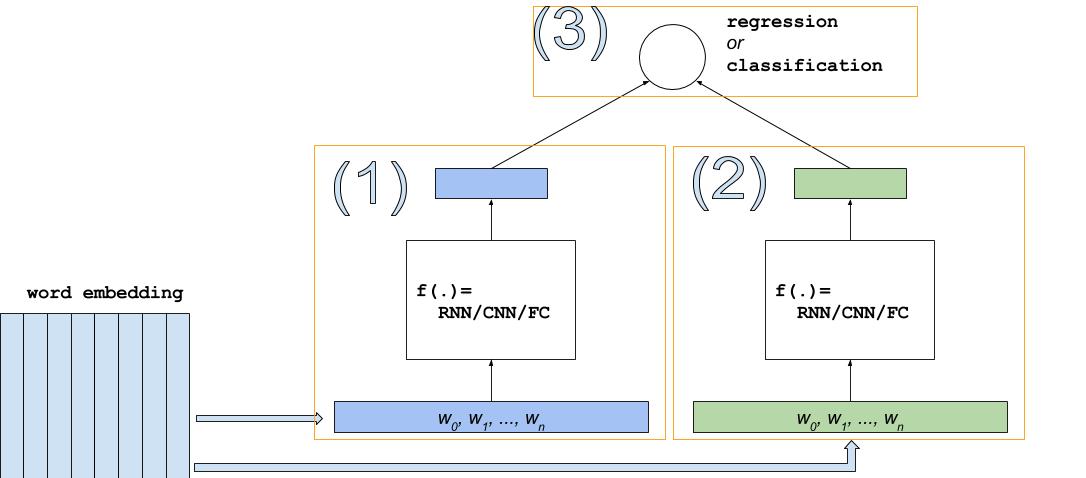

add a DSSM example.

dssm/README.md

0 → 100644

dssm/data/classification/test.txt

0 → 100644

dssm/data/rank/test.txt

0 → 100644

dssm/data/rank/train.txt

0 → 100644

dssm/data/vocab.txt

0 → 100644

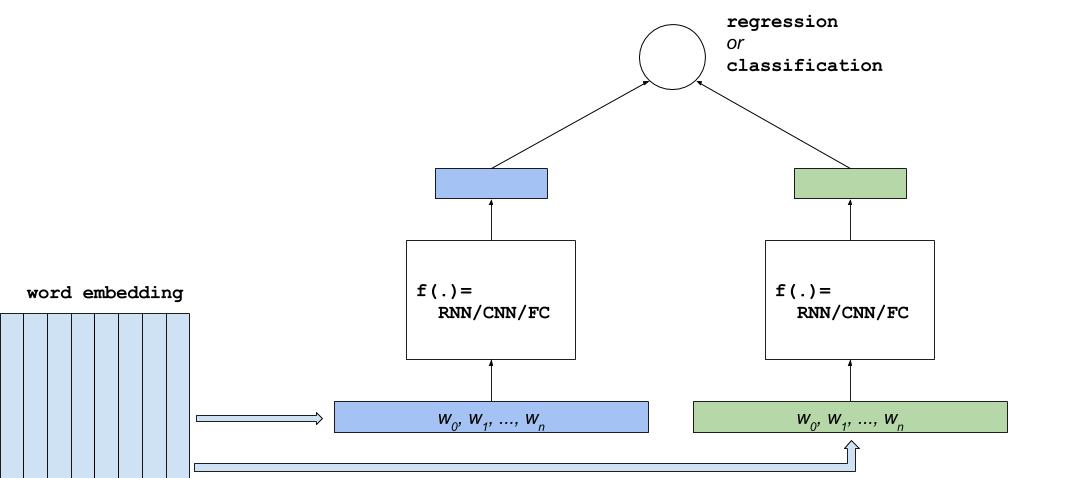

dssm/images/dssm.jpg

0 → 100644

33.0 KB

dssm/images/dssm.png

0 → 100644

210.2 KB

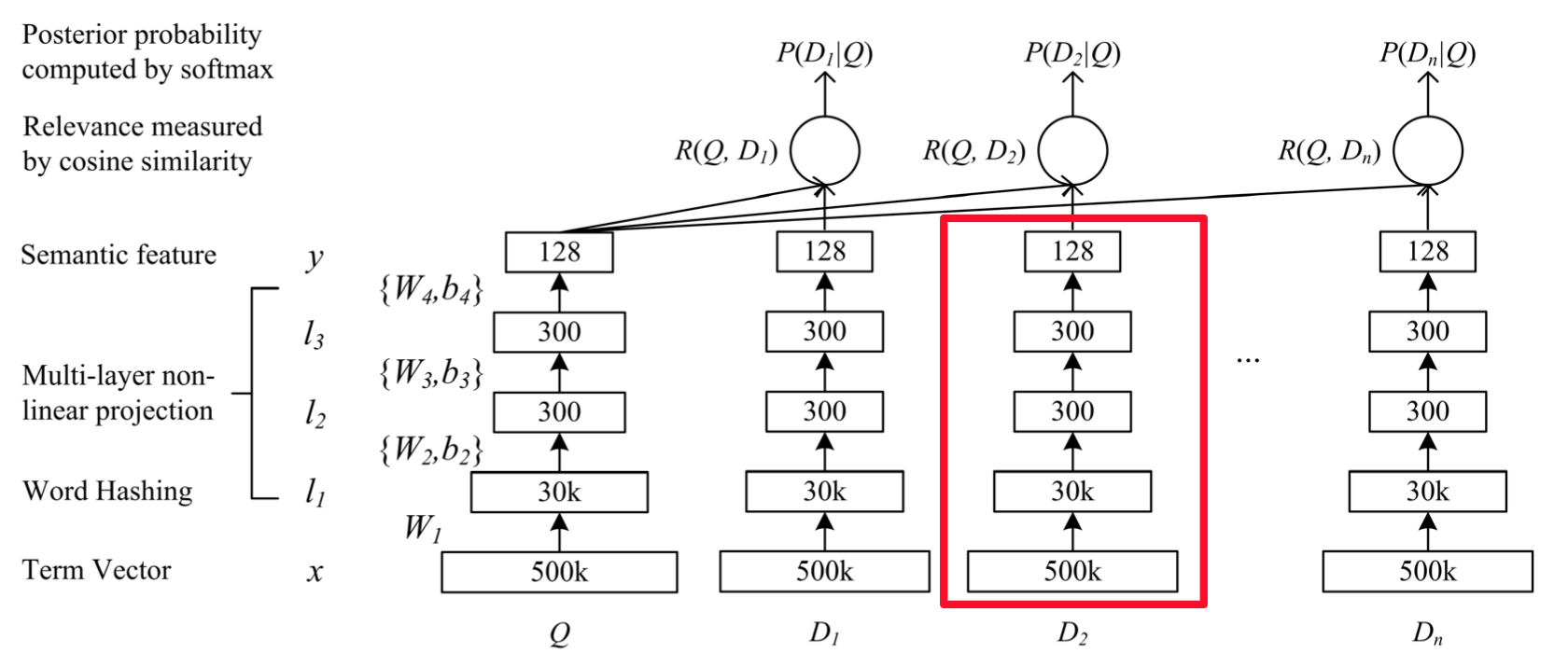

dssm/images/dssm2.jpg

0 → 100644

45.2 KB

dssm/images/dssm2.png

0 → 100644

80.9 KB

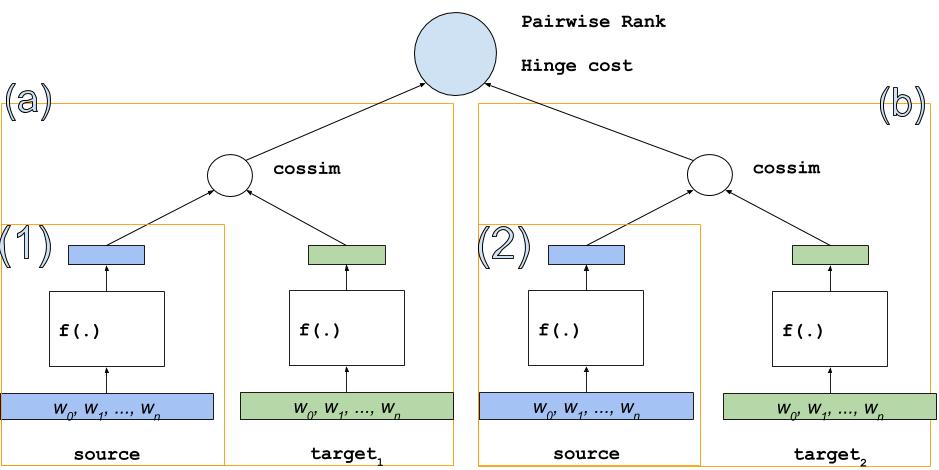

dssm/images/dssm3.jpg

0 → 100644

43.6 KB

dssm/infer.py

0 → 100644

dssm/network_conf.py

0 → 100644

dssm/reader.py

0 → 100644

dssm/train.py

0 → 100644

dssm/utils.py

0 → 100644