Add gan for book repo (#695)

* add gan for book * fix some typo * Update README.cn.md * modified html * fix some description * optimized the readme * fixed reference

Showing

09.gan/README.cn.md

0 → 100644

09.gan/dc_gan.py

0 → 100644

09.gan/image/dcgan_demo.png

0 → 100644

164.0 KB

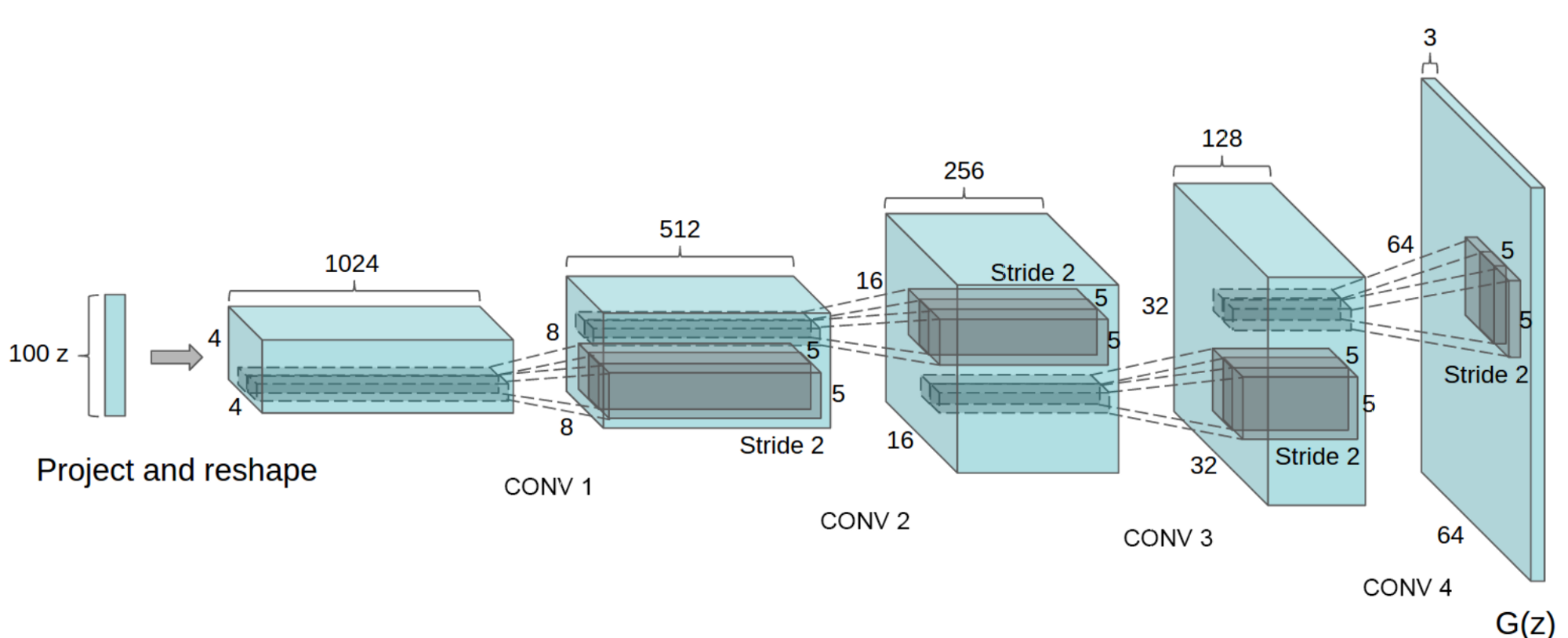

09.gan/image/dcgan_g.png

0 → 100644

231.8 KB

09.gan/image/process.png

0 → 100644

131.0 KB

09.gan/index.cn.html

0 → 100644

此差异已折叠。

09.gan/network.py

0 → 100644

09.gan/utility.py

0 → 100644