merge

Showing

core/general-server/op/general_dist_kv_infer_op.cpp

100755 → 100644

core/sdk-cpp/include/macros.h

0 → 100644

doc/INFERNCE_TO_SERVING.md

0 → 100644

doc/INFERNCE_TO_SERVING_CN.md

0 → 100644

doc/NEW_WEB_SERVICE.md

0 → 100644

doc/NEW_WEB_SERVICE_CN.md

0 → 100644

| W: | H:

| W: | H:

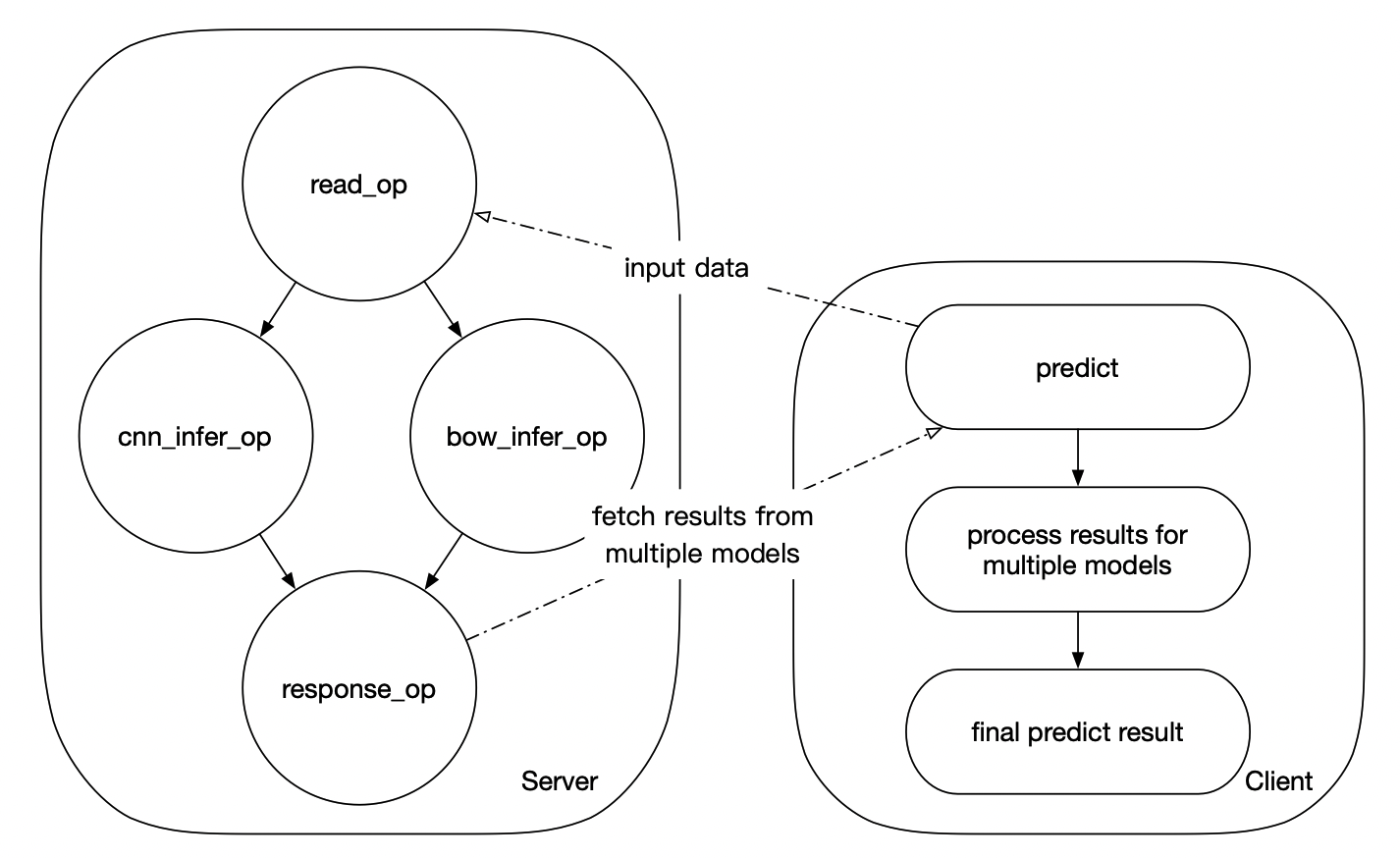

doc/complex_dag.png

0 → 100644

396.8 KB

doc/model_ensemble_example.png

0 → 100644

341.4 KB

doc/serving_logo.png

0 → 100644

167.5 KB

135.1 KB

148.2 KB

python/examples/senta/README.md

0 → 100644

python/examples/senta/get_data.sh

0 → 100644

This diff is collapsed.

tools/Dockerfile.centos6.devel

0 → 100644