Merge pull request #1499 from TeslaZhao/develop

V0.7 Version Update Docs

This diff is collapsed.

README_CN.md

100755 → 100644

This diff is collapsed.

File moved

File moved

File moved

doc/Install_CN.md

0 → 100644

doc/Install_EN.md

0 → 100644

doc/Quick_Start_CN.md

0 → 100644

doc/Quick_Start_EN.md

0 → 100644

doc/TENSOR_RT.md

Deleted

100644 → 0

doc/TENSOR_RT_CN.md

Deleted

100644 → 0

doc/deprecated/CLUSTERING.md

Deleted

100644 → 0

doc/deprecated/FAQ.md

Deleted

100644 → 0

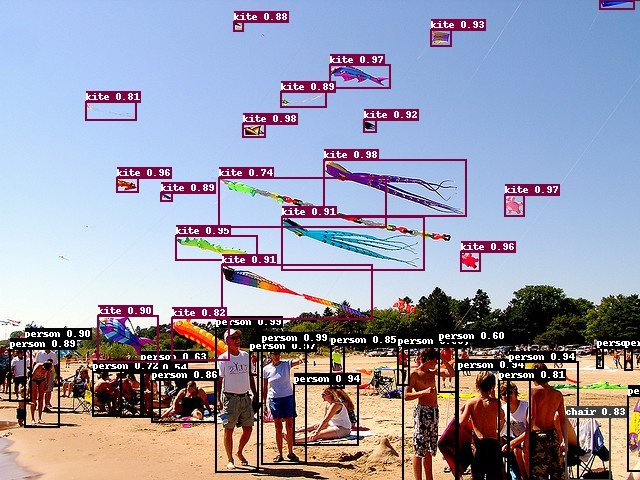

doc/images/detection.png

0 → 100644

510.1 KB