Merge branch 'PaddlePaddle:dygraph' into dygraph

Showing

| W: | H:

| W: | H:

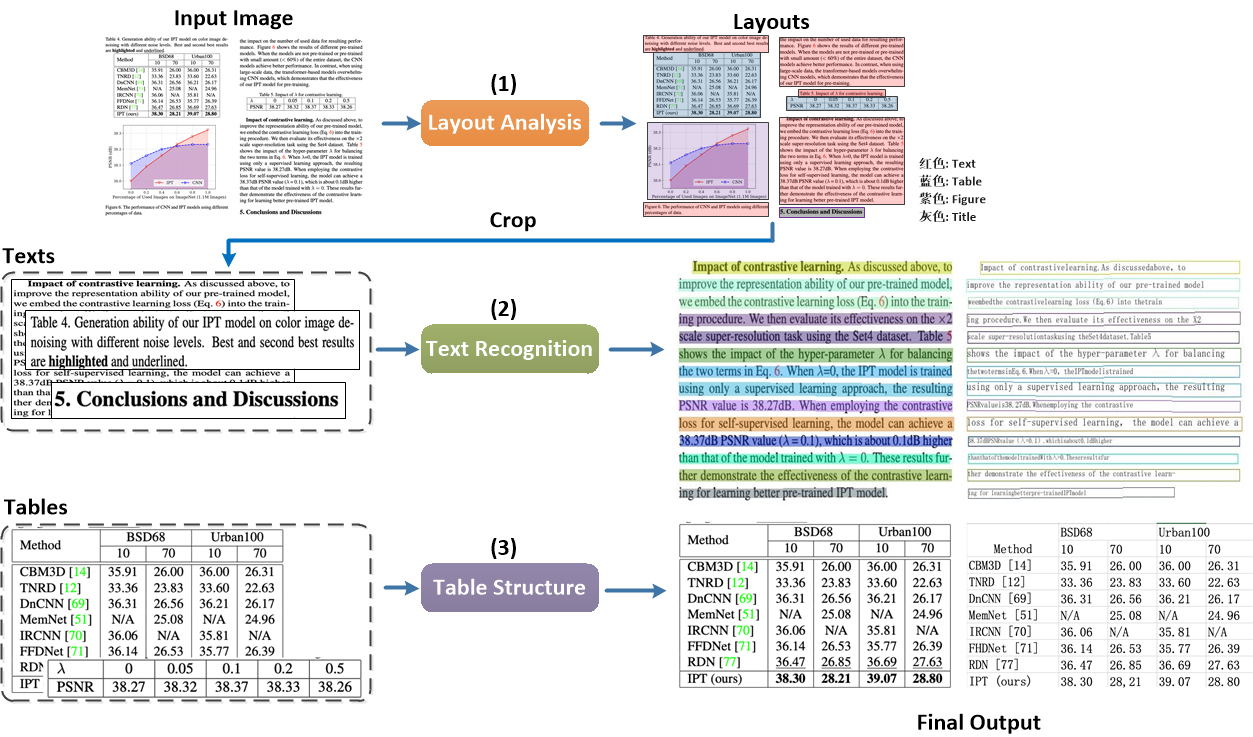

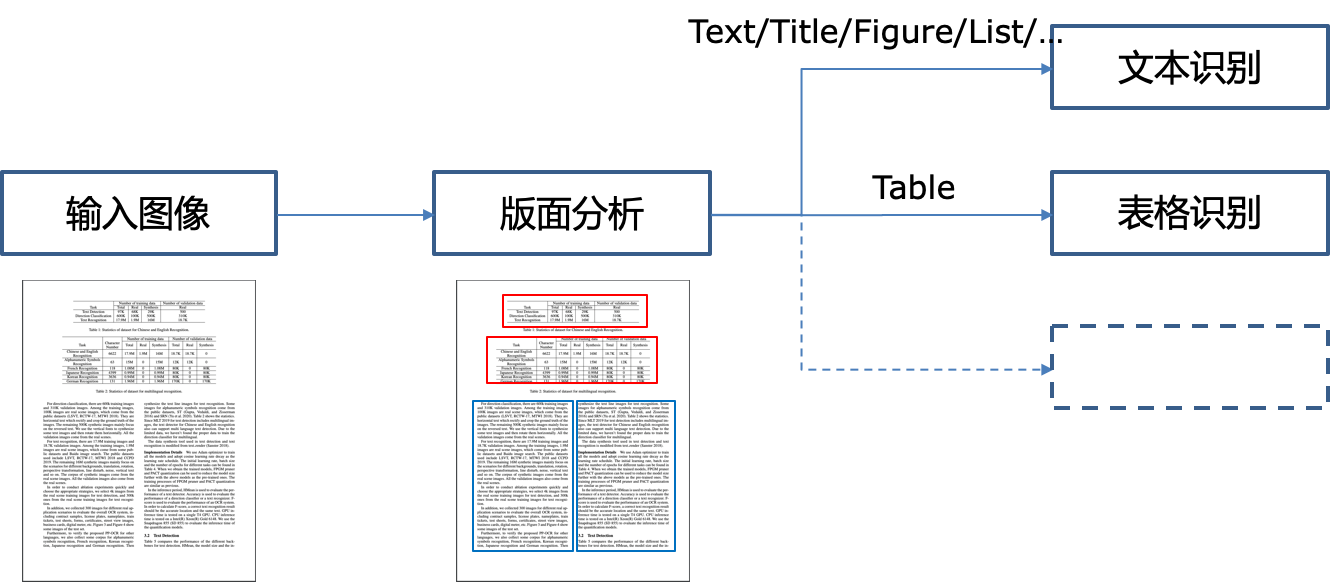

doc/table/pipeline.jpg

0 → 100644

611.2 KB

doc/table/pipeline.png

Deleted

100644 → 0

115.7 KB

File moved

File moved

File moved

File moved

188.6 KB

195.4 KB
