merge PaddleOCR

MANIFEST.in

0 → 100644

This diff is collapsed.

README_cn.md

Deleted

100644 → 0

README_en.md

0 → 100644

__init__.py

0 → 100644

197.8 KB

170.7 KB

61.1 KB

This diff is collapsed.

doc/doc_ch/whl.md

0 → 100644

doc/doc_en/whl_en.md

0 → 100644

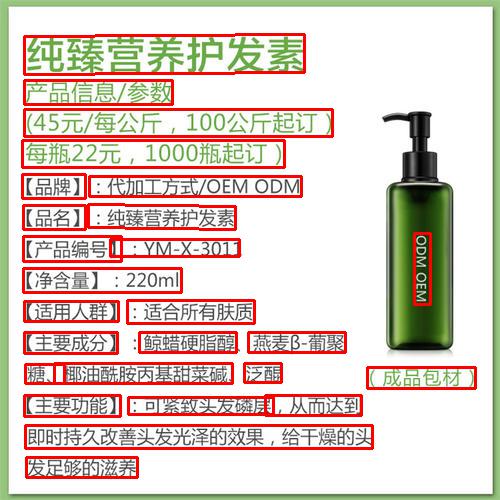

doc/imgs_en/img623.jpg

0 → 100755

247.6 KB

125.6 KB

332.8 KB

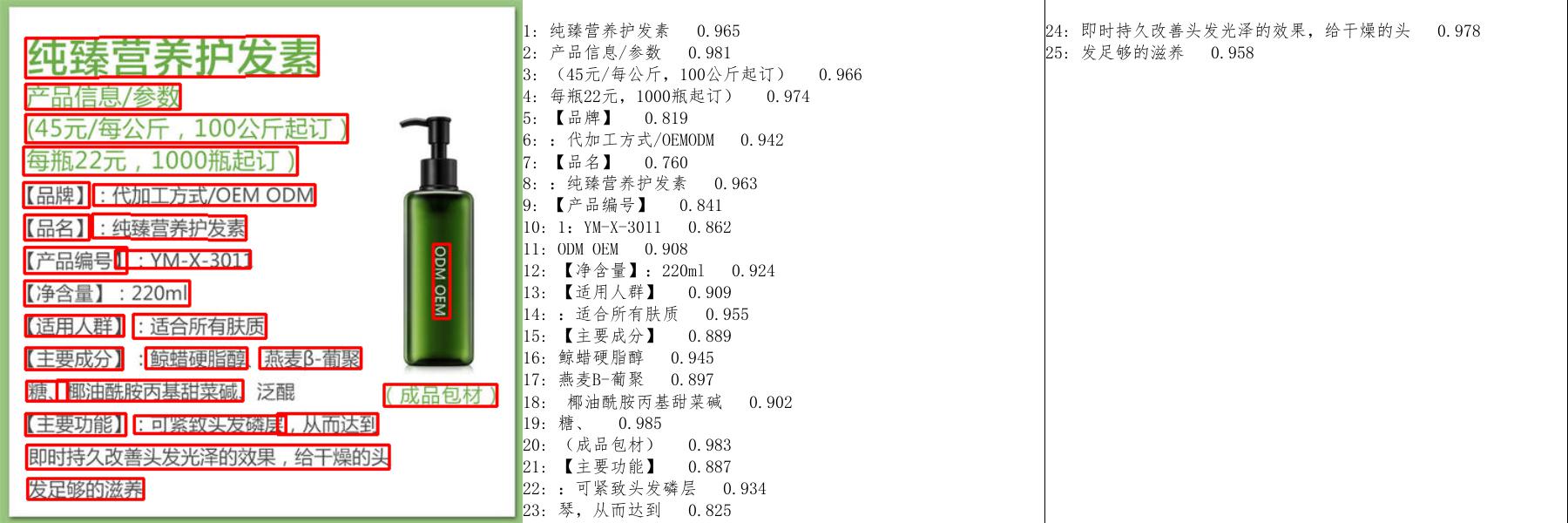

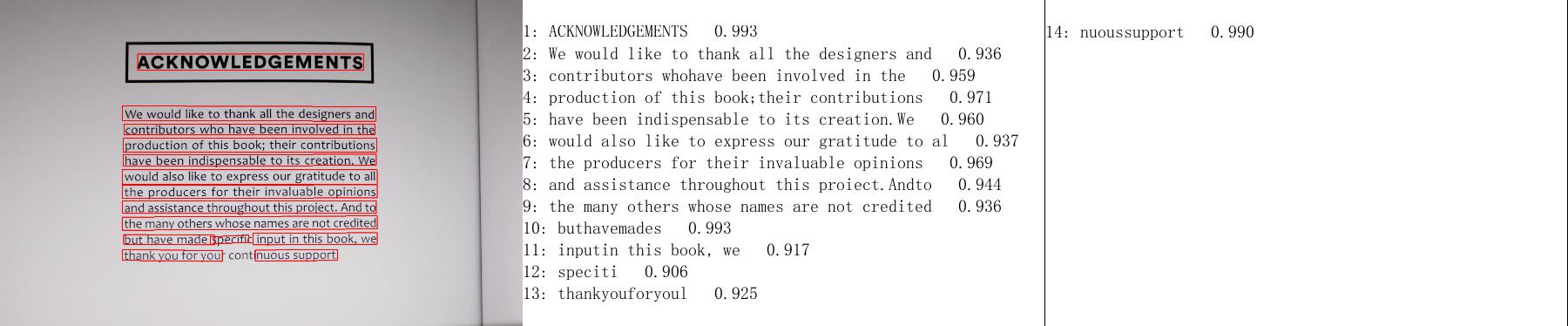

doc/imgs_results/whl/11_det.jpg

0 → 100644

61.4 KB

135.0 KB

doc/imgs_results/whl/12_det.jpg

0 → 100644

166.3 KB

84.0 KB

docker/hubserving/README.md

0 → 100644

docker/hubserving/README_cn.md

0 → 100644

paddleocr.py

0 → 100644

setup.py

0 → 100644

This diff is collapsed.