add test_tipc

Showing

test_tipc/README.md

0 → 100644

test_tipc/common_func.sh

0 → 100644

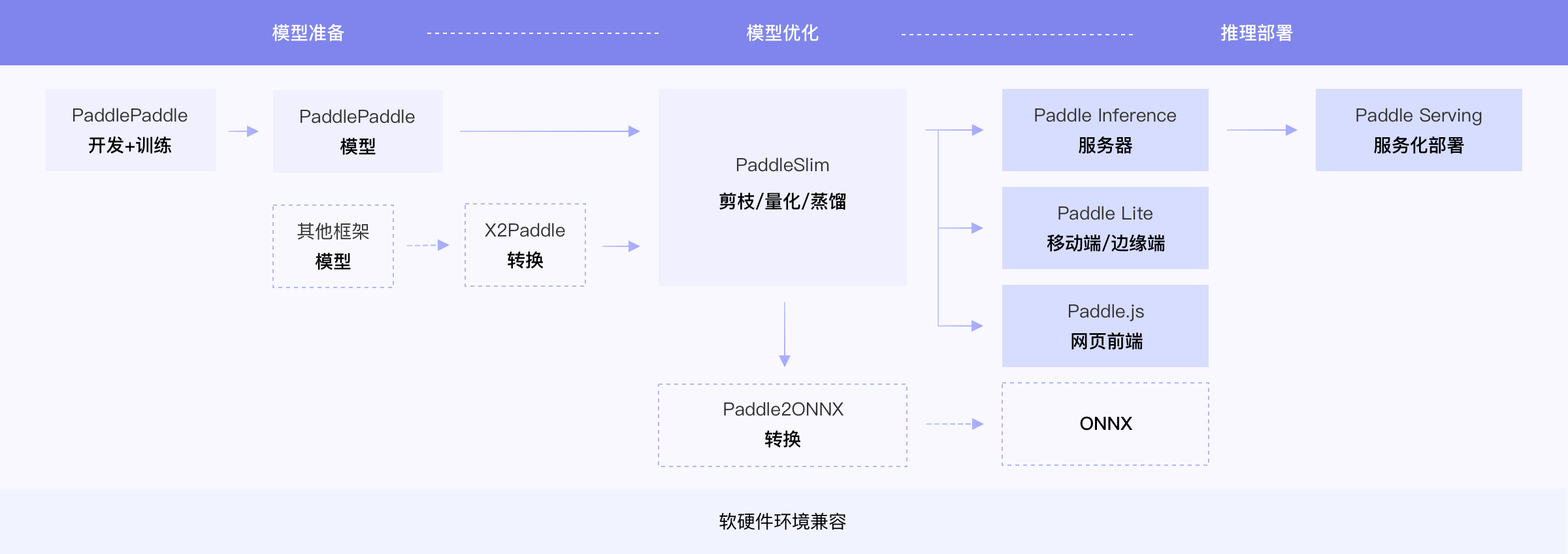

test_tipc/docs/guide.png

0 → 100644

138.3 KB

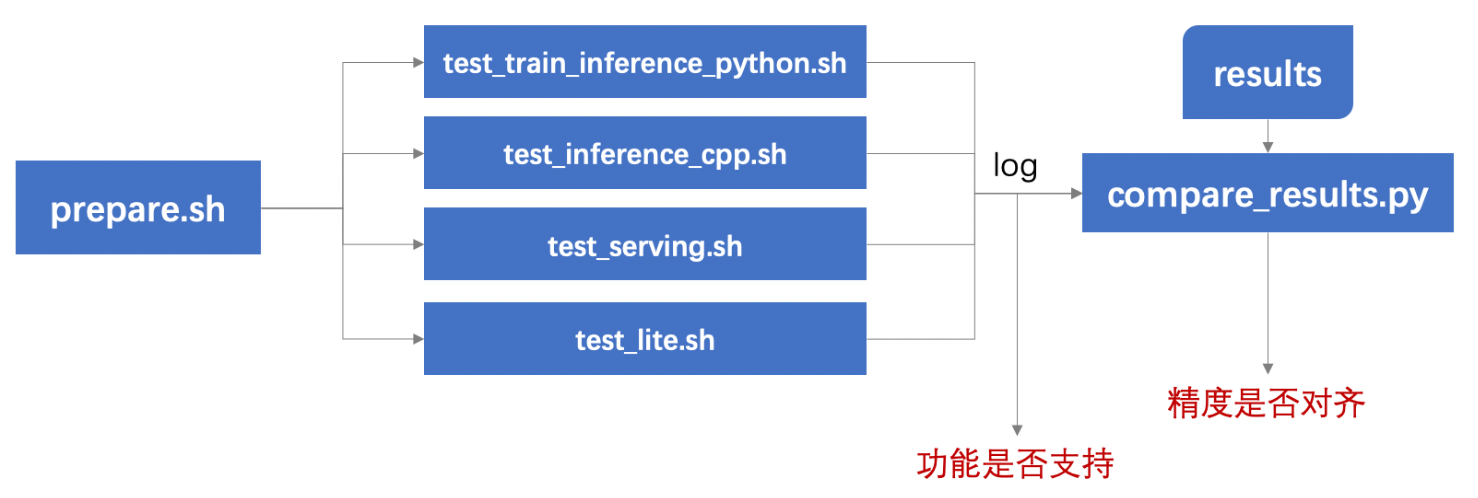

test_tipc/docs/test.png

0 → 100644

223.8 KB

test_tipc/prepare.sh

0 → 100644