Merge branch 'develop' into crf

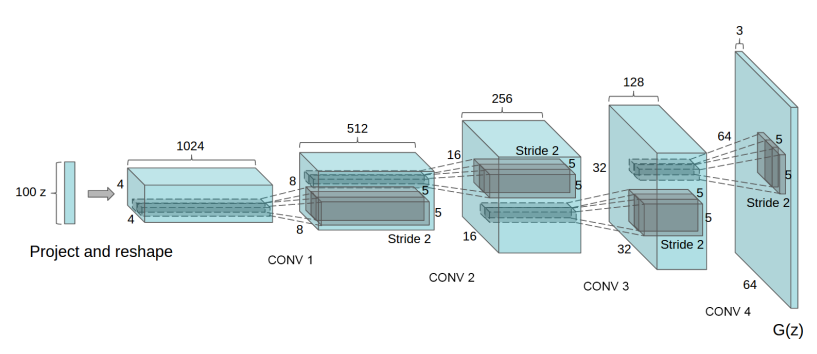

doc/design/dcgan.png

0 → 100644

56.6 KB

doc/design/gan_api.md

0 → 100644

doc/design/optimizer.md

0 → 100644

doc/design/selected_rows.md

0 → 100644

doc/design/test.dot

0 → 100644

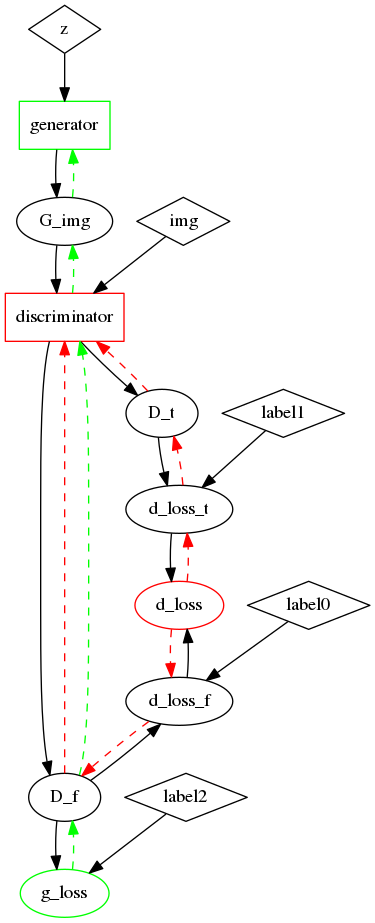

doc/design/test.dot.png

0 → 100644

57.6 KB

paddle/framework/executor.cc

0 → 100644

paddle/framework/executor.h

0 → 100644

paddle/framework/executor_test.cc

0 → 100644

paddle/operators/adamax_op.cc

0 → 100644

paddle/operators/adamax_op.cu

0 → 100644

paddle/operators/adamax_op.h

0 → 100644

paddle/operators/conv_shift_op.cc

0 → 100644

paddle/operators/conv_shift_op.cu

0 → 100644

paddle/operators/conv_shift_op.h

0 → 100644

paddle/operators/feed_op.cc

0 → 100644

paddle/operators/feed_op.cu

0 → 100644

paddle/operators/feed_op.h

0 → 100644

paddle/operators/fetch_op.cc

0 → 100644

This diff is collapsed.

paddle/operators/fetch_op.cu

0 → 100644

This diff is collapsed.

paddle/operators/fetch_op.h

0 → 100644

This diff is collapsed.

paddle/operators/interp_op.cc

0 → 100644

This diff is collapsed.

paddle/operators/math/vol2col.cc

0 → 100644

This diff is collapsed.

paddle/operators/math/vol2col.cu

0 → 100644

This diff is collapsed.

paddle/operators/math/vol2col.h

0 → 100644

This diff is collapsed.
