optimize pool3x3 kernel

doc/design_doc.md

0 → 100644

doc/development_doc.md

0 → 100644

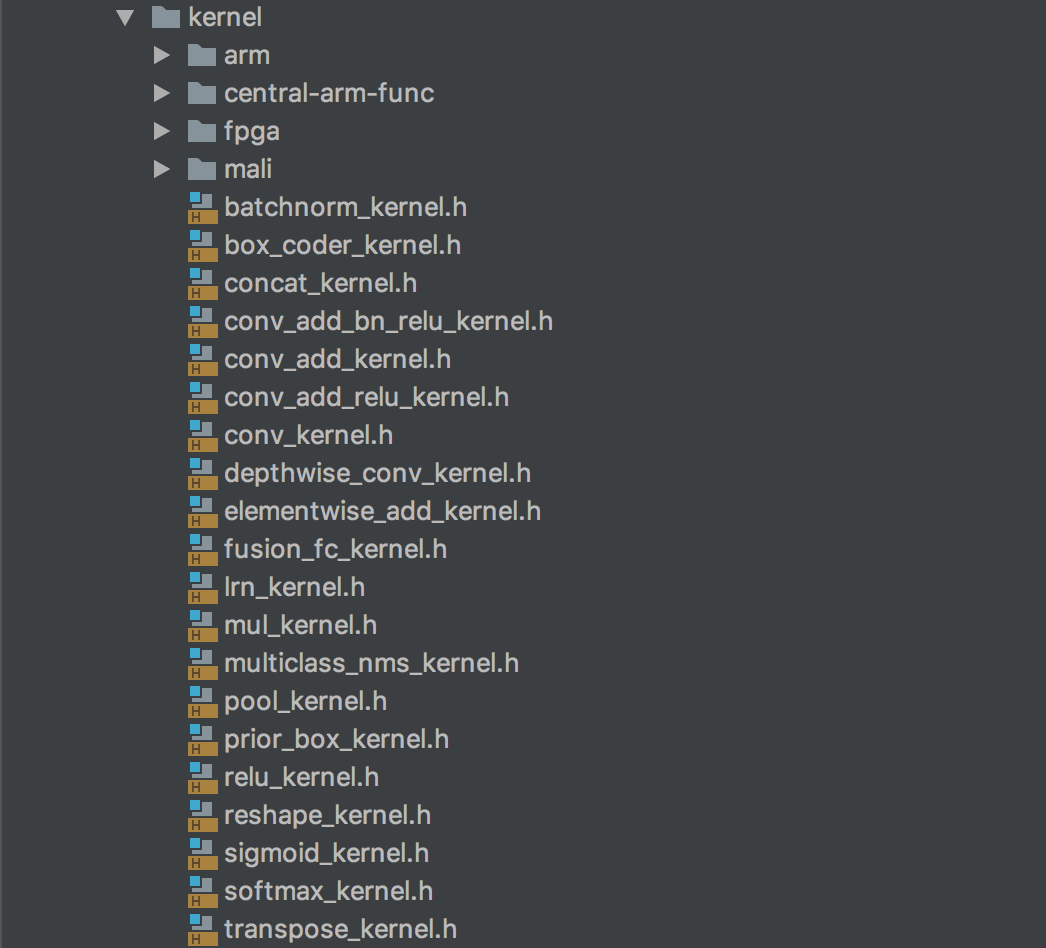

doc/images/devices.png

0 → 100644

116.0 KB

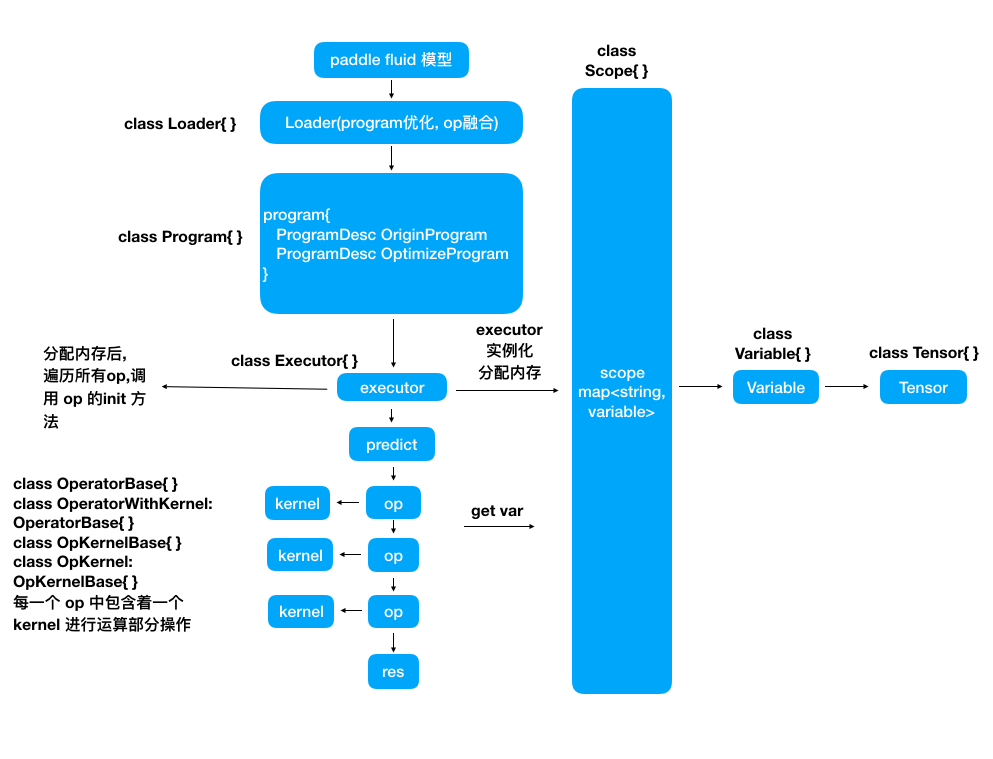

doc/images/flow_chart.png

0 → 100644

110.3 KB

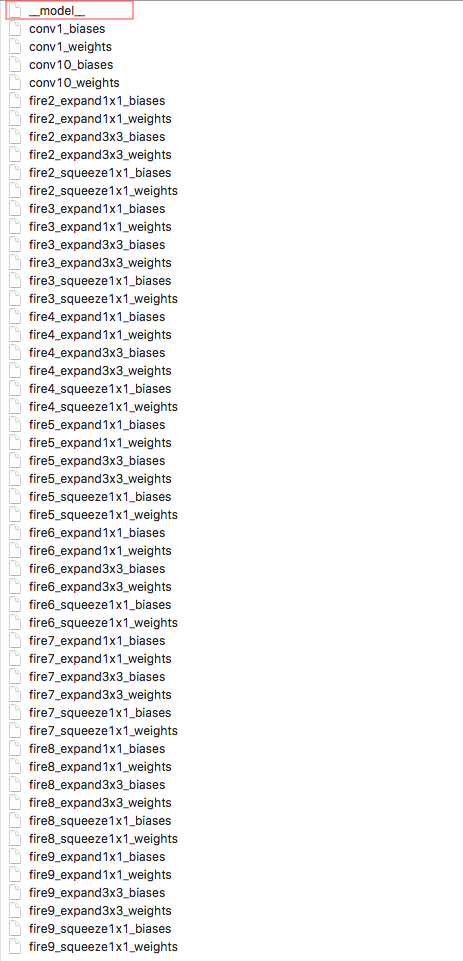

doc/images/model_desc.png

0 → 100644

162.1 KB

10.1 KB

File added

This diff is collapsed.

File added

File added

src/io/loader.cpp

0 → 100644

src/io/loader.h

0 → 100644

src/io/paddle_mobile.cpp

0 → 100644

src/io/paddle_mobile.h

0 → 100644

src/operators/dropout_op.cpp

0 → 100644

src/operators/dropout_op.h

0 → 100644

src/operators/im2sequence_op.cpp

0 → 100644

src/operators/im2sequence_op.h

0 → 100644

This diff is collapsed.
