fix conflict

demo/ReadMe.md

0 → 100644

demo/getDemo.sh

0 → 100644

doc/design_doc.md

0 → 100644

doc/development_doc.md

0 → 100644

doc/images/devices.png

0 → 100644

116.0 KB

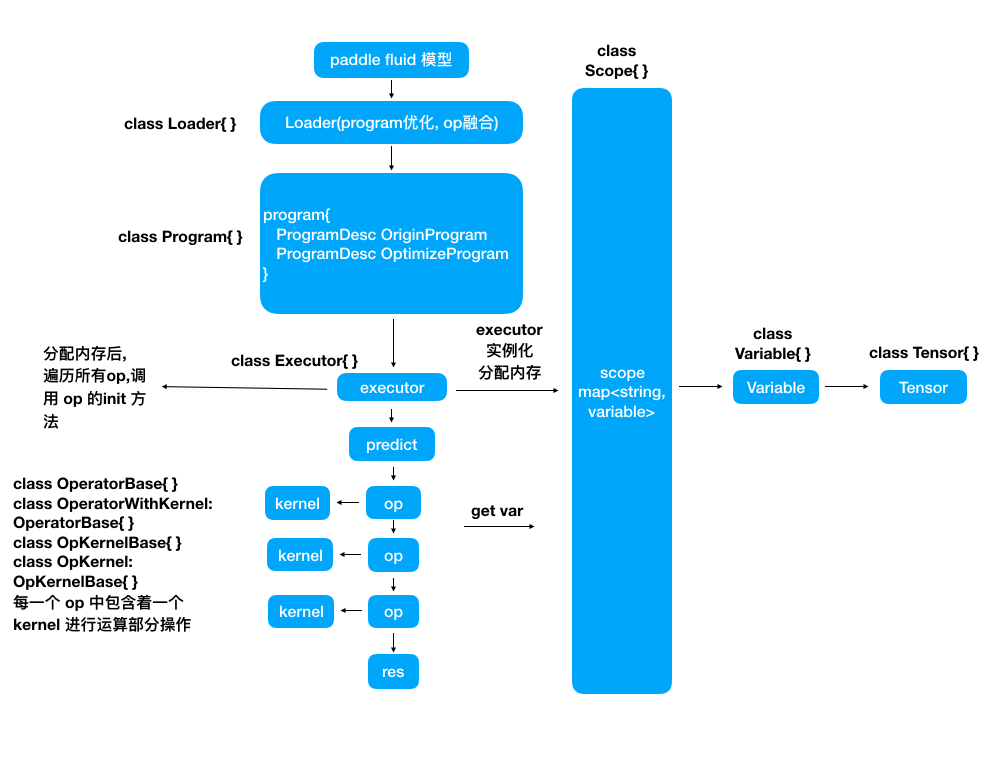

doc/images/flow_chart.png

0 → 100644

110.3 KB

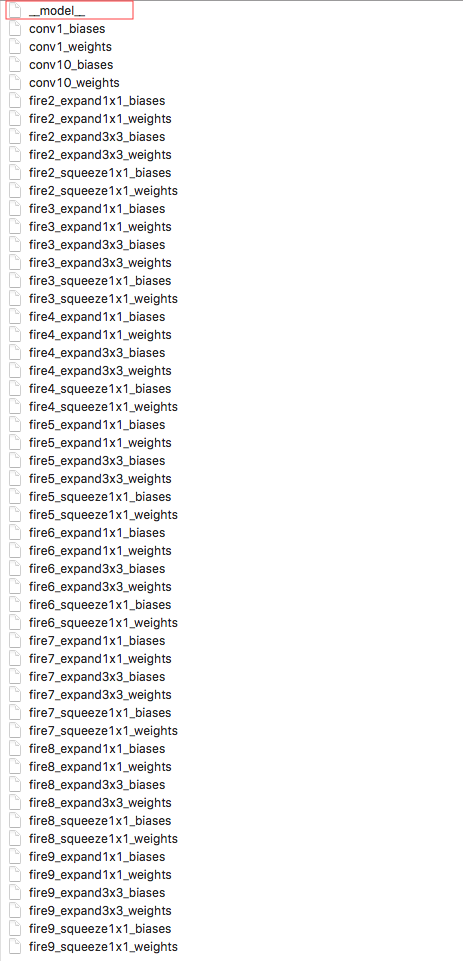

doc/images/model_desc.png

0 → 100644

162.1 KB

10.1 KB

doc/quantification.md

0 → 100644

metal/Podfile

0 → 100644

python/tools/mdl2fluid/loader.py

0 → 100644

python/tools/mdl2fluid/swicher.py

0 → 100644

src/fpga/api.cpp

0 → 100644

src/fpga/api.h

0 → 100644

src/fpga/bias_scale.cpp

0 → 100644

src/fpga/bias_scale.h

0 → 100644

src/fpga/filter.cpp

0 → 100644

src/fpga/filter.h

0 → 100644

src/fpga/image.cpp

0 → 100644

src/fpga/image.h

0 → 100644

src/io/api.cc

0 → 100644

src/io/api_paddle_mobile.cc

0 → 100644

src/io/api_paddle_mobile.h

0 → 100644

src/io/loader.cpp

0 → 100644

src/io/loader.h

0 → 100644

src/io/paddle_inference_api.h

0 → 100644

src/io/paddle_mobile.cpp

0 → 100644

src/io/paddle_mobile.h

0 → 100644

src/ios_io/PaddleMobileCPU.h

0 → 100644

src/ios_io/PaddleMobileCPU.mm

0 → 100644

src/ios_io/op_symbols.h

0 → 100644

src/jni/PML.java

0 → 100644

src/operators/conv_transpose_op.h

0 → 100644

src/operators/crf_op.cpp

0 → 100644

src/operators/crf_op.h

0 → 100644

src/operators/dropout_op.cpp

0 → 100644

src/operators/dropout_op.h

0 → 100644

src/operators/flatten_op.cpp

0 → 100644

src/operators/flatten_op.h

0 → 100644

src/operators/fusion_conv_bn_op.h

0 → 100644

src/operators/fusion_fc_relu_op.h

0 → 100644

src/operators/gru_op.cpp

0 → 100644

src/operators/gru_op.h

0 → 100644

src/operators/im2sequence_op.cpp

0 → 100644

src/operators/im2sequence_op.h

0 → 100644

src/operators/kernel/crf_kernel.h

0 → 100644

src/operators/kernel/gru_kernel.h

0 → 100644

src/operators/kernel/mali/acl_operator.cc

100644 → 100755

File mode changed from 100644 to 100755

src/operators/kernel/mali/acl_operator.h

100644 → 100755

src/operators/kernel/mali/acl_tensor.cc

100644 → 100755

File mode changed from 100644 to 100755

src/operators/kernel/mali/acl_tensor.h

100644 → 100755

File mode changed from 100644 to 100755

src/operators/kernel/mali/batchnorm_kernel.cpp

100644 → 100755

src/operators/kernel/mali/fushion_fc_kernel.cpp

100644 → 100755

src/operators/lookup_op.cpp

0 → 100644

src/operators/lookup_op.h

0 → 100644

src/operators/math/gru_compute.h

0 → 100644

src/operators/math/gru_kernel.h

0 → 100644

src/operators/prelu_op.cpp

0 → 100644

src/operators/prelu_op.h

0 → 100644

src/operators/resize_op.cpp

0 → 100644

src/operators/resize_op.h

0 → 100644

src/operators/scale_op.cpp

0 → 100644

src/operators/scale_op.h

0 → 100644

src/operators/shape_op.cpp

0 → 100644

src/operators/shape_op.h

0 → 100644

src/operators/slice_op.cpp

0 → 100644

src/operators/slice_op.h

0 → 100644

src/operators/split_op.cpp

0 → 100644

src/operators/split_op.h

0 → 100644

test/fpga/test_concat_op.cpp

0 → 100644

test/fpga/test_format_data.cpp

0 → 100644

test/fpga/test_tensor_quant.cpp

0 → 100644

test/net/test_alexnet.cpp

0 → 100644

test/net/test_genet_combine.cpp

0 → 100644

test/net/test_inceptionv4.cpp

0 → 100644

test/net/test_nlp.cpp

0 → 100644

test/operators/test_gru_op.cpp

0 → 100644

test/operators/test_prelu_op.cpp

0 → 100644

test/operators/test_resize_op.cpp

0 → 100644

test/operators/test_scale_op.cpp

0 → 100644

test/operators/test_slice_op.cpp

0 → 100644

tools/net-detail.awk

0 → 100644

tools/net.awk

0 → 100644

tools/quantification/README.md

0 → 100644

tools/quantification/convert.cpp

0 → 100644
