Merge pull request #992 from yt605155624/fix_docs

[TTS] add tts tutorial

demos/metaverse/Lamarr.png

0 → 100644

441.0 KB

demos/metaverse/path.sh

0 → 100755

demos/metaverse/run.sh

0 → 100755

demos/metaverse/sentences.txt

0 → 100644

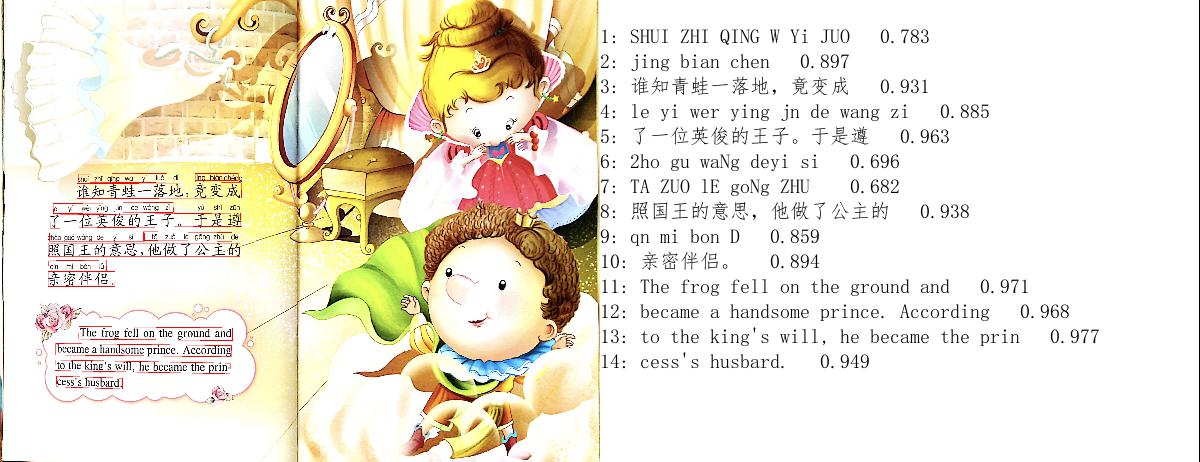

demos/story_talker/imgs/000.jpg

0 → 100644

1.5 MB

demos/story_talker/ocr.py

0 → 100644

demos/story_talker/path.sh

0 → 100755

demos/story_talker/run.sh

0 → 100755

demos/story_talker/simfang.ttf

0 → 100644

File added

demos/style_fs2/path.sh

0 → 100755

demos/style_fs2/run.sh

0 → 100755

demos/style_fs2/sentences.txt

0 → 100644

demos/style_fs2/style_syn.py

0 → 100644

46.7 KB

117.1 KB

1.5 MB

docs/tutorial/tts/source/ocr.wav

0 → 100644

File added

107.5 KB

224.2 KB

1.5 MB

581.2 KB

File added

367.9 KB

This diff is collapsed.

examples/aishell3/voc1/README.md

0 → 100644