Merge branch 'dygraph' into doc_2.1

Showing

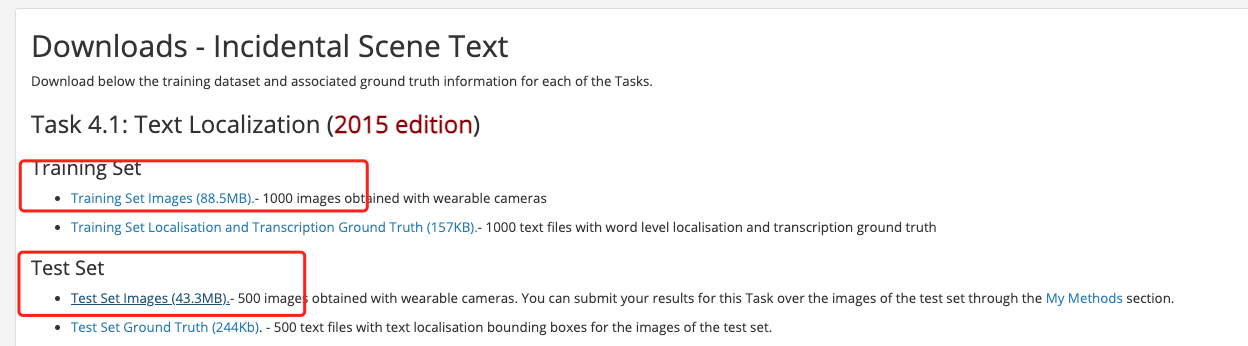

doc/ic15_location_download.png

0 → 100644

80.1 KB

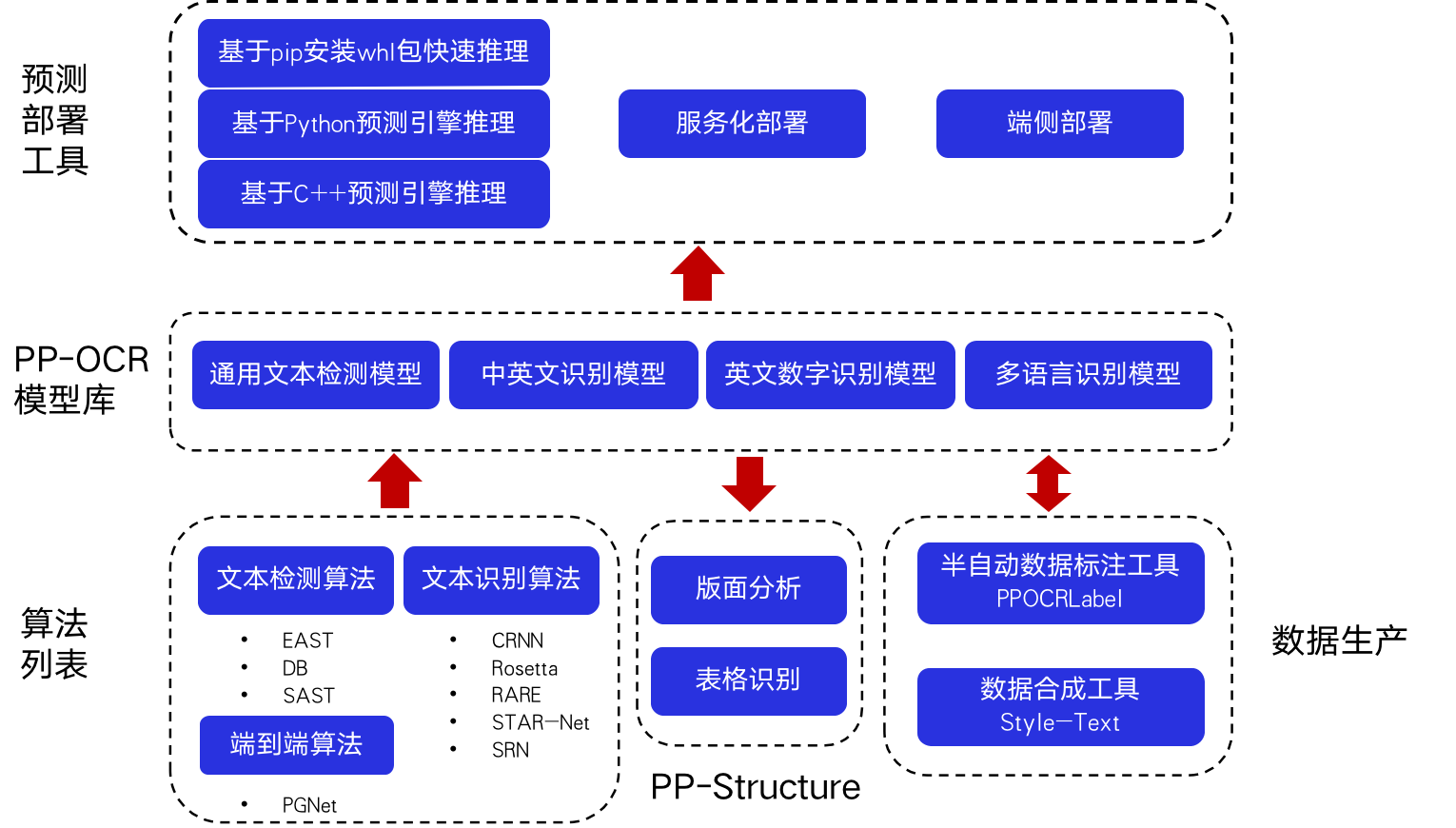

doc/overview.png

0 → 100644

142.8 KB

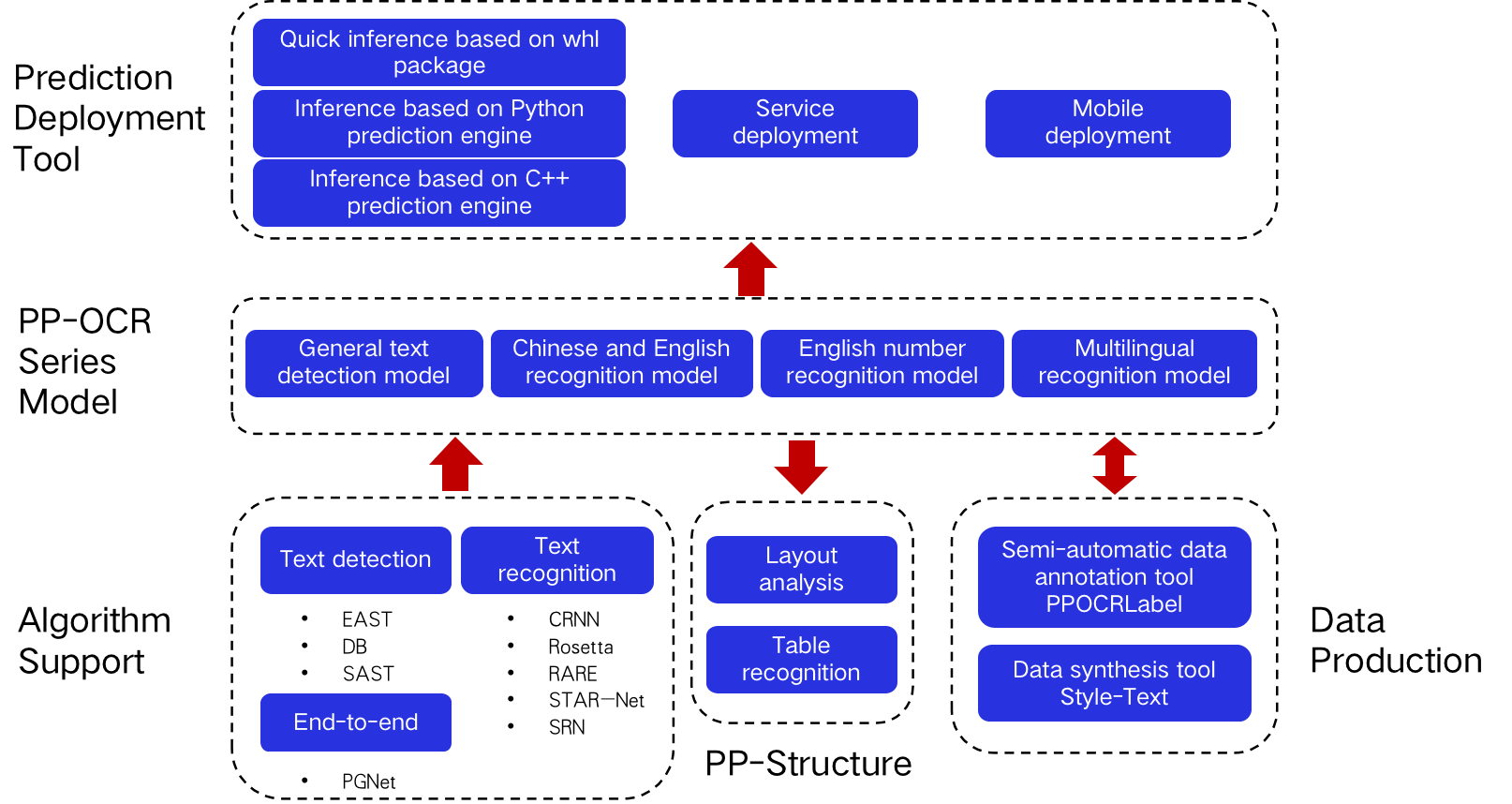

doc/overview_en.png

0 → 100644

144.4 KB

80.1 KB

142.8 KB

144.4 KB