Merge branch 'develop' of github.com:baidu/Paddle into feature/mnist_train_api

WORKSPACE

Deleted

100644 → 0

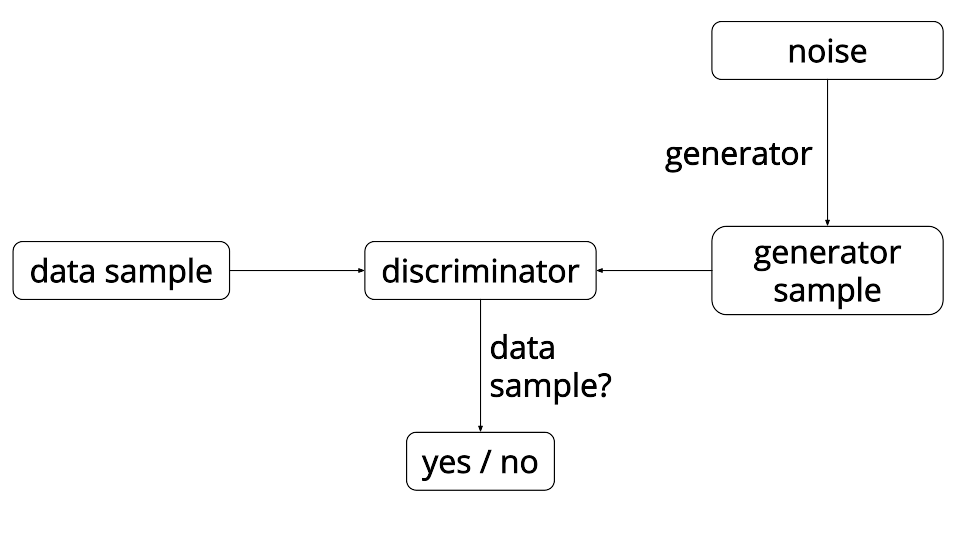

doc/tutorials/gan/gan.png

0 → 100644

32.5 KB

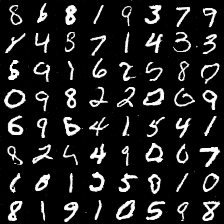

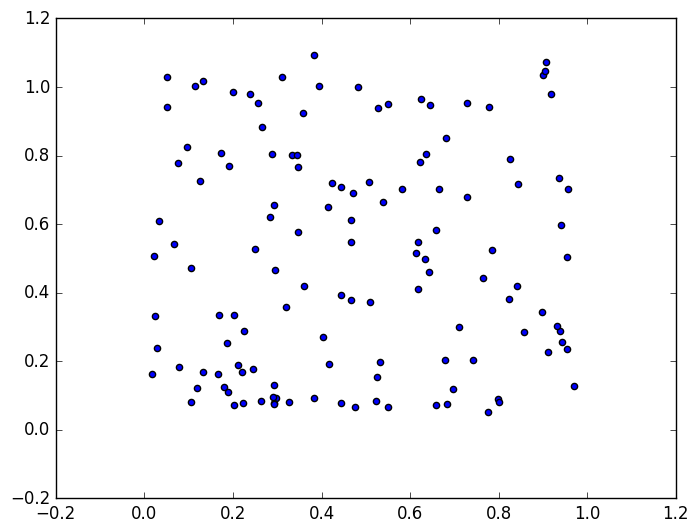

doc/tutorials/gan/index_en.md

0 → 100644

28.0 KB

20.1 KB

third_party/gflags.BUILD

Deleted

100644 → 0

third_party/gflags_test/BUILD

Deleted

100644 → 0

third_party/glog.BUILD

Deleted

100644 → 0

third_party/glog_test/BUILD

Deleted

100644 → 0

third_party/gtest.BUILD

Deleted

100644 → 0