溧阳摄影圈 (Liyang Photography Circle)

NO35/imgs/束手无策_td_att3067312.jpg

0 → 100644

468.9 KB

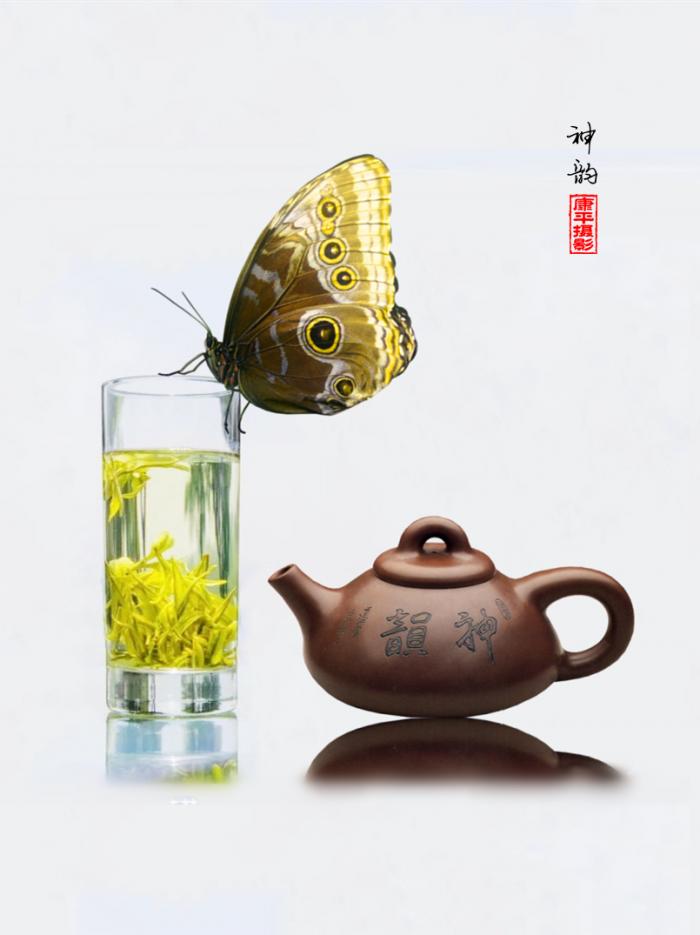

NO35/imgs/白茶神韵_td_att3067306.jpg

0 → 100644

41.4 KB

45.9 KB

53.6 KB

NO35/imgs/秋日晨韵_td_att3065566.jpg

0 → 100644

1.3 MB

NO35/溧阳摄影圈.py

0 → 100644