Merge branch 'dev' into dev_v2.6.0

build.sh

Deleted

100644 → 0

File deleted

distribution/bin/shutdown.sh

0 → 100644

distribution/bin/startup.sh

0 → 100644

distribution/conf/application.yml

0 → 100644

File moved

distribution/pom.xml

0 → 100644

distribution/readme.md

0 → 100644

distribution/release-km.xml

0 → 100755

distribution/upgrade_config.md

0 → 100644

785.2 KB

2.5 MB

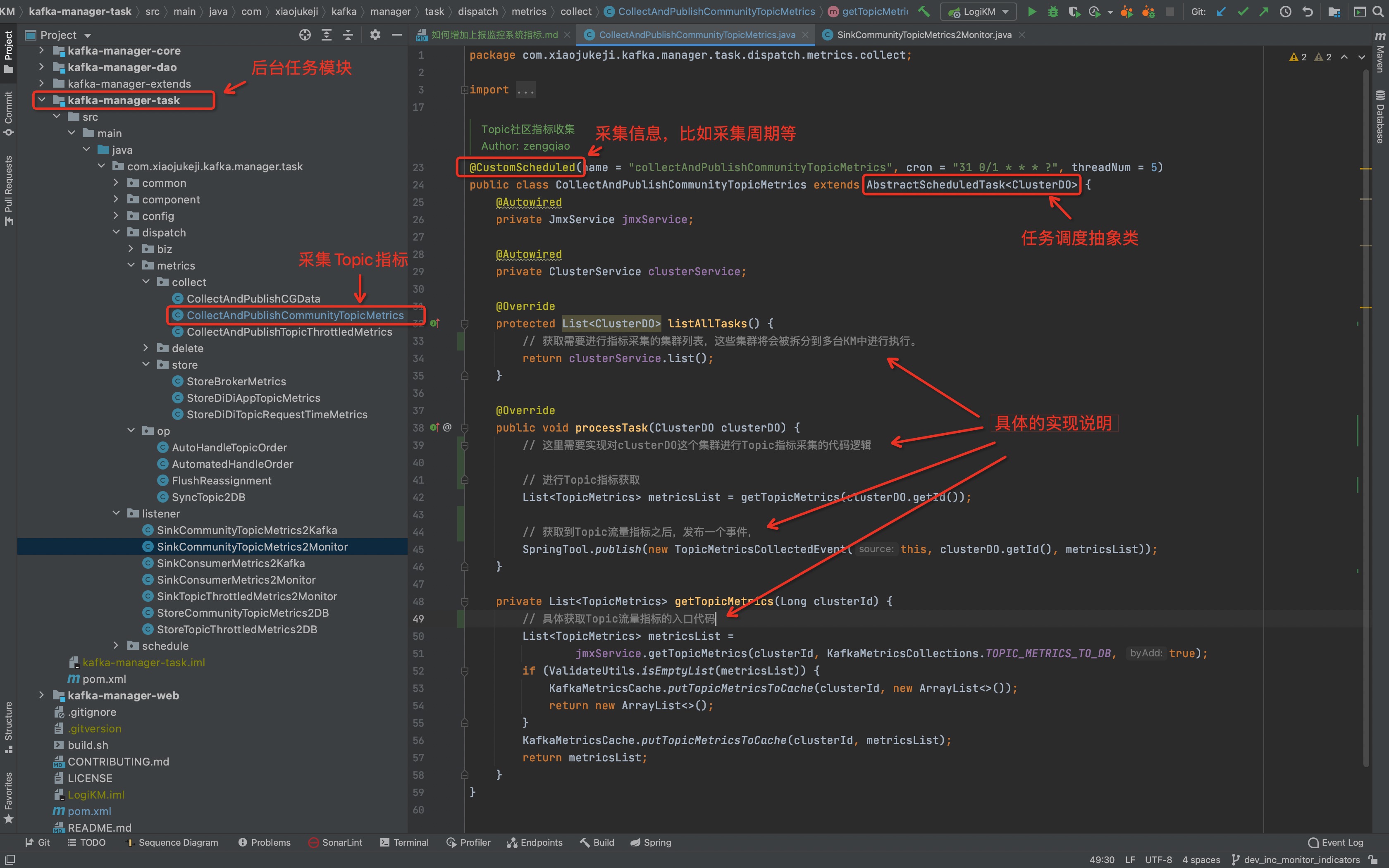

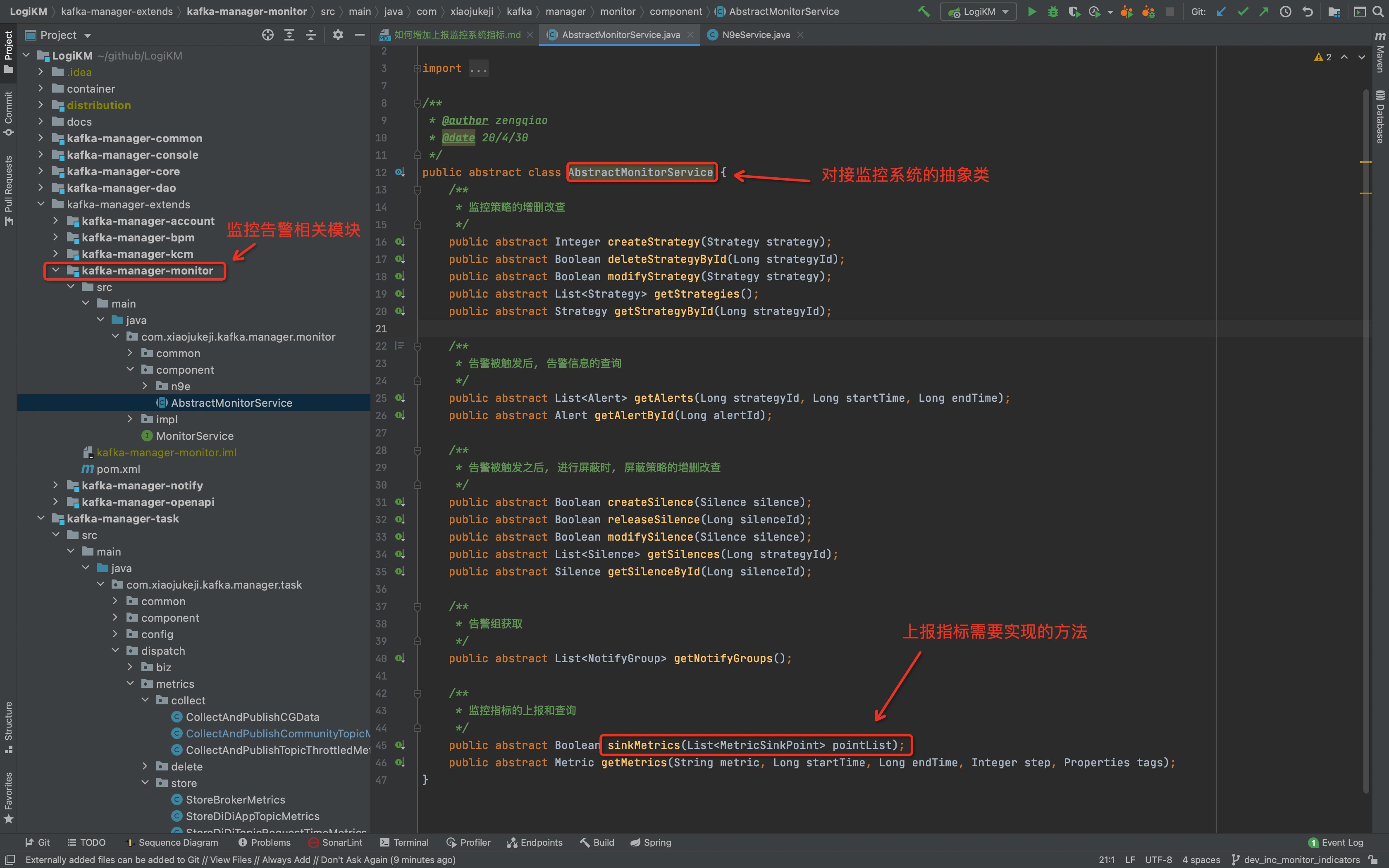

docs/dev_guide/如何增加上报监控系统指标.md

0 → 100644

36.2 KB

File moved