Initial Commit

.gitignore

0 → 100644

.gitmodules

0 → 100644

LICENSE

0 → 100644

README.md

0 → 100644

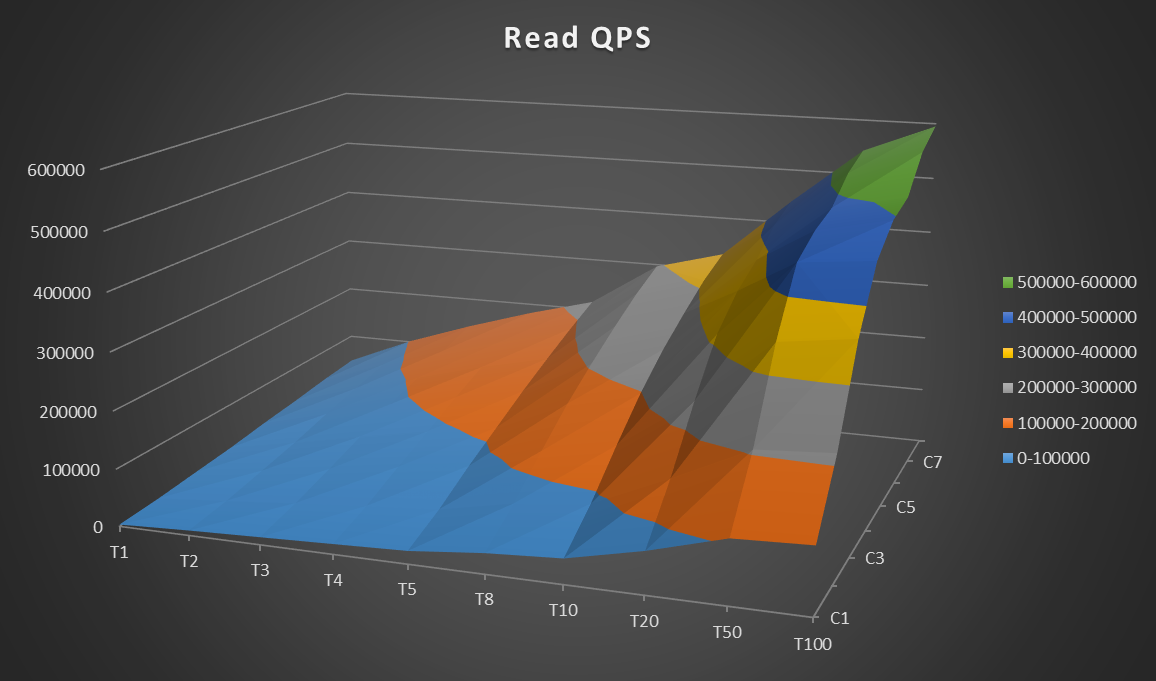

docs/benchmark/r1.png

0 → 100644

291.4 KB

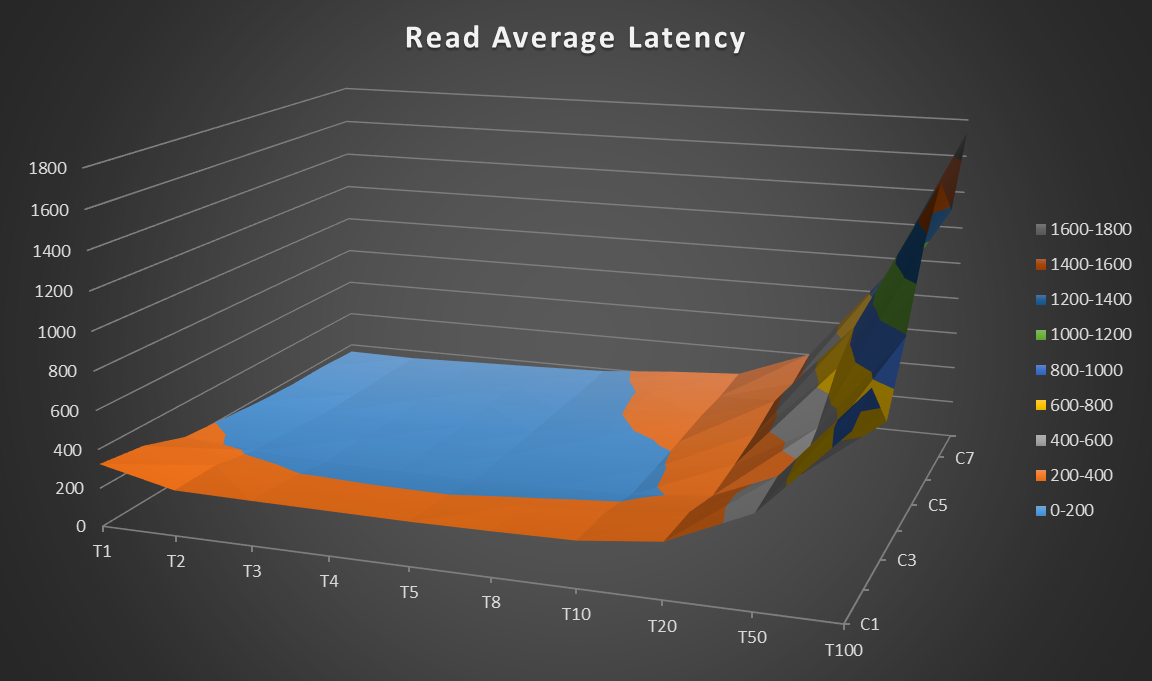

docs/benchmark/r2.png

0 → 100644

298.1 KB

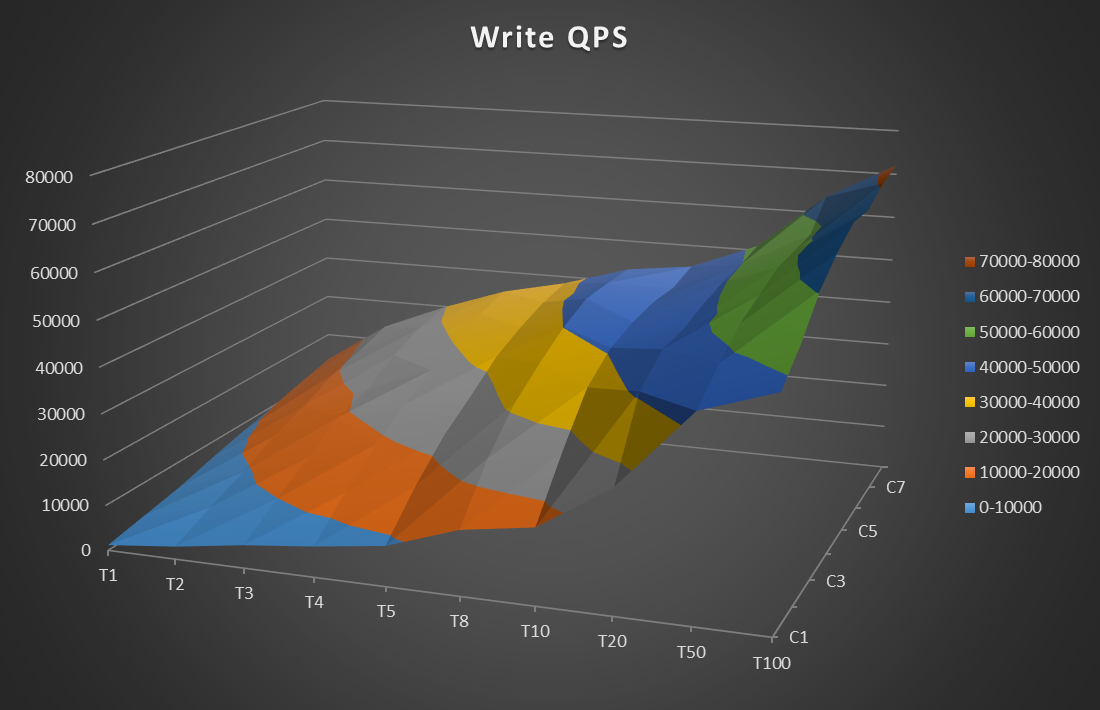

docs/benchmark/r3.png

0 → 100644

268.4 KB

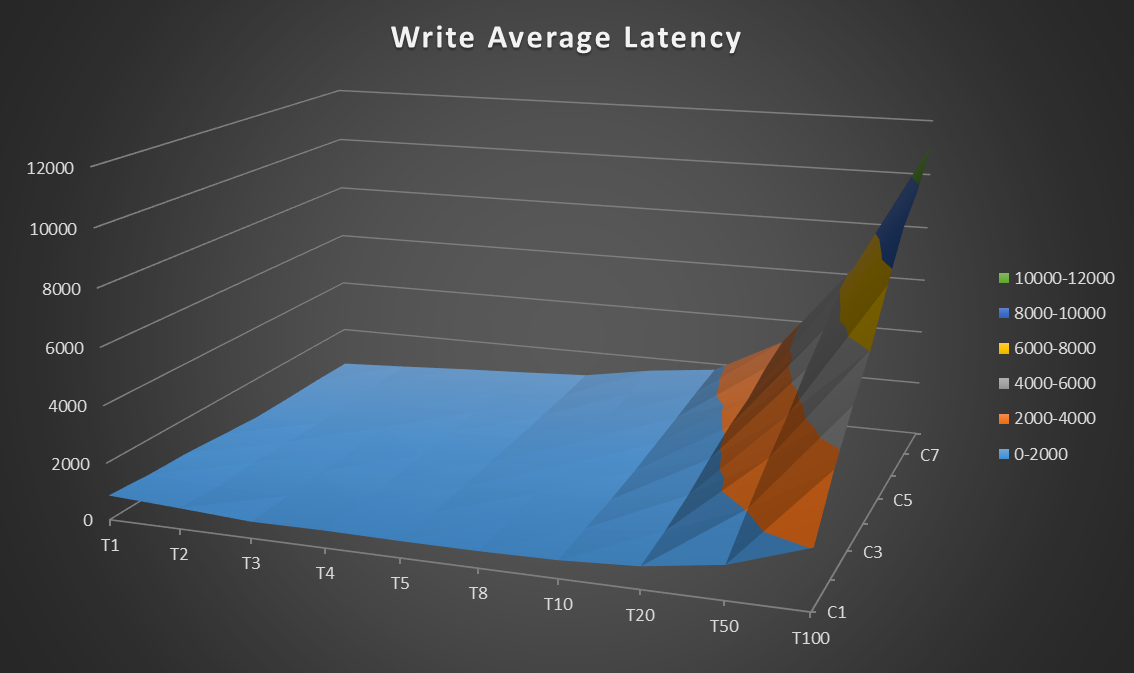

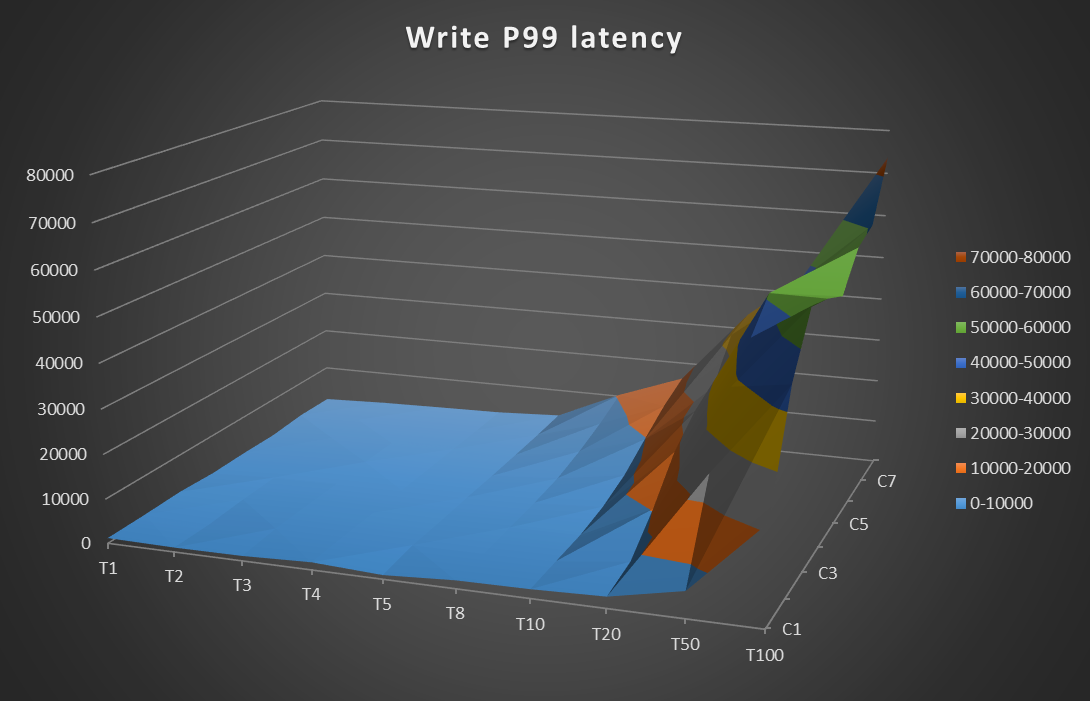

docs/benchmark/w1.png

0 → 100644

303.5 KB

docs/benchmark/w2.png

0 → 100644

278.5 KB

docs/benchmark/w3.png

0 → 100644

284.5 KB

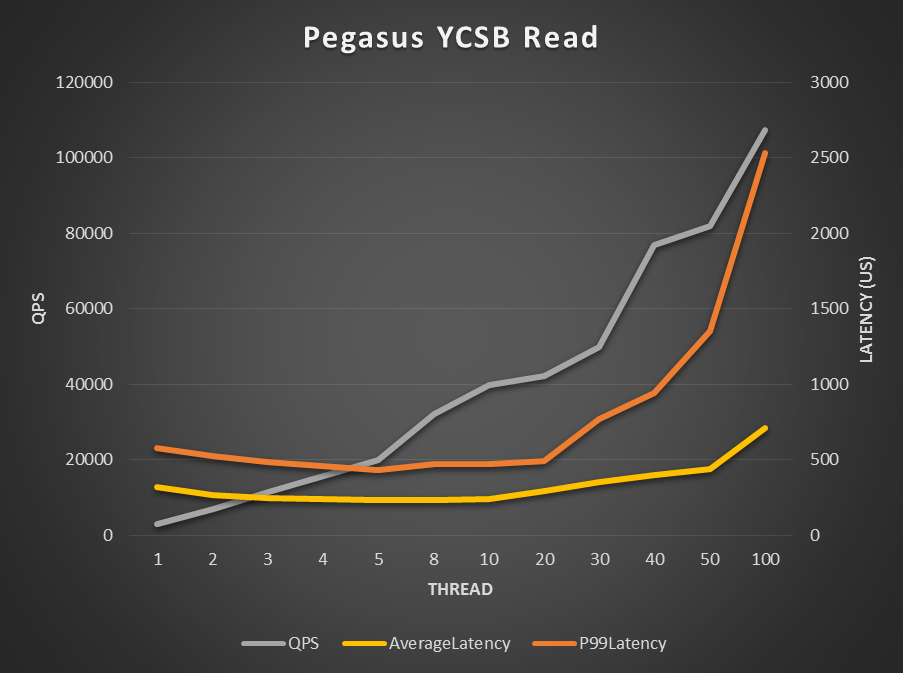

docs/benchmark/ycsb_read.png

0 → 100644

226.5 KB

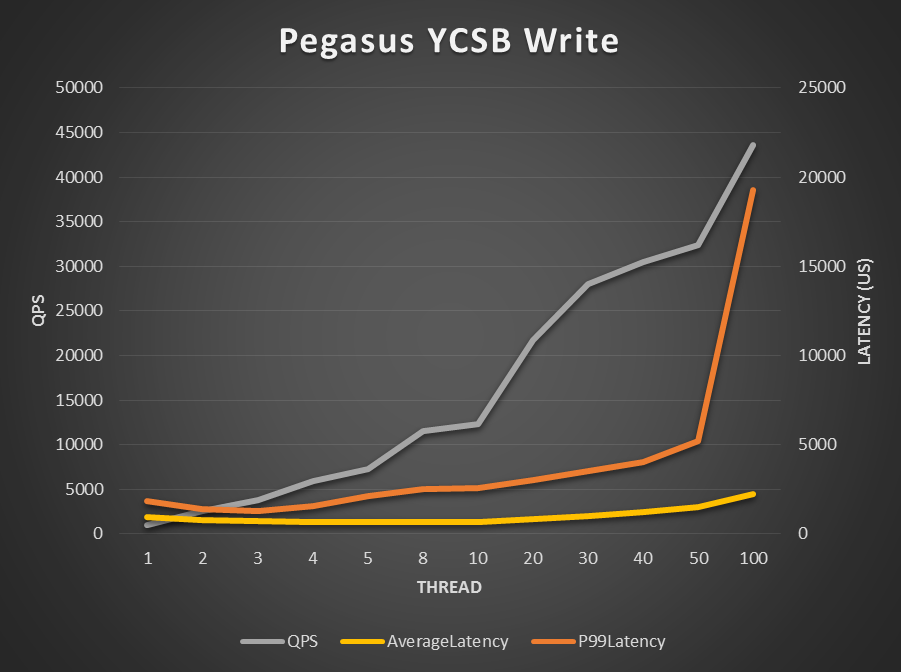

docs/benchmark/ycsb_write.png

0 → 100644

225.7 KB

docs/contribution.md

0 → 100644

docs/installation.md

0 → 100644

32.5 KB

152.8 KB

107.3 KB

72.2 KB

71.8 KB

File added

File added

File added

File added

docs/roadmap.md

0 → 100644

rocksdb/.clang-format

0 → 100644

rocksdb/.travis.yml

0 → 100644

rocksdb/AUTHORS

0 → 100644

rocksdb/CMakeLists.txt

0 → 100644

rocksdb/CONTRIBUTING.md

0 → 100644

rocksdb/DUMP_FORMAT.md

0 → 100644

rocksdb/HISTORY.md

0 → 100644

rocksdb/INSTALL.md

0 → 100644

rocksdb/LICENSE

0 → 100644

rocksdb/Makefile

0 → 100644

rocksdb/PATENTS

0 → 100644

rocksdb/README.md

0 → 100644

rocksdb/ROCKSDB_LITE.md

0 → 100644

rocksdb/USERS.md

0 → 100644

rocksdb/Vagrantfile

0 → 100644

rocksdb/WINDOWS_PORT.md

0 → 100644

rocksdb/appveyor.yml

0 → 100644

rocksdb/appveyordailytests.yml

0 → 100644

rocksdb/build_tools/amalgamate.py

0 → 100755

rocksdb/build_tools/version.sh

0 → 100755

rocksdb/coverage/coverage_test.sh

0 → 100755

rocksdb/db/builder.cc

0 → 100644

rocksdb/db/builder.h

0 → 100644

rocksdb/db/c.cc

0 → 100644

rocksdb/db/c_test.c

0 → 100644

rocksdb/db/column_family.cc

0 → 100644

rocksdb/db/column_family.h

0 → 100644

rocksdb/db/column_family_test.cc

0 → 100644

rocksdb/db/compact_files_test.cc

0 → 100644

rocksdb/db/compacted_db_impl.cc

0 → 100644

rocksdb/db/compacted_db_impl.h

0 → 100644

rocksdb/db/compaction.cc

0 → 100644

rocksdb/db/compaction.h

0 → 100644

rocksdb/db/compaction_iterator.cc

0 → 100644

rocksdb/db/compaction_iterator.h

0 → 100644

rocksdb/db/compaction_job.cc

0 → 100644

rocksdb/db/compaction_job.h

0 → 100644

rocksdb/db/compaction_job_test.cc

0 → 100644

rocksdb/db/compaction_picker.cc

0 → 100644

rocksdb/db/compaction_picker.h

0 → 100644

rocksdb/db/comparator_db_test.cc

0 → 100644

rocksdb/db/convenience.cc

0 → 100644

rocksdb/db/corruption_test.cc

0 → 100644

rocksdb/db/db_bench.cc

0 → 100644

rocksdb/db/db_compaction_test.cc

0 → 100644

rocksdb/db/db_filesnapshot.cc

0 → 100644

rocksdb/db/db_impl.cc

0 → 100644

rocksdb/db/db_impl.h

0 → 100644

rocksdb/db/db_impl_debug.cc

0 → 100644

rocksdb/db/db_impl_readonly.cc

0 → 100644

rocksdb/db/db_impl_readonly.h

0 → 100644

rocksdb/db/db_iter.cc

0 → 100644

rocksdb/db/db_iter.h

0 → 100644

rocksdb/db/db_iter_test.cc

0 → 100644

rocksdb/db/db_log_iter_test.cc

0 → 100644

rocksdb/db/db_test.cc

0 → 100644

rocksdb/db/db_wal_test.cc

0 → 100644

rocksdb/db/dbformat.cc

0 → 100644

rocksdb/db/dbformat.h

0 → 100644

rocksdb/db/dbformat_test.cc

0 → 100644

rocksdb/db/deletefile_test.cc

0 → 100644

rocksdb/db/event_helpers.cc

0 → 100644

rocksdb/db/event_helpers.h

0 → 100644

rocksdb/db/experimental.cc

0 → 100644

rocksdb/db/file_indexer.cc

0 → 100644

rocksdb/db/file_indexer.h

0 → 100644

rocksdb/db/file_indexer_test.cc

0 → 100644

rocksdb/db/filename.cc

0 → 100644

rocksdb/db/filename.h

0 → 100644

rocksdb/db/filename_test.cc

0 → 100644

rocksdb/db/flush_job.cc

0 → 100644

rocksdb/db/flush_job.h

0 → 100644

rocksdb/db/flush_job_test.cc

0 → 100644

rocksdb/db/flush_scheduler.cc

0 → 100644

rocksdb/db/flush_scheduler.h

0 → 100644

rocksdb/db/forward_iterator.cc

0 → 100644

rocksdb/db/forward_iterator.h

0 → 100644

rocksdb/db/internal_stats.cc

0 → 100644

rocksdb/db/internal_stats.h

0 → 100644

rocksdb/db/job_context.h

0 → 100644

rocksdb/db/listener_test.cc

0 → 100644

rocksdb/db/log_format.h

0 → 100644

rocksdb/db/log_reader.cc

0 → 100644

rocksdb/db/log_reader.h

0 → 100644

rocksdb/db/log_test.cc

0 → 100644

rocksdb/db/log_writer.cc

0 → 100644

rocksdb/db/log_writer.h

0 → 100644

rocksdb/db/managed_iterator.cc

0 → 100644

rocksdb/db/managed_iterator.h

0 → 100644

rocksdb/db/memtable.cc

0 → 100644

rocksdb/db/memtable.h

0 → 100644

rocksdb/db/memtable_allocator.cc

0 → 100644

rocksdb/db/memtable_allocator.h

0 → 100644

rocksdb/db/memtable_list.cc

0 → 100644

rocksdb/db/memtable_list.h

0 → 100644

rocksdb/db/memtable_list_test.cc

0 → 100644

rocksdb/db/memtablerep_bench.cc

0 → 100644

rocksdb/db/merge_context.h

0 → 100644

rocksdb/db/merge_helper.cc

0 → 100644

rocksdb/db/merge_helper.h

0 → 100644

rocksdb/db/merge_helper_test.cc

0 → 100644

rocksdb/db/merge_operator.cc

0 → 100644

rocksdb/db/merge_test.cc

0 → 100644

rocksdb/db/pegasus_bench.cc

0 → 100644

rocksdb/db/perf_context_test.cc

0 → 100644

rocksdb/db/plain_table_db_test.cc

0 → 100644

rocksdb/db/prefix_test.cc

0 → 100644

rocksdb/db/repair.cc

0 → 100644

rocksdb/db/skiplist.h

0 → 100644

rocksdb/db/skiplist_test.cc

0 → 100644

rocksdb/db/slice.cc

0 → 100644

rocksdb/db/snapshot_impl.cc

0 → 100644

rocksdb/db/snapshot_impl.h

0 → 100644

rocksdb/db/table_cache.cc

0 → 100644

rocksdb/db/table_cache.h

0 → 100644

rocksdb/db/transaction_log_impl.h

0 → 100644

rocksdb/db/version_builder.cc

0 → 100644

rocksdb/db/version_builder.h

0 → 100644

rocksdb/db/version_edit.cc

0 → 100644

rocksdb/db/version_edit.h

0 → 100644

rocksdb/db/version_edit_test.cc

0 → 100644

rocksdb/db/version_set.cc

0 → 100644

rocksdb/db/version_set.h

0 → 100644

rocksdb/db/version_set_test.cc

0 → 100644

rocksdb/db/wal_manager.cc

0 → 100644

rocksdb/db/wal_manager.h

0 → 100644

rocksdb/db/wal_manager_test.cc

0 → 100644

rocksdb/db/write_batch.cc

0 → 100644

rocksdb/db/write_batch_base.cc

0 → 100644

rocksdb/db/write_batch_internal.h

0 → 100644

rocksdb/db/write_batch_test.cc

0 → 100644

rocksdb/db/write_callback.h

0 → 100644

rocksdb/db/write_callback_test.cc

0 → 100644

rocksdb/db/write_controller.cc

0 → 100644

rocksdb/db/write_controller.h

0 → 100644

rocksdb/db/write_thread.cc

0 → 100644

rocksdb/db/write_thread.h

0 → 100644

rocksdb/db/writebuffer.h

0 → 100644

rocksdb/doc/doc.css

0 → 100644

rocksdb/doc/index.html

0 → 100644

rocksdb/doc/log_format.txt

0 → 100644

rocksdb/doc/rockslogo.jpg

0 → 100644

rocksdb/doc/rockslogo.png

0 → 100644

rocksdb/examples/.gitignore

0 → 100644

rocksdb/examples/Makefile

0 → 100644

rocksdb/examples/README.md

0 → 100644

rocksdb/hdfs/README

0 → 100644

rocksdb/hdfs/env_hdfs.h

0 → 100644

rocksdb/hdfs/setup.sh

0 → 100644

rocksdb/include/rocksdb/c.h

0 → 100644

rocksdb/include/rocksdb/cache.h

0 → 100644

rocksdb/include/rocksdb/db.h

0 → 100644

rocksdb/include/rocksdb/env.h

0 → 100644

rocksdb/include/rocksdb/options.h

0 → 100644

rocksdb/include/rocksdb/slice.h

0 → 100644

rocksdb/include/rocksdb/status.h

0 → 100644

rocksdb/include/rocksdb/table.h

0 → 100644

rocksdb/include/rocksdb/types.h

0 → 100644

rocksdb/include/rocksdb/version.h

0 → 100644

rocksdb/java/HISTORY-JAVA.md

0 → 100644

rocksdb/java/Makefile

0 → 100644

rocksdb/java/RELEASE.md

0 → 100644

rocksdb/java/jdb_bench.sh

0 → 100755

rocksdb/java/rocksjni.pom

0 → 100644

rocksdb/java/rocksjni/env.cc

0 → 100644

rocksdb/java/rocksjni/filter.cc

0 → 100644

rocksdb/java/rocksjni/iterator.cc

0 → 100644

rocksdb/java/rocksjni/options.cc

0 → 100644

rocksdb/java/rocksjni/portal.h

0 → 100644

rocksdb/java/rocksjni/rocksjni.cc

0 → 100644

rocksdb/java/rocksjni/slice.cc

0 → 100644

rocksdb/java/rocksjni/snapshot.cc

0 → 100644

rocksdb/java/rocksjni/table.cc

0 → 100644

rocksdb/java/rocksjni/ttl.cc

0 → 100644

rocksdb/port/README

0 → 100644

rocksdb/port/dirent.h

0 → 100644

rocksdb/port/likely.h

0 → 100644

rocksdb/port/port.h

0 → 100644

rocksdb/port/port_example.h

0 → 100644

rocksdb/port/port_posix.cc

0 → 100644

rocksdb/port/port_posix.h

0 → 100644

rocksdb/port/stack_trace.cc

0 → 100644

rocksdb/port/stack_trace.h

0 → 100644

rocksdb/port/sys_time.h

0 → 100644

rocksdb/port/util_logger.h

0 → 100644

rocksdb/port/win/env_win.cc

0 → 100644

rocksdb/port/win/port_win.cc

0 → 100644

rocksdb/port/win/port_win.h

0 → 100644

rocksdb/port/win/win_logger.cc

0 → 100644

rocksdb/port/win/win_logger.h

0 → 100644

rocksdb/src.mk

0 → 100644

rocksdb/table/block.cc

0 → 100644

rocksdb/table/block.h

0 → 100644

rocksdb/table/block_builder.cc

0 → 100644

rocksdb/table/block_builder.h

0 → 100644

rocksdb/table/block_hash_index.cc

0 → 100644

rocksdb/table/block_hash_index.h

0 → 100644

rocksdb/table/block_test.cc

0 → 100644

rocksdb/table/bloom_block.cc

0 → 100644

rocksdb/table/bloom_block.h

0 → 100644

rocksdb/table/filter_block.h

0 → 100644

rocksdb/table/format.cc

0 → 100644

rocksdb/table/format.h

0 → 100644

rocksdb/table/full_filter_block.h

0 → 100644

rocksdb/table/get_context.cc

0 → 100644

rocksdb/table/get_context.h

0 → 100644

rocksdb/table/iter_heap.h

0 → 100644

rocksdb/table/iterator.cc

0 → 100644

rocksdb/table/iterator_wrapper.h

0 → 100644

rocksdb/table/merger.cc

0 → 100644

rocksdb/table/merger.h

0 → 100644

rocksdb/table/merger_test.cc

0 → 100644

rocksdb/table/meta_blocks.cc

0 → 100644

rocksdb/table/meta_blocks.h

0 → 100644

rocksdb/table/mock_table.cc

0 → 100644

rocksdb/table/mock_table.h

0 → 100644

rocksdb/table/plain_table_index.h

0 → 100644

rocksdb/table/sst_file_writer.cc

0 → 100644

rocksdb/table/table_builder.h

0 → 100644

rocksdb/table/table_properties.cc

0 → 100644

rocksdb/table/table_reader.h

0 → 100644

rocksdb/table/table_test.cc

0 → 100644

rocksdb/thirdparty.inc

0 → 100644

rocksdb/tools/Dockerfile

0 → 100644

rocksdb/tools/auto_sanity_test.sh

0 → 100755

rocksdb/tools/benchmark.sh

0 → 100755

rocksdb/tools/db_crashtest.py

0 → 100644

rocksdb/tools/db_crashtest2.py

0 → 100644

rocksdb/tools/db_repl_stress.cc

0 → 100644

rocksdb/tools/db_sanity_test.cc

0 → 100644

rocksdb/tools/db_stress.cc

0 → 100644

rocksdb/tools/dbench_monitor

0 → 100755

rocksdb/tools/ldb.cc

0 → 100644

rocksdb/tools/ldb_test.py

0 → 100644

rocksdb/tools/pflag

0 → 100755

rocksdb/tools/rdb/.gitignore

0 → 100644

rocksdb/tools/rdb/API.md

0 → 100644

rocksdb/tools/rdb/README.md

0 → 100644

rocksdb/tools/rdb/binding.gyp

0 → 100644

rocksdb/tools/rdb/db_wrapper.cc

0 → 100644

rocksdb/tools/rdb/db_wrapper.h

0 → 100644

rocksdb/tools/rdb/rdb

0 → 100755

rocksdb/tools/rdb/rdb.cc

0 → 100644

rocksdb/tools/rdb/unit_test.js

0 → 100644

rocksdb/tools/run_flash_bench.sh

0 → 100755

rocksdb/tools/run_leveldb.sh

0 → 100755

rocksdb/tools/sample-dump.dmp

0 → 100644

rocksdb/tools/sst_dump.cc

0 → 100644

rocksdb/tools/verify_random_db.sh

0 → 100755

rocksdb/util/aligned_buffer.h

0 → 100644

rocksdb/util/allocator.h

0 → 100644

rocksdb/util/arena.cc

0 → 100644

rocksdb/util/arena.h

0 → 100644

rocksdb/util/arena_test.cc

0 → 100644

rocksdb/util/auto_roll_logger.cc

0 → 100644

rocksdb/util/auto_roll_logger.h

0 → 100644

rocksdb/util/autovector.h

0 → 100644

rocksdb/util/autovector_test.cc

0 → 100644

rocksdb/util/bloom.cc

0 → 100644

rocksdb/util/bloom_test.cc

0 → 100644

rocksdb/util/build_version.h

0 → 100644

rocksdb/util/cache.cc

0 → 100644

rocksdb/util/cache_bench.cc

0 → 100644

rocksdb/util/cache_test.cc

0 → 100644

rocksdb/util/channel.h

0 → 100644

rocksdb/util/coding.cc

0 → 100644

rocksdb/util/coding.h

0 → 100644

rocksdb/util/coding_test.cc

0 → 100644

rocksdb/util/comparator.cc

0 → 100644

rocksdb/util/compression.h

0 → 100644

rocksdb/util/crc32c.cc

0 → 100644

rocksdb/util/crc32c.h

0 → 100644

rocksdb/util/crc32c_test.cc

0 → 100644

rocksdb/util/db_info_dumper.cc

0 → 100644

rocksdb/util/db_info_dumper.h

0 → 100644

rocksdb/util/db_test_util.cc

0 → 100644

rocksdb/util/db_test_util.h

0 → 100644

rocksdb/util/dynamic_bloom.cc

0 → 100644

rocksdb/util/dynamic_bloom.h

0 → 100644

rocksdb/util/env.cc

0 → 100644

rocksdb/util/env_hdfs.cc

0 → 100644

rocksdb/util/env_posix.cc

0 → 100644

rocksdb/util/env_test.cc

0 → 100644

rocksdb/util/event_logger.cc

0 → 100644

rocksdb/util/event_logger.h

0 → 100644

rocksdb/util/event_logger_test.cc

0 → 100644

rocksdb/util/file_reader_writer.h

0 → 100644

rocksdb/util/file_util.cc

0 → 100644

rocksdb/util/file_util.h

0 → 100644

rocksdb/util/filelock_test.cc

0 → 100644

rocksdb/util/filter_policy.cc

0 → 100644

rocksdb/util/hash.cc

0 → 100644

rocksdb/util/hash.h

0 → 100644

rocksdb/util/hash_cuckoo_rep.cc

0 → 100644

rocksdb/util/hash_cuckoo_rep.h

0 → 100644

rocksdb/util/hash_linklist_rep.cc

0 → 100644

rocksdb/util/hash_linklist_rep.h

0 → 100644

rocksdb/util/hash_skiplist_rep.cc

0 → 100644

rocksdb/util/hash_skiplist_rep.h

0 → 100644

rocksdb/util/heap.h

0 → 100644

rocksdb/util/heap_test.cc

0 → 100644

rocksdb/util/histogram.cc

0 → 100644

rocksdb/util/histogram.h

0 → 100644

rocksdb/util/histogram_test.cc

0 → 100644

rocksdb/util/instrumented_mutex.h

0 → 100644

rocksdb/util/iostats_context.cc

0 → 100644

rocksdb/util/ldb_cmd.cc

0 → 100644

rocksdb/util/ldb_cmd.h

0 → 100644

rocksdb/util/ldb_cmd_test.cc

0 → 100644

rocksdb/util/ldb_tool.cc

0 → 100644

rocksdb/util/log_buffer.cc

0 → 100644

rocksdb/util/log_buffer.h

0 → 100644

rocksdb/util/log_write_bench.cc

0 → 100644

rocksdb/util/logging.cc

0 → 100644

rocksdb/util/logging.h

0 → 100644

rocksdb/util/memenv.cc

0 → 100644

rocksdb/util/memenv_test.cc

0 → 100644

rocksdb/util/mock_env.cc

0 → 100644

rocksdb/util/mock_env.h

0 → 100644

rocksdb/util/mock_env_test.cc

0 → 100644

rocksdb/util/murmurhash.cc

0 → 100644

rocksdb/util/murmurhash.h

0 → 100644

rocksdb/util/mutable_cf_options.h

0 → 100644

rocksdb/util/mutexlock.h

0 → 100644

rocksdb/util/options.cc

0 → 100644

rocksdb/util/options_builder.cc

0 → 100644

rocksdb/util/options_helper.cc

0 → 100644

rocksdb/util/options_helper.h

0 → 100644

rocksdb/util/options_parser.cc

0 → 100644

rocksdb/util/options_parser.h

0 → 100644

rocksdb/util/options_test.cc

0 → 100644

rocksdb/util/perf_context.cc

0 → 100644

rocksdb/util/perf_context_imp.h

0 → 100644

rocksdb/util/perf_level.cc

0 → 100644

rocksdb/util/perf_level_imp.h

0 → 100644

rocksdb/util/perf_step_timer.h

0 → 100644

rocksdb/util/posix_logger.h

0 → 100644

rocksdb/util/random.h

0 → 100644

rocksdb/util/rate_limiter.cc

0 → 100644

rocksdb/util/rate_limiter.h

0 → 100644

rocksdb/util/rate_limiter_test.cc

0 → 100644

rocksdb/util/skiplistrep.cc

0 → 100644

rocksdb/util/slice.cc

0 → 100644

rocksdb/util/sst_dump_test.cc

0 → 100644

rocksdb/util/sst_dump_tool.cc

0 → 100644

rocksdb/util/sst_dump_tool_imp.h

0 → 100644

rocksdb/util/statistics.cc

0 → 100644

rocksdb/util/statistics.h

0 → 100644

rocksdb/util/status.cc

0 → 100644

rocksdb/util/status_message.cc

0 → 100644

rocksdb/util/stl_wrappers.h

0 → 100644

rocksdb/util/stop_watch.h

0 → 100644

rocksdb/util/string_util.cc

0 → 100644

rocksdb/util/string_util.h

0 → 100644

rocksdb/util/sync_point.cc

0 → 100644

rocksdb/util/sync_point.h

0 → 100644

rocksdb/util/testharness.cc

0 → 100644

rocksdb/util/testharness.h

0 → 100644

rocksdb/util/testutil.cc

0 → 100644

rocksdb/util/testutil.h

0 → 100644

rocksdb/util/thread_list_test.cc

0 → 100644

rocksdb/util/thread_local.cc

0 → 100644

rocksdb/util/thread_local.h

0 → 100644

rocksdb/util/thread_local_test.cc

0 → 100644

rocksdb/util/thread_operation.h

0 → 100644

rocksdb/util/thread_status_util.h

0 → 100644

rocksdb/util/vectorrep.cc

0 → 100644

rocksdb/util/xfunc.cc

0 → 100644

rocksdb/util/xfunc.h

0 → 100644

rocksdb/util/xxhash.cc

0 → 100644

rocksdb/util/xxhash.h

0 → 100644

rocksdb/utilities/redis/README

0 → 100644

rocksdb/utilities/ttl/ttl_test.cc

0 → 100644

run.sh

0 → 100755

scripts/bump_version.sh

0 → 100755

scripts/carrot

0 → 100755

scripts/clear_zk.sh

0 → 100755

scripts/migrate_node.sh

0 → 100755

scripts/pack_client.sh

0 → 100755

scripts/pack_server.sh

0 → 100755

scripts/pack_tools.sh

0 → 100755

scripts/pegasus_bench_run.sh

0 → 100755

scripts/pegasus_kill_test.sh

0 → 100755

scripts/pegasus_rolling_update.sh

0 → 100755

scripts/pegasus_stat_available.sh

0 → 100755

scripts/redis_proto_check.py

0 → 100755

scripts/scp-no-interactive

0 → 100755

scripts/sendmail.sh

0 → 100755

scripts/ssh-no-interactive

0 → 100755

scripts/start_zk.sh

0 → 100755

scripts/stop_zk.sh

0 → 100755

scripts/update_qt_config.sh

0 → 100755

src/.clang-format

0 → 100644

src/CMakeLists.txt

0 → 100644

src/base/CMakeLists.txt

0 → 100644

src/base/counter_utils.h

0 → 100644

src/base/pegasus_const.h

0 → 100644

src/base/pegasus_key_schema.h

0 → 100644

src/base/pegasus_utils.cpp

0 → 100644

src/base/pegasus_utils.h

0 → 100644

src/base/pegasus_value_schema.h

0 → 100644

src/base/rrdb_types.cpp

0 → 100644

src/build.sh

0 → 100755

src/client_lib/CMakeLists.txt

0 → 100644

src/client_lib/client_factory.cpp

0 → 100644

src/config-bench.ini

0 → 100644

src/ext/libevent/include/evdns.h

0 → 100644

src/ext/libevent/include/event.h

0 → 100644

src/ext/libevent/include/evhttp.h

0 → 100644

src/ext/libevent/include/evrpc.h

0 → 100644

src/ext/libevent/include/evutil.h

0 → 100644

src/ext/libevent/lib/libevent.la

0 → 100755

src/ext/libevent/lib/libevent.so

0 → 120000

src/idl/dsn.thrift

0 → 100644

src/idl/recompile_thrift.sh

0 → 100755

src/idl/rrdb.thrift

0 → 100644

src/idl/rrdb.thrift.annotations

0 → 100644

src/include/pegasus/client.h

0 → 100644

src/include/pegasus/error.h

0 → 100644

src/include/pegasus/error_def.h

0 → 100644

src/include/pegasus/version.h

0 → 100644

src/include/rrdb/rrdb.client.h

0 → 100644

src/include/rrdb/rrdb.server.h

0 → 100644

src/include/rrdb/rrdb.types.h

0 → 100644

src/include/rrdb/rrdb_types.h

0 → 100644

src/redis_protocol/CMakeLists.txt

0 → 100644

src/redis_protocol/proxy/main.cpp

0 → 100644

src/sample/Makefile

0 → 100644

src/sample/README

0 → 100644

src/sample/config-sample.ini

0 → 100644

src/sample/main.cpp

0 → 100644

src/sample/run.sh

0 → 100755

src/server/CMakeLists.txt

0 → 100644

src/server/available_detector.cpp

0 → 100644

src/server/available_detector.h

0 → 100644

src/server/config-server.ini

0 → 100644

src/server/config.ini

0 → 100644

src/server/info_collector.cpp

0 → 100644

src/server/info_collector.h

0 → 100644

src/server/info_collector_app.cpp

0 → 100644

src/server/info_collector_app.h

0 → 100644

src/server/main.cpp

0 → 100644

src/server/pegasus_io_service.h

0 → 100644

src/server/pegasus_perf_counter.h

0 → 100644

src/server/pegasus_scan_context.h

0 → 100644

src/server/pegasus_server_impl.h

0 → 100644

src/server/pegasus_service_app.h

0 → 100644

src/server/profiler-template.ini

0 → 100644

src/shell/CMakeLists.txt

0 → 100644

src/shell/args.h

0 → 100644

src/shell/command_executor.h

0 → 100644

src/shell/command_helper.h

0 → 100644

src/shell/command_utils.h

0 → 100644

src/shell/commands.h

0 → 100644

src/shell/config.ini

0 → 100644

src/shell/main.cpp

0 → 100644

src/test/function_test/config.ini

0 → 100644

src/test/function_test/main.cpp

0 → 100644

src/test/function_test/run.sh

0 → 100755

src/test/function_test/utils.h

0 → 100644

src/test/kill_test/CMakeLists.txt

0 → 100644

src/test/kill_test/config.ini

0 → 100644

src/test/kill_test/job.cpp

0 → 100644

src/test/kill_test/job.h

0 → 100644

src/test/kill_test/kill_testor.h

0 → 100644

src/test/kill_test/main.cpp

0 → 100644

src/test/pressure_test/main.cpp

0 → 100644
