init commit for build whl

MANIFEST.in

0 → 100644

__init__.py

0 → 100644

doc/doc_ch/whl.md

0 → 100644

doc/doc_en/whl.md

0 → 100644

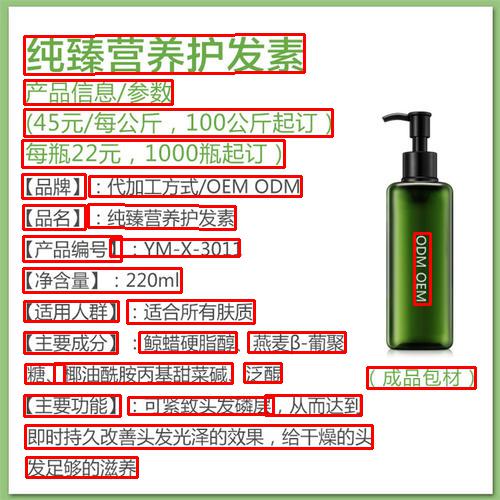

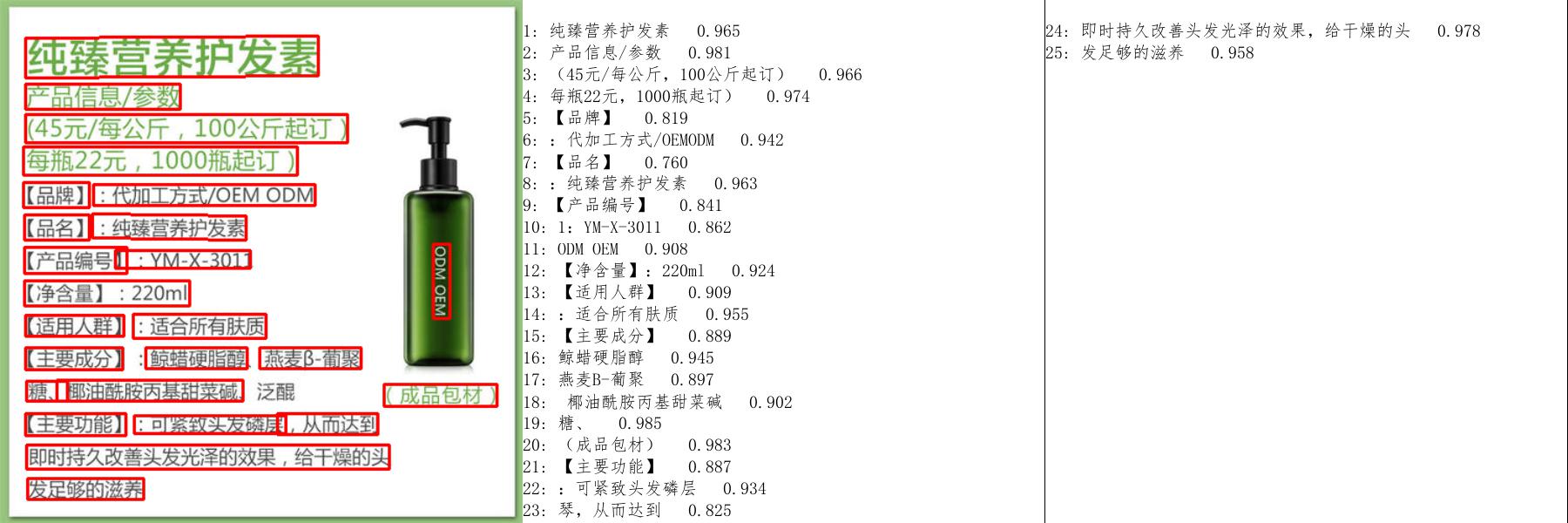

doc/imgs_results/whl/11_det.jpg

0 → 100644

61.4 KB

135.0 KB

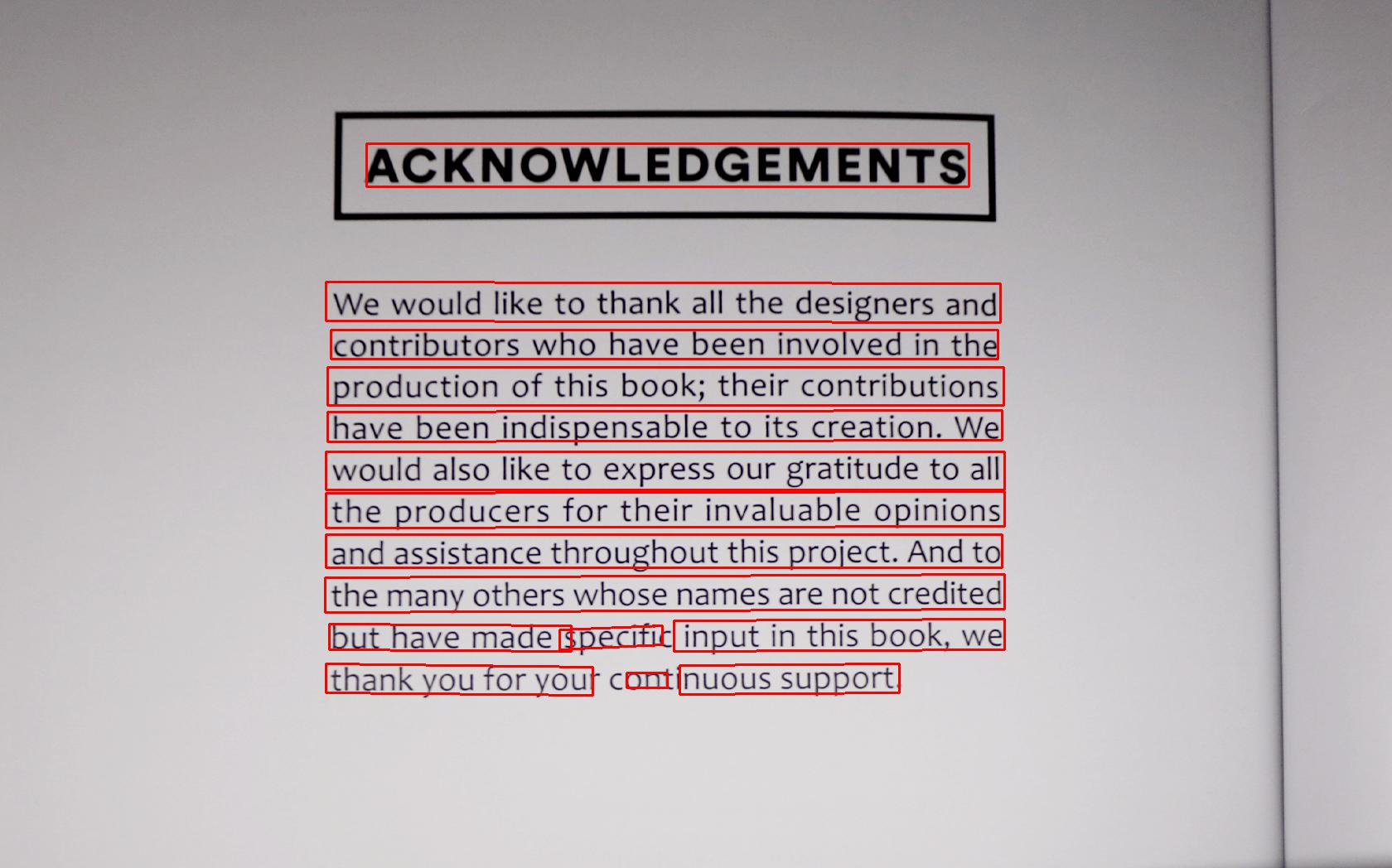

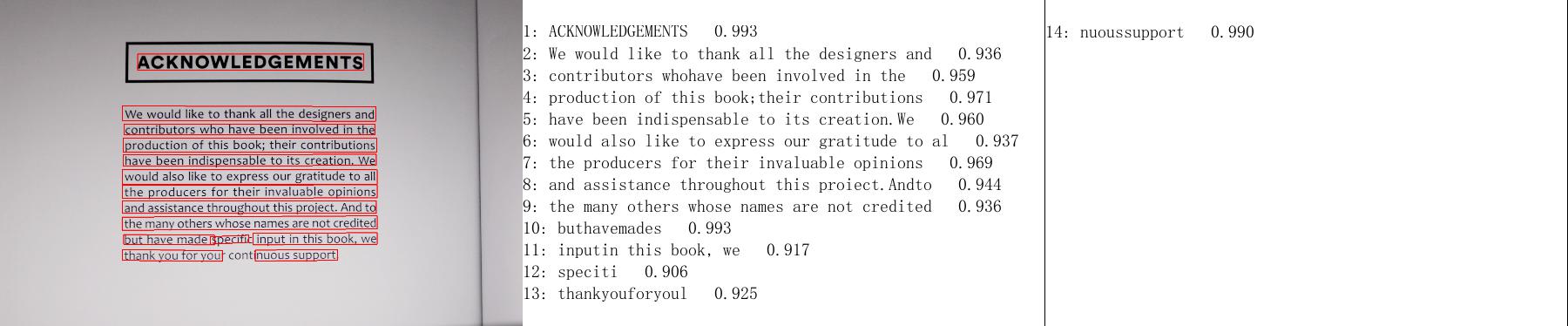

doc/imgs_results/whl/12_det.jpg

0 → 100644

166.3 KB

84.0 KB

paddleocr.py

0 → 100644

setup.py

0 → 100644