@@ -196,8 +198,8 @@ PaddleOCR旨在打造一套丰富、领先、且实用的OCR工具库,助力

-

-- RE(关系提取)

+

+- RE(关系提取)

diff --git "a/applications/\345\244\232\346\250\241\346\200\201\350\241\250\345\215\225\350\257\206\345\210\253.md" "b/applications/\345\244\232\346\250\241\346\200\201\350\241\250\345\215\225\350\257\206\345\210\253.md"

index a831813c1504a0d00db23f0218154cbf36741118..2143a6da86abb5eb83788944cd0381b91dad86c1 100644

--- "a/applications/\345\244\232\346\250\241\346\200\201\350\241\250\345\215\225\350\257\206\345\210\253.md"

+++ "b/applications/\345\244\232\346\250\241\346\200\201\350\241\250\345\215\225\350\257\206\345\210\253.md"

@@ -16,7 +16,7 @@

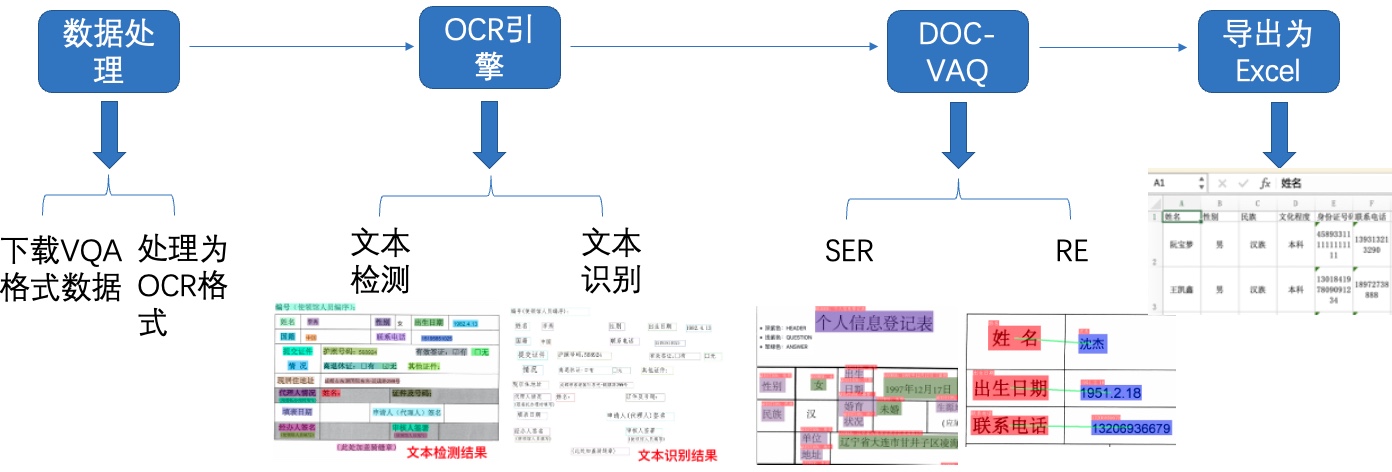

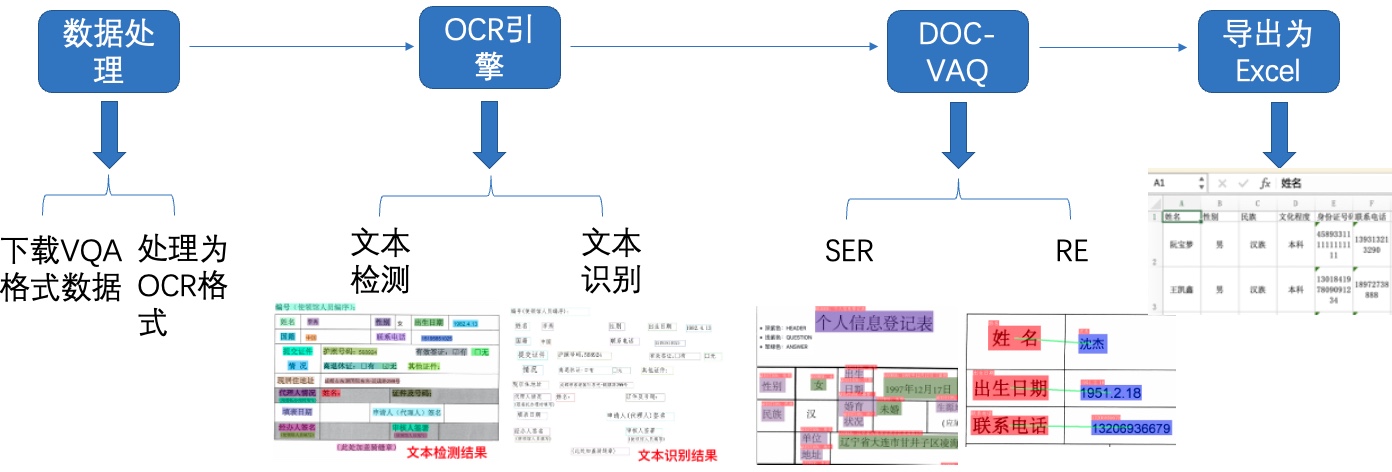

图1 多模态表单识别流程图

-注:欢迎再AIStudio领取免费算力体验线上实训,项目链接: 多模态表单识别](https://aistudio.baidu.com/aistudio/projectdetail/3815918)(配备Tesla V100、A100等高级算力资源)

+注:欢迎再AIStudio领取免费算力体验线上实训,项目链接: [多模态表单识别](https://aistudio.baidu.com/aistudio/projectdetail/3815918)(配备Tesla V100、A100等高级算力资源)

diff --git a/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_cml.yml b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_cml.yml

new file mode 100644

index 0000000000000000000000000000000000000000..3e77577c17abe2111c501d96ce6b1087ac44f8d6

--- /dev/null

+++ b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_cml.yml

@@ -0,0 +1,234 @@

+Global:

+ debug: false

+ use_gpu: true

+ epoch_num: 500

+ log_smooth_window: 20

+ print_batch_step: 10

+ save_model_dir: ./output/ch_PP-OCR_v3_det/

+ save_epoch_step: 100

+ eval_batch_step:

+ - 0

+ - 400

+ cal_metric_during_train: false

+ pretrained_model: null

+ checkpoints: null

+ save_inference_dir: null

+ use_visualdl: false

+ infer_img: doc/imgs_en/img_10.jpg

+ save_res_path: ./checkpoints/det_db/predicts_db.txt

+ distributed: true

+

+Architecture:

+ name: DistillationModel

+ algorithm: Distillation

+ model_type: det

+ Models:

+ Student:

+ model_type: det

+ algorithm: DB

+ Transform: null

+ Backbone:

+ name: MobileNetV3

+ scale: 0.5

+ model_name: large

+ disable_se: true

+ Neck:

+ name: RSEFPN

+ out_channels: 96

+ shortcut: True

+ Head:

+ name: DBHead

+ k: 50

+ Student2:

+ model_type: det

+ algorithm: DB

+ Transform: null

+ Backbone:

+ name: MobileNetV3

+ scale: 0.5

+ model_name: large

+ disable_se: true

+ Neck:

+ name: RSEFPN

+ out_channels: 96

+ shortcut: True

+ Head:

+ name: DBHead

+ k: 50

+ Teacher:

+ freeze_params: true

+ return_all_feats: false

+ model_type: det

+ algorithm: DB

+ Backbone:

+ name: ResNet

+ in_channels: 3

+ layers: 50

+ Neck:

+ name: LKPAN

+ out_channels: 256

+ Head:

+ name: DBHead

+ kernel_list: [7,2,2]

+ k: 50

+

+Loss:

+ name: CombinedLoss

+ loss_config_list:

+ - DistillationDilaDBLoss:

+ weight: 1.0

+ model_name_pairs:

+ - ["Student", "Teacher"]

+ - ["Student2", "Teacher"]

+ key: maps

+ balance_loss: true

+ main_loss_type: DiceLoss

+ alpha: 5

+ beta: 10

+ ohem_ratio: 3

+ - DistillationDMLLoss:

+ model_name_pairs:

+ - ["Student", "Student2"]

+ maps_name: "thrink_maps"

+ weight: 1.0

+ # act: None

+ model_name_pairs: ["Student", "Student2"]

+ key: maps

+ - DistillationDBLoss:

+ weight: 1.0

+ model_name_list: ["Student", "Student2"]

+ # key: maps

+ # name: DBLoss

+ balance_loss: true

+ main_loss_type: DiceLoss

+ alpha: 5

+ beta: 10

+ ohem_ratio: 3

+

+Optimizer:

+ name: Adam

+ beta1: 0.9

+ beta2: 0.999

+ lr:

+ name: Cosine

+ learning_rate: 0.001

+ warmup_epoch: 2

+ regularizer:

+ name: L2

+ factor: 5.0e-05

+

+PostProcess:

+ name: DistillationDBPostProcess

+ model_name: ["Student"]

+ key: head_out

+ thresh: 0.3

+ box_thresh: 0.6

+ max_candidates: 1000

+ unclip_ratio: 1.5

+

+Metric:

+ name: DistillationMetric

+ base_metric_name: DetMetric

+ main_indicator: hmean

+ key: "Student"

+

+Train:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/train_icdar2015_label.txt

+ ratio_list: [1.0]

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - CopyPaste:

+ - IaaAugment:

+ augmenter_args:

+ - type: Fliplr

+ args:

+ p: 0.5

+ - type: Affine

+ args:

+ rotate:

+ - -10

+ - 10

+ - type: Resize

+ args:

+ size:

+ - 0.5

+ - 3

+ - EastRandomCropData:

+ size:

+ - 960

+ - 960

+ max_tries: 50

+ keep_ratio: true

+ - MakeBorderMap:

+ shrink_ratio: 0.4

+ thresh_min: 0.3

+ thresh_max: 0.7

+ - MakeShrinkMap:

+ shrink_ratio: 0.4

+ min_text_size: 8

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - threshold_map

+ - threshold_mask

+ - shrink_map

+ - shrink_mask

+ loader:

+ shuffle: true

+ drop_last: false

+ batch_size_per_card: 8

+ num_workers: 4

+Eval:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/test_icdar2015_label.txt

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - DetResizeForTest: null

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - shape

+ - polys

+ - ignore_tags

+ loader:

+ shuffle: false

+ drop_last: false

+ batch_size_per_card: 1

+ num_workers: 2

diff --git a/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_student.yml b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_student.yml

new file mode 100644

index 0000000000000000000000000000000000000000..0e8af776479ea26f834ca9ddc169f80b3982e86d

--- /dev/null

+++ b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_student.yml

@@ -0,0 +1,163 @@

+Global:

+ debug: false

+ use_gpu: true

+ epoch_num: 500

+ log_smooth_window: 20

+ print_batch_step: 10

+ save_model_dir: ./output/ch_PP-OCR_V3_det/

+ save_epoch_step: 100

+ eval_batch_step:

+ - 0

+ - 400

+ cal_metric_during_train: false

+ pretrained_model: null

+ checkpoints: null

+ save_inference_dir: null

+ use_visualdl: false

+ infer_img: doc/imgs_en/img_10.jpg

+ save_res_path: ./checkpoints/det_db/predicts_db.txt

+ distributed: true

+

+Architecture:

+ model_type: det

+ algorithm: DB

+ Transform:

+ Backbone:

+ name: MobileNetV3

+ scale: 0.5

+ model_name: large

+ disable_se: True

+ Neck:

+ name: RSEFPN

+ out_channels: 96

+ shortcut: True

+ Head:

+ name: DBHead

+ k: 50

+

+Loss:

+ name: DBLoss

+ balance_loss: true

+ main_loss_type: DiceLoss

+ alpha: 5

+ beta: 10

+ ohem_ratio: 3

+Optimizer:

+ name: Adam

+ beta1: 0.9

+ beta2: 0.999

+ lr:

+ name: Cosine

+ learning_rate: 0.001

+ warmup_epoch: 2

+ regularizer:

+ name: L2

+ factor: 5.0e-05

+PostProcess:

+ name: DBPostProcess

+ thresh: 0.3

+ box_thresh: 0.6

+ max_candidates: 1000

+ unclip_ratio: 1.5

+Metric:

+ name: DetMetric

+ main_indicator: hmean

+Train:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/train_icdar2015_label.txt

+ ratio_list: [1.0]

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - IaaAugment:

+ augmenter_args:

+ - type: Fliplr

+ args:

+ p: 0.5

+ - type: Affine

+ args:

+ rotate:

+ - -10

+ - 10

+ - type: Resize

+ args:

+ size:

+ - 0.5

+ - 3

+ - EastRandomCropData:

+ size:

+ - 960

+ - 960

+ max_tries: 50

+ keep_ratio: true

+ - MakeBorderMap:

+ shrink_ratio: 0.4

+ thresh_min: 0.3

+ thresh_max: 0.7

+ - MakeShrinkMap:

+ shrink_ratio: 0.4

+ min_text_size: 8

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - threshold_map

+ - threshold_mask

+ - shrink_map

+ - shrink_mask

+ loader:

+ shuffle: true

+ drop_last: false

+ batch_size_per_card: 8

+ num_workers: 4

+Eval:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/test_icdar2015_label.txt

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - DetResizeForTest: null

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - shape

+ - polys

+ - ignore_tags

+ loader:

+ shuffle: false

+ drop_last: false

+ batch_size_per_card: 1

+ num_workers: 2

diff --git a/configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec.yml b/configs/rec/PP-OCRv3/ch_PP-OCRv3_rec.yml

similarity index 100%

rename from configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec.yml

rename to configs/rec/PP-OCRv3/ch_PP-OCRv3_rec.yml

diff --git a/configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml b/configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

similarity index 100%

rename from configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

rename to configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

diff --git a/doc/doc_ch/docvqa_datasets.md b/configs/rec/PP-OCRv3/multi_language/.gitkeep

similarity index 100%

rename from doc/doc_ch/docvqa_datasets.md

rename to configs/rec/PP-OCRv3/multi_language/.gitkeep

diff --git a/deploy/slim/quantization/export_model.py b/deploy/slim/quantization/export_model.py

index 90f79dab34a5f20d4556ae4b10ad1d4e1f8b7f0d..fd1c3e5e109667fa74f5ade18b78f634e4d325db 100755

--- a/deploy/slim/quantization/export_model.py

+++ b/deploy/slim/quantization/export_model.py

@@ -17,9 +17,9 @@ import sys

__dir__ = os.path.dirname(os.path.abspath(__file__))

sys.path.append(__dir__)

-sys.path.append(os.path.abspath(os.path.join(__dir__, '..', '..', '..')))

-sys.path.append(

- os.path.abspath(os.path.join(__dir__, '..', '..', '..', 'tools')))

+sys.path.insert(0, os.path.abspath(os.path.join(__dir__, '..', '..', '..')))

+sys.path.insert(

+ 0, os.path.abspath(os.path.join(__dir__, '..', '..', '..', 'tools')))

import argparse

@@ -129,7 +129,6 @@ def main():

quanter.quantize(model)

load_model(config, model)

- model.eval()

# build metric

eval_class = build_metric(config['Metric'])

@@ -142,6 +141,7 @@ def main():

# start eval

metric = program.eval(model, valid_dataloader, post_process_class,

eval_class, model_type, use_srn)

+ model.eval()

logger.info('metric eval ***************')

for k, v in metric.items():

@@ -156,7 +156,6 @@ def main():

if arch_config["algorithm"] in ["Distillation", ]: # distillation model

archs = list(arch_config["Models"].values())

for idx, name in enumerate(model.model_name_list):

- model.model_list[idx].eval()

sub_model_save_path = os.path.join(save_path, name, "inference")

export_single_model(model.model_list[idx], archs[idx],

sub_model_save_path, logger, quanter)

diff --git a/doc/datasets/funsd_demo/gt_train_00040534.jpg b/doc/datasets/funsd_demo/gt_train_00040534.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..9f7cf4d4977689b73e2ca91cbe9c877bb8f0c7ff

Binary files /dev/null and b/doc/datasets/funsd_demo/gt_train_00040534.jpg differ

diff --git a/doc/datasets/funsd_demo/gt_train_00070353.jpg b/doc/datasets/funsd_demo/gt_train_00070353.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..36d3345e5ec4c262764e63a972aaa82e98877681

Binary files /dev/null and b/doc/datasets/funsd_demo/gt_train_00070353.jpg differ

diff --git a/doc/datasets/table_PubTabNet_demo/PMC524509_007_00.png b/doc/datasets/table_PubTabNet_demo/PMC524509_007_00.png

new file mode 100755

index 0000000000000000000000000000000000000000..5b9d631cba434e4bd6ac6fe2108b7f6c081c4811

Binary files /dev/null and b/doc/datasets/table_PubTabNet_demo/PMC524509_007_00.png differ

diff --git a/doc/datasets/table_PubTabNet_demo/PMC535543_007_01.png b/doc/datasets/table_PubTabNet_demo/PMC535543_007_01.png

new file mode 100755

index 0000000000000000000000000000000000000000..e808de72d62325ae4cbd009397b7beaeed0d88fc

Binary files /dev/null and b/doc/datasets/table_PubTabNet_demo/PMC535543_007_01.png differ

diff --git a/doc/datasets/table_tal_demo/1.jpg b/doc/datasets/table_tal_demo/1.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..e7ddd6d1db59ca27a0461ab93b3672aeec4a8941

Binary files /dev/null and b/doc/datasets/table_tal_demo/1.jpg differ

diff --git a/doc/datasets/table_tal_demo/2.jpg b/doc/datasets/table_tal_demo/2.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..e7ddd6d1db59ca27a0461ab93b3672aeec4a8941

Binary files /dev/null and b/doc/datasets/table_tal_demo/2.jpg differ

diff --git a/doc/datasets/xfund_demo/gt_zh_train_0.jpg b/doc/datasets/xfund_demo/gt_zh_train_0.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..6fdaf12fa1d79e6ea9029d665ab7488223459436

Binary files /dev/null and b/doc/datasets/xfund_demo/gt_zh_train_0.jpg differ

diff --git a/doc/datasets/xfund_demo/gt_zh_train_1.jpg b/doc/datasets/xfund_demo/gt_zh_train_1.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..6a1e53a3ba09b6f84809cfd10a15c42f42b9a163

Binary files /dev/null and b/doc/datasets/xfund_demo/gt_zh_train_1.jpg differ

diff --git a/doc/doc_ch/algorithm_det_fcenet.md b/doc/doc_ch/algorithm_det_fcenet.md

new file mode 100644

index 0000000000000000000000000000000000000000..bd2e734204d32bbf575ddea9f889953a72582c59

--- /dev/null

+++ b/doc/doc_ch/algorithm_det_fcenet.md

@@ -0,0 +1,104 @@

+# FCENet

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Fourier Contour Embedding for Arbitrary-Shaped Text Detection](https://arxiv.org/abs/2104.10442)

+> Yiqin Zhu and Jianyong Chen and Lingyu Liang and Zhanghui Kuang and Lianwen Jin and Wayne Zhang

+> CVPR, 2021

+

+在CTW1500文本检测公开数据集上,算法复现效果如下:

+

+| 模型 |骨干网络|配置文件|precision|recall|Hmean|下载链接|

+|-----| --- | --- | --- | --- | --- | --- |

+| FCE | ResNet50_dcn | [configs/det/det_r50_vd_dcn_fce_ctw.yml](../../configs/det/det_r50_vd_dcn_fce_ctw.yml)| 88.39%|82.18%|85.27%|[训练模型](https://paddleocr.bj.bcebos.com/contribution/det_r50_dcn_fce_ctw_v2.0_train.tar)|

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+上述FCE模型使用CTW1500文本检测公开数据集训练得到,数据集下载可参考 [ocr_datasets](./dataset/ocr_datasets.md)。

+

+数据下载完成后,请参考[文本检测训练教程](./detection.md)进行训练。PaddleOCR对代码进行了模块化,训练不同的检测模型只需要**更换配置文件**即可。

+

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将FCE文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd_dcn骨干网络,在CTW1500英文数据集训练的模型为例( [模型下载地址](https://paddleocr.bj.bcebos.com/contribution/det_r50_dcn_fce_ctw_v2.0_train.tar) ),可以使用如下命令进行转换:

+

+```shell

+python3 tools/export_model.py -c configs/det/det_r50_vd_dcn_fce_ctw.yml -o Global.pretrained_model=./det_r50_dcn_fce_ctw_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_fce

+```

+

+FCE文本检测模型推理,执行非弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_fce/" --det_algorithm="FCE" --det_fce_box_type=quad

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+如果想执行弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img623.jpg" --det_model_dir="./inference/det_fce/" --det_algorithm="FCE" --det_fce_box_type=poly

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+**注意**:由于CTW1500数据集只有1000张训练图像,且主要针对英文场景,所以上述模型对中文文本图像检测效果会比较差。

+

+

+### 4.2 C++推理

+

+由于后处理暂未使用CPP编写,FCE文本检测模型暂不支持CPP推理。

+

+

+### 4.3 Serving服务化部署

+

+暂未支持

+

+

+### 4.4 更多推理部署

+

+暂未支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@InProceedings{zhu2021fourier,

+ title={Fourier Contour Embedding for Arbitrary-Shaped Text Detection},

+ author={Yiqin Zhu and Jianyong Chen and Lingyu Liang and Zhanghui Kuang and Lianwen Jin and Wayne Zhang},

+ year={2021},

+ booktitle = {CVPR}

+}

+```

diff --git a/doc/doc_ch/algorithm_det_psenet.md b/doc/doc_ch/algorithm_det_psenet.md

new file mode 100644

index 0000000000000000000000000000000000000000..58d8ccf97292f4e988861b618697fb0e7694fbab

--- /dev/null

+++ b/doc/doc_ch/algorithm_det_psenet.md

@@ -0,0 +1,106 @@

+# PSENet

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Shape robust text detection with progressive scale expansion network](https://arxiv.org/abs/1903.12473)

+> Wang, Wenhai and Xie, Enze and Li, Xiang and Hou, Wenbo and Lu, Tong and Yu, Gang and Shao, Shuai

+> CVPR, 2019

+

+在ICDAR2015文本检测公开数据集上,算法复现效果如下:

+

+|模型|骨干网络|配置文件|precision|recall|Hmean|下载链接|

+| --- | --- | --- | --- | --- | --- | --- |

+|PSE| ResNet50_vd | [configs/det/det_r50_vd_pse.yml](../../configs/det/det_r50_vd_pse.yml)| 85.81% |79.53%|82.55%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.1/en_det/det_r50_vd_pse_v2.0_train.tar)|

+|PSE| MobileNetV3| [configs/det/det_mv3_pse.yml](../../configs/det/det_mv3_pse.yml) | 82.20% |70.48%|75.89%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.1/en_det/det_mv3_pse_v2.0_train.tar)|

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+上述PSE模型使用ICDAR2015文本检测公开数据集训练得到,数据集下载可参考 [ocr_datasets](./dataset/ocr_datasets.md)。

+

+数据下载完成后,请参考[文本检测训练教程](./detection.md)进行训练。PaddleOCR对代码进行了模块化,训练不同的检测模型只需要**更换配置文件**即可。

+

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将PSE文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd骨干网络,在ICDAR2015英文数据集训练的模型为例( [模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.1/en_det/det_r50_vd_pse_v2.0_train.tar) ),可以使用如下命令进行转换:

+

+```shell

+python3 tools/export_model.py -c configs/det/det_r50_vd_pse.yml -o Global.pretrained_model=./det_r50_vd_pse_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_pse

+```

+

+PSE文本检测模型推理,执行非弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_pse/" --det_algorithm="PSE" --det_pse_box_type=quad

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+如果想执行弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_pse/" --det_algorithm="PSE" --det_pse_box_type=poly

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+**注意**:由于ICDAR2015数据集只有1000张训练图像,且主要针对英文场景,所以上述模型对中文或弯曲文本图像检测效果会比较差。

+

+

+### 4.2 C++推理

+

+由于后处理暂未使用CPP编写,PSE文本检测模型暂不支持CPP推理。

+

+

+### 4.3 Serving服务化部署

+

+暂未支持

+

+

+### 4.4 更多推理部署

+

+暂未支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@inproceedings{wang2019shape,

+ title={Shape robust text detection with progressive scale expansion network},

+ author={Wang, Wenhai and Xie, Enze and Li, Xiang and Hou, Wenbo and Lu, Tong and Yu, Gang and Shao, Shuai},

+ booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

+ pages={9336--9345},

+ year={2019}

+}

+```

diff --git a/doc/doc_ch/algorithm_det_sast.md b/doc/doc_ch/algorithm_det_sast.md

new file mode 100644

index 0000000000000000000000000000000000000000..038d73fc15f3203bbcc17997c1a8e1c208f80ba8

--- /dev/null

+++ b/doc/doc_ch/algorithm_det_sast.md

@@ -0,0 +1,115 @@

+# SAST

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [A Single-Shot Arbitrarily-Shaped Text Detector based on Context Attended Multi-Task Learning](https://arxiv.org/abs/1908.05498)

+> Wang, Pengfei and Zhang, Chengquan and Qi, Fei and Huang, Zuming and En, Mengyi and Han, Junyu and Liu, Jingtuo and Ding, Errui and Shi, Guangming

+> ACM MM, 2019

+

+在ICDAR2015文本检测公开数据集上,算法复现效果如下:

+

+|模型|骨干网络|配置文件|precision|recall|Hmean|下载链接|

+| --- | --- | --- | --- | --- | --- | --- |

+|SAST|ResNet50_vd|[configs/det/det_r50_vd_sast_icdar15.yml](../../configs/det/det_r50_vd_sast_icdar15.yml)|91.39%|83.77%|87.42%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_icdar15_v2.0_train.tar)|

+

+

+在Total-text文本检测公开数据集上,算法复现效果如下:

+

+|模型|骨干网络|配置文件|precision|recall|Hmean|下载链接|

+| --- | --- | --- | --- | --- | --- | --- |

+|SAST|ResNet50_vd|[configs/det/det_r50_vd_sast_totaltext.yml](../../configs/det/det_r50_vd_sast_totaltext.yml)|89.63%|78.44%|83.66%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_totaltext_v2.0_train.tar)|

+

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+请参考[文本检测训练教程](./detection.md)。PaddleOCR对代码进行了模块化,训练不同的检测模型只需要**更换配置文件**即可。

+

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+#### (1). 四边形文本检测模型(ICDAR2015)

+首先将SAST文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd骨干网络,在ICDAR2015英文数据集训练的模型为例([模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_icdar15_v2.0_train.tar)),可以使用如下命令进行转换:

+```

+python3 tools/export_model.py -c configs/det/det_r50_vd_sast_icdar15.yml -o Global.pretrained_model=./det_r50_vd_sast_icdar15_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_sast_ic15

+

+```

+**SAST文本检测模型推理,需要设置参数`--det_algorithm="SAST"`**,可以执行如下命令:

+```

+python3 tools/infer/predict_det.py --det_algorithm="SAST" --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_sast_ic15/"

+```

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+#### (2). 弯曲文本检测模型(Total-Text)

+首先将SAST文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd骨干网络,在Total-Text英文数据集训练的模型为例([模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_totaltext_v2.0_train.tar)),可以使用如下命令进行转换:

+

+```

+python3 tools/export_model.py -c configs/det/det_r50_vd_sast_totaltext.yml -o Global.pretrained_model=./det_r50_vd_sast_totaltext_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_sast_tt

+

+```

+

+SAST文本检测模型推理,需要设置参数`--det_algorithm="SAST"`,同时,还需要增加参数`--det_sast_polygon=True`,可以执行如下命令:

+```

+python3 tools/infer/predict_det.py --det_algorithm="SAST" --image_dir="./doc/imgs_en/img623.jpg" --det_model_dir="./inference/det_sast_tt/" --det_sast_polygon=True

+```

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+**注意**:本代码库中,SAST后处理Locality-Aware NMS有python和c++两种版本,c++版速度明显快于python版。由于c++版本nms编译版本问题,只有python3.5环境下会调用c++版nms,其他情况将调用python版nms。

+

+

+### 4.2 C++推理

+

+暂未支持

+

+

+### 4.3 Serving服务化部署

+

+暂未支持

+

+

+### 4.4 更多推理部署

+

+暂未支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@inproceedings{wang2019single,

+ title={A Single-Shot Arbitrarily-Shaped Text Detector based on Context Attended Multi-Task Learning},

+ author={Wang, Pengfei and Zhang, Chengquan and Qi, Fei and Huang, Zuming and En, Mengyi and Han, Junyu and Liu, Jingtuo and Ding, Errui and Shi, Guangming},

+ booktitle={Proceedings of the 27th ACM International Conference on Multimedia},

+ pages={1277--1285},

+ year={2019}

+}

+```

diff --git a/doc/doc_ch/algorithm_rec_sar.md b/doc/doc_ch/algorithm_rec_sar.md

new file mode 100644

index 0000000000000000000000000000000000000000..b8304313994754480a89d708e39149d67f828c0d

--- /dev/null

+++ b/doc/doc_ch/algorithm_rec_sar.md

@@ -0,0 +1,114 @@

+# SAR

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Show, Attend and Read: A Simple and Strong Baseline for Irregular Text Recognition](https://arxiv.org/abs/1811.00751)

+> Hui Li, Peng Wang, Chunhua Shen, Guyu Zhang

+> AAAI, 2019

+

+使用MJSynth和SynthText两个文字识别数据集训练,在IIIT, SVT, IC03, IC13, IC15, SVTP, CUTE数据集上进行评估,算法复现效果如下:

+

+|模型|骨干网络|配置文件|Acc|下载链接|

+| --- | --- | --- | --- | --- |

+|SAR|ResNet31|[rec_r31_sar.yml](../../configs/rec/rec_r31_sar.yml)|87.20%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.1/rec/rec_r31_sar_train.tar)|

+

+注:除了使用MJSynth和SynthText两个文字识别数据集外,还加入了[SynthAdd](https://pan.baidu.com/share/init?surl=uV0LtoNmcxbO-0YA7Ch4dg)数据(提取码:627x),和部分真实数据,具体数据细节可以参考论文。

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+请参考[文本识别教程](./recognition.md)。PaddleOCR对代码进行了模块化,训练不同的识别模型只需要**更换配置文件**即可。

+

+训练

+

+具体地,在完成数据准备后,便可以启动训练,训练命令如下:

+

+```

+#单卡训练(训练周期长,不建议)

+python3 tools/train.py -c configs/rec/rec_r31_sar.yml

+

+#多卡训练,通过--gpus参数指定卡号

+python3 -m paddle.distributed.launch --gpus '0,1,2,3' tools/train.py -c configs/rec/rec_r31_sar.yml

+```

+

+评估

+

+```

+# GPU 评估, Global.pretrained_model 为待测权重

+python3 -m paddle.distributed.launch --gpus '0' tools/eval.py -c configs/rec/rec_r31_sar.yml -o Global.pretrained_model={path/to/weights}/best_accuracy

+```

+

+预测:

+

+```

+# 预测使用的配置文件必须与训练一致

+python3 tools/infer_rec.py -c configs/rec/rec_r31_sar.yml -o Global.pretrained_model={path/to/weights}/best_accuracy Global.infer_img=doc/imgs_words/en/word_1.png

+```

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将SAR文本识别训练过程中保存的模型,转换成inference model。( [模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.1/rec/rec_r31_sar_train.tar) ),可以使用如下命令进行转换:

+

+```

+python3 tools/export_model.py -c configs/rec/rec_r31_sar.yml -o Global.pretrained_model=./rec_r31_sar_train/best_accuracy Global.save_inference_dir=./inference/rec_sar

+```

+

+SAR文本识别模型推理,可以执行如下命令:

+

+```

+python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/en/word_1.png" --rec_model_dir="./inference/rec_sar/" --rec_image_shape="3, 48, 48, 160" --rec_char_type="ch" --rec_algorithm="SAR" --rec_char_dict_path="ppocr/utils/dict90.txt" --max_text_length=30 --use_space_char=False

+```

+

+

+### 4.2 C++推理

+

+由于C++预处理后处理还未支持SAR,所以暂未支持

+

+

+### 4.3 Serving服务化部署

+

+暂不支持

+

+

+### 4.4 更多推理部署

+

+暂不支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@article{Li2019ShowAA,

+ title={Show, Attend and Read: A Simple and Strong Baseline for Irregular Text Recognition},

+ author={Hui Li and Peng Wang and Chunhua Shen and Guyu Zhang},

+ journal={ArXiv},

+ year={2019},

+ volume={abs/1811.00751}

+}

+```

diff --git a/doc/doc_ch/algorithm_rec_srn.md b/doc/doc_ch/algorithm_rec_srn.md

new file mode 100644

index 0000000000000000000000000000000000000000..ca7961359eb902fafee959b26d02f324aece233a

--- /dev/null

+++ b/doc/doc_ch/algorithm_rec_srn.md

@@ -0,0 +1,113 @@

+# SRN

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Towards Accurate Scene Text Recognition with Semantic Reasoning Networks](https://arxiv.org/abs/2003.12294#)

+> Deli Yu, Xuan Li, Chengquan Zhang, Junyu Han, Jingtuo Liu, Errui Ding

+> CVPR,2020

+

+使用MJSynth和SynthText两个文字识别数据集训练,在IIIT, SVT, IC03, IC13, IC15, SVTP, CUTE数据集上进行评估,算法复现效果如下:

+

+|模型|骨干网络|配置文件|Acc|下载链接|

+| --- | --- | --- | --- | --- |

+|SRN|Resnet50_vd_fpn|[rec_r50_fpn_srn.yml](../../configs/rec/rec_r50_fpn_srn.yml)|86.31%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/rec_r50_vd_srn_train.tar)|

+

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+请参考[文本识别教程](./recognition.md)。PaddleOCR对代码进行了模块化,训练不同的识别模型只需要**更换配置文件**即可。

+

+训练

+

+具体地,在完成数据准备后,便可以启动训练,训练命令如下:

+

+```

+#单卡训练(训练周期长,不建议)

+python3 tools/train.py -c configs/rec/rec_r50_fpn_srn.yml

+

+#多卡训练,通过--gpus参数指定卡号

+python3 -m paddle.distributed.launch --gpus '0,1,2,3' tools/train.py -c configs/rec/rec_r50_fpn_srn.yml

+```

+

+评估

+

+```

+# GPU 评估, Global.pretrained_model 为待测权重

+python3 -m paddle.distributed.launch --gpus '0' tools/eval.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretrained_model={path/to/weights}/best_accuracy

+```

+

+预测:

+

+```

+# 预测使用的配置文件必须与训练一致

+python3 tools/infer_rec.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretrained_model={path/to/weights}/best_accuracy Global.infer_img=doc/imgs_words/en/word_1.png

+```

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将SRN文本识别训练过程中保存的模型,转换成inference model。( [模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/rec_r50_vd_srn_train.tar) ),可以使用如下命令进行转换:

+

+```

+python3 tools/export_model.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretrained_model=./rec_r50_vd_srn_train/best_accuracy Global.save_inference_dir=./inference/rec_srn

+```

+

+SRN文本识别模型推理,可以执行如下命令:

+

+```

+python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/en/word_1.png" --rec_model_dir="./inference/rec_srn/" --rec_image_shape="1,64,256" --rec_char_type="ch" --rec_algorithm="SRN" --rec_char_dict_path=./ppocr/utils/ic15_dict.txt --use_space_char=False

+```

+

+

+### 4.2 C++推理

+

+由于C++预处理后处理还未支持SRN,所以暂未支持

+

+

+### 4.3 Serving服务化部署

+

+暂不支持

+

+

+### 4.4 更多推理部署

+

+暂不支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@article{Yu2020TowardsAS,

+ title={Towards Accurate Scene Text Recognition With Semantic Reasoning Networks},

+ author={Deli Yu and Xuan Li and Chengquan Zhang and Junyu Han and Jingtuo Liu and Errui Ding},

+ journal={2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

+ year={2020},

+ pages={12110-12119}

+}

+```

diff --git a/doc/doc_ch/datasets.md b/doc/doc_ch/dataset/datasets.md

similarity index 90%

rename from doc/doc_ch/datasets.md

rename to doc/doc_ch/dataset/datasets.md

index d365fd711aff2dffcd30dd06028734cc707d5df0..aad4f50b2d8baa369cf6f2576a24127a23cb5c48 100644

--- a/doc/doc_ch/datasets.md

+++ b/doc/doc_ch/dataset/datasets.md

@@ -6,17 +6,17 @@

- [中文文档文字识别](#中文文档文字识别)

- [ICDAR2019-ArT](#ICDAR2019-ArT)

-除了开源数据,用户还可使用合成工具自行合成,可参考[数据合成工具](./data_synthesis.md);

+除了开源数据,用户还可使用合成工具自行合成,可参考[数据合成工具](../data_synthesis.md);

-如果需要标注自己的数据,可参考[数据标注工具](./data_annotation.md)。

+如果需要标注自己的数据,可参考[数据标注工具](../data_annotation.md)。

#### 1、ICDAR2019-LSVT

- **数据来源**:https://ai.baidu.com/broad/introduction?dataset=lsvt

- **数据简介**: 共45w中文街景图像,包含5w(2w测试+3w训练)全标注数据(文本坐标+文本内容),40w弱标注数据(仅文本内容),如下图所示:

-

+

(a) 全标注数据

-

+

(b) 弱标注数据

- **下载地址**:https://ai.baidu.com/broad/download?dataset=lsvt

- **说明**:其中,test数据集的label目前没有开源,如要评估结果,可以去官网提交:https://rrc.cvc.uab.es/?ch=16

@@ -25,16 +25,16 @@

#### 2、ICDAR2017-RCTW-17

- **数据来源**:https://rctw.vlrlab.net/

- **数据简介**:共包含12,000+图像,大部分图片是通过手机摄像头在野外采集的。有些是截图。这些图片展示了各种各样的场景,包括街景、海报、菜单、室内场景和手机应用程序的截图。

-

+

- **下载地址**:https://rctw.vlrlab.net/dataset/

-#### 3、中文街景文字识别

+#### 3、中文街景文字识别

- **数据来源**:https://aistudio.baidu.com/aistudio/competition/detail/8

- **数据简介**:ICDAR2019-LSVT行识别任务,共包括29万张图片,其中21万张图片作为训练集(带标注),8万张作为测试集(无标注)。数据集采自中国街景,并由街景图片中的文字行区域(例如店铺标牌、地标等等)截取出来而形成。所有图像都经过一些预处理,将文字区域利用仿射变化,等比映射为一张高为48像素的图片,如图所示:

-

+

(a) 标注:魅派集成吊顶

-

+

(b) 标注:母婴用品连锁

- **下载地址**

https://aistudio.baidu.com/aistudio/datasetdetail/8429

@@ -48,15 +48,15 @@ https://aistudio.baidu.com/aistudio/datasetdetail/8429

- 包含汉字、英文字母、数字和标点共5990个字符(字符集合:https://github.com/YCG09/chinese_ocr/blob/master/train/char_std_5990.txt )

- 每个样本固定10个字符,字符随机截取自语料库中的句子

- 图片分辨率统一为280x32

-

-

+

+

- **下载地址**:https://pan.baidu.com/s/1QkI7kjah8SPHwOQ40rS1Pw (密码:lu7m)

#### 5、ICDAR2019-ArT

- **数据来源**:https://ai.baidu.com/broad/introduction?dataset=art

- **数据简介**:共包含10,166张图像,训练集5603图,测试集4563图。由Total-Text、SCUT-CTW1500、Baidu Curved Scene Text (ICDAR2019-LSVT部分弯曲数据) 三部分组成,包含水平、多方向和弯曲等多种形状的文本。

-

+

- **下载地址**:https://ai.baidu.com/broad/download?dataset=art

## 参考文献

diff --git a/doc/doc_ch/dataset/docvqa_datasets.md b/doc/doc_ch/dataset/docvqa_datasets.md

new file mode 100644

index 0000000000000000000000000000000000000000..3ec1865ee42be99ec19343428cd9ad6439686f15

--- /dev/null

+++ b/doc/doc_ch/dataset/docvqa_datasets.md

@@ -0,0 +1,27 @@

+## DocVQA数据集

+这里整理了常见的DocVQA数据集,持续更新中,欢迎各位小伙伴贡献数据集~

+- [FUNSD数据集](#funsd)

+- [XFUND数据集](#xfund)

+

+

+#### 1、FUNSD数据集

+- **数据来源**:https://guillaumejaume.github.io/FUNSD/

+- **数据简介**:FUNSD数据集是一个用于表单理解的数据集,它包含199张真实的、完全标注的扫描版图片,类型包括市场报告、广告以及学术报告等,并分为149张训练集以及50张测试集。FUNSD数据集适用于多种类型的DocVQA任务,如字段级实体分类、字段级实体连接等。部分图像以及标注框可视化如下所示:

+

图1 多模态表单识别流程图

-注:欢迎再AIStudio领取免费算力体验线上实训,项目链接: 多模态表单识别](https://aistudio.baidu.com/aistudio/projectdetail/3815918)(配备Tesla V100、A100等高级算力资源)

+注:欢迎再AIStudio领取免费算力体验线上实训,项目链接: [多模态表单识别](https://aistudio.baidu.com/aistudio/projectdetail/3815918)(配备Tesla V100、A100等高级算力资源)

diff --git a/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_cml.yml b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_cml.yml

new file mode 100644

index 0000000000000000000000000000000000000000..3e77577c17abe2111c501d96ce6b1087ac44f8d6

--- /dev/null

+++ b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_cml.yml

@@ -0,0 +1,234 @@

+Global:

+ debug: false

+ use_gpu: true

+ epoch_num: 500

+ log_smooth_window: 20

+ print_batch_step: 10

+ save_model_dir: ./output/ch_PP-OCR_v3_det/

+ save_epoch_step: 100

+ eval_batch_step:

+ - 0

+ - 400

+ cal_metric_during_train: false

+ pretrained_model: null

+ checkpoints: null

+ save_inference_dir: null

+ use_visualdl: false

+ infer_img: doc/imgs_en/img_10.jpg

+ save_res_path: ./checkpoints/det_db/predicts_db.txt

+ distributed: true

+

+Architecture:

+ name: DistillationModel

+ algorithm: Distillation

+ model_type: det

+ Models:

+ Student:

+ model_type: det

+ algorithm: DB

+ Transform: null

+ Backbone:

+ name: MobileNetV3

+ scale: 0.5

+ model_name: large

+ disable_se: true

+ Neck:

+ name: RSEFPN

+ out_channels: 96

+ shortcut: True

+ Head:

+ name: DBHead

+ k: 50

+ Student2:

+ model_type: det

+ algorithm: DB

+ Transform: null

+ Backbone:

+ name: MobileNetV3

+ scale: 0.5

+ model_name: large

+ disable_se: true

+ Neck:

+ name: RSEFPN

+ out_channels: 96

+ shortcut: True

+ Head:

+ name: DBHead

+ k: 50

+ Teacher:

+ freeze_params: true

+ return_all_feats: false

+ model_type: det

+ algorithm: DB

+ Backbone:

+ name: ResNet

+ in_channels: 3

+ layers: 50

+ Neck:

+ name: LKPAN

+ out_channels: 256

+ Head:

+ name: DBHead

+ kernel_list: [7,2,2]

+ k: 50

+

+Loss:

+ name: CombinedLoss

+ loss_config_list:

+ - DistillationDilaDBLoss:

+ weight: 1.0

+ model_name_pairs:

+ - ["Student", "Teacher"]

+ - ["Student2", "Teacher"]

+ key: maps

+ balance_loss: true

+ main_loss_type: DiceLoss

+ alpha: 5

+ beta: 10

+ ohem_ratio: 3

+ - DistillationDMLLoss:

+ model_name_pairs:

+ - ["Student", "Student2"]

+ maps_name: "thrink_maps"

+ weight: 1.0

+ # act: None

+ model_name_pairs: ["Student", "Student2"]

+ key: maps

+ - DistillationDBLoss:

+ weight: 1.0

+ model_name_list: ["Student", "Student2"]

+ # key: maps

+ # name: DBLoss

+ balance_loss: true

+ main_loss_type: DiceLoss

+ alpha: 5

+ beta: 10

+ ohem_ratio: 3

+

+Optimizer:

+ name: Adam

+ beta1: 0.9

+ beta2: 0.999

+ lr:

+ name: Cosine

+ learning_rate: 0.001

+ warmup_epoch: 2

+ regularizer:

+ name: L2

+ factor: 5.0e-05

+

+PostProcess:

+ name: DistillationDBPostProcess

+ model_name: ["Student"]

+ key: head_out

+ thresh: 0.3

+ box_thresh: 0.6

+ max_candidates: 1000

+ unclip_ratio: 1.5

+

+Metric:

+ name: DistillationMetric

+ base_metric_name: DetMetric

+ main_indicator: hmean

+ key: "Student"

+

+Train:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/train_icdar2015_label.txt

+ ratio_list: [1.0]

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - CopyPaste:

+ - IaaAugment:

+ augmenter_args:

+ - type: Fliplr

+ args:

+ p: 0.5

+ - type: Affine

+ args:

+ rotate:

+ - -10

+ - 10

+ - type: Resize

+ args:

+ size:

+ - 0.5

+ - 3

+ - EastRandomCropData:

+ size:

+ - 960

+ - 960

+ max_tries: 50

+ keep_ratio: true

+ - MakeBorderMap:

+ shrink_ratio: 0.4

+ thresh_min: 0.3

+ thresh_max: 0.7

+ - MakeShrinkMap:

+ shrink_ratio: 0.4

+ min_text_size: 8

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - threshold_map

+ - threshold_mask

+ - shrink_map

+ - shrink_mask

+ loader:

+ shuffle: true

+ drop_last: false

+ batch_size_per_card: 8

+ num_workers: 4

+Eval:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/test_icdar2015_label.txt

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - DetResizeForTest: null

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - shape

+ - polys

+ - ignore_tags

+ loader:

+ shuffle: false

+ drop_last: false

+ batch_size_per_card: 1

+ num_workers: 2

diff --git a/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_student.yml b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_student.yml

new file mode 100644

index 0000000000000000000000000000000000000000..0e8af776479ea26f834ca9ddc169f80b3982e86d

--- /dev/null

+++ b/configs/det/ch_PP-OCRv3/ch_PP-OCRv3_det_student.yml

@@ -0,0 +1,163 @@

+Global:

+ debug: false

+ use_gpu: true

+ epoch_num: 500

+ log_smooth_window: 20

+ print_batch_step: 10

+ save_model_dir: ./output/ch_PP-OCR_V3_det/

+ save_epoch_step: 100

+ eval_batch_step:

+ - 0

+ - 400

+ cal_metric_during_train: false

+ pretrained_model: null

+ checkpoints: null

+ save_inference_dir: null

+ use_visualdl: false

+ infer_img: doc/imgs_en/img_10.jpg

+ save_res_path: ./checkpoints/det_db/predicts_db.txt

+ distributed: true

+

+Architecture:

+ model_type: det

+ algorithm: DB

+ Transform:

+ Backbone:

+ name: MobileNetV3

+ scale: 0.5

+ model_name: large

+ disable_se: True

+ Neck:

+ name: RSEFPN

+ out_channels: 96

+ shortcut: True

+ Head:

+ name: DBHead

+ k: 50

+

+Loss:

+ name: DBLoss

+ balance_loss: true

+ main_loss_type: DiceLoss

+ alpha: 5

+ beta: 10

+ ohem_ratio: 3

+Optimizer:

+ name: Adam

+ beta1: 0.9

+ beta2: 0.999

+ lr:

+ name: Cosine

+ learning_rate: 0.001

+ warmup_epoch: 2

+ regularizer:

+ name: L2

+ factor: 5.0e-05

+PostProcess:

+ name: DBPostProcess

+ thresh: 0.3

+ box_thresh: 0.6

+ max_candidates: 1000

+ unclip_ratio: 1.5

+Metric:

+ name: DetMetric

+ main_indicator: hmean

+Train:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/train_icdar2015_label.txt

+ ratio_list: [1.0]

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - IaaAugment:

+ augmenter_args:

+ - type: Fliplr

+ args:

+ p: 0.5

+ - type: Affine

+ args:

+ rotate:

+ - -10

+ - 10

+ - type: Resize

+ args:

+ size:

+ - 0.5

+ - 3

+ - EastRandomCropData:

+ size:

+ - 960

+ - 960

+ max_tries: 50

+ keep_ratio: true

+ - MakeBorderMap:

+ shrink_ratio: 0.4

+ thresh_min: 0.3

+ thresh_max: 0.7

+ - MakeShrinkMap:

+ shrink_ratio: 0.4

+ min_text_size: 8

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - threshold_map

+ - threshold_mask

+ - shrink_map

+ - shrink_mask

+ loader:

+ shuffle: true

+ drop_last: false

+ batch_size_per_card: 8

+ num_workers: 4

+Eval:

+ dataset:

+ name: SimpleDataSet

+ data_dir: ./train_data/icdar2015/text_localization/

+ label_file_list:

+ - ./train_data/icdar2015/text_localization/test_icdar2015_label.txt

+ transforms:

+ - DecodeImage:

+ img_mode: BGR

+ channel_first: false

+ - DetLabelEncode: null

+ - DetResizeForTest: null

+ - NormalizeImage:

+ scale: 1./255.

+ mean:

+ - 0.485

+ - 0.456

+ - 0.406

+ std:

+ - 0.229

+ - 0.224

+ - 0.225

+ order: hwc

+ - ToCHWImage: null

+ - KeepKeys:

+ keep_keys:

+ - image

+ - shape

+ - polys

+ - ignore_tags

+ loader:

+ shuffle: false

+ drop_last: false

+ batch_size_per_card: 1

+ num_workers: 2

diff --git a/configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec.yml b/configs/rec/PP-OCRv3/ch_PP-OCRv3_rec.yml

similarity index 100%

rename from configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec.yml

rename to configs/rec/PP-OCRv3/ch_PP-OCRv3_rec.yml

diff --git a/configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml b/configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

similarity index 100%

rename from configs/rec/ch_PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

rename to configs/rec/PP-OCRv3/ch_PP-OCRv3_rec_distillation.yml

diff --git a/doc/doc_ch/docvqa_datasets.md b/configs/rec/PP-OCRv3/multi_language/.gitkeep

similarity index 100%

rename from doc/doc_ch/docvqa_datasets.md

rename to configs/rec/PP-OCRv3/multi_language/.gitkeep

diff --git a/deploy/slim/quantization/export_model.py b/deploy/slim/quantization/export_model.py

index 90f79dab34a5f20d4556ae4b10ad1d4e1f8b7f0d..fd1c3e5e109667fa74f5ade18b78f634e4d325db 100755

--- a/deploy/slim/quantization/export_model.py

+++ b/deploy/slim/quantization/export_model.py

@@ -17,9 +17,9 @@ import sys

__dir__ = os.path.dirname(os.path.abspath(__file__))

sys.path.append(__dir__)

-sys.path.append(os.path.abspath(os.path.join(__dir__, '..', '..', '..')))

-sys.path.append(

- os.path.abspath(os.path.join(__dir__, '..', '..', '..', 'tools')))

+sys.path.insert(0, os.path.abspath(os.path.join(__dir__, '..', '..', '..')))

+sys.path.insert(

+ 0, os.path.abspath(os.path.join(__dir__, '..', '..', '..', 'tools')))

import argparse

@@ -129,7 +129,6 @@ def main():

quanter.quantize(model)

load_model(config, model)

- model.eval()

# build metric

eval_class = build_metric(config['Metric'])

@@ -142,6 +141,7 @@ def main():

# start eval

metric = program.eval(model, valid_dataloader, post_process_class,

eval_class, model_type, use_srn)

+ model.eval()

logger.info('metric eval ***************')

for k, v in metric.items():

@@ -156,7 +156,6 @@ def main():

if arch_config["algorithm"] in ["Distillation", ]: # distillation model

archs = list(arch_config["Models"].values())

for idx, name in enumerate(model.model_name_list):

- model.model_list[idx].eval()

sub_model_save_path = os.path.join(save_path, name, "inference")

export_single_model(model.model_list[idx], archs[idx],

sub_model_save_path, logger, quanter)

diff --git a/doc/datasets/funsd_demo/gt_train_00040534.jpg b/doc/datasets/funsd_demo/gt_train_00040534.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..9f7cf4d4977689b73e2ca91cbe9c877bb8f0c7ff

Binary files /dev/null and b/doc/datasets/funsd_demo/gt_train_00040534.jpg differ

diff --git a/doc/datasets/funsd_demo/gt_train_00070353.jpg b/doc/datasets/funsd_demo/gt_train_00070353.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..36d3345e5ec4c262764e63a972aaa82e98877681

Binary files /dev/null and b/doc/datasets/funsd_demo/gt_train_00070353.jpg differ

diff --git a/doc/datasets/table_PubTabNet_demo/PMC524509_007_00.png b/doc/datasets/table_PubTabNet_demo/PMC524509_007_00.png

new file mode 100755

index 0000000000000000000000000000000000000000..5b9d631cba434e4bd6ac6fe2108b7f6c081c4811

Binary files /dev/null and b/doc/datasets/table_PubTabNet_demo/PMC524509_007_00.png differ

diff --git a/doc/datasets/table_PubTabNet_demo/PMC535543_007_01.png b/doc/datasets/table_PubTabNet_demo/PMC535543_007_01.png

new file mode 100755

index 0000000000000000000000000000000000000000..e808de72d62325ae4cbd009397b7beaeed0d88fc

Binary files /dev/null and b/doc/datasets/table_PubTabNet_demo/PMC535543_007_01.png differ

diff --git a/doc/datasets/table_tal_demo/1.jpg b/doc/datasets/table_tal_demo/1.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..e7ddd6d1db59ca27a0461ab93b3672aeec4a8941

Binary files /dev/null and b/doc/datasets/table_tal_demo/1.jpg differ

diff --git a/doc/datasets/table_tal_demo/2.jpg b/doc/datasets/table_tal_demo/2.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..e7ddd6d1db59ca27a0461ab93b3672aeec4a8941

Binary files /dev/null and b/doc/datasets/table_tal_demo/2.jpg differ

diff --git a/doc/datasets/xfund_demo/gt_zh_train_0.jpg b/doc/datasets/xfund_demo/gt_zh_train_0.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..6fdaf12fa1d79e6ea9029d665ab7488223459436

Binary files /dev/null and b/doc/datasets/xfund_demo/gt_zh_train_0.jpg differ

diff --git a/doc/datasets/xfund_demo/gt_zh_train_1.jpg b/doc/datasets/xfund_demo/gt_zh_train_1.jpg

new file mode 100644

index 0000000000000000000000000000000000000000..6a1e53a3ba09b6f84809cfd10a15c42f42b9a163

Binary files /dev/null and b/doc/datasets/xfund_demo/gt_zh_train_1.jpg differ

diff --git a/doc/doc_ch/algorithm_det_fcenet.md b/doc/doc_ch/algorithm_det_fcenet.md

new file mode 100644

index 0000000000000000000000000000000000000000..bd2e734204d32bbf575ddea9f889953a72582c59

--- /dev/null

+++ b/doc/doc_ch/algorithm_det_fcenet.md

@@ -0,0 +1,104 @@

+# FCENet

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Fourier Contour Embedding for Arbitrary-Shaped Text Detection](https://arxiv.org/abs/2104.10442)

+> Yiqin Zhu and Jianyong Chen and Lingyu Liang and Zhanghui Kuang and Lianwen Jin and Wayne Zhang

+> CVPR, 2021

+

+在CTW1500文本检测公开数据集上,算法复现效果如下:

+

+| 模型 |骨干网络|配置文件|precision|recall|Hmean|下载链接|

+|-----| --- | --- | --- | --- | --- | --- |

+| FCE | ResNet50_dcn | [configs/det/det_r50_vd_dcn_fce_ctw.yml](../../configs/det/det_r50_vd_dcn_fce_ctw.yml)| 88.39%|82.18%|85.27%|[训练模型](https://paddleocr.bj.bcebos.com/contribution/det_r50_dcn_fce_ctw_v2.0_train.tar)|

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+上述FCE模型使用CTW1500文本检测公开数据集训练得到,数据集下载可参考 [ocr_datasets](./dataset/ocr_datasets.md)。

+

+数据下载完成后,请参考[文本检测训练教程](./detection.md)进行训练。PaddleOCR对代码进行了模块化,训练不同的检测模型只需要**更换配置文件**即可。

+

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将FCE文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd_dcn骨干网络,在CTW1500英文数据集训练的模型为例( [模型下载地址](https://paddleocr.bj.bcebos.com/contribution/det_r50_dcn_fce_ctw_v2.0_train.tar) ),可以使用如下命令进行转换:

+

+```shell

+python3 tools/export_model.py -c configs/det/det_r50_vd_dcn_fce_ctw.yml -o Global.pretrained_model=./det_r50_dcn_fce_ctw_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_fce

+```

+

+FCE文本检测模型推理,执行非弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_fce/" --det_algorithm="FCE" --det_fce_box_type=quad

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+如果想执行弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img623.jpg" --det_model_dir="./inference/det_fce/" --det_algorithm="FCE" --det_fce_box_type=poly

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+**注意**:由于CTW1500数据集只有1000张训练图像,且主要针对英文场景,所以上述模型对中文文本图像检测效果会比较差。

+

+

+### 4.2 C++推理

+

+由于后处理暂未使用CPP编写,FCE文本检测模型暂不支持CPP推理。

+

+

+### 4.3 Serving服务化部署

+

+暂未支持

+

+

+### 4.4 更多推理部署

+

+暂未支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@InProceedings{zhu2021fourier,

+ title={Fourier Contour Embedding for Arbitrary-Shaped Text Detection},

+ author={Yiqin Zhu and Jianyong Chen and Lingyu Liang and Zhanghui Kuang and Lianwen Jin and Wayne Zhang},

+ year={2021},

+ booktitle = {CVPR}

+}

+```

diff --git a/doc/doc_ch/algorithm_det_psenet.md b/doc/doc_ch/algorithm_det_psenet.md

new file mode 100644

index 0000000000000000000000000000000000000000..58d8ccf97292f4e988861b618697fb0e7694fbab

--- /dev/null

+++ b/doc/doc_ch/algorithm_det_psenet.md

@@ -0,0 +1,106 @@

+# PSENet

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Shape robust text detection with progressive scale expansion network](https://arxiv.org/abs/1903.12473)

+> Wang, Wenhai and Xie, Enze and Li, Xiang and Hou, Wenbo and Lu, Tong and Yu, Gang and Shao, Shuai

+> CVPR, 2019

+

+在ICDAR2015文本检测公开数据集上,算法复现效果如下:

+

+|模型|骨干网络|配置文件|precision|recall|Hmean|下载链接|

+| --- | --- | --- | --- | --- | --- | --- |

+|PSE| ResNet50_vd | [configs/det/det_r50_vd_pse.yml](../../configs/det/det_r50_vd_pse.yml)| 85.81% |79.53%|82.55%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.1/en_det/det_r50_vd_pse_v2.0_train.tar)|

+|PSE| MobileNetV3| [configs/det/det_mv3_pse.yml](../../configs/det/det_mv3_pse.yml) | 82.20% |70.48%|75.89%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.1/en_det/det_mv3_pse_v2.0_train.tar)|

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+上述PSE模型使用ICDAR2015文本检测公开数据集训练得到,数据集下载可参考 [ocr_datasets](./dataset/ocr_datasets.md)。

+

+数据下载完成后,请参考[文本检测训练教程](./detection.md)进行训练。PaddleOCR对代码进行了模块化,训练不同的检测模型只需要**更换配置文件**即可。

+

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将PSE文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd骨干网络,在ICDAR2015英文数据集训练的模型为例( [模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.1/en_det/det_r50_vd_pse_v2.0_train.tar) ),可以使用如下命令进行转换:

+

+```shell

+python3 tools/export_model.py -c configs/det/det_r50_vd_pse.yml -o Global.pretrained_model=./det_r50_vd_pse_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_pse

+```

+

+PSE文本检测模型推理,执行非弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_pse/" --det_algorithm="PSE" --det_pse_box_type=quad

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+如果想执行弯曲文本检测,可以执行如下命令:

+

+```shell

+python3 tools/infer/predict_det.py --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_pse/" --det_algorithm="PSE" --det_pse_box_type=poly

+```

+

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+**注意**:由于ICDAR2015数据集只有1000张训练图像,且主要针对英文场景,所以上述模型对中文或弯曲文本图像检测效果会比较差。

+

+

+### 4.2 C++推理

+

+由于后处理暂未使用CPP编写,PSE文本检测模型暂不支持CPP推理。

+

+

+### 4.3 Serving服务化部署

+

+暂未支持

+

+

+### 4.4 更多推理部署

+

+暂未支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@inproceedings{wang2019shape,

+ title={Shape robust text detection with progressive scale expansion network},

+ author={Wang, Wenhai and Xie, Enze and Li, Xiang and Hou, Wenbo and Lu, Tong and Yu, Gang and Shao, Shuai},

+ booktitle={Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition},

+ pages={9336--9345},

+ year={2019}

+}

+```

diff --git a/doc/doc_ch/algorithm_det_sast.md b/doc/doc_ch/algorithm_det_sast.md

new file mode 100644

index 0000000000000000000000000000000000000000..038d73fc15f3203bbcc17997c1a8e1c208f80ba8

--- /dev/null

+++ b/doc/doc_ch/algorithm_det_sast.md

@@ -0,0 +1,115 @@

+# SAST

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [A Single-Shot Arbitrarily-Shaped Text Detector based on Context Attended Multi-Task Learning](https://arxiv.org/abs/1908.05498)

+> Wang, Pengfei and Zhang, Chengquan and Qi, Fei and Huang, Zuming and En, Mengyi and Han, Junyu and Liu, Jingtuo and Ding, Errui and Shi, Guangming

+> ACM MM, 2019

+

+在ICDAR2015文本检测公开数据集上,算法复现效果如下:

+

+|模型|骨干网络|配置文件|precision|recall|Hmean|下载链接|

+| --- | --- | --- | --- | --- | --- | --- |

+|SAST|ResNet50_vd|[configs/det/det_r50_vd_sast_icdar15.yml](../../configs/det/det_r50_vd_sast_icdar15.yml)|91.39%|83.77%|87.42%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_icdar15_v2.0_train.tar)|

+

+

+在Total-text文本检测公开数据集上,算法复现效果如下:

+

+|模型|骨干网络|配置文件|precision|recall|Hmean|下载链接|

+| --- | --- | --- | --- | --- | --- | --- |

+|SAST|ResNet50_vd|[configs/det/det_r50_vd_sast_totaltext.yml](../../configs/det/det_r50_vd_sast_totaltext.yml)|89.63%|78.44%|83.66%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_totaltext_v2.0_train.tar)|

+

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+请参考[文本检测训练教程](./detection.md)。PaddleOCR对代码进行了模块化,训练不同的检测模型只需要**更换配置文件**即可。

+

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+#### (1). 四边形文本检测模型(ICDAR2015)

+首先将SAST文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd骨干网络,在ICDAR2015英文数据集训练的模型为例([模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_icdar15_v2.0_train.tar)),可以使用如下命令进行转换:

+```

+python3 tools/export_model.py -c configs/det/det_r50_vd_sast_icdar15.yml -o Global.pretrained_model=./det_r50_vd_sast_icdar15_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_sast_ic15

+

+```

+**SAST文本检测模型推理,需要设置参数`--det_algorithm="SAST"`**,可以执行如下命令:

+```

+python3 tools/infer/predict_det.py --det_algorithm="SAST" --image_dir="./doc/imgs_en/img_10.jpg" --det_model_dir="./inference/det_sast_ic15/"

+```

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+#### (2). 弯曲文本检测模型(Total-Text)

+首先将SAST文本检测训练过程中保存的模型,转换成inference model。以基于Resnet50_vd骨干网络,在Total-Text英文数据集训练的模型为例([模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/det_r50_vd_sast_totaltext_v2.0_train.tar)),可以使用如下命令进行转换:

+

+```

+python3 tools/export_model.py -c configs/det/det_r50_vd_sast_totaltext.yml -o Global.pretrained_model=./det_r50_vd_sast_totaltext_v2.0_train/best_accuracy Global.save_inference_dir=./inference/det_sast_tt

+

+```

+

+SAST文本检测模型推理,需要设置参数`--det_algorithm="SAST"`,同时,还需要增加参数`--det_sast_polygon=True`,可以执行如下命令:

+```

+python3 tools/infer/predict_det.py --det_algorithm="SAST" --image_dir="./doc/imgs_en/img623.jpg" --det_model_dir="./inference/det_sast_tt/" --det_sast_polygon=True

+```

+可视化文本检测结果默认保存到`./inference_results`文件夹里面,结果文件的名称前缀为'det_res'。结果示例如下:

+

+

+

+**注意**:本代码库中,SAST后处理Locality-Aware NMS有python和c++两种版本,c++版速度明显快于python版。由于c++版本nms编译版本问题,只有python3.5环境下会调用c++版nms,其他情况将调用python版nms。

+

+

+### 4.2 C++推理

+

+暂未支持

+

+

+### 4.3 Serving服务化部署

+

+暂未支持

+

+

+### 4.4 更多推理部署

+

+暂未支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@inproceedings{wang2019single,

+ title={A Single-Shot Arbitrarily-Shaped Text Detector based on Context Attended Multi-Task Learning},

+ author={Wang, Pengfei and Zhang, Chengquan and Qi, Fei and Huang, Zuming and En, Mengyi and Han, Junyu and Liu, Jingtuo and Ding, Errui and Shi, Guangming},

+ booktitle={Proceedings of the 27th ACM International Conference on Multimedia},

+ pages={1277--1285},

+ year={2019}

+}

+```

diff --git a/doc/doc_ch/algorithm_rec_sar.md b/doc/doc_ch/algorithm_rec_sar.md

new file mode 100644

index 0000000000000000000000000000000000000000..b8304313994754480a89d708e39149d67f828c0d

--- /dev/null

+++ b/doc/doc_ch/algorithm_rec_sar.md

@@ -0,0 +1,114 @@

+# SAR

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Show, Attend and Read: A Simple and Strong Baseline for Irregular Text Recognition](https://arxiv.org/abs/1811.00751)

+> Hui Li, Peng Wang, Chunhua Shen, Guyu Zhang

+> AAAI, 2019

+

+使用MJSynth和SynthText两个文字识别数据集训练,在IIIT, SVT, IC03, IC13, IC15, SVTP, CUTE数据集上进行评估,算法复现效果如下:

+

+|模型|骨干网络|配置文件|Acc|下载链接|

+| --- | --- | --- | --- | --- |

+|SAR|ResNet31|[rec_r31_sar.yml](../../configs/rec/rec_r31_sar.yml)|87.20%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.1/rec/rec_r31_sar_train.tar)|

+

+注:除了使用MJSynth和SynthText两个文字识别数据集外,还加入了[SynthAdd](https://pan.baidu.com/share/init?surl=uV0LtoNmcxbO-0YA7Ch4dg)数据(提取码:627x),和部分真实数据,具体数据细节可以参考论文。

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+请参考[文本识别教程](./recognition.md)。PaddleOCR对代码进行了模块化,训练不同的识别模型只需要**更换配置文件**即可。

+

+训练

+

+具体地,在完成数据准备后,便可以启动训练,训练命令如下:

+

+```

+#单卡训练(训练周期长,不建议)

+python3 tools/train.py -c configs/rec/rec_r31_sar.yml

+

+#多卡训练,通过--gpus参数指定卡号

+python3 -m paddle.distributed.launch --gpus '0,1,2,3' tools/train.py -c configs/rec/rec_r31_sar.yml

+```

+

+评估

+

+```

+# GPU 评估, Global.pretrained_model 为待测权重

+python3 -m paddle.distributed.launch --gpus '0' tools/eval.py -c configs/rec/rec_r31_sar.yml -o Global.pretrained_model={path/to/weights}/best_accuracy

+```

+

+预测:

+

+```

+# 预测使用的配置文件必须与训练一致

+python3 tools/infer_rec.py -c configs/rec/rec_r31_sar.yml -o Global.pretrained_model={path/to/weights}/best_accuracy Global.infer_img=doc/imgs_words/en/word_1.png

+```

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将SAR文本识别训练过程中保存的模型,转换成inference model。( [模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.1/rec/rec_r31_sar_train.tar) ),可以使用如下命令进行转换:

+

+```

+python3 tools/export_model.py -c configs/rec/rec_r31_sar.yml -o Global.pretrained_model=./rec_r31_sar_train/best_accuracy Global.save_inference_dir=./inference/rec_sar

+```

+

+SAR文本识别模型推理,可以执行如下命令:

+

+```

+python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/en/word_1.png" --rec_model_dir="./inference/rec_sar/" --rec_image_shape="3, 48, 48, 160" --rec_char_type="ch" --rec_algorithm="SAR" --rec_char_dict_path="ppocr/utils/dict90.txt" --max_text_length=30 --use_space_char=False

+```

+

+

+### 4.2 C++推理

+

+由于C++预处理后处理还未支持SAR,所以暂未支持

+

+

+### 4.3 Serving服务化部署

+

+暂不支持

+

+

+### 4.4 更多推理部署

+

+暂不支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@article{Li2019ShowAA,

+ title={Show, Attend and Read: A Simple and Strong Baseline for Irregular Text Recognition},

+ author={Hui Li and Peng Wang and Chunhua Shen and Guyu Zhang},

+ journal={ArXiv},

+ year={2019},

+ volume={abs/1811.00751}

+}

+```

diff --git a/doc/doc_ch/algorithm_rec_srn.md b/doc/doc_ch/algorithm_rec_srn.md

new file mode 100644

index 0000000000000000000000000000000000000000..ca7961359eb902fafee959b26d02f324aece233a

--- /dev/null

+++ b/doc/doc_ch/algorithm_rec_srn.md

@@ -0,0 +1,113 @@

+# SRN

+

+- [1. 算法简介](#1)

+- [2. 环境配置](#2)

+- [3. 模型训练、评估、预测](#3)

+ - [3.1 训练](#3-1)

+ - [3.2 评估](#3-2)

+ - [3.3 预测](#3-3)

+- [4. 推理部署](#4)

+ - [4.1 Python推理](#4-1)

+ - [4.2 C++推理](#4-2)

+ - [4.3 Serving服务化部署](#4-3)

+ - [4.4 更多推理部署](#4-4)

+- [5. FAQ](#5)

+

+

+## 1. 算法简介

+

+论文信息:

+> [Towards Accurate Scene Text Recognition with Semantic Reasoning Networks](https://arxiv.org/abs/2003.12294#)

+> Deli Yu, Xuan Li, Chengquan Zhang, Junyu Han, Jingtuo Liu, Errui Ding

+> CVPR,2020

+

+使用MJSynth和SynthText两个文字识别数据集训练,在IIIT, SVT, IC03, IC13, IC15, SVTP, CUTE数据集上进行评估,算法复现效果如下:

+

+|模型|骨干网络|配置文件|Acc|下载链接|

+| --- | --- | --- | --- | --- |

+|SRN|Resnet50_vd_fpn|[rec_r50_fpn_srn.yml](../../configs/rec/rec_r50_fpn_srn.yml)|86.31%|[训练模型](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/rec_r50_vd_srn_train.tar)|

+

+

+

+## 2. 环境配置

+请先参考[《运行环境准备》](./environment.md)配置PaddleOCR运行环境,参考[《项目克隆》](./clone.md)克隆项目代码。

+

+

+

+## 3. 模型训练、评估、预测

+

+请参考[文本识别教程](./recognition.md)。PaddleOCR对代码进行了模块化,训练不同的识别模型只需要**更换配置文件**即可。

+

+训练

+

+具体地,在完成数据准备后,便可以启动训练,训练命令如下:

+

+```

+#单卡训练(训练周期长,不建议)

+python3 tools/train.py -c configs/rec/rec_r50_fpn_srn.yml

+

+#多卡训练,通过--gpus参数指定卡号

+python3 -m paddle.distributed.launch --gpus '0,1,2,3' tools/train.py -c configs/rec/rec_r50_fpn_srn.yml

+```

+

+评估

+

+```

+# GPU 评估, Global.pretrained_model 为待测权重

+python3 -m paddle.distributed.launch --gpus '0' tools/eval.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretrained_model={path/to/weights}/best_accuracy

+```

+

+预测:

+

+```

+# 预测使用的配置文件必须与训练一致

+python3 tools/infer_rec.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretrained_model={path/to/weights}/best_accuracy Global.infer_img=doc/imgs_words/en/word_1.png

+```

+

+

+## 4. 推理部署

+

+

+### 4.1 Python推理

+首先将SRN文本识别训练过程中保存的模型,转换成inference model。( [模型下载地址](https://paddleocr.bj.bcebos.com/dygraph_v2.0/en/rec_r50_vd_srn_train.tar) ),可以使用如下命令进行转换:

+

+```

+python3 tools/export_model.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretrained_model=./rec_r50_vd_srn_train/best_accuracy Global.save_inference_dir=./inference/rec_srn

+```

+

+SRN文本识别模型推理,可以执行如下命令:

+

+```

+python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/en/word_1.png" --rec_model_dir="./inference/rec_srn/" --rec_image_shape="1,64,256" --rec_char_type="ch" --rec_algorithm="SRN" --rec_char_dict_path=./ppocr/utils/ic15_dict.txt --use_space_char=False

+```

+

+

+### 4.2 C++推理

+

+由于C++预处理后处理还未支持SRN,所以暂未支持

+

+

+### 4.3 Serving服务化部署

+

+暂不支持

+

+

+### 4.4 更多推理部署

+

+暂不支持

+

+

+## 5. FAQ

+

+

+## 引用

+

+```bibtex

+@article{Yu2020TowardsAS,

+ title={Towards Accurate Scene Text Recognition With Semantic Reasoning Networks},

+ author={Deli Yu and Xuan Li and Chengquan Zhang and Junyu Han and Jingtuo Liu and Errui Ding},

+ journal={2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

+ year={2020},

+ pages={12110-12119}

+}

+```

diff --git a/doc/doc_ch/datasets.md b/doc/doc_ch/dataset/datasets.md

similarity index 90%

rename from doc/doc_ch/datasets.md

rename to doc/doc_ch/dataset/datasets.md

index d365fd711aff2dffcd30dd06028734cc707d5df0..aad4f50b2d8baa369cf6f2576a24127a23cb5c48 100644

--- a/doc/doc_ch/datasets.md

+++ b/doc/doc_ch/dataset/datasets.md

@@ -6,17 +6,17 @@

- [中文文档文字识别](#中文文档文字识别)

- [ICDAR2019-ArT](#ICDAR2019-ArT)

-除了开源数据,用户还可使用合成工具自行合成,可参考[数据合成工具](./data_synthesis.md);

+除了开源数据,用户还可使用合成工具自行合成,可参考[数据合成工具](../data_synthesis.md);

-如果需要标注自己的数据,可参考[数据标注工具](./data_annotation.md)。

+如果需要标注自己的数据,可参考[数据标注工具](../data_annotation.md)。

#### 1、ICDAR2019-LSVT

- **数据来源**:https://ai.baidu.com/broad/introduction?dataset=lsvt

- **数据简介**: 共45w中文街景图像,包含5w(2w测试+3w训练)全标注数据(文本坐标+文本内容),40w弱标注数据(仅文本内容),如下图所示:

-

+

(a) 全标注数据

-

+

(b) 弱标注数据

- **下载地址**:https://ai.baidu.com/broad/download?dataset=lsvt

- **说明**:其中,test数据集的label目前没有开源,如要评估结果,可以去官网提交:https://rrc.cvc.uab.es/?ch=16

@@ -25,16 +25,16 @@

#### 2、ICDAR2017-RCTW-17

- **数据来源**:https://rctw.vlrlab.net/

- **数据简介**:共包含12,000+图像,大部分图片是通过手机摄像头在野外采集的。有些是截图。这些图片展示了各种各样的场景,包括街景、海报、菜单、室内场景和手机应用程序的截图。

-

+

- **下载地址**:https://rctw.vlrlab.net/dataset/

-#### 3、中文街景文字识别

+#### 3、中文街景文字识别

- **数据来源**:https://aistudio.baidu.com/aistudio/competition/detail/8

- **数据简介**:ICDAR2019-LSVT行识别任务,共包括29万张图片,其中21万张图片作为训练集(带标注),8万张作为测试集(无标注)。数据集采自中国街景,并由街景图片中的文字行区域(例如店铺标牌、地标等等)截取出来而形成。所有图像都经过一些预处理,将文字区域利用仿射变化,等比映射为一张高为48像素的图片,如图所示:

-

+

(a) 标注:魅派集成吊顶

-

+

(b) 标注:母婴用品连锁

- **下载地址**

https://aistudio.baidu.com/aistudio/datasetdetail/8429

@@ -48,15 +48,15 @@ https://aistudio.baidu.com/aistudio/datasetdetail/8429

- 包含汉字、英文字母、数字和标点共5990个字符(字符集合:https://github.com/YCG09/chinese_ocr/blob/master/train/char_std_5990.txt )

- 每个样本固定10个字符,字符随机截取自语料库中的句子

- 图片分辨率统一为280x32

-

-

+

+

- **下载地址**:https://pan.baidu.com/s/1QkI7kjah8SPHwOQ40rS1Pw (密码:lu7m)

#### 5、ICDAR2019-ArT

- **数据来源**:https://ai.baidu.com/broad/introduction?dataset=art

- **数据简介**:共包含10,166张图像,训练集5603图,测试集4563图。由Total-Text、SCUT-CTW1500、Baidu Curved Scene Text (ICDAR2019-LSVT部分弯曲数据) 三部分组成,包含水平、多方向和弯曲等多种形状的文本。

-

+

- **下载地址**:https://ai.baidu.com/broad/download?dataset=art

## 参考文献

diff --git a/doc/doc_ch/dataset/docvqa_datasets.md b/doc/doc_ch/dataset/docvqa_datasets.md

new file mode 100644

index 0000000000000000000000000000000000000000..3ec1865ee42be99ec19343428cd9ad6439686f15

--- /dev/null

+++ b/doc/doc_ch/dataset/docvqa_datasets.md

@@ -0,0 +1,27 @@

+## DocVQA数据集

+这里整理了常见的DocVQA数据集,持续更新中,欢迎各位小伙伴贡献数据集~

+- [FUNSD数据集](#funsd)

+- [XFUND数据集](#xfund)

+

+

+#### 1、FUNSD数据集

+- **数据来源**:https://guillaumejaume.github.io/FUNSD/

+- **数据简介**:FUNSD数据集是一个用于表单理解的数据集,它包含199张真实的、完全标注的扫描版图片,类型包括市场报告、广告以及学术报告等,并分为149张训练集以及50张测试集。FUNSD数据集适用于多种类型的DocVQA任务,如字段级实体分类、字段级实体连接等。部分图像以及标注框可视化如下所示:

+

+

+

+

+

+

+

+  +

+

+

+将下载到的数据集解压到工作目录下,假设解压在 PaddleOCR/train_data/下。然后从上表中下载转换好的标注文件。

+

+PaddleOCR 也提供了数据格式转换脚本,可以将官网 label 转换支持的数据格式。 数据转换工具在 `ppocr/utils/gen_label.py`, 这里以训练集为例:

+

+```

+# 将官网下载的标签文件转换为 train_icdar2015_label.txt

+python gen_label.py --mode="det" --root_path="/path/to/icdar_c4_train_imgs/" \

+ --input_path="/path/to/ch4_training_localization_transcription_gt" \

+ --output_label="/path/to/train_icdar2015_label.txt"

+```

+

+解压数据集和下载标注文件后,PaddleOCR/train_data/ 有两个文件夹和两个文件,按照如下方式组织icdar2015数据集:

+```

+/PaddleOCR/train_data/icdar2015/text_localization/

+ └─ icdar_c4_train_imgs/ icdar 2015 数据集的训练数据

+ └─ ch4_test_images/ icdar 2015 数据集的测试数据

+ └─ train_icdar2015_label.txt icdar 2015 数据集的训练标注

+ └─ test_icdar2015_label.txt icdar 2015 数据集的测试标注

+```

+

+## 2. 文本识别

+

+### 2.1 PaddleOCR 文字识别数据格式

+

+PaddleOCR 中的文字识别算法支持两种数据格式:

+

+ - `lmdb` 用于训练以lmdb格式存储的数据集,使用 [lmdb_dataset.py](../../../ppocr/data/lmdb_dataset.py) 进行读取;

+ - `通用数据` 用于训练以文本文件存储的数据集,使用 [simple_dataset.py](../../../ppocr/data/simple_dataset.py)进行读取。

+

+下面以通用数据集为例, 介绍如何准备数据集:

+

+* 训练集

+

+建议将训练图片放入同一个文件夹,并用一个txt文件(rec_gt_train.txt)记录图片路径和标签,txt文件里的内容如下:

+

+**注意:** txt文件中默认请将图片路径和图片标签用 \t 分割,如用其他方式分割将造成训练报错。

+

+```

+" 图像文件名 图像标注信息 "

+

+train_data/rec/train/word_001.jpg 简单可依赖

+train_data/rec/train/word_002.jpg 用科技让复杂的世界更简单

+...

+```

+

+最终训练集应有如下文件结构:

+```

+|-train_data

+ |-rec

+ |- rec_gt_train.txt