diff --git a/configs/e2e/e2e_r50_vd_pg.yml b/configs/e2e/e2e_r50_vd_pg.yml

index 0a232f7a4f3b9ca214bbc6fd1840cec186c027e4..e4d868f98b5847fa064e14f87a69932806791320 100644

--- a/configs/e2e/e2e_r50_vd_pg.yml

+++ b/configs/e2e/e2e_r50_vd_pg.yml

@@ -59,8 +59,10 @@ Optimizer:

PostProcess:

name: PGPostProcess

score_thresh: 0.5

+ mode: fast # post-processing mode: fast or slow

Metric:

name: E2EMetric

+ gt_mat_dir: # directory containing the ground-truth .mat files

character_dict_path: ppocr/utils/ic15_dict.txt

main_indicator: f_score_e2e

@@ -106,7 +108,7 @@ Eval:

order: 'hwc'

- ToCHWImage:

- KeepKeys:

- keep_keys: [ 'image', 'shape', 'polys', 'strs', 'tags' ]

+ keep_keys: [ 'image', 'shape', 'polys', 'strs', 'tags', 'img_id']

loader:

shuffle: False

drop_last: False
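The Eval pipeline above now appends `'img_id'` to `keep_keys`, presumably so the metric can match each prediction to its ground truth under `gt_mat_dir` (an assumption; the diff does not say so). As a rough sketch, assuming a `KeepKeys`-style transform simply pulls the listed keys out of the per-sample dict in order, the effect of the change looks like this. `KeepKeysSketch` is a hypothetical stand-in written for illustration, not PaddleOCR's implementation.

```python
from typing import Any, Dict, List


class KeepKeysSketch:
    """Hypothetical stand-in for a KeepKeys-style transform: it returns the
    values of the configured keys, in the configured order, and drops the rest."""

    def __init__(self, keep_keys: List[str]) -> None:
        self.keep_keys = keep_keys

    def __call__(self, data: Dict[str, Any]) -> List[Any]:
        # A KeyError here would mean no earlier transform produced that key.
        return [data[key] for key in self.keep_keys]


# Toy sample with placeholder values (real samples hold arrays).
sample = {
    "image": "image-array",
    "shape": [720, 1280],
    "polys": [[[0, 0], [10, 0], [10, 5], [0, 5]]],
    "strs": ["hello"],
    "tags": [False],
    "img_id": 10,
    "label": "not kept",
}

old_keys = ["image", "shape", "polys", "strs", "tags"]
new_keys = old_keys + ["img_id"]

old_batch = KeepKeysSketch(old_keys)(sample)
new_batch = KeepKeysSketch(new_keys)(sample)
print(len(old_batch), len(new_batch))  # 5 6
print(new_batch[-1])  # 10
```

Because the output in this sketch is positional, any consumer that unpacks the sample by index sees the image id as the sixth element, after the five fields that were already kept.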

diff --git a/doc/doc_ch/inference.md b/doc/doc_ch/inference.md

index 1288d90692e154220b8ceb22cd7b6d98f53d3efb..f0f7401538a9f8940f671fdcc170aca6c003040d 100755

--- a/doc/doc_ch/inference.md

+++ b/doc/doc_ch/inference.md

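@@ -13,7 +13,6 @@ inference model (the model saved with `paddle.jit.save`)
- [Convert detection model to inference model](#检测模型转inference模型)
- [Convert recognition model to inference model](#识别模型转inference模型)
- [Convert direction classification model to inference model](#方向分类模型转inference模型)
- - [Convert end-to-end model to inference model](#端到端模型转inference模型)
- [II. Text detection model inference](#文本检测模型推理)
- [1. Ultra-lightweight Chinese detection model inference](#超轻量中文检测模型推理)
@@ -28,13 +27,10 @@ inference model (the model saved with `paddle.jit.save`)
- [4. Inference with a custom text recognition dictionary](#自定义文本识别字典的推理)
- [5. Multilingual model inference](#多语言模型的推理)
-- [IV. End-to-end model inference](#端到端模型推理)
- - [1. PGNet end-to-end model inference](#PGNet端到端模型推理)
-
-- [V. Direction classification model inference](#方向识别模型推理)
+- [IV. Direction classification model inference](#方向识别模型推理)
- [1. Direction classification model inference](#方向分类模型推理)
-- [VI. Cascaded text detection, direction classification, and text recognition inference](#文本检测、方向分类和文字识别串联推理)
+- [V. Cascaded text detection, direction classification, and text recognition inference](#文本检测、方向分类和文字识别串联推理)
- [1. Ultra-lightweight Chinese OCR model inference](#超轻量中文OCR模型推理)
- [2. Inference with other models](#其他模型推理)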

@@ -122,32 +118,6 @@ python3 tools/export_model.py -c configs/cls/cls_mv3.yml -o Global.pretrained_mo

├── inference.pdiparams.info # parameter info of the classification inference model; can be ignored
└── inference.pdmodel # program file of the classification inference model

```

-
-### Convert end-to-end model to inference model
-
-Download the end-to-end model:
-```
-wget -P ./ch_lite/ https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_train.tar && tar xf ./ch_lite/ch_ppocr_mobile_v2.0_cls_train.tar -C ./ch_lite/
-```
-
-Converting an end-to-end model to an inference model works the same way as for detection:
-```
-# -c specifies the yml config file of the training algorithm
-# -o sets optional parameters
-# Global.pretrained_model sets the path of the trained model to convert; do not append the file suffix .pdmodel, .pdopt, or .pdparams.
-# Global.load_static_weights must be set to False.
-# Global.save_inference_dir sets the directory where the converted model is saved.
-
-python3 tools/export_model.py -c configs/e2e/e2e_r50_vd_pg.yml -o Global.pretrained_model=./ch_lite/ch_ppocr_mobile_v2.0_cls_train/best_accuracy Global.load_static_weights=False Global.save_inference_dir=./inference/e2e/
-```
-
-After a successful conversion, the directory contains three files:
-```
-/inference/e2e/
- ├── inference.pdiparams # parameter file of the classification inference model
- ├── inference.pdiparams.info # parameter info of the classification inference model; can be ignored
- └── inference.pdmodel # program file of the classification inference model
-```
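The removed export command above uses `-o` to override config entries with dotted keys such as `Global.pretrained_model` and `Global.load_static_weights`. A minimal sketch of that idea, assuming each `Section.key=value` pair addresses a nested dict and that values are parsed into booleans, numbers, or strings. The functions `parse_value` and `apply_overrides` are hypothetical and are not PaddleOCR's own argument parser.

```python
from typing import Any, Dict, List


def parse_value(text: str) -> Any:
    """Convert a command-line string to bool, int, or float, else keep it as str."""
    if text in ("True", "true"):
        return True
    if text in ("False", "false"):
        return False
    for cast in (int, float):
        try:
            return cast(text)
        except ValueError:
            pass
    return text


def apply_overrides(config: Dict[str, Any], opts: List[str]) -> Dict[str, Any]:
    """Apply 'Section.key=value' overrides in place, creating sections as needed."""
    for opt in opts:
        key, sep, raw = opt.partition("=")
        if not sep:
            raise ValueError(f"expected key=value, got {opt!r}")
        *parents, leaf = key.split(".")
        node = config
        for name in parents:
            node = node.setdefault(name, {})
        node[leaf] = parse_value(raw)
    return config


# Hypothetical starting config; only the Global section is overridden.
config = {"Global": {"load_static_weights": True}, "PostProcess": {"score_thresh": 0.5}}
apply_overrides(config, [
    "Global.pretrained_model=./ch_lite/ch_ppocr_mobile_v2.0_cls_train/best_accuracy",
    "Global.load_static_weights=False",
    "Global.save_inference_dir=./inference/e2e/",
])
print(config["Global"]["load_static_weights"])  # False
```

Sections that are not mentioned on the command line (here `PostProcess`) keep their YAML values, which is why only the three `Global.*` entries need to be passed.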

## II. Text detection model inference

@@ -362,38 +332,8 @@ python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/korean/1.jpg" -

Predicts of ./doc/imgs_words/korean/1.jpg:('바탕으로', 0.9948904)

```

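-
-## IV. End-to-end model inference
-
-End-to-end model inference uses the PGNet model's configuration parameters by default. When a model other than PGNet is used, the corresponding parameters must be passed at inference time to adapt to that algorithm; see below for details.
-
-### 1. PGNet end-to-end model inference
-#### (1). Quadrilateral text detection model (ICDAR2015)
-First, convert the model saved during PGNet end-to-end training into an inference model. Taking a model with a Resnet50_vd backbone trained on the ICDAR2015 English dataset as an example ([model download link](https://paddleocr.bj.bcebos.com/dygraph_v2.0/pgnet/en_server_pgnetA.tar)), run the following command to convert it:
-```
-python3 tools/export_model.py -c configs/e2e/e2e_r50_vd_pg.yml -o Global.pretrained_model=./en_server_pgnetA/iter_epoch_450 Global.load_static_weights=False Global.save_inference_dir=./inference/e2e
-```
-**For PGNet end-to-end model inference, set the parameter `--e2e_algorithm="PGNet"`**, then run the following command:
-```
-python3 tools/infer/predict_e2e.py --e2e_algorithm="PGNet" --image_dir="./doc/imgs_en/img_10.jpg" --e2e_model_dir="./inference/e2e/" --e2e_pgnet_polygon=False
-```
-The visualized text detection results are saved to the `./inference_results` folder by default, and the result file names are prefixed with 'e2e_res'. An example result:
-
-
-
-#### (2). Curved text detection model (Total-Text)
-This uses the same inference model as the quadrilateral text detection model.
-**For PGNet end-to-end model inference, set the parameter `--e2e_algorithm="PGNet"` and also add the parameter `--e2e_pgnet_polygon=True`**, then run the following command:
-```
-python3.7 tools/infer/predict_e2e.py --e2e_algorithm="PGNet" --image_dir="./doc/imgs_en/img623.jpg" --e2e_model_dir="./inference/e2e/" --e2e_pgnet_polygon=True
-```
-The visualized end-to-end text results are saved to the `./inference_results` folder by default, and the result file names are prefixed with 'e2e_res'. An example result:
-
-
-
-
-## V. Direction classification model inference
+## IV. Direction classification model inference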

The following describes inference with the direction classification model.

@@ -418,7 +358,7 @@ Predicts of ./doc/imgs_words/ch/word_4.jpg:['0', 0.9999982]

```

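-## VI. Cascaded text detection, direction classification, and text recognition inference
+## V. Cascaded text detection, direction classification, and text recognition inference
### 1. Ultra-lightweight Chinese OCR model inference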

diff --git a/doc/doc_ch/multi_languages.md b/doc/doc_ch/multi_languages.md

index a8f7c2b77f64285e0edfbd22c248e84f0bb84d42..eec09535e7242f62cdebda97cee11086e45c1096 100644

--- a/doc/doc_ch/multi_languages.md

+++ b/doc/doc_ch/multi_languages.md

@@ -5,6 +5,25 @@

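- 2021.4.9 Supports detection and recognition in **80** languages
- 2021.4.9 Supports **lightweight, high-accuracy** English models for detection and recognition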

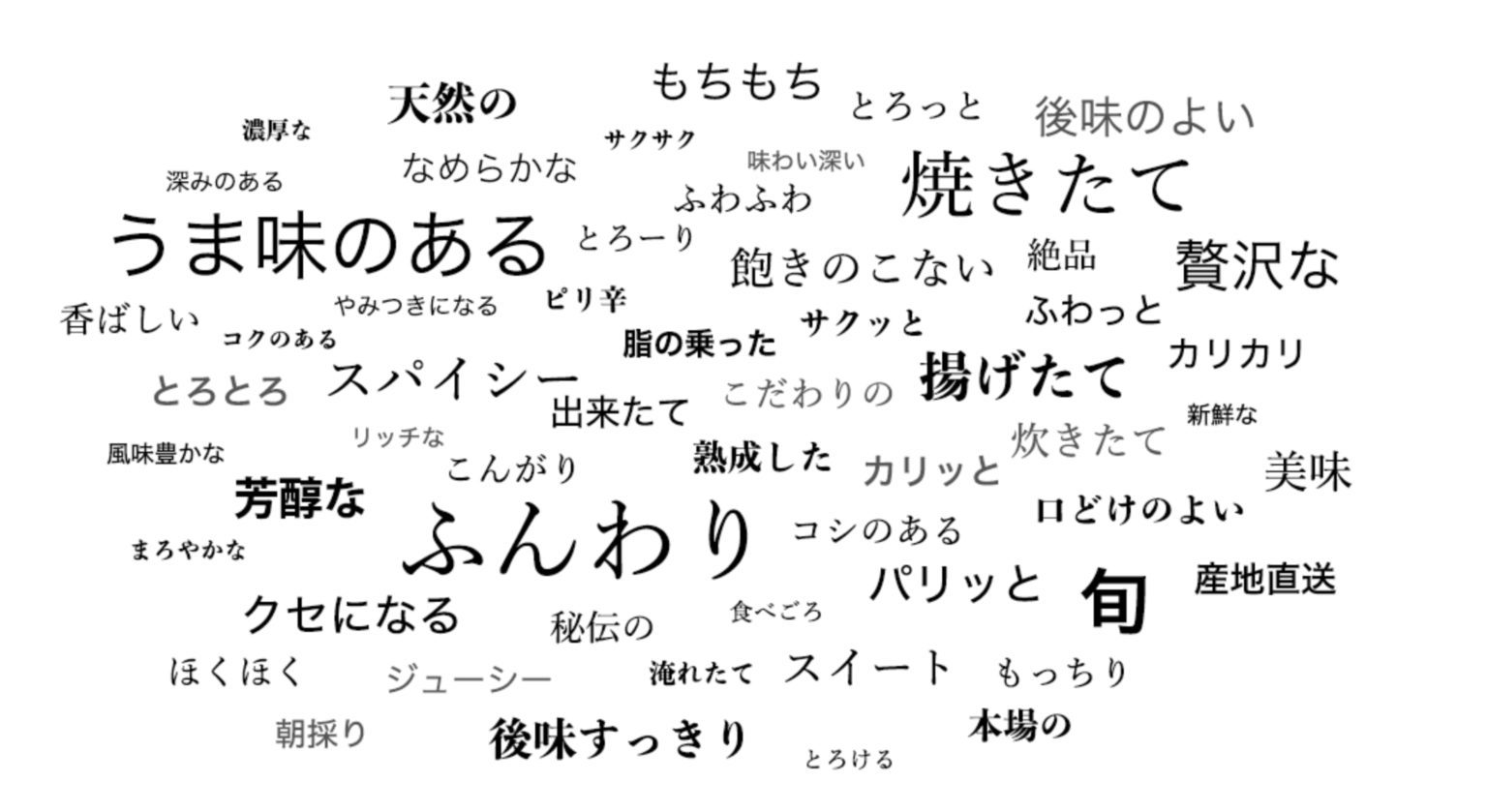

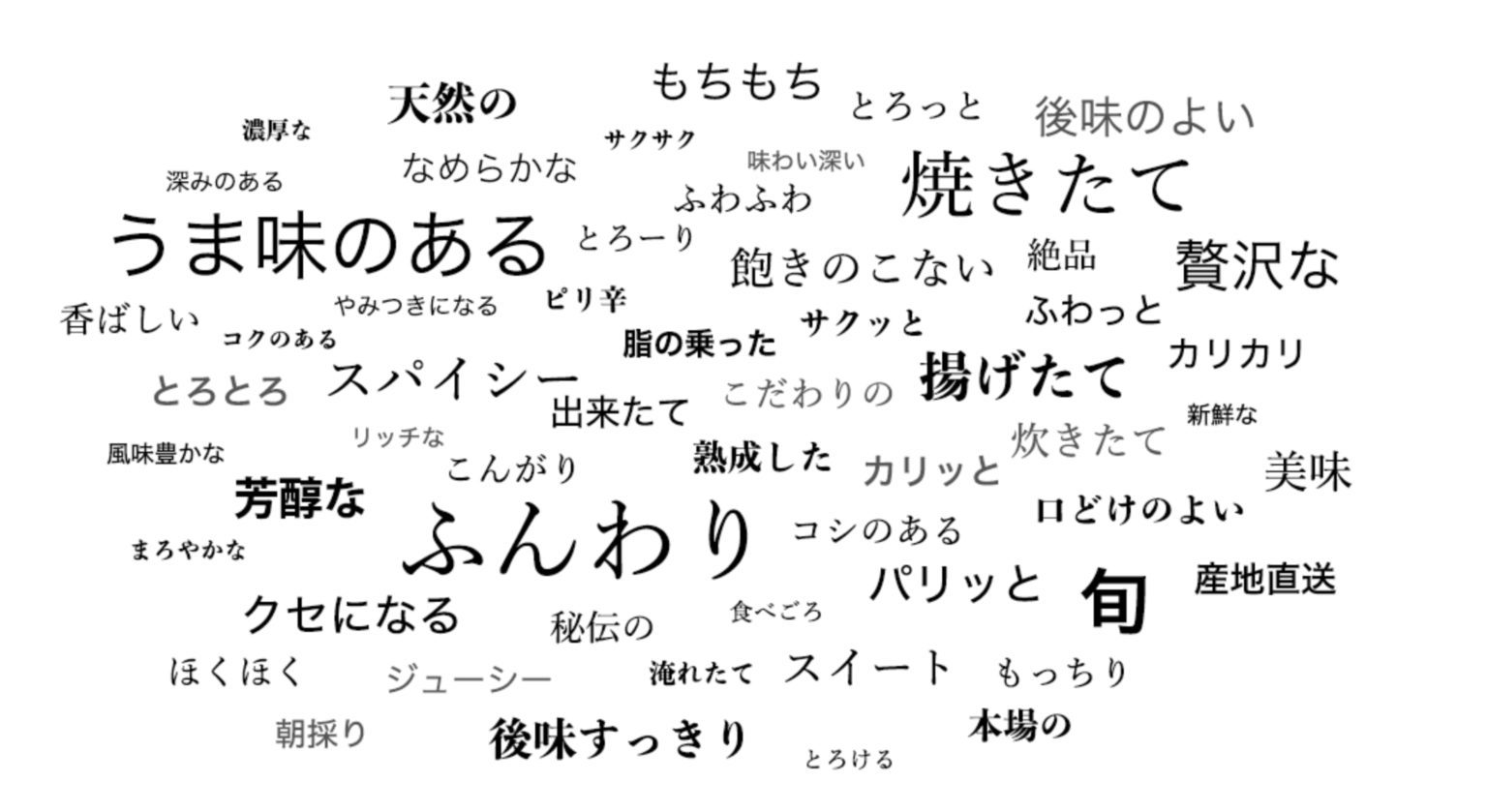

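+PaddleOCR aims to build a rich, leading, and practical OCR toolkit. Besides Chinese and English models for general scenarios, it provides models trained specifically for English scenarios,
+and multilingual models covering [80 languages](#语种缩写).
+
+The English model supports detection and recognition of uppercase and lowercase letters and common punctuation, and improves recognition of space characters: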

+

+[Figure: example detection and recognition results of the English model]