Merge branch 'add_rec_sar' of https://github.com/andyjpaddle/PaddleOCR into add_rec_sar

The image diff is too large to display. You can view the blob instead.

doc/PaddleOCR_log.png

0 → 100644

75.5 KB

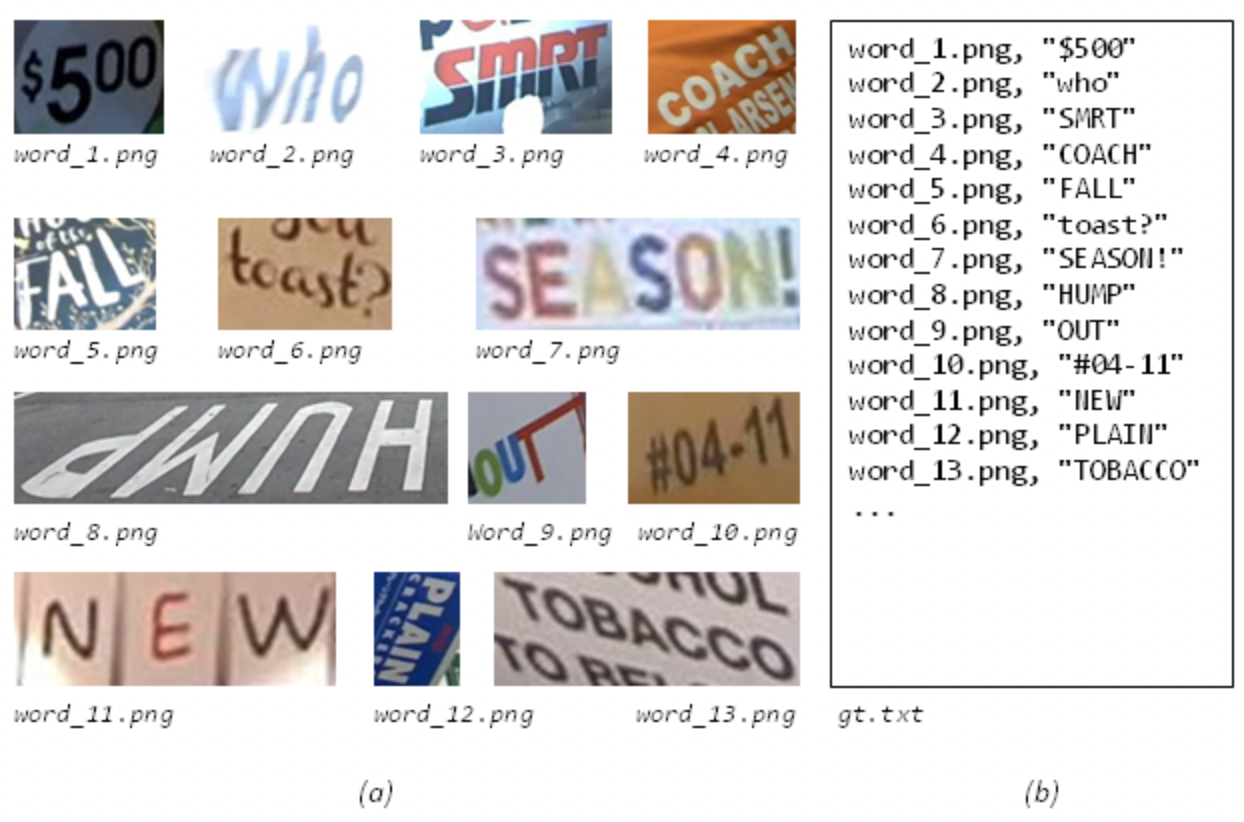

doc/datasets/icdar_rec.png

0 → 100644

921.4 KB

doc/doc_ch/environment.md

0 → 100644

doc/doc_ch/inference_ppocr.md

0 → 100644

doc/doc_ch/models_and_config.md

0 → 100644

doc/doc_ch/paddleOCR_overview.md

0 → 100644

doc/doc_ch/training.md

0 → 100644

doc/doc_en/environment_en.md

0 → 100644

doc/doc_en/inference_ppocr_en.md

0 → 100755

doc/doc_en/training_en.md

0 → 100644

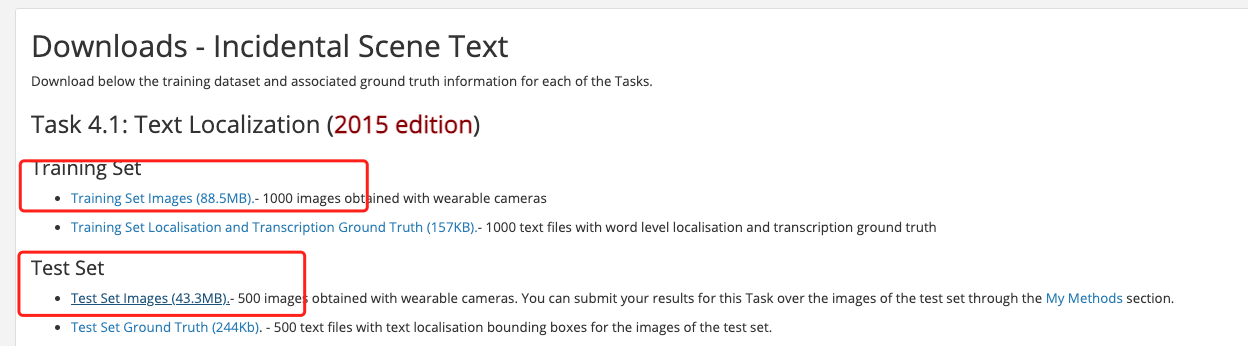

doc/ic15_location_download.png

0 → 100644

80.1 KB

192.4 KB

93.6 KB

246.4 KB

70.9 KB

48.1 KB

140.7 KB

84.5 KB

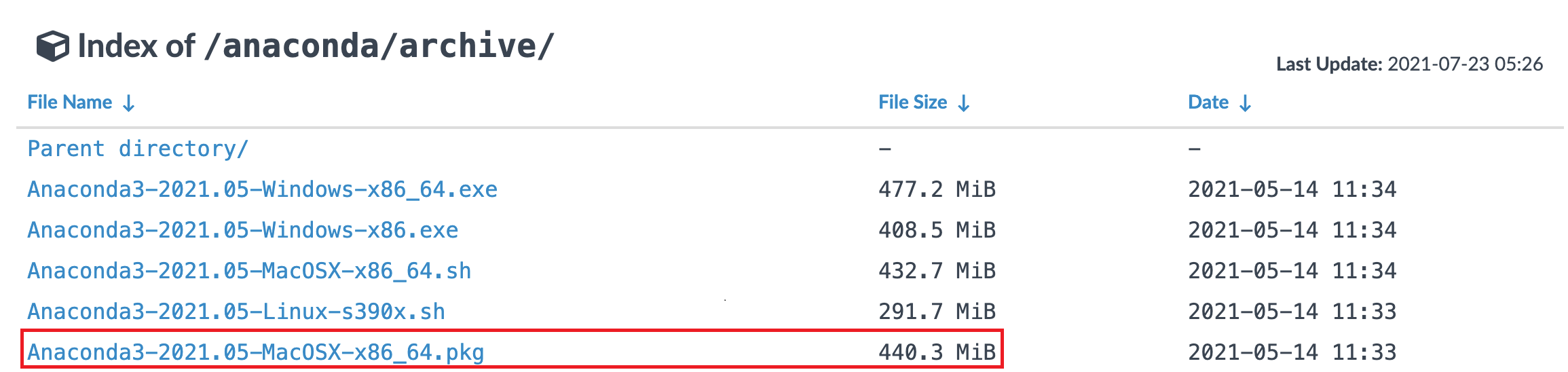

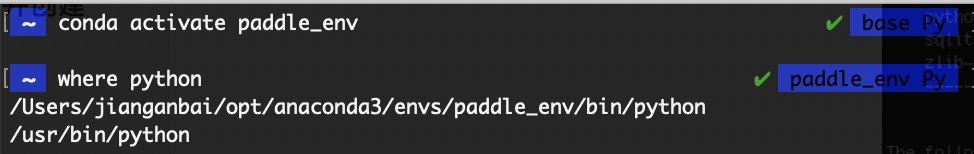

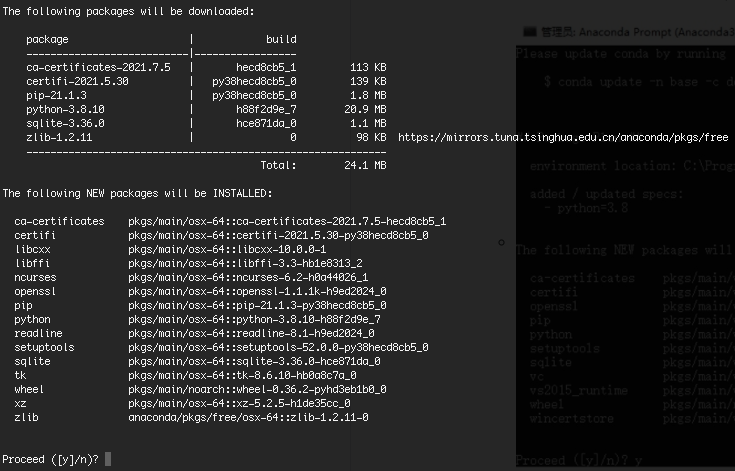

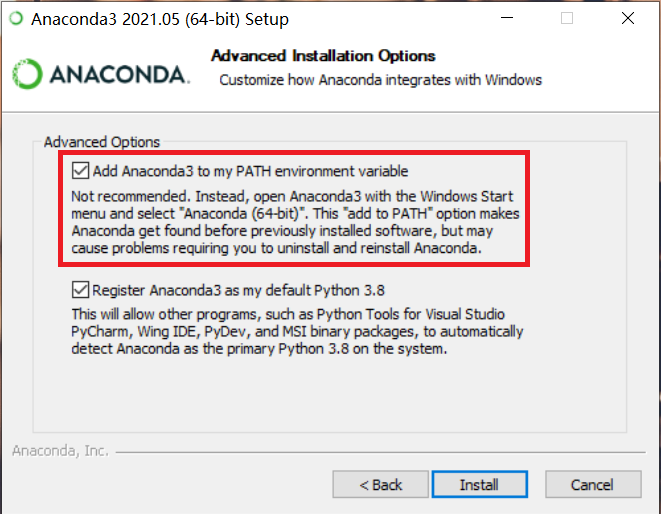

doc/install/mac/conda_create.png

0 → 100755

71.6 KB

173.2 KB

124.7 KB

73.8 KB

321.2 KB

134.9 KB

231.4 KB

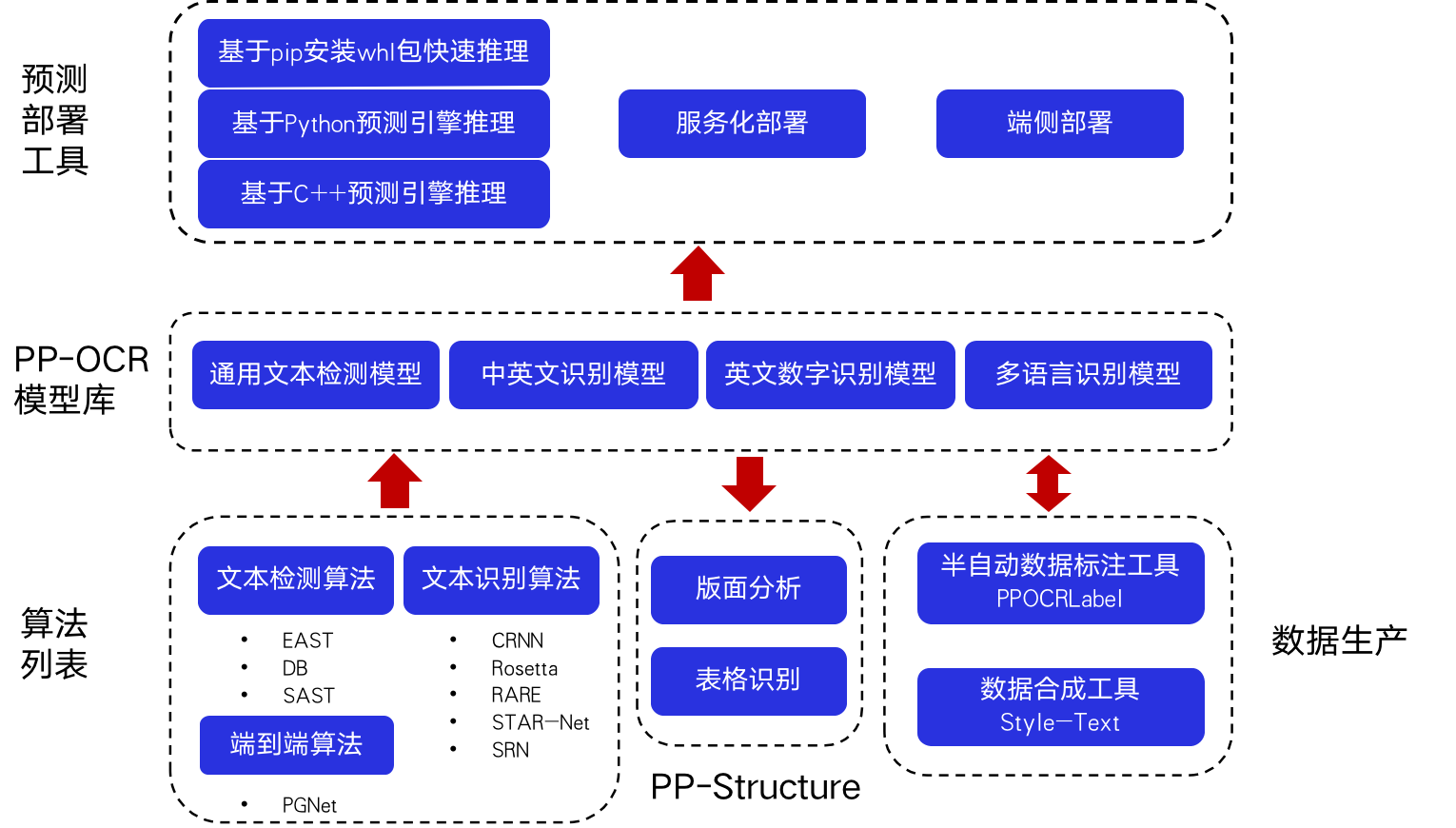

doc/overview.png

0 → 100644

142.8 KB

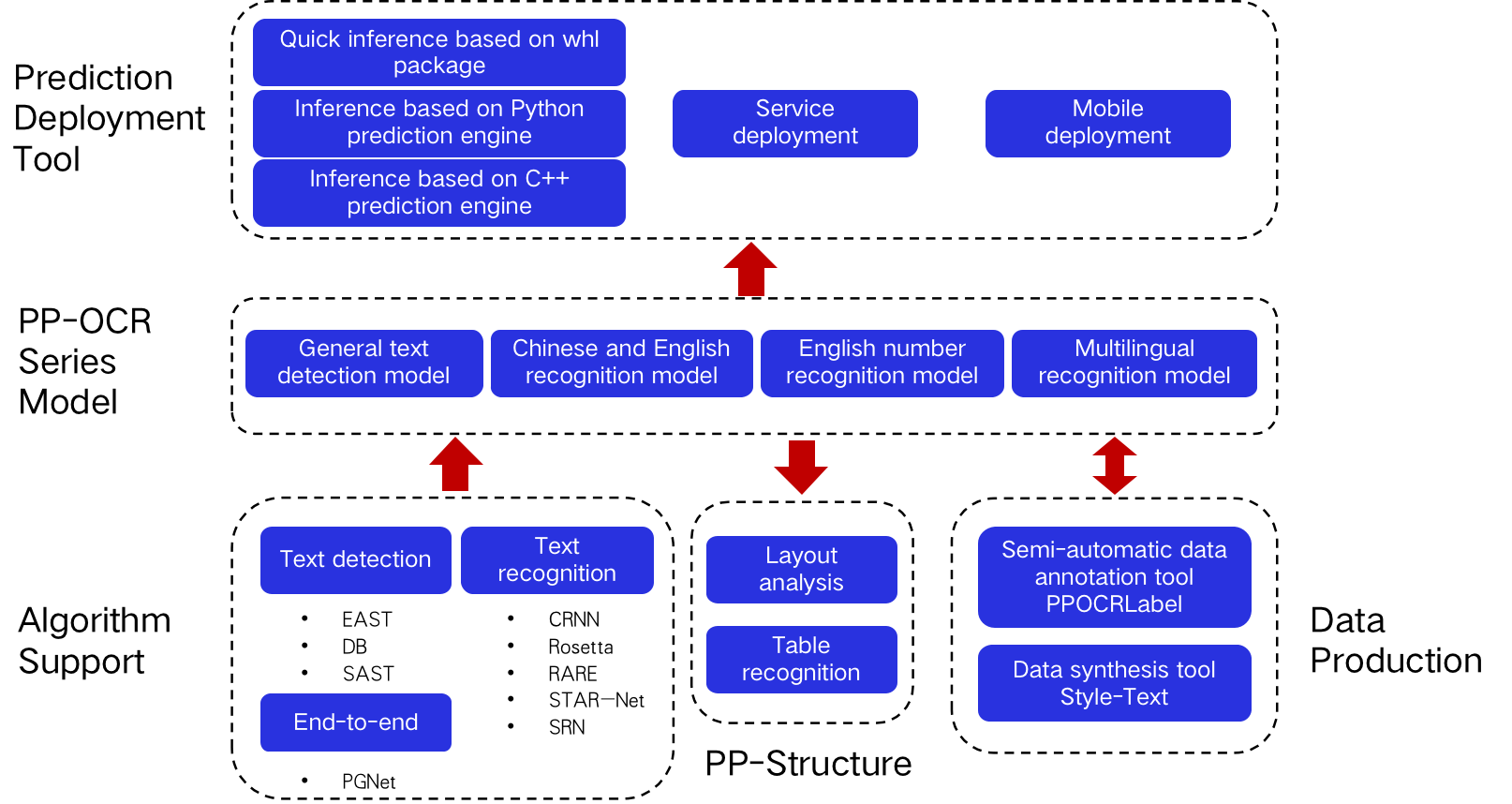

doc/overview_en.png

0 → 100644

144.4 KB

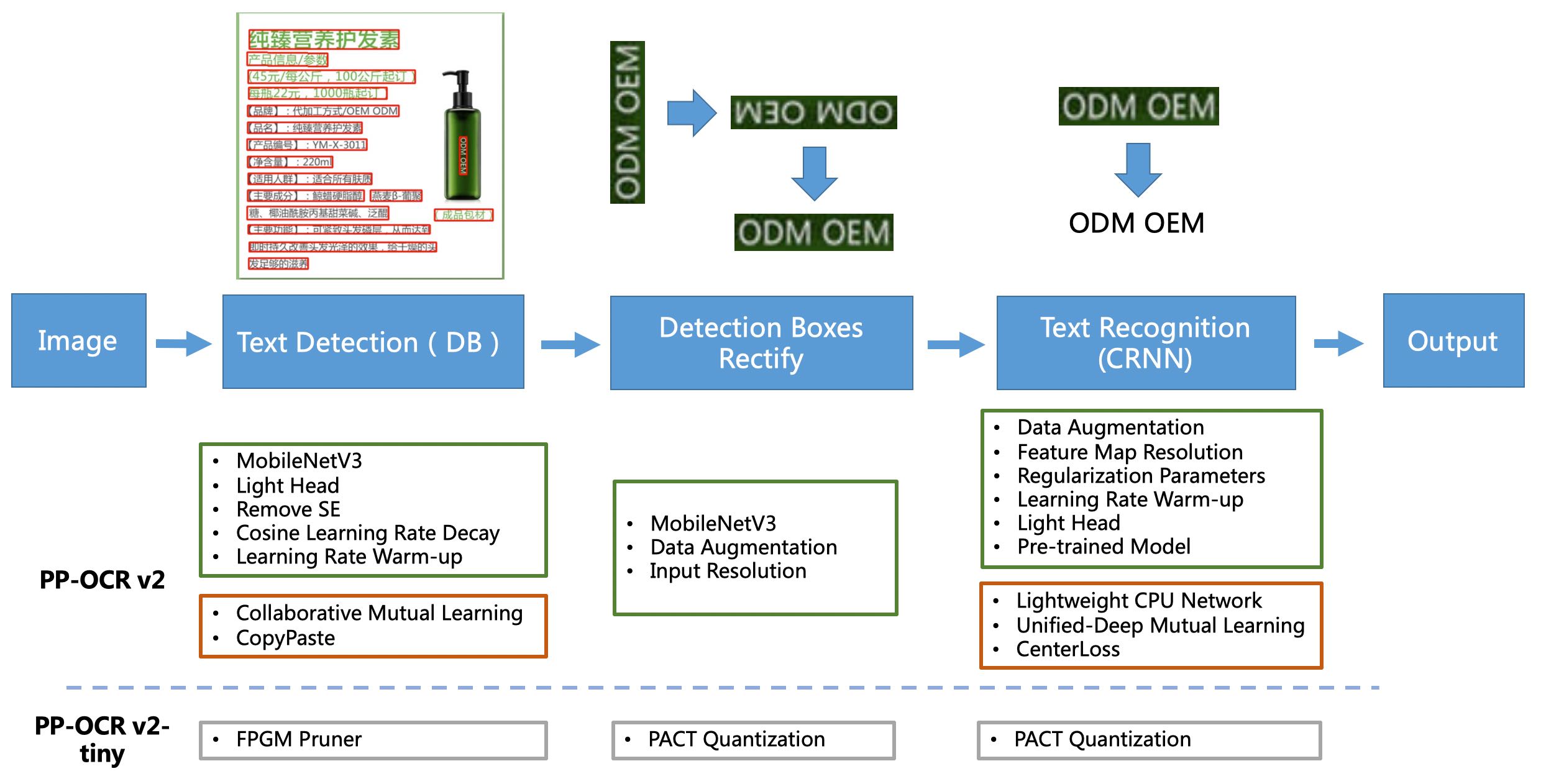

doc/ppocrv2_framework.jpg

0 → 100644

260.7 KB

tests/compare_results.py

0 → 100644