polish kie doc and code (#7255)

* add fapiao kie
* fix readme
* fix fanli
* add readme
* add how to do kie en
* add algo kie
* add algo overview en
* rename vqa to kie
* fix read gif

applications/发票关键信息抽取.md

0 → 100644

doc/doc_en/kie_en.md

0 → 100644

File moved (11 files)

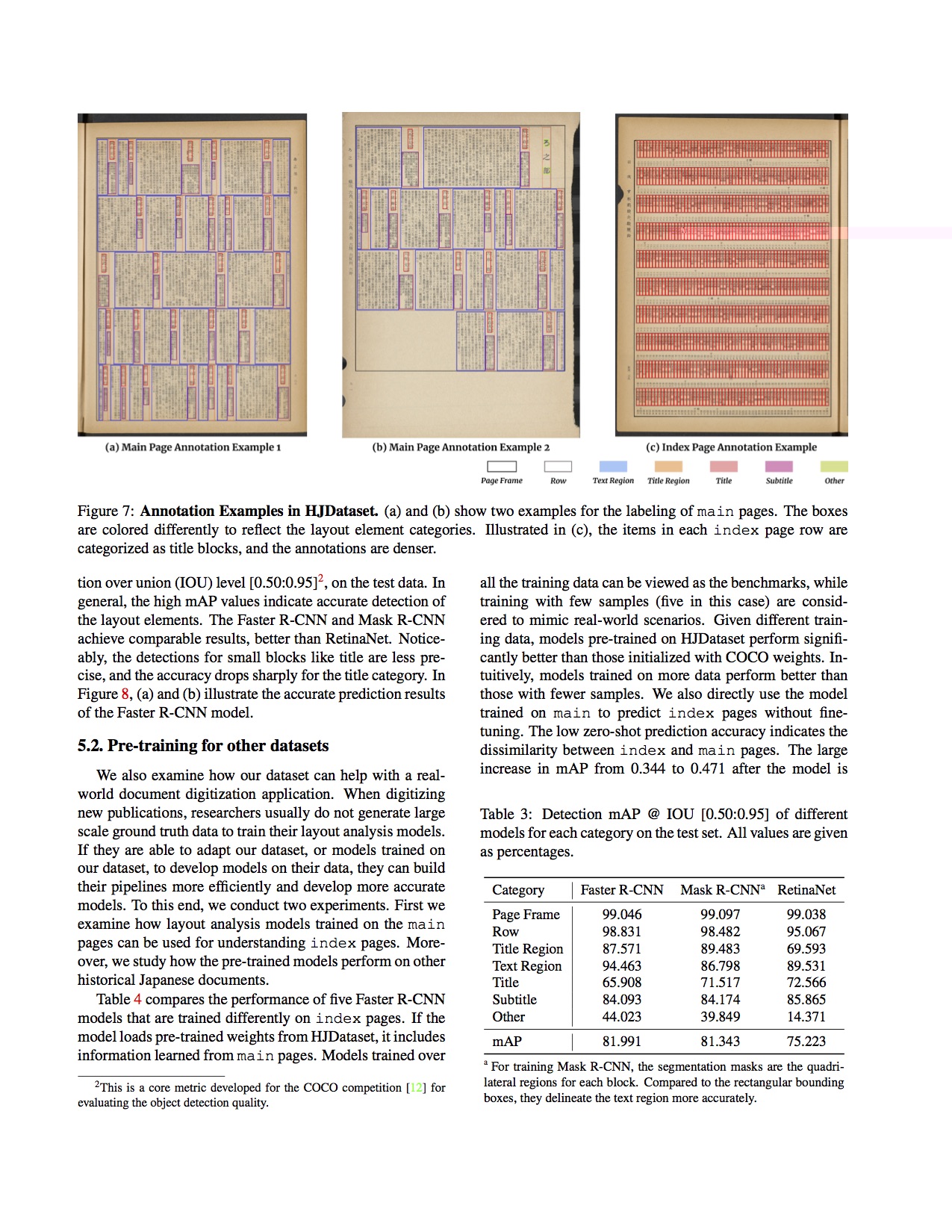

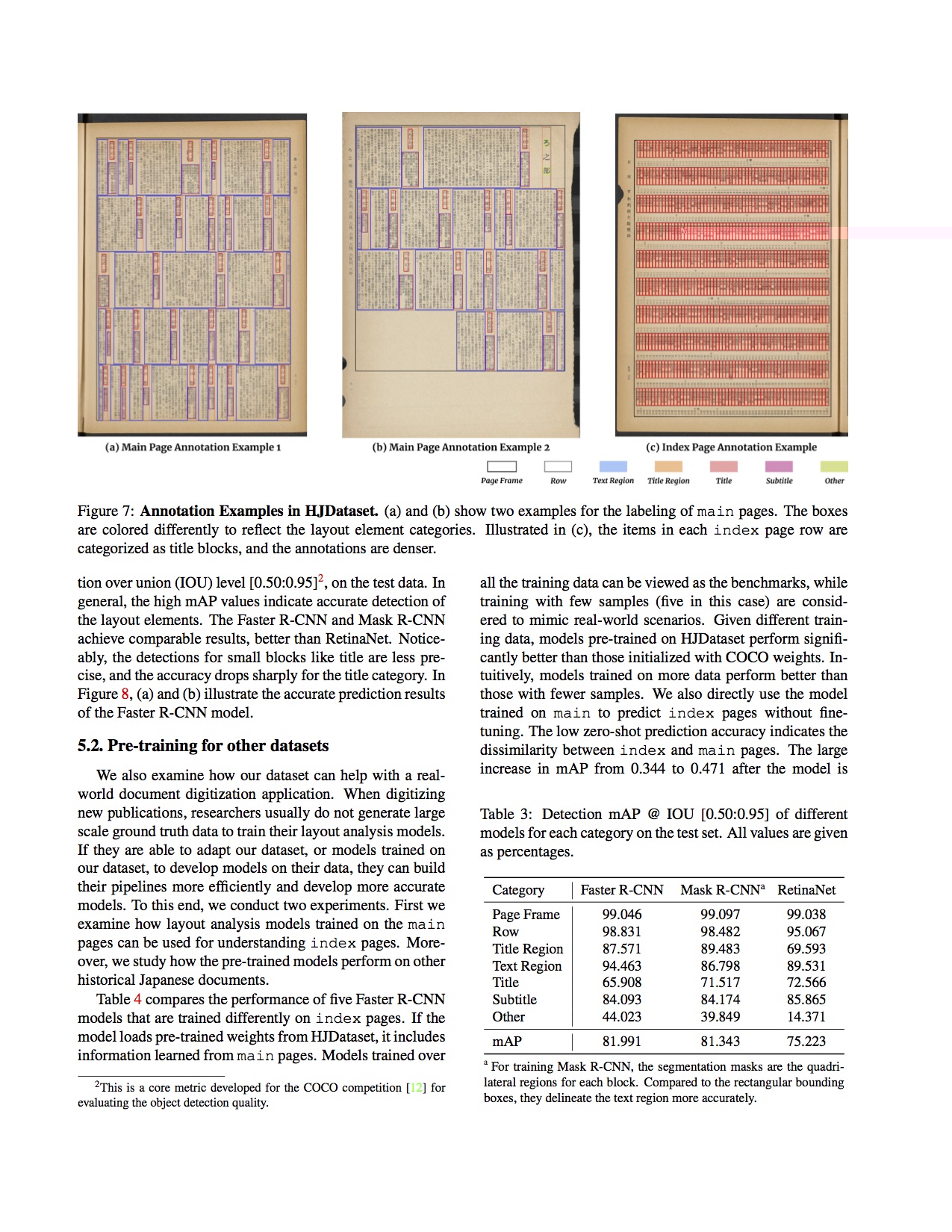

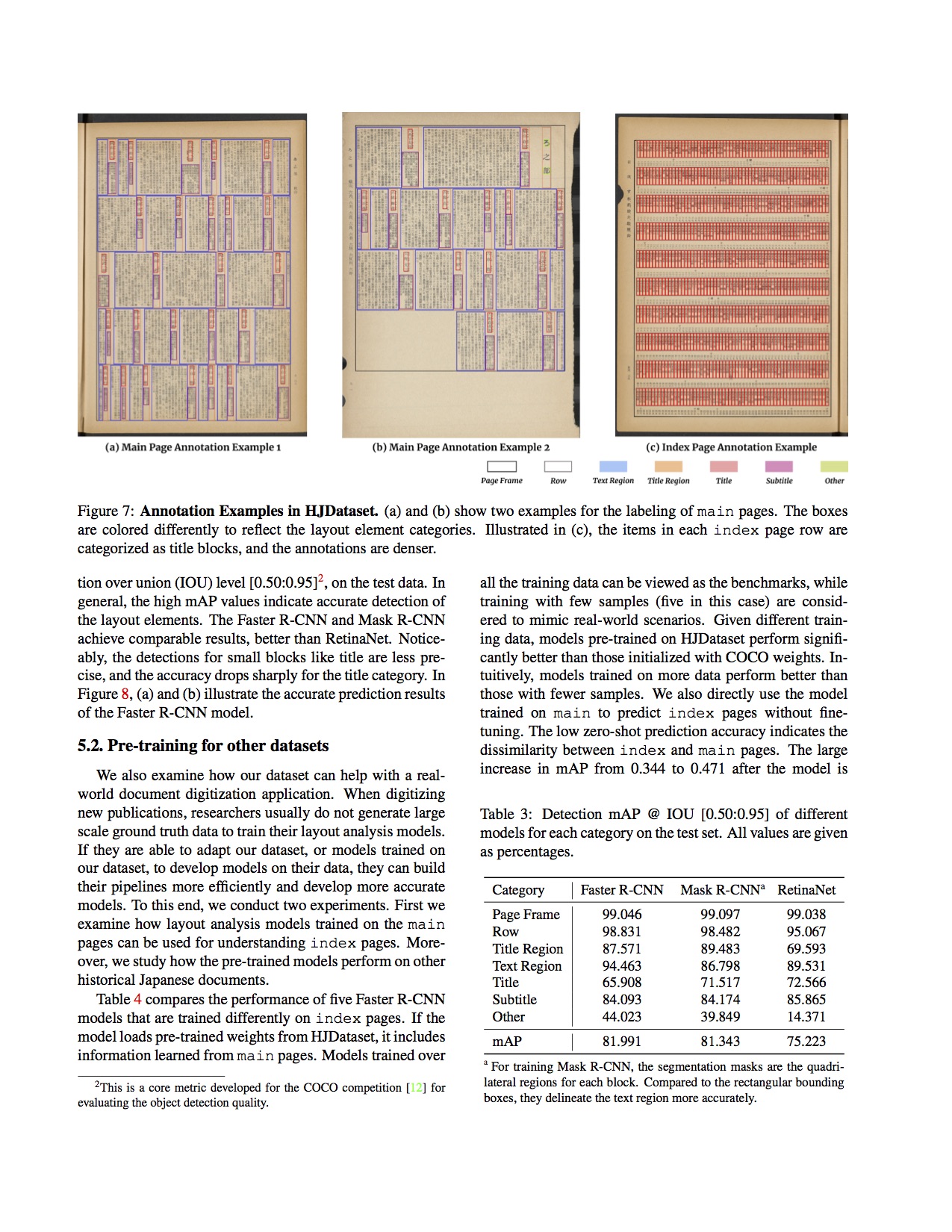

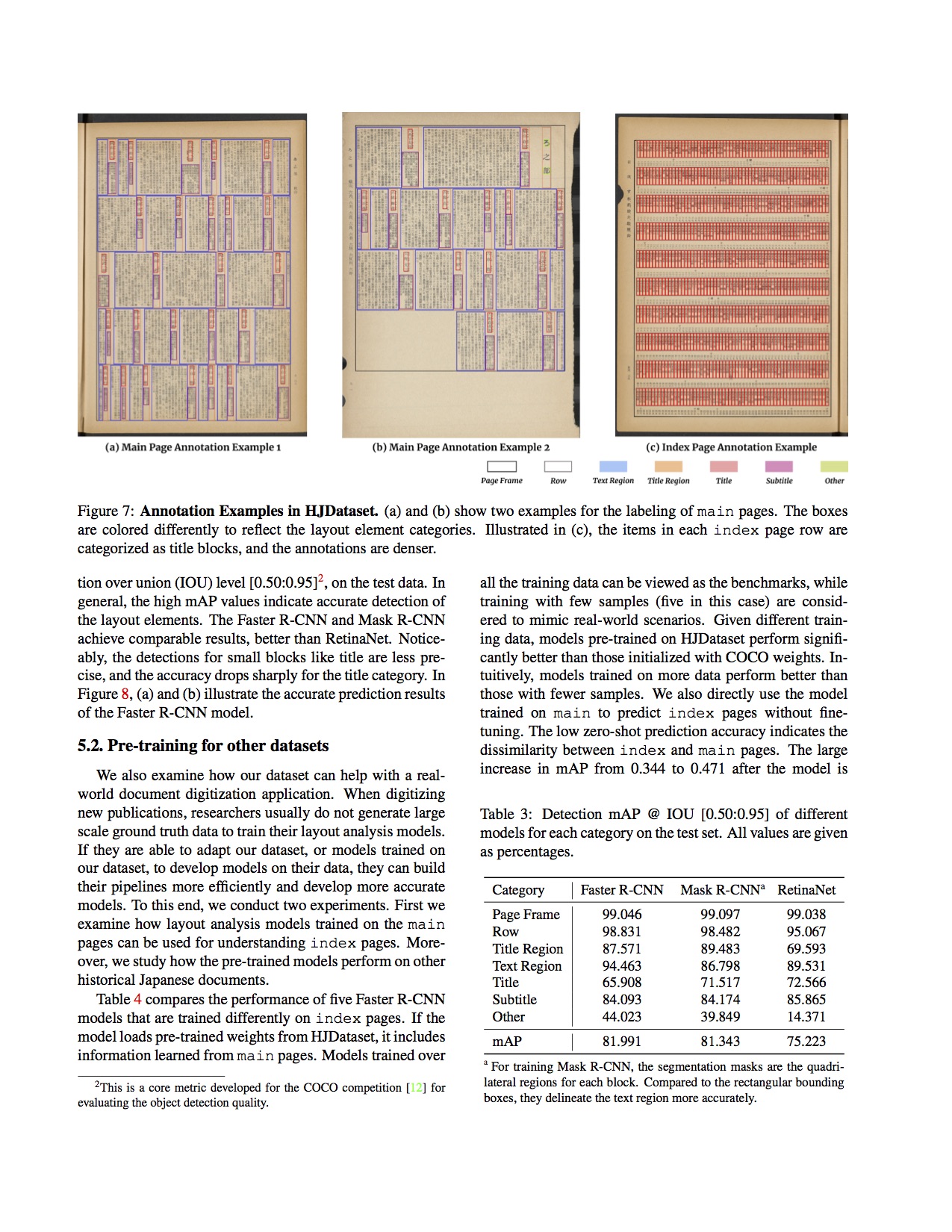

ppstructure/kie/README.md

0 → 100644

ppstructure/kie/README_ch.md

0 → 100644

File moved (3 files)

ppstructure/vqa/README.md

Deleted

100644 → 0

ppstructure/vqa/README_ch.md

Deleted

100644 → 0