diff --git a/modelcenter/ERNIE-3.0/fastdeploy_cn.md b/modelcenter/ERNIE-3.0/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..534cd04ae56572b75dc6e620df611cd6d32f981d

--- /dev/null

+++ b/modelcenter/ERNIE-3.0/fastdeploy_cn.md

@@ -0,0 +1,49 @@

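+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. It currently supports 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and lets you switch inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call the FastDeploy API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. It shows inference on X86 CPU and NVIDIA GPU only and assumes the GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).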

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/text/ernie-3.0/python

+

+# Download the ERNIE 3.0 model fine-tuned on the AFQMC dataset

+wget https://bj.bcebos.com/fastdeploy/models/ernie-3.0/ernie-3.0-medium-zh-afqmc.tgz

+tar xvfz ernie-3.0-medium-zh-afqmc.tgz

+

+# CPU inference

+python seq_cls_infer.py --device cpu --model_dir ernie-3.0-medium-zh-afqmc

+

+# GPU inference

+python seq_cls_infer.py --device gpu --model_dir ernie-3.0-medium-zh-afqmc

+```

+After the run completes, the output looks like this:

+

+```bash

+[INFO] fastdeploy/runtime.cc(469)::Init Runtime initialized with Backend::ORT in Device::CPU.

+Batch id:0, example id:0, sentence1:花呗收款额度限制, sentence2:收钱码,对花呗支付的金额有限制吗, label:1, similarity:0.5819

+Batch id:1, example id:0, sentence1:花呗支持高铁票支付吗, sentence2:为什么友付宝不支持花呗付款, label:0, similarity:0.9979

+```

+

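+### Parameter Description

+

+`seq_cls_infer.py` supports more command-line parameters than the examples above use. Each parameter is described below.

+

+| Parameter | Description |

+|----------|--------------|

+|--model_dir | Directory of the model to deploy |

+|--batch_size | Maximum batch size; default is 1 |

+|--max_length | Maximum sequence length; default is 128 |

+|--device | Device to run on. Options: ['cpu', 'gpu']; default is 'cpu' |

+|--backend | Inference backend. Options: ['onnx_runtime', 'paddle', 'openvino', 'tensorrt', 'paddle_tensorrt']; default is 'onnx_runtime' |

+|--use_fp16 | Whether to run inference in FP16 mode. Can be enabled with the tensorrt and paddle_tensorrt backends; default is False |

+|--use_fast | Whether to use FastTokenizer to speed up tokenization; default is True |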

\ No newline at end of file
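
The sample output in this file follows a regular `key:value` layout, so it can be turned into structured records. The sketch below is a minimal illustration that assumes every result line matches the two sample lines shown; `parse_result_line` and its regular expression are hypothetical helpers, not part of FastDeploy.

```python
import re

# Pattern inferred from the two sample result lines; other runs may print differently.
RESULT_RE = re.compile(
    r"Batch id:(?P<batch>\d+), example id:(?P<example>\d+), "
    r"sentence1:(?P<sentence1>.*?), sentence2:(?P<sentence2>.*?), "
    r"label:(?P<label>\d+), similarity:(?P<similarity>[0-9.]+)"
)


def parse_result_line(line):
    """Return a dict for one result line, or None for log lines such as [INFO]."""
    match = RESULT_RE.search(line)
    if match is None:
        return None
    fields = match.groupdict()
    return {
        "batch": int(fields["batch"]),
        "example": int(fields["example"]),
        "sentence1": fields["sentence1"],
        "sentence2": fields["sentence2"],
        "label": int(fields["label"]),
        "similarity": float(fields["similarity"]),
    }
```

Lines that do not match, such as the `[INFO]` runtime banner, come back as `None` and can be filtered out.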

diff --git a/modelcenter/ERNIE-3.0/fastdeploy_en.md b/modelcenter/ERNIE-3.0/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..4ed92be9c196c9930044f5e065f09c49d673e892

--- /dev/null

+++ b/modelcenter/ERNIE-3.0/fastdeploy_en.md

@@ -0,0 +1,50 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and the GPU environment is assumed to be ready (e.g. CUDA >= 11.2). To deploy AI models on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/text/ernie-3.0/python

+

+# download the fine-tuned ERNIE 3.0 model trained from the AFQMC dataset

+wget https://bj.bcebos.com/fastdeploy/models/ernie-3.0/ernie-3.0-medium-zh-afqmc.tgz

+tar xvfz ernie-3.0-medium-zh-afqmc.tgz

+

+# CPU deployment

+python seq_cls_infer.py --device cpu --model_dir ernie-3.0-medium-zh-afqmc

+

+# GPU deployment

+python seq_cls_infer.py --device gpu --model_dir ernie-3.0-medium-zh-afqmc

+```

+After the run completes, the output looks like this:

+

+```bash

+[INFO] fastdeploy/runtime.cc(469)::Init Runtime initialized with Backend::ORT in Device::CPU.

+Batch id:0, example id:0, sentence1:花呗收款额度限制, sentence2:收钱码,对花呗支付的金额有限制吗, label:1, similarity:0.5819

+Batch id:1, example id:0, sentence1:花呗支持高铁票支付吗, sentence2:为什么友付宝不支持花呗付款, label:0, similarity:0.9979

+```

+

+### Parameter Description

+

+`seq_cls_infer.py` supports more command-line parameters than the examples above use. Each parameter is described below.

+

+| Parameter | Description |

+|----------|--------------|

+|--model_dir | Directory of the model to deploy |

+|--batch_size | Maximum batch size; default is 1 |

+|--max_length | Maximum sequence length; default is 128 |

+|--device | Device to run on. Options: ['cpu', 'gpu']; default is 'cpu' |

+|--backend | Inference backend. Options: ['onnx_runtime', 'paddle', 'openvino', 'tensorrt', 'paddle_tensorrt']; default is 'onnx_runtime' |

+|--use_fp16 | Whether to run inference in FP16 mode. Can be enabled with the tensorrt and paddle_tensorrt backends; default is False |

+|--use_fast | Whether to use FastTokenizer to speed up tokenization; default is True |

\ No newline at end of file
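
The parameter table above can be read as an `argparse` specification. The sketch below is one possible reading, not the actual source of `seq_cls_infer.py`: the option names, choices, and defaults come from the table, while `str2bool`, `check_args`, the `required=True` setting, and the decision to reject `--use_fp16` on non-TensorRT backends are assumptions made for illustration.

```python
import argparse

BACKENDS = ["onnx_runtime", "paddle", "openvino", "tensorrt", "paddle_tensorrt"]


def str2bool(value):
    """Interpret 'True'/'False'-style strings (hypothetical helper)."""
    return str(value).lower() in ("true", "1", "yes")


def build_parser():
    """Build a parser mirroring the documented options and defaults."""
    parser = argparse.ArgumentParser(description="Sketch of the seq_cls_infer.py options")
    # Assumption: the model directory has no documented default, so it is required here.
    parser.add_argument("--model_dir", required=True)
    parser.add_argument("--batch_size", type=int, default=1)
    parser.add_argument("--max_length", type=int, default=128)
    parser.add_argument("--device", choices=["cpu", "gpu"], default="cpu")
    parser.add_argument("--backend", choices=BACKENDS, default="onnx_runtime")
    parser.add_argument("--use_fp16", type=str2bool, default=False)
    parser.add_argument("--use_fast", type=str2bool, default=True)
    return parser


def check_args(args):
    """Assumed policy: FP16 is only meaningful with the TensorRT-based backends."""
    if args.use_fp16 and args.backend not in ("tensorrt", "paddle_tensorrt"):
        raise ValueError("--use_fp16 requires the tensorrt or paddle_tensorrt backend")
    return args
```

For example, `check_args(build_parser().parse_args(["--model_dir", "ernie-3.0-medium-zh-afqmc"]))` yields the documented defaults for every other option.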

diff --git a/modelcenter/PP-HGNet/fastdeploy_cn.md b/modelcenter/PP-HGNet/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..bd38bf0af3eaa8001b962e7ce18d157cfc5fe16e

--- /dev/null

+++ b/modelcenter/PP-HGNet/fastdeploy_cn.md

@@ -0,0 +1,40 @@

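+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. It currently supports 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and lets you switch inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call the FastDeploy API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. It shows inference on X86 CPU and NVIDIA GPU only and assumes the GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).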

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/classification/paddleclas/python

+

+# Download the HGNet model files and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PPHGNet_tiny_ssld_infer.tgz

+tar xvfz PPHGNet_tiny_ssld_infer.tgz

+wget https://gitee.com/paddlepaddle/PaddleClas/raw/release/2.4/deploy/images/ImageNet/ILSVRC2012_val_00000010.jpeg

+

+# CPU inference

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device cpu --topk 1

+# GPU inference

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --topk 1

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --use_trt True --topk 1

+# IPU inference (note: the first IPU run serializes the model, which takes some time; please be patient)

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device ipu --topk 1

+```

+After the run completes, the output looks like this:

+

+```bash

+==============================PPHGNet_tiny_ssld==============================

+cpu_label: 153, cpu_score: 0.536040

+ipu_label: 153, ipu_score: 0.536039

+==============================PPHGNet_tiny_ssld==============================

+```

\ No newline at end of file

diff --git a/modelcenter/PP-HGNet/fastdeploy_en.md b/modelcenter/PP-HGNet/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..a10028afdf47d7049461796b141ecb1fd8c25f5b

--- /dev/null

+++ b/modelcenter/PP-HGNet/fastdeploy_en.md

@@ -0,0 +1,42 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and the GPU environment is assumed to be ready (e.g. CUDA >= 11.2). To deploy AI models on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/classification/paddleclas/python

+

+# download HGNet model and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PPHGNet_tiny_ssld_infer.tgz

+tar xvfz PPHGNet_tiny_ssld_infer.tgz

+wget https://gitee.com/paddlepaddle/PaddleClas/raw/release/2.4/deploy/images/ImageNet/ILSVRC2012_val_00000010.jpeg

+

+# CPU deployment

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device cpu --topk 1

+# GPU deployment

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --topk 1

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --use_trt True --topk 1

+# IPU inference (note: the first IPU run serializes the model, which takes some time; please be patient)

+python infer.py --model PPHGNet_tiny_ssld_infer --image ILSVRC2012_val_00000010.jpeg --device ipu --topk 1

+```

+

+After the run completes, the output looks like this:

+

+```bash

+==============================PPHGNet_tiny_ssld==============================

+cpu_label: 153, cpu_score: 0.536040

+ipu_label: 153, ipu_score: 0.536039

+==============================PPHGNet_tiny_ssld==============================

+```

\ No newline at end of file
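
The sample output above reports a label and score per device, which makes it easy to check that two devices agree. The following sketch assumes the `<device>_label: N, <device>_score: X` layout shown in the sample; `parse_results`, `devices_agree`, and the `1e-4` tolerance are illustrative choices, not FastDeploy behavior.

```python
import re

# Matches lines such as "cpu_label: 153, cpu_score: 0.536040".
LINE_RE = re.compile(r"(\w+)_label:\s*(\d+),\s*\1_score:\s*([0-9.]+)")


def parse_results(output):
    """Map each device name to its (label, score) pair."""
    results = {}
    for match in LINE_RE.finditer(output):
        device, label, score = match.groups()
        results[device] = (int(label), float(score))
    return results


def devices_agree(results, tol=1e-4):
    """True when all devices predict the same label with scores within tol."""
    labels = {label for label, _ in results.values()}
    scores = [score for _, score in results.values()]
    return len(labels) == 1 and max(scores) - min(scores) <= tol
```

Applied to the sample output, both devices report label 153 and the scores differ only in the sixth decimal place, so the check passes under the assumed tolerance.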

diff --git a/modelcenter/PP-HumanSegV2/fastdeploy_cn.md b/modelcenter/PP-HumanSegV2/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..2445b2c3825bcedc83455523f90fd68c5c42e6ab

--- /dev/null

+++ b/modelcenter/PP-HumanSegV2/fastdeploy_cn.md

@@ -0,0 +1,30 @@

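+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. It currently supports 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and lets you switch inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call the FastDeploy API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. It shows inference on X86 CPU and NVIDIA GPU only and assumes the GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).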

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/segmentation/paddleseg/python

+

+# Download the HumanSegV2 model files and test image

+wget https://bj.bcebos.com/paddle2onnx/libs/PP_HumanSegV2_Lite_192x192_infer.tgz

+tar -xvf PP_HumanSegV2_Lite_192x192_infer.tgz

+wget https://paddleseg.bj.bcebos.com/dygraph/demo/cityscapes_demo.png

+

+# CPU inference

+python infer.py --model PP_HumanSegV2_Lite_192x192_infer --image cityscapes_demo.png --device cpu

+# GPU inference

+python infer.py --model PP_HumanSegV2_Lite_192x192_infer --image cityscapes_demo.png --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PP_HumanSegV2_Lite_192x192_infer --image cityscapes_demo.png --device gpu --use_trt True

+```

\ No newline at end of file

diff --git a/modelcenter/PP-HumanSegV2/fastdeploy_en.md b/modelcenter/PP-HumanSegV2/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..035b8c40d3a00f6e36a8a2a21d2a22a5fa320b3f

--- /dev/null

+++ b/modelcenter/PP-HumanSegV2/fastdeploy_en.md

@@ -0,0 +1,33 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and the GPU environment is assumed to be ready (e.g. CUDA >= 11.2). To deploy AI models on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/segmentation/paddleseg/python

+

+# download HumanSegV2 model and test image

+wget https://bj.bcebos.com/paddle2onnx/libs/PP_HumanSegV2_Lite_192x192_infer.tgz

+tar -xvf PP_HumanSegV2_Lite_192x192_infer.tgz

+wget https://paddleseg.bj.bcebos.com/dygraph/demo/cityscapes_demo.png

+

+# CPU deployment

+python infer.py --model PP_HumanSegV2_Lite_192x192_infer --image cityscapes_demo.png --device cpu

+

+# GPU deployment

+python infer.py --model PP_HumanSegV2_Lite_192x192_infer --image cityscapes_demo.png --device gpu

+

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PP_HumanSegV2_Lite_192x192_infer --image cityscapes_demo.png --device gpu --use_trt True

+```

\ No newline at end of file
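
The `--device` and `--use_trt` flags used above correspond to the "single line of code" switch the introduction mentions. The sketch below shows one way such flags could be mapped onto a runtime-option object. It is an illustration only: `configure_option` is a hypothetical helper, and the method names `use_cpu`, `use_gpu`, and `use_trt_backend` are assumed to match FastDeploy's `RuntimeOption`; check the FastDeploy documentation before relying on them.

```python
def configure_option(option, device="cpu", use_trt=False):
    """Apply device and backend choices to a RuntimeOption-like object.

    `option` is duck-typed: any object exposing use_cpu(), use_gpu(), and
    use_trt_backend() works (assumed to include fastdeploy.RuntimeOption).
    """
    if device == "gpu":
        option.use_gpu()
        if use_trt:
            # TensorRT only applies on GPU in the commands shown above.
            option.use_trt_backend()
    elif device == "cpu":
        option.use_cpu()
    else:
        raise ValueError(f"unsupported device: {device}")
    return option
```

With FastDeploy installed, a call such as `configure_option(fastdeploy.RuntimeOption(), "gpu", use_trt=True)` would be the assumed usage.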

diff --git a/modelcenter/PP-LCNet/fastdeploy_cn.md b/modelcenter/PP-LCNet/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..94f5bc6ca1596b5f69fe9d90d28fedf98125dc80

--- /dev/null

+++ b/modelcenter/PP-LCNet/fastdeploy_cn.md

@@ -0,0 +1,41 @@

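+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. It currently supports 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and lets you switch inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call the FastDeploy API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. It shows inference on X86 CPU and NVIDIA GPU only and assumes the GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).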

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/classification/paddleclas/python

+

+# Download the LCNet model files and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PPLCNet_x1_0_infer.tgz

+tar -xvf PPLCNet_x1_0_infer.tgz

+wget https://gitee.com/paddlepaddle/PaddleClas/raw/release/2.4/deploy/images/ImageNet/ILSVRC2012_val_00000010.jpeg

+

+# CPU inference

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device cpu --topk 1

+# GPU inference

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --topk 1

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --use_trt True --topk 1

+# IPU inference (note: the first IPU run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device ipu --topk 1

+```

+

+After the run completes, the output looks like this:

+

+```bash

+==============================PPLCNet_x1_0==============================

+cpu_label: 153, cpu_score: 0.612086

+ipu_label: 153, ipu_score: 0.612087

+==============================PPLCNet_x1_0==============================

+```

\ No newline at end of file

diff --git a/modelcenter/PP-LCNet/fastdeploy_en.md b/modelcenter/PP-LCNet/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..04700ef3c0b3701fcb792867432bb6c50406cf5d

--- /dev/null

+++ b/modelcenter/PP-LCNet/fastdeploy_en.md

@@ -0,0 +1,42 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and the GPU environment is assumed to be ready (e.g. CUDA >= 11.2). To deploy AI models on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/classification/paddleclas/python

+

+# download LCNet model and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PPLCNet_x1_0_infer.tgz

+tar -xvf PPLCNet_x1_0_infer.tgz

+wget https://gitee.com/paddlepaddle/PaddleClas/raw/release/2.4/deploy/images/ImageNet/ILSVRC2012_val_00000010.jpeg

+

+# CPU deployment

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device cpu --topk 1

+# GPU deployment

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --topk 1

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --use_trt True --topk 1

+# IPU inference (note: the first IPU run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNet_x1_0_infer --image ILSVRC2012_val_00000010.jpeg --device ipu --topk 1

+```

+

+After the run completes, the output looks like this:

+

+```bash

+==============================PPLCNet_x1_0==============================

+cpu_label: 153, cpu_score: 0.612086

+ipu_label: 153, ipu_score: 0.612087

+==============================PPLCNet_x1_0==============================

+```

\ No newline at end of file
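
The commands above pass `--topk 1`, which presumably limits the result to the single highest-scoring class. Under that assumed interpretation, top-k selection over a list of class scores can be sketched as follows; `topk` is a hypothetical helper written for illustration and is not taken from `infer.py`.

```python
def topk(scores, k=1):
    """Return (label, score) pairs for the k highest scores, best first."""
    # enumerate() pairs each score with its class index (the label).
    ranked = sorted(enumerate(scores), key=lambda pair: pair[1], reverse=True)
    return ranked[:k]
```

With `k=1` the function returns a one-element list, matching the single label and score per device printed in the sample output.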

diff --git a/modelcenter/PP-LCNetV2/fastdeploy_cn.md b/modelcenter/PP-LCNetV2/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..da6229738dad46298f14693c25f9d7ec87cd25b9

--- /dev/null

+++ b/modelcenter/PP-LCNetV2/fastdeploy_cn.md

@@ -0,0 +1,41 @@

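+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. It currently supports 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and lets you switch inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call the FastDeploy API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. It shows inference on X86 CPU and NVIDIA GPU only and assumes the GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).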

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/classification/paddleclas/python

+

+# Download the LCNetV2 model files and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PPLCNetV2_base_infer.tgz

+tar -xvf PPLCNetV2_base_infer.tgz

+wget https://gitee.com/paddlepaddle/PaddleClas/raw/release/2.4/deploy/images/ImageNet/ILSVRC2012_val_00000010.jpeg

+

+# CPU inference

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device cpu --topk 1

+# GPU inference

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --topk 1

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --use_trt True --topk 1

+# IPU inference (note: the first IPU run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device ipu --topk 1

+```

+

+After the run completes, the output looks like this:

+

+```bash

+==============================PPLCNetV2_base==============================

+cpu_label: 332, cpu_score: 0.278354

+ipu_label: 332, ipu_score: 0.278357

+==============================PPLCNetV2_base==============================

+```

\ No newline at end of file

diff --git a/modelcenter/PP-LCNetV2/fastdeploy_en.md b/modelcenter/PP-LCNetV2/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..f82a680dc712c23a6ea62123413656d406cd296a

--- /dev/null

+++ b/modelcenter/PP-LCNetV2/fastdeploy_en.md

@@ -0,0 +1,42 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and the GPU environment is assumed to be ready (e.g. CUDA >= 11.2). To deploy AI models on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/classification/paddleclas/python

+

+# download LCNetv2 model and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PPLCNetV2_base_infer.tgz

+tar -xvf PPLCNetV2_base_infer.tgz

+wget https://gitee.com/paddlepaddle/PaddleClas/raw/release/2.4/deploy/images/ImageNet/ILSVRC2012_val_00000010.jpeg

+

+# CPU deployment

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device cpu --topk 1

+# GPU deployment

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --topk 1

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device gpu --use_trt True --topk 1

+# IPU inference (note: the first IPU run serializes the model, which takes some time; please be patient)

+python infer.py --model PPLCNetV2_base_infer --image ILSVRC2012_val_00000010.jpeg --device ipu --topk 1

+```

+

+After the run completes, the output looks like this:

+

+```bash

+==============================PPLCNetV2_base==============================

+cpu_label: 332, cpu_score: 0.278354

+ipu_label: 332, ipu_score: 0.278357

+==============================PPLCNetV2_base==============================

+```

\ No newline at end of file
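
The four inference commands above differ only in `--device` and the optional `--use_trt True`. A small builder makes that pattern explicit and avoids copy-paste mistakes when scripting runs. This is a sketch: `infer_command` is a hypothetical helper, and it only reproduces the flags that appear in the commands above.

```python
import shlex


def infer_command(model, image, device="cpu", use_trt=False, topk=1):
    """Build the argv list for one classification run of infer.py."""
    argv = ["python", "infer.py", "--model", model, "--image", image, "--device", device]
    if use_trt:
        # Mirrors the "--use_trt True" form used in the TensorRT example.
        argv += ["--use_trt", "True"]
    argv += ["--topk", str(topk)]
    return argv


# Print the TensorRT variant as a shell-ready string.
print(shlex.join(infer_command(
    "PPLCNetV2_base_infer", "ILSVRC2012_val_00000010.jpeg", device="gpu", use_trt=True)))
```

Returning a list rather than a string lets the result be passed directly to `subprocess.run` without shell quoting concerns.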

diff --git a/modelcenter/PP-MSVSR/fastdeploy_cn.md b/modelcenter/PP-MSVSR/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..783c244272f00d6e49258ed6f7d3f9d72a09984d

--- /dev/null

+++ b/modelcenter/PP-MSVSR/fastdeploy_cn.md

@@ -0,0 +1,34 @@

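+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. It currently supports 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and lets you switch inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call the FastDeploy API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. It shows inference on X86 CPU and NVIDIA GPU only and assumes the GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).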

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/sr/ppmsvsr/python

+

+# Download the VSR model files and test video

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PP-MSVSR_reds_x4.tar

+tar -xvf PP-MSVSR_reds_x4.tar

+wget https://bj.bcebos.com/paddlehub/fastdeploy/vsr_src.mp4

+# CPU inference

+python infer.py --model PP-MSVSR_reds_x4 --video vsr_src.mp4 --frame_num 2 --device cpu

+# GPU inference

+python infer.py --model PP-MSVSR_reds_x4 --video vsr_src.mp4 --frame_num 2 --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --model PP-MSVSR_reds_x4 --video vsr_src.mp4 --frame_num 2 --device gpu --use_trt True

+```

+

+The visualized results after the run completes are shown below:

+

+

+

+

+

\ No newline at end of file

diff --git a/modelcenter/PP-OCRv2/fastdeploy_en.md b/modelcenter/PP-OCRv2/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..bc1cc60e1a0b99acb1867781bbaa2e957b899441

--- /dev/null

+++ b/modelcenter/PP-OCRv2/fastdeploy_en.md

@@ -0,0 +1,45 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to demonstrate high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and the GPU environment is assumed to be ready (e.g. CUDA >= 11.2). To deploy AI models on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/ocr/PP-OCRv2/python/

+

+

+# download model, image and dictionary files

+wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_det_infer.tar

+tar -xvf ch_PP-OCRv2_det_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar

+tar -xvf ch_ppocr_mobile_v2.0_cls_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_rec_infer.tar

+tar -xvf ch_PP-OCRv2_rec_infer.tar

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/doc/imgs/12.jpg

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_keys_v1.txt

+

+

+# CPU deployment

+python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device cpu

+# GPU deployment

+python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time; please be patient)

+python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

+```

+

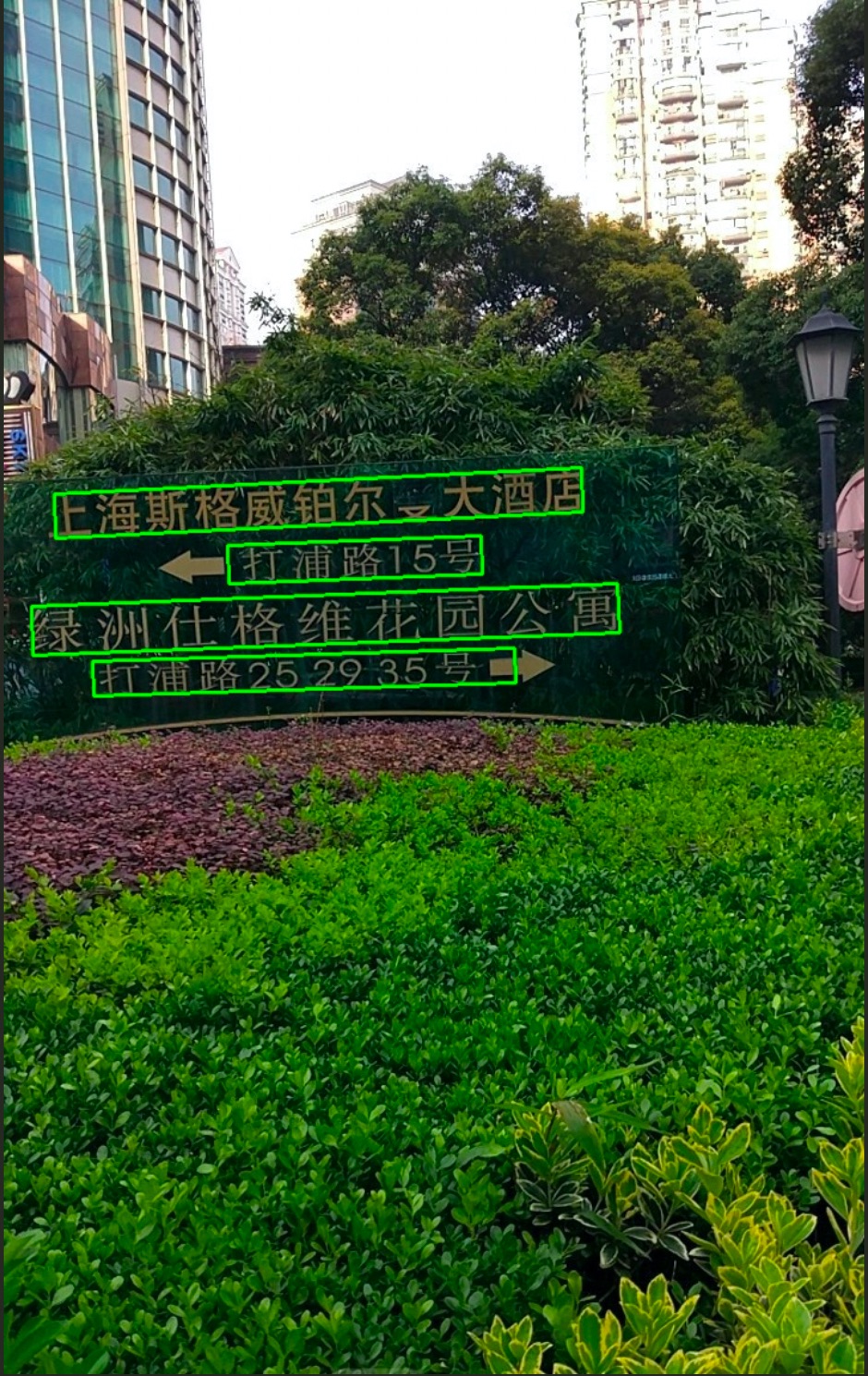

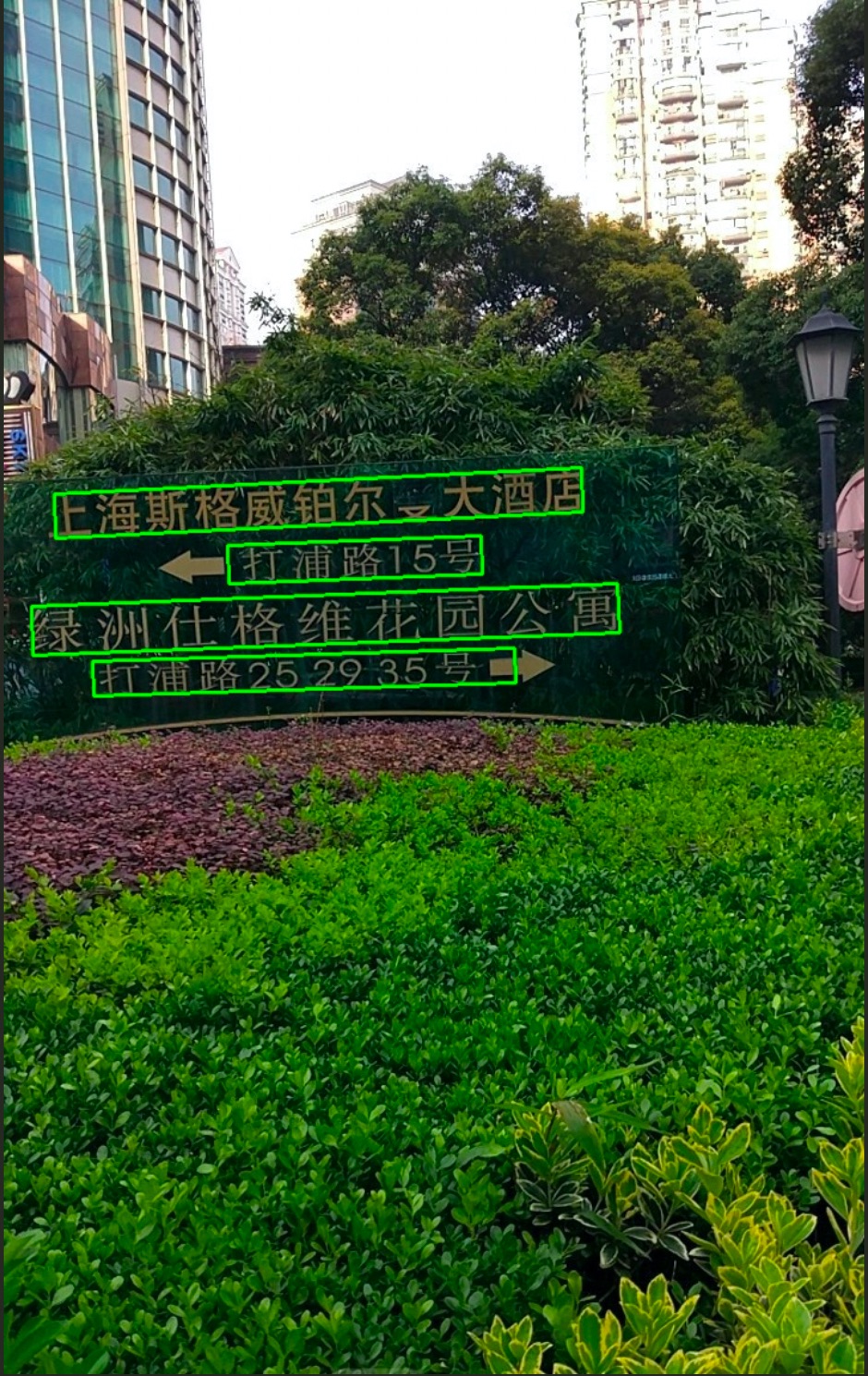

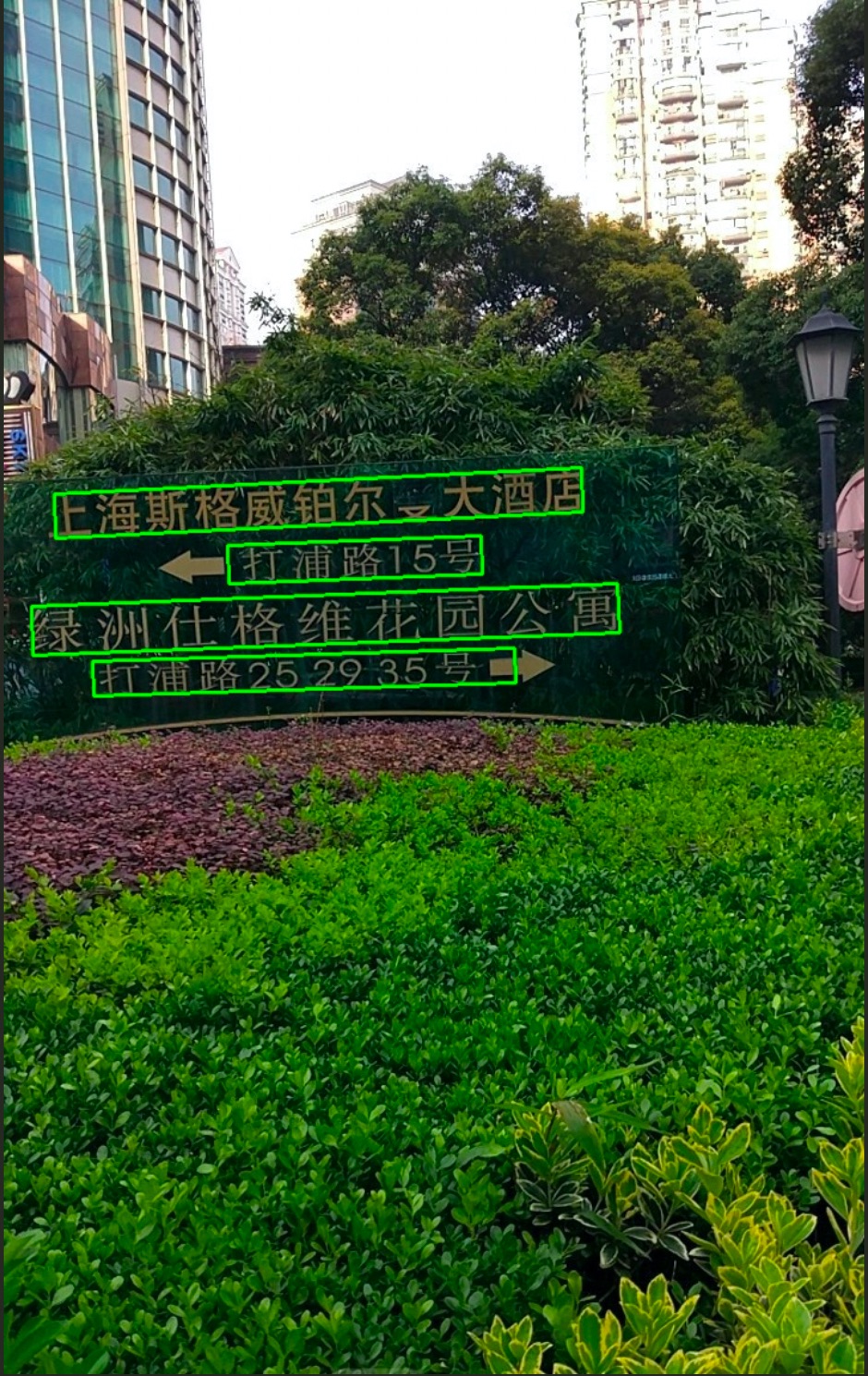

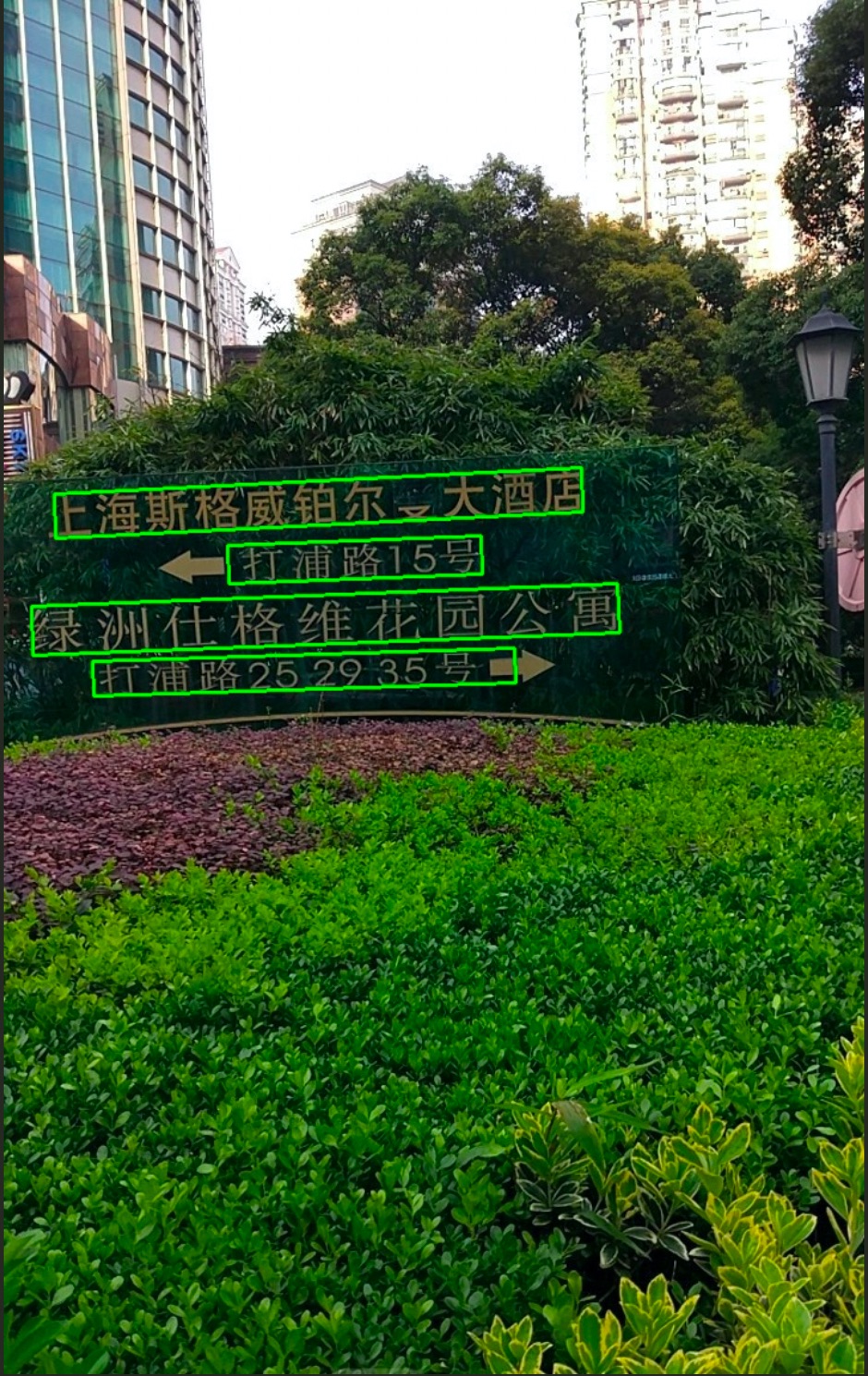

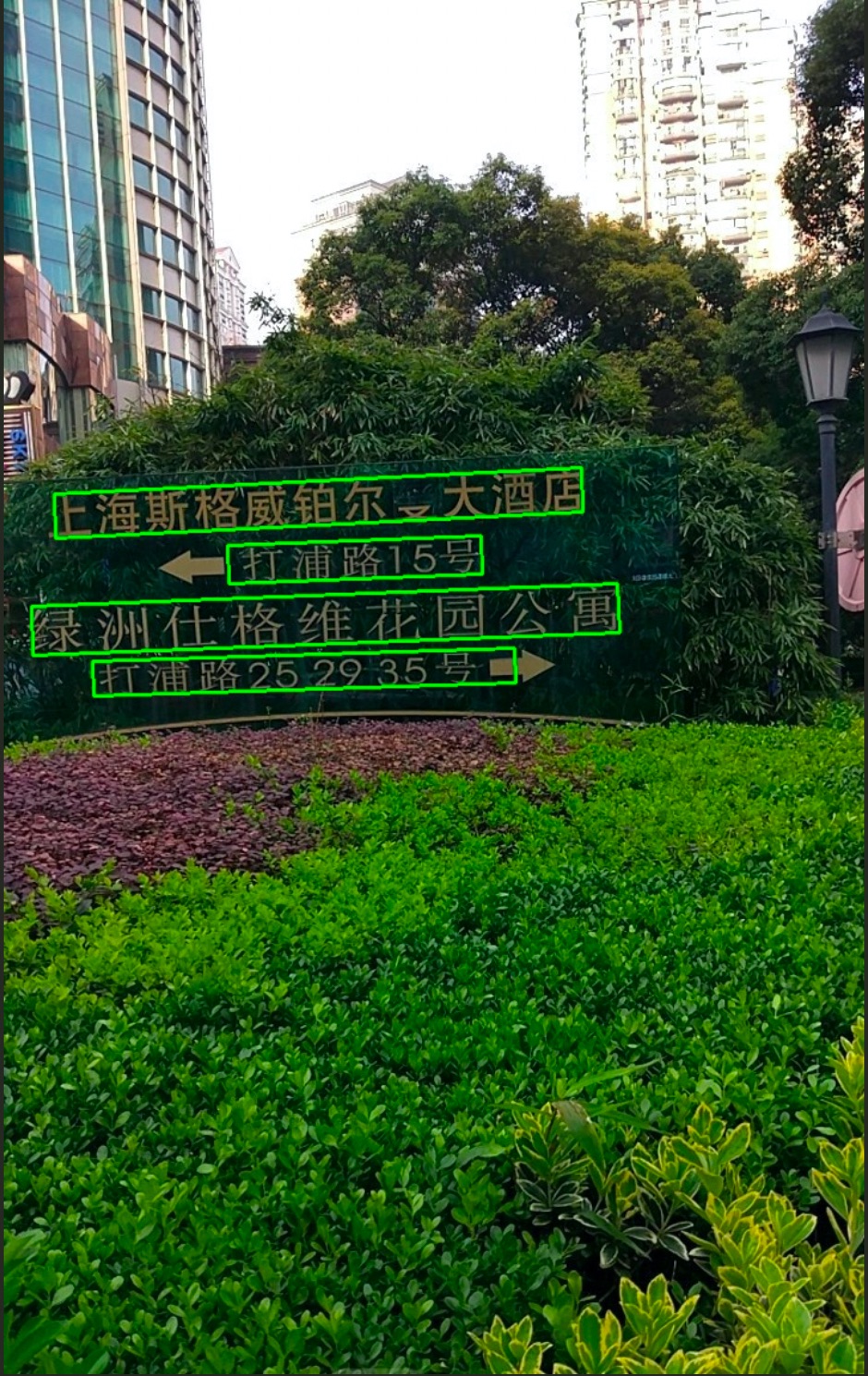

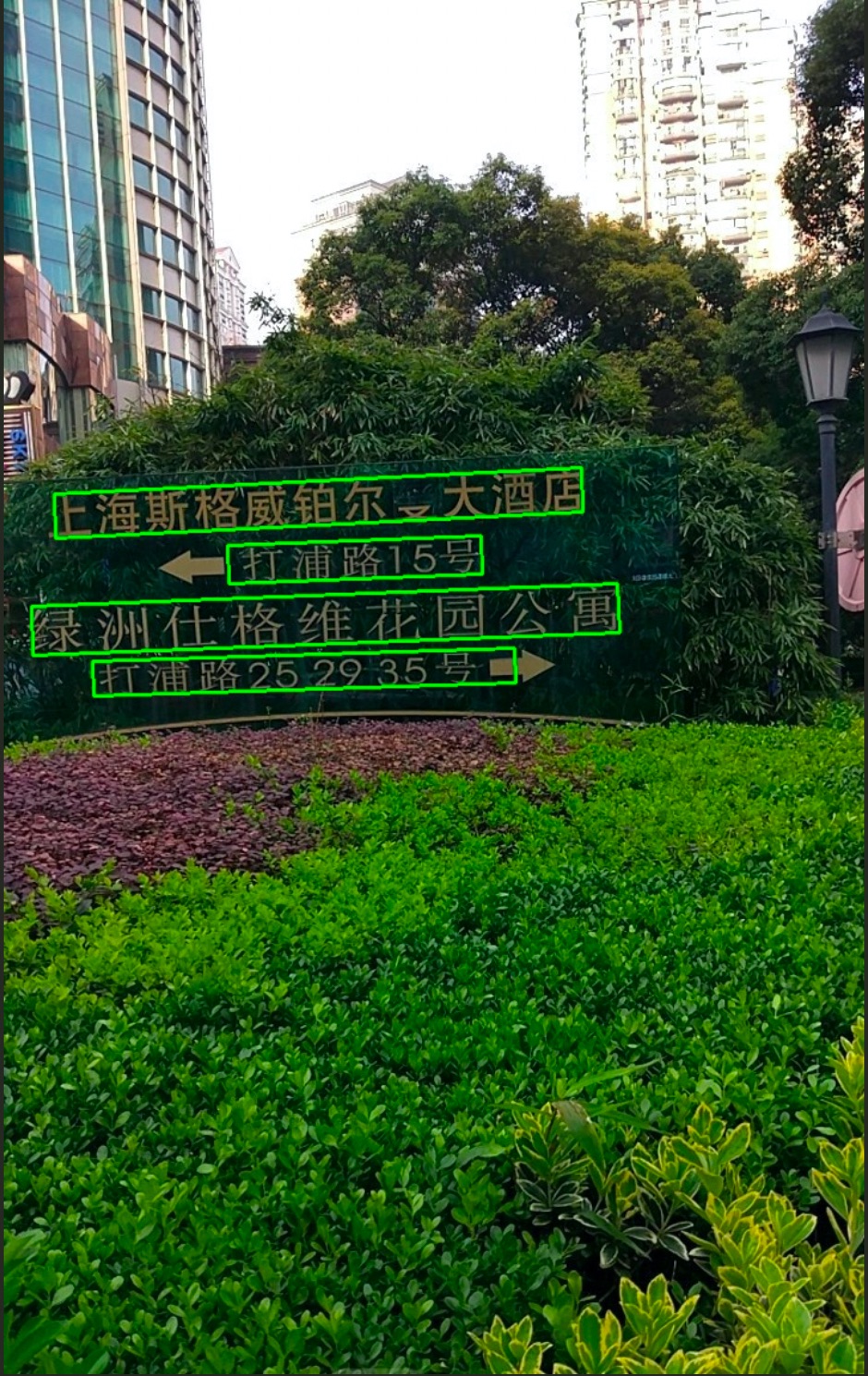

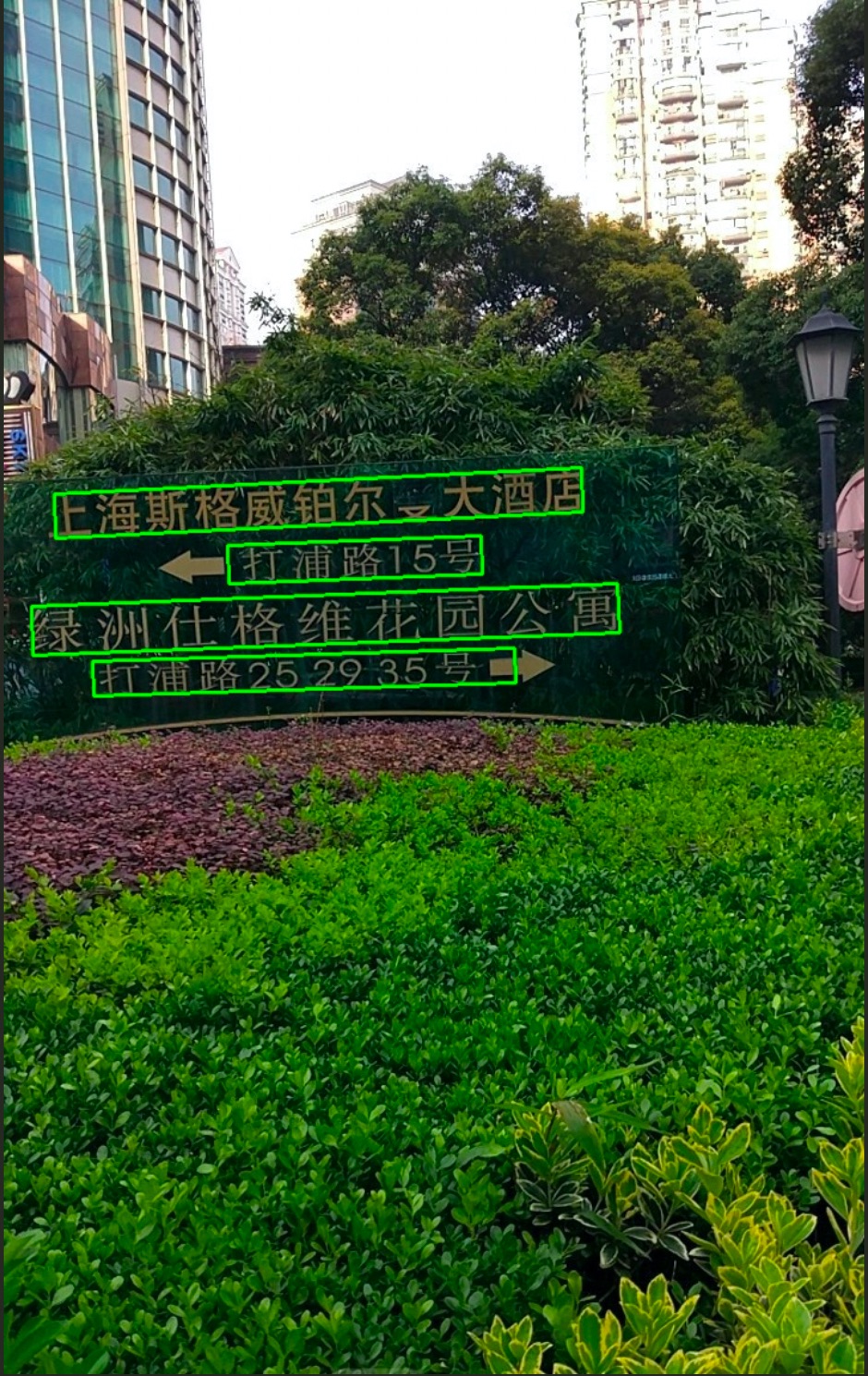

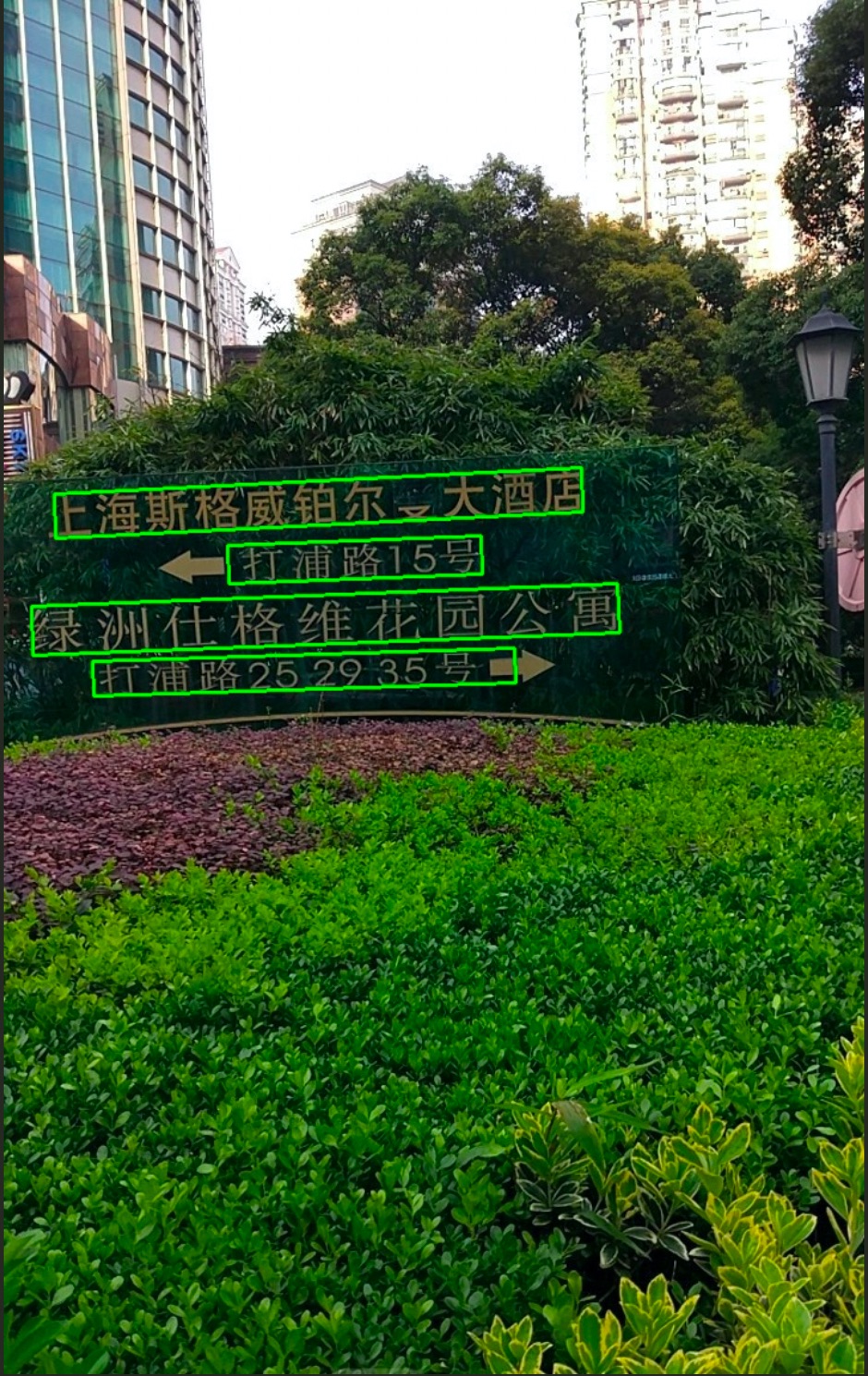

+The visualized results after the run completes are shown below:

+

\ No newline at end of file
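
The OCR example needs three model directories, a label file, and a test image to sit in the directory where `infer.py` runs, so a preflight check can catch a download that landed in the wrong place. The sketch below assumes the file and directory names used in the commands above; `missing_inputs` and `REQUIRED_INPUTS` are hypothetical names introduced for illustration.

```python
from pathlib import Path

# Names taken from the download and inference commands in this file.
REQUIRED_INPUTS = [
    "ch_PP-OCRv2_det_infer",
    "ch_ppocr_mobile_v2.0_cls_infer",
    "ch_PP-OCRv2_rec_infer",
    "ppocr_keys_v1.txt",
    "12.jpg",
]


def missing_inputs(paths=REQUIRED_INPUTS, base="."):
    """Return the entries of `paths` that do not exist under `base`."""
    return [name for name in paths if not (Path(base) / name).exists()]
```

Calling `missing_inputs()` from the example directory before running `infer.py` returns an empty list when everything is in place.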

diff --git a/modelcenter/PP-OCRv2/fastdeploy_en.md b/modelcenter/PP-OCRv2/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..bc1cc60e1a0b99acb1867781bbaa2e957b899441

--- /dev/null

+++ b/modelcenter/PP-OCRv2/fastdeploy_en.md

@@ -0,0 +1,45 @@

+## 0. FastDeploy

+

+FastDeploy is an Easy-to-use and High Performance AI model deployment toolkit for Cloud, Mobile and Edge with out-of-the-box and unified experience, end-to-end optimization for over 150+ Text, Vision, Speech and Cross-modal AI models. FastDeploy Supports AI model deployment on

+**X86 CPU、NVIDIA GPU、ARM CPU、XPU、NPU、IPU** etc. You can switch different inference backends and hardware with a single line of code.

+

+Deploying AI model in 3 steps with FastDeploy: (1)Install FastDeploy SDK; (2)Use FastDeploy's API to implement the deployment code; (3) Deploy.

+

+**Notes** : This document downloads FastDeploy examples to complete the high performance deployment experience; only X86 CPUs, NVIDIA GPUs are shown for reasoning and GPU environments are ready by default (e.g. CUDA >= 11.2, etc.), if you need to deploy AI model on other hardware or learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download model, image and dictionary files

+wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_det_infer.tar

+tar -xvf ch_PP-OCRv2_det_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar

+tar -xvf ch_ppocr_mobile_v2.0_cls_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/PP-OCRv2/chinese/ch_PP-OCRv2_rec_infer.tar

+tar -xvf ch_PP-OCRv2_rec_infer.tar

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/doc/imgs/12.jpg

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_keys_v1.txt

+

+

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/ocr/PP-OCRv2/python/

+

+

+# CPU deployment

+python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device cpu

+# GPU deployment

+python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time, so please be patient)

+python infer.py --det_model ch_PP-OCRv2_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv2_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

+```

+

+The visualized result is shown below:

+ \ No newline at end of file
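
As a side note on the PP-OCRv2 example above: the `wget` and `tar -xvf` steps can also be scripted, which may help on machines without `wget`. The following is a minimal sketch using only the Python standard library. The helper names `archive_name`, `fetch`, and `unpack` are hypothetical and are not part of FastDeploy.

```python
import tarfile
import urllib.request
from pathlib import Path


def archive_name(url):
    """Return the file name at the end of a download URL."""
    return url.rsplit("/", 1)[-1]


def fetch(url, dest_dir="."):
    """Download url into dest_dir unless the file is already there."""
    dest = Path(dest_dir) / archive_name(url)
    if not dest.exists():
        urllib.request.urlretrieve(url, str(dest))
    return dest


def unpack(archive, dest_dir="."):
    """Extract a .tar or .tgz archive and return the member names."""
    # Mode "r" lets tarfile detect compression (plain tar, gzip, ...) itself.
    with tarfile.open(str(archive), "r") as tar:
        names = tar.getnames()
        tar.extractall(str(dest_dir))
    return names
```

Under these assumptions, calling `unpack(fetch(url))` once per model archive URL listed in the example would mirror the `wget` plus `tar -xvf` pairs; the image and dictionary files only need `fetch`.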

diff --git a/modelcenter/PP-OCRv3/fastdeploy_cn.md b/modelcenter/PP-OCRv3/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..1d6ebb9e7c38cbdabbc88dcd9c39053d6bfcce43

--- /dev/null

+++ b/modelcenter/PP-OCRv3/fastdeploy_cn.md

@@ -0,0 +1,42 @@

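+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. Supported hardware currently covers 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and you can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call FastDeploy's API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to walk through high-performance deployment. It only demonstrates inference on X86 CPUs and NVIDIA GPUs and assumes a GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or to learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).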

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the model, image, and dictionary files

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar

+tar xvf ch_PP-OCRv3_det_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar

+tar -xvf ch_ppocr_mobile_v2.0_cls_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar

+tar xvf ch_PP-OCRv3_rec_infer.tar

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/doc/imgs/12.jpg

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_keys_v1.txt

+

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/ocr/PP-OCRv3/python/

+

+# CPU inference

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device cpu

+# GPU inference

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu

+# TensorRT inference on GPU

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

+```

+

+The visualized result is shown below:

+

\ No newline at end of file

diff --git a/modelcenter/PP-OCRv3/fastdeploy_en.md b/modelcenter/PP-OCRv3/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..f86e9ac253eafff388e01c2174ba0d22dfa6683f

--- /dev/null

+++ b/modelcenter/PP-OCRv3/fastdeploy_en.md

@@ -0,0 +1,44 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploy an AI model with FastDeploy in 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to walk through high-performance deployment. It only demonstrates inference on X86 CPUs and NVIDIA GPUs and assumes a GPU environment is already set up (e.g. CUDA >= 11.2). To deploy AI models on other hardware or to learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download model, image and dictionary files

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar

+tar xvf ch_PP-OCRv3_det_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar

+tar -xvf ch_ppocr_mobile_v2.0_cls_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar

+tar xvf ch_PP-OCRv3_rec_infer.tar

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/doc/imgs/12.jpg

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_keys_v1.txt

+

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/ocr/PP-OCRv3/python/

+

+

+# CPU deployment

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device cpu

+# GPU deployment

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time, so please be patient)

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

+```

+

+The visualized result is shown below:

+

\ No newline at end of file

diff --git a/modelcenter/PP-PicoDet/fastdeploy_cn.md b/modelcenter/PP-PicoDet/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..ec134279f3762621efde3a8a28cffb1bf0e418bc

--- /dev/null

+++ b/modelcenter/PP-PicoDet/fastdeploy_cn.md

@@ -0,0 +1,30 @@

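+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. Supported hardware currently covers 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and you can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call FastDeploy's API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to walk through high-performance deployment. It only demonstrates inference on X86 CPUs and NVIDIA GPUs and assumes a GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or to learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).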

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/detection/paddledetection/python/

+

+# Download the PicoDet model files and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/picodet_l_320_coco_lcnet.tgz

+wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/000000014439.jpg

+tar xvf picodet_l_320_coco_lcnet.tgz

+

+# CPU inference

+python infer_picodet.py --model_dir picodet_l_320_coco_lcnet --image 000000014439.jpg --device cpu

+# GPU inference

+python infer_picodet.py --model_dir picodet_l_320_coco_lcnet --image 000000014439.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time, so please be patient)

+python infer_picodet.py --model_dir picodet_l_320_coco_lcnet --image 000000014439.jpg --device gpu --use_trt True

+```

\ No newline at end of file

diff --git a/modelcenter/PP-PicoDet/fastdeploy_en.md b/modelcenter/PP-PicoDet/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..71a5d21c3db78cbc1f5893045a85a21b55ce36f5

--- /dev/null

+++ b/modelcenter/PP-PicoDet/fastdeploy_en.md

@@ -0,0 +1,31 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, IPU**, and other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploy an AI model with FastDeploy in 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Note**: This document downloads the FastDeploy examples to walk through high-performance deployment. It only demonstrates inference on X86 CPUs and NVIDIA GPUs and assumes a GPU environment is already set up (e.g. CUDA >= 11.2). To deploy AI models on other hardware or to learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/detection/paddledetection/python/

+

+# download PicoDet model and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/picodet_l_320_coco_lcnet.tgz

+wget https://gitee.com/paddlepaddle/PaddleDetection/raw/release/2.4/demo/000000014439.jpg

+tar xvf picodet_l_320_coco_lcnet.tgz

+

+# CPU deployment

+python infer_picodet.py --model_dir picodet_l_320_coco_lcnet --image 000000014439.jpg --device cpu

+# GPU deployment

+python infer_picodet.py --model_dir picodet_l_320_coco_lcnet --image 000000014439.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time, so please be patient)

+python infer_picodet.py --model_dir picodet_l_320_coco_lcnet --image 000000014439.jpg --device gpu --use_trt True

+```

\ No newline at end of file
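
The PicoDet commands above select hardware and backend through `--device` and `--use_trt`. A minimal sketch of how such flags might be mapped onto a runtime option follows. It assumes the option object exposes `use_gpu()` and `use_trt_backend()`, as `fastdeploy.RuntimeOption` is understood to. `RecordingOption` is a hypothetical stand-in so the sketch runs without FastDeploy installed, and the actual `infer_picodet.py` may differ.

```python
import argparse
import ast


class RecordingOption:
    """Hypothetical stand-in for a runtime option; it only records calls."""

    def __init__(self):
        self.calls = []

    def use_gpu(self):
        self.calls.append("use_gpu")

    def use_trt_backend(self):
        self.calls.append("use_trt_backend")


def parse_arguments(argv=None):
    parser = argparse.ArgumentParser()
    parser.add_argument("--device", type=str, default="cpu",
                        choices=["cpu", "gpu"])
    # literal_eval turns the strings "True" / "False" into real booleans.
    parser.add_argument("--use_trt", type=ast.literal_eval, default=False)
    return parser.parse_args(argv)


def build_option(args, option):
    """Apply the parsed flags to the given option object and return it."""
    if args.device == "gpu":
        option.use_gpu()
    if args.use_trt:
        option.use_trt_backend()
    return option
```

With FastDeploy installed, one would presumably pass a real runtime option instead of `RecordingOption()`; the CPU case leaves the option untouched.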

diff --git a/modelcenter/PP-TinyPose/fastdeploy_cn.md b/modelcenter/PP-TinyPose/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..bcd653bd8333378807e3ac10110737fbdf5072fa

--- /dev/null

+++ b/modelcenter/PP-TinyPose/fastdeploy_cn.md

@@ -0,0 +1,34 @@

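+## 0. FastDeploy: A High-Performance AI Inference Deployment Tool for All Scenarios

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment tool. It provides an out-of-the-box deployment experience across **cloud, edge, and device**, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. Supported hardware currently covers 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and you can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy prebuilt package; (2) call FastDeploy's API to implement the deployment code; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to walk through high-performance deployment. It only demonstrates inference on X86 CPUs and NVIDIA GPUs and assumes a GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or to learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).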

+

+

+## 1. Install the FastDeploy Prebuilt Package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the Deployment Example

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/keypointdetection/tiny_pose/python

+

+# Download the PP-TinyPose model files and test image

+wget https://bj.bcebos.com/paddlehub/fastdeploy/PP_TinyPose_256x192_infer.tgz

+tar -xvf PP_TinyPose_256x192_infer.tgz

+wget https://bj.bcebos.com/paddlehub/fastdeploy/hrnet_demo.jpg

+

+# CPU inference

+python pptinypose_infer.py --tinypose_model_dir PP_TinyPose_256x192_infer --image hrnet_demo.jpg --device cpu

+# GPU inference

+python pptinypose_infer.py --tinypose_model_dir PP_TinyPose_256x192_infer --image hrnet_demo.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes some time, so please be patient)

+python pptinypose_infer.py --tinypose_model_dir PP_TinyPose_256x192_infer --image hrnet_demo.jpg --device gpu --use_trt True

+```

+The visualized result is shown below:

+

\ No newline at end of file

diff --git a/modelcenter/PP-OCRv3/fastdeploy_cn.md b/modelcenter/PP-OCRv3/fastdeploy_cn.md

new file mode 100644

index 0000000000000000000000000000000000000000..1d6ebb9e7c38cbdabbc88dcd9c39053d6bfcce43

--- /dev/null

+++ b/modelcenter/PP-OCRv3/fastdeploy_cn.md

@@ -0,0 +1,42 @@

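+## 0. FastDeploy: an all-scenario, high-performance AI inference deployment toolkit

+FastDeploy is an **all-scenario, easy-to-use, flexible, and highly efficient** AI inference deployment toolkit. It provides an out-of-the-box **cloud, edge, and device** deployment experience, supports more than 150 Text, Vision, Speech, and cross-modal models, and delivers **end-to-end optimization and acceleration** for AI models. Supported hardware currently spans 10 categories of cloud, edge, and device hardware, including **X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, and you can switch between inference backends and hardware with a single line of code.

+

+With FastDeploy, deploying an AI model takes 3 steps: (1) install the FastDeploy prebuilt package; (2) write the deployment code with FastDeploy's API; (3) run inference.

+

+**Note**: This document downloads the FastDeploy examples to walk through high-performance deployment. It only demonstrates inference on X86 CPU and NVIDIA GPU, and assumes a GPU environment is already set up (e.g. CUDA >= 11.2). To deploy on other hardware or to learn about FastDeploy's full deployment capabilities, see the [FastDeploy GitHub repository](https://github.com/PaddlePaddle/FastDeploy).

+

+

+## 1. Install the FastDeploy prebuilt package

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run the deployment example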

+```

+# Download the deployment example code

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/ocr/PP-OCRv3/python/

+

+# Download the models, image, and dictionary file

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar

+tar -xvf ch_PP-OCRv3_det_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar

+tar -xvf ch_ppocr_mobile_v2.0_cls_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar

+tar -xvf ch_PP-OCRv3_rec_infer.tar

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/doc/imgs/12.jpg

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_keys_v1.txt

+

+# CPU inference

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device cpu

+# GPU inference

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu

+# TensorRT inference on GPU

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

+```

+

+The visualized result after the run completes is shown in the figure below

+

\ No newline at end of file
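
Because `infer.py` receives the model directories, label file, and image as relative paths, it can help to confirm they are present in the working directory before launching inference. The following is a minimal sketch under stated assumptions: `missing_inputs` is a hypothetical helper, and the per-directory file names `inference.pdmodel` and `inference.pdiparams` are an assumption about how the exported inference models are laid out, not something this README states.

```python
from pathlib import Path

# Directory and file names taken from the example commands.
MODEL_DIRS = [
    "ch_PP-OCRv3_det_infer",
    "ch_ppocr_mobile_v2.0_cls_infer",
    "ch_PP-OCRv3_rec_infer",
]
# Assumption: each extracted model directory holds these two files.
MODEL_FILES = ["inference.pdmodel", "inference.pdiparams"]
EXTRA_FILES = ["ppocr_keys_v1.txt", "12.jpg"]


def missing_inputs(workdir):
    """Return the expected inputs (relative paths) that are absent under workdir."""
    root = Path(workdir)
    expected = [root / d / f for d in MODEL_DIRS for f in MODEL_FILES]
    expected += [root / f for f in EXTRA_FILES]
    return [p.relative_to(root).as_posix() for p in expected if not p.is_file()]


if __name__ == "__main__":
    missing = missing_inputs(".")
    if missing:
        print("Missing inputs:", ", ".join(missing))
    else:
        print("All inputs found; infer.py can be run from this directory.")
```

Running the check from the directory that contains `infer.py` reports which downloads or extractions still need to happen there.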

diff --git a/modelcenter/PP-OCRv3/fastdeploy_en.md b/modelcenter/PP-OCRv3/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..f86e9ac253eafff388e01c2174ba0d22dfa6683f

--- /dev/null

+++ b/modelcenter/PP-OCRv3/fastdeploy_en.md

@@ -0,0 +1,44 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, among other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Notes**: This document downloads the FastDeploy examples to walk through high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and a GPU environment is assumed to be ready (e.g. CUDA >= 11.2). If you need to deploy AI models on other hardware or want to learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download the deployment example

+git clone https://github.com/PaddlePaddle/FastDeploy.git

+cd FastDeploy/examples/vision/ocr/PP-OCRv3/python/

+

+# download the model, image and dictionary files

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar

+tar -xvf ch_PP-OCRv3_det_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar

+tar -xvf ch_ppocr_mobile_v2.0_cls_infer.tar

+

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar

+tar -xvf ch_PP-OCRv3_rec_infer.tar

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/doc/imgs/12.jpg

+

+wget https://gitee.com/paddlepaddle/PaddleOCR/raw/release/2.6/ppocr/utils/ppocr_keys_v1.txt

+

+

+# CPU deployment

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device cpu

+# GPU deployment

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu

+# TensorRT inference on GPU (note: the first TensorRT run serializes the model, which takes a while; please be patient)

+python infer.py --det_model ch_PP-OCRv3_det_infer --cls_model ch_ppocr_mobile_v2.0_cls_infer --rec_model ch_PP-OCRv3_rec_infer --rec_label_file ppocr_keys_v1.txt --image 12.jpg --device gpu --backend trt

+```

+

+The visualized result after the run completes is shown below

+

\ No newline at end of file
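
Each model archive downloaded in this example is passed to `infer.py` by the name of the directory it unpacks into. As a rough sketch of that naming relationship, the Python snippet below derives the archive filename and directory name from each download URL. `MODEL_URLS` and `archive_and_dir` are hypothetical names introduced here, and the snippet assumes each archive unpacks into a directory named after the archive without its `.tar` suffix, which matches the names used in the commands.

```python
from pathlib import PurePosixPath
from urllib.parse import urlparse

# Download URLs from the example, keyed by the infer.py flag that uses the result.
MODEL_URLS = {
    "--det_model": "https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar",
    "--cls_model": "https://paddleocr.bj.bcebos.com/dygraph_v2.0/ch/ch_ppocr_mobile_v2.0_cls_infer.tar",
    "--rec_model": "https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar",
}


def archive_and_dir(url):
    """Return (archive filename, directory name) for a model URL.

    Assumption: the archive unpacks into a directory named after the
    archive with the trailing .tar removed.
    """
    archive = PurePosixPath(urlparse(url).path).name
    return archive, PurePosixPath(archive).stem


if __name__ == "__main__":
    for flag, url in MODEL_URLS.items():
        archive, directory = archive_and_dir(url)
        print(f"{archive} -> {flag} {directory}")
```

Only the final `.tar` suffix is stripped, so the dot inside `v2.0` in the classifier model name is preserved.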

diff --git a/modelcenter/PP-OCRv3/fastdeploy_en.md b/modelcenter/PP-OCRv3/fastdeploy_en.md

new file mode 100644

index 0000000000000000000000000000000000000000..f86e9ac253eafff388e01c2174ba0d22dfa6683f

--- /dev/null

+++ b/modelcenter/PP-OCRv3/fastdeploy_en.md

@@ -0,0 +1,44 @@

+## 0. FastDeploy

+

+FastDeploy is an easy-to-use, high-performance AI model deployment toolkit for cloud, mobile, and edge. It offers an out-of-the-box, unified experience and end-to-end optimization for more than 150 Text, Vision, Speech, and cross-modal AI models. FastDeploy supports AI model deployment on

+**X86 CPU, NVIDIA GPU, ARM CPU, XPU, NPU, and IPU**, among other hardware. You can switch between inference backends and hardware with a single line of code.

+

+Deploying an AI model with FastDeploy takes 3 steps: (1) install the FastDeploy SDK; (2) use FastDeploy's API to implement the deployment code; (3) deploy.

+

+**Notes**: This document downloads the FastDeploy examples to walk through high-performance deployment. Only inference on X86 CPUs and NVIDIA GPUs is shown, and a GPU environment is assumed to be ready (e.g. CUDA >= 11.2). If you need to deploy AI models on other hardware or want to learn about FastDeploy's full capabilities, please refer to [FastDeploy GitHub](https://github.com/PaddlePaddle/FastDeploy).

+

+## 1. Install FastDeploy SDK

+```

+pip install fastdeploy-gpu-python==0.0.0 -f https://www.paddlepaddle.org.cn/whl/fastdeploy_nightly_build.html

+```

+## 2. Run Deployment Example

+```

+# download model, image and dictionary files

+wget https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar

+tar xvf ch_PP-OCRv3_det_infer.tar

+