fix conflict

Showing

cyclegan/README.md

0 → 100644

cyclegan/__init__.py

0 → 100644

cyclegan/check.py

0 → 100644

cyclegan/cyclegan.py

0 → 100644

cyclegan/data.py

0 → 100644

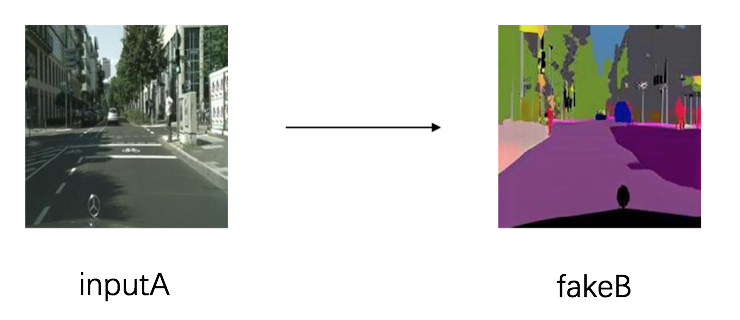

cyclegan/image/A2B.png

0 → 100644

154.5 KB

cyclegan/image/B2A.png

0 → 100644

143.2 KB

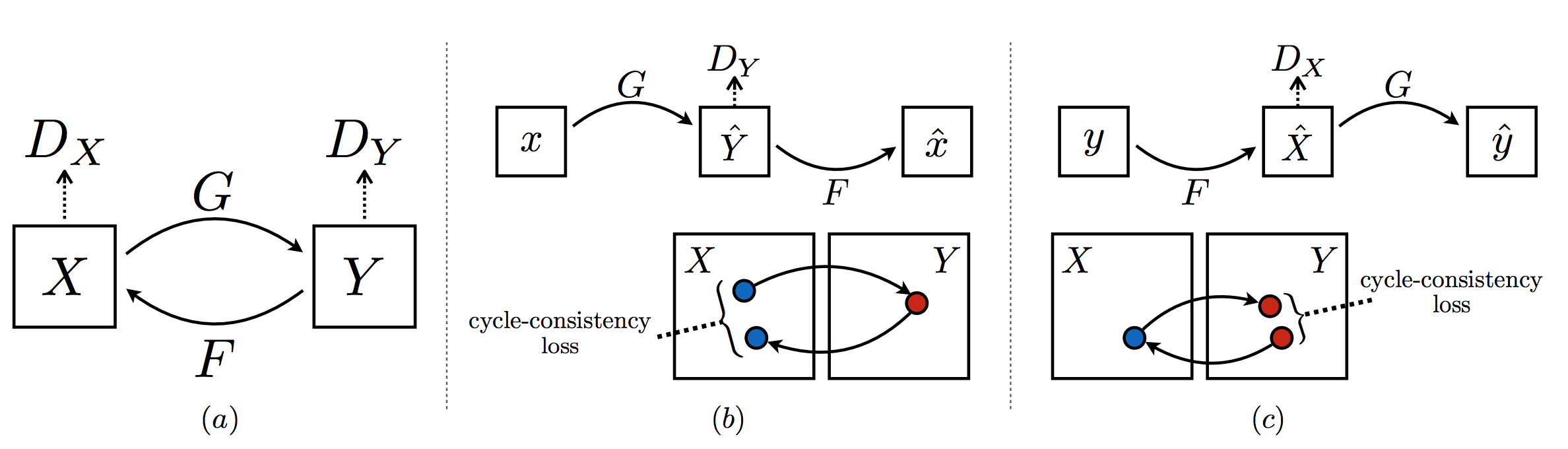

cyclegan/image/net.png

0 → 100644

152.5 KB

cyclegan/image/testA/123_A.jpg

0 → 100644

33.4 KB

cyclegan/image/testB/78_B.jpg

0 → 100644

24.8 KB

cyclegan/infer.py

0 → 100644

cyclegan/layers.py

0 → 100644

cyclegan/test.py

0 → 100644

cyclegan/train.py

0 → 100644

lac.py

0 → 100644

models/darknet.py

0 → 100755

models/yolov3.py

0 → 100644

text.py

0 → 100644

transformer/README.md

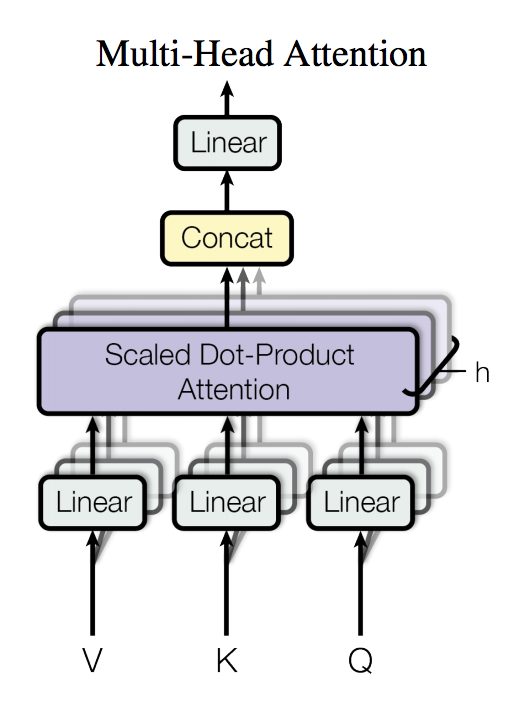

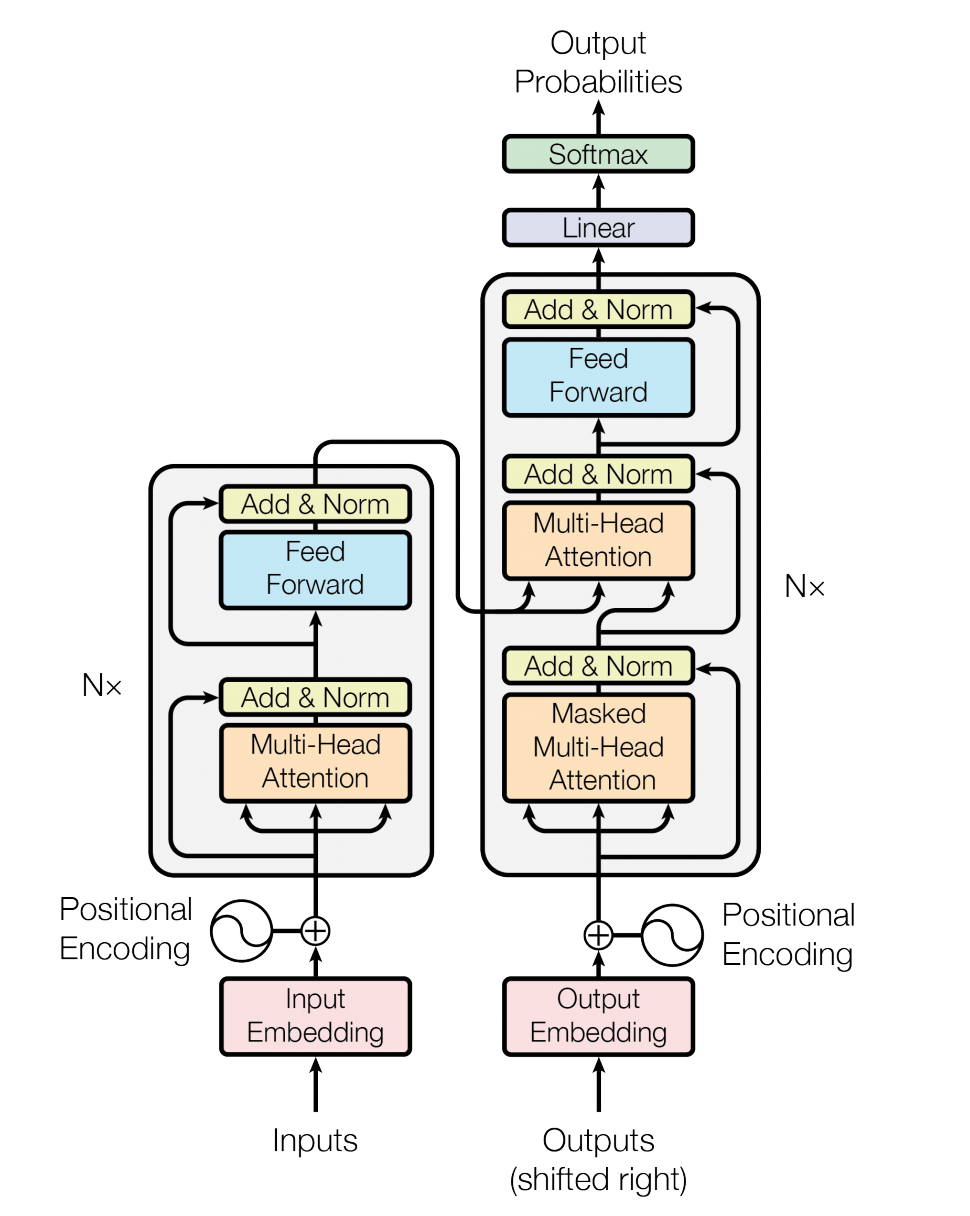

0 → 100644

104.5 KB

259.1 KB

transformer/predict.py

0 → 100644

transformer/reader.py

0 → 100644

transformer/run.sh

0 → 100644

transformer/train.py

0 → 100644

transformer/transformer.py

0 → 100644

transformer/transformer.yaml

0 → 100644

transformer/utils/__init__.py

0 → 100644

transformer/utils/check.py

0 → 100644

transformer/utils/configure.py

0 → 100644

yolov3.py

已删除

100644 → 0

yolov3/README.md

0 → 100644

yolov3/coco.py

0 → 100644

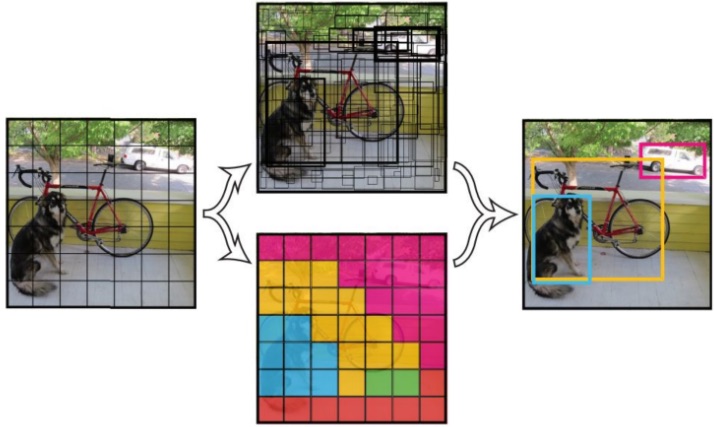

yolov3/image/YOLOv3.jpg

0 → 100644

68.4 KB

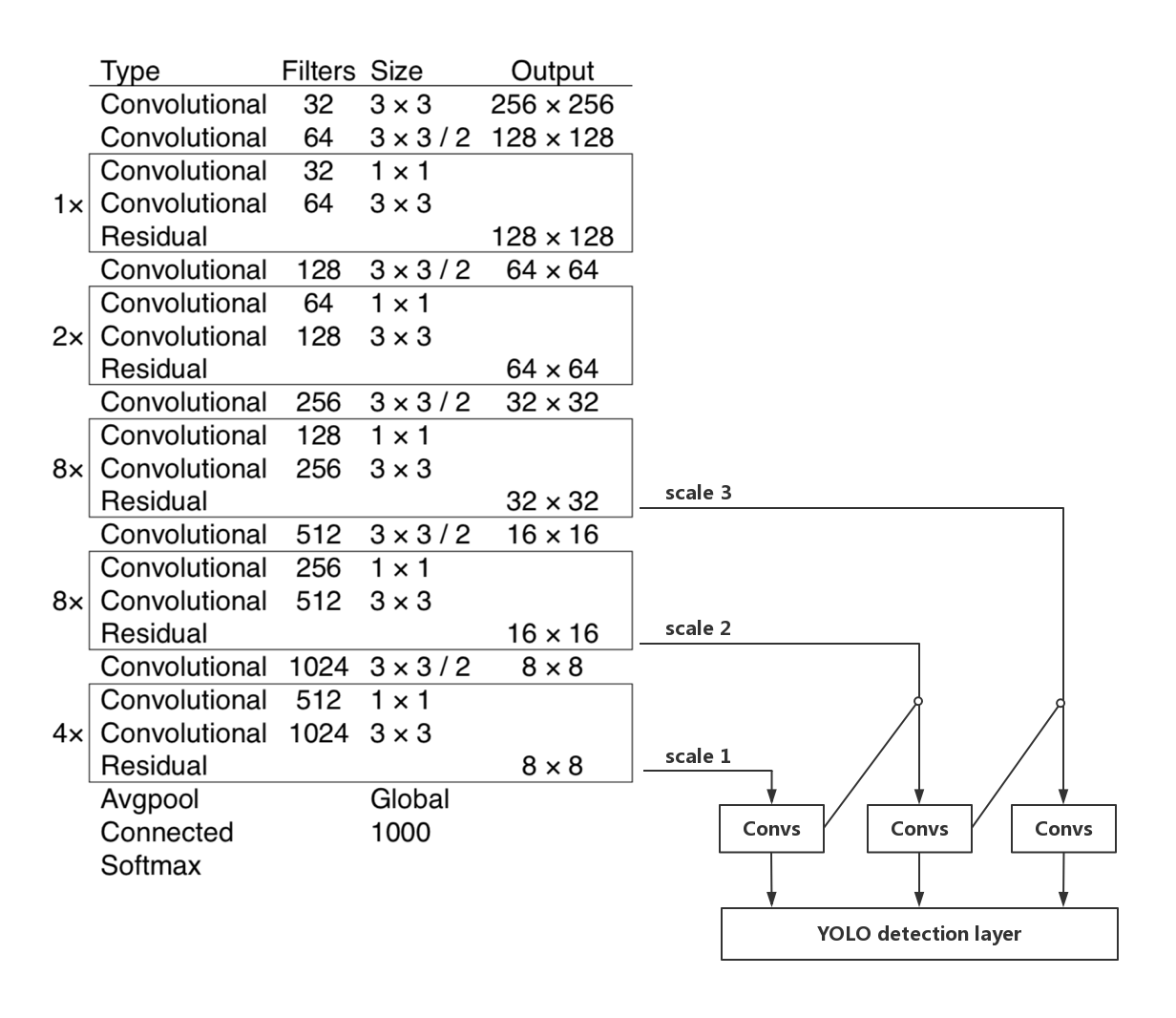

yolov3/image/YOLOv3_structure.jpg

0 → 100644

288.4 KB

yolov3/image/dog.jpg

0 → 100644

159.9 KB

yolov3/infer.py

0 → 100644

yolov3/main.py

0 → 100644

yolov3/transforms.py

0 → 100644

yolov3/visualizer.py

0 → 100644