diff --git a/README.md b/README.md

index 26ecef77dc7206e4ada4b89ac863a935222c8c07..95326fa08cc5c55a8b9932146a35a1cba800b715 100644

--- a/README.md

+++ b/README.md

@@ -3,10 +3,8 @@ English | [简体中文](README_cn.md)

## Introduction

PaddleOCR aims to create rich, leading, and practical OCR tools that help users train better models and apply them into practice.

-**Live stream on coming day**: July 21, 2020 at 8 pm BiliBili station live stream

-

**Recent updates**

-

+- 2020.7.23, Release the playback and slides (PPT) of the BiliBili live class, PaddleOCR Introduction, [address](https://aistudio.baidu.com/aistudio/course/introduce/1519)

- 2020.7.15, Add mobile App demo , support both iOS and Android ( based on easyedge and Paddle Lite)

- 2020.7.15, Improve the deployment ability, add the C + + inference , serving deployment. In addtion, the benchmarks of the ultra-lightweight OCR model are provided.

- 2020.7.15, Add several related datasets, data annotation and synthesis tools.

diff --git a/README_cn.md b/README_cn.md

index 1594b6b25db9e8ccebf81ab4f164d9cbcaf97ca9..47bddd5d6793b97a63eb037860bf59aac4dd0ed9 100644

--- a/README_cn.md

+++ b/README_cn.md

@@ -3,9 +3,8 @@

## 简介

PaddleOCR旨在打造一套丰富、领先、且实用的OCR工具库,助力使用者训练出更好的模型,并应用落地。

-**直播预告:2020年7月21日晚8点B站直播,PaddleOCR开源大礼包全面解读,直播地址当天更新**

-

**近期更新**

+- 2020.7.23 发布7月21日B站直播课回放和PPT,PaddleOCR开源大礼包全面解读,[获取地址](https://aistudio.baidu.com/aistudio/course/introduce/1519)

- 2020.7.15 添加基于EasyEdge和Paddle-Lite的移动端DEMO,支持iOS和Android系统

- 2020.7.15 完善预测部署,添加基于C++预测引擎推理、服务化部署和端侧部署方案,以及超轻量级中文OCR模型预测耗时Benchmark

- 2020.7.15 整理OCR相关数据集、常用数据标注以及合成工具

diff --git a/deploy/android_demo/app/src/main/AndroidManifest.xml b/deploy/android_demo/app/src/main/AndroidManifest.xml

index ff1900d637a827998c4da52b9a2dda51b8ae89c8..b7c1d8b51bafb1ddc3c927eb2410d32a8bc55593 100644

--- a/deploy/android_demo/app/src/main/AndroidManifest.xml

+++ b/deploy/android_demo/app/src/main/AndroidManifest.xml

@@ -25,6 +25,15 @@

android:name="com.baidu.paddle.lite.demo.ocr.SettingsActivity"

android:label="Settings">

+

+

+

\ No newline at end of file

diff --git a/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/MainActivity.java b/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/MainActivity.java

index b72d72df47a3c6d769559230185c50823276fe85..716e7bddbc63d7787d79913f0ba8faf795a4bfe6 100644

--- a/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/MainActivity.java

+++ b/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/MainActivity.java

@@ -3,14 +3,17 @@ package com.baidu.paddle.lite.demo.ocr;

import android.Manifest;

import android.app.ProgressDialog;

import android.content.ContentResolver;

+import android.content.Context;

import android.content.Intent;

import android.content.SharedPreferences;

import android.content.pm.PackageManager;

import android.database.Cursor;

import android.graphics.Bitmap;

import android.graphics.BitmapFactory;

+import android.media.ExifInterface;

import android.net.Uri;

import android.os.Bundle;

+import android.os.Environment;

import android.os.Handler;

import android.os.HandlerThread;

import android.os.Message;

@@ -19,6 +22,7 @@ import android.provider.MediaStore;

import android.support.annotation.NonNull;

import android.support.v4.app.ActivityCompat;

import android.support.v4.content.ContextCompat;

+import android.support.v4.content.FileProvider;

import android.support.v7.app.AppCompatActivity;

import android.text.method.ScrollingMovementMethod;

import android.util.Log;

@@ -32,6 +36,8 @@ import android.widget.Toast;

import java.io.File;

import java.io.IOException;

import java.io.InputStream;

+import java.text.SimpleDateFormat;

+import java.util.Date;

public class MainActivity extends AppCompatActivity {

private static final String TAG = MainActivity.class.getSimpleName();

@@ -69,6 +75,7 @@ public class MainActivity extends AppCompatActivity {

protected float[] inputMean = new float[]{};

protected float[] inputStd = new float[]{};

protected float scoreThreshold = 0.1f;

+ private String currentPhotoPath;

protected Predictor predictor = new Predictor();

@@ -368,18 +375,56 @@ public class MainActivity extends AppCompatActivity {

}

private void takePhoto() {

- Intent takePhotoIntent = new Intent(MediaStore.ACTION_IMAGE_CAPTURE);

- if (takePhotoIntent.resolveActivity(getPackageManager()) != null) {

- startActivityForResult(takePhotoIntent, TAKE_PHOTO_REQUEST_CODE);

+ Intent takePictureIntent = new Intent(MediaStore.ACTION_IMAGE_CAPTURE);

+ // Ensure that there's a camera activity to handle the intent

+ if (takePictureIntent.resolveActivity(getPackageManager()) != null) {

+ // Create the File where the photo should go

+ File photoFile = null;

+ try {

+ photoFile = createImageFile();

+ } catch (IOException ex) {

+ Log.e(TAG, ex.getMessage(), ex);

+ Toast.makeText(MainActivity.this,

+ "Create Camera temp file failed: " + ex.getMessage(), Toast.LENGTH_SHORT).show();

+ }

+ // Continue only if the File was successfully created

+ if (photoFile != null) {

+ Log.i(TAG, "FILEPATH " + getExternalFilesDir("Pictures").getAbsolutePath());

+ Uri photoURI = FileProvider.getUriForFile(this,

+ "com.baidu.paddle.lite.demo.ocr.fileprovider",

+ photoFile);

+ currentPhotoPath = photoFile.getAbsolutePath();

+ takePictureIntent.putExtra(MediaStore.EXTRA_OUTPUT, photoURI);

+ startActivityForResult(takePictureIntent, TAKE_PHOTO_REQUEST_CODE);

+ Log.i(TAG, "startActivityForResult finished");

+ }

}

+

+ }

+

+ private File createImageFile() throws IOException {

+ // Create an image file name

+ String timeStamp = new SimpleDateFormat("yyyyMMdd_HHmmss").format(new Date());

+ String imageFileName = "JPEG_" + timeStamp + "_";

+ File storageDir = getExternalFilesDir(Environment.DIRECTORY_PICTURES);

+ File image = File.createTempFile(

+ imageFileName, /* prefix */

+ ".bmp", /* suffix */

+ storageDir /* directory */

+ );

+

+ return image;

}

@Override

protected void onActivityResult(int requestCode, int resultCode, Intent data) {

super.onActivityResult(requestCode, resultCode, data);

- if (resultCode == RESULT_OK && data != null) {

+ if (resultCode == RESULT_OK) {

switch (requestCode) {

case OPEN_GALLERY_REQUEST_CODE:

+ if (data == null) {

+ break;

+ }

try {

ContentResolver resolver = getContentResolver();

Uri uri = data.getData();

@@ -393,9 +438,22 @@ public class MainActivity extends AppCompatActivity {

}

break;

case TAKE_PHOTO_REQUEST_CODE:

- Bundle extras = data.getExtras();

- Bitmap image = (Bitmap) extras.get("data");

- onImageChanged(image);

+ if (currentPhotoPath != null) {

+ int orientation = ExifInterface.ORIENTATION_UNDEFINED;

+ try {

+ ExifInterface exif = new ExifInterface(currentPhotoPath);

+ orientation = exif.getAttributeInt(ExifInterface.TAG_ORIENTATION,

+ ExifInterface.ORIENTATION_UNDEFINED);

+ } catch (IOException e) {

+ Log.e(TAG, "Failed to read EXIF orientation", e);

+ }

+ Log.i(TAG, "rotation " + orientation);

+ Bitmap image = BitmapFactory.decodeFile(currentPhotoPath);

+ image = Utils.rotateBitmap(image, orientation);

+ onImageChanged(image);

+ } else {

+ Log.e(TAG, "currentPhotoPath is null");

+ }

break;

default:

break;

diff --git a/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/OCRPredictorNative.java b/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/OCRPredictorNative.java

index 103d5d37aec3ddc026d48a202df17b140e3e4533..2e78a3ece96bb5e37bebcdda7ebc77060686b710 100644

--- a/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/OCRPredictorNative.java

+++ b/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/OCRPredictorNative.java

@@ -35,8 +35,8 @@ public class OCRPredictorNative {

}

- public void release(){

- if (nativePointer != 0){

+ public void release() {

+ if (nativePointer != 0) {

nativePointer = 0;

destory(nativePointer);

}

diff --git a/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/Predictor.java b/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/Predictor.java

index d491481e7b61bca8043c34a65ecb3bbf6a72487d..7543aceea0331138d1b6c7ab7b33e6d0b4a223ca 100644

--- a/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/Predictor.java

+++ b/deploy/android_demo/app/src/main/java/com/baidu/paddle/lite/demo/ocr/Predictor.java

@@ -127,12 +127,12 @@ public class Predictor {

}

public void releaseModel() {

- if (paddlePredictor != null){

+ if (paddlePredictor != null) {

paddlePredictor.release();

paddlePredictor = null;

}

isLoaded = false;

- cpuThreadNum = 4;

+ cpuThreadNum = 1;

cpuPowerMode = "LITE_POWER_HIGH";

modelPath = "";

modelName = "";

@@ -287,9 +287,7 @@ public class Predictor {

if (image == null) {

return;

}

- // Scale image to the size of input tensor

- Bitmap rgbaImage = image.copy(Bitmap.Config.ARGB_8888, true);

- this.inputImage = rgbaImage;

+ this.inputImage = image.copy(Bitmap.Config.ARGB_8888, true);

}

private ArrayList postprocess(ArrayList results) {

@@ -310,7 +308,7 @@ public class Predictor {

private void drawResults(ArrayList results) {

StringBuffer outputResultSb = new StringBuffer("");

- for (int i=0;i

+

+

+

\ No newline at end of file

diff --git a/deploy/cpp_infer/CMakeLists.txt b/deploy/cpp_infer/CMakeLists.txt

index 1415e2cb89e1d42cda4d5ee15963f513e728a0cb..466c2be8f79c11a9e6cf39631ef2dc5a2a213321 100644

--- a/deploy/cpp_infer/CMakeLists.txt

+++ b/deploy/cpp_infer/CMakeLists.txt

@@ -1,8 +1,17 @@

project(ocr_system CXX C)

+

option(WITH_MKL "Compile demo with MKL/OpenBlas support, default use MKL." ON)

option(WITH_GPU "Compile demo with GPU/CPU, default use CPU." OFF)

option(WITH_STATIC_LIB "Compile demo with static/shared library, default use static." ON)

-option(USE_TENSORRT "Compile demo with TensorRT." OFF)

+option(WITH_TENSORRT "Compile demo with TensorRT." OFF)

+

+SET(PADDLE_LIB "" CACHE PATH "Location of libraries")

+SET(OPENCV_DIR "" CACHE PATH "Location of libraries")

+SET(CUDA_LIB "" CACHE PATH "Location of libraries")

+SET(CUDNN_LIB "" CACHE PATH "Location of libraries")

+SET(TENSORRT_DIR "" CACHE PATH "Compile demo with TensorRT")

+

+set(DEMO_NAME "ocr_system")

macro(safe_set_static_flag)

@@ -15,24 +24,60 @@ macro(safe_set_static_flag)

endforeach(flag_var)

endmacro()

-set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -std=c++11 -g -fpermissive")

-set(CMAKE_STATIC_LIBRARY_PREFIX "")

-message("flags" ${CMAKE_CXX_FLAGS})

-set(CMAKE_CXX_FLAGS_RELEASE "-O3")

+if (WITH_MKL)

+ ADD_DEFINITIONS(-DUSE_MKL)

+endif()

if(NOT DEFINED PADDLE_LIB)

message(FATAL_ERROR "please set PADDLE_LIB with -DPADDLE_LIB=/path/paddle/lib")

endif()

-if(NOT DEFINED DEMO_NAME)

- message(FATAL_ERROR "please set DEMO_NAME with -DDEMO_NAME=demo_name")

+

+if(NOT DEFINED OPENCV_DIR)

+ message(FATAL_ERROR "please set OPENCV_DIR with -DOPENCV_DIR=/path/opencv")

endif()

-set(OPENCV_DIR ${OPENCV_DIR})

-find_package(OpenCV REQUIRED PATHS ${OPENCV_DIR}/share/OpenCV NO_DEFAULT_PATH)

+if (WIN32)

+ include_directories("${PADDLE_LIB}/paddle/fluid/inference")

+ include_directories("${PADDLE_LIB}/paddle/include")

+ link_directories("${PADDLE_LIB}/paddle/fluid/inference")

+ find_package(OpenCV REQUIRED PATHS ${OPENCV_DIR}/build/ NO_DEFAULT_PATH)

+

+else ()

+ find_package(OpenCV REQUIRED PATHS ${OPENCV_DIR}/share/OpenCV NO_DEFAULT_PATH)

+ include_directories("${PADDLE_LIB}/paddle/include")

+ link_directories("${PADDLE_LIB}/paddle/lib")

+endif ()

include_directories(${OpenCV_INCLUDE_DIRS})

-include_directories("${PADDLE_LIB}/paddle/include")

+if (WIN32)

+ add_definitions("/DGOOGLE_GLOG_DLL_DECL=")

+ set(CMAKE_C_FLAGS_DEBUG "${CMAKE_C_FLAGS_DEBUG} /bigobj /MTd")

+ set(CMAKE_C_FLAGS_RELEASE "${CMAKE_C_FLAGS_RELEASE} /bigobj /MT")

+ set(CMAKE_CXX_FLAGS_DEBUG "${CMAKE_CXX_FLAGS_DEBUG} /bigobj /MTd")

+ set(CMAKE_CXX_FLAGS_RELEASE "${CMAKE_CXX_FLAGS_RELEASE} /bigobj /MT")

+ if (WITH_STATIC_LIB)

+ safe_set_static_flag()

+ add_definitions(-DSTATIC_LIB)

+ endif()

+else()

+ set(CMAKE_CXX_FLAGS "${CMAKE_CXX_FLAGS} -g -O3 -std=c++11")

+ set(CMAKE_STATIC_LIBRARY_PREFIX "")

+endif()

+message("flags" ${CMAKE_CXX_FLAGS})

+

+

+if (WITH_GPU)

+ if (NOT DEFINED CUDA_LIB OR "${CUDA_LIB}" STREQUAL "")

+ message(FATAL_ERROR "please set CUDA_LIB with -DCUDA_LIB=/path/cuda-8.0/lib64")

+ endif()

+ if (NOT WIN32)

+ if (NOT DEFINED CUDNN_LIB)

+ message(FATAL_ERROR "please set CUDNN_LIB with -DCUDNN_LIB=/path/cudnn_v7.4/cuda/lib64")

+ endif()

+ endif(NOT WIN32)

+endif()

+

include_directories("${PADDLE_LIB}/third_party/install/protobuf/include")

include_directories("${PADDLE_LIB}/third_party/install/glog/include")

include_directories("${PADDLE_LIB}/third_party/install/gflags/include")

@@ -43,10 +88,12 @@ include_directories("${PADDLE_LIB}/third_party/eigen3")

include_directories("${CMAKE_SOURCE_DIR}/")

-if (USE_TENSORRT AND WITH_GPU)

- include_directories("${TENSORRT_ROOT}/include")

- link_directories("${TENSORRT_ROOT}/lib")

-endif()

+if (NOT WIN32)

+ if (WITH_TENSORRT AND WITH_GPU)

+ include_directories("${TENSORRT_DIR}/include")

+ link_directories("${TENSORRT_DIR}/lib")

+ endif()

+endif(NOT WIN32)

link_directories("${PADDLE_LIB}/third_party/install/zlib/lib")

@@ -57,17 +104,24 @@ link_directories("${PADDLE_LIB}/third_party/install/xxhash/lib")

link_directories("${PADDLE_LIB}/paddle/lib")

-AUX_SOURCE_DIRECTORY(./src SRCS)

-add_executable(${DEMO_NAME} ${SRCS})

-

if(WITH_MKL)

include_directories("${PADDLE_LIB}/third_party/install/mklml/include")

- set(MATH_LIB ${PADDLE_LIB}/third_party/install/mklml/lib/libmklml_intel${CMAKE_SHARED_LIBRARY_SUFFIX}

- ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5${CMAKE_SHARED_LIBRARY_SUFFIX})

+ if (WIN32)

+ set(MATH_LIB ${PADDLE_LIB}/third_party/install/mklml/lib/mklml.lib

+ ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5md.lib)

+ else ()

+ set(MATH_LIB ${PADDLE_LIB}/third_party/install/mklml/lib/libmklml_intel${CMAKE_SHARED_LIBRARY_SUFFIX}

+ ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5${CMAKE_SHARED_LIBRARY_SUFFIX})

+ execute_process(COMMAND cp -r ${PADDLE_LIB}/third_party/install/mklml/lib/libmklml_intel${CMAKE_SHARED_LIBRARY_SUFFIX} /usr/lib)

+ endif ()

set(MKLDNN_PATH "${PADDLE_LIB}/third_party/install/mkldnn")

if(EXISTS ${MKLDNN_PATH})

include_directories("${MKLDNN_PATH}/include")

- set(MKLDNN_LIB ${MKLDNN_PATH}/lib/libmkldnn.so.0)

+ if (WIN32)

+ set(MKLDNN_LIB ${MKLDNN_PATH}/lib/mkldnn.lib)

+ else ()

+ set(MKLDNN_LIB ${MKLDNN_PATH}/lib/libmkldnn.so.0)

+ endif ()

endif()

else()

set(MATH_LIB ${PADDLE_LIB}/third_party/install/openblas/lib/libopenblas${CMAKE_STATIC_LIBRARY_SUFFIX})

@@ -82,24 +136,66 @@ else()

${PADDLE_LIB}/paddle/lib/libpaddle_fluid${CMAKE_SHARED_LIBRARY_SUFFIX})

endif()

-set(EXTERNAL_LIB "-lrt -ldl -lpthread -lm")

+if (NOT WIN32)

+ set(DEPS ${DEPS}

+ ${MATH_LIB} ${MKLDNN_LIB}

+ glog gflags protobuf z xxhash

+ )

+ if(EXISTS "${PADDLE_LIB}/third_party/install/snappystream/lib")

+ set(DEPS ${DEPS} snappystream)

+ endif()

+ if (EXISTS "${PADDLE_LIB}/third_party/install/snappy/lib")

+ set(DEPS ${DEPS} snappy)

+ endif()

+else()

+ set(DEPS ${DEPS}

+ ${MATH_LIB} ${MKLDNN_LIB}

+ glog gflags_static libprotobuf xxhash)

+ set(DEPS ${DEPS} libcmt shlwapi)

+ if (EXISTS "${PADDLE_LIB}/third_party/install/snappy/lib")

+ set(DEPS ${DEPS} snappy)

+ endif()

+ if(EXISTS "${PADDLE_LIB}/third_party/install/snappystream/lib")

+ set(DEPS ${DEPS} snappystream)

+ endif()

+endif(NOT WIN32)

-set(DEPS ${DEPS}

- ${MATH_LIB} ${MKLDNN_LIB}

- glog gflags protobuf z xxhash

- ${EXTERNAL_LIB} ${OpenCV_LIBS})

if(WITH_GPU)

- if (USE_TENSORRT)

- set(DEPS ${DEPS}

- ${TENSORRT_ROOT}/lib/libnvinfer${CMAKE_SHARED_LIBRARY_SUFFIX})

- set(DEPS ${DEPS}

- ${TENSORRT_ROOT}/lib/libnvinfer_plugin${CMAKE_SHARED_LIBRARY_SUFFIX})

+ if(NOT WIN32)

+ if (WITH_TENSORRT)

+ set(DEPS ${DEPS} ${TENSORRT_DIR}/lib/libnvinfer${CMAKE_SHARED_LIBRARY_SUFFIX})

+ set(DEPS ${DEPS} ${TENSORRT_DIR}/lib/libnvinfer_plugin${CMAKE_SHARED_LIBRARY_SUFFIX})

+ endif()

+ set(DEPS ${DEPS} ${CUDA_LIB}/libcudart${CMAKE_SHARED_LIBRARY_SUFFIX})

+ set(DEPS ${DEPS} ${CUDNN_LIB}/libcudnn${CMAKE_SHARED_LIBRARY_SUFFIX})

+ else()

+ set(DEPS ${DEPS} ${CUDA_LIB}/cudart${CMAKE_STATIC_LIBRARY_SUFFIX} )

+ set(DEPS ${DEPS} ${CUDA_LIB}/cublas${CMAKE_STATIC_LIBRARY_SUFFIX} )

+ set(DEPS ${DEPS} ${CUDNN_LIB}/cudnn${CMAKE_STATIC_LIBRARY_SUFFIX})

endif()

- set(DEPS ${DEPS} ${CUDA_LIB}/libcudart${CMAKE_SHARED_LIBRARY_SUFFIX})

- set(DEPS ${DEPS} ${CUDA_LIB}/libcudart${CMAKE_SHARED_LIBRARY_SUFFIX} )

- set(DEPS ${DEPS} ${CUDA_LIB}/libcublas${CMAKE_SHARED_LIBRARY_SUFFIX} )

- set(DEPS ${DEPS} ${CUDNN_LIB}/libcudnn${CMAKE_SHARED_LIBRARY_SUFFIX} )

endif()

+

+if (NOT WIN32)

+ set(EXTERNAL_LIB "-ldl -lrt -lgomp -lz -lm -lpthread")

+ set(DEPS ${DEPS} ${EXTERNAL_LIB})

+endif()

+

+set(DEPS ${DEPS} ${OpenCV_LIBS})

+

+AUX_SOURCE_DIRECTORY(./src SRCS)

+add_executable(${DEMO_NAME} ${SRCS})

+

target_link_libraries(${DEMO_NAME} ${DEPS})

+

+if (WIN32 AND WITH_MKL)

+ add_custom_command(TARGET ${DEMO_NAME} POST_BUILD

+ COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/mklml.dll ./mklml.dll

+ COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5md.dll ./libiomp5md.dll

+ COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mkldnn/lib/mkldnn.dll ./mkldnn.dll

+ COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/mklml.dll ./release/mklml.dll

+ COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mklml/lib/libiomp5md.dll ./release/libiomp5md.dll

+ COMMAND ${CMAKE_COMMAND} -E copy_if_different ${PADDLE_LIB}/third_party/install/mkldnn/lib/mkldnn.dll ./release/mkldnn.dll

+ )

+endif()

\ No newline at end of file

diff --git a/deploy/cpp_infer/docs/windows_vs2019_build.md b/deploy/cpp_infer/docs/windows_vs2019_build.md

new file mode 100644

index 0000000000000000000000000000000000000000..21fbf4e0eb95ee82475164047d8051e90e9e224f

--- /dev/null

+++ b/deploy/cpp_infer/docs/windows_vs2019_build.md

@@ -0,0 +1,95 @@

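+# Visual Studio 2019 Community CMake Build Guide

+

+PaddleOCR has been tested on Windows with `Visual Studio 2019 Community`. Microsoft has supported managing cross-platform `CMake` projects directly since `Visual Studio 2017`, but stable and complete support only arrived in `2019`, so if you want to manage the build with CMake, we recommend building in a `Visual Studio 2019` environment.

+

+

+## Prerequisites

+* Visual Studio 2019

+* CUDA 9.0 / CUDA 10.0, cuDNN 7+ (required only when using the GPU version of the inference library)

+* CMake 3.0+

+

+Make sure the software listed above is installed. We use the Community edition of `VS2019`.

+

+**All examples below use `D:\projects` as the working directory.**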

+

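+### Step1: Download the PaddlePaddle C++ inference library fluid_inference

+

+The PaddlePaddle C++ inference library is provided as different prebuilt packages for different `CPU` and `CUDA` versions. Download the one that matches your setup: [C++ inference library download list](https://www.paddlepaddle.org.cn/documentation/docs/zh/develop/advanced_guide/inference_deployment/inference/windows_cpp_inference.html)

+

+After extraction, the `D:\projects\fluid_inference` directory contains: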

+```

+fluid_inference

+├── paddle # Paddle core libraries and headers

+|

+├── third_party # third-party dependencies and headers

+|

+└── version.txt # version and build information

+```

+

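+### Step2: Install and configure OpenCV

+

+1. Download OpenCV 3.4.6 for Windows from the official OpenCV website: [download link](https://sourceforge.net/projects/opencvlibrary/files/3.4.6/opencv-3.4.6-vc14_vc15.exe/download)

+2. Run the downloaded executable and extract OpenCV to a directory of your choice, e.g. `D:\projects\opencv`

+3. Configure the environment variable as follows:

+ - My Computer -> Properties -> Advanced system settings -> Environment Variables

+ - Find Path under System variables (create it if it does not exist) and double-click to edit it

+ - Click New, enter the OpenCV binary path, e.g. `D:\projects\opencv\build\x64\vc14\bin`, and save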

+

+### Step3: 使用Visual Studio 2019直接编译CMake

+

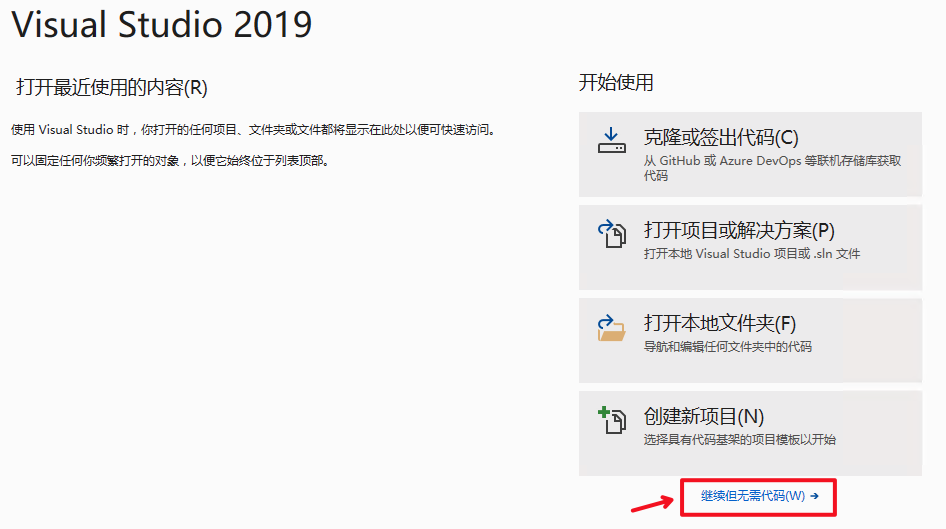

+1. 打开Visual Studio 2019 Community,点击`继续但无需代码`

+

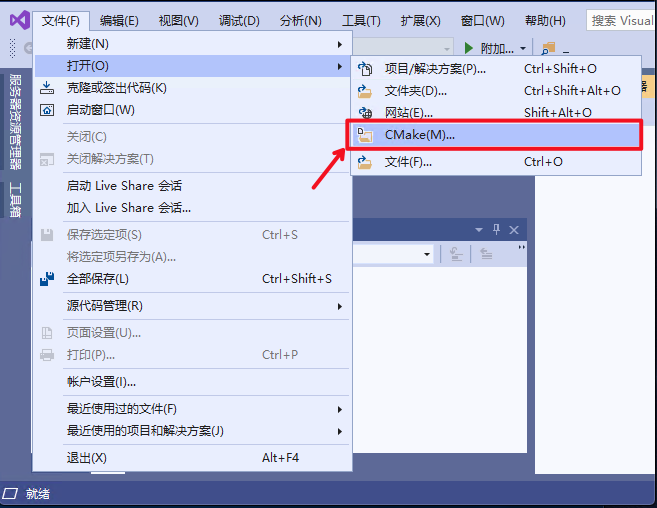

+2. 点击: `文件`->`打开`->`CMake`

+

+

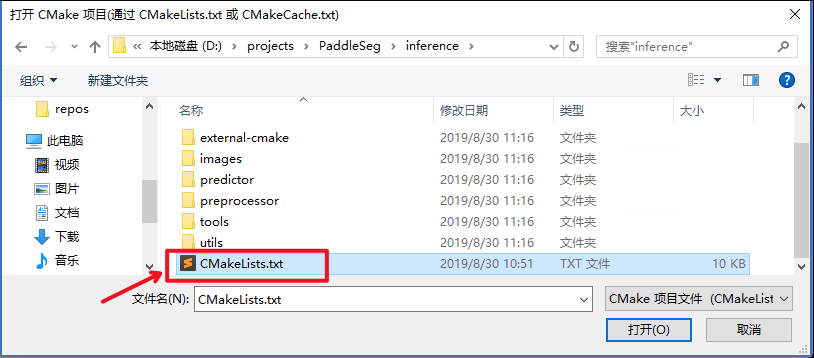

+选择项目代码所在路径,并打开`CMakeList.txt`:

+

+

+

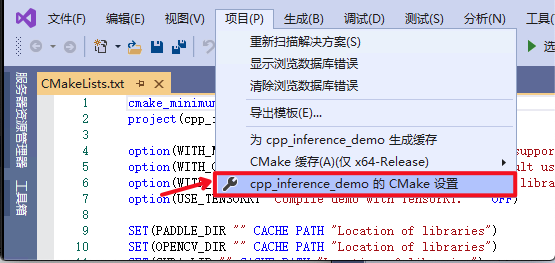

+3. 点击:`项目`->`cpp_inference_demo的CMake设置`

+

+

+

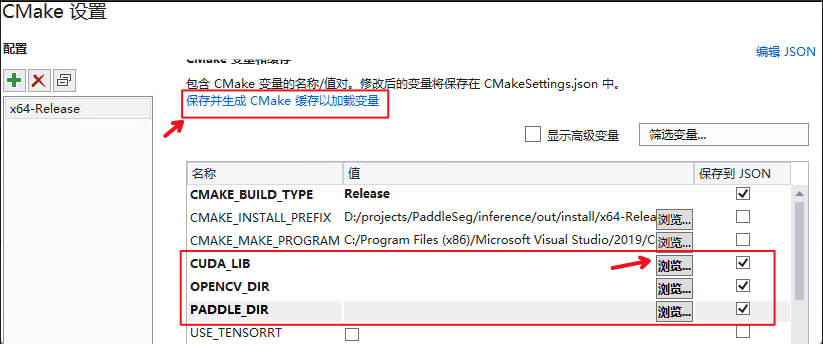

+4. 点击`浏览`,分别设置编译选项指定`CUDA`、`CUDNN_LIB`、`OpenCV`、`Paddle预测库`的路径

+

+三个编译参数的含义说明如下(带`*`表示仅在使用**GPU版本**预测库时指定, 其中CUDA库版本尽量对齐,**使用9.0、10.0版本,不使用9.2、10.1等版本CUDA库**):

+

+| 参数名 | 含义 |

+| ---- | ---- |

+| *CUDA_LIB | CUDA的库路径 |

+| *CUDNN_LIB | CUDNN的库路径 |

+| OPENCV_DIR | OpenCV的安装路径 |

+| PADDLE_LIB | Paddle预测库的路径 |

+

+**注意:**

+ 1. 使用`CPU`版预测库,请把`WITH_GPU`的勾去掉

+ 2. 如果使用的是`openblas`版本,请把`WITH_MKL`勾去掉

+

+

+

+**设置完成后**, 点击上图中`保存并生成CMake缓存以加载变量`。

+

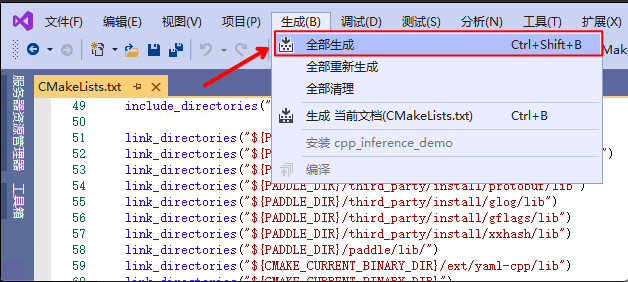

+5. 点击`生成`->`全部生成`

+

+

+

+

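+### Step4: Prediction and visualization

+

+The executable built by `Visual Studio 2019` above is located in the `out\build\x64-Release` directory. Open `cmd` and change to that directory:

+

+```

+cd D:\projects\PaddleOCR\deploy\cpp_infer\out\build\x64-Release

+```

+The executable `ocr_system.exe` is the sample prediction program. Its basic usage is:

+

+```shell

+# Run prediction on the image `D:\projects\PaddleOCR\doc\imgs\10.jpg`

+.\ocr_system.exe D:\projects\PaddleOCR\deploy\cpp_infer\tools\config.txt D:\projects\PaddleOCR\doc\imgs\10.jpg

+```

+

+The first argument is the path to the configuration file, and the second is the path to the image to run prediction on.

+

+

+### Notes

+* Running the exe in a Windows terminal may produce garbled output. If so, enter `CHCP 65001` in the terminal to switch its encoding from GBK (the default) to UTF-8. For a more detailed explanation, see this blog post: [https://blog.csdn.net/qq_35038153/article/details/78430359](https://blog.csdn.net/qq_35038153/article/details/78430359).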

diff --git a/deploy/cpp_infer/readme.md b/deploy/cpp_infer/readme.md

index 03db8c5051a9720de599e396a589f3e0c252e5e8..0b2441097fbdd0c0ea3acb7ce5a696837645443f 100644

--- a/deploy/cpp_infer/readme.md

+++ b/deploy/cpp_infer/readme.md

@@ -7,6 +7,9 @@

### 运行准备

- Linux环境,推荐使用docker。

+- Windows环境,目前支持基于`Visual Studio 2019 Community`进行编译。

+

+* 该文档主要介绍基于Linux环境的PaddleOCR C++预测流程,如果需要在Windows下基于预测库进行C++预测,具体编译方法请参考[Windows下编译教程](./docs/windows_vs2019_build.md)

### 1.1 编译opencv库

diff --git a/deploy/cpp_infer/src/config.cpp b/deploy/cpp_infer/src/config.cpp

index 228c874d193771648d0ce559f00f55865a750fee..52dfa209b049c6d47285bcba40e41de846de610f 100644

--- a/deploy/cpp_infer/src/config.cpp

+++ b/deploy/cpp_infer/src/config.cpp

@@ -44,7 +44,7 @@ Config::LoadConfig(const std::string &config_path) {

std::map dict;

for (int i = 0; i < config.size(); i++) {

// pass for empty line or comment

- if (config[i].size() <= 1 or config[i][0] == '#') {

+ if (config[i].size() <= 1 || config[i][0] == '#') {

continue;

}

std::vector res = split(config[i], " ");

diff --git a/deploy/cpp_infer/src/utility.cpp b/deploy/cpp_infer/src/utility.cpp

index ffb74c2e121fd6f11f834785c86a271c5b275a08..c1c9d9382a06432daca71eb7b08acb8b19b8ee98 100644

--- a/deploy/cpp_infer/src/utility.cpp

+++ b/deploy/cpp_infer/src/utility.cpp

@@ -39,22 +39,21 @@ std::vector Utility::ReadDict(const std::string &path) {

void Utility::VisualizeBboxes(

const cv::Mat &srcimg,

const std::vector>> &boxes) {

- cv::Point rook_points[boxes.size()][4];

- for (int n = 0; n < boxes.size(); n++) {

- for (int m = 0; m < boxes[0].size(); m++) {

- rook_points[n][m] = cv::Point(int(boxes[n][m][0]), int(boxes[n][m][1]));

- }

- }

cv::Mat img_vis;

srcimg.copyTo(img_vis);

for (int n = 0; n < boxes.size(); n++) {

- const cv::Point *ppt[1] = {rook_points[n]};

+ cv::Point rook_points[4];

+ for (int m = 0; m < boxes[n].size(); m++) {

+ rook_points[m] = cv::Point(int(boxes[n][m][0]), int(boxes[n][m][1]));

+ }

+

+ const cv::Point *ppt[1] = {rook_points};

int npt[] = {4};

cv::polylines(img_vis, ppt, npt, 1, 1, CV_RGB(0, 255, 0), 2, 8, 0);

}

cv::imwrite("./ocr_vis.png", img_vis);

- std::cout << "The detection visualized image saved in ./ocr_vis.png.pn"

+ std::cout << "The detection visualized image saved in ./ocr_vis.png"

<< std::endl;

}

diff --git a/deploy/cpp_infer/tools/build.sh b/deploy/cpp_infer/tools/build.sh

index d8344a23c7ac5b2ff083c2dadd3bbe418edc8c24..606539487fce82adf817e7a3ee300e3bf890643b 100755

--- a/deploy/cpp_infer/tools/build.sh

+++ b/deploy/cpp_infer/tools/build.sh

@@ -1,8 +1,7 @@

-

OPENCV_DIR=your_opencv_dir

LIB_DIR=your_paddle_inference_dir

CUDA_LIB_DIR=your_cuda_lib_dir

-CUDNN_LIB_DIR=/your_cudnn_lib_dir

+CUDNN_LIB_DIR=your_cudnn_lib_dir

BUILD_DIR=build

rm -rf ${BUILD_DIR}

@@ -11,7 +10,6 @@ cd ${BUILD_DIR}

cmake .. \

-DPADDLE_LIB=${LIB_DIR} \

-DWITH_MKL=ON \

- -DDEMO_NAME=ocr_system \

-DWITH_GPU=OFF \

-DWITH_STATIC_LIB=OFF \

-DUSE_TENSORRT=OFF \

diff --git a/deploy/cpp_infer/tools/config.txt b/deploy/cpp_infer/tools/config.txt

index fe7f27a0c7c3c1f680339ff716bdd9540e8dd119..a049fc7d9dfaac88e69581b7c0aad8af8a9efaab 100644

--- a/deploy/cpp_infer/tools/config.txt

+++ b/deploy/cpp_infer/tools/config.txt

@@ -15,8 +15,7 @@ det_model_dir ./inference/det_db

# rec config

rec_model_dir ./inference/rec_crnn

char_list_file ../../ppocr/utils/ppocr_keys_v1.txt

-img_path ../../doc/imgs/11.jpg

# show the detection results

-visualize 0

+visualize 1
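
Review note: `config.txt` is read line by line, and the loop in `Config::LoadConfig` skips any line whose length is at most 1 or that starts with `#`, then splits the rest on a single space. The Python sketch below restates that rule for readers who want to check a config file quickly. The diff does not show what happens to the split tokens, so storing the first token as the key and the second as the value is an assumption, and `parse_config` is a hypothetical name.

```python
def parse_config(lines):
    """Parse 'key value' lines, skipping blanks and '#' comments."""
    result = {}
    for line in lines:
        # Same filter as the C++ loop: size() <= 1 or a leading '#'.
        if len(line) <= 1 or line[0] == "#":
            continue
        parts = line.split(" ")
        # Assumption: the first token is the key, the second is the value.
        if len(parts) >= 2:
            result[parts[0]] = parts[1]
    return result


if __name__ == "__main__":
    # Sample lines taken from the config.txt hunk above.
    sample = [
        "# rec config",
        "rec_model_dir ./inference/rec_crnn",
        "",
        "# show the detection results",
        "visualize 1",
    ]
    print(parse_config(sample))
```

With this change applied, `visualize` resolves to `1`, which is the setting that makes the demo write `./ocr_vis.png`.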

diff --git a/deploy/lite/readme.md b/deploy/lite/readme.md

index 378a3eec2ebf2ffcc1e8f46c52ff75bfc607cdef..219cc83fe4487400e886e47e46cb30275ba72c14 100644

--- a/deploy/lite/readme.md

+++ b/deploy/lite/readme.md

@@ -18,7 +18,7 @@ Paddle Lite是飞桨轻量化推理引擎,为手机、IOT端提供高效推理

1. [Docker](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#docker)

2. [Linux](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#android)

3. [MAC OS](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#id13)

-4. [Windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#windows)

+4. [Windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#id4)

### 1.2 准备预测库

@@ -84,7 +84,7 @@ Paddle-Lite 提供了多种策略来自动优化原始的模型,其中包括

|模型简介|检测模型|识别模型|Paddle-Lite版本|

|-|-|-|-|

-|超轻量级中文OCR opt优化模型|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_det_mv3_db_opt.nb)|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_rec_mv3_crnn_opt.nb)|2.6.1|

+|超轻量级中文OCR opt优化模型|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_det_mv3_db_opt.nb)|[下载地址](https://paddleocr.bj.bcebos.com/deploy/lite/ch_rec_mv3_crnn_opt.nb)|develop|

如果直接使用上述表格中的模型进行部署,可略过下述步骤,直接阅读 [2.2节](#2.2与手机联调)。

diff --git a/deploy/lite/readme_en.md b/deploy/lite/readme_en.md

index a8c9f604a756b1f7d1da457dab0909244de021f9..1efbba368cedcfe276b56560082662211e2c333a 100644

--- a/deploy/lite/readme_en.md

+++ b/deploy/lite/readme_en.md

@@ -17,7 +17,7 @@ deployment solutions for end-side deployment issues.

[build for Docker](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#docker)

[build for Linux](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#android)

[build for MAC OS](https://paddle-lite.readthedocs.io/zh/latest/user_guides/source_compile.html#id13)

-[build for windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#windows)

+[build for windows](https://paddle-lite.readthedocs.io/zh/latest/demo_guides/x86.html#id4)

## 3. Download prebuild library for android and ios

@@ -155,7 +155,7 @@ demo/cxx/ocr/

|-- debug/

| |--ch_det_mv3_db_opt.nb Detection model

| |--ch_rec_mv3_crnn_opt.nb Recognition model

-| |--11.jpg image for OCR

+| |--11.jpg Image for OCR

| |--ppocr_keys_v1.txt Dictionary file

| |--libpaddle_light_api_shared.so C++ .so file

| |--config.txt Config file

diff --git a/deploy/pdserving/det_local_server.py b/deploy/pdserving/det_local_server.py

new file mode 100644

index 0000000000000000000000000000000000000000..acfccdb6d24a7e1ba490705dd147f21dbf921d31

--- /dev/null

+++ b/deploy/pdserving/det_local_server.py

@@ -0,0 +1,71 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+from paddle_serving_client import Client

+import cv2

+import sys

+import numpy as np

+import os

+from paddle_serving_client import Client

+from paddle_serving_app.reader import Sequential, ResizeByFactor

+from paddle_serving_app.reader import Div, Normalize, Transpose

+from paddle_serving_app.reader import DBPostProcess, FilterBoxes

+from paddle_serving_server_gpu.web_service import WebService

+import time

+import re

+import base64

+

+

+class OCRService(WebService):

+ def init_det(self):

+ self.det_preprocess = Sequential([

+ ResizeByFactor(32, 960), Div(255),

+ Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]), Transpose(

+ (2, 0, 1))

+ ])

+ self.filter_func = FilterBoxes(10, 10)

+ self.post_func = DBPostProcess({

+ "thresh": 0.3,

+ "box_thresh": 0.5,

+ "max_candidates": 1000,

+ "unclip_ratio": 1.5,

+ "min_size": 3

+ })

+

+ def preprocess(self, feed=[], fetch=[]):

+ data = base64.b64decode(feed[0]["image"].encode('utf8'))

+ data = np.frombuffer(data, np.uint8)

+ im = cv2.imdecode(data, cv2.IMREAD_COLOR)

+ self.ori_h, self.ori_w, _ = im.shape

+ det_img = self.det_preprocess(im)

+ _, self.new_h, self.new_w = det_img.shape

+ return {"image": det_img[np.newaxis, :].copy()}, ["concat_1.tmp_0"]

+

+ def postprocess(self, feed={}, fetch=[], fetch_map=None):

+ det_out = fetch_map["concat_1.tmp_0"]

+ ratio_list = [

+ float(self.new_h) / self.ori_h, float(self.new_w) / self.ori_w

+ ]

+ dt_boxes_list = self.post_func(det_out, [ratio_list])

+ dt_boxes = self.filter_func(dt_boxes_list[0], [self.ori_h, self.ori_w])

+ return {"dt_boxes": dt_boxes.tolist()}

+

+

+ocr_service = OCRService(name="ocr")

+ocr_service.load_model_config("ocr_det_model")

+ocr_service.set_gpus("0")

+ocr_service.prepare_server(workdir="workdir", port=9292, device="gpu", gpuid=0)

+ocr_service.init_det()

+ocr_service.run_debugger_service()

+ocr_service.run_web_service()
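
Review note: `preprocess` above expects `feed[0]["image"]` to be a base64-encoded image string. The standard-library sketch below shows how a client could build such a request body, and how the server's first decoding step reverses it. The service name `ocr` and port `9292` come from this file; the `/prediction` URL suffix and the `"fetch": ["res"]` field are assumptions that this diff does not confirm, and `build_request` and `decode_image_bytes` are hypothetical helper names.

```python
import base64
import json

# Assumed endpoint: name "ocr" and port 9292 come from the server script;
# the "/prediction" suffix is an assumption, not confirmed by the diff.
ASSUMED_URL = "http://127.0.0.1:9292/ocr/prediction"


def build_request(image_bytes):
    """Return a JSON body whose feed[0]["image"] is base64 text."""
    b64_text = base64.b64encode(image_bytes).decode("utf8")
    # The "fetch" value is a placeholder assumption; the server-side
    # preprocess() returns its own fetch list.
    return json.dumps({"feed": [{"image": b64_text}], "fetch": ["res"]})


def decode_image_bytes(body):
    """Mirror the first step of preprocess(): base64 text back to raw bytes."""
    feed = json.loads(body)["feed"]
    return base64.b64decode(feed[0]["image"].encode("utf8"))


if __name__ == "__main__":
    raw = b"\xff\xd8\xff\xe0 sample bytes, not a real JPEG"
    body = build_request(raw)
    assert decode_image_bytes(body) == raw
    print("payload round-trips:", len(body), "characters of JSON")
```

Sending `body` to the service with an HTTP client is left out on purpose so the sketch stays dependency-free.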

diff --git a/deploy/pdserving/det_web_server.py b/deploy/pdserving/det_web_server.py

new file mode 100644

index 0000000000000000000000000000000000000000..dd69be0c70eb0f4dd627aa47ad33045a204f78c0

--- /dev/null

+++ b/deploy/pdserving/det_web_server.py

@@ -0,0 +1,72 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+from paddle_serving_client import Client

+import cv2

+import sys

+import numpy as np

+import os

+from paddle_serving_client import Client

+from paddle_serving_app.reader import Sequential, ResizeByFactor

+from paddle_serving_app.reader import Div, Normalize, Transpose

+from paddle_serving_app.reader import DBPostProcess, FilterBoxes

+from paddle_serving_server_gpu.web_service import WebService

+import time

+import re

+import base64

+

+

+class OCRService(WebService):

+ def init_det(self):

+ self.det_preprocess = Sequential([

+ ResizeByFactor(32, 960), Div(255),

+ Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]), Transpose(

+ (2, 0, 1))

+ ])

+ self.filter_func = FilterBoxes(10, 10)

+ self.post_func = DBPostProcess({

+ "thresh": 0.3,

+ "box_thresh": 0.5,

+ "max_candidates": 1000,

+ "unclip_ratio": 1.5,

+ "min_size": 3

+ })

+

+ def preprocess(self, feed=[], fetch=[]):

+ data = base64.b64decode(feed[0]["image"].encode('utf8'))

+ data = np.frombuffer(data, np.uint8)

+ im = cv2.imdecode(data, cv2.IMREAD_COLOR)

+ self.ori_h, self.ori_w, _ = im.shape

+ det_img = self.det_preprocess(im)

+ _, self.new_h, self.new_w = det_img.shape

+ print(det_img)

+ return {"image": det_img}, ["concat_1.tmp_0"]

+

+ def postprocess(self, feed={}, fetch=[], fetch_map=None):

+ det_out = fetch_map["concat_1.tmp_0"]

+ ratio_list = [

+ float(self.new_h) / self.ori_h, float(self.new_w) / self.ori_w

+ ]

+ dt_boxes_list = self.post_func(det_out, [ratio_list])

+ dt_boxes = self.filter_func(dt_boxes_list[0], [self.ori_h, self.ori_w])

+ return {"dt_boxes": dt_boxes.tolist()}

+

+

+ocr_service = OCRService(name="ocr")

+ocr_service.load_model_config("ocr_det_model")

+ocr_service.set_gpus("0")

+ocr_service.prepare_server(workdir="workdir", port=9292, device="gpu", gpuid=0)

+ocr_service.init_det()

+ocr_service.run_rpc_service()

+ocr_service.run_web_service()

diff --git a/deploy/pdserving/ocr_local_server.py b/deploy/pdserving/ocr_local_server.py

new file mode 100644

index 0000000000000000000000000000000000000000..93e2d7a3d1dc64451774ecf790c2ebd3b39f1d91

--- /dev/null

+++ b/deploy/pdserving/ocr_local_server.py

@@ -0,0 +1,103 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+from paddle_serving_client import Client

+from paddle_serving_app.reader import OCRReader

+import cv2

+import sys

+import numpy as np

+import os

+from paddle_serving_client import Client

+from paddle_serving_app.reader import Sequential, URL2Image, ResizeByFactor

+from paddle_serving_app.reader import Div, Normalize, Transpose

+from paddle_serving_app.reader import DBPostProcess, FilterBoxes, GetRotateCropImage, SortedBoxes

+from paddle_serving_server_gpu.web_service import WebService

+from paddle_serving_app.local_predict import Debugger

+import time

+import re

+import base64

+

+

+class OCRService(WebService):
+    def init_det_debugger(self, det_model_config):
+        self.det_preprocess = Sequential([
+            ResizeByFactor(32, 960), Div(255),
+            Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]), Transpose(
+                (2, 0, 1))
+        ])
+        self.det_client = Debugger()
+        self.det_client.load_model_config(
+            det_model_config, gpu=True, profile=False)
+        self.ocr_reader = OCRReader()
+
+    def preprocess(self, feed=[], fetch=[]):
+        data = base64.b64decode(feed[0]["image"].encode('utf8'))
+        data = np.frombuffer(data, np.uint8)
+        im = cv2.imdecode(data, cv2.IMREAD_COLOR)
+        ori_h, ori_w, _ = im.shape
+        det_img = self.det_preprocess(im)
+        _, new_h, new_w = det_img.shape
+        det_img = det_img[np.newaxis, :]
+        det_img = det_img.copy()
+        det_out = self.det_client.predict(
+            feed={"image": det_img}, fetch=["concat_1.tmp_0"])
+        filter_func = FilterBoxes(10, 10)
+        post_func = DBPostProcess({
+            "thresh": 0.3,
+            "box_thresh": 0.5,
+            "max_candidates": 1000,
+            "unclip_ratio": 1.5,
+            "min_size": 3
+        })
+        sorted_boxes = SortedBoxes()
+        ratio_list = [float(new_h) / ori_h, float(new_w) / ori_w]
+        dt_boxes_list = post_func(det_out["concat_1.tmp_0"], [ratio_list])
+        dt_boxes = filter_func(dt_boxes_list[0], [ori_h, ori_w])
+        dt_boxes = sorted_boxes(dt_boxes)
+        get_rotate_crop_image = GetRotateCropImage()
+        img_list = []
+        max_wh_ratio = 0
+        for dtbox in dt_boxes:
+            boximg = get_rotate_crop_image(im, dtbox)
+            img_list.append(boximg)
+            h, w = boximg.shape[0:2]
+            wh_ratio = w * 1.0 / h
+            max_wh_ratio = max(max_wh_ratio, wh_ratio)
+        if len(img_list) == 0:
+            return [], []
+        _, h, w = self.ocr_reader.resize_norm_img(img_list[0],
+                                                  max_wh_ratio).shape
+        imgs = np.zeros((len(img_list), 3, h, w)).astype('float32')
+        for idx, img in enumerate(img_list):
+            norm_img = self.ocr_reader.resize_norm_img(img, max_wh_ratio)
+            imgs[idx] = norm_img
+        feed = {"image": imgs.copy()}
+        fetch = ["ctc_greedy_decoder_0.tmp_0", "softmax_0.tmp_0"]
+        return feed, fetch
+
+    def postprocess(self, feed={}, fetch=[], fetch_map=None):
+        rec_res = self.ocr_reader.postprocess(fetch_map, with_score=True)
+        res_lst = []
+        for res in rec_res:
+            res_lst.append(res[0])
+        res = {"res": res_lst}
+        return res

+

+

+ocr_service = OCRService(name="ocr")

+ocr_service.load_model_config("ocr_rec_model")

+ocr_service.prepare_server(workdir="workdir", port=9292)

+ocr_service.init_det_debugger(det_model_config="ocr_det_model")

+ocr_service.run_debugger_service(gpu=True)

+ocr_service.run_web_service()
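The batching step in `preprocess` above resizes every cropped text box against the largest width/height ratio in the batch, so that all crops fit one array. A minimal sketch of that calculation follows, using plain `(height, width)` tuples instead of images. The target height of 32 and the `int(target_h * ratio)` width rule are assumptions about what `OCRReader.resize_norm_img` does, not something this diff confirms, and `max_wh_ratio_of` and `batch_width` are hypothetical helper names.

```python
def max_wh_ratio_of(shapes):
    """Largest width/height ratio among (height, width) crop shapes."""
    ratio = 0.0
    for h, w in shapes:
        ratio = max(ratio, w * 1.0 / h)
    return ratio


def batch_width(shapes, target_h=32):
    """Common padded width for the batch (assumed rule: target_h * ratio)."""
    return int(target_h * max_wh_ratio_of(shapes))


crops = [(20, 100), (40, 120), (16, 160)]  # (height, width) of each crop
ratio = max_wh_ratio_of(crops)
width = batch_width(crops)
```

Sizing the batch from the widest crop means narrower crops are padded rather than stretched, which is why the ratio has to be known before any crop is normalized.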

diff --git a/deploy/pdserving/ocr_web_client.py b/deploy/pdserving/ocr_web_client.py

new file mode 100644

index 0000000000000000000000000000000000000000..e2a92eb8ee4aa62059be184dd7e67237ed460f13

--- /dev/null

+++ b/deploy/pdserving/ocr_web_client.py

@@ -0,0 +1,37 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+# -*- coding: utf-8 -*-

+

+import requests

+import json

+import cv2

+import base64

+import os, sys

+import time

+

+def cv2_to_base64(image):
+    # `image` holds the raw bytes of an encoded image file (not a decoded
+    # cv2 array), so it can be base64-encoded directly.
+    return base64.b64encode(image).decode('utf8')
+
+headers = {"Content-type": "application/json"}
+url = "http://127.0.0.1:9292/ocr/prediction"
+test_img_dir = "../../doc/imgs/"
+for img_file in os.listdir(test_img_dir):
+    with open(os.path.join(test_img_dir, img_file), 'rb') as file:
+        image_data1 = file.read()
+    image = cv2_to_base64(image_data1)
+    data = {"feed": [{"image": image}], "fetch": ["res"]}
+    r = requests.post(url=url, headers=headers, data=json.dumps(data))
+    print(r.json())
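The request body this client sends can be examined without a running server. The snippet below is a minimal sketch, assuming the same `feed`/`fetch` layout as the script above. `build_payload` and `decode_image_field` are hypothetical helper names and are not part of Paddle Serving.

```python
import base64
import json


def build_payload(image_bytes):
    """Build the JSON request body for one image (raw encoded file bytes)."""
    image_b64 = base64.b64encode(image_bytes).decode("utf8")
    return json.dumps({"feed": [{"image": image_b64}], "fetch": ["res"]})


def decode_image_field(payload):
    """Recover the raw image bytes the same way the server-side preprocess does."""
    feed = json.loads(payload)["feed"]
    return base64.b64decode(feed[0]["image"].encode("utf8"))


# Placeholder bytes standing in for the content of an image file.
sample = b"\xff\xd8\xff\xe0 sample bytes, not a real JPEG"
payload = build_payload(sample)
restored = decode_image_field(payload)
```

Because the bytes survive the round trip unchanged, the client can send the file content as read from disk and leave image decoding to the server.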

diff --git a/deploy/pdserving/ocr_web_server.py b/deploy/pdserving/ocr_web_server.py

new file mode 100644

index 0000000000000000000000000000000000000000..d017f6b9b560dc82158641b9f3a9f80137b40716

--- /dev/null

+++ b/deploy/pdserving/ocr_web_server.py

@@ -0,0 +1,99 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+from paddle_serving_client import Client

+from paddle_serving_app.reader import OCRReader

+import cv2

+import sys

+import numpy as np

+import os

+from paddle_serving_client import Client

+from paddle_serving_app.reader import Sequential, URL2Image, ResizeByFactor

+from paddle_serving_app.reader import Div, Normalize, Transpose

+from paddle_serving_app.reader import DBPostProcess, FilterBoxes, GetRotateCropImage, SortedBoxes

+from paddle_serving_server_gpu.web_service import WebService

+import time

+import re

+import base64

+

+

+class OCRService(WebService):
+    def init_det_client(self, det_port, det_client_config):
+        self.det_preprocess = Sequential([
+            ResizeByFactor(32, 960), Div(255),
+            Normalize([0.485, 0.456, 0.406], [0.229, 0.224, 0.225]), Transpose(
+                (2, 0, 1))
+        ])
+        self.det_client = Client()
+        self.det_client.load_client_config(det_client_config)
+        self.det_client.connect(["127.0.0.1:{}".format(det_port)])
+        self.ocr_reader = OCRReader()
+
+    def preprocess(self, feed=[], fetch=[]):
+        data = base64.b64decode(feed[0]["image"].encode('utf8'))
+        data = np.frombuffer(data, np.uint8)
+        im = cv2.imdecode(data, cv2.IMREAD_COLOR)
+        ori_h, ori_w, _ = im.shape
+        det_img = self.det_preprocess(im)
+        det_out = self.det_client.predict(
+            feed={"image": det_img}, fetch=["concat_1.tmp_0"])
+        _, new_h, new_w = det_img.shape
+        filter_func = FilterBoxes(10, 10)
+        post_func = DBPostProcess({
+            "thresh": 0.3,
+            "box_thresh": 0.5,
+            "max_candidates": 1000,
+            "unclip_ratio": 1.5,
+            "min_size": 3
+        })
+        sorted_boxes = SortedBoxes()
+        ratio_list = [float(new_h) / ori_h, float(new_w) / ori_w]
+        dt_boxes_list = post_func(det_out["concat_1.tmp_0"], [ratio_list])
+        dt_boxes = filter_func(dt_boxes_list[0], [ori_h, ori_w])
+        dt_boxes = sorted_boxes(dt_boxes)
+        get_rotate_crop_image = GetRotateCropImage()
+        feed_list = []
+        img_list = []
+        max_wh_ratio = 0
+        for dtbox in dt_boxes:
+            boximg = get_rotate_crop_image(im, dtbox)
+            img_list.append(boximg)
+            h, w = boximg.shape[0:2]
+            wh_ratio = w * 1.0 / h
+            max_wh_ratio = max(max_wh_ratio, wh_ratio)
+        for img in img_list:
+            norm_img = self.ocr_reader.resize_norm_img(img, max_wh_ratio)
+            feed = {"image": norm_img}
+            feed_list.append(feed)
+        fetch = ["ctc_greedy_decoder_0.tmp_0", "softmax_0.tmp_0"]
+        return feed_list, fetch
+
+    def postprocess(self, feed={}, fetch=[], fetch_map=None):
+        rec_res = self.ocr_reader.postprocess(fetch_map, with_score=True)
+        res_lst = []
+        for res in rec_res:
+            res_lst.append(res[0])
+        res = {"res": res_lst}
+        return res

+

+

+ocr_service = OCRService(name="ocr")

+ocr_service.load_model_config("ocr_rec_model")

+ocr_service.set_gpus("0")

+ocr_service.prepare_server(workdir="workdir", port=9292, device="gpu", gpuid=0)

+ocr_service.init_det_client(
+    det_port=9293,
+    det_client_config="ocr_det_client/serving_client_conf.prototxt")

+ocr_service.run_rpc_service()

+ocr_service.run_web_service()

diff --git a/deploy/pdserving/readme.md b/deploy/pdserving/readme.md

index 27542774d0df02e2c63484853aaffb47662ee1a3..4ec42d79bec15bb254e6436a1f0e65c3bec630e5 100644

--- a/deploy/pdserving/readme.md

+++ b/deploy/pdserving/readme.md

@@ -1,28 +1,83 @@

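-# Paddle Serving Deployment
+# Paddle Serving Deployment (Beta)
This tutorial describes the detailed steps for deploying the PaddleOCR online prediction service with [Paddle Serving](https://github.com/PaddlePaddle/Serving).
## Quick start
### 1. Prepare the environment
+First, install the Paddle Serving components.
+We recommend using a GPU to deploy the OCR service with Paddle Serving.
+
+**CUDA version: 9.0**
+**cuDNN version: 7.0**
+**Operating system: CentOS 6 or later**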

+

+```

+# Beta builds of the Paddle Serving whl packages are provided below for trial use; the official release is planned for the end of July

+wget --no-check-certificate https://paddle-serving.bj.bcebos.com/others/paddle_serving_server_gpu-0.3.2-py2-none-any.whl

+wget --no-check-certificate https://paddle-serving.bj.bcebos.com/others/paddle_serving_app-0.1.2-py2-none-any.whl

+wget --no-check-certificate https://paddle-serving.bj.bcebos.com/others/paddle_serving_client-0.3.2-cp27-none-any.whl

+python -m pip install paddle_serving_app-0.1.2-py2-none-any.whl paddle_serving_server_gpu-0.3.2-py2-none-any.whl paddle_serving_client-0.3.2-cp27-none-any.whl

+```

### 2. Convert the model
+You can use the models provided by `paddle_serving_app` by running the following commands:

+```

+python -m paddle_serving_app.package --get_model ocr_rec

+tar -xzvf ocr_rec.tar.gz

+python -m paddle_serving_app.package --get_model ocr_det

+tar -xzvf ocr_det.tar.gz

+```

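+The commands above download the `db_crnn_mobile` model. To use the larger `db_crnn_server` model, download and extract its inference model, then convert it by following [How to convert a saved Paddle inference model into a deployable Paddle Serving model](https://github.com/PaddlePaddle/Serving/blob/develop/doc/INFERENCE_TO_SERVING_CN.md).
### 3. Start the service
You can start either the `standard` or the `fast` version of the service, depending on your needs. The two are compared below:
|Version|Characteristics|Use case|
|-|-|-|
-|Standard|||
-|Fast|||
+|Standard|High stability, distributed deployment|High-throughput workloads that need deployment across data centers|
+|Fast|Easy to deploy, fast inference|Scenarios that demand fast inference and rapid iteration|
#### Option 1. Start the standard service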

+```

+python -m paddle_serving_server_gpu.serve --model ocr_det_model --port 9293 --gpu_id 0

+python ocr_web_server.py

+```

+

#### Option 2. Start the fast service

+```

+python ocr_local_server.py

+```

## Send a prediction request

+```

+python ocr_web_client.py

+```

+

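## Response format
+The service returns JSON. Printed as a parsed Python 2 dict, as `ocr_web_client.py` does, it looks like this: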

+```

+{u'result': {u'res': [u'\u571f\u5730\u6574\u6cbb\u4e0e\u571f\u58e4\u4fee\u590d\u7814\u7a76\u4e2d\u5fc3', u'\u534e\u5357\u519c\u4e1a\u5927\u5b661\u7d20\u56fe']}}

+```

+You can also print each sentence in the `res` field of the result:

+```

+土地整治与土壤修复研究中心

+华南农业大学1素图

+```
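Printing each entry of `res` might look like the sketch below. It assumes the response has the `result` → `res` layout shown above. `extract_texts` is a hypothetical helper name, and the sample dict simply mirrors the example output rather than a live response.

```python
def extract_texts(response):
    """Return the recognized text lines from a parsed response dict."""
    return response.get("result", {}).get("res", [])


# Sample response copied from the example above (no server is contacted).
response = {
    "result": {
        "res": [
            u"\u571f\u5730\u6574\u6cbb\u4e0e\u571f\u58e4\u4fee\u590d\u7814\u7a76\u4e2d\u5fc3",
            u"\u534e\u5357\u519c\u4e1a\u5927\u5b661\u7d20\u56fe",
        ]
    }
}

texts = extract_texts(response)
for line in texts:
    print(line)
```

Falling back to an empty list keeps the loop safe when a response carries no `result` field, for example when no text boxes were detected.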

+

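## Customizing the service logic
+
+In `ocr_web_server.py` and `ocr_local_server.py`, the `preprocess` function performs the preprocessing for both the detection and recognition services, and the `postprocess` function performs the recognition postprocessing. Modify the corresponding function to change this behavior. Both functions call the preprocessing/postprocessing utilities for common CV models provided by the `paddle_serving_app` library.
+
+To start the Paddle Serving detection service or recognition service on its own, run the corresponding script from the table below.
+
+| Model | Standard | Fast |
+| ---- | ----------------- | ------------------- |
+| Detection | det_web_server.py | det_local_server.py |
+| Recognition | rec_web_server.py | rec_local_server.py |
+
+For more information, see [Paddle Serving](https://github.com/PaddlePaddle/Serving).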

diff --git a/deploy/pdserving/rec_local_server.py b/deploy/pdserving/rec_local_server.py

new file mode 100644

index 0000000000000000000000000000000000000000..fbe67aafee5c8dcae269cd4ad6f6100ed514f0b7

--- /dev/null

+++ b/deploy/pdserving/rec_local_server.py

@@ -0,0 +1,72 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+from paddle_serving_client import Client

+from paddle_serving_app.reader import OCRReader

+import cv2

+import sys

+import numpy as np

+import os

+from paddle_serving_client import Client

+from paddle_serving_app.reader import Sequential, URL2Image, ResizeByFactor

+from paddle_serving_app.reader import Div, Normalize, Transpose

+from paddle_serving_app.reader import DBPostProcess, FilterBoxes, GetRotateCropImage, SortedBoxes

+from paddle_serving_server_gpu.web_service import WebService

+import time

+import re

+import base64

+

+

+class OCRService(WebService):
+    def init_rec(self):
+        self.ocr_reader = OCRReader()
+
+    def preprocess(self, feed=[], fetch=[]):
+        img_list = []
+        for feed_data in feed:
+            data = base64.b64decode(feed_data["image"].encode('utf8'))
+            data = np.frombuffer(data, np.uint8)
+            im = cv2.imdecode(data, cv2.IMREAD_COLOR)
+            img_list.append(im)
+        max_wh_ratio = 0
+        for boximg in img_list:
+            h, w = boximg.shape[0:2]
+            wh_ratio = w * 1.0 / h
+            max_wh_ratio = max(max_wh_ratio, wh_ratio)
+        _, h, w = self.ocr_reader.resize_norm_img(img_list[0],
+                                                  max_wh_ratio).shape
+        imgs = np.zeros((len(img_list), 3, h, w)).astype('float32')
+        for i, img in enumerate(img_list):
+            norm_img = self.ocr_reader.resize_norm_img(img, max_wh_ratio)
+            imgs[i] = norm_img
+        feed = {"image": imgs.copy()}
+        fetch = ["ctc_greedy_decoder_0.tmp_0", "softmax_0.tmp_0"]
+        return feed, fetch
+
+    def postprocess(self, feed={}, fetch=[], fetch_map=None):
+        rec_res = self.ocr_reader.postprocess(fetch_map, with_score=True)
+        res_lst = []
+        for res in rec_res:
+            res_lst.append(res[0])
+        res = {"res": res_lst}
+        return res

+

+

+ocr_service = OCRService(name="ocr")

+ocr_service.load_model_config("ocr_rec_model")

+ocr_service.set_gpus("0")

+ocr_service.init_rec()

+ocr_service.prepare_server(workdir="workdir", port=9292, device="gpu", gpuid=0)

+ocr_service.run_debugger_service()

+ocr_service.run_web_service()

diff --git a/deploy/pdserving/rec_web_server.py b/deploy/pdserving/rec_web_server.py

new file mode 100644

index 0000000000000000000000000000000000000000..684c313d4d50cfe00c576c81aad05a810525dcce

--- /dev/null

+++ b/deploy/pdserving/rec_web_server.py

@@ -0,0 +1,71 @@

+# Copyright (c) 2020 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+from paddle_serving_client import Client

+from paddle_serving_app.reader import OCRReader

+import cv2

+import sys

+import numpy as np

+import os

+from paddle_serving_client import Client

+from paddle_serving_app.reader import Sequential, URL2Image, ResizeByFactor

+from paddle_serving_app.reader import Div, Normalize, Transpose

+from paddle_serving_app.reader import DBPostProcess, FilterBoxes, GetRotateCropImage, SortedBoxes

+from paddle_serving_server_gpu.web_service import WebService

+import time

+import re

+import base64

+

+

+class OCRService(WebService):
+    def init_rec(self):
+        self.ocr_reader = OCRReader()
+
+    def preprocess(self, feed=[], fetch=[]):
+        # TODO: to handle batch rec images
+        img_list = []
+        for feed_data in feed:
+            data = base64.b64decode(feed_data["image"].encode('utf8'))
+            data = np.frombuffer(data, np.uint8)
+            im = cv2.imdecode(data, cv2.IMREAD_COLOR)
+            img_list.append(im)
+        feed_list = []
+        max_wh_ratio = 0
+        for boximg in img_list:
+            h, w = boximg.shape[0:2]
+            wh_ratio = w * 1.0 / h
+            max_wh_ratio = max(max_wh_ratio, wh_ratio)
+        for img in img_list:
+            norm_img = self.ocr_reader.resize_norm_img(img, max_wh_ratio)
+            feed = {"image": norm_img}
+            feed_list.append(feed)
+        fetch = ["ctc_greedy_decoder_0.tmp_0", "softmax_0.tmp_0"]
+        return feed_list, fetch
+
+    def postprocess(self, feed={}, fetch=[], fetch_map=None):
+        rec_res = self.ocr_reader.postprocess(fetch_map, with_score=True)
+        res_lst = []
+        for res in rec_res:
+            res_lst.append(res[0])
+        res = {"res": res_lst}
+        return res

+

+

+ocr_service = OCRService(name="ocr")

+ocr_service.load_model_config("ocr_rec_model")

+ocr_service.set_gpus("0")

+ocr_service.init_rec()

+ocr_service.prepare_server(workdir="workdir", port=9292, device="gpu", gpuid=0)

+ocr_service.run_rpc_service()

+ocr_service.run_web_service()

diff --git a/doc/doc_ch/installation.md b/doc/doc_ch/installation.md

index 4b75e21bfab247bfde6c2146468dc522653b769c..7a51c5616c470e58ef4f186c4e3c809cf181e494 100644

--- a/doc/doc_ch/installation.md

+++ b/doc/doc_ch/installation.md

@@ -80,3 +80,6 @@ git clone https://gitee.com/paddlepaddle/PaddleOCR

cd PaddleOCR

pip3 install -r requirments.txt

```

+

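+Note: on Windows, we recommend downloading the Shapely wheel from [here](https://www.lfd.uci.edu/~gohlke/pythonlibs/#shapely) and installing it from that file,
+because Shapely installed directly through pip may fail with `[WinError 126] The specified module could not be found`.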

diff --git a/doc/doc_ch/serving.md b/doc/doc_ch/serving.md

index 0b5eff3f4750ae051ca8ec50e0ab9bb9f97775c0..1cc57d5324f1415adba8863671fc19fddb648aa3 100644

--- a/doc/doc_ch/serving.md

+++ b/doc/doc_ch/serving.md

@@ -69,7 +69,7 @@ $ hub serving start --modules [Module1==Version1, Module2==Version2, ...] \

#### Option 2. Start from a configuration file (supports CPU and GPU)
**Start command:**

-```hub serving start --config/-c config.json```

+```hub serving start -c config.json```

Here, `config.json` has the following format:

```python

diff --git a/doc/doc_ch/update.md b/doc/doc_ch/update.md

index 59514aad0885b61721e037a7c5d6b065409391fb..e22d05b4316ebabbb7bb036e1be1b09600538925 100644

--- a/doc/doc_ch/update.md

+++ b/doc/doc_ch/update.md

@@ -1,4 +1,5 @@

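# Updates
+- 2020.7.23 Released the recording and slides (PPT) of the July 21 Bilibili live class, a full walkthrough of the PaddleOCR open-source package, [available here](https://aistudio.baidu.com/aistudio/course/introduce/1519)
- 2020.7.15 Added a mobile demo based on EasyEdge and Paddle-Lite, supporting iOS and Android
- 2020.7.15 Improved inference deployment: added C++ inference engine, serving deployment, and on-device deployment solutions, plus an inference-time benchmark for the ultra-lightweight Chinese OCR model
- 2020.7.15 Collected OCR-related datasets and common data annotation and synthesis tools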

diff --git a/doc/doc_en/installation_en.md b/doc/doc_en/installation_en.md

index 585f9d43f34d2df86081180106dd4e4313f93a8d..9e4df74f6ddf3500262583e1d08bef12c4e14403 100644

--- a/doc/doc_en/installation_en.md

+++ b/doc/doc_en/installation_en.md

@@ -82,3 +82,9 @@ git clone https://gitee.com/paddlepaddle/PaddleOCR

cd PaddleOCR

pip3 install -r requirments.txt

```

+

+If you get the error `OSError: [WinError 126] The specified module could not be found` when installing Shapely on Windows,
+
+download the Shapely whl file from [http://www.lfd.uci.edu/~gohlke/pythonlibs/#shapely](http://www.lfd.uci.edu/~gohlke/pythonlibs/#shapely) and install it from that file.

+

+Reference: [Solve shapely installation on windows](https://stackoverflow.com/questions/44398265/install-shapely-oserror-winerror-126-the-specified-module-could-not-be-found)

diff --git a/doc/doc_en/update_en.md b/doc/doc_en/update_en.md

index 6cd347a851b83284dea1fa31fbafd3213d9b835d..ef02d9dbf7a1379d029ca6fcf819a06d5996151b 100644

--- a/doc/doc_en/update_en.md

+++ b/doc/doc_en/update_en.md

@@ -1,5 +1,5 @@

# RECENT UPDATES

-

+- 2020.7.23, Release the recording and slides (PPT) of the live class on Bilibili, PaddleOCR Introduction, [address](https://aistudio.baidu.com/aistudio/course/introduce/1519)

- 2020.7.15, Add mobile App demo, supporting both iOS and Android (based on EasyEdge and Paddle Lite)
- 2020.7.15, Improve the deployment ability, add C++ inference and serving deployment. In addition, the benchmarks of the ultra-lightweight Chinese OCR model are provided.

- 2020.7.15, Add several related datasets, data annotation and synthesis tools.

diff --git a/ppocr/modeling/backbones/rec_mobilenet_v3.py b/ppocr/modeling/backbones/rec_mobilenet_v3.py

index 506209cc9c701ac889b0d9f7eb4c1cb706a1f175..e504064242bfe6b0f433126ef4efb191b550a2eb 100755

--- a/ppocr/modeling/backbones/rec_mobilenet_v3.py

+++ b/ppocr/modeling/backbones/rec_mobilenet_v3.py

@@ -78,7 +78,7 @@ class MobileNetV3():

supported_scale = [0.35, 0.5, 0.75, 1.0, 1.25]

assert self.scale in supported_scale, \

- "supported scale are {} but input scale is {}".format(supported_scale, scale)

+ "supported scales are {} but input scale is {}".format(supported_scale, self.scale)

def __call__(self, input):

scale = self.scale