Merge branch 'develop' of https://github.com/PaddlePaddle/PaddleOCR into fix_cls_shape

Showing

configs/det/det_mv3_db_v1.1.yml

0 → 100755

deploy/paddleOcrSpringBoot/mvnw

0 → 100644

2.8 KB

deploy/pdserving/params.py

0 → 100644

deploy/pdserving/readme.md

已删除

100644 → 0

deploy/pdserving/readme_en.md

已删除

100644 → 0

deploy/slim/prune/README.md

0 → 100644

doc/PPOCR.pdf

0 → 100644

文件已添加

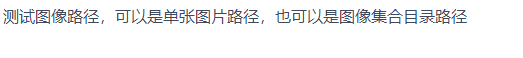

doc/datasets/VoTT.jpg

0 → 100644

164.6 KB

doc/doc_ch/serving_inference.md

0 → 100644

doc/imgs/french_0.jpg

0 → 100644

164.4 KB

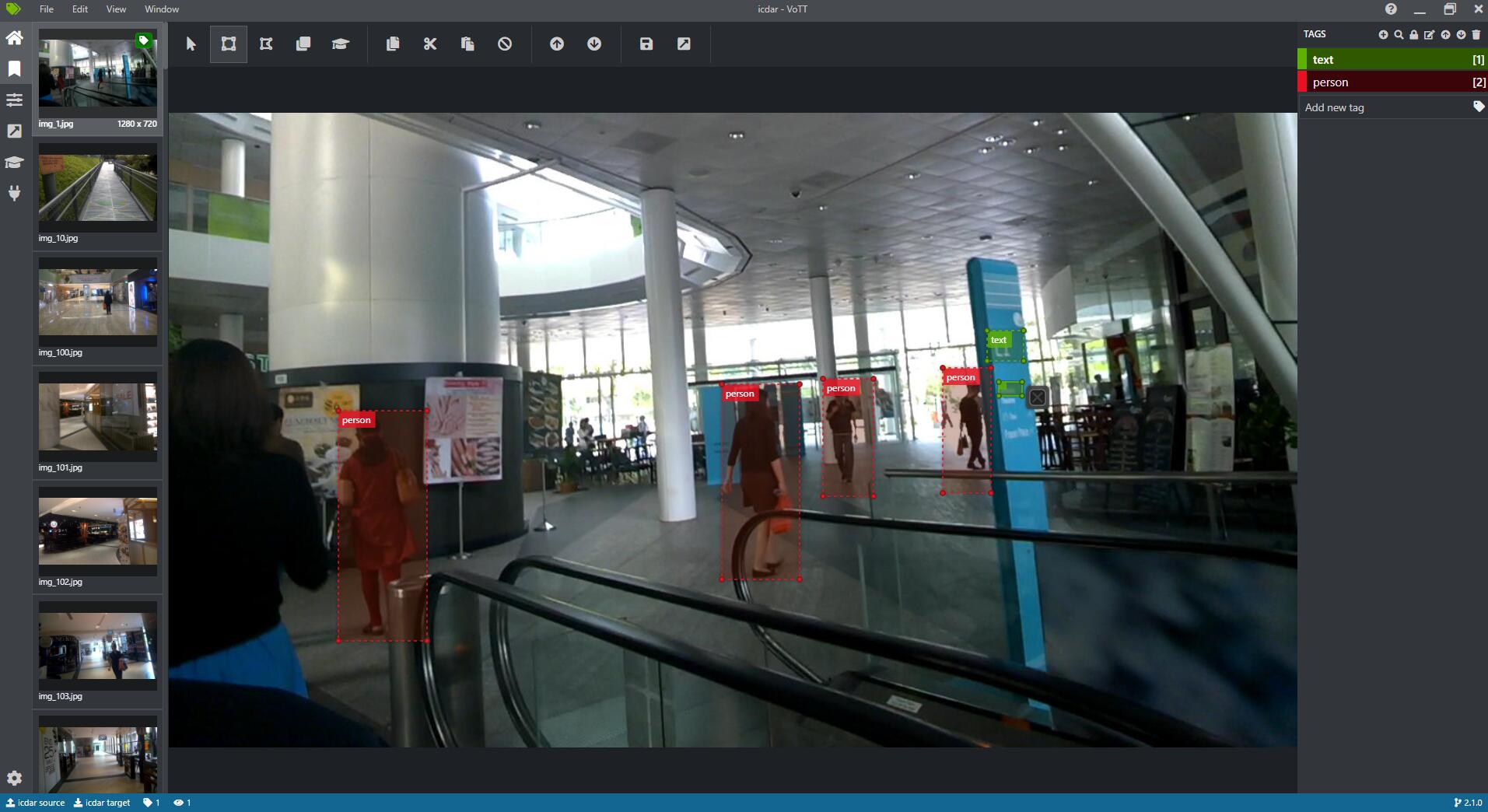

doc/imgs/ger_1.jpg

0 → 100644

34.0 KB

doc/imgs/ger_2.jpg

0 → 100644

46.8 KB

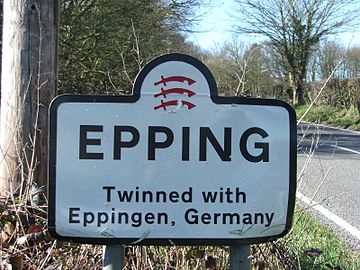

doc/imgs/japan_1.jpg

0 → 100644

6.1 KB

doc/imgs/japan_2.jpg

0 → 100644

95.8 KB

doc/imgs/korean_1.jpg

0 → 100644

982.9 KB

doc/imgs_results/img_12.jpg

0 → 100644

563.7 KB

| W: | H:

| W: | H:

ppocr/data/det/__init__.py

0 → 100644

ppocr/postprocess/__init__.py

0 → 100644

因为 它太大了无法显示 source diff 。你可以改为 查看blob。

ppocr/utils/corpus/readme.md

0 → 100644

ppocr/utils/corpus/readme_ch.md

0 → 100644

ppocr/utils/dict/occitan_dict.txt

0 → 100644

tools/__init__.py

0 → 100644

tools/infer/__init__.py

0 → 100644

tools/inference_to_serving.py

0 → 100644