@@ -185,12 +187,28 @@ PaddleOCR support a variety of cutting-edge algorithms related to OCR, and devel

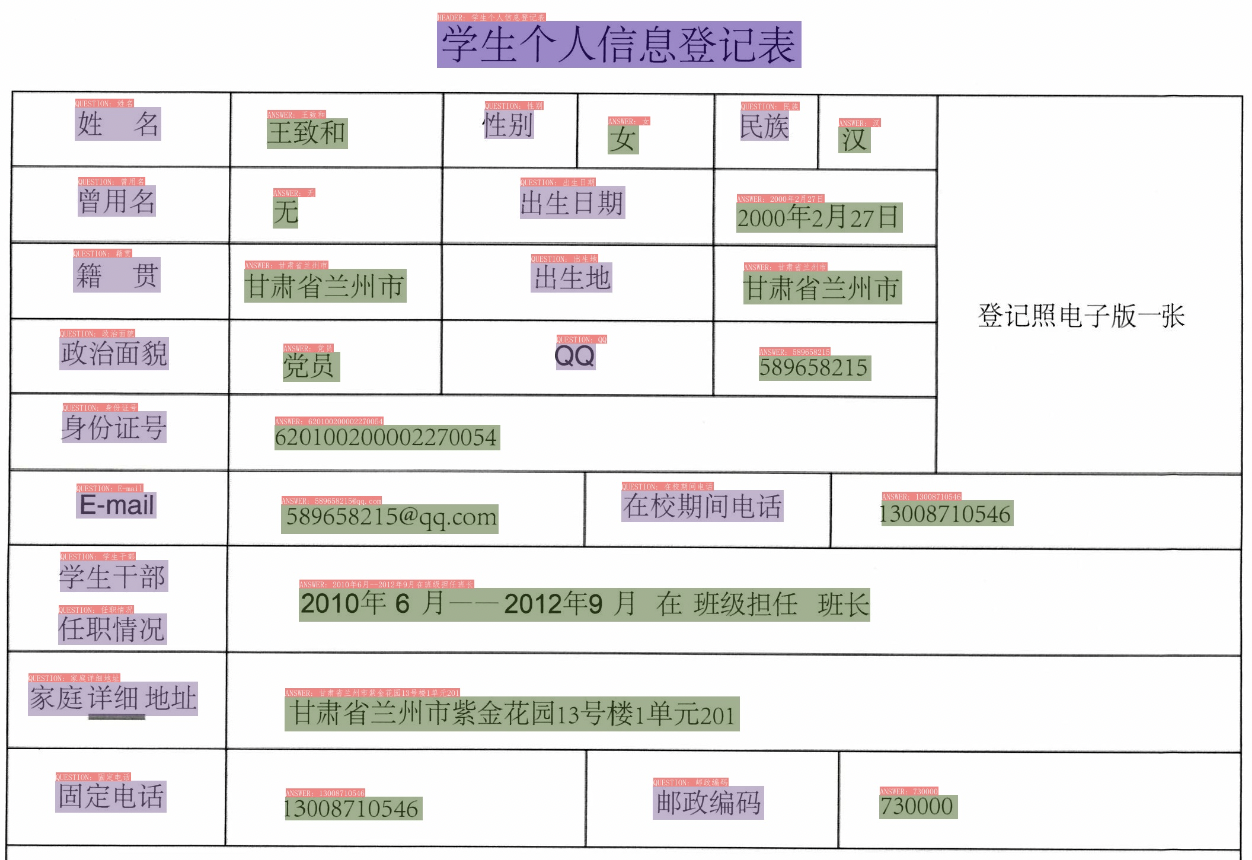

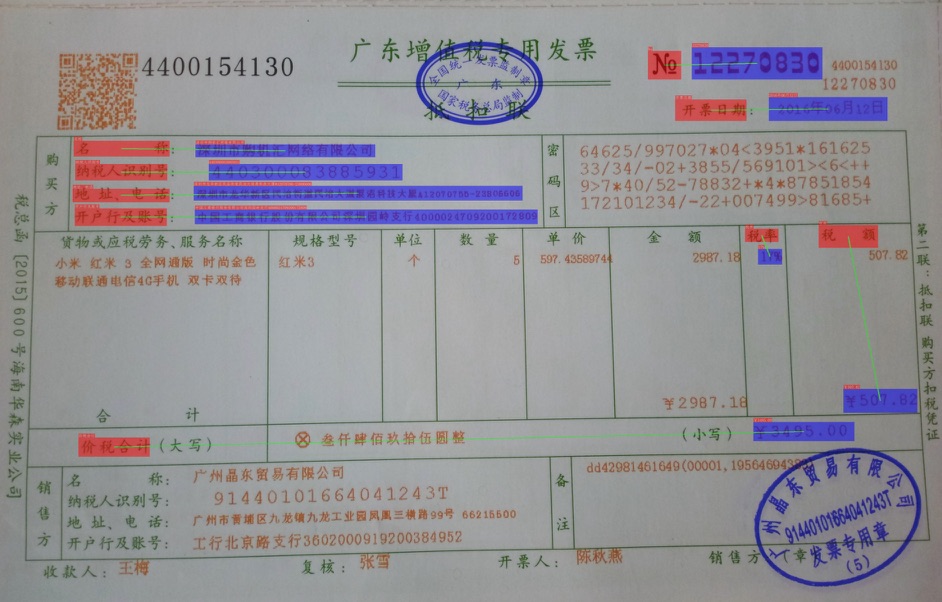

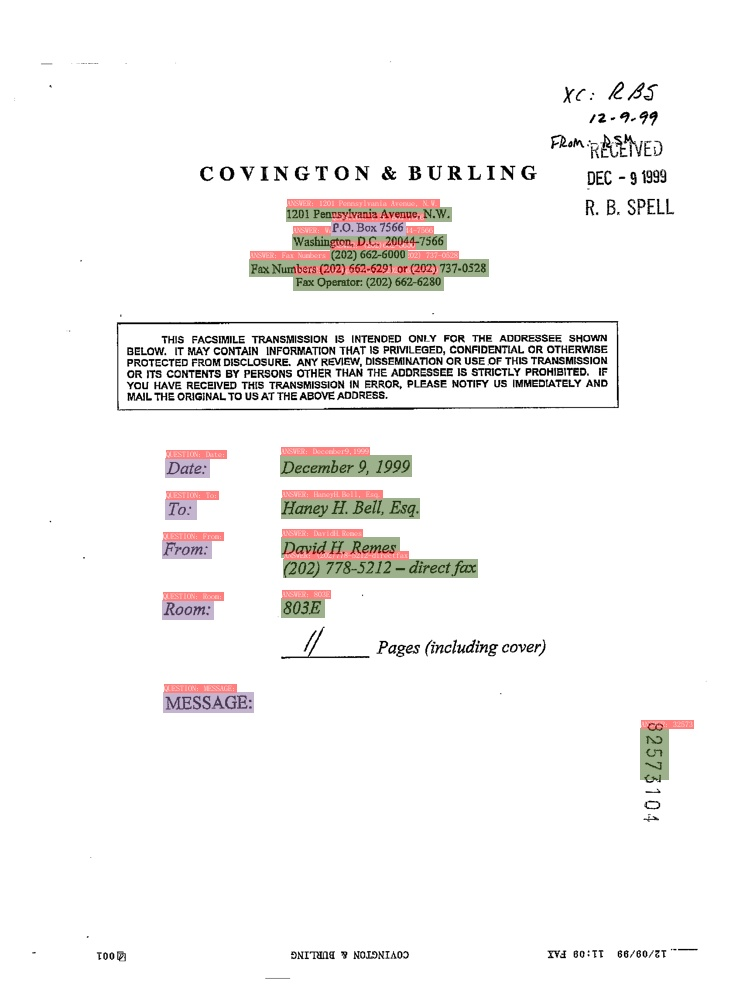

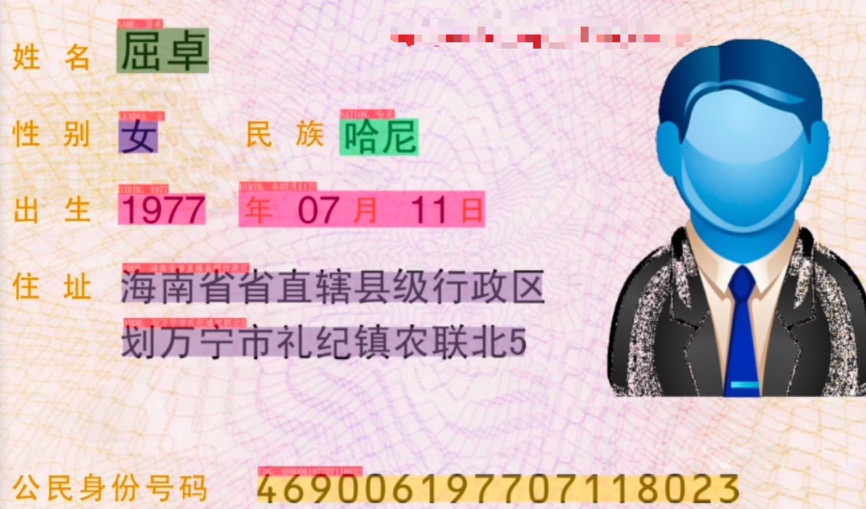

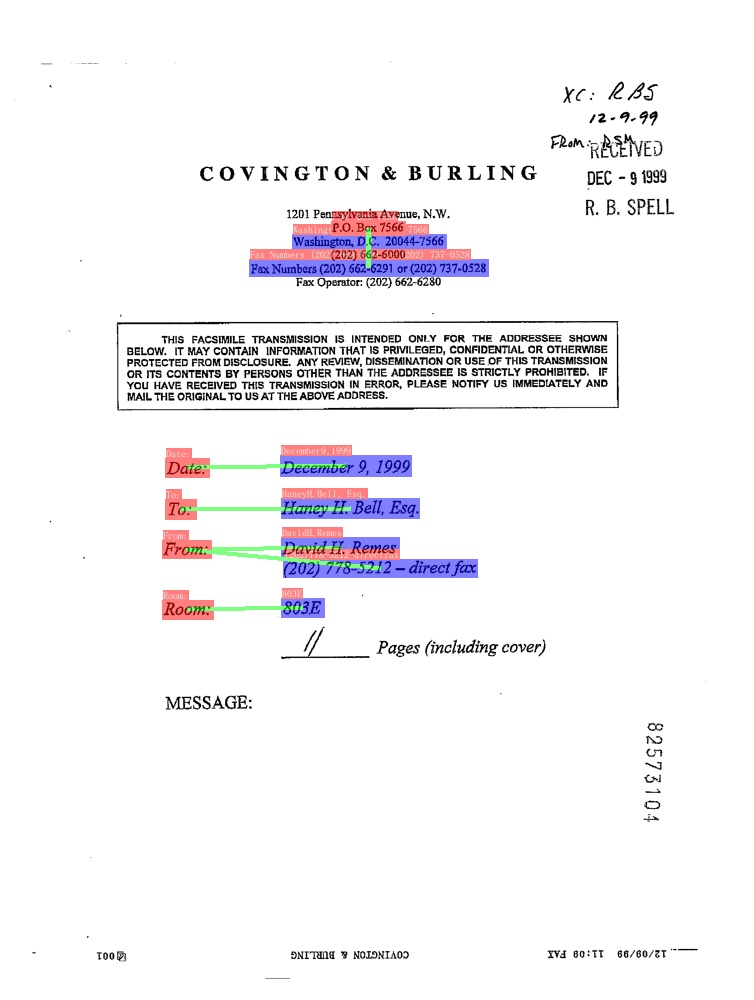

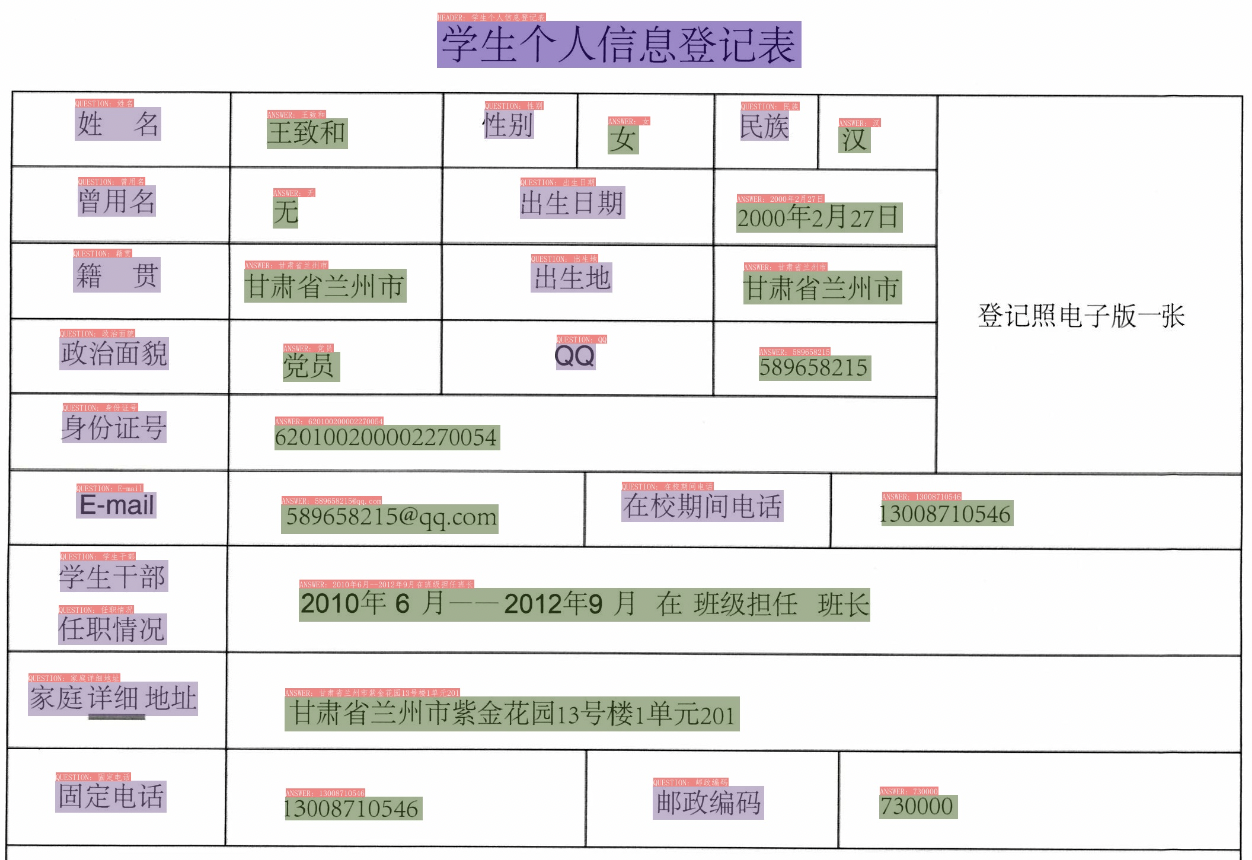

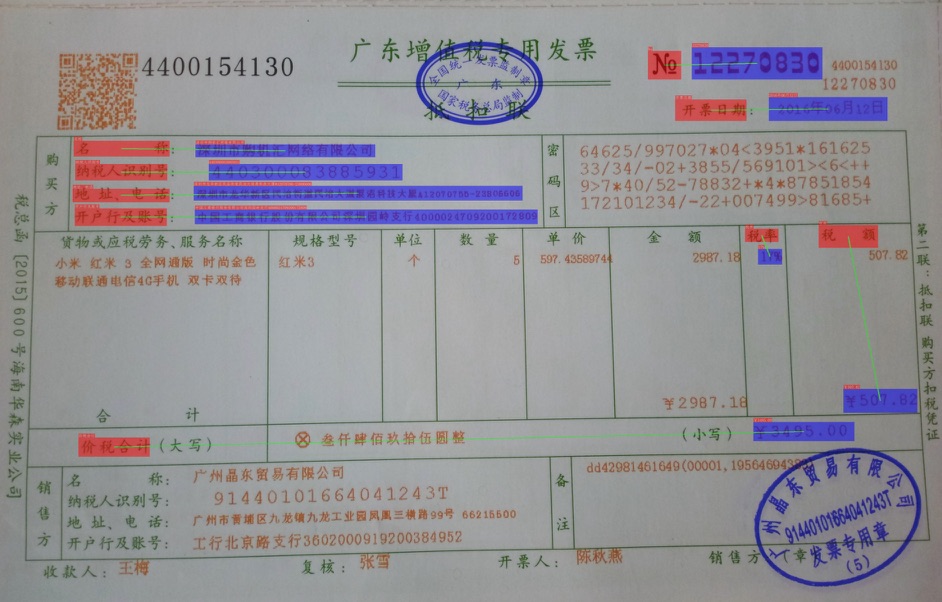

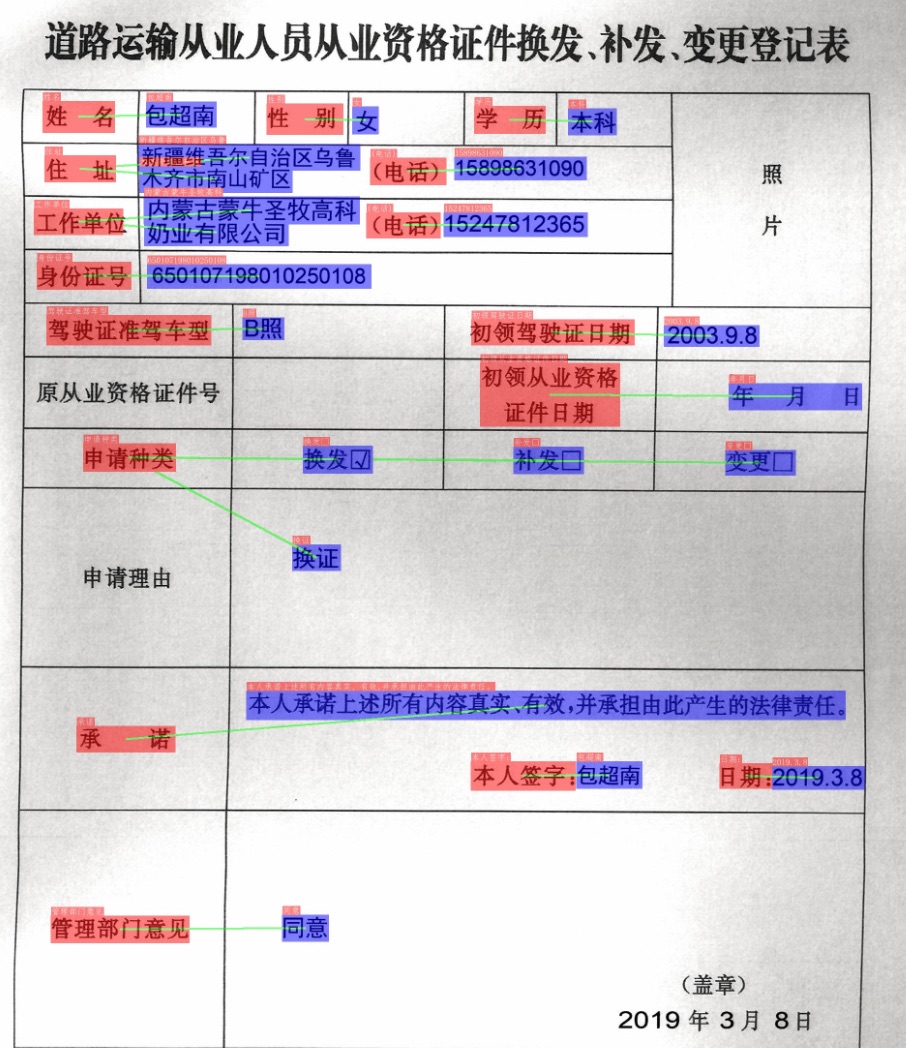

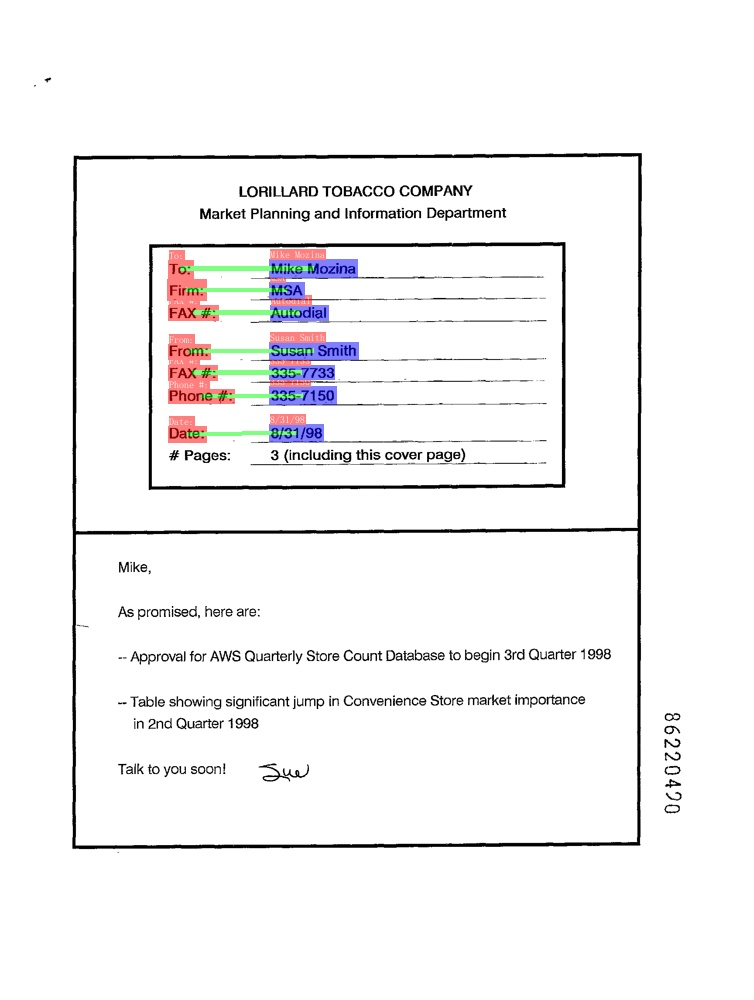

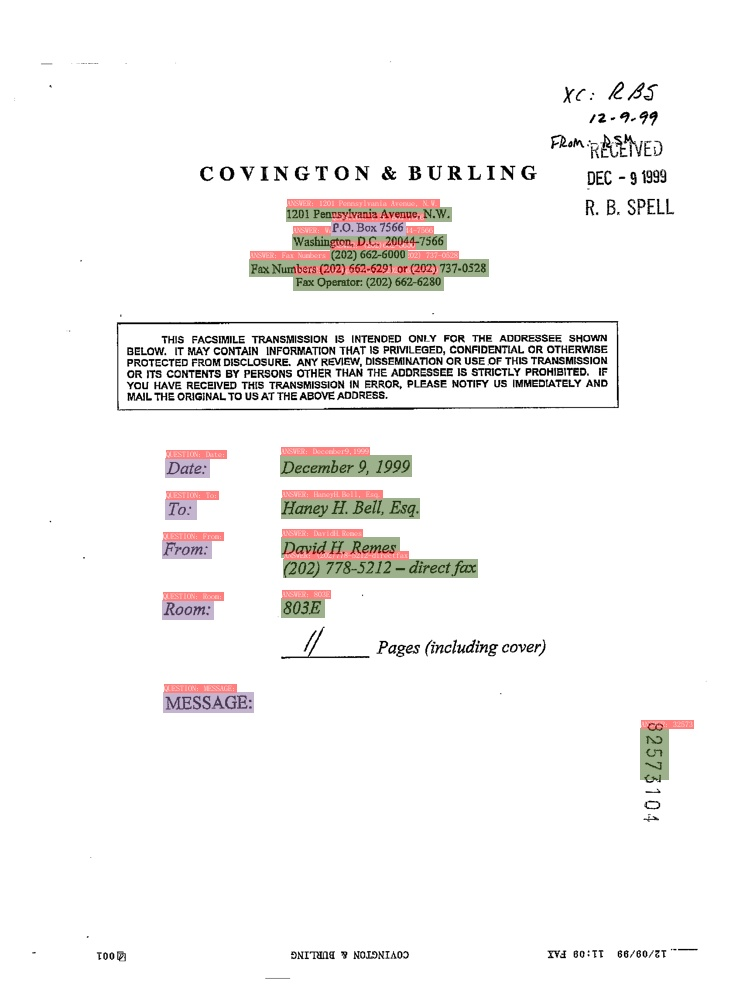

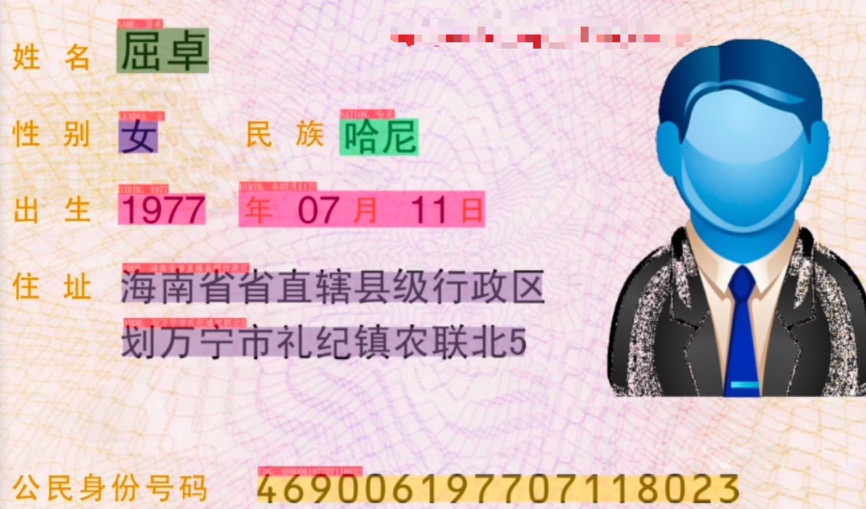

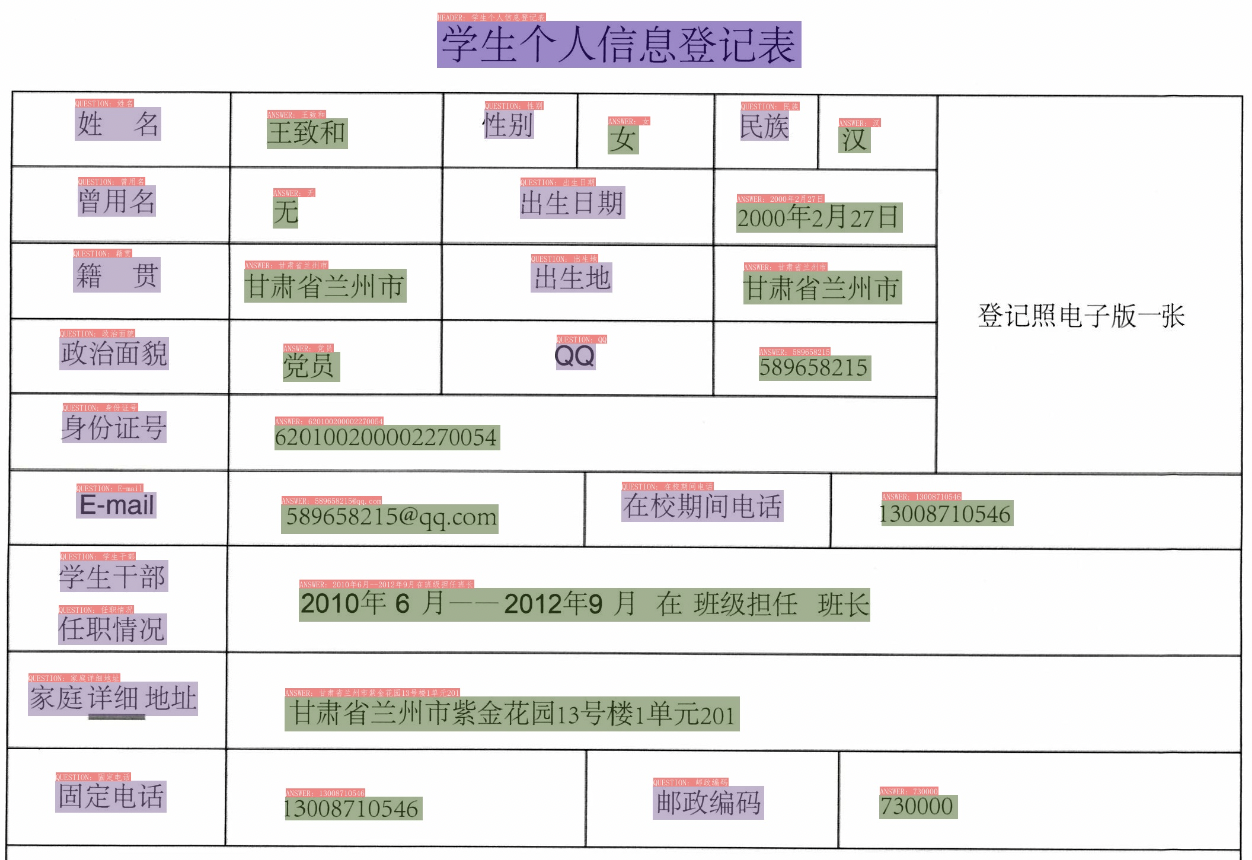

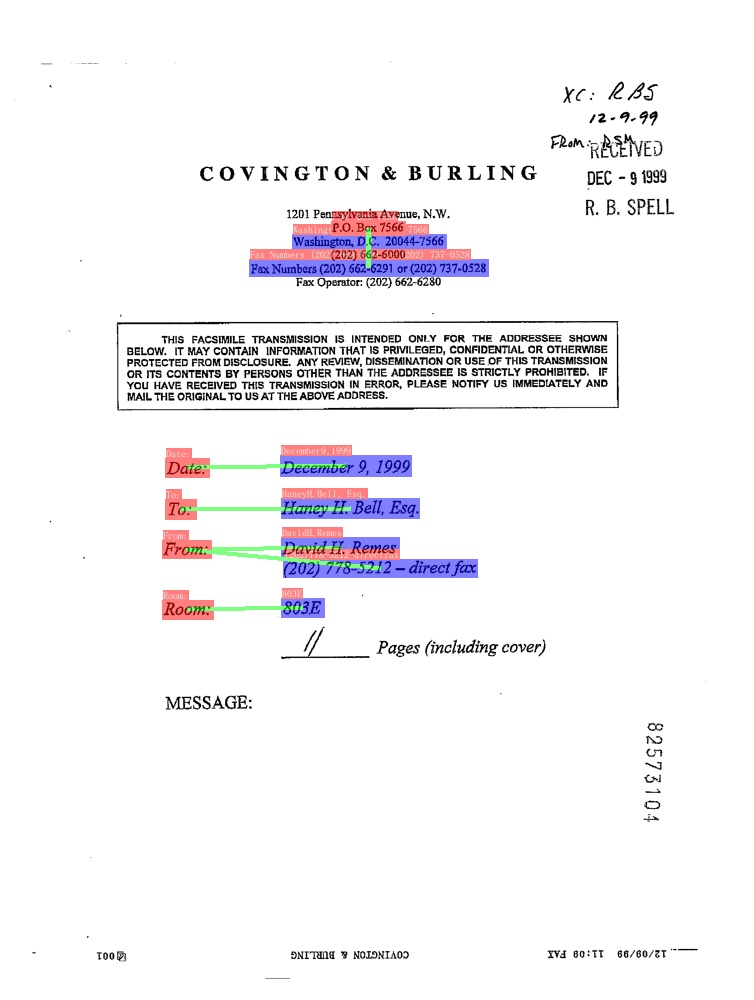

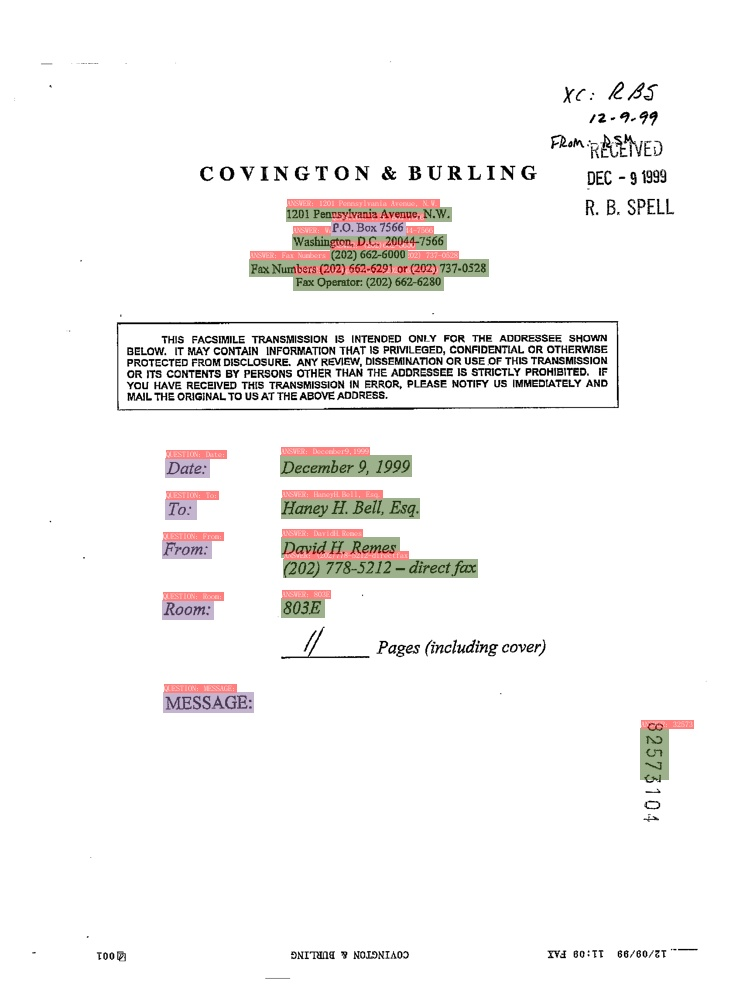

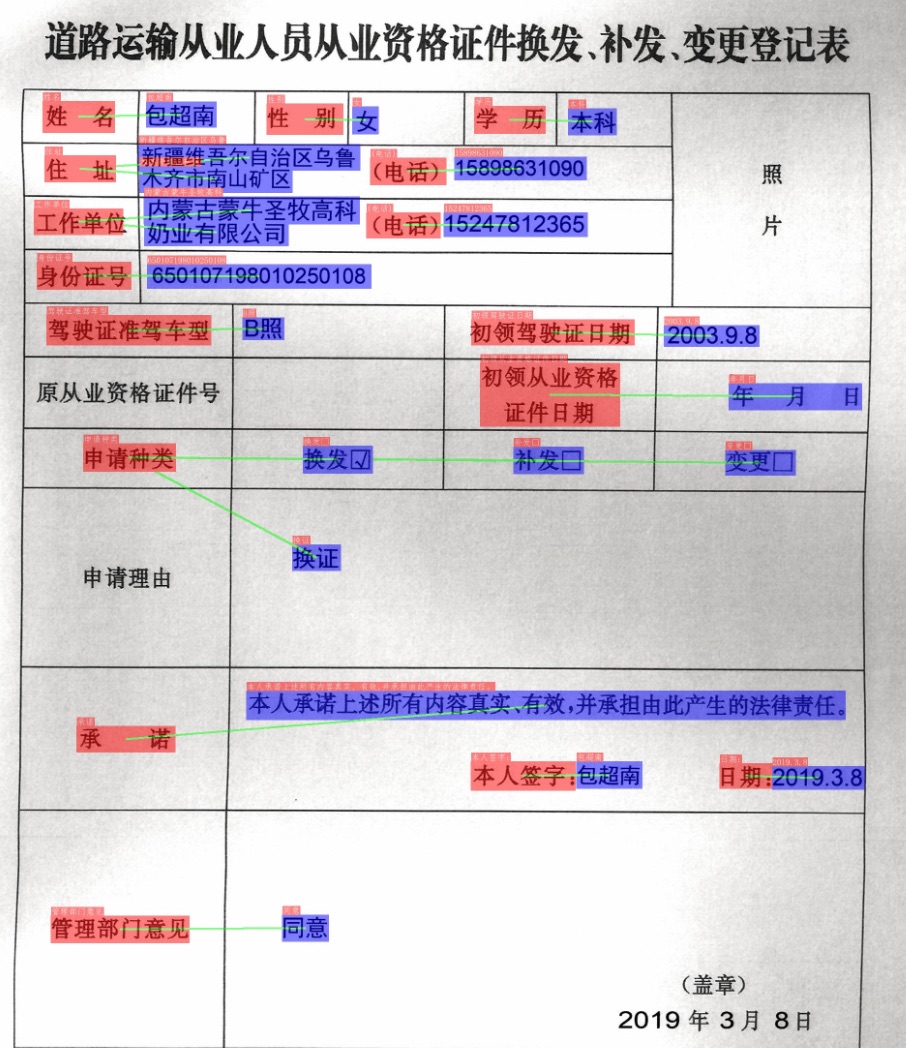

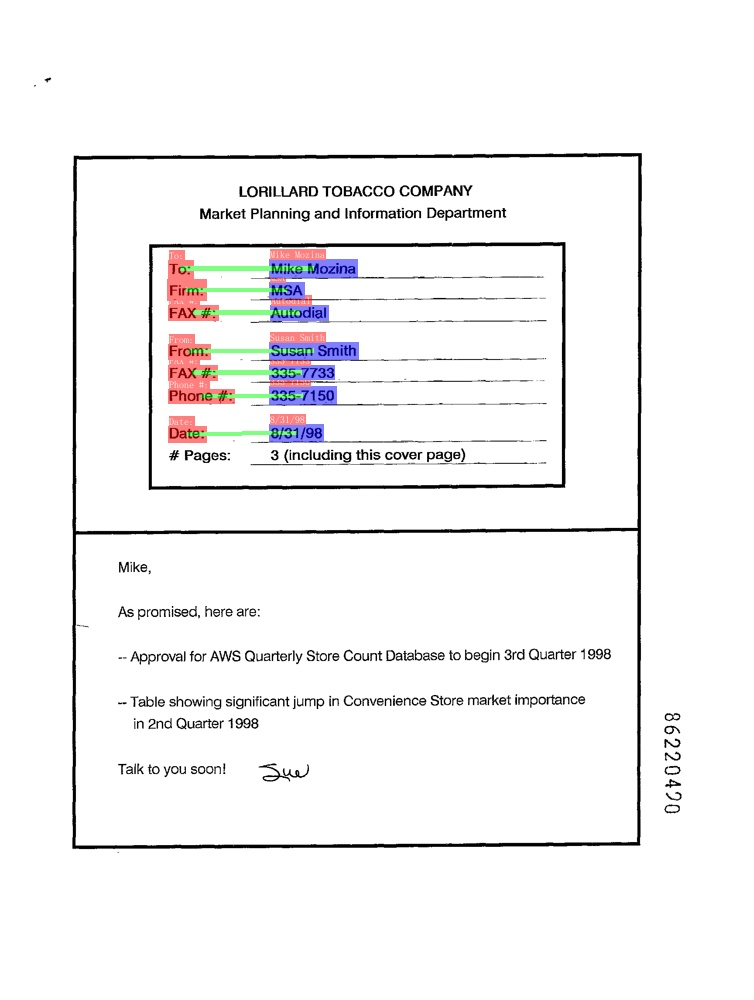

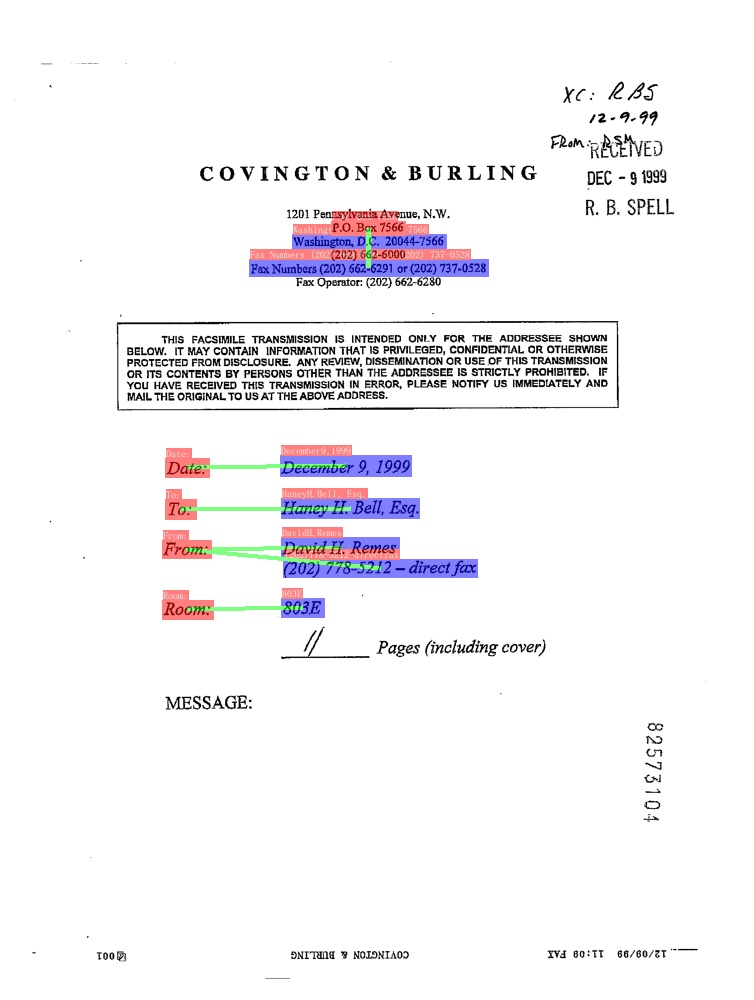

- SER (Semantic entity recognition)

-

+

+

+

+

+

+

+

+

+

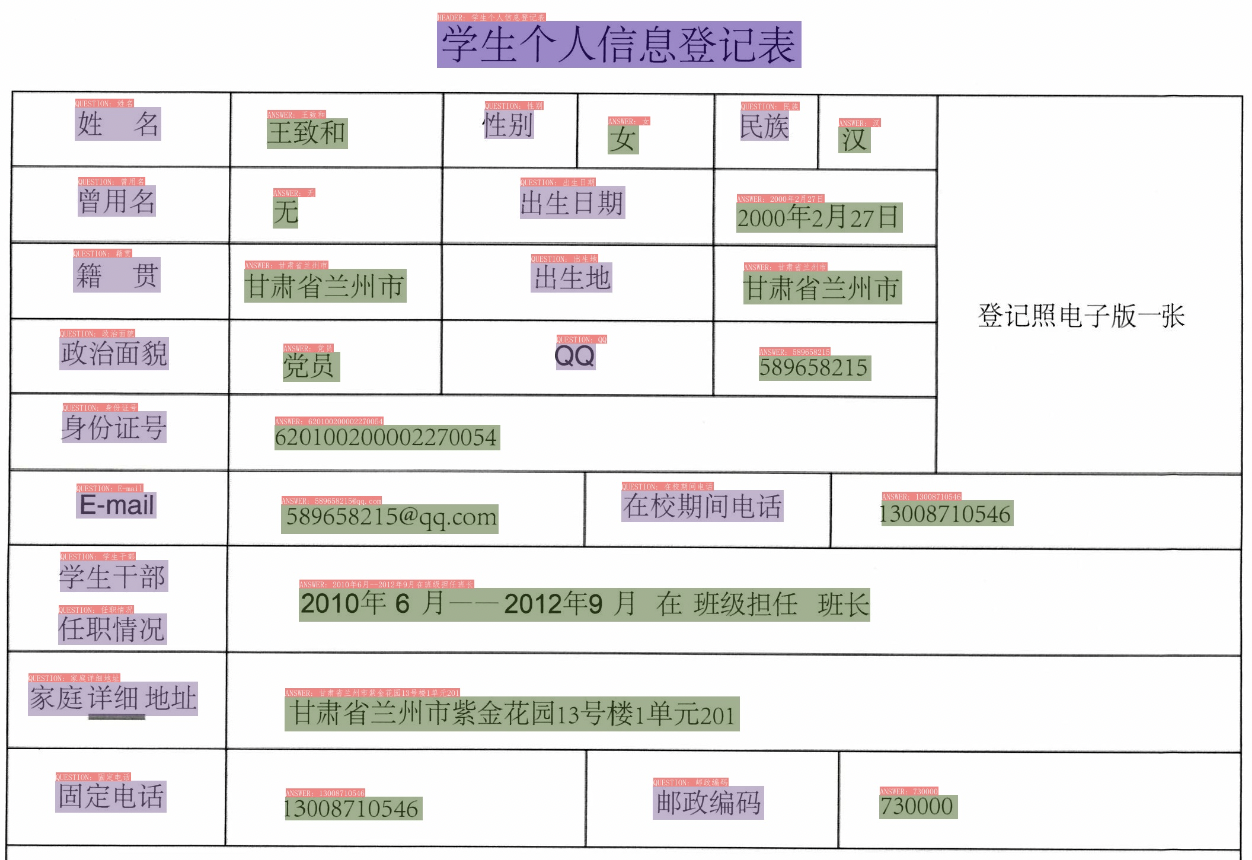

- RE (Relation Extraction)

-

+

+

+

+

+

+

+

+

+

diff --git a/README_ch.md b/README_ch.md

index c52d5f3dd17839254c3f58794e016f08dc0b21bc..79de6ab71958f91436d67c98f0d00a4246e2b5ad 100755

--- a/README_ch.md

+++ b/README_ch.md

@@ -27,21 +27,20 @@ PaddleOCR旨在打造一套丰富、领先、且实用的OCR工具库,助力

## 近期更新

-- **🔥2022.7 发布[OCR场景应用集合](./applications)**

- - 发布OCR场景应用集合,包含数码管、液晶屏、车牌、高精度SVTR模型等**7个垂类模型**,覆盖通用,制造、金融、交通行业的主要OCR垂类应用。

+- **🔥2022.8.24 发布 PaddleOCR [release/2.6](https://github.com/PaddlePaddle/PaddleOCR/tree/release/2.6)**

+ - 发布[PP-Structurev2](./ppstructure/),系统功能性能全面升级,适配中文场景,新增支持[版面复原](./ppstructure/recovery)和[PDF转Word]();

+ - [版面分析](./ppstructure/layout)模型优化:模型存储减少95%,速度提升11倍,平均CPU耗时仅需41ms;

+ - [表格识别](./ppstructure/table)模型优化:设计3大优化策略,预测耗时不变情况下,模型精度提升6%;

+ - [关键信息抽取](./ppstructure/kie)模型优化:设计视觉无关模型结构,语义实体识别精度提升2.8%,关系抽取精度提升9.1%。

-- **🔥2022.5.9 发布PaddleOCR [release/2.5](https://github.com/PaddlePaddle/PaddleOCR/tree/release/2.5)**

+- **🔥2022.8 发布 [OCR场景应用集合](./applications)**

+ - 包含数码管、液晶屏、车牌、高精度SVTR模型、手写体识别等**9个垂类模型**,覆盖通用,制造、金融、交通行业的主要OCR垂类应用。

+

+- **2022.5.9 发布 PaddleOCR [release/2.5](https://github.com/PaddlePaddle/PaddleOCR/tree/release/2.5)**

- 发布[PP-OCRv3](./doc/doc_ch/ppocr_introduction.md#pp-ocrv3),速度可比情况下,中文场景效果相比于PP-OCRv2再提升5%,英文场景提升11%,80语种多语言模型平均识别准确率提升5%以上;

- 发布半自动标注工具[PPOCRLabelv2](./PPOCRLabel):新增表格文字图像、图像关键信息抽取任务和不规则文字图像的标注功能;

- 发布OCR产业落地工具集:打通22种训练部署软硬件环境与方式,覆盖企业90%的训练部署环境需求;

- 发布交互式OCR开源电子书[《动手学OCR》](./doc/doc_ch/ocr_book.md),覆盖OCR全栈技术的前沿理论与代码实践,并配套教学视频。

-- 2021.12.21 发布PaddleOCR [release/2.4](https://github.com/PaddlePaddle/PaddleOCR/tree/release/2.4)

- - OCR算法新增1种文本检测算法([PSENet](./doc/doc_ch/algorithm_det_psenet.md)),3种文本识别算法([NRTR](./doc/doc_ch/algorithm_rec_nrtr.md)、[SEED](./doc/doc_ch/algorithm_rec_seed.md)、[SAR](./doc/doc_ch/algorithm_rec_sar.md));

- - 文档结构化算法新增1种关键信息提取算法([SDMGR](./ppstructure/docs/kie.md)),3种[DocVQA](./ppstructure/vqa)算法(LayoutLM、LayoutLMv2,LayoutXLM)。

-- 2021.9.7 发布PaddleOCR [release/2.3](https://github.com/PaddlePaddle/PaddleOCR/tree/release/2.3)

- - 发布[PP-OCRv2](./doc/doc_ch/ppocr_introduction.md#pp-ocrv2),CPU推理速度相比于PP-OCR server提升220%;效果相比于PP-OCR mobile 提升7%。

-- 2021.8.3 发布PaddleOCR [release/2.2](https://github.com/PaddlePaddle/PaddleOCR/tree/release/2.2)

- - 发布文档结构分析[PP-Structure](./ppstructure/README_ch.md)工具包,支持版面分析与表格识别(含Excel导出)。

> [更多](./doc/doc_ch/update.md)

@@ -49,7 +48,9 @@ PaddleOCR旨在打造一套丰富、领先、且实用的OCR工具库,助力

支持多种OCR相关前沿算法,在此基础上打造产业级特色模型[PP-OCR](./doc/doc_ch/ppocr_introduction.md)和[PP-Structure](./ppstructure/README_ch.md),并打通数据生产、模型训练、压缩、预测部署全流程。

-

+

+

+

> 上述内容的使用方法建议从文档教程中的快速开始体验

@@ -213,14 +214,30 @@ PaddleOCR旨在打造一套丰富、领先、且实用的OCR工具库,助力

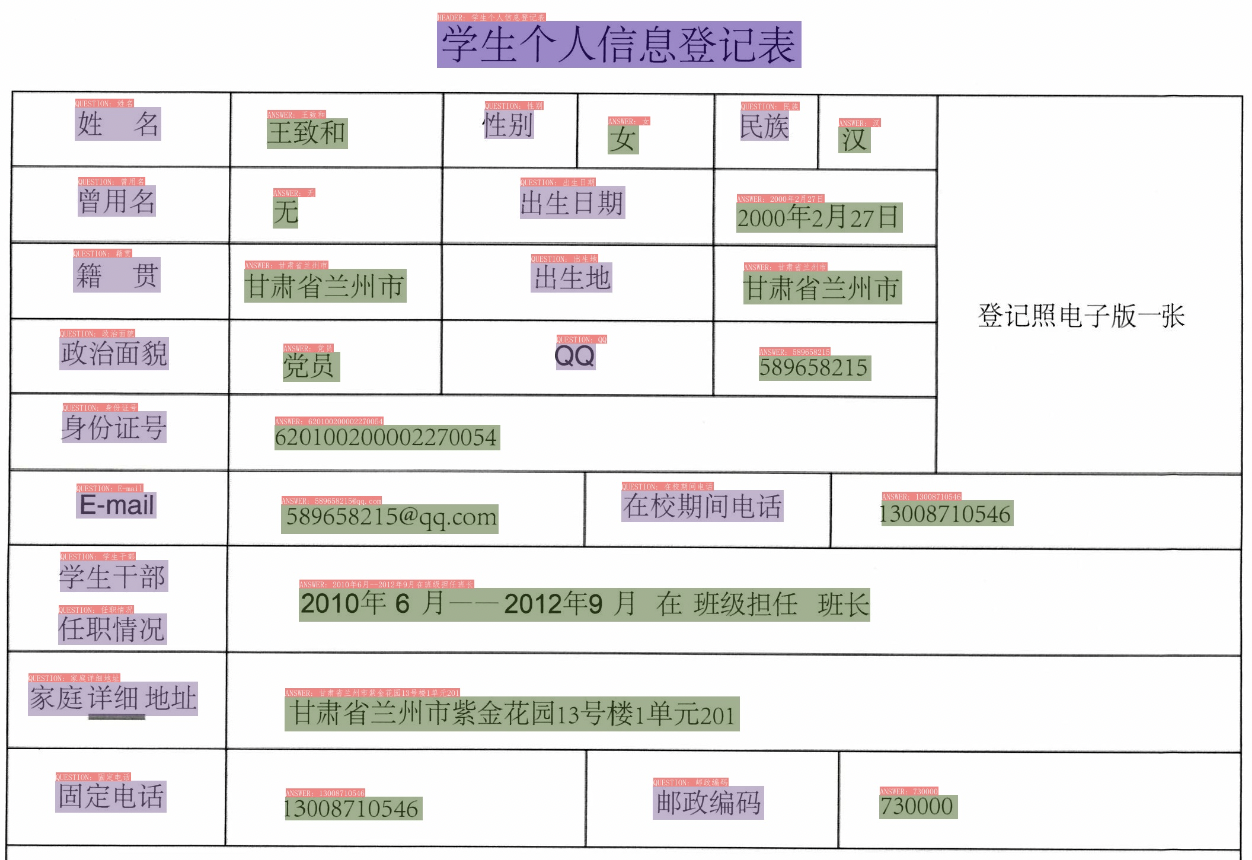

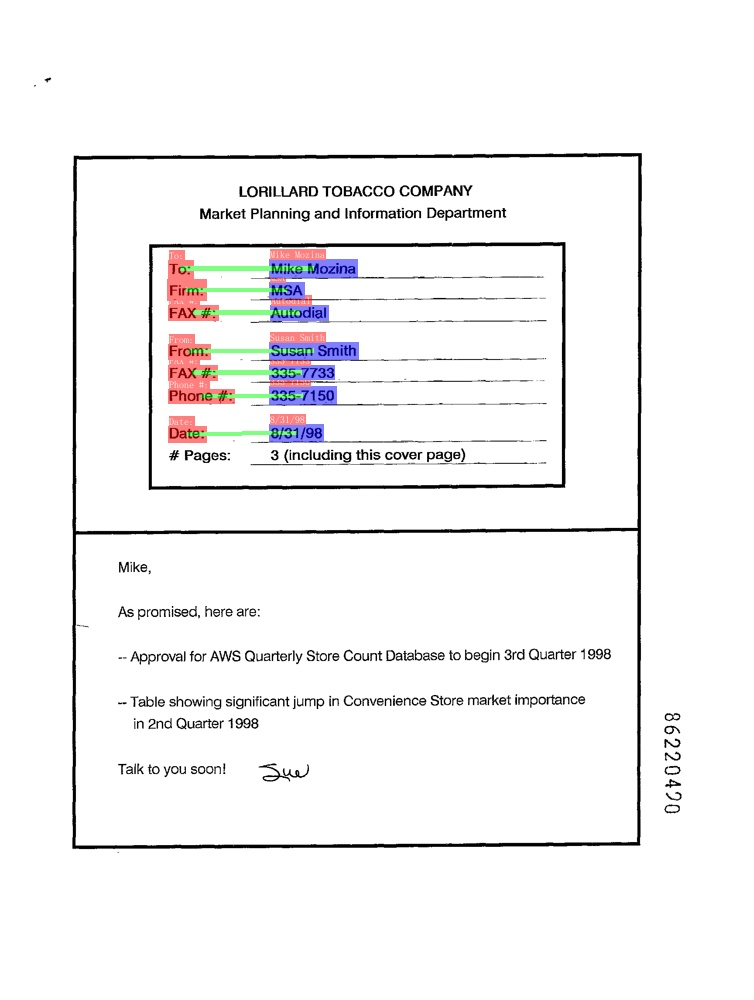

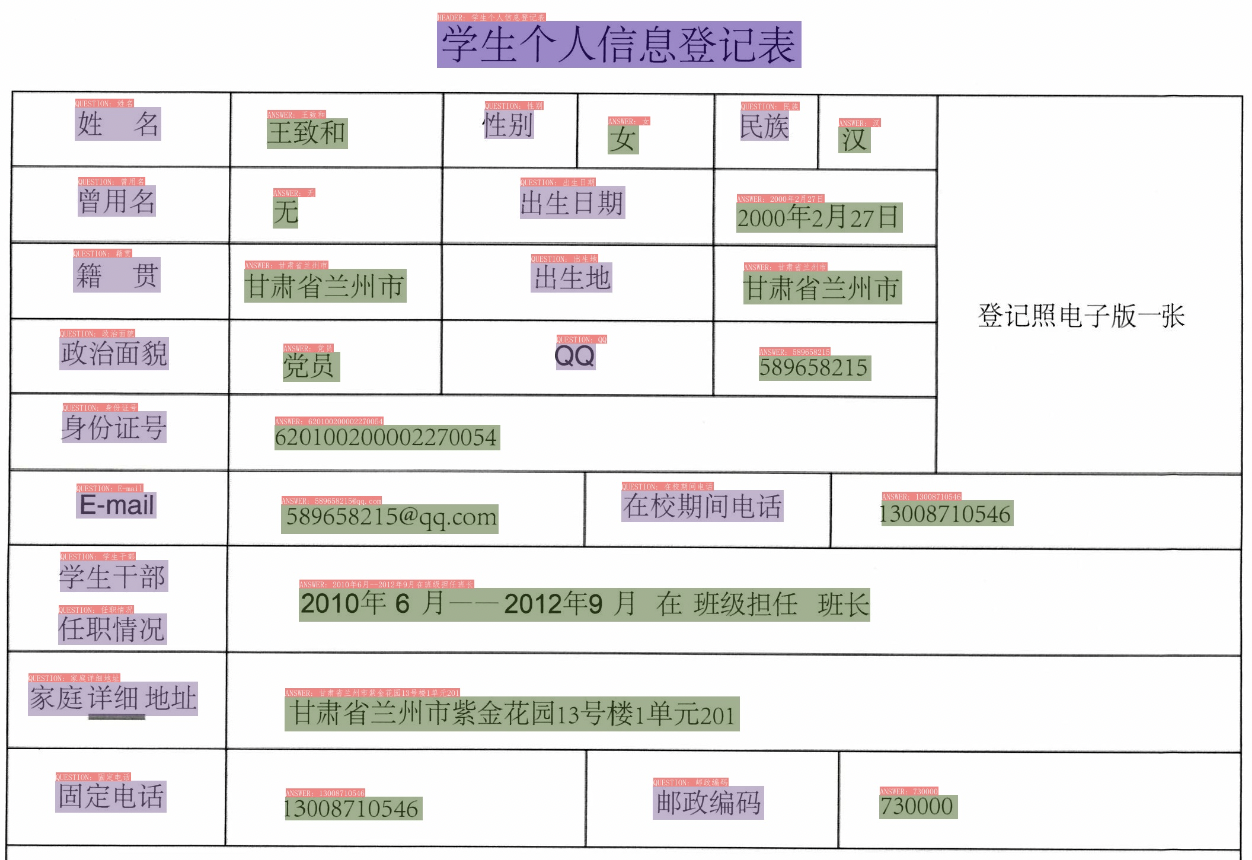

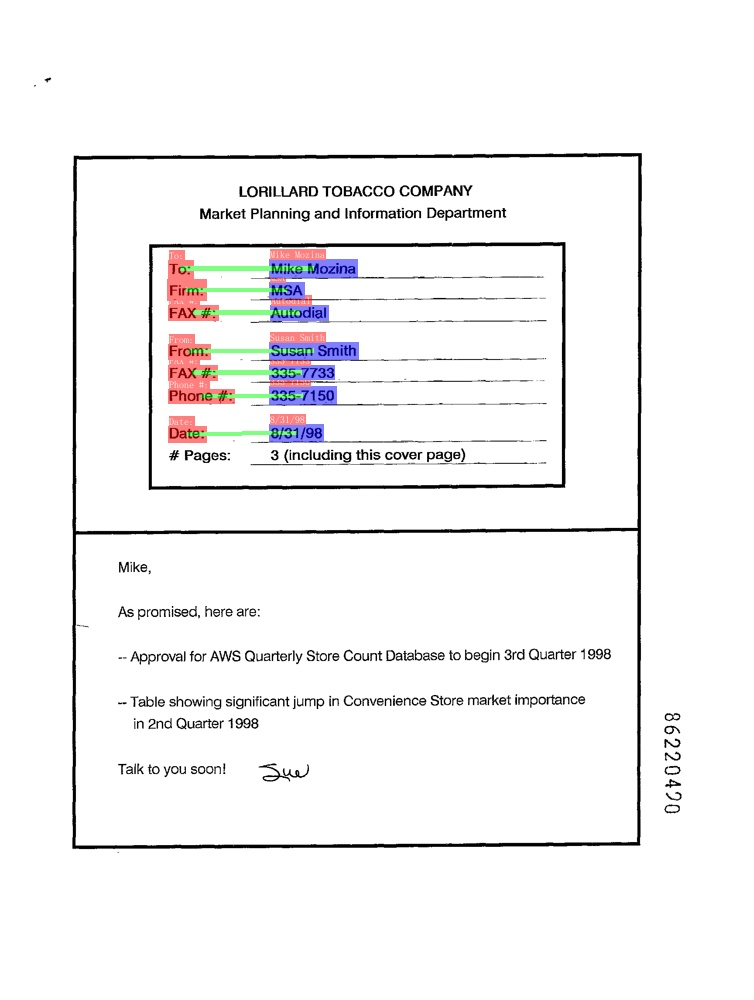

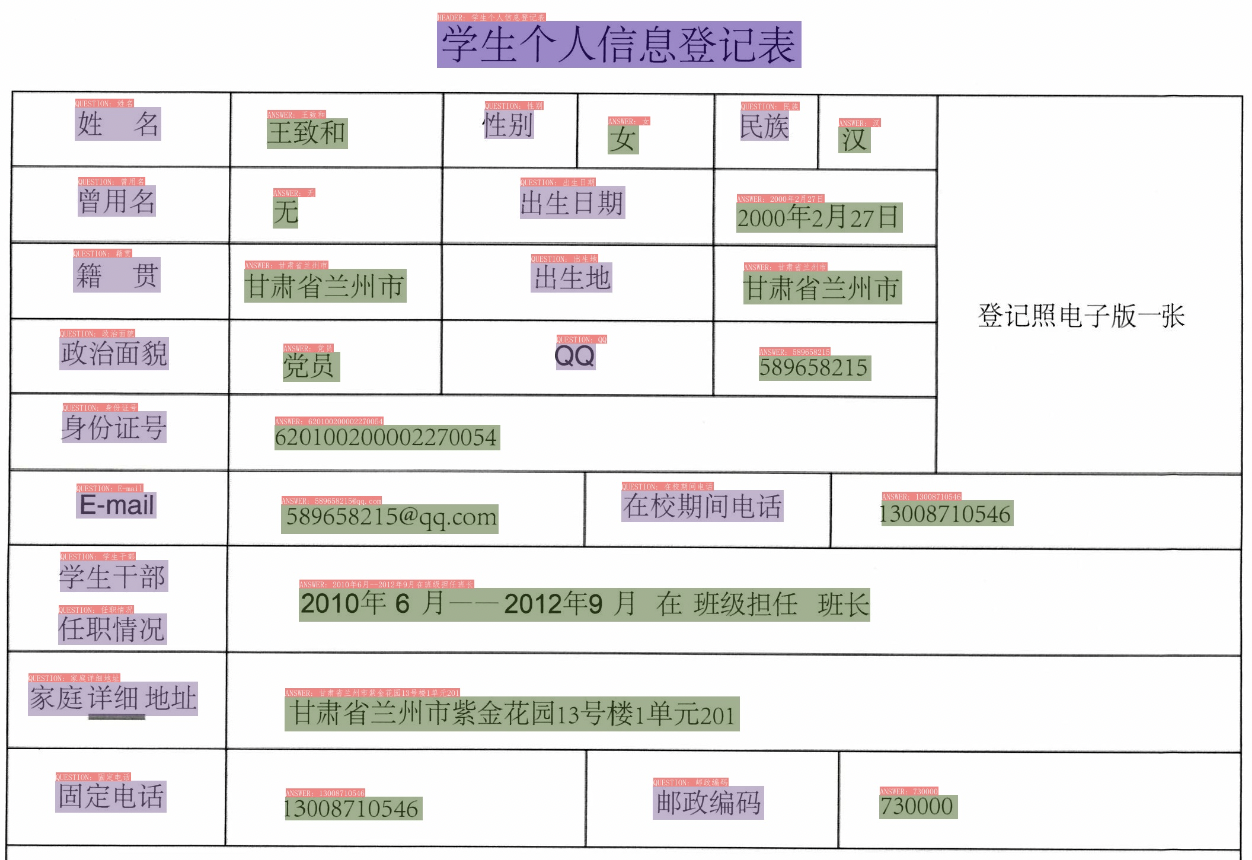

- SER(语义实体识别)

-

+

+

+

+

+

+

+

+

+

- RE(关系提取)

-

+

+

+

+

+

+

+

+

+

diff --git a/doc/doc_ch/algorithm_rec_srn.md b/doc/doc_ch/algorithm_rec_srn.md

index ca7961359eb902fafee959b26d02f324aece233a..dd61a388c7024fabdadec1c120bd3341ed0197cc 100644

--- a/doc/doc_ch/algorithm_rec_srn.md

+++ b/doc/doc_ch/algorithm_rec_srn.md

@@ -78,7 +78,7 @@ python3 tools/export_model.py -c configs/rec/rec_r50_fpn_srn.yml -o Global.pretr

SRN文本识别模型推理,可以执行如下命令:

```

-python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/en/word_1.png" --rec_model_dir="./inference/rec_srn/" --rec_image_shape="1,64,256" --rec_char_type="ch" --rec_algorithm="SRN" --rec_char_dict_path=./ppocr/utils/ic15_dict.txt --use_space_char=False

+python3 tools/infer/predict_rec.py --image_dir="./doc/imgs_words/en/word_1.png" --rec_model_dir="./inference/rec_srn/" --rec_image_shape="1,64,256" --rec_algorithm="SRN" --rec_char_dict_path=./ppocr/utils/ic15_dict.txt --use_space_char=False

```

diff --git a/doc/doc_en/algorithm_en.md b/doc/doc_en/algorithm_en.md

deleted file mode 100644

index c880336b4ad528eab2cce479edf11fce0b43f435..0000000000000000000000000000000000000000

--- a/doc/doc_en/algorithm_en.md

+++ /dev/null

@@ -1,11 +0,0 @@

-# Academic Algorithms and Models

-

-PaddleOCR will add cutting-edge OCR algorithms and models continuously. Check out the supported models and tutorials by clicking the following list:

-

-

-- [text detection algorithms](./algorithm_overview_en.md#11)

-- [text recognition algorithms](./algorithm_overview_en.md#12)

-- [end-to-end algorithms](./algorithm_overview_en.md#2)

-- [table recognition algorithms](./algorithm_overview_en.md#3)

-

-Developers are welcome to contribute more algorithms! Please refer to [add new algorithm](./add_new_algorithm_en.md) guideline.

diff --git a/doc/doc_en/algorithm_overview_en.md b/doc/doc_en/algorithm_overview_en.md

index 3f59bf9c829920fb43fa7f89858b4586ceaac26f..5bf569e3e1649cfabbe196be7e1a55d1caa3bf61 100755

--- a/doc/doc_en/algorithm_overview_en.md

+++ b/doc/doc_en/algorithm_overview_en.md

@@ -7,7 +7,11 @@

- [3. Table Recognition Algorithms](#3)

- [4. Key Information Extraction Algorithms](#4)

-This tutorial lists the OCR algorithms supported by PaddleOCR, as well as the models and metrics of each algorithm on **English public datasets**. It is mainly used for algorithm introduction and algorithm performance comparison. For more models on other datasets including Chinese, please refer to [PP-OCR v2.0 models list](./models_list_en.md).

+This tutorial lists the OCR algorithms supported by PaddleOCR, as well as the models and metrics of each algorithm on **English public datasets**. It is mainly used for algorithm introduction and algorithm performance comparison. For more models on other datasets including Chinese, please refer to [PP-OCRv3 models list](./models_list_en.md).

+

+>>

+Developers are welcome to contribute more algorithms! Please refer to [add new algorithm](./add_new_algorithm_en.md) guideline.

+

diff --git a/doc/features.png b/doc/features.png

deleted file mode 100644

index 273e4beb74771b723ab732f703863fa2a3a4c21c..0000000000000000000000000000000000000000

Binary files a/doc/features.png and /dev/null differ

diff --git a/doc/features_en.png b/doc/features_en.png

deleted file mode 100644

index 310a1b7e50920304521a5fa68c5c2e2a881d3917..0000000000000000000000000000000000000000

Binary files a/doc/features_en.png and /dev/null differ

diff --git a/paddleocr.py b/paddleocr.py

index bada383612725608d19596ea6f331758a8aba53c..54ed6a0222efae8050395172827564d9a357f30d 100644

--- a/paddleocr.py

+++ b/paddleocr.py

@@ -652,8 +652,9 @@ def main():

for index, pdf_img in enumerate(img):

os.makedirs(

os.path.join(args.output, img_name), exist_ok=True)

- pdf_img_path = os.path.join(args.output, img_name, img_name

- + '_' + str(index) + '.jpg')

+ pdf_img_path = os.path.join(

+ args.output, img_name,

+ img_name + '_' + str(index) + '.jpg')

cv2.imwrite(pdf_img_path, pdf_img)

img_paths.append([pdf_img_path, pdf_img])

diff --git a/ppocr/utils/save_load.py b/ppocr/utils/save_load.py

index f86125521d19342f63a9fcb3bdcaed02cc4c6463..aa65f290c0a5f4f13b3103fb4404815e2ae74a88 100644

--- a/ppocr/utils/save_load.py

+++ b/ppocr/utils/save_load.py

@@ -104,8 +104,9 @@ def load_model(config, model, optimizer=None, model_type='det'):

continue

pre_value = params[key]

if pre_value.dtype == paddle.float16:

- pre_value = pre_value.astype(paddle.float32)

is_float16 = True

+ if pre_value.dtype != value.dtype:

+ pre_value = pre_value.astype(value.dtype)

if list(value.shape) == list(pre_value.shape):

new_state_dict[key] = pre_value

else:

@@ -162,8 +163,9 @@ def load_pretrained_params(model, path):

logger.warning("The pretrained params {} not in model".format(k1))

else:

if params[k1].dtype == paddle.float16:

- params[k1] = params[k1].astype(paddle.float32)

is_float16 = True

+ if params[k1].dtype != state_dict[k1].dtype:

+ params[k1] = params[k1].astype(state_dict[k1].dtype)

if list(state_dict[k1].shape) == list(params[k1].shape):

new_state_dict[k1] = params[k1]

else:

diff --git a/ppstructure/README.md b/ppstructure/README.md

index 66df10b2ec4d52fb743c40893d5fc5aa7d6ab5be..fb3697bc1066262833ee20bcbb8f79833f264f14 100644

--- a/ppstructure/README.md

+++ b/ppstructure/README.md

@@ -1,120 +1,115 @@

English | [简体中文](README_ch.md)

- [1. Introduction](#1-introduction)

-- [2. Update log](#2-update-log)

-- [3. Features](#3-features)

-- [4. Results](#4-results)

- - [4.1 Layout analysis and table recognition](#41-layout-analysis-and-table-recognition)

- - [4.2 KIE](#42-kie)

-- [5. Quick start](#5-quick-start)

-- [6. PP-Structure System](#6-pp-structure-system)

- - [6.1 Layout analysis and table recognition](#61-layout-analysis-and-table-recognition)

- - [6.1.1 Layout analysis](#611-layout-analysis)

- - [6.1.2 Table recognition](#612-table-recognition)

- - [6.2 KIE](#62-kie)

-- [7. Model List](#7-model-list)

- - [7.1 Layout analysis model](#71-layout-analysis-model)

- - [7.2 OCR and table recognition model](#72-ocr-and-table-recognition-model)

- - [7.3 KIE model](#73-kie-model)

+- [2. Features](#2-features)

+- [3. Results](#3-results)

+ - [3.1 Layout analysis and table recognition](#31-layout-analysis-and-table-recognition)

+ - [3.2 Layout Recovery](#32-layout-recovery)

+ - [3.3 KIE](#33-kie)

+- [4. Quick start](#4-quick-start)

+- [5. Model List](#5-model-list)

## 1. Introduction

-PP-Structure is an OCR toolkit that can be used for document analysis and processing with complex structures, designed to help developers better complete document understanding tasks

+PP-Structure is an intelligent document analysis system developed by the PaddleOCR team, which aims to help developers better complete tasks related to document understanding such as layout analysis and table recognition.

-## 2. Update log

-* 2022.02.12 KIE add LayoutLMv2 model。

-* 2021.12.07 add [KIE SER and RE tasks](kie/README.md)。

+The pipeline of PP-Structurev2 system is shown below. The document image first passes through the image direction correction module to identify the direction of the entire image and complete the direction correction. Then, two tasks of layout information analysis and key information extraction can be completed.

-## 3. Features

+- In the layout analysis task, the image first goes through the layout analysis model to divide the image into different areas such as text, table, and figure, and then analyze these areas separately. For example, the table area is sent to the form recognition module for structured recognition, and the text area is sent to the OCR engine for text recognition. Finally, the layout recovery module restores it to a word or pdf file with the same layout as the original image;

+- In the key information extraction task, the OCR engine is first used to extract the text content, and then the SER(semantic entity recognition) module obtains the semantic entities in the image, and finally the RE(relationship extraction) module obtains the correspondence between the semantic entities, thereby extracting the required key information.

+

-The main features of PP-Structure are as follows:

+More technical details: 👉 [PP-Structurev2 Technical Report](docs/PP-Structurev2_introduction.md)

-- Support the layout analysis of documents, divide the documents into 5 types of areas **text, title, table, image and list** (conjunction with Layout-Parser)

-- Support to extract the texts from the text, title, picture and list areas (used in conjunction with PP-OCR)

-- Support to extract excel files from the table areas

-- Support python whl package and command line usage, easy to use

-- Support custom training for layout analysis and table structure tasks

-- Support Document Key Information Extraction (KIE) tasks: Semantic Entity Recognition (SER) and Relation Extraction (RE)

+PP-Structurev2 supports independent use or flexible collocation of each module. For example, you can use layout analysis alone or table recognition alone. Click the corresponding link below to get the tutorial for each independent module:

-## 4. Results

+- [Layout Analysis](layout/README.md)

+- [Table Recognition](table/README.md)

+- [Key Information Extraction](kie/README.md)

+- [Layout Recovery](recovery/README.md)

-### 4.1 Layout analysis and table recognition

+## 2. Features

-

-

-The figure shows the pipeline of layout analysis + table recognition. The image is first divided into four areas of image, text, title and table by layout analysis, and then OCR detection and recognition is performed on the three areas of image, text and title, and the table is performed table recognition, where the image will also be stored for use.

-

-### 4.2 KIE

-

-* SER

-*

- |

----|---

-

-Different colored boxes in the figure represent different categories. For xfun dataset, there are three categories: query, answer and header:

+The main features of PP-Structurev2 are as follows:

+- Support layout analysis of documents in the form of images/pdfs, which can be divided into areas such as **text, titles, tables, figures, formulas, etc.**;

+- Support common Chinese and English **table detection** tasks;

+- Support structured table recognition, and output the final result to **Excel file**;

+- Support multimodal-based Key Information Extraction (KIE) tasks - **Semantic Entity Recognition** (SER) and **Relation Extraction (RE);

+- Support **layout recovery**, that is, restore the document in word or pdf format with the same layout as the original image;

+- Support customized training and multiple inference deployment methods such as python whl package quick start;

+- Connect with the semi-automatic data labeling tool PPOCRLabel, which supports the labeling of layout analysis, table recognition, and SER.

-* Dark purple: header

-* Light purple: query

-* Army green: answer

+## 3. Results

-The corresponding category and OCR recognition results are also marked at the top left of the OCR detection box.

+PP-Structurev2 supports the independent use or flexible collocation of each module. For example, layout analysis can be used alone, or table recognition can be used alone. Only the visualization effects of several representative usage methods are shown here.

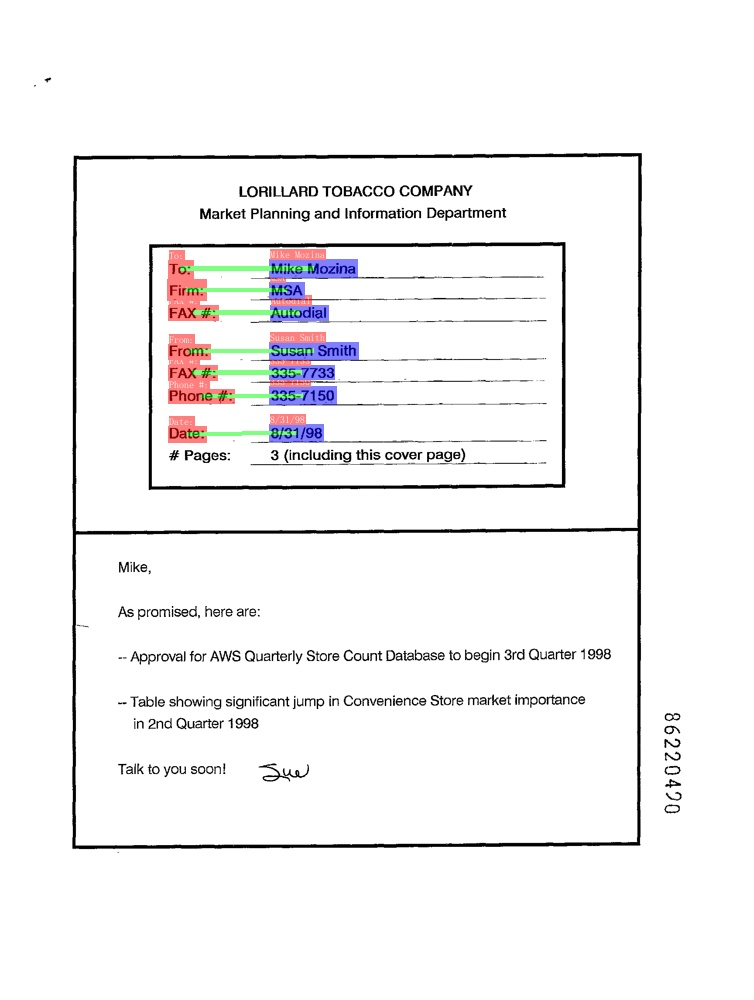

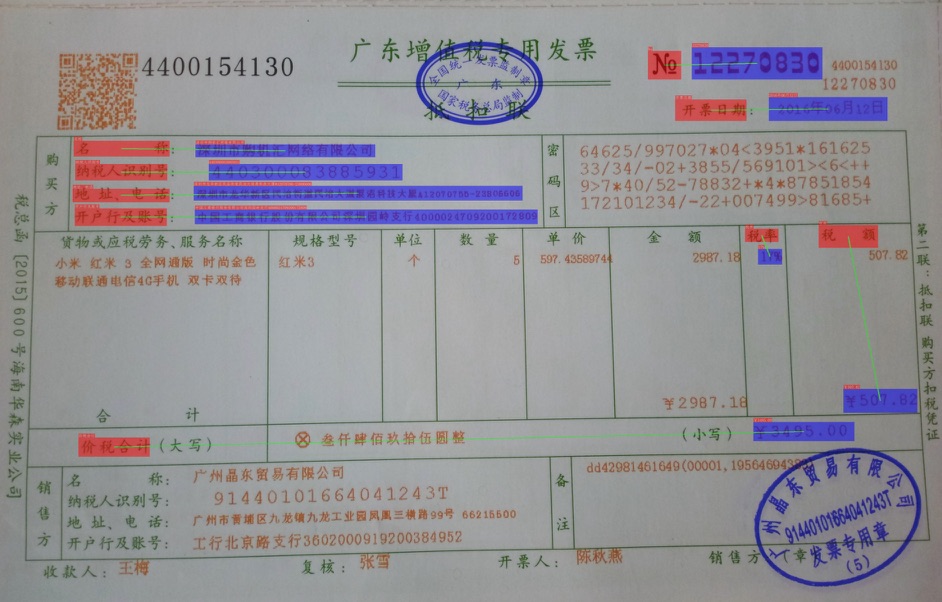

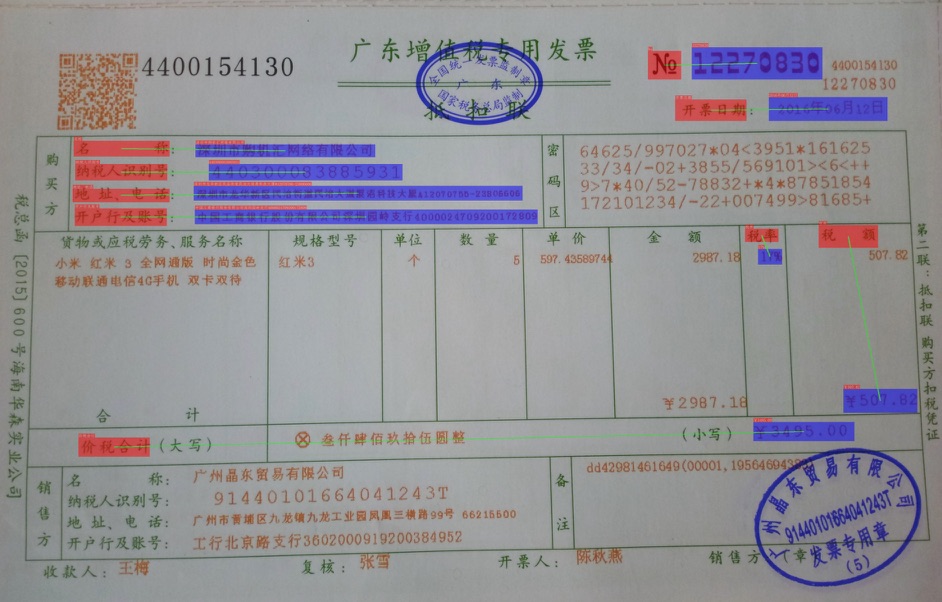

+### 3.1 Layout analysis and table recognition

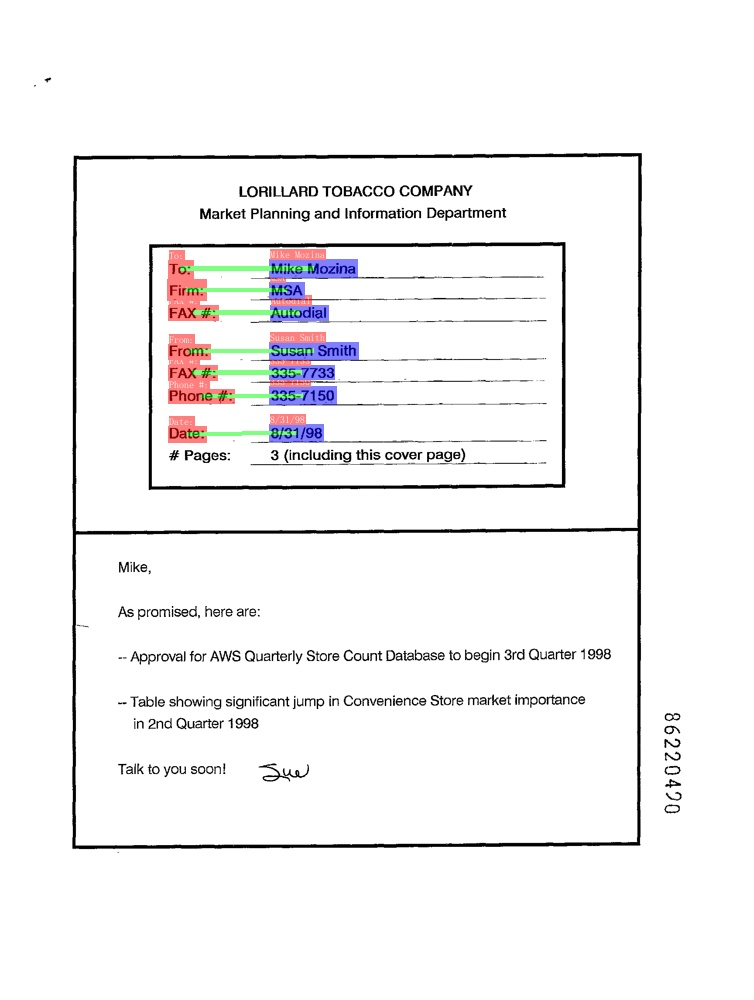

-* RE

-

- |

----|---

+The figure shows the pipeline of layout analysis + table recognition. The image is first divided into four areas of image, text, title and table by layout analysis, and then OCR detection and recognition is performed on the three areas of image, text and title, and the table is performed table recognition, where the image will also be stored for use.

+

+### 3.2 Layout recovery

-In the figure, the red box represents the question, the blue box represents the answer, and the question and answer are connected by green lines. The corresponding category and OCR recognition results are also marked at the top left of the OCR detection box.

+The following figure shows the effect of layout recovery based on the results of layout analysis and table recognition in the previous section.

+

-## 5. Quick start

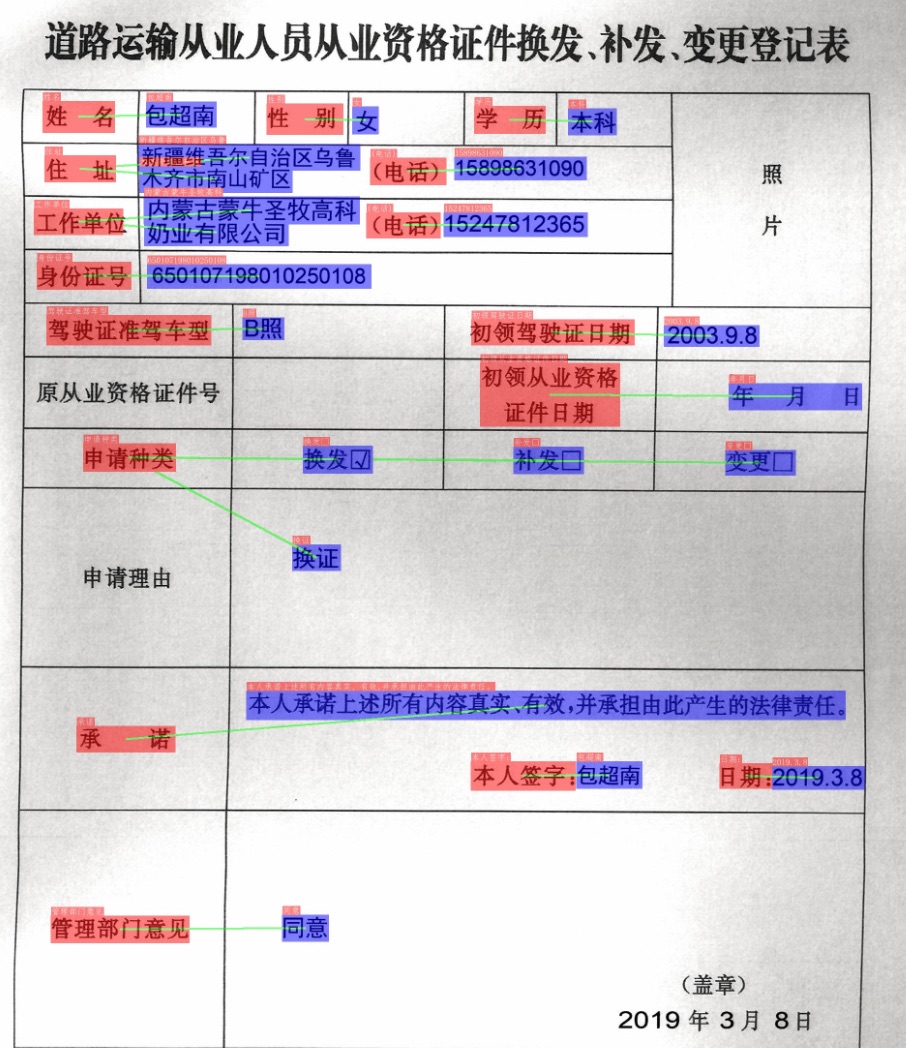

+### 3.3 KIE

-Start from [Quick Installation](./docs/quickstart.md)

+* SER

-## 6. PP-Structure System

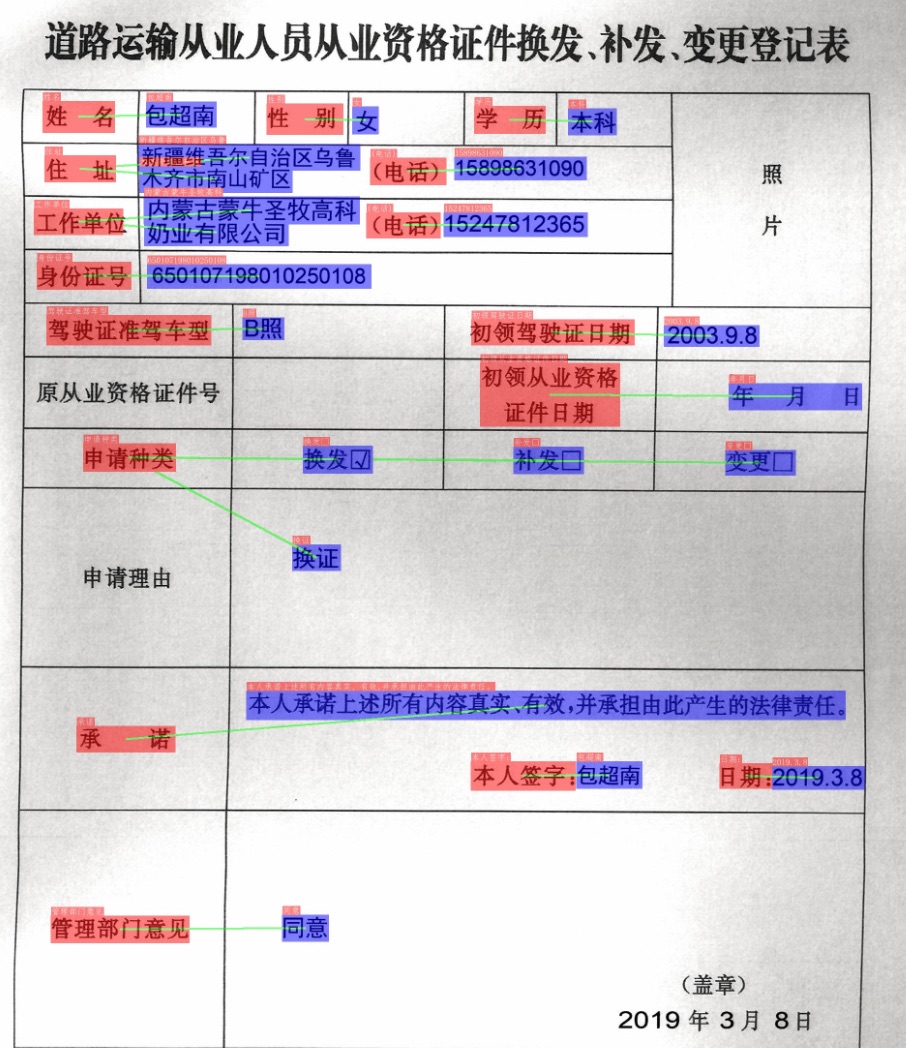

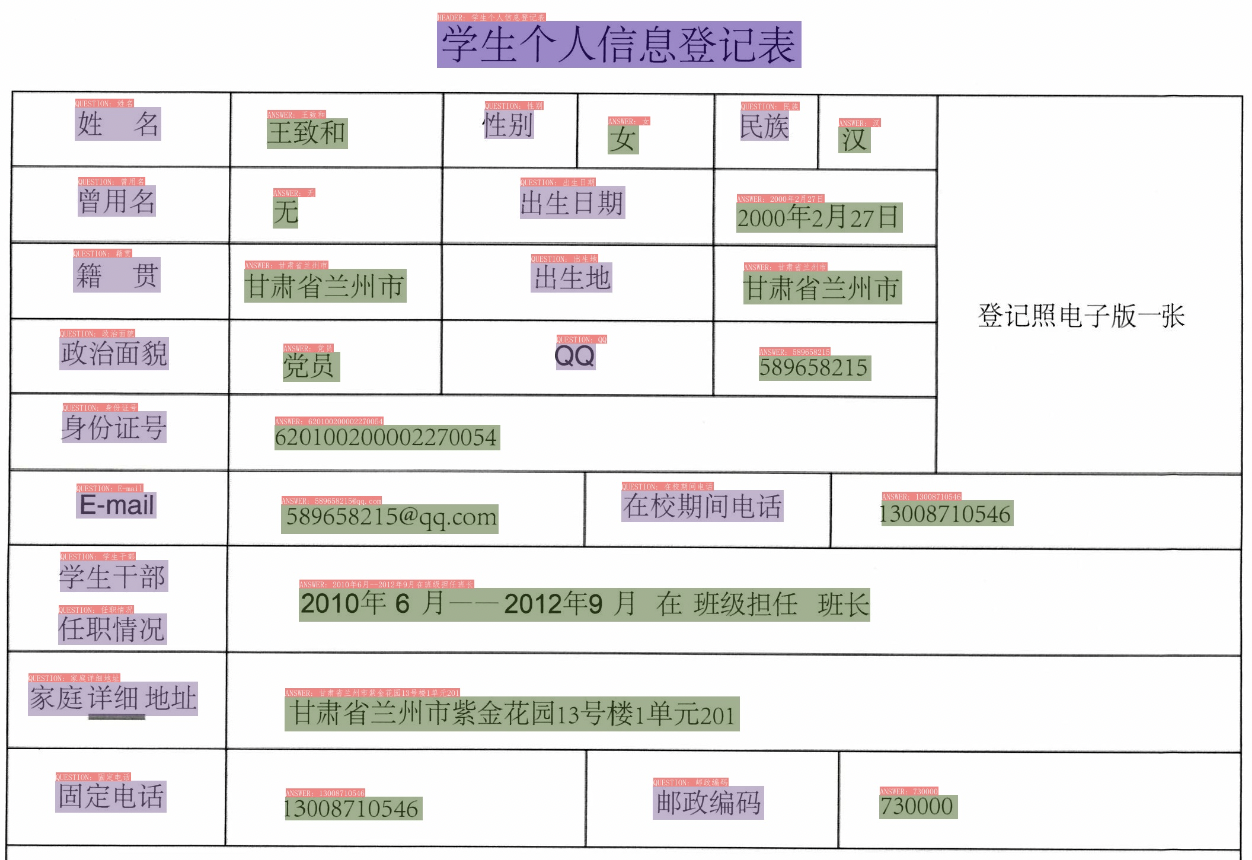

+Different colored boxes in the figure represent different categories.

-### 6.1 Layout analysis and table recognition

+

+

+

-

+

+

+

-In PP-Structure, the image will be divided into 5 types of areas **text, title, image list and table**. For the first 4 types of areas, directly use PP-OCR system to complete the text detection and recognition. For the table area, after the table structuring process, the table in image is converted into an Excel file with the same table style.

+

+

+

-#### 6.1.1 Layout analysis

+

+

+

-Layout analysis classifies image by region, including the use of Python scripts of layout analysis tools, extraction of designated category detection boxes, performance indicators, and custom training layout analysis models. For details, please refer to [document](layout/README.md).

+

+

+

-#### 6.1.2 Table recognition

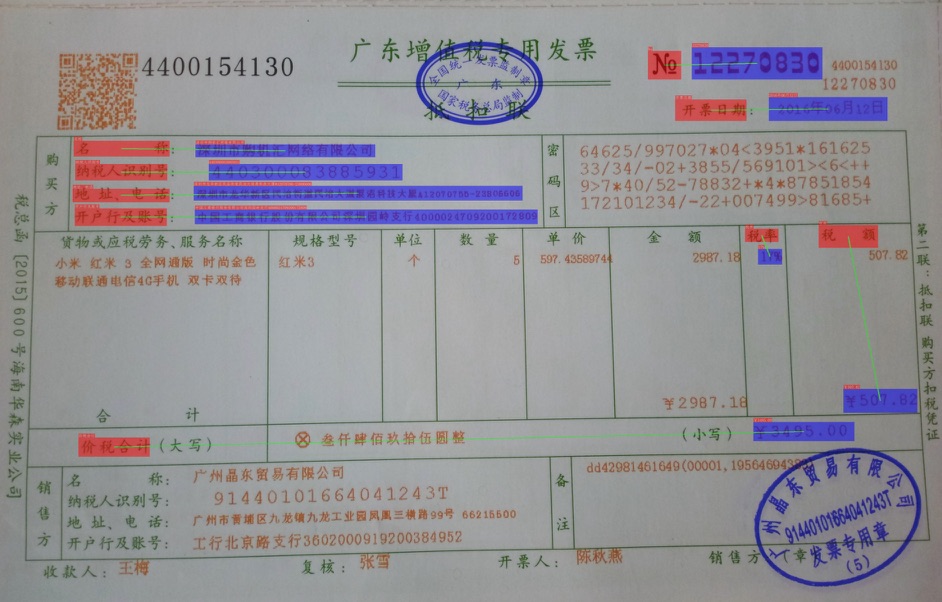

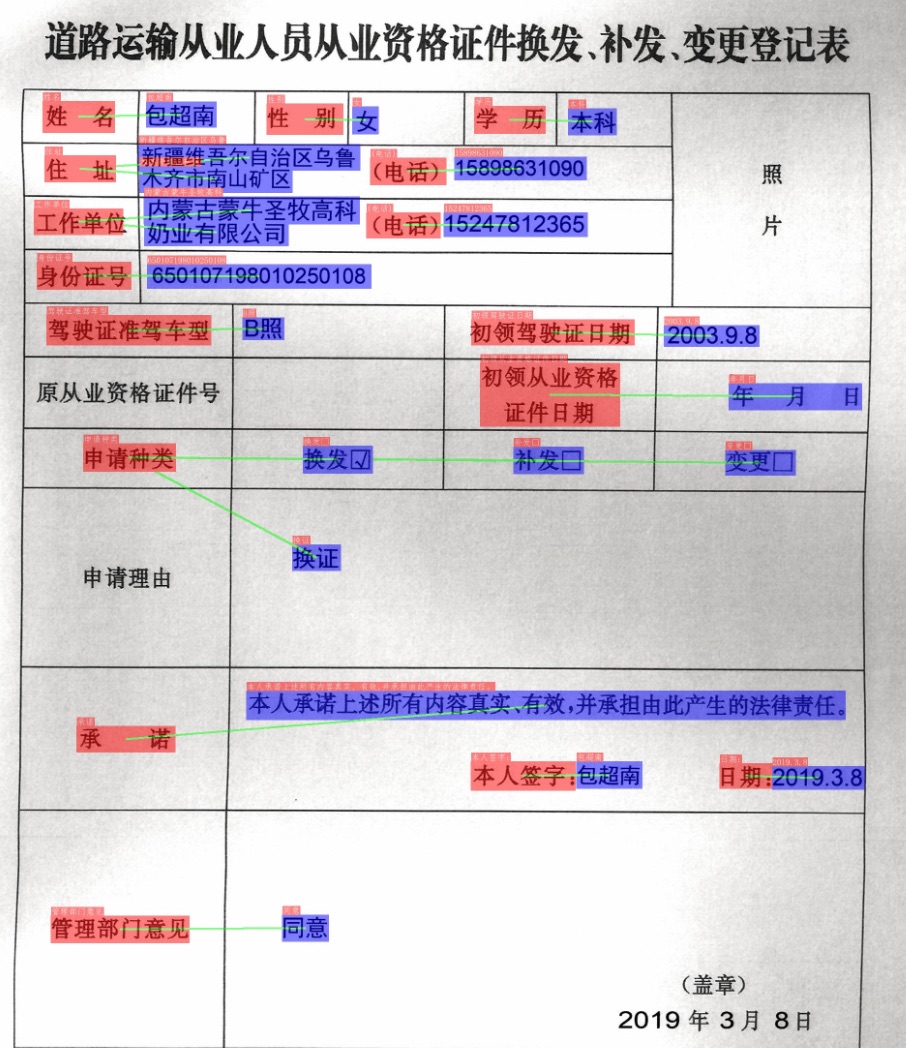

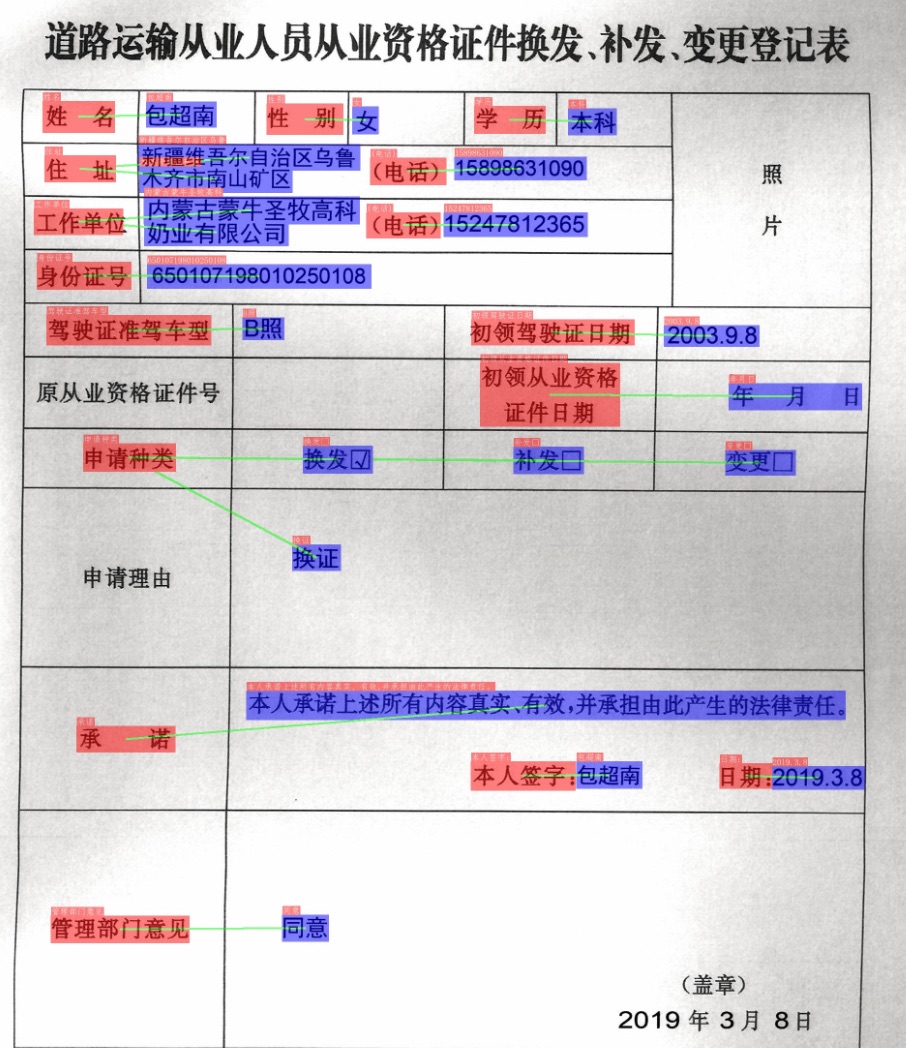

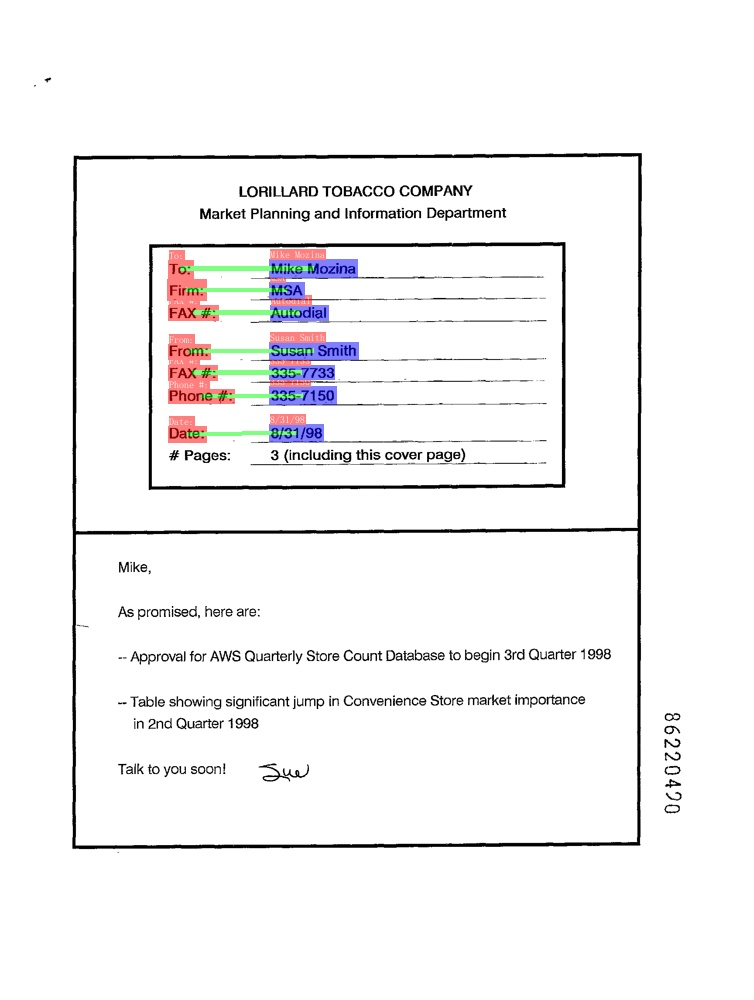

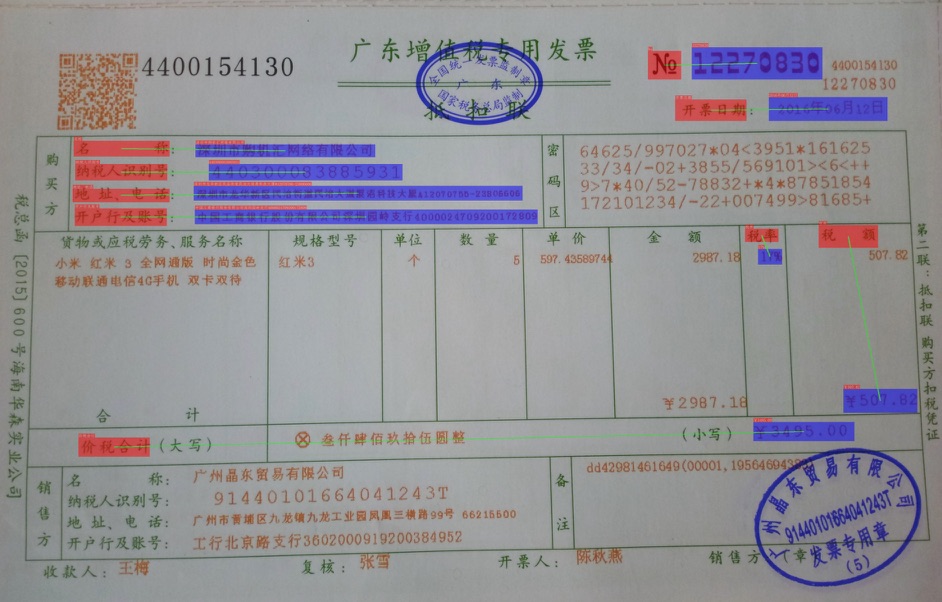

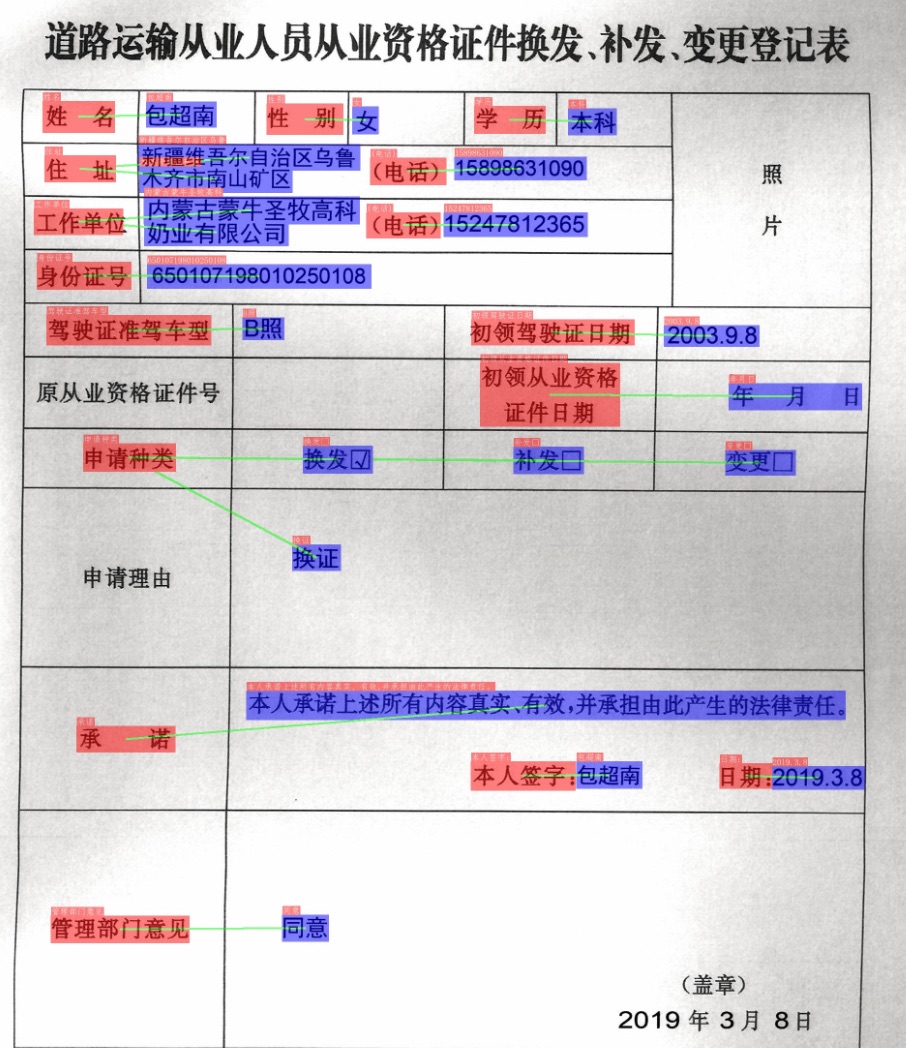

+* RE

-Table recognition converts table images into excel documents, which include the detection and recognition of table text and the prediction of table structure and cell coordinates. For detailed instructions, please refer to [document](table/README.md)

+In the figure, the red box represents `Question`, the blue box represents `Answer`, and `Question` and `Answer` are connected by green lines.

-### 6.2 KIE

+

+

+

-Multi-modal based Key Information Extraction (KIE) methods include Semantic Entity Recognition (SER) and Relation Extraction (RE) tasks. Based on SER task, text recognition and classification in images can be completed. Based on THE RE task, we can extract the relation of the text content in the image, such as judge the problem pair. For details, please refer to [document](kie/README.md)

+

+

+

-## 7. Model List

+

+

+

-PP-Structure Series Model List (Updating)

+

+

+

-### 7.1 Layout analysis model

+## 4. Quick start

-|model name|description|download|label_map|

-| --- | --- | --- |--- |

-| ppyolov2_r50vd_dcn_365e_publaynet | The layout analysis model trained on the PubLayNet dataset can divide image into 5 types of areas **text, title, table, picture, and list** | [PubLayNet](https://paddle-model-ecology.bj.bcebos.com/model/layout-parser/ppyolov2_r50vd_dcn_365e_publaynet.tar) | {0: "Text", 1: "Title", 2: "List", 3:"Table", 4:"Figure"}|

+Start from [Quick Start](./docs/quickstart_en.md).

-### 7.2 OCR and table recognition model

+## 5. Model List

-|model name|description|model size|download|

-| --- | --- | --- | --- |

-|ch_PP-OCRv3_det| [New] Lightweight model, supporting Chinese, English, multilingual text detection | 3.8M |[inference model](https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_infer.tar) / [trained model](https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_det_distill_train.tar)|

-|ch_PP-OCRv3_rec| [New] Lightweight model, supporting Chinese, English, multilingual text recognition | 12.4M |[inference model](https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_infer.tar) / [trained model](https://paddleocr.bj.bcebos.com/PP-OCRv3/chinese/ch_PP-OCRv3_rec_train.tar) |

-|ch_ppstructure_mobile_v2.0_SLANet|Chinese table recognition model based on SLANet|9.3M|[inference model](https://paddleocr.bj.bcebos.com/ppstructure/models/slanet/ch_ppstructure_mobile_v2.0_SLANet_infer.tar) / [trained model](https://paddleocr.bj.bcebos.com/ppstructure/models/slanet/ch_ppstructure_mobile_v2.0_SLANet_train.tar) |

+Some tasks need to use both the structured analysis models and the OCR models. For example, the table recognition task needs to use the table recognition model for structured analysis, and the OCR model to recognize the text in the table. Please select the appropriate models according to your specific needs.

-### 7.3 KIE model

+For structural analysis related model downloads, please refer to:

+- [PP-Structure Model Zoo](./docs/models_list_en.md)

-|model name|description|model size|download|

-| --- | --- | --- | --- |

-|ser_LayoutXLM_xfun_zhd|SER model trained on xfun Chinese dataset based on LayoutXLM|1.4G|[inference model coming soon]() / [trained model](https://paddleocr.bj.bcebos.com/pplayout/ser_LayoutXLM_xfun_zh.tar) |

-|re_LayoutXLM_xfun_zh|RE model trained on xfun Chinese dataset based on LayoutXLM|1.4G|[inference model coming soon]() / [trained model](https://paddleocr.bj.bcebos.com/pplayout/re_LayoutXLM_xfun_zh.tar) |

+For OCR related model downloads, please refer to:

+- [PP-OCR Model Zoo](../doc/doc_en/models_list_en.md)

-If you need to use other models, you can download the model in [PPOCR model_list](../doc/doc_en/models_list_en.md) and [PPStructure model_list](./docs/models_list.md)

diff --git a/ppstructure/README_ch.md b/ppstructure/README_ch.md

index 6539002bfe1497853dfa11eb774cf3c453567988..87a9c625b32c32e9c7fffb8ebc9b9fdf3b2130db 100644

--- a/ppstructure/README_ch.md

+++ b/ppstructure/README_ch.md

@@ -21,7 +21,7 @@ PP-Structurev2系统流程图如下所示,文档图像首先经过图像矫正

- 关键信息抽取任务中,首先使用OCR引擎提取文本内容,然后由语义实体识别模块获取图像中的语义实体,最后经关系抽取模块获取语义实体之间的对应关系,从而提取需要的关键信息。

-更多技术细节:👉 [PP-Structurev2技术报告]()

+更多技术细节:👉 [PP-Structurev2技术报告](docs/PP-Structurev2_introduction.md)

PP-Structurev2支持各个模块独立使用或灵活搭配,如,可以单独使用版面分析,或单独使用表格识别,点击下面相应链接获取各个独立模块的使用教程:

@@ -76,6 +76,14 @@ PP-Structurev2支持各个模块独立使用或灵活搭配,如,可以单独

+

+ +

+ +

+  +

+ +

+

+

+  +

+ +

+

+

+ +

+

+

+ +

+ +

+  +

+

+

+ -The main features of PP-Structure are as follows:

+More technical details: 👉 [PP-Structurev2 Technical Report](docs/PP-Structurev2_introduction.md)

-- Support the layout analysis of documents, divide the documents into 5 types of areas **text, title, table, image and list** (conjunction with Layout-Parser)

-- Support to extract the texts from the text, title, picture and list areas (used in conjunction with PP-OCR)

-- Support to extract excel files from the table areas

-- Support python whl package and command line usage, easy to use

-- Support custom training for layout analysis and table structure tasks

-- Support Document Key Information Extraction (KIE) tasks: Semantic Entity Recognition (SER) and Relation Extraction (RE)

+PP-Structurev2 supports independent use or flexible collocation of each module. For example, you can use layout analysis alone or table recognition alone. Click the corresponding link below to get the tutorial for each independent module:

-## 4. Results

+- [Layout Analysis](layout/README.md)

+- [Table Recognition](table/README.md)

+- [Key Information Extraction](kie/README.md)

+- [Layout Recovery](recovery/README.md)

-### 4.1 Layout analysis and table recognition

+## 2. Features

-

-The main features of PP-Structure are as follows:

+More technical details: 👉 [PP-Structurev2 Technical Report](docs/PP-Structurev2_introduction.md)

-- Support the layout analysis of documents, divide the documents into 5 types of areas **text, title, table, image and list** (conjunction with Layout-Parser)

-- Support to extract the texts from the text, title, picture and list areas (used in conjunction with PP-OCR)

-- Support to extract excel files from the table areas

-- Support python whl package and command line usage, easy to use

-- Support custom training for layout analysis and table structure tasks

-- Support Document Key Information Extraction (KIE) tasks: Semantic Entity Recognition (SER) and Relation Extraction (RE)

+PP-Structurev2 supports independent use or flexible collocation of each module. For example, you can use layout analysis alone or table recognition alone. Click the corresponding link below to get the tutorial for each independent module:

-## 4. Results

+- [Layout Analysis](layout/README.md)

+- [Table Recognition](table/README.md)

+- [Key Information Extraction](kie/README.md)

+- [Layout Recovery](recovery/README.md)

-### 4.1 Layout analysis and table recognition

+## 2. Features

- -## 5. Quick start

+### 3.3 KIE

-Start from [Quick Installation](./docs/quickstart.md)

+* SER

-## 6. PP-Structure System

+Different colored boxes in the figure represent different categories.

-### 6.1 Layout analysis and table recognition

+

-## 5. Quick start

+### 3.3 KIE

-Start from [Quick Installation](./docs/quickstart.md)

+* SER

-## 6. PP-Structure System

+Different colored boxes in the figure represent different categories.

-### 6.1 Layout analysis and table recognition

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ -更多技术细节:👉 [PP-Structurev2技术报告]()

+更多技术细节:👉 [PP-Structurev2技术报告](docs/PP-Structurev2_introduction.md)

PP-Structurev2支持各个模块独立使用或灵活搭配,如,可以单独使用版面分析,或单独使用表格识别,点击下面相应链接获取各个独立模块的使用教程:

@@ -76,6 +76,14 @@ PP-Structurev2支持各个模块独立使用或灵活搭配,如,可以单独

-更多技术细节:👉 [PP-Structurev2技术报告]()

+更多技术细节:👉 [PP-Structurev2技术报告](docs/PP-Structurev2_introduction.md)

PP-Structurev2支持各个模块独立使用或灵活搭配,如,可以单独使用版面分析,或单独使用表格识别,点击下面相应链接获取各个独立模块的使用教程:

@@ -76,6 +76,14 @@ PP-Structurev2支持各个模块独立使用或灵活搭配,如,可以单独

+

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+ +

+