Merge branch 'dygraph' of https://github.com/PaddlePaddle/PaddleOCR into update_requirements

The source diff is too large to display. You can view the blob instead.

configs/e2e/e2e_r50_vd_pg.yml

0 → 100644

File moved

deploy/pdserving/README.md

0 → 100644

deploy/pdserving/README_CN.md

0 → 100644

deploy/pdserving/__init__.py

0 → 100644

deploy/pdserving/config.yml

0 → 100644

26.2 KB

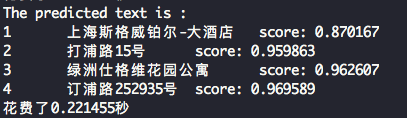

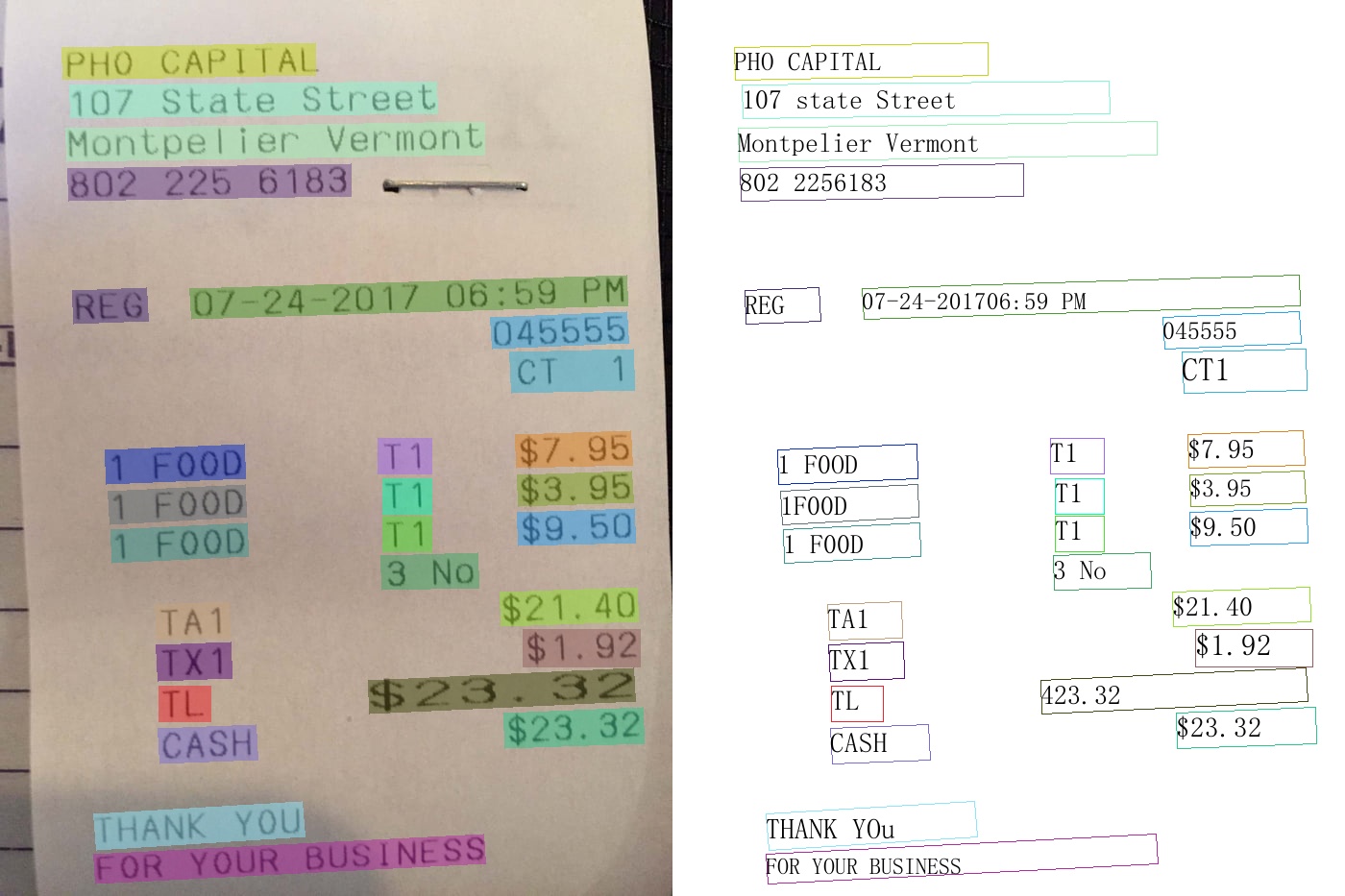

deploy/pdserving/imgs/results.png

0 → 100644

119.4 KB

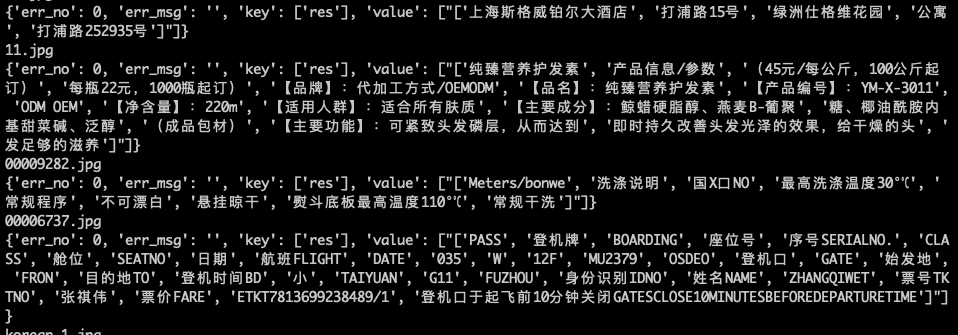

194.6 KB

deploy/pdserving/ocr_reader.py

0 → 100644

deploy/pdserving/web_service.py

0 → 100644

deploy/slim/prune/README.md

0 → 100644

deploy/slim/prune/README_en.md

0 → 100644

doc/doc_ch/multi_languages.md

0 → 100644

doc/doc_ch/pgnet.md

0 → 100644

doc/doc_en/multi_languages_en.md

0 → 100644

doc/doc_en/pgnet_en.md

0 → 100644

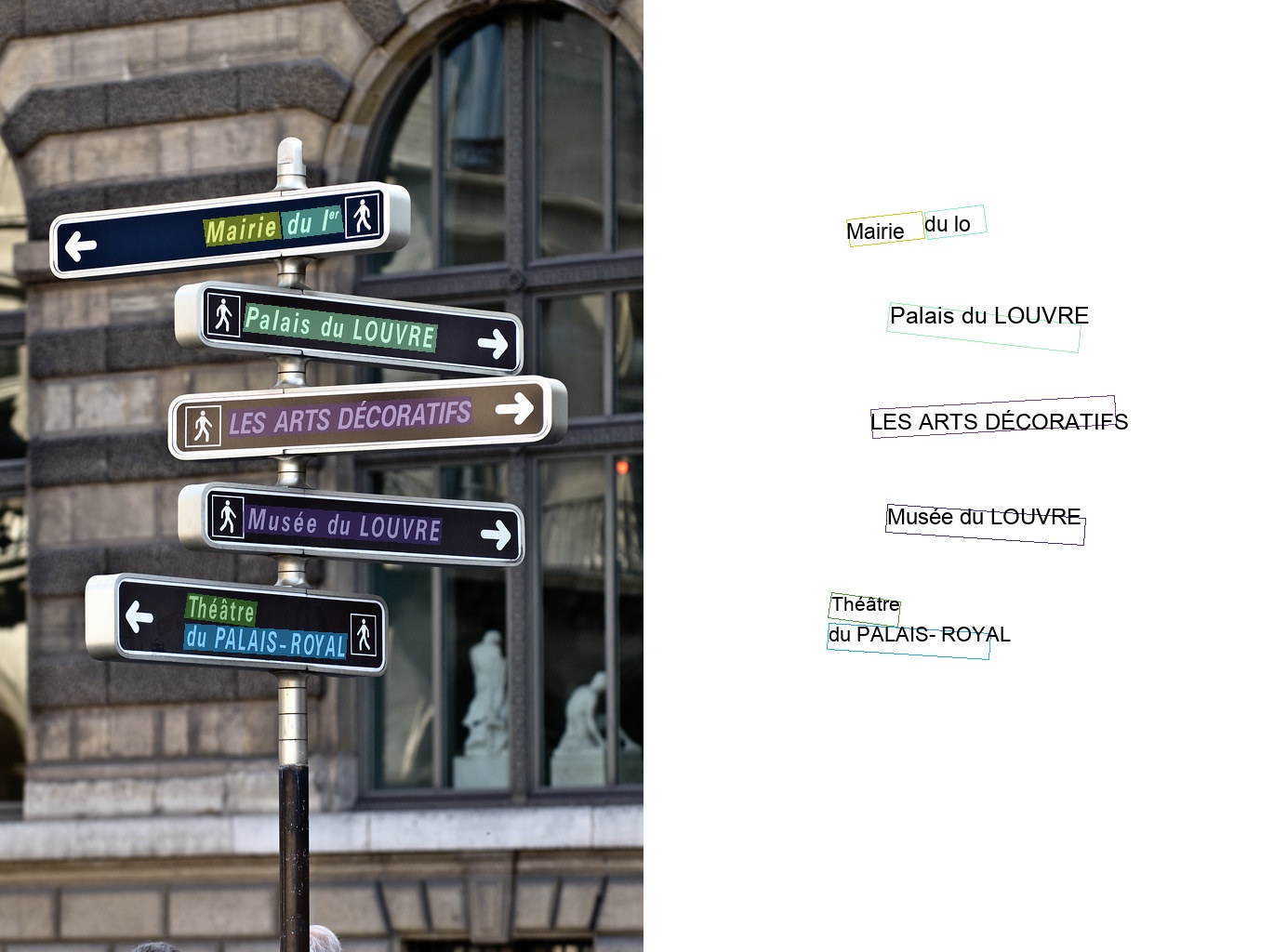

60.6 KB

662.8 KB

466.8 KB

133.6 KB

337.2 KB

533.8 KB

558.2 KB

231.7 KB

249.3 KB

460.7 KB

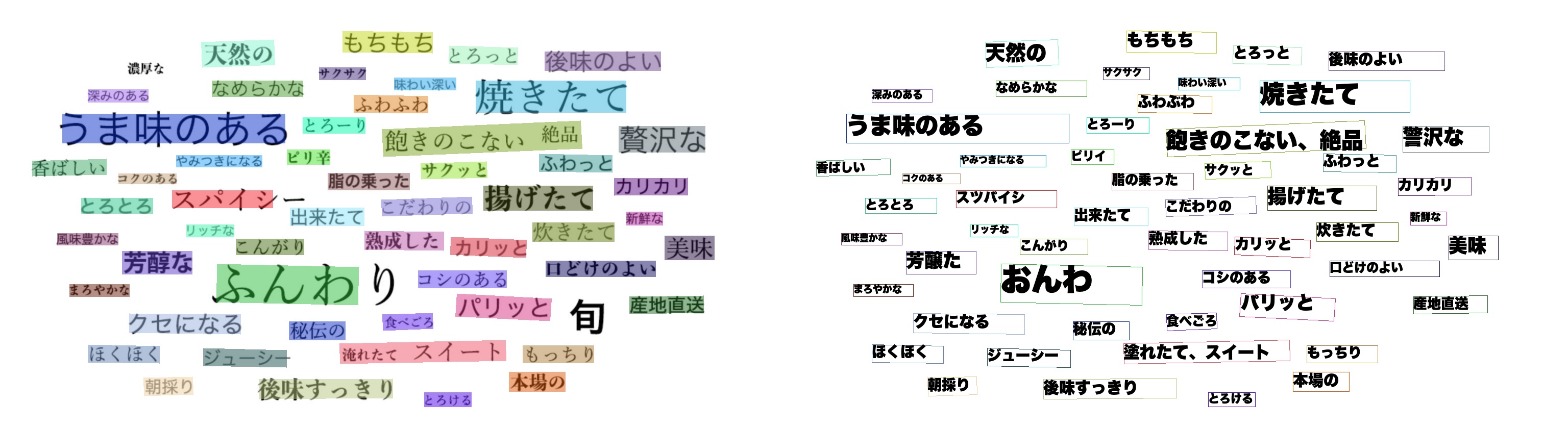

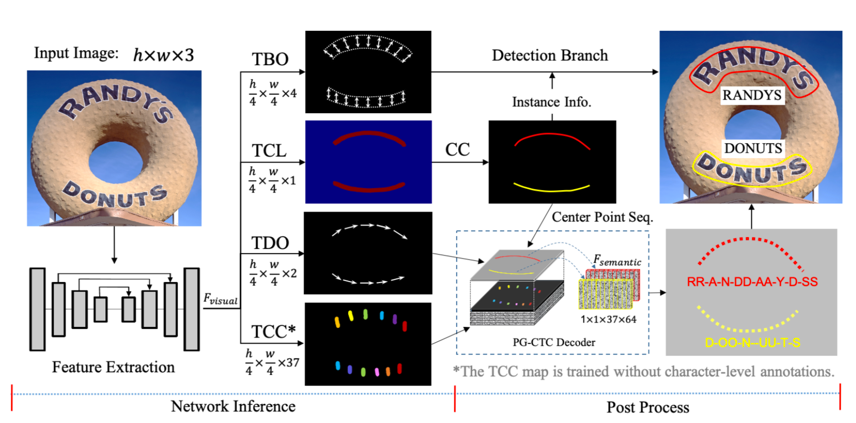

doc/pgnet_framework.png

0 → 100644

241.7 KB

ppocr/data/imaug/pg_process.py

0 → 100644

ppocr/data/pgnet_dataset.py

0 → 100644

ppocr/losses/e2e_pg_loss.py

0 → 100644

ppocr/metrics/e2e_metric.py

0 → 100644

ppocr/modeling/necks/pg_fpn.py

0 → 100644

ppocr/utils/dict/arabic_dict.txt

0 → 100644

ppocr/utils/dict/latin_dict.txt

0 → 100644

ppocr/utils/e2e_metric/Deteval.py

0 → 100755

ppocr/utils/e2e_utils/visual.py

0 → 100644

ppocr/utils/en_dict.txt

0 → 100644

    @@ -7,4 +7,5 @@ opencv-python==4.2.0.32
     tqdm
     numpy
     visualdl
    -python-Levenshtein
    \ No newline at end of file
    +python-Levenshtein
    +opencv-contrib-python
    \ No newline at end of file

tools/infer/predict_e2e.py

0 → 100755

tools/infer_e2e.py

0 → 100755