diff --git a/modules/image/Image_gan/gan/photopen/README.md b/modules/image/Image_gan/gan/photopen/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..73c80f9ad381b2adaeb7ab28d95c702b6cc55102

--- /dev/null

+++ b/modules/image/Image_gan/gan/photopen/README.md

@@ -0,0 +1,126 @@

+# photopen

+

+|模型名称|photopen|

+| :--- | :---: |

+|类别|图像 - 图像生成|

+|网络|SPADEGenerator|

+|数据集|coco_stuff|

+|是否支持Fine-tuning|否|

+|模型大小|74MB|

+|最新更新日期|2021-12-14|

+|数据指标|-|

+

+

+## 一、模型基本信息

+

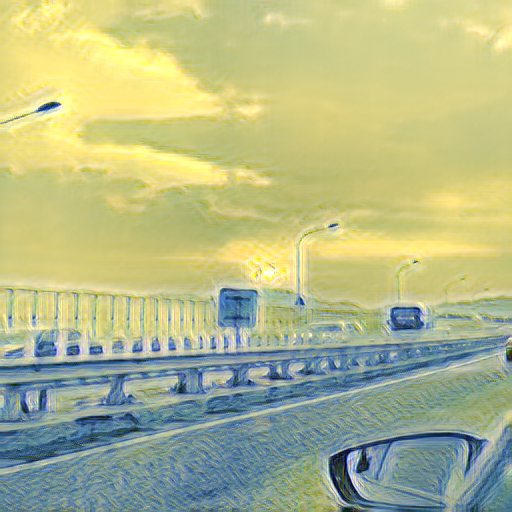

+- ### 应用效果展示

+ - 样例结果示例:

+

+  +

+

+

+- ### 模型介绍

+

+ - 本模块采用一个像素风格迁移网络 Pix2PixHD,能够根据输入的语义分割标签生成照片风格的图片。为了解决模型归一化层导致标签语义信息丢失的问题,向 Pix2PixHD 的生成器网络中添加了 SPADE(Spatially-Adaptive

+ Normalization)空间自适应归一化模块,通过两个卷积层保留了归一化时训练的缩放与偏置参数的空间维度,以增强生成图片的质量。语义风格标签图像可以参考[coco_stuff数据集](https://github.com/nightrome/cocostuff)获取, 也可以通过[PaddleGAN repo中的该项目](https://github.com/PaddlePaddle/PaddleGAN/blob/87537ad9d4eeda17eaa5916c6a585534ab989ea8/docs/zh_CN/tutorials/photopen.md)来自定义生成图像进行体验。

+

+

+

+## 二、安装

+

+- ### 1、环境依赖

+ - ppgan

+

+- ### 2、安装

+

+ - ```shell

+ $ hub install photopen

+ ```

+ - 如您安装时遇到问题,可参考:[零基础windows安装](../../../../docs/docs_ch/get_start/windows_quickstart.md)

+ | [零基础Linux安装](../../../../docs/docs_ch/get_start/linux_quickstart.md) | [零基础MacOS安装](../../../../docs/docs_ch/get_start/mac_quickstart.md)

+

+## 三、模型API预测

+

+- ### 1、命令行预测

+

+ - ```shell

+ # Read from a file

+ $ hub run photopen --input_path "/PATH/TO/IMAGE"

+ ```

+ - 通过命令行方式实现图像生成模型的调用,更多请见 [PaddleHub命令行指令](../../../../docs/docs_ch/tutorial/cmd_usage.rst)

+

+- ### 2、预测代码示例

+

+ - ```python

+ import paddlehub as hub

+

+ module = hub.Module(name="photopen")

+ input_path = ["/PATH/TO/IMAGE"]

+ # Read from a file

+ module.photo_transfer(paths=input_path, output_dir='./transfer_result/', use_gpu=True)

+ ```

+

+- ### 3、API

+

+ - ```python

+ photo_transfer(images=None, paths=None, output_dir='./transfer_result/', use_gpu=False, visualization=True):

+ ```

+ - 图像转换生成API。

+

+ - **参数**

+

+ - images (list\[numpy.ndarray\]): 图片数据,ndarray.shape 为 \[H, W, C\];

+ - paths (list\[str\]): 图片的路径;

+ - output\_dir (str): 结果保存的路径;

+ - use\_gpu (bool): 是否使用 GPU;

+ - visualization(bool): 是否保存结果到本地文件夹

+

+

+## 四、服务部署

+

+- PaddleHub Serving可以部署一个在线图像转换生成服务。

+

+- ### 第一步:启动PaddleHub Serving

+

+ - 运行启动命令:

+ - ```shell

+ $ hub serving start -m photopen

+ ```

+

+ - 这样就完成了一个图像转换生成的在线服务API的部署,默认端口号为8866。

+

+ - **NOTE:** 如使用GPU预测,则需要在启动服务之前,请设置CUDA\_VISIBLE\_DEVICES环境变量,否则不用设置。

+

+- ### 第二步:发送预测请求

+

+ - 配置好服务端,以下数行代码即可实现发送预测请求,获取预测结果

+

+ - ```python

+ import requests

+ import json

+ import cv2

+ import base64

+

+

+ def cv2_to_base64(image):

+ data = cv2.imencode('.jpg', image)[1]

+ return base64.b64encode(data.tostring()).decode('utf8')

+

+ # 发送HTTP请求

+ data = {'images':[cv2_to_base64(cv2.imread("/PATH/TO/IMAGE"))]}

+ headers = {"Content-type": "application/json"}

+ url = "http://127.0.0.1:8866/predict/photopen"

+ r = requests.post(url=url, headers=headers, data=json.dumps(data))

+

+ # 打印预测结果

+ print(r.json()["results"])

+

+## 五、更新历史

+

+* 1.0.0

+

+ 初始发布

+

+ - ```shell

+ $ hub install photopen==1.0.0

+ ```

diff --git a/modules/image/Image_gan/gan/photopen/model.py b/modules/image/Image_gan/gan/photopen/model.py

new file mode 100644

index 0000000000000000000000000000000000000000..4a0b0a4836b010ca4d72995c8857a8bb0ddd7aa2

--- /dev/null

+++ b/modules/image/Image_gan/gan/photopen/model.py

@@ -0,0 +1,62 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserve.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import os

+

+import cv2

+import numpy as np

+import paddle

+from PIL import Image

+from PIL import ImageOps

+from ppgan.models.generators import SPADEGenerator

+from ppgan.utils.filesystem import load

+from ppgan.utils.photopen import data_onehot_pro

+

+

+class PhotoPenPredictor:

+ def __init__(self, weight_path, gen_cfg):

+

+ # 初始化模型

+ gen = SPADEGenerator(

+ gen_cfg.ngf,

+ gen_cfg.num_upsampling_layers,

+ gen_cfg.crop_size,

+ gen_cfg.aspect_ratio,

+ gen_cfg.norm_G,

+ gen_cfg.semantic_nc,

+ gen_cfg.use_vae,

+ gen_cfg.nef,

+ )

+ gen.eval()

+ para = load(weight_path)

+ if 'net_gen' in para:

+ gen.set_state_dict(para['net_gen'])

+ else:

+ gen.set_state_dict(para)

+

+ self.gen = gen

+ self.gen_cfg = gen_cfg

+

+ def run(self, image):

+ sem = Image.fromarray(image).convert('L')

+ sem = sem.resize((self.gen_cfg.crop_size, self.gen_cfg.crop_size), Image.NEAREST)

+ sem = np.array(sem).astype('float32')

+ sem = paddle.to_tensor(sem)

+ sem = sem.reshape([1, 1, self.gen_cfg.crop_size, self.gen_cfg.crop_size])

+

+ one_hot = data_onehot_pro(sem, self.gen_cfg)

+ predicted = self.gen(one_hot)

+ pic = predicted.numpy()[0].reshape((3, 256, 256)).transpose((1, 2, 0))

+ pic = ((pic + 1.) / 2. * 255).astype('uint8')

+

+ return pic

diff --git a/modules/image/Image_gan/gan/photopen/module.py b/modules/image/Image_gan/gan/photopen/module.py

new file mode 100644

index 0000000000000000000000000000000000000000..f8a23e574c9823c52daf2e07a318e344b8220b70

--- /dev/null

+++ b/modules/image/Image_gan/gan/photopen/module.py

@@ -0,0 +1,133 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import argparse

+import copy

+import os

+

+import cv2

+import numpy as np

+import paddle

+from ppgan.utils.config import get_config

+from skimage.io import imread

+from skimage.transform import rescale

+from skimage.transform import resize

+

+import paddlehub as hub

+from .model import PhotoPenPredictor

+from .util import base64_to_cv2

+from paddlehub.module.module import moduleinfo

+from paddlehub.module.module import runnable

+from paddlehub.module.module import serving

+

+

+@moduleinfo(

+ name="photopen", type="CV/style_transfer", author="paddlepaddle", author_email="", summary="", version="1.0.0")

+class Photopen:

+ def __init__(self):

+ self.pretrained_model = os.path.join(self.directory, "photopen.pdparams")

+ cfg = get_config(os.path.join(self.directory, "photopen.yaml"))

+ self.network = PhotoPenPredictor(weight_path=self.pretrained_model, gen_cfg=cfg.predict)

+

+ def photo_transfer(self,

+ images: list = None,

+ paths: list = None,

+ output_dir: str = './transfer_result/',

+ use_gpu: bool = False,

+ visualization: bool = True):

+ '''

+ images (list[numpy.ndarray]): data of images, shape of each is [H, W, C], color space must be BGR(read by cv2).

+ paths (list[str]): paths to images

+

+ output_dir (str): the dir to save the results

+ use_gpu (bool): if True, use gpu to perform the computation, otherwise cpu.

+ visualization (bool): if True, save results in output_dir.

+ '''

+ results = []

+ paddle.disable_static()

+ place = 'gpu:0' if use_gpu else 'cpu'

+ place = paddle.set_device(place)

+ if images == None and paths == None:

+ print('No image provided. Please input an image or a image path.')

+ return

+

+ if images != None:

+ for image in images:

+ image = image[:, :, ::-1]

+ out = self.network.run(image)

+ results.append(out)

+

+ if paths != None:

+ for path in paths:

+ image = cv2.imread(path)[:, :, ::-1]

+ out = self.network.run(image)

+ results.append(out)

+

+ if visualization == True:

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir, exist_ok=True)

+ for i, out in enumerate(results):

+ if out is not None:

+ cv2.imwrite(os.path.join(output_dir, 'output_{}.png'.format(i)), out[:, :, ::-1])

+

+ return results

+

+ @runnable

+ def run_cmd(self, argvs: list):

+ """

+ Run as a command.

+ """

+ self.parser = argparse.ArgumentParser(

+ description="Run the {} module.".format(self.name),

+ prog='hub run {}'.format(self.name),

+ usage='%(prog)s',

+ add_help=True)

+

+ self.arg_input_group = self.parser.add_argument_group(title="Input options", description="Input data. Required")

+ self.arg_config_group = self.parser.add_argument_group(

+ title="Config options", description="Run configuration for controlling module behavior, not required.")

+ self.add_module_config_arg()

+ self.add_module_input_arg()

+ self.args = self.parser.parse_args(argvs)

+ results = self.photo_transfer(

+ paths=[self.args.input_path],

+ output_dir=self.args.output_dir,

+ use_gpu=self.args.use_gpu,

+ visualization=self.args.visualization)

+ return results

+

+ @serving

+ def serving_method(self, images, **kwargs):

+ """

+ Run as a service.

+ """

+ images_decode = [base64_to_cv2(image) for image in images]

+ results = self.photo_transfer(images=images_decode, **kwargs)

+ tolist = [result.tolist() for result in results]

+ return tolist

+

+ def add_module_config_arg(self):

+ """

+ Add the command config options.

+ """

+ self.arg_config_group.add_argument('--use_gpu', action='store_true', help="use GPU or not")

+

+ self.arg_config_group.add_argument(

+ '--output_dir', type=str, default='transfer_result', help='output directory for saving result.')

+ self.arg_config_group.add_argument('--visualization', type=bool, default=False, help='save results or not.')

+

+ def add_module_input_arg(self):

+ """

+ Add the command input options.

+ """

+ self.arg_input_group.add_argument('--input_path', type=str, help="path to input image.")

diff --git a/modules/image/Image_gan/gan/photopen/photopen.yaml b/modules/image/Image_gan/gan/photopen/photopen.yaml

new file mode 100644

index 0000000000000000000000000000000000000000..178f361736c06f1f816997dc4a52a9a6bd62bcc9

--- /dev/null

+++ b/modules/image/Image_gan/gan/photopen/photopen.yaml

@@ -0,0 +1,95 @@

+total_iters: 1

+output_dir: output_dir

+checkpoints_dir: checkpoints

+

+model:

+ name: PhotoPenModel

+ generator:

+ name: SPADEGenerator

+ ngf: 24

+ num_upsampling_layers: normal

+ crop_size: 256

+ aspect_ratio: 1.0

+ norm_G: spectralspadebatch3x3

+ semantic_nc: 14

+ use_vae: False

+ nef: 16

+ discriminator:

+ name: MultiscaleDiscriminator

+ ndf: 128

+ num_D: 4

+ crop_size: 256

+ label_nc: 12

+ output_nc: 3

+ contain_dontcare_label: True

+ no_instance: False

+ n_layers_D: 6

+ criterion:

+ name: PhotoPenPerceptualLoss

+ crop_size: 224

+ lambda_vgg: 1.6

+ label_nc: 12

+ contain_dontcare_label: True

+ batchSize: 1

+ crop_size: 256

+ lambda_feat: 10.0

+

+dataset:

+ train:

+ name: PhotoPenDataset

+ content_root: test/coco_stuff

+ load_size: 286

+ crop_size: 256

+ num_workers: 0

+ batch_size: 1

+ test:

+ name: PhotoPenDataset_test

+ content_root: test/coco_stuff

+ load_size: 286

+ crop_size: 256

+ num_workers: 0

+ batch_size: 1

+

+lr_scheduler: # abundoned

+ name: LinearDecay

+ learning_rate: 0.0001

+ start_epoch: 99999

+ decay_epochs: 99999

+ # will get from real dataset

+ iters_per_epoch: 1

+

+optimizer:

+ lr: 0.0001

+ optimG:

+ name: Adam

+ net_names:

+ - net_gen

+ beta1: 0.9

+ beta2: 0.999

+ optimD:

+ name: Adam

+ net_names:

+ - net_des

+ beta1: 0.9

+ beta2: 0.999

+

+log_config:

+ interval: 1

+ visiual_interval: 1

+

+snapshot_config:

+ interval: 1

+

+predict:

+ name: SPADEGenerator

+ ngf: 24

+ num_upsampling_layers: normal

+ crop_size: 256

+ aspect_ratio: 1.0

+ norm_G: spectralspadebatch3x3

+ semantic_nc: 14

+ use_vae: False

+ nef: 16

+ contain_dontcare_label: True

+ label_nc: 12

+ batchSize: 1

diff --git a/modules/image/Image_gan/gan/photopen/requirements.txt b/modules/image/Image_gan/gan/photopen/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..67e9bb6fa840355e9ed0d44b7134850f1fe22fe1

--- /dev/null

+++ b/modules/image/Image_gan/gan/photopen/requirements.txt

@@ -0,0 +1 @@

+ppgan

diff --git a/modules/image/Image_gan/gan/photopen/util.py b/modules/image/Image_gan/gan/photopen/util.py

new file mode 100644

index 0000000000000000000000000000000000000000..531a0ae0d487822a870ba7f09817e658967aff10

--- /dev/null

+++ b/modules/image/Image_gan/gan/photopen/util.py

@@ -0,0 +1,11 @@

+import base64

+

+import cv2

+import numpy as np

+

+

+def base64_to_cv2(b64str):

+ data = base64.b64decode(b64str.encode('utf8'))

+ data = np.fromstring(data, np.uint8)

+ data = cv2.imdecode(data, cv2.IMREAD_COLOR)

+ return data

diff --git a/modules/image/Image_gan/style_transfer/face_parse/README.md b/modules/image/Image_gan/style_transfer/face_parse/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..8d9716150c156912c42eebe67bf0cd38db9f2bcd

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/face_parse/README.md

@@ -0,0 +1,133 @@

+# face_parse

+

+|模型名称|face_parse|

+| :--- | :---: |

+|类别|图像 - 人脸解析|

+|网络|BiSeNet|

+|数据集|COCO-Stuff|

+|是否支持Fine-tuning|否|

+|模型大小|77MB|

+|最新更新日期|2021-12-07|

+|数据指标|-|

+

+

+## 一、模型基本信息

+

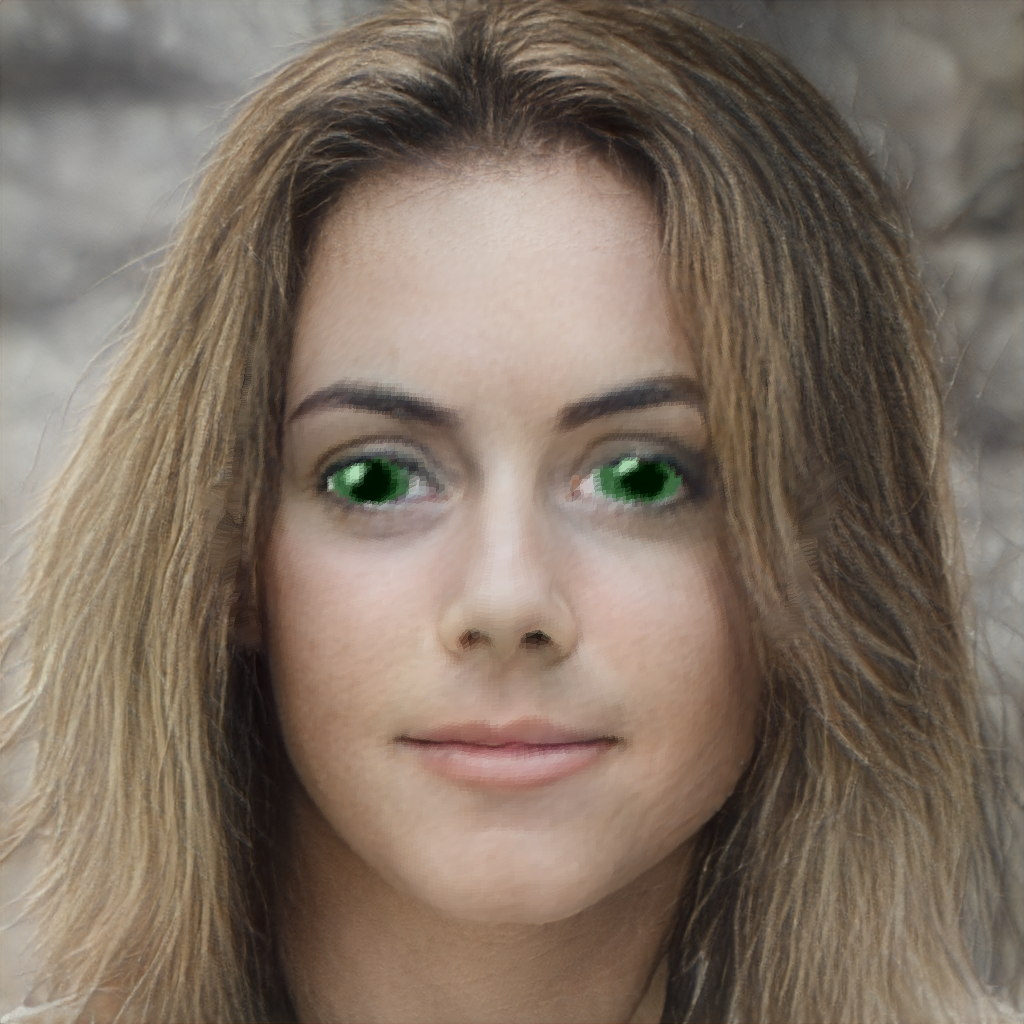

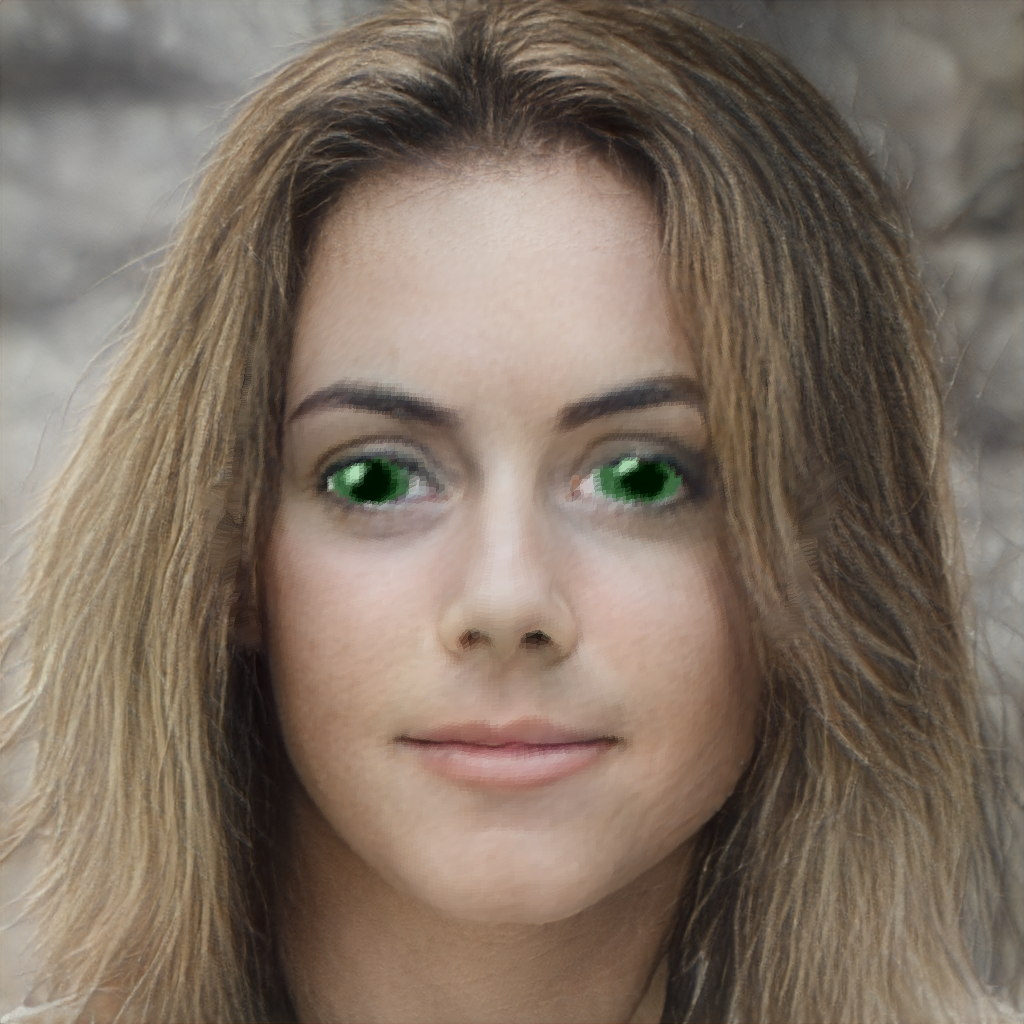

+- ### 应用效果展示

+ - 样例结果示例:

+

+  +

+

+ 输入图像

+

+  +

+

+ 输出图像

+

+

+

+- ### 模型介绍

+

+ - 人脸解析是语义图像分割的一种特殊情况,人脸解析是计算人脸图像中不同语义成分(如头发、嘴唇、鼻子、眼睛等)的像素级标签映射。给定一个输入的人脸图像,人脸解析将为每个语义成分分配一个像素级标签。

+

+

+

+## 二、安装

+

+- ### 1、环境依赖

+ - ppgan

+ - dlib

+

+- ### 2、安装

+

+ - ```shell

+ $ hub install face_parse

+ ```

+ - 如您安装时遇到问题,可参考:[零基础windows安装](../../../../docs/docs_ch/get_start/windows_quickstart.md)

+ | [零基础Linux安装](../../../../docs/docs_ch/get_start/linux_quickstart.md) | [零基础MacOS安装](../../../../docs/docs_ch/get_start/mac_quickstart.md)

+

+## 三、模型API预测

+

+- ### 1、命令行预测

+

+ - ```shell

+ # Read from a file

+ $ hub run face_parse --input_path "/PATH/TO/IMAGE"

+ ```

+ - 通过命令行方式实现人脸解析模型的调用,更多请见 [PaddleHub命令行指令](../../../../docs/docs_ch/tutorial/cmd_usage.rst)

+

+- ### 2、预测代码示例

+

+ - ```python

+ import paddlehub as hub

+

+ module = hub.Module(name="face_parse")

+ input_path = ["/PATH/TO/IMAGE"]

+ # Read from a file

+ module.style_transfer(paths=input_path, output_dir='./transfer_result/', use_gpu=True)

+ ```

+

+- ### 3、API

+

+ - ```python

+ style_transfer(images=None, paths=None, output_dir='./transfer_result/', use_gpu=False, visualization=True):

+ ```

+ - 人脸解析转换API。

+

+ - **参数**

+

+ - images (list\[numpy.ndarray\]): 图片数据,ndarray.shape 为 \[H, W, C\];

+ - paths (list\[str\]): 图片的路径;

+ - output\_dir (str): 结果保存的路径;

+ - use\_gpu (bool): 是否使用 GPU;

+ - visualization(bool): 是否保存结果到本地文件夹

+

+

+## 四、服务部署

+

+- PaddleHub Serving可以部署一个在线人脸解析转换服务。

+

+- ### 第一步:启动PaddleHub Serving

+

+ - 运行启动命令:

+ - ```shell

+ $ hub serving start -m face_parse

+ ```

+

+ - 这样就完成了一个人脸解析转换的在线服务API的部署,默认端口号为8866。

+

+ - **NOTE:** 如使用GPU预测,则需要在启动服务之前,请设置CUDA\_VISIBLE\_DEVICES环境变量,否则不用设置。

+

+- ### 第二步:发送预测请求

+

+ - 配置好服务端,以下数行代码即可实现发送预测请求,获取预测结果

+

+ - ```python

+ import requests

+ import json

+ import cv2

+ import base64

+

+

+ def cv2_to_base64(image):

+ data = cv2.imencode('.jpg', image)[1]

+ return base64.b64encode(data.tostring()).decode('utf8')

+

+ # 发送HTTP请求

+ data = {'images':[cv2_to_base64(cv2.imread("/PATH/TO/IMAGE"))]}

+ headers = {"Content-type": "application/json"}

+ url = "http://127.0.0.1:8866/predict/face_parse"

+ r = requests.post(url=url, headers=headers, data=json.dumps(data))

+

+ # 打印预测结果

+ print(r.json()["results"])

+

+## 五、更新历史

+

+* 1.0.0

+

+ 初始发布

+

+ - ```shell

+ $ hub install face_parse==1.0.0

+ ```

diff --git a/modules/image/Image_gan/style_transfer/face_parse/model.py b/modules/image/Image_gan/style_transfer/face_parse/model.py

new file mode 100644

index 0000000000000000000000000000000000000000..c5df633416cd0ddc199bbb4bc7908e9dec008c58

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/face_parse/model.py

@@ -0,0 +1,51 @@

+# copyright (c) 2020 PaddlePaddle Authors. All Rights Reserve.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+

+import os

+import sys

+import argparse

+

+from PIL import Image

+import numpy as np

+import cv2

+

+import ppgan.faceutils as futils

+from ppgan.utils.preprocess import *

+from ppgan.utils.visual import mask2image

+

+

+class FaceParsePredictor:

+ def __init__(self):

+ self.input_size = (512, 512)

+ self.up_ratio = 0.6 / 0.85

+ self.down_ratio = 0.2 / 0.85

+ self.width_ratio = 0.2 / 0.85

+ self.face_parser = futils.mask.FaceParser()

+

+ def run(self, image):

+ image = Image.fromarray(image)

+ face = futils.dlib.detect(image)

+

+ if not face:

+ return

+ face_on_image = face[0]

+ image, face, crop_face = futils.dlib.crop(image, face_on_image, self.up_ratio, self.down_ratio,

+ self.width_ratio)

+ np_image = np.array(image)

+ mask = self.face_parser.parse(np.float32(cv2.resize(np_image, self.input_size)))

+ mask = cv2.resize(mask.numpy(), (256, 256))

+ mask = mask.astype(np.uint8)

+ mask = mask2image(mask)

+

+ return mask

diff --git a/modules/image/Image_gan/style_transfer/face_parse/module.py b/modules/image/Image_gan/style_transfer/face_parse/module.py

new file mode 100644

index 0000000000000000000000000000000000000000..f1985f9ba23faf68a74e07315d2dc766ffb4f0fc

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/face_parse/module.py

@@ -0,0 +1,133 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import argparse

+import copy

+import os

+

+import cv2

+import numpy as np

+import paddle

+from skimage.io import imread

+from skimage.transform import rescale

+from skimage.transform import resize

+

+import paddlehub as hub

+from .model import FaceParsePredictor

+from .util import base64_to_cv2

+from paddlehub.module.module import moduleinfo

+from paddlehub.module.module import runnable

+from paddlehub.module.module import serving

+

+

+@moduleinfo(

+ name="face_parse", type="CV/style_transfer", author="paddlepaddle", author_email="", summary="", version="1.0.0")

+class Face_parse:

+ def __init__(self):

+ self.pretrained_model = os.path.join(self.directory, "bisenet.pdparams")

+

+ self.network = FaceParsePredictor()

+

+ def style_transfer(self,

+ images: list = None,

+ paths: list = None,

+ output_dir: str = './transfer_result/',

+ use_gpu: bool = False,

+ visualization: bool = True):

+ '''

+

+

+ images (list[numpy.ndarray]): data of images, shape of each is [H, W, C], color space must be BGR(read by cv2).

+ paths (list[str]): paths to images

+ output_dir (str): the dir to save the results

+ use_gpu (bool): if True, use gpu to perform the computation, otherwise cpu.

+ visualization (bool): if True, save results in output_dir.

+ '''

+ results = []

+ paddle.disable_static()

+ place = 'gpu:0' if use_gpu else 'cpu'

+ place = paddle.set_device(place)

+ if images == None and paths == None:

+ print('No image provided. Please input an image or a image path.')

+ return

+

+ if images != None:

+ for image in images:

+ image = image[:, :, ::-1]

+ out = self.network.run(image)

+ results.append(out)

+

+ if paths != None:

+ for path in paths:

+ image = cv2.imread(path)[:, :, ::-1]

+ out = self.network.run(image)

+ results.append(out)

+

+ if visualization == True:

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir, exist_ok=True)

+ for i, out in enumerate(results):

+ if out is not None:

+ cv2.imwrite(os.path.join(output_dir, 'output_{}.png'.format(i)), out[:, :, ::-1])

+

+ return results

+

+ @runnable

+ def run_cmd(self, argvs: list):

+ """

+ Run as a command.

+ """

+ self.parser = argparse.ArgumentParser(

+ description="Run the {} module.".format(self.name),

+ prog='hub run {}'.format(self.name),

+ usage='%(prog)s',

+ add_help=True)

+

+ self.arg_input_group = self.parser.add_argument_group(title="Input options", description="Input data. Required")

+ self.arg_config_group = self.parser.add_argument_group(

+ title="Config options", description="Run configuration for controlling module behavior, not required.")

+ self.add_module_config_arg()

+ self.add_module_input_arg()

+ self.args = self.parser.parse_args(argvs)

+ results = self.style_transfer(

+ paths=[self.args.input_path],

+ output_dir=self.args.output_dir,

+ use_gpu=self.args.use_gpu,

+ visualization=self.args.visualization)

+ return results

+

+ @serving

+ def serving_method(self, images, **kwargs):

+ """

+ Run as a service.

+ """

+ images_decode = [base64_to_cv2(image) for image in images]

+ results = self.style_transfer(images=images_decode, **kwargs)

+ tolist = [result.tolist() for result in results]

+ return tolist

+

+ def add_module_config_arg(self):

+ """

+ Add the command config options.

+ """

+ self.arg_config_group.add_argument('--use_gpu', action='store_true', help="use GPU or not")

+

+ self.arg_config_group.add_argument(

+ '--output_dir', type=str, default='transfer_result', help='output directory for saving result.')

+ self.arg_config_group.add_argument('--visualization', type=bool, default=False, help='save results or not.')

+

+ def add_module_input_arg(self):

+ """

+ Add the command input options.

+ """

+ self.arg_input_group.add_argument('--input_path', type=str, help="path to input image.")

diff --git a/modules/image/Image_gan/style_transfer/face_parse/requirements.txt b/modules/image/Image_gan/style_transfer/face_parse/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..d9bfc85782a3ee323241fe7beb87a9f281c120fe

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/face_parse/requirements.txt

@@ -0,0 +1,2 @@

+ppgan

+dlib

diff --git a/modules/image/Image_gan/style_transfer/face_parse/util.py b/modules/image/Image_gan/style_transfer/face_parse/util.py

new file mode 100644

index 0000000000000000000000000000000000000000..b88ac3562b74cadc1d4d6459a56097ca4a938a0b

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/face_parse/util.py

@@ -0,0 +1,10 @@

+import base64

+import cv2

+import numpy as np

+

+

+def base64_to_cv2(b64str):

+ data = base64.b64decode(b64str.encode('utf8'))

+ data = np.fromstring(data, np.uint8)

+ data = cv2.imdecode(data, cv2.IMREAD_COLOR)

+ return data

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_circuit/README.md b/modules/image/Image_gan/style_transfer/lapstyle_circuit/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..39c3270adf3914cacd7c60f6b250be58b74188c1

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_circuit/README.md

@@ -0,0 +1,142 @@

+# lapstyle_circuit

+

+|模型名称|lapstyle_circuit|

+| :--- | :---: |

+|类别|图像 - 风格迁移|

+|网络|LapStyle|

+|数据集|COCO|

+|是否支持Fine-tuning|否|

+|模型大小|121MB|

+|最新更新日期|2021-12-07|

+|数据指标|-|

+

+

+## 一、模型基本信息

+

+- ### 应用效果展示

+ - 样例结果示例:

+

+  +

+

+ 输入内容图形

+

+  +

+

+ 输入风格图形

+

+  +

+

+ 输出图像

+

+

+

+- ### 模型介绍

+

+ - LapStyle--拉普拉斯金字塔风格化网络,是一种能够生成高质量风格化图的快速前馈风格化网络,能渐进地生成复杂的纹理迁移效果,同时能够在512分辨率下达到100fps的速度。可实现多种不同艺术风格的快速迁移,在艺术图像生成、滤镜等领域有广泛的应用。

+

+ - 更多详情参考:[Drafting and Revision: Laplacian Pyramid Network for Fast High-Quality Artistic Style Transfer](https://arxiv.org/pdf/2104.05376.pdf)

+

+

+

+## 二、安装

+

+- ### 1、环境依赖

+ - ppgan

+

+- ### 2、安装

+

+ - ```shell

+ $ hub install lapstyle_circuit

+ ```

+ - 如您安装时遇到问题,可参考:[零基础windows安装](../../../../../docs/docs_ch/get_start/windows_quickstart.md)

+ | [零基础Linux安装](../../../../../docs/docs_ch/get_start/linux_quickstart.md) | [零基础MacOS安装](../../../../../docs/docs_ch/get_start/mac_quickstart.md)

+

+## 三、模型API预测

+

+- ### 1、命令行预测

+

+ - ```shell

+ # Read from a file

+ $ hub run lapstyle_circuit --content "/PATH/TO/IMAGE" --style "/PATH/TO/IMAGE1"

+ ```

+ - 通过命令行方式实现风格转换模型的调用,更多请见 [PaddleHub命令行指令](../../../../docs/docs_ch/tutorial/cmd_usage.rst)

+

+- ### 2、预测代码示例

+

+ - ```python

+ import paddlehub as hub

+

+ module = hub.Module(name="lapstyle_circuit")

+ content = cv2.imread("/PATH/TO/IMAGE")

+ style = cv2.imread("/PATH/TO/IMAGE1")

+ results = module.style_transfer(images=[{'content':content, 'style':style}], output_dir='./transfer_result', use_gpu=True)

+ ```

+

+- ### 3、API

+

+ - ```python

+ style_transfer(images=None, paths=None, output_dir='./transfer_result/', use_gpu=False, visualization=True)

+ ```

+ - 风格转换API。

+

+ - **参数**

+

+ - images (list[dict]): data of images, 每一个元素都为一个 dict,有关键字 content, style, 相应取值为:

+ - content (numpy.ndarray): 待转换的图片,shape 为 \[H, W, C\],BGR格式;

+ - style (numpy.ndarray) : 风格图像,shape为 \[H, W, C\],BGR格式;

+ - paths (list[str]): paths to images, 每一个元素都为一个dict, 有关键字 content, style, 相应取值为:

+ - content (str): 待转换的图片的路径;

+ - style (str) : 风格图像的路径;

+ - output\_dir (str): 结果保存的路径;

+ - use\_gpu (bool): 是否使用 GPU;

+ - visualization(bool): 是否保存结果到本地文件夹

+

+

+## 四、服务部署

+

+- PaddleHub Serving可以部署一个在线图像风格转换服务。

+

+- ### 第一步:启动PaddleHub Serving

+

+ - 运行启动命令:

+ - ```shell

+ $ hub serving start -m lapstyle_circuit

+ ```

+

+ - 这样就完成了一个图像风格转换的在线服务API的部署,默认端口号为8866。

+

+ - **NOTE:** 如使用GPU预测,则需要在启动服务之前,请设置CUDA\_VISIBLE\_DEVICES环境变量,否则不用设置。

+

+- ### 第二步:发送预测请求

+

+ - 配置好服务端,以下数行代码即可实现发送预测请求,获取预测结果

+

+ - ```python

+ import requests

+ import json

+ import cv2

+ import base64

+

+

+ def cv2_to_base64(image):

+ data = cv2.imencode('.jpg', image)[1]

+ return base64.b64encode(data.tostring()).decode('utf8')

+

+ # 发送HTTP请求

+ data = {'images':[{'content': cv2_to_base64(cv2.imread("/PATH/TO/IMAGE")), 'style': cv2_to_base64(cv2.imread("/PATH/TO/IMAGE1"))}]}

+ headers = {"Content-type": "application/json"}

+ url = "http://127.0.0.1:8866/predict/lapstyle_circuit"

+ r = requests.post(url=url, headers=headers, data=json.dumps(data))

+

+ # 打印预测结果

+ print(r.json()["results"])

+

+## 五、更新历史

+

+* 1.0.0

+

+ 初始发布

+

+ - ```shell

+ $ hub install lapstyle_circuit==1.0.0

+ ```

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_circuit/model.py b/modules/image/Image_gan/style_transfer/lapstyle_circuit/model.py

new file mode 100644

index 0000000000000000000000000000000000000000..d66c02322ecf630d643b23e193ac95b05d62a826

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_circuit/model.py

@@ -0,0 +1,140 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserve.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import os

+import urllib.request

+

+import cv2 as cv

+import numpy as np

+import paddle

+import paddle.nn.functional as F

+from paddle.vision.transforms import functional

+from PIL import Image

+from ppgan.models.generators import DecoderNet

+from ppgan.models.generators import Encoder

+from ppgan.models.generators import RevisionNet

+from ppgan.utils.visual import tensor2img

+

+

+def img(img):

+ # some images have 4 channels

+ if img.shape[2] > 3:

+ img = img[:, :, :3]

+ # HWC to CHW

+ return img

+

+

+def img_totensor(content_img, style_img):

+ if content_img.ndim == 2:

+ content_img = cv.cvtColor(content_img, cv.COLOR_GRAY2RGB)

+ else:

+ content_img = cv.cvtColor(content_img, cv.COLOR_BGR2RGB)

+ h, w, c = content_img.shape

+ content_img = Image.fromarray(content_img)

+ content_img = content_img.resize((512, 512), Image.BILINEAR)

+ content_img = np.array(content_img)

+ content_img = img(content_img)

+ content_img = functional.to_tensor(content_img)

+

+ style_img = cv.cvtColor(style_img, cv.COLOR_BGR2RGB)

+ style_img = Image.fromarray(style_img)

+ style_img = style_img.resize((512, 512), Image.BILINEAR)

+ style_img = np.array(style_img)

+ style_img = img(style_img)

+ style_img = functional.to_tensor(style_img)

+

+ content_img = paddle.unsqueeze(content_img, axis=0)

+ style_img = paddle.unsqueeze(style_img, axis=0)

+ return content_img, style_img, h, w

+

+

+def tensor_resample(tensor, dst_size, mode='bilinear'):

+ return F.interpolate(tensor, dst_size, mode=mode, align_corners=False)

+

+

+def laplacian(x):

+ """

+ Laplacian

+

+ return:

+ x - upsample(downsample(x))

+ """

+ return x - tensor_resample(tensor_resample(x, [x.shape[2] // 2, x.shape[3] // 2]), [x.shape[2], x.shape[3]])

+

+

+def make_laplace_pyramid(x, levels):

+ """

+ Make Laplacian Pyramid

+ """

+ pyramid = []

+ current = x

+ for i in range(levels):

+ pyramid.append(laplacian(current))

+ current = tensor_resample(current, (max(current.shape[2] // 2, 1), max(current.shape[3] // 2, 1)))

+ pyramid.append(current)

+ return pyramid

+

+

+def fold_laplace_pyramid(pyramid):

+ """

+ Fold Laplacian Pyramid

+ """

+ current = pyramid[-1]

+ for i in range(len(pyramid) - 2, -1, -1): # iterate from len-2 to 0

+ up_h, up_w = pyramid[i].shape[2], pyramid[i].shape[3]

+ current = pyramid[i] + tensor_resample(current, (up_h, up_w))

+ return current

+

+

+class LapStylePredictor:

+ def __init__(self, weight_path=None):

+

+ self.net_enc = Encoder()

+ self.net_dec = DecoderNet()

+ self.net_rev = RevisionNet()

+ self.net_rev_2 = RevisionNet()

+

+ self.net_enc.set_dict(paddle.load(weight_path)['net_enc'])

+ self.net_enc.eval()

+ self.net_dec.set_dict(paddle.load(weight_path)['net_dec'])

+ self.net_dec.eval()

+ self.net_rev.set_dict(paddle.load(weight_path)['net_rev'])

+ self.net_rev.eval()

+ self.net_rev_2.set_dict(paddle.load(weight_path)['net_rev_2'])

+ self.net_rev_2.eval()

+

+ def run(self, content_img, style_image):

+ content_img, style_img, h, w = img_totensor(content_img, style_image)

+ pyr_ci = make_laplace_pyramid(content_img, 2)

+ pyr_si = make_laplace_pyramid(style_img, 2)

+ pyr_ci.append(content_img)

+ pyr_si.append(style_img)

+ cF = self.net_enc(pyr_ci[2])

+ sF = self.net_enc(pyr_si[2])

+ stylized_small = self.net_dec(cF, sF)

+ stylized_up = F.interpolate(stylized_small, scale_factor=2)

+

+ revnet_input = paddle.concat(x=[pyr_ci[1], stylized_up], axis=1)

+ stylized_rev_lap = self.net_rev(revnet_input)

+ stylized_rev = fold_laplace_pyramid([stylized_rev_lap, stylized_small])

+

+ stylized_up = F.interpolate(stylized_rev, scale_factor=2)

+

+ revnet_input = paddle.concat(x=[pyr_ci[0], stylized_up], axis=1)

+ stylized_rev_lap_second = self.net_rev_2(revnet_input)

+ stylized_rev_second = fold_laplace_pyramid([stylized_rev_lap_second, stylized_rev_lap, stylized_small])

+

+ stylized = stylized_rev_second

+ stylized_visual = tensor2img(stylized, min_max=(0., 1.))

+

+ return stylized_visual

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_circuit/module.py b/modules/image/Image_gan/style_transfer/lapstyle_circuit/module.py

new file mode 100644

index 0000000000000000000000000000000000000000..6a4fbc67816660e202960828b2c4abd042e71a3c

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_circuit/module.py

@@ -0,0 +1,150 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import argparse

+import copy

+import os

+

+import cv2

+import numpy as np

+import paddle

+from skimage.io import imread

+from skimage.transform import rescale

+from skimage.transform import resize

+

+import paddlehub as hub

+from .model import LapStylePredictor

+from .util import base64_to_cv2

+from paddlehub.module.module import moduleinfo

+from paddlehub.module.module import runnable

+from paddlehub.module.module import serving

+

+

+@moduleinfo(

+ name="lapstyle_circuit",

+ type="CV/style_transfer",

+ author="paddlepaddle",

+ author_email="",

+ summary="",

+ version="1.0.0")

+class Lapstyle_circuit:

+ def __init__(self):

+ self.pretrained_model = os.path.join(self.directory, "lapstyle_circuit.pdparams")

+

+ self.network = LapStylePredictor(weight_path=self.pretrained_model)

+

+ def style_transfer(self,

+ images: list = None,

+ paths: list = None,

+ output_dir: str = './transfer_result/',

+ use_gpu: bool = False,

+ visualization: bool = True):

+ '''

+ Transfer a image to circuit style.

+

+ images (list[dict]): data of images, each element is a dict:

+ - content (numpy.ndarray): input image,shape is \[H, W, C\],BGR format;

+ - style (numpy.ndarray) : style image,shape is \[H, W, C\],BGR format;

+ paths (list[dict]): paths to images, eacg element is a dict:

+ - content (str): path to input image;

+ - style (str) : path to style image;

+

+ output_dir (str): the dir to save the results

+ use_gpu (bool): if True, use gpu to perform the computation, otherwise cpu.

+ visualization (bool): if True, save results in output_dir.

+

+ '''

+ results = []

+ paddle.disable_static()

+ place = 'gpu:0' if use_gpu else 'cpu'

+ place = paddle.set_device(place)

+ if images == None and paths == None:

+ print('No image provided. Please input an image or a image path.')

+ return

+

+ if images != None:

+ for image_dict in images:

+ content_img = image_dict['content']

+ style_img = image_dict['style']

+ results.append(self.network.run(content_img, style_img))

+

+ if paths != None:

+ for path_dict in paths:

+ content_img = cv2.imread(path_dict['content'])

+ style_img = cv2.imread(path_dict['style'])

+ results.append(self.network.run(content_img, style_img))

+

+ if visualization == True:

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir, exist_ok=True)

+ for i, out in enumerate(results):

+ cv2.imwrite(os.path.join(output_dir, 'output_{}.png'.format(i)), out[:, :, ::-1])

+

+ return results

+

+ @runnable

+ def run_cmd(self, argvs: list):

+ """

+ Run as a command.

+ """

+ self.parser = argparse.ArgumentParser(

+ description="Run the {} module.".format(self.name),

+ prog='hub run {}'.format(self.name),

+ usage='%(prog)s',

+ add_help=True)

+

+ self.arg_input_group = self.parser.add_argument_group(title="Input options", description="Input data. Required")

+ self.arg_config_group = self.parser.add_argument_group(

+ title="Config options", description="Run configuration for controlling module behavior, not required.")

+ self.add_module_config_arg()

+ self.add_module_input_arg()

+ self.args = self.parser.parse_args(argvs)

+

+ self.style_transfer(

+ paths=[{

+ 'content': self.args.content,

+ 'style': self.args.style

+ }],

+ output_dir=self.args.output_dir,

+ use_gpu=self.args.use_gpu,

+ visualization=self.args.visualization)

+

+ @serving

+ def serving_method(self, images, **kwargs):

+ """

+ Run as a service.

+ """

+ images_decode = copy.deepcopy(images)

+ for image in images_decode:

+ image['content'] = base64_to_cv2(image['content'])

+ image['style'] = base64_to_cv2(image['style'])

+ results = self.style_transfer(images_decode, **kwargs)

+ tolist = [result.tolist() for result in results]

+ return tolist

+

+ def add_module_config_arg(self):

+ """

+ Add the command config options.

+ """

+ self.arg_config_group.add_argument('--use_gpu', action='store_true', help="use GPU or not")

+

+ self.arg_config_group.add_argument(

+ '--output_dir', type=str, default='transfer_result', help='output directory for saving result.')

+ self.arg_config_group.add_argument('--visualization', type=bool, default=False, help='save results or not.')

+

+ def add_module_input_arg(self):

+ """

+ Add the command input options.

+ """

+ self.arg_input_group.add_argument('--content', type=str, help="path to content image.")

+ self.arg_input_group.add_argument('--style', type=str, help="path to style image.")

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_circuit/requirements.txt b/modules/image/Image_gan/style_transfer/lapstyle_circuit/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..67e9bb6fa840355e9ed0d44b7134850f1fe22fe1

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_circuit/requirements.txt

@@ -0,0 +1 @@

+ppgan

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_circuit/util.py b/modules/image/Image_gan/style_transfer/lapstyle_circuit/util.py

new file mode 100644

index 0000000000000000000000000000000000000000..531a0ae0d487822a870ba7f09817e658967aff10

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_circuit/util.py

@@ -0,0 +1,11 @@

+import base64

+

+import cv2

+import numpy as np

+

+

+def base64_to_cv2(b64str):

+ data = base64.b64decode(b64str.encode('utf8'))

+ data = np.fromstring(data, np.uint8)

+ data = cv2.imdecode(data, cv2.IMREAD_COLOR)

+ return data

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_ocean/README.md b/modules/image/Image_gan/style_transfer/lapstyle_ocean/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..497dba5af97ab602827ddf87e1749e8586b4a296

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_ocean/README.md

@@ -0,0 +1,142 @@

+# lapstyle_ocean

+

+|模型名称|lapstyle_ocean|

+| :--- | :---: |

+|类别|图像 - 风格迁移|

+|网络|LapStyle|

+|数据集|COCO|

+|是否支持Fine-tuning|否|

+|模型大小|121MB|

+|最新更新日期|2021-12-07|

+|数据指标|-|

+

+

+## 一、模型基本信息

+

+- ### 应用效果展示

+ - 样例结果示例:

+

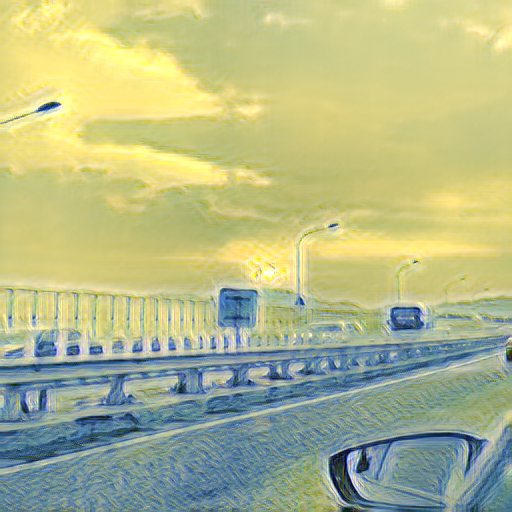

+  +

+

+ 输入内容图形

+

+  +

+

+ 输入风格图形

+

+  +

+

+ 输出图像

+

+

+

+- ### 模型介绍

+

+ - LapStyle--拉普拉斯金字塔风格化网络,是一种能够生成高质量风格化图的快速前馈风格化网络,能渐进地生成复杂的纹理迁移效果,同时能够在512分辨率下达到100fps的速度。可实现多种不同艺术风格的快速迁移,在艺术图像生成、滤镜等领域有广泛的应用。

+

+ - 更多详情参考:[Drafting and Revision: Laplacian Pyramid Network for Fast High-Quality Artistic Style Transfer](https://arxiv.org/pdf/2104.05376.pdf)

+

+

+

+## 二、安装

+

+- ### 1、环境依赖

+ - ppgan

+

+- ### 2、安装

+

+ - ```shell

+ $ hub install lapstyle_ocean

+ ```

+ - 如您安装时遇到问题,可参考:[零基础windows安装](../../../../../docs/docs_ch/get_start/windows_quickstart.md)

+ | [零基础Linux安装](../../../../../docs/docs_ch/get_start/linux_quickstart.md) | [零基础MacOS安装](../../../../../docs/docs_ch/get_start/mac_quickstart.md)

+

+## 三、模型API预测

+

+- ### 1、命令行预测

+

+ - ```shell

+ # Read from a file

+ $ hub run lapstyle_ocean --content "/PATH/TO/IMAGE" --style "/PATH/TO/IMAGE1"

+ ```

+ - 通过命令行方式实现风格转换模型的调用,更多请见 [PaddleHub命令行指令](../../../../docs/docs_ch/tutorial/cmd_usage.rst)

+

+- ### 2、预测代码示例

+

+ - ```python

+ import paddlehub as hub

+

+ module = hub.Module(name="lapstyle_ocean")

+ content = cv2.imread("/PATH/TO/IMAGE")

+ style = cv2.imread("/PATH/TO/IMAGE1")

+ results = module.style_transfer(images=[{'content':content, 'style':style}], output_dir='./transfer_result', use_gpu=True)

+ ```

+

+- ### 3、API

+

+ - ```python

+ style_transfer(images=None, paths=None, output_dir='./transfer_result/', use_gpu=False, visualization=True)

+ ```

+ - 风格转换API。

+

+ - **参数**

+

+ - images (list[dict]): data of images, 每一个元素都为一个 dict,有关键字 content, style, 相应取值为:

+ - content (numpy.ndarray): 待转换的图片,shape 为 \[H, W, C\],BGR格式;

+ - style (numpy.ndarray) : 风格图像,shape为 \[H, W, C\],BGR格式;

+ - paths (list[str]): paths to images, 每一个元素都为一个dict, 有关键字 content, style, 相应取值为:

+ - content (str): 待转换的图片的路径;

+ - style (str) : 风格图像的路径;

+ - output\_dir (str): 结果保存的路径;

+ - use\_gpu (bool): 是否使用 GPU;

+ - visualization(bool): 是否保存结果到本地文件夹

+

+

+## 四、服务部署

+

+- PaddleHub Serving可以部署一个在线图像风格转换服务。

+

+- ### 第一步:启动PaddleHub Serving

+

+ - 运行启动命令:

+ - ```shell

+ $ hub serving start -m lapstyle_ocean

+ ```

+

+ - 这样就完成了一个图像风格转换的在线服务API的部署,默认端口号为8866。

+

+ - **NOTE:** 如使用GPU预测,则需要在启动服务之前,请设置CUDA\_VISIBLE\_DEVICES环境变量,否则不用设置。

+

+- ### 第二步:发送预测请求

+

+ - 配置好服务端,以下数行代码即可实现发送预测请求,获取预测结果

+

+ - ```python

+ import requests

+ import json

+ import cv2

+ import base64

+

+

+ def cv2_to_base64(image):

+ data = cv2.imencode('.jpg', image)[1]

+ return base64.b64encode(data.tostring()).decode('utf8')

+

+ # 发送HTTP请求

+ data = {'images':[{'content': cv2_to_base64(cv2.imread("/PATH/TO/IMAGE")), 'style': cv2_to_base64(cv2.imread("/PATH/TO/IMAGE1"))}]}

+ headers = {"Content-type": "application/json"}

+ url = "http://127.0.0.1:8866/predict/lapstyle_ocean"

+ r = requests.post(url=url, headers=headers, data=json.dumps(data))

+

+ # 打印预测结果

+ print(r.json()["results"])

+

+## 五、更新历史

+

+* 1.0.0

+

+ 初始发布

+

+ - ```shell

+ $ hub install lapstyle_ocean==1.0.0

+ ```

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_ocean/model.py b/modules/image/Image_gan/style_transfer/lapstyle_ocean/model.py

new file mode 100644

index 0000000000000000000000000000000000000000..d66c02322ecf630d643b23e193ac95b05d62a826

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_ocean/model.py

@@ -0,0 +1,140 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserve.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import os

+import urllib.request

+

+import cv2 as cv

+import numpy as np

+import paddle

+import paddle.nn.functional as F

+from paddle.vision.transforms import functional

+from PIL import Image

+from ppgan.models.generators import DecoderNet

+from ppgan.models.generators import Encoder

+from ppgan.models.generators import RevisionNet

+from ppgan.utils.visual import tensor2img

+

+

+def img(img):

+ # some images have 4 channels

+ if img.shape[2] > 3:

+ img = img[:, :, :3]

+ # HWC to CHW

+ return img

+

+

+def img_totensor(content_img, style_img):

+ if content_img.ndim == 2:

+ content_img = cv.cvtColor(content_img, cv.COLOR_GRAY2RGB)

+ else:

+ content_img = cv.cvtColor(content_img, cv.COLOR_BGR2RGB)

+ h, w, c = content_img.shape

+ content_img = Image.fromarray(content_img)

+ content_img = content_img.resize((512, 512), Image.BILINEAR)

+ content_img = np.array(content_img)

+ content_img = img(content_img)

+ content_img = functional.to_tensor(content_img)

+

+ style_img = cv.cvtColor(style_img, cv.COLOR_BGR2RGB)

+ style_img = Image.fromarray(style_img)

+ style_img = style_img.resize((512, 512), Image.BILINEAR)

+ style_img = np.array(style_img)

+ style_img = img(style_img)

+ style_img = functional.to_tensor(style_img)

+

+ content_img = paddle.unsqueeze(content_img, axis=0)

+ style_img = paddle.unsqueeze(style_img, axis=0)

+ return content_img, style_img, h, w

+

+

+def tensor_resample(tensor, dst_size, mode='bilinear'):

+ return F.interpolate(tensor, dst_size, mode=mode, align_corners=False)

+

+

+def laplacian(x):

+ """

+ Laplacian

+

+ return:

+ x - upsample(downsample(x))

+ """

+ return x - tensor_resample(tensor_resample(x, [x.shape[2] // 2, x.shape[3] // 2]), [x.shape[2], x.shape[3]])

+

+

+def make_laplace_pyramid(x, levels):

+ """

+ Make Laplacian Pyramid

+ """

+ pyramid = []

+ current = x

+ for i in range(levels):

+ pyramid.append(laplacian(current))

+ current = tensor_resample(current, (max(current.shape[2] // 2, 1), max(current.shape[3] // 2, 1)))

+ pyramid.append(current)

+ return pyramid

+

+

+def fold_laplace_pyramid(pyramid):

+ """

+ Fold Laplacian Pyramid

+ """

+ current = pyramid[-1]

+ for i in range(len(pyramid) - 2, -1, -1): # iterate from len-2 to 0

+ up_h, up_w = pyramid[i].shape[2], pyramid[i].shape[3]

+ current = pyramid[i] + tensor_resample(current, (up_h, up_w))

+ return current

+

+

+class LapStylePredictor:

+ def __init__(self, weight_path=None):

+

+ self.net_enc = Encoder()

+ self.net_dec = DecoderNet()

+ self.net_rev = RevisionNet()

+ self.net_rev_2 = RevisionNet()

+

+ self.net_enc.set_dict(paddle.load(weight_path)['net_enc'])

+ self.net_enc.eval()

+ self.net_dec.set_dict(paddle.load(weight_path)['net_dec'])

+ self.net_dec.eval()

+ self.net_rev.set_dict(paddle.load(weight_path)['net_rev'])

+ self.net_rev.eval()

+ self.net_rev_2.set_dict(paddle.load(weight_path)['net_rev_2'])

+ self.net_rev_2.eval()

+

+ def run(self, content_img, style_image):

+ content_img, style_img, h, w = img_totensor(content_img, style_image)

+ pyr_ci = make_laplace_pyramid(content_img, 2)

+ pyr_si = make_laplace_pyramid(style_img, 2)

+ pyr_ci.append(content_img)

+ pyr_si.append(style_img)

+ cF = self.net_enc(pyr_ci[2])

+ sF = self.net_enc(pyr_si[2])

+ stylized_small = self.net_dec(cF, sF)

+ stylized_up = F.interpolate(stylized_small, scale_factor=2)

+

+ revnet_input = paddle.concat(x=[pyr_ci[1], stylized_up], axis=1)

+ stylized_rev_lap = self.net_rev(revnet_input)

+ stylized_rev = fold_laplace_pyramid([stylized_rev_lap, stylized_small])

+

+ stylized_up = F.interpolate(stylized_rev, scale_factor=2)

+

+ revnet_input = paddle.concat(x=[pyr_ci[0], stylized_up], axis=1)

+ stylized_rev_lap_second = self.net_rev_2(revnet_input)

+ stylized_rev_second = fold_laplace_pyramid([stylized_rev_lap_second, stylized_rev_lap, stylized_small])

+

+ stylized = stylized_rev_second

+ stylized_visual = tensor2img(stylized, min_max=(0., 1.))

+

+ return stylized_visual

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_ocean/module.py b/modules/image/Image_gan/style_transfer/lapstyle_ocean/module.py

new file mode 100644

index 0000000000000000000000000000000000000000..18534a3756805db51d33e9ff4bbb59bcf76d0dc7

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_ocean/module.py

@@ -0,0 +1,149 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import argparse

+import copy

+import os

+

+import cv2

+import numpy as np

+import paddle

+from skimage.io import imread

+from skimage.transform import rescale

+from skimage.transform import resize

+

+import paddlehub as hub

+from .model import LapStylePredictor

+from .util import base64_to_cv2

+from paddlehub.module.module import moduleinfo

+from paddlehub.module.module import runnable

+from paddlehub.module.module import serving

+

+

+@moduleinfo(

+ name="lapstyle_ocean",

+ type="CV/style_transfer",

+ author="paddlepaddle",

+ author_email="",

+ summary="",

+ version="1.0.0")

+class Lapstyle_ocean:

+ def __init__(self):

+ self.pretrained_model = os.path.join(self.directory, "lapstyle_ocean.pdparams")

+

+ self.network = LapStylePredictor(weight_path=self.pretrained_model)

+

+ def style_transfer(self,

+ images: list = None,

+ paths: list = None,

+ output_dir: str = './transfer_result/',

+ use_gpu: bool = False,

+ visualization: bool = True):

+ '''

+ Transfer a image to ocean style.

+

+ images (list[dict]): data of images, each element is a dict:

+ - content (numpy.ndarray): input image,shape is \[H, W, C\],BGR format;

+ - style (numpy.ndarray) : style image,shape is \[H, W, C\],BGR format;

+ paths (list[dict]): paths to images, eacg element is a dict:

+ - content (str): path to input image;

+ - style (str) : path to style image;

+

+ output_dir (str): the dir to save the results

+ use_gpu (bool): if True, use gpu to perform the computation, otherwise cpu.

+ visualization (bool): if True, save results in output_dir.

+ '''

+ results = []

+ paddle.disable_static()

+ place = 'gpu:0' if use_gpu else 'cpu'

+ place = paddle.set_device(place)

+ if images == None and paths == None:

+ print('No image provided. Please input an image or a image path.')

+ return

+

+ if images != None:

+ for image_dict in images:

+ content_img = image_dict['content']

+ style_img = image_dict['style']

+ results.append(self.network.run(content_img, style_img))

+

+ if paths != None:

+ for path_dict in paths:

+ content_img = cv2.imread(path_dict['content'])

+ style_img = cv2.imread(path_dict['style'])

+ results.append(self.network.run(content_img, style_img))

+

+ if visualization == True:

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir, exist_ok=True)

+ for i, out in enumerate(results):

+ cv2.imwrite(os.path.join(output_dir, 'output_{}.png'.format(i)), out[:, :, ::-1])

+

+ return results

+

+ @runnable

+ def run_cmd(self, argvs: list):

+ """

+ Run as a command.

+ """

+ self.parser = argparse.ArgumentParser(

+ description="Run the {} module.".format(self.name),

+ prog='hub run {}'.format(self.name),

+ usage='%(prog)s',

+ add_help=True)

+

+ self.arg_input_group = self.parser.add_argument_group(title="Input options", description="Input data. Required")

+ self.arg_config_group = self.parser.add_argument_group(

+ title="Config options", description="Run configuration for controlling module behavior, not required.")

+ self.add_module_config_arg()

+ self.add_module_input_arg()

+ self.args = self.parser.parse_args(argvs)

+

+ self.style_transfer(

+ paths=[{

+ 'content': self.args.content,

+ 'style': self.args.style

+ }],

+ output_dir=self.args.output_dir,

+ use_gpu=self.args.use_gpu,

+ visualization=self.args.visualization)

+

+ @serving

+ def serving_method(self, images, **kwargs):

+ """

+ Run as a service.

+ """

+ images_decode = copy.deepcopy(images)

+ for image in images_decode:

+ image['content'] = base64_to_cv2(image['content'])

+ image['style'] = base64_to_cv2(image['style'])

+ results = self.style_transfer(images_decode, **kwargs)

+ tolist = [result.tolist() for result in results]

+ return tolist

+

+ def add_module_config_arg(self):

+ """

+ Add the command config options.

+ """

+ self.arg_config_group.add_argument('--use_gpu', action='store_true', help="use GPU or not")

+

+ self.arg_config_group.add_argument(

+ '--output_dir', type=str, default='transfer_result', help='output directory for saving result.')

+ self.arg_config_group.add_argument('--visualization', type=bool, default=False, help='save results or not.')

+

+ def add_module_input_arg(self):

+ """

+ Add the command input options.

+ """

+ self.arg_input_group.add_argument('--content', type=str, help="path to content image.")

+ self.arg_input_group.add_argument('--style', type=str, help="path to style image.")

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_ocean/requirements.txt b/modules/image/Image_gan/style_transfer/lapstyle_ocean/requirements.txt

new file mode 100644

index 0000000000000000000000000000000000000000..67e9bb6fa840355e9ed0d44b7134850f1fe22fe1

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_ocean/requirements.txt

@@ -0,0 +1 @@

+ppgan

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_ocean/util.py b/modules/image/Image_gan/style_transfer/lapstyle_ocean/util.py

new file mode 100644

index 0000000000000000000000000000000000000000..531a0ae0d487822a870ba7f09817e658967aff10

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_ocean/util.py

@@ -0,0 +1,11 @@

+import base64

+

+import cv2

+import numpy as np

+

+

+def base64_to_cv2(b64str):

+ data = base64.b64decode(b64str.encode('utf8'))

+ data = np.fromstring(data, np.uint8)

+ data = cv2.imdecode(data, cv2.IMREAD_COLOR)

+ return data

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_starrynew/README.md b/modules/image/Image_gan/style_transfer/lapstyle_starrynew/README.md

new file mode 100644

index 0000000000000000000000000000000000000000..4219317c3239d0083413bad47f645aebccd4aa23

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_starrynew/README.md

@@ -0,0 +1,142 @@

+# lapstyle_starrynew

+

+|模型名称|lapstyle_starrynew|

+| :--- | :---: |

+|类别|图像 - 风格迁移|

+|网络|LapStyle|

+|数据集|COCO|

+|是否支持Fine-tuning|否|

+|模型大小|121MB|

+|最新更新日期|2021-12-07|

+|数据指标|-|

+

+

+## 一、模型基本信息

+

+- ### 应用效果展示

+ - 样例结果示例:

+

+  +

+

+ 输入内容图形

+

+  +

+

+ 输入风格图形

+

+  +

+

+ 输出图像

+

+

+

+- ### 模型介绍

+

+ - LapStyle--拉普拉斯金字塔风格化网络,是一种能够生成高质量风格化图的快速前馈风格化网络,能渐进地生成复杂的纹理迁移效果,同时能够在512分辨率下达到100fps的速度。可实现多种不同艺术风格的快速迁移,在艺术图像生成、滤镜等领域有广泛的应用。

+

+ - 更多详情参考:[Drafting and Revision: Laplacian Pyramid Network for Fast High-Quality Artistic Style Transfer](https://arxiv.org/pdf/2104.05376.pdf)

+

+

+

+## 二、安装

+

+- ### 1、环境依赖

+ - ppgan

+

+- ### 2、安装

+

+ - ```shell

+ $ hub install lapstyle_starrynew

+ ```

+ - 如您安装时遇到问题,可参考:[零基础windows安装](../../../../../docs/docs_ch/get_start/windows_quickstart.md)

+ | [零基础Linux安装](../../../../../docs/docs_ch/get_start/linux_quickstart.md) | [零基础MacOS安装](../../../../../docs/docs_ch/get_start/mac_quickstart.md)

+

+## 三、模型API预测

+

+- ### 1、命令行预测

+

+ - ```shell

+ # Read from a file

+ $ hub run lapstyle_starrynew --content "/PATH/TO/IMAGE" --style "/PATH/TO/IMAGE1"

+ ```

+ - 通过命令行方式实现风格转换模型的调用,更多请见 [PaddleHub命令行指令](../../../../docs/docs_ch/tutorial/cmd_usage.rst)

+

+- ### 2、预测代码示例

+

+ - ```python

+ import paddlehub as hub

+

+ module = hub.Module(name="lapstyle_starrynew")

+ content = cv2.imread("/PATH/TO/IMAGE")

+ style = cv2.imread("/PATH/TO/IMAGE1")

+ results = module.style_transfer(images=[{'content':content, 'style':style}], output_dir='./transfer_result', use_gpu=True)

+ ```

+

+- ### 3、API

+

+ - ```python

+ style_transfer(images=None, paths=None, output_dir='./transfer_result/', use_gpu=False, visualization=True)

+ ```

+ - 风格转换API。

+

+ - **参数**

+

+ - images (list[dict]): data of images, 每一个元素都为一个 dict,有关键字 content, style, 相应取值为:

+ - content (numpy.ndarray): 待转换的图片,shape 为 \[H, W, C\],BGR格式;

+ - style (numpy.ndarray) : 风格图像,shape为 \[H, W, C\],BGR格式;

+ - paths (list[str]): paths to images, 每一个元素都为一个dict, 有关键字 content, style, 相应取值为:

+ - content (str): 待转换的图片的路径;

+ - style (str) : 风格图像的路径;

+ - output\_dir (str): 结果保存的路径;

+ - use\_gpu (bool): 是否使用 GPU;

+ - visualization(bool): 是否保存结果到本地文件夹

+

+

+## 四、服务部署

+

+- PaddleHub Serving可以部署一个在线图像风格转换服务。

+

+- ### 第一步:启动PaddleHub Serving

+

+ - 运行启动命令:

+ - ```shell

+ $ hub serving start -m lapstyle_starrynew

+ ```

+

+ - 这样就完成了一个图像风格转换的在线服务API的部署,默认端口号为8866。

+

+ - **NOTE:** 如使用GPU预测,则需要在启动服务之前,请设置CUDA\_VISIBLE\_DEVICES环境变量,否则不用设置。

+

+- ### 第二步:发送预测请求

+

+ - 配置好服务端,以下数行代码即可实现发送预测请求,获取预测结果

+

+ - ```python

+ import requests

+ import json

+ import cv2

+ import base64

+

+

+ def cv2_to_base64(image):

+ data = cv2.imencode('.jpg', image)[1]

+ return base64.b64encode(data.tostring()).decode('utf8')

+

+ # 发送HTTP请求

+ data = {'images':[{'content': cv2_to_base64(cv2.imread("/PATH/TO/IMAGE")), 'style': cv2_to_base64(cv2.imread("/PATH/TO/IMAGE1"))}]}

+ headers = {"Content-type": "application/json"}

+ url = "http://127.0.0.1:8866/predict/lapstyle_starrynew"

+ r = requests.post(url=url, headers=headers, data=json.dumps(data))

+

+ # 打印预测结果

+ print(r.json()["results"])

+

+## 五、更新历史

+

+* 1.0.0

+

+ 初始发布

+

+ - ```shell

+ $ hub install lapstyle_starrynew==1.0.0

+ ```

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_starrynew/model.py b/modules/image/Image_gan/style_transfer/lapstyle_starrynew/model.py

new file mode 100644

index 0000000000000000000000000000000000000000..d66c02322ecf630d643b23e193ac95b05d62a826

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_starrynew/model.py

@@ -0,0 +1,140 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserve.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import os

+import urllib.request

+

+import cv2 as cv

+import numpy as np

+import paddle

+import paddle.nn.functional as F

+from paddle.vision.transforms import functional

+from PIL import Image

+from ppgan.models.generators import DecoderNet

+from ppgan.models.generators import Encoder

+from ppgan.models.generators import RevisionNet

+from ppgan.utils.visual import tensor2img

+

+

+def img(img):

+ # some images have 4 channels

+ if img.shape[2] > 3:

+ img = img[:, :, :3]

+ # HWC to CHW

+ return img

+

+

+def img_totensor(content_img, style_img):

+ if content_img.ndim == 2:

+ content_img = cv.cvtColor(content_img, cv.COLOR_GRAY2RGB)

+ else:

+ content_img = cv.cvtColor(content_img, cv.COLOR_BGR2RGB)

+ h, w, c = content_img.shape

+ content_img = Image.fromarray(content_img)

+ content_img = content_img.resize((512, 512), Image.BILINEAR)

+ content_img = np.array(content_img)

+ content_img = img(content_img)

+ content_img = functional.to_tensor(content_img)

+

+ style_img = cv.cvtColor(style_img, cv.COLOR_BGR2RGB)

+ style_img = Image.fromarray(style_img)

+ style_img = style_img.resize((512, 512), Image.BILINEAR)

+ style_img = np.array(style_img)

+ style_img = img(style_img)

+ style_img = functional.to_tensor(style_img)

+

+ content_img = paddle.unsqueeze(content_img, axis=0)

+ style_img = paddle.unsqueeze(style_img, axis=0)

+ return content_img, style_img, h, w

+

+

+def tensor_resample(tensor, dst_size, mode='bilinear'):

+ return F.interpolate(tensor, dst_size, mode=mode, align_corners=False)

+

+

+def laplacian(x):

+ """

+ Laplacian

+

+ return:

+ x - upsample(downsample(x))

+ """

+ return x - tensor_resample(tensor_resample(x, [x.shape[2] // 2, x.shape[3] // 2]), [x.shape[2], x.shape[3]])

+

+

+def make_laplace_pyramid(x, levels):

+ """

+ Make Laplacian Pyramid

+ """

+ pyramid = []

+ current = x

+ for i in range(levels):

+ pyramid.append(laplacian(current))

+ current = tensor_resample(current, (max(current.shape[2] // 2, 1), max(current.shape[3] // 2, 1)))

+ pyramid.append(current)

+ return pyramid

+

+

+def fold_laplace_pyramid(pyramid):

+ """

+ Fold Laplacian Pyramid

+ """

+ current = pyramid[-1]

+ for i in range(len(pyramid) - 2, -1, -1): # iterate from len-2 to 0

+ up_h, up_w = pyramid[i].shape[2], pyramid[i].shape[3]

+ current = pyramid[i] + tensor_resample(current, (up_h, up_w))

+ return current

+

+

+class LapStylePredictor:

+ def __init__(self, weight_path=None):

+

+ self.net_enc = Encoder()

+ self.net_dec = DecoderNet()

+ self.net_rev = RevisionNet()

+ self.net_rev_2 = RevisionNet()

+

+ self.net_enc.set_dict(paddle.load(weight_path)['net_enc'])

+ self.net_enc.eval()

+ self.net_dec.set_dict(paddle.load(weight_path)['net_dec'])

+ self.net_dec.eval()

+ self.net_rev.set_dict(paddle.load(weight_path)['net_rev'])

+ self.net_rev.eval()

+ self.net_rev_2.set_dict(paddle.load(weight_path)['net_rev_2'])

+ self.net_rev_2.eval()

+

+ def run(self, content_img, style_image):

+ content_img, style_img, h, w = img_totensor(content_img, style_image)

+ pyr_ci = make_laplace_pyramid(content_img, 2)

+ pyr_si = make_laplace_pyramid(style_img, 2)

+ pyr_ci.append(content_img)

+ pyr_si.append(style_img)

+ cF = self.net_enc(pyr_ci[2])

+ sF = self.net_enc(pyr_si[2])

+ stylized_small = self.net_dec(cF, sF)

+ stylized_up = F.interpolate(stylized_small, scale_factor=2)

+

+ revnet_input = paddle.concat(x=[pyr_ci[1], stylized_up], axis=1)

+ stylized_rev_lap = self.net_rev(revnet_input)

+ stylized_rev = fold_laplace_pyramid([stylized_rev_lap, stylized_small])

+

+ stylized_up = F.interpolate(stylized_rev, scale_factor=2)

+

+ revnet_input = paddle.concat(x=[pyr_ci[0], stylized_up], axis=1)

+ stylized_rev_lap_second = self.net_rev_2(revnet_input)

+ stylized_rev_second = fold_laplace_pyramid([stylized_rev_lap_second, stylized_rev_lap, stylized_small])

+

+ stylized = stylized_rev_second

+ stylized_visual = tensor2img(stylized, min_max=(0., 1.))

+

+ return stylized_visual

diff --git a/modules/image/Image_gan/style_transfer/lapstyle_starrynew/module.py b/modules/image/Image_gan/style_transfer/lapstyle_starrynew/module.py

new file mode 100644

index 0000000000000000000000000000000000000000..b6cdab72eb2d4c89bd53c5ba3a63adcbc061acc3

--- /dev/null

+++ b/modules/image/Image_gan/style_transfer/lapstyle_starrynew/module.py

@@ -0,0 +1,148 @@

+# Copyright (c) 2021 PaddlePaddle Authors. All Rights Reserved.

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+import argparse

+import copy

+import os

+

+import cv2

+import numpy as np

+import paddle

+from skimage.io import imread

+from skimage.transform import rescale

+from skimage.transform import resize

+

+import paddlehub as hub

+from .model import LapStylePredictor

+from .util import base64_to_cv2

+from paddlehub.module.module import moduleinfo

+from paddlehub.module.module import runnable

+from paddlehub.module.module import serving

+

+

+@moduleinfo(

+ name="lapstyle_starrynew",

+ type="CV/style_transfer",

+ author="paddlepaddle",

+ author_email="",

+ summary="",

+ version="1.0.0")

+class Lapstyle_starrynew:

+ def __init__(self):

+ self.pretrained_model = os.path.join(self.directory, "lapstyle_starrynew.pdparams")

+

+ self.network = LapStylePredictor(weight_path=self.pretrained_model)

+

+ def style_transfer(self,

+ images: list = None,

+ paths: list = None,

+ output_dir: str = './transfer_result/',

+ use_gpu: bool = False,

+ visualization: bool = True):

+ '''

+ Transfer a image to starrynew style.

+

+ images (list[dict]): data of images, each element is a dict:

+ - content (numpy.ndarray): input image,shape is \[H, W, C\],BGR format;

+ - style (numpy.ndarray) : style image,shape is \[H, W, C\],BGR format;

+ paths (list[dict]): paths to images, eacg element is a dict:

+ - content (str): path to input image;

+ - style (str) : path to style image;

+ output_dir (str): the dir to save the results

+ use_gpu (bool): if True, use gpu to perform the computation, otherwise cpu.

+ visualization (bool): if True, save results in output_dir.

+ '''

+ results = []

+ paddle.disable_static()

+ place = 'gpu:0' if use_gpu else 'cpu'

+ place = paddle.set_device(place)

+ if images == None and paths == None:

+ print('No image provided. Please input an image or a image path.')

+ return

+

+ if images != None:

+ for image_dict in images:

+ content_img = image_dict['content']

+ style_img = image_dict['style']

+ results.append(self.network.run(content_img, style_img))

+

+ if paths != None:

+ for path_dict in paths:

+ content_img = cv2.imread(path_dict['content'])

+ style_img = cv2.imread(path_dict['style'])

+ results.append(self.network.run(content_img, style_img))

+

+ if visualization == True:

+ if not os.path.exists(output_dir):

+ os.makedirs(output_dir, exist_ok=True)

+ for i, out in enumerate(results):

+ cv2.imwrite(os.path.join(output_dir, 'output_{}.png'.format(i)), out[:, :, ::-1])

+

+ return results

+

+ @runnable

+ def run_cmd(self, argvs: list):

+ """

+ Run as a command.

+ """

+ self.parser = argparse.ArgumentParser(

+ description="Run the {} module.".format(self.name),

+ prog='hub run {}'.format(self.name),

+ usage='%(prog)s',

+ add_help=True)

+

+ self.arg_input_group = self.parser.add_argument_group(title="Input options", description="Input data. Required")

+ self.arg_config_group = self.parser.add_argument_group(

+ title="Config options", description="Run configuration for controlling module behavior, not required.")

+ self.add_module_config_arg()

+ self.add_module_input_arg()

+ self.args = self.parser.parse_args(argvs)

+

+ self.style_transfer(

+ paths=[{

+ 'content': self.args.content,

+ 'style': self.args.style

+ }],

+ output_dir=self.args.output_dir,

+ use_gpu=self.args.use_gpu,

+ visualization=self.args.visualization)

+

+ @serving

+ def serving_method(self, images, **kwargs):

+ """

+ Run as a service.

+ """

+ images_decode = copy.deepcopy(images)

+ for image in images_decode:

+ image['content'] = base64_to_cv2(image['content'])

+ image['style'] = base64_to_cv2(image['style'])

+ results = self.style_transfer(images_decode, **kwargs)

+ tolist = [result.tolist() for result in results]

+ return tolist

+

+ def add_module_config_arg(self):

+ """

+ Add the command config options.

+ """

+ self.arg_config_group.add_argument('--use_gpu', action='store_true', help="use GPU or not")

+

+ self.arg_config_group.add_argument(