Merge branch 'develop' of https://github.com/PaddlePaddle/Paddle into prepare_pserver_executor

cmake/external/threadpool.cmake

0 → 100644

175.1 KB

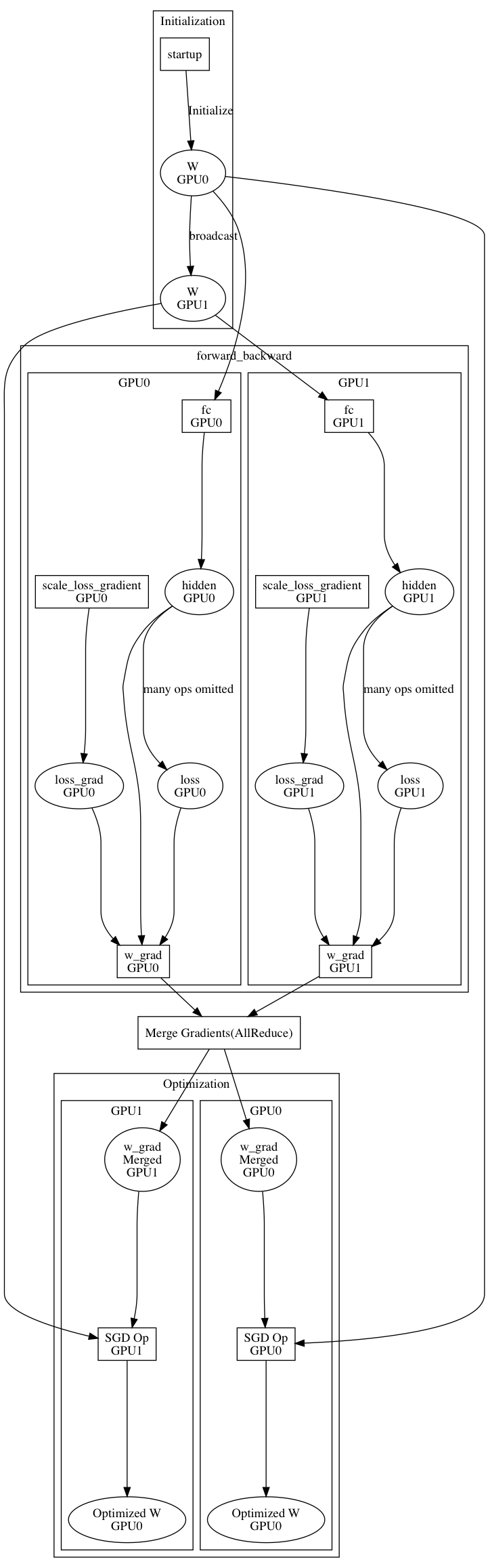

doc/design/parallel_executor.md

0 → 100644

doc/fluid/api/CMakeLists.txt

0 → 100644

133.4 KB

83.6 KB

doc/fluid/dev/api_doc_std_en.md

0 → 100644

File moved

File moved

File moved

File moved

File moved

File moved

File moved