Merge branch 'develop' of https://github.com/PaddlePaddle/Paddle into feature/non_layer_api_doc

Showing

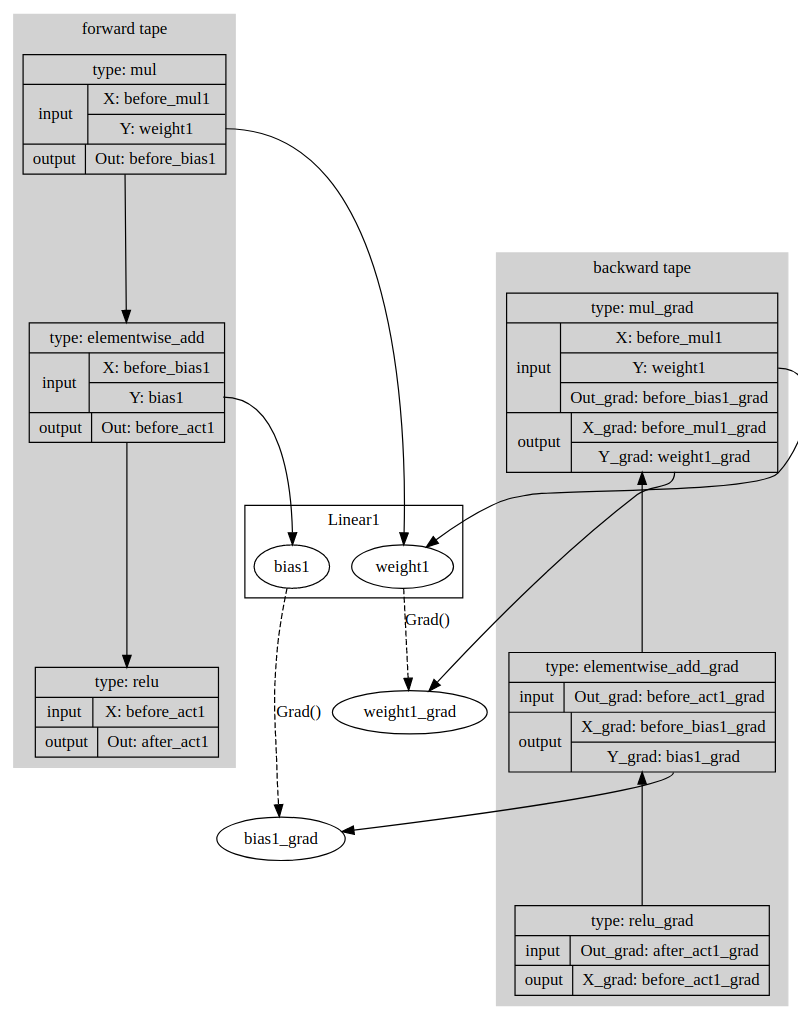

paddle/contrib/tape/README.md

Deleted

100644 → 0

94.4 KB

paddle/contrib/tape/tape.cc

Deleted

100644 → 0

tools/print_signatures.py

0 → 100644