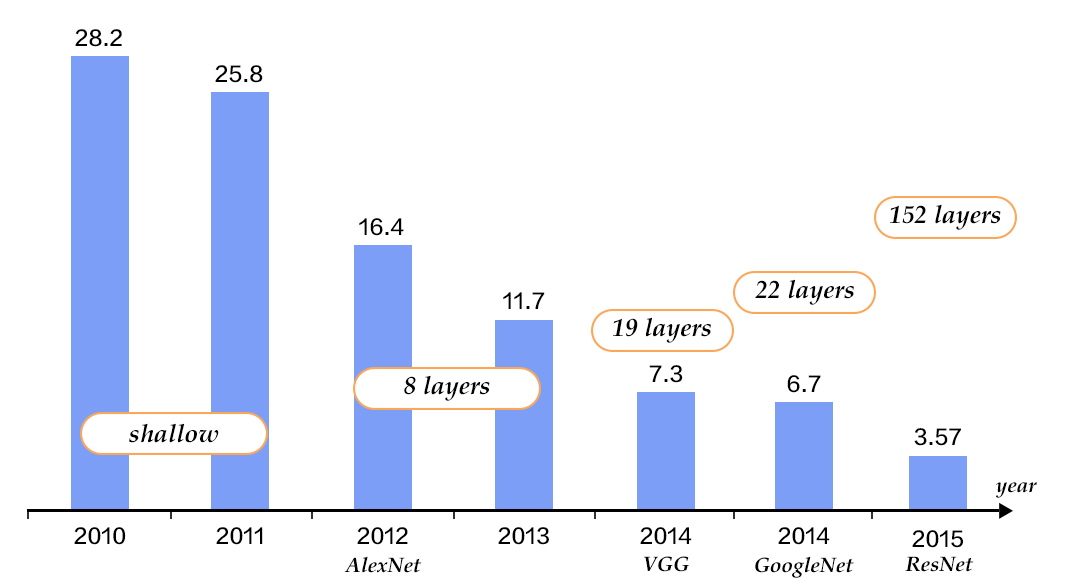

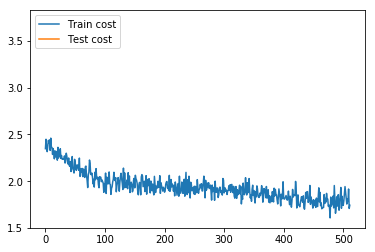

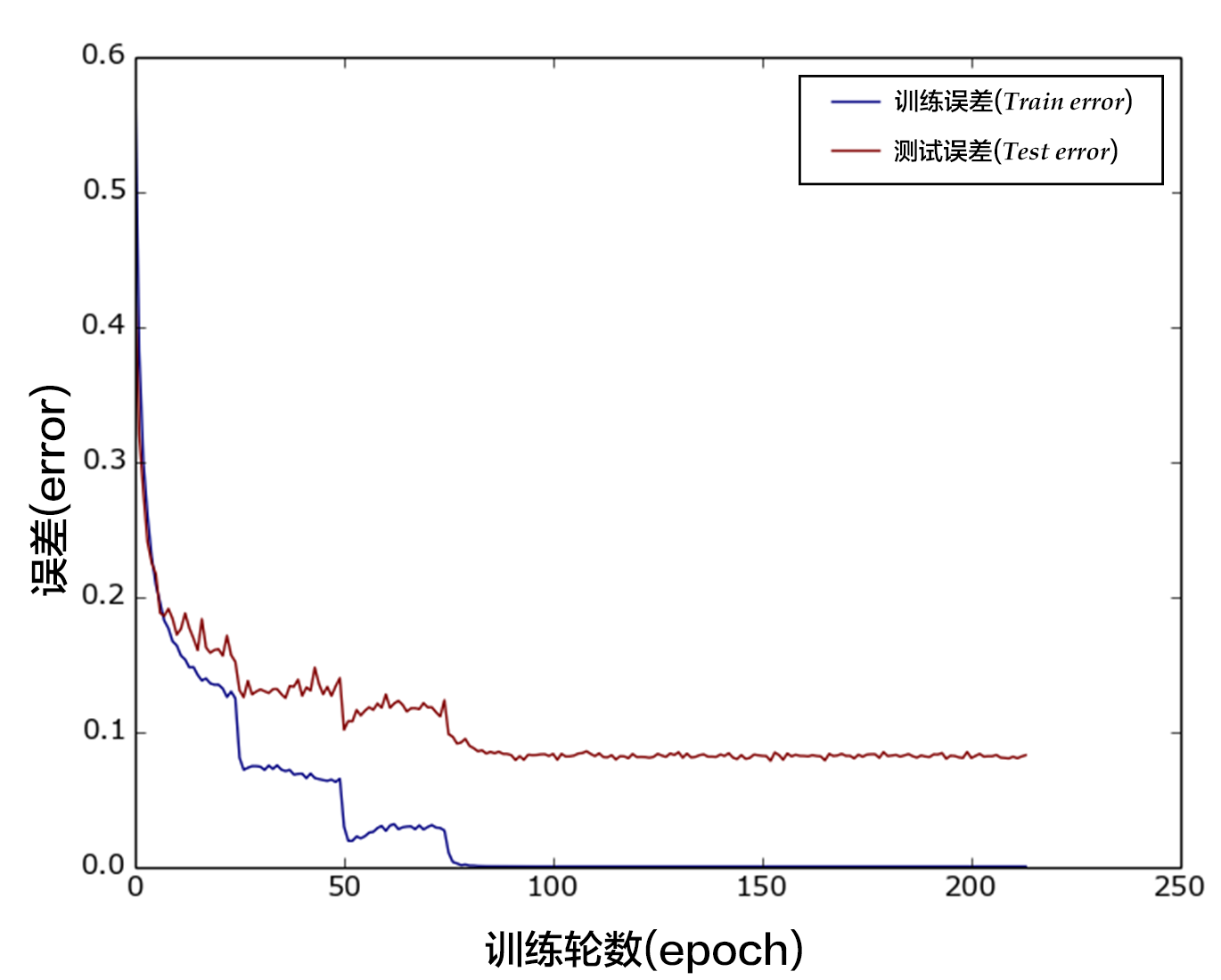

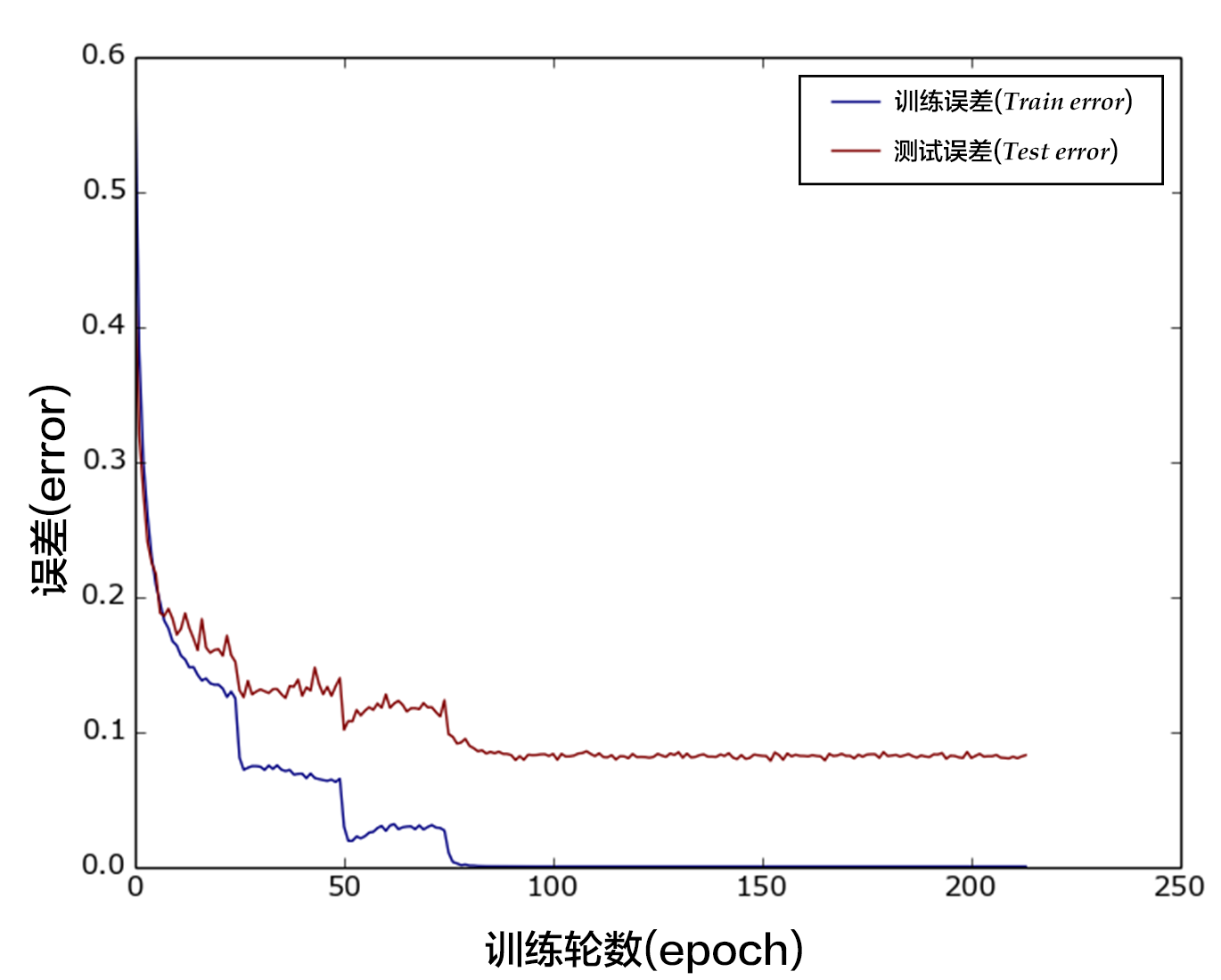

-图12. CIFAR10数据集上VGG模型的分类错误率

+

+图13. CIFAR10数据集上VGG模型的分类错误率

## 应用模型

@@ -471,19 +488,19 @@ import numpy as np

import os

def load_image(file):

-im = Image.open(file)

-im = im.resize((32, 32), Image.ANTIALIAS)

+ im = Image.open(file)

+ im = im.resize((32, 32), Image.ANTIALIAS)

-im = np.array(im).astype(np.float32)

-# The storage order of the loaded image is W(width),

-# H(height), C(channel). PaddlePaddle requires

-# the CHW order, so transpose them.

-im = im.transpose((2, 0, 1)) # CHW

-im = im / 255.0

+ im = np.array(im).astype(np.float32)

+ # The storage order of the loaded image is W(width),

+ # H(height), C(channel). PaddlePaddle requires

+ # the CHW order, so transpose them.

+ im = im.transpose((2, 0, 1)) # CHW

+ im = im / 255.0

-# Add one dimension to mimic the list format.

-im = numpy.expand_dims(im, axis=0)

-return im

+ # Add one dimension to mimic the list format.

+ im = numpy.expand_dims(im, axis=0)

+ return im

cur_dir = os.getcwd()

img = load_image(cur_dir + '/image/dog.png')

@@ -497,11 +514,11 @@ img = load_image(cur_dir + '/image/dog.png')

```python

inferencer = fluid.Inferencer(

-infer_func=inference_program, param_path=params_dirname, place=place)

-

+ infer_func=inference_program, param_path=params_dirname, place=place)

+label_list = ["airplane", "automobile", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck"]

# inference

results = inferencer.infer({'pixel': img})

-print("infer results: ", results)

+print("infer results: %s" % label_list[np.argmax(results[0])])

```

## 总结

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/dog.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/dog.png

deleted file mode 100644

index ca8f858a902ea723d886d2b88c2c0a1005301c50..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/dog.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/dog_cat.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/dog_cat.png

deleted file mode 100644

index 38b21f21604b1bb84fc3f6aa96bd5fce45d15a55..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/dog_cat.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/fea_conv0.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/fea_conv0.png

deleted file mode 100644

index 647c822e52cd55d50e5f207978f5e6ada86cf34c..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/fea_conv0.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/flowers.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/flowers.png

deleted file mode 100644

index 04245cef60fe7126ae4c92ba8085273965078bee..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/flowers.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/googlenet.jpeg b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/googlenet.jpeg

deleted file mode 100644

index 249dbf96df61c3352ea5bd80470f6c4a1e03ff10..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/googlenet.jpeg and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/ilsvrc.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/ilsvrc.png

deleted file mode 100644

index 4660ac122e9d533023a21154d35eee29e3b08d27..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/ilsvrc.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/inception.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/inception.png

deleted file mode 100644

index 9591a0c1e8c0165c40ca560be35a7b9a91cd5027..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/inception.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/lenet.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/lenet.png

deleted file mode 100644

index 77f785e03bacd38c4c64a817874a58ff3298d2f3..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/lenet.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/plot.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/plot.png

deleted file mode 100644

index 57e45cc0c27dd99b9918de2ff1228bc6b65f7424..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/plot.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/resnet.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/resnet.png

deleted file mode 100644

index 0aeb4f254639fdbf18e916dc219ca61602596d85..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/resnet.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/resnet_block.jpg b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/resnet_block.jpg

deleted file mode 100644

index c500eb01a90190ff66150871fe83ec275e2de8d7..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/resnet_block.jpg and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/train_and_test.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/train_and_test.png

deleted file mode 100644

index c6336a9a69b95dc978719ce68896e3e752e67fed..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/train_and_test.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/vgg16.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/vgg16.png

deleted file mode 100644

index 6270eefcfd7071bc1643ee06567e5b81aaf4c177..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/vgg16.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/index.rst b/doc/fluid/new_docs/beginners_guide/basics/index.rst

index d16f8b947253a535567ddc8d7b227dd153d9b154..0fcb008e0a7773e81e5124da09fe07366130b924 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/index.rst

+++ b/doc/fluid/new_docs/beginners_guide/basics/index.rst

@@ -10,9 +10,9 @@

.. toctree::

:maxdepth: 2

- image_classification/index.md

- word2vec/index.md

- recommender_system/index.md

- understand_sentiment/index.md

- label_semantic_roles/index.md

- machine_translation/index.md

+ image_classification/README.cn.md

+ word2vec/README.cn.md

+ recommender_system/README.cn.md

+ understand_sentiment/README.cn.md

+ label_semantic_roles/README.cn.md

+ machine_translation/README.cn.md

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/index.md b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/README.cn.md

similarity index 54%

rename from doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/index.md

rename to doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/README.cn.md

index 828ca738317992270487647e66b08b6d2f80e209..545f6002f23c1c698bd0d77fb39a8797ff7f5bde 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/index.md

+++ b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/README.cn.md

@@ -1,6 +1,6 @@

# 语义角色标注

-本教程源代码目录在[book/label_semantic_roles](https://github.com/PaddlePaddle/book/tree/develop/07.label_semantic_roles), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书)。

+本教程源代码目录在[book/label_semantic_roles](https://github.com/PaddlePaddle/book/tree/develop/07.label_semantic_roles), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书),更多内容请参考本教程的[视频课堂](http://bit.baidu.com/course/detail/id/178.html)。

## 背景介绍

@@ -8,7 +8,7 @@

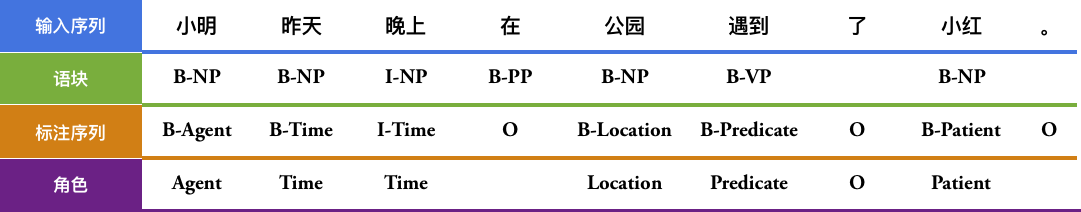

请看下面的例子,“遇到” 是谓词(Predicate,通常简写为“Pred”),“小明”是施事者(Agent),“小红”是受事者(Patient),“昨天” 是事件发生的时间(Time),“公园”是事情发生的地点(Location)。

-$$\mbox{[小明]}_{\mbox{Agent}}\mbox{[昨天]}_{\mbox{Time}}\mbox{[晚上]}_{\mbox{Time}}\mbox{在[公园]}_{\mbox{Location}}\mbox{[遇到]}_{\mbox{Predicate}}\mbox{了[小红]}_{\mbox{Patient}}\mbox{。}$$

+$$\mbox{[小明]}_{\mbox{Agent}}\mbox{[昨天]}_{\mbox{Time}}\mbox{[晚上]}_\mbox{Time}\mbox{在[公园]}_{\mbox{Location}}\mbox{[遇到]}_{\mbox{Predicate}}\mbox{了[小红]}_{\mbox{Patient}}\mbox{。}$$

语义角色标注(Semantic Role Labeling,SRL)以句子的谓词为中心,不对句子所包含的语义信息进行深入分析,只分析句子中各成分与谓词之间的关系,即句子的谓词(Predicate)- 论元(Argument)结构,并用语义角色来描述这些结构关系,是许多自然语言理解任务(如信息抽取,篇章分析,深度问答等)的一个重要中间步骤。在研究中一般都假定谓词是给定的,所要做的就是找出给定谓词的各个论元和它们的语义角色。

@@ -20,17 +20,17 @@ $$\mbox{[小明]}_{\mbox{Agent}}\mbox{[昨天]}_{\mbox{Time}}\mbox{[晚上]}_{\m

4. 论元识别:这个过程是从上一步剪除之后的候选中判断哪些是真正的论元,通常当做一个二分类问题来解决。

5. 对第4步的结果,通过多分类得到论元的语义角色标签。可以看到,句法分析是基础,并且后续步骤常常会构造的一些人工特征,这些特征往往也来自句法分析。

-

+

+

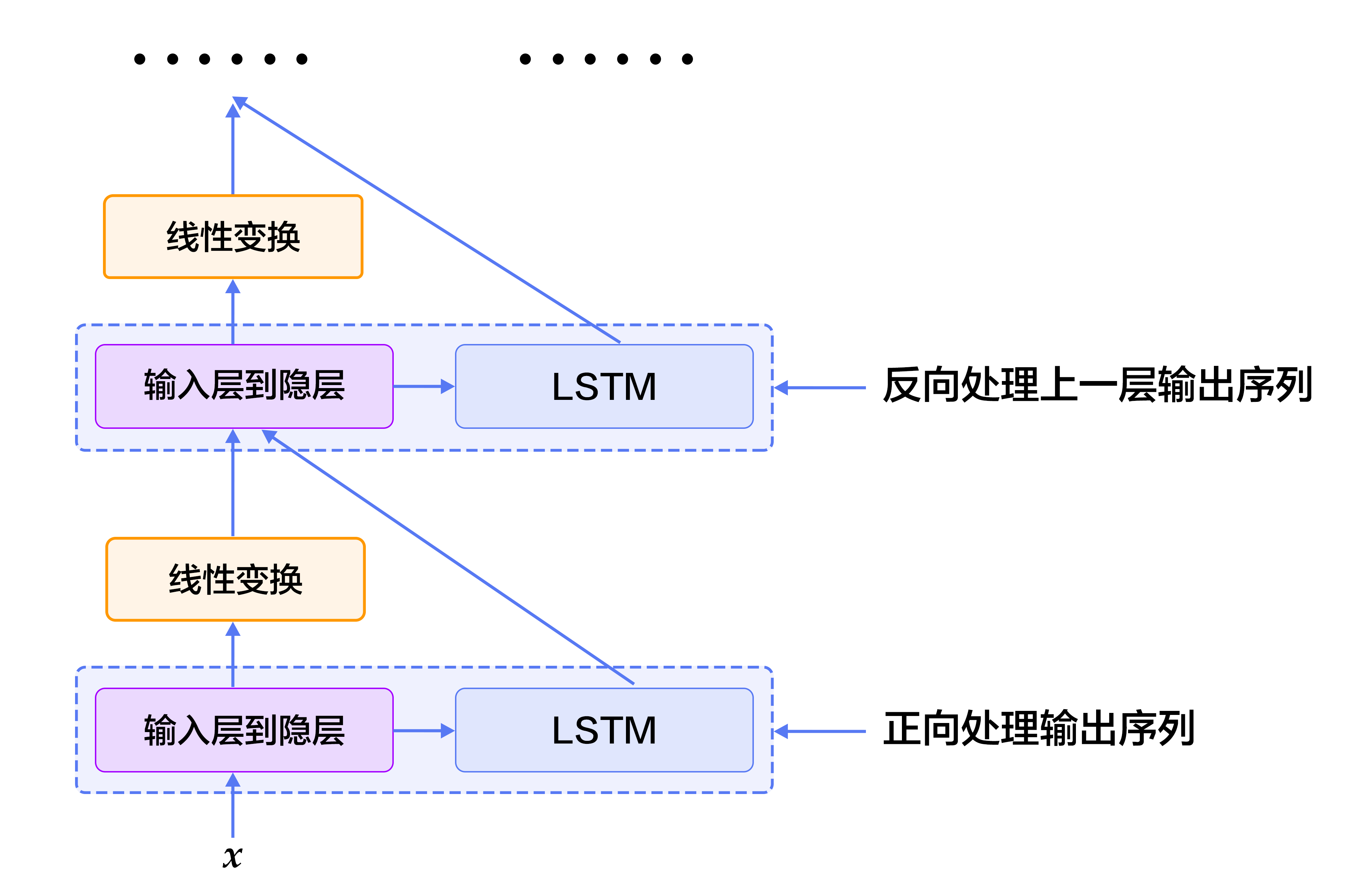

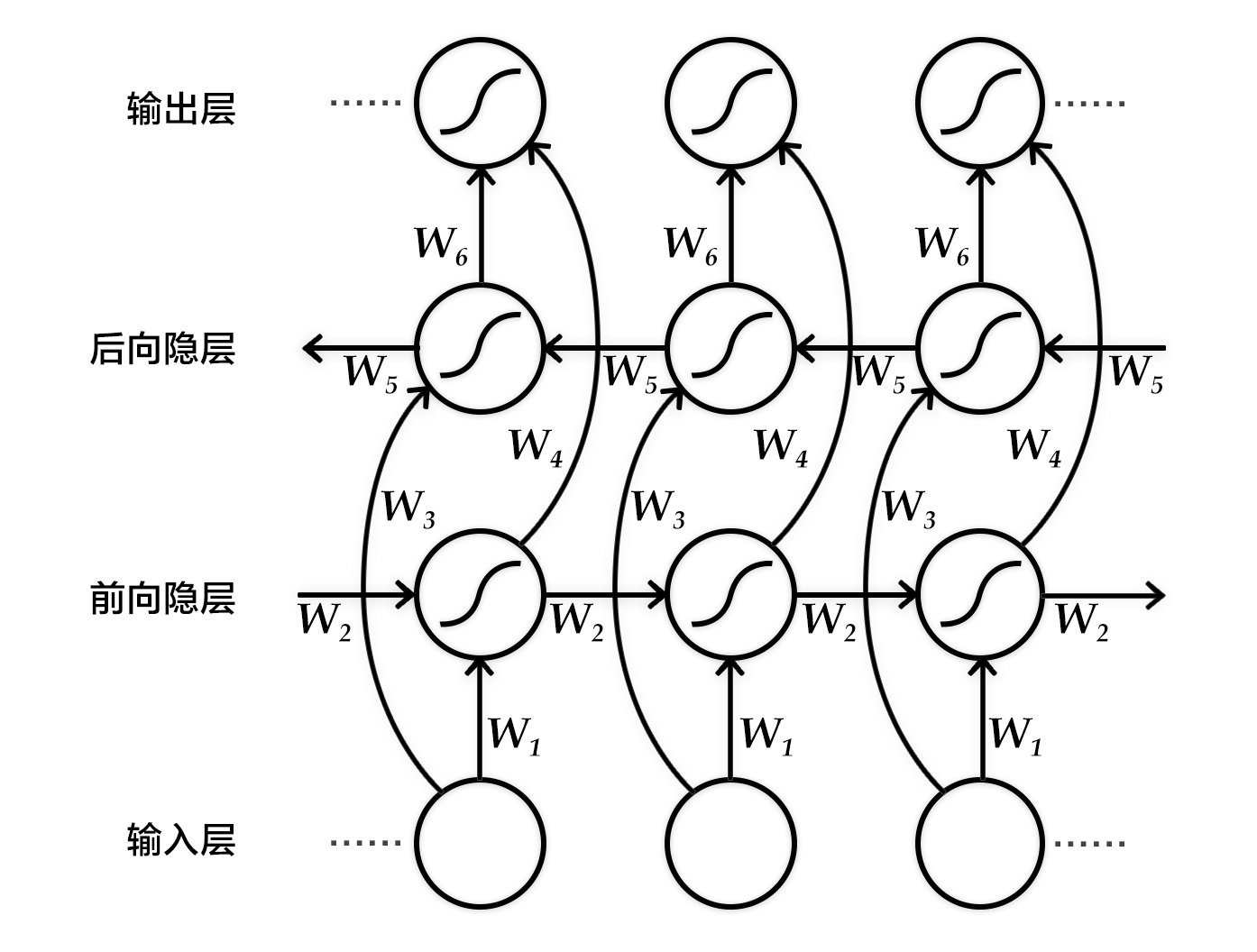

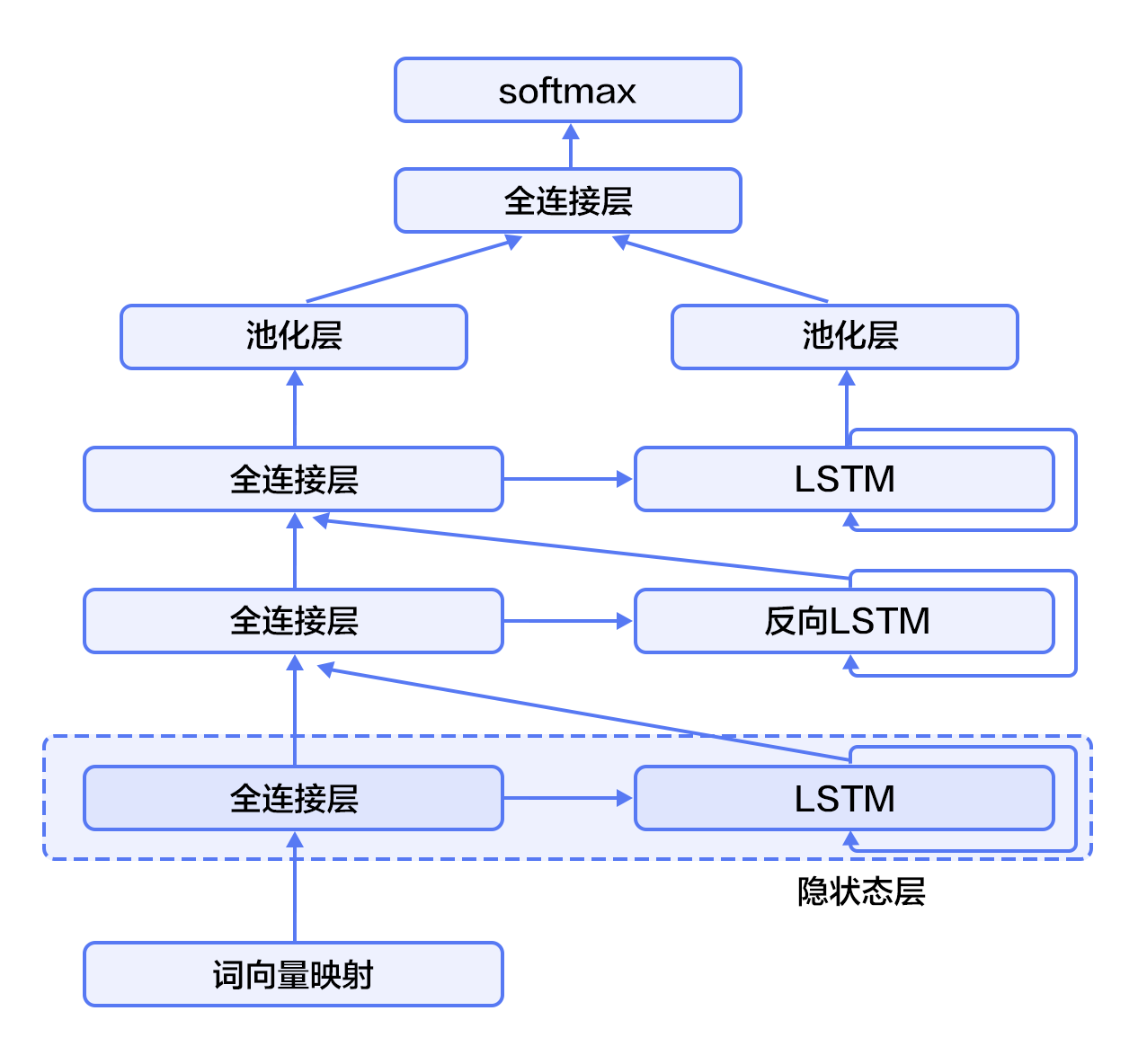

图6. SRL任务上的深层双向LSTM模型

@@ -137,8 +137,8 @@ $$\DeclareMathOperator*{\argmax}{arg\,max} L(\lambda, D) = - \text{log}\left(\pr

```text

conll05st-release/

└── test.wsj

-├── props # 标注结果

-└── words # 输入文本序列

+ ├── props # 标注结果

+ └── words # 输入文本序列

```

标注信息源自Penn TreeBank\[[7](#参考文献)\]和PropBank\[[8](#参考文献)\]的标注结果。PropBank标注结果的标签和我们在文章一开始示例中使用的标注结果标签不同,但原理是相同的,关于标注结果标签含义的说明,请参考论文\[[9](#参考文献)\]。

@@ -146,19 +146,11 @@ conll05st-release/

原始数据需要进行数据预处理才能被PaddlePaddle处理,预处理包括下面几个步骤:

1. 将文本序列和标记序列其合并到一条记录中;

-2. 一个句子如果含有`$n$`个谓词,这个句子会被处理`$n$`次,变成`$n$`条独立的训练样本,每个样本一个不同的谓词;

+2. 一个句子如果含有$n$个谓词,这个句子会被处理$n$次,变成$n$条独立的训练样本,每个样本一个不同的谓词;

3. 抽取谓词上下文和构造谓词上下文区域标记;

4. 构造以BIO法表示的标记;

5. 依据词典获取词对应的整数索引。

-

-```python

-# import paddle.v2.dataset.conll05 as conll05

-# conll05.corpus_reader函数完成上面第1步和第2步.

-# conll05.reader_creator函数完成上面第3步到第5步.

-# conll05.test函数可以获取处理之后的每条样本来供PaddlePaddle训练.

-```

-

预处理完成之后一条训练样本包含9个特征,分别是:句子序列、谓词、谓词上下文(占 5 列)、谓词上下区域标志、标注序列。下表是一条训练样本的示例。

| 句子序列 | 谓词 | 谓词上下文(窗口 = 5) | 谓词上下文区域标记 | 标注序列 |

@@ -187,6 +179,8 @@ conll05st-release/

获取词典,打印词典大小:

```python

+from __future__ import print_function

+

import math, os

import numpy as np

import paddle

@@ -201,9 +195,9 @@ word_dict_len = len(word_dict)

label_dict_len = len(label_dict)

pred_dict_len = len(verb_dict)

-print word_dict_len

-print label_dict_len

-print pred_dict_len

+print('word_dict_len: ', word_dict_len)

+print('label_dict_len: ', label_dict_len)

+print('pred_dict_len: ', pred_dict_len)

```

## 模型配置说明

@@ -232,96 +226,96 @@ embedding_name = 'emb'

```python

# 这里加载PaddlePaddle上版保存的二进制模型

def load_parameter(file_name, h, w):

-with open(file_name, 'rb') as f:

-f.read(16) # skip header.

-return np.fromfile(f, dtype=np.float32).reshape(h, w)

+ with open(file_name, 'rb') as f:

+ f.read(16) # skip header.

+ return np.fromfile(f, dtype=np.float32).reshape(h, w)

```

- 8个LSTM单元以“正向/反向”的顺序对所有输入序列进行学习。

-```python

+```python

def db_lstm(word, predicate, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2, mark,

-**ignored):

-# 8 features

-predicate_embedding = fluid.layers.embedding(

-input=predicate,

-size=[pred_dict_len, word_dim],

-dtype='float32',

-is_sparse=IS_SPARSE,

-param_attr='vemb')

-

-mark_embedding = fluid.layers.embedding(

-input=mark,

-size=[mark_dict_len, mark_dim],

-dtype='float32',

-is_sparse=IS_SPARSE)

-

-word_input = [word, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2]

-# Since word vector lookup table is pre-trained, we won't update it this time.

-# trainable being False prevents updating the lookup table during training.

-emb_layers = [

-fluid.layers.embedding(

-size=[word_dict_len, word_dim],

-input=x,

-param_attr=fluid.ParamAttr(

-name=embedding_name, trainable=False)) for x in word_input

-]

-emb_layers.append(predicate_embedding)

-emb_layers.append(mark_embedding)

-

-# 8 LSTM units are trained through alternating left-to-right / right-to-left order

-# denoted by the variable `reverse`.

-hidden_0_layers = [

-fluid.layers.fc(input=emb, size=hidden_dim, act='tanh')

-for emb in emb_layers

-]

-

-hidden_0 = fluid.layers.sums(input=hidden_0_layers)

-

-lstm_0 = fluid.layers.dynamic_lstm(

-input=hidden_0,

-size=hidden_dim,

-candidate_activation='relu',

-gate_activation='sigmoid',

-cell_activation='sigmoid')

-

-# stack L-LSTM and R-LSTM with direct edges

-input_tmp = [hidden_0, lstm_0]

-

-# In PaddlePaddle, state features and transition features of a CRF are implemented

-# by a fully connected layer and a CRF layer seperately. The fully connected layer

-# with linear activation learns the state features, here we use fluid.layers.sums

-# (fluid.layers.fc can be uesed as well), and the CRF layer in PaddlePaddle:

-# fluid.layers.linear_chain_crf only

-# learns the transition features, which is a cost layer and is the last layer of the network.

-# fluid.layers.linear_chain_crf outputs the log probability of true tag sequence

-# as the cost by given the input sequence and it requires the true tag sequence

-# as target in the learning process.

-

-for i in range(1, depth):

-mix_hidden = fluid.layers.sums(input=[

-fluid.layers.fc(input=input_tmp[0], size=hidden_dim, act='tanh'),

-fluid.layers.fc(input=input_tmp[1], size=hidden_dim, act='tanh')

-])

-

-lstm = fluid.layers.dynamic_lstm(

-input=mix_hidden,

-size=hidden_dim,

-candidate_activation='relu',

-gate_activation='sigmoid',

-cell_activation='sigmoid',

-is_reverse=((i % 2) == 1))

-

-input_tmp = [mix_hidden, lstm]

-

-# 取最后一个栈式LSTM的输出和这个LSTM单元的输入到隐层映射,

-# 经过一个全连接层映射到标记字典的维度,来学习 CRF 的状态特征

-feature_out = fluid.layers.sums(input=[

-fluid.layers.fc(input=input_tmp[0], size=label_dict_len, act='tanh'),

-fluid.layers.fc(input=input_tmp[1], size=label_dict_len, act='tanh')

-])

-

-return feature_out

+ **ignored):

+ # 8 features

+ predicate_embedding = fluid.layers.embedding(

+ input=predicate,

+ size=[pred_dict_len, word_dim],

+ dtype='float32',

+ is_sparse=IS_SPARSE,

+ param_attr='vemb')

+

+ mark_embedding = fluid.layers.embedding(

+ input=mark,

+ size=[mark_dict_len, mark_dim],

+ dtype='float32',

+ is_sparse=IS_SPARSE)

+

+ word_input = [word, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2]

+ # Since word vector lookup table is pre-trained, we won't update it this time.

+ # trainable being False prevents updating the lookup table during training.

+ emb_layers = [

+ fluid.layers.embedding(

+ size=[word_dict_len, word_dim],

+ input=x,

+ param_attr=fluid.ParamAttr(

+ name=embedding_name, trainable=False)) for x in word_input

+ ]

+ emb_layers.append(predicate_embedding)

+ emb_layers.append(mark_embedding)

+

+ # 8 LSTM units are trained through alternating left-to-right / right-to-left order

+ # denoted by the variable `reverse`.

+ hidden_0_layers = [

+ fluid.layers.fc(input=emb, size=hidden_dim, act='tanh')

+ for emb in emb_layers

+ ]

+

+ hidden_0 = fluid.layers.sums(input=hidden_0_layers)

+

+ lstm_0 = fluid.layers.dynamic_lstm(

+ input=hidden_0,

+ size=hidden_dim,

+ candidate_activation='relu',

+ gate_activation='sigmoid',

+ cell_activation='sigmoid')

+

+ # stack L-LSTM and R-LSTM with direct edges

+ input_tmp = [hidden_0, lstm_0]

+

+ # In PaddlePaddle, state features and transition features of a CRF are implemented

+ # by a fully connected layer and a CRF layer seperately. The fully connected layer

+ # with linear activation learns the state features, here we use fluid.layers.sums

+ # (fluid.layers.fc can be uesed as well), and the CRF layer in PaddlePaddle:

+ # fluid.layers.linear_chain_crf only

+ # learns the transition features, which is a cost layer and is the last layer of the network.

+ # fluid.layers.linear_chain_crf outputs the log probability of true tag sequence

+ # as the cost by given the input sequence and it requires the true tag sequence

+ # as target in the learning process.

+

+ for i in range(1, depth):

+ mix_hidden = fluid.layers.sums(input=[

+ fluid.layers.fc(input=input_tmp[0], size=hidden_dim, act='tanh'),

+ fluid.layers.fc(input=input_tmp[1], size=hidden_dim, act='tanh')

+ ])

+

+ lstm = fluid.layers.dynamic_lstm(

+ input=mix_hidden,

+ size=hidden_dim,

+ candidate_activation='relu',

+ gate_activation='sigmoid',

+ cell_activation='sigmoid',

+ is_reverse=((i % 2) == 1))

+

+ input_tmp = [mix_hidden, lstm]

+

+ # 取最后一个栈式LSTM的输出和这个LSTM单元的输入到隐层映射,

+ # 经过一个全连接层映射到标记字典的维度,来学习 CRF 的状态特征

+ feature_out = fluid.layers.sums(input=[

+ fluid.layers.fc(input=input_tmp[0], size=label_dict_len, act='tanh'),

+ fluid.layers.fc(input=input_tmp[1], size=label_dict_len, act='tanh')

+ ])

+

+ return feature_out

```

## 训练模型

@@ -338,116 +332,116 @@ return feature_out

```python

def train(use_cuda, save_dirname=None, is_local=True):

-# define network topology

-

-# 句子序列

-word = fluid.layers.data(

-name='word_data', shape=[1], dtype='int64', lod_level=1)

-

-# 谓词

-predicate = fluid.layers.data(

-name='verb_data', shape=[1], dtype='int64', lod_level=1)

-

-# 谓词上下文5个特征

-ctx_n2 = fluid.layers.data(

-name='ctx_n2_data', shape=[1], dtype='int64', lod_level=1)

-ctx_n1 = fluid.layers.data(

-name='ctx_n1_data', shape=[1], dtype='int64', lod_level=1)

-ctx_0 = fluid.layers.data(

-name='ctx_0_data', shape=[1], dtype='int64', lod_level=1)

-ctx_p1 = fluid.layers.data(

-name='ctx_p1_data', shape=[1], dtype='int64', lod_level=1)

-ctx_p2 = fluid.layers.data(

-name='ctx_p2_data', shape=[1], dtype='int64', lod_level=1)

-

-# 谓词上下区域标志

-mark = fluid.layers.data(

-name='mark_data', shape=[1], dtype='int64', lod_level=1)

-

-# define network topology

-feature_out = db_lstm(**locals())

-

-# 标注序列

-target = fluid.layers.data(

-name='target', shape=[1], dtype='int64', lod_level=1)

-

-# 学习 CRF 的转移特征

-crf_cost = fluid.layers.linear_chain_crf(

-input=feature_out,

-label=target,

-param_attr=fluid.ParamAttr(

-name='crfw', learning_rate=mix_hidden_lr))

-

-avg_cost = fluid.layers.mean(crf_cost)

-

-sgd_optimizer = fluid.optimizer.SGD(

-learning_rate=fluid.layers.exponential_decay(

-learning_rate=0.01,

-decay_steps=100000,

-decay_rate=0.5,

-staircase=True))

-

-sgd_optimizer.minimize(avg_cost)

-

-# The CRF decoding layer is used for evaluation and inference.

-# It shares weights with CRF layer. The sharing of parameters among multiple layers

-# is specified by using the same parameter name in these layers. If true tag sequence

-# is provided in training process, `fluid.layers.crf_decoding` calculates labelling error

-# for each input token and sums the error over the entire sequence.

-# Otherwise, `fluid.layers.crf_decoding` generates the labelling tags.

-crf_decode = fluid.layers.crf_decoding(

-input=feature_out, param_attr=fluid.ParamAttr(name='crfw'))

-

-train_data = paddle.batch(

-paddle.reader.shuffle(

-paddle.dataset.conll05.test(), buf_size=8192),

-batch_size=BATCH_SIZE)

-

-place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

-

-

-feeder = fluid.DataFeeder(

-feed_list=[

-word, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2, predicate, mark, target

-],

-place=place)

-exe = fluid.Executor(place)

-

-def train_loop(main_program):

-exe.run(fluid.default_startup_program())

-embedding_param = fluid.global_scope().find_var(

-embedding_name).get_tensor()

-embedding_param.set(

-load_parameter(conll05.get_embedding(), word_dict_len, word_dim),

-place)

-

-start_time = time.time()

-batch_id = 0

-for pass_id in xrange(PASS_NUM):

-for data in train_data():

-cost = exe.run(main_program,

-feed=feeder.feed(data),

-fetch_list=[avg_cost])

-cost = cost[0]

-

-if batch_id % 10 == 0:

-print("avg_cost:" + str(cost))

-if batch_id != 0:

-print("second per batch: " + str((time.time(

-) - start_time) / batch_id))

-# Set the threshold low to speed up the CI test

-if float(cost) < 60.0:

-if save_dirname is not None:

-fluid.io.save_inference_model(save_dirname, [

-'word_data', 'verb_data', 'ctx_n2_data',

-'ctx_n1_data', 'ctx_0_data', 'ctx_p1_data',

-'ctx_p2_data', 'mark_data'

-], [feature_out], exe)

-return

-

-batch_id = batch_id + 1

-

-train_loop(fluid.default_main_program())

+ # define network topology

+

+ # 句子序列

+ word = fluid.layers.data(

+ name='word_data', shape=[1], dtype='int64', lod_level=1)

+

+ # 谓词

+ predicate = fluid.layers.data(

+ name='verb_data', shape=[1], dtype='int64', lod_level=1)

+

+ # 谓词上下文5个特征

+ ctx_n2 = fluid.layers.data(

+ name='ctx_n2_data', shape=[1], dtype='int64', lod_level=1)

+ ctx_n1 = fluid.layers.data(

+ name='ctx_n1_data', shape=[1], dtype='int64', lod_level=1)

+ ctx_0 = fluid.layers.data(

+ name='ctx_0_data', shape=[1], dtype='int64', lod_level=1)

+ ctx_p1 = fluid.layers.data(

+ name='ctx_p1_data', shape=[1], dtype='int64', lod_level=1)

+ ctx_p2 = fluid.layers.data(

+ name='ctx_p2_data', shape=[1], dtype='int64', lod_level=1)

+

+ # 谓词上下区域标志

+ mark = fluid.layers.data(

+ name='mark_data', shape=[1], dtype='int64', lod_level=1)

+

+ # define network topology

+ feature_out = db_lstm(**locals())

+

+ # 标注序列

+ target = fluid.layers.data(

+ name='target', shape=[1], dtype='int64', lod_level=1)

+

+ # 学习 CRF 的转移特征

+ crf_cost = fluid.layers.linear_chain_crf(

+ input=feature_out,

+ label=target,

+ param_attr=fluid.ParamAttr(

+ name='crfw', learning_rate=mix_hidden_lr))

+

+ avg_cost = fluid.layers.mean(crf_cost)

+

+ sgd_optimizer = fluid.optimizer.SGD(

+ learning_rate=fluid.layers.exponential_decay(

+ learning_rate=0.01,

+ decay_steps=100000,

+ decay_rate=0.5,

+ staircase=True))

+

+ sgd_optimizer.minimize(avg_cost)

+

+ # The CRF decoding layer is used for evaluation and inference.

+ # It shares weights with CRF layer. The sharing of parameters among multiple layers

+ # is specified by using the same parameter name in these layers. If true tag sequence

+ # is provided in training process, `fluid.layers.crf_decoding` calculates labelling error

+ # for each input token and sums the error over the entire sequence.

+ # Otherwise, `fluid.layers.crf_decoding` generates the labelling tags.

+ crf_decode = fluid.layers.crf_decoding(

+ input=feature_out, param_attr=fluid.ParamAttr(name='crfw'))

+

+ train_data = paddle.batch(

+ paddle.reader.shuffle(

+ paddle.dataset.conll05.test(), buf_size=8192),

+ batch_size=BATCH_SIZE)

+

+ place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

+

+

+ feeder = fluid.DataFeeder(

+ feed_list=[

+ word, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2, predicate, mark, target

+ ],

+ place=place)

+ exe = fluid.Executor(place)

+

+ def train_loop(main_program):

+ exe.run(fluid.default_startup_program())

+ embedding_param = fluid.global_scope().find_var(

+ embedding_name).get_tensor()

+ embedding_param.set(

+ load_parameter(conll05.get_embedding(), word_dict_len, word_dim),

+ place)

+

+ start_time = time.time()

+ batch_id = 0

+ for pass_id in xrange(PASS_NUM):

+ for data in train_data():

+ cost = exe.run(main_program,

+ feed=feeder.feed(data),

+ fetch_list=[avg_cost])

+ cost = cost[0]

+

+ if batch_id % 10 == 0:

+ print("avg_cost: " + str(cost))

+ if batch_id != 0:

+ print("second per batch: " + str((time.time(

+ ) - start_time) / batch_id))

+ # Set the threshold low to speed up the CI test

+ if float(cost) < 60.0:

+ if save_dirname is not None:

+ fluid.io.save_inference_model(save_dirname, [

+ 'word_data', 'verb_data', 'ctx_n2_data',

+ 'ctx_n1_data', 'ctx_0_data', 'ctx_p1_data',

+ 'ctx_p2_data', 'mark_data'

+ ], [feature_out], exe)

+ return

+

+ batch_id = batch_id + 1

+

+ train_loop(fluid.default_main_program())

```

@@ -457,92 +451,92 @@ train_loop(fluid.default_main_program())

```python

def infer(use_cuda, save_dirname=None):

-if save_dirname is None:

-return

-

-place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

-exe = fluid.Executor(place)

-

-inference_scope = fluid.core.Scope()

-with fluid.scope_guard(inference_scope):

-# Use fluid.io.load_inference_model to obtain the inference program desc,

-# the feed_target_names (the names of variables that will be fed

-# data using feed operators), and the fetch_targets (variables that

-# we want to obtain data from using fetch operators).

-[inference_program, feed_target_names,

-fetch_targets] = fluid.io.load_inference_model(save_dirname, exe)

-

-# Setup inputs by creating LoDTensors to represent sequences of words.

-# Here each word is the basic element of these LoDTensors and the shape of

-# each word (base_shape) should be [1] since it is simply an index to

-# look up for the corresponding word vector.

-# Suppose the length_based level of detail (lod) info is set to [[3, 4, 2]],

-# which has only one lod level. Then the created LoDTensors will have only

-# one higher level structure (sequence of words, or sentence) than the basic

-# element (word). Hence the LoDTensor will hold data for three sentences of

-# length 3, 4 and 2, respectively.

-# Note that lod info should be a list of lists.

-lod = [[3, 4, 2]]

-base_shape = [1]

-# The range of random integers is [low, high]

-word = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-pred = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=pred_dict_len - 1)

-ctx_n2 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_n1 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_0 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_p1 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_p2 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-mark = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=mark_dict_len - 1)

-

-# Construct feed as a dictionary of {feed_target_name: feed_target_data}

-# and results will contain a list of data corresponding to fetch_targets.

-assert feed_target_names[0] == 'word_data'

-assert feed_target_names[1] == 'verb_data'

-assert feed_target_names[2] == 'ctx_n2_data'

-assert feed_target_names[3] == 'ctx_n1_data'

-assert feed_target_names[4] == 'ctx_0_data'

-assert feed_target_names[5] == 'ctx_p1_data'

-assert feed_target_names[6] == 'ctx_p2_data'

-assert feed_target_names[7] == 'mark_data'

-

-results = exe.run(inference_program,

-feed={

-feed_target_names[0]: word,

-feed_target_names[1]: pred,

-feed_target_names[2]: ctx_n2,

-feed_target_names[3]: ctx_n1,

-feed_target_names[4]: ctx_0,

-feed_target_names[5]: ctx_p1,

-feed_target_names[6]: ctx_p2,

-feed_target_names[7]: mark

-},

-fetch_list=fetch_targets,

-return_numpy=False)

-print(results[0].lod())

-np_data = np.array(results[0])

-print("Inference Shape: ", np_data.shape)

+ if save_dirname is None:

+ return

+

+ place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

+ exe = fluid.Executor(place)

+

+ inference_scope = fluid.core.Scope()

+ with fluid.scope_guard(inference_scope):

+ # Use fluid.io.load_inference_model to obtain the inference program desc,

+ # the feed_target_names (the names of variables that will be fed

+ # data using feed operators), and the fetch_targets (variables that

+ # we want to obtain data from using fetch operators).

+ [inference_program, feed_target_names,

+ fetch_targets] = fluid.io.load_inference_model(save_dirname, exe)

+

+ # Setup inputs by creating LoDTensors to represent sequences of words.

+ # Here each word is the basic element of these LoDTensors and the shape of

+ # each word (base_shape) should be [1] since it is simply an index to

+ # look up for the corresponding word vector.

+ # Suppose the length_based level of detail (lod) info is set to [[3, 4, 2]],

+ # which has only one lod level. Then the created LoDTensors will have only

+ # one higher level structure (sequence of words, or sentence) than the basic

+ # element (word). Hence the LoDTensor will hold data for three sentences of

+ # length 3, 4 and 2, respectively.

+ # Note that lod info should be a list of lists.

+ lod = [[3, 4, 2]]

+ base_shape = [1]

+ # The range of random integers is [low, high]

+ word = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=word_dict_len - 1)

+ pred = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=pred_dict_len - 1)

+ ctx_n2 = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=word_dict_len - 1)

+ ctx_n1 = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=word_dict_len - 1)

+ ctx_0 = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=word_dict_len - 1)

+ ctx_p1 = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=word_dict_len - 1)

+ ctx_p2 = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=word_dict_len - 1)

+ mark = fluid.create_random_int_lodtensor(

+ lod, base_shape, place, low=0, high=mark_dict_len - 1)

+

+ # Construct feed as a dictionary of {feed_target_name: feed_target_data}

+ # and results will contain a list of data corresponding to fetch_targets.

+ assert feed_target_names[0] == 'word_data'

+ assert feed_target_names[1] == 'verb_data'

+ assert feed_target_names[2] == 'ctx_n2_data'

+ assert feed_target_names[3] == 'ctx_n1_data'

+ assert feed_target_names[4] == 'ctx_0_data'

+ assert feed_target_names[5] == 'ctx_p1_data'

+ assert feed_target_names[6] == 'ctx_p2_data'

+ assert feed_target_names[7] == 'mark_data'

+

+ results = exe.run(inference_program,

+ feed={

+ feed_target_names[0]: word,

+ feed_target_names[1]: pred,

+ feed_target_names[2]: ctx_n2,

+ feed_target_names[3]: ctx_n1,

+ feed_target_names[4]: ctx_0,

+ feed_target_names[5]: ctx_p1,

+ feed_target_names[6]: ctx_p2,

+ feed_target_names[7]: mark

+ },

+ fetch_list=fetch_targets,

+ return_numpy=False)

+ print(results[0].lod())

+ np_data = np.array(results[0])

+ print("Inference Shape: ", np_data.shape)

```

整个程序的入口如下:

```python

def main(use_cuda, is_local=True):

-if use_cuda and not fluid.core.is_compiled_with_cuda():

-return

+ if use_cuda and not fluid.core.is_compiled_with_cuda():

+ return

-# Directory for saving the trained model

-save_dirname = "label_semantic_roles.inference.model"

+ # Directory for saving the trained model

+ save_dirname = "label_semantic_roles.inference.model"

-train(use_cuda, save_dirname, is_local)

-infer(use_cuda, save_dirname)

+ train(use_cuda, save_dirname, is_local)

+ infer(use_cuda, save_dirname)

main(use_cuda=False)

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bidirectional_stacked_lstm.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bidirectional_stacked_lstm.png

deleted file mode 100644

index e63f5ebd6d00f2e4ecf97b9ab2027e74683013f2..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bidirectional_stacked_lstm.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bidirectional_stacked_lstm_en.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bidirectional_stacked_lstm_en.png

deleted file mode 100755

index f0a195c24d9ee493f96bb93c28a99e70566be7a4..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bidirectional_stacked_lstm_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bio_example.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bio_example.png

deleted file mode 100755

index e5f7151c9fcc50a7cf7af485cbbc7e4fccab0c20..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bio_example.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bio_example_en.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bio_example_en.png

deleted file mode 100755

index 93b44dd4874402ef29ad7bd7d94147609b92e309..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/bio_example_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/db_lstm_network.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/db_lstm_network.png

deleted file mode 100644

index 592f7ee23bdc88a9a35059612e5ab880bbc9d34b..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/db_lstm_network.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/db_lstm_network_en.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/db_lstm_network_en.png

deleted file mode 100755

index c3646312e48db977402fb353dc0c9b4d02269bf4..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/db_lstm_network_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/dependency_parsing.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/dependency_parsing.png

deleted file mode 100755

index 9265b671735940ed6549e2980064d2ce08baae64..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/dependency_parsing.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/dependency_parsing_en.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/dependency_parsing_en.png

deleted file mode 100755

index 23f4f45b603e3d60702af2b2464d10fc8deed061..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/dependency_parsing_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/linear_chain_crf.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/linear_chain_crf.png

deleted file mode 100644

index 0778fda74b2ad22ce4b631791a7b028cdef780a5..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/linear_chain_crf.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/stacked_lstm.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/stacked_lstm.png

deleted file mode 100644

index 3d2914c726b5f4c46e66dfa85d4e88649fede6b3..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/stacked_lstm.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/stacked_lstm_en.png b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/stacked_lstm_en.png

deleted file mode 100755

index 0b944ef91e8b5ba4b14d2a35bd8879f261cf8f61..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/image/stacked_lstm_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/index.md b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/README.cn.md

similarity index 70%

rename from doc/fluid/new_docs/beginners_guide/basics/machine_translation/index.md

rename to doc/fluid/new_docs/beginners_guide/basics/machine_translation/README.cn.md

index fc161aaae9c37b0e1a596204e7138025a98adb1d..3f6efec8841a608638389331152a720cbb3d9735 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/index.md

+++ b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/README.cn.md

@@ -11,10 +11,10 @@

为解决以上问题,统计机器翻译(Statistical Machine Translation, SMT)技术应运而生。在统计机器翻译技术中,转化规则是由机器自动从大规模的语料中学习得到的,而非我们人主动提供规则。因此,它克服了基于规则的翻译系统所面临的知识获取瓶颈的问题,但仍然存在许多挑战:1)人为设计许多特征(feature),但永远无法覆盖所有的语言现象;2)难以利用全局的特征;3)依赖于许多预处理环节,如词语对齐、分词或符号化(tokenization)、规则抽取、句法分析等,而每个环节的错误会逐步累积,对翻译的影响也越来越大。

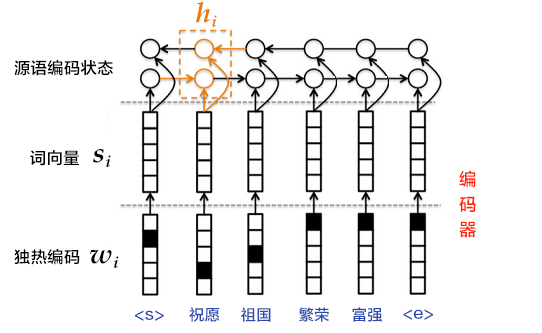

近年来,深度学习技术的发展为解决上述挑战提供了新的思路。将深度学习应用于机器翻译任务的方法大致分为两类:1)仍以统计机器翻译系统为框架,只是利用神经网络来改进其中的关键模块,如语言模型、调序模型等(见图1的左半部分);2)不再以统计机器翻译系统为框架,而是直接用神经网络将源语言映射到目标语言,即端到端的神经网络机器翻译(End-to-End Neural Machine Translation, End-to-End NMT)(见图1的右半部分),简称为NMT模型。

-

-

+

+

图1. 基于神经网络的机器翻译系统

-

+

本教程主要介绍NMT模型,以及如何用PaddlePaddle来训练一个NMT模型。

@@ -30,7 +30,9 @@

1 -6.23177 These are the light of hope and relief .

2 -7.7914 These are the light of hope and the relief of hope .

```

+

- 左起第一列是生成句子的序号;左起第二列是该条句子的得分(从大到小),分值越高越好;左起第三列是生成的英语句子。

+

- 另外有两个特殊标志:``表示句子的结尾,``表示未登录词(unknown word),即未在训练字典中出现的词。

## 模型概览

@@ -43,18 +45,20 @@

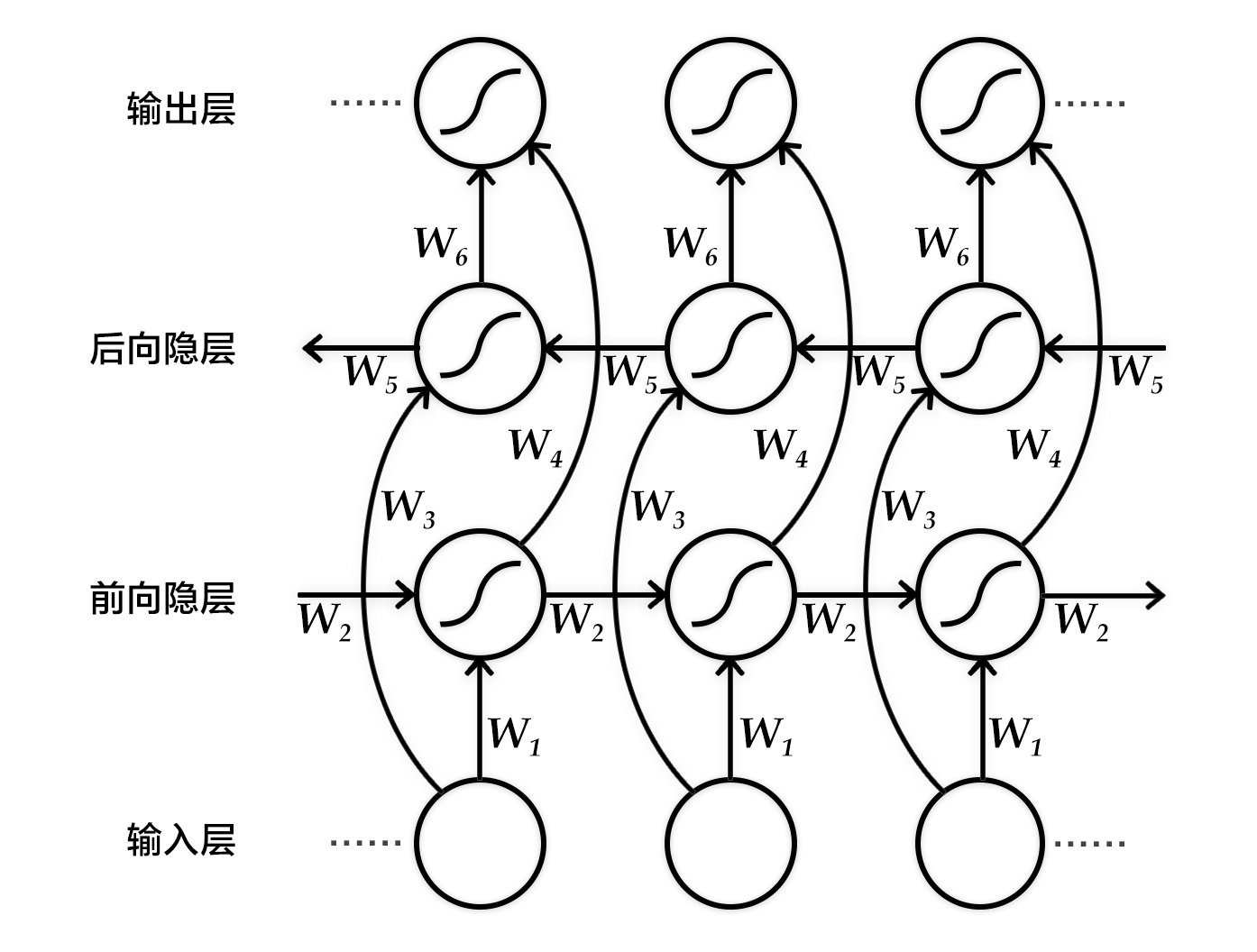

具体来说,该双向循环神经网络分别在时间维以顺序和逆序——即前向(forward)和后向(backward)——依次处理输入序列,并将每个时间步RNN的输出拼接成为最终的输出层。这样每个时间步的输出节点,都包含了输入序列中当前时刻完整的过去和未来的上下文信息。下图展示的是一个按时间步展开的双向循环神经网络。该网络包含一个前向和一个后向RNN,其中有六个权重矩阵:输入到前向隐层和后向隐层的权重矩阵(`$W_1, W_3$`),隐层到隐层自己的权重矩阵(`$W_2,W_5$`),前向隐层和后向隐层到输出层的权重矩阵(`$W_4, W_6$`)。注意,该网络的前向隐层和后向隐层之间没有连接。

-

-

-图3. 按时间步展开的双向循环神经网络

-

+

+

+

+图2. 按时间步展开的双向循环神经网络

+

-图4. 编码器-解码器框架

-

+

+

+图3. 编码器-解码器框架

+

-图5. 使用双向GRU的编码器

-

+

+

+图4. 使用双向GRU的编码器

+

`,表示解码开始;`$z_i$`是`$i$`时刻解码RNN的隐层状态,`$z_0$`是一个全零的向量。

2. 将`$z_{i+1}$`通过`softmax`归一化,得到目标语言序列的第`$i+1$`个单词的概率分布`$p_{i+1}$`。概率分布公式如下:

-

$$p\left ( u_{i+1}|u_{<i+1},\mathbf{x} \right )=softmax(W_sz_{i+1}+b_z)$$

-

其中`$W_sz_{i+1}+b_z$`是对每个可能的输出单词进行打分,再用softmax归一化就可以得到第`$i+1$`个词的概率`$p_{i+1}$`。

3. 根据`$p_{i+1}$`和`$u_{i+1}$`计算代价。

+

4. 重复步骤1~3,直到目标语言序列中的所有词处理完毕。

机器翻译任务的生成过程,通俗来讲就是根据预先训练的模型来翻译源语言句子。生成过程中的解码阶段和上述训练过程的有所差异,具体介绍请见[柱搜索算法](#柱搜索算法)。

@@ -101,10 +100,12 @@ $$p\left ( u_{i+1}|u_{<i+1},\mathbf{x} \right )=softmax(W_sz_{i+1}+b_z)$$

柱搜索算法使用广度优先策略建立搜索树,在树的每一层,按照启发代价(heuristic cost)(本教程中,为生成词的log概率之和)对节点进行排序,然后仅留下预先确定的个数(文献中通常称为beam width、beam size、柱宽度等)的节点。只有这些节点会在下一层继续扩展,其他节点就被剪掉了,也就是说保留了质量较高的节点,剪枝了质量较差的节点。因此,搜索所占用的空间和时间大幅减少,但缺点是无法保证一定获得最优解。

使用柱搜索算法的解码阶段,目标是最大化生成序列的概率。思路是:

-

1. 每一个时刻,根据源语言句子的编码信息`$c$`、生成的第`$i$`个目标语言序列单词`$u_i$`和`$i$`时刻RNN的隐层状态`$z_i$`,计算出下一个隐层状态`$z_{i+1}$`。

+

2. 将`$z_{i+1}$`通过`softmax`归一化,得到目标语言序列的第`$i+1$`个单词的概率分布`$p_{i+1}$`。

+

3. 根据`$p_{i+1}$`采样出单词`$u_{i+1}$`。

+

4. 重复步骤1~3,直到获得句子结束标记``或超过句子的最大生成长度为止。

注意:`$z_{i+1}$`和`$p_{i+1}$`的计算公式同[解码器](#解码器)中的一样。且由于生成时的每一步都是通过贪心法实现的,因此并不能保证得到全局最优解。

@@ -116,9 +117,13 @@ $$p\left ( u_{i+1}|u_{<i+1},\mathbf{x} \right )=softmax(W_sz_{i+1}+b_z)$$

### 数据预处理

我们的预处理流程包括两步:

+

- 将每个源语言到目标语言的平行语料库文件合并为一个文件:

+

- 合并每个`XXX.src`和`XXX.trg`文件为`XXX`。

+

- `XXX`中的第`$i$`行内容为`XXX.src`中的第`$i$`行和`XXX.trg`中的第`$i$`行连接,用'\t'分隔。

+

- 创建训练数据的“源字典”和“目标字典”。每个字典都有**DICTSIZE**个单词,包括:语料中词频最高的(DICTSIZE - 3)个单词,和3个特殊符号``(序列的开始)、``(序列的结束)和``(未登录词)。

### 示例数据

@@ -132,6 +137,7 @@ $$p\left ( u_{i+1}|u_{<i+1},\mathbf{x} \right )=softmax(W_sz_{i+1}+b_z)$$

下面我们开始根据输入数据的形式配置模型。首先引入所需的库函数以及定义全局变量。

```python

+from __future__ import print_function

import contextlib

import numpy as np

@@ -157,139 +163,152 @@ decoder_size = hidden_dim

然后如下实现编码器框架:

-```python

-def encoder(is_sparse):

-src_word_id = pd.data(

-name="src_word_id", shape=[1], dtype='int64', lod_level=1)

-src_embedding = pd.embedding(

-input=src_word_id,

-size=[dict_size, word_dim],

-dtype='float32',

-is_sparse=is_sparse,

-param_attr=fluid.ParamAttr(name='vemb'))

-

-fc1 = pd.fc(input=src_embedding, size=hidden_dim * 4, act='tanh')

-lstm_hidden0, lstm_0 = pd.dynamic_lstm(input=fc1, size=hidden_dim * 4)

-encoder_out = pd.sequence_last_step(input=lstm_hidden0)

-return encoder_out

-```

+ ```python

+ def encoder(is_sparse):

+ src_word_id = pd.data(

+ name="src_word_id", shape=[1], dtype='int64', lod_level=1)

+ src_embedding = pd.embedding(

+ input=src_word_id,

+ size=[dict_size, word_dim],

+ dtype='float32',

+ is_sparse=is_sparse,

+ param_attr=fluid.ParamAttr(name='vemb'))

+

+ fc1 = pd.fc(input=src_embedding, size=hidden_dim * 4, act='tanh')

+ lstm_hidden0, lstm_0 = pd.dynamic_lstm(input=fc1, size=hidden_dim * 4)

+ encoder_out = pd.sequence_last_step(input=lstm_hidden0)

+ return encoder_out

+ ```

再实现训练模式下的解码器:

```python

-def train_decoder(context, is_sparse):

-trg_language_word = pd.data(

-name="target_language_word", shape=[1], dtype='int64', lod_level=1)

-trg_embedding = pd.embedding(

-input=trg_language_word,

-size=[dict_size, word_dim],

-dtype='float32',

-is_sparse=is_sparse,

-param_attr=fluid.ParamAttr(name='vemb'))

-

-rnn = pd.DynamicRNN()

-with rnn.block():

-current_word = rnn.step_input(trg_embedding)

-pre_state = rnn.memory(init=context)

-current_state = pd.fc(input=[current_word, pre_state],

-size=decoder_size,

-act='tanh')

-

-current_score = pd.fc(input=current_state,

-size=target_dict_dim,

-act='softmax')

-rnn.update_memory(pre_state, current_state)

-rnn.output(current_score)

-

-return rnn()

+ def train_decoder(context, is_sparse):

+ trg_language_word = pd.data(

+ name="target_language_word", shape=[1], dtype='int64', lod_level=1)

+ trg_embedding = pd.embedding(

+ input=trg_language_word,

+ size=[dict_size, word_dim],

+ dtype='float32',

+ is_sparse=is_sparse,

+ param_attr=fluid.ParamAttr(name='vemb'))

+

+ rnn = pd.DynamicRNN()

+ with rnn.block():

+ current_word = rnn.step_input(trg_embedding)

+ pre_state = rnn.memory(init=context)

+ current_state = pd.fc(input=[current_word, pre_state],

+ size=decoder_size,

+ act='tanh')

+

+ current_score = pd.fc(input=current_state,

+ size=target_dict_dim,

+ act='softmax')

+ rnn.update_memory(pre_state, current_state)

+ rnn.output(current_score)

+

+ return rnn()

```

实现推测模式下的解码器:

```python

def decode(context, is_sparse):

-init_state = context

-array_len = pd.fill_constant(shape=[1], dtype='int64', value=max_length)

-counter = pd.zeros(shape=[1], dtype='int64', force_cpu=True)

-

-# fill the first element with init_state

-state_array = pd.create_array('float32')

-pd.array_write(init_state, array=state_array, i=counter)

-

-# ids, scores as memory

-ids_array = pd.create_array('int64')

-scores_array = pd.create_array('float32')

-

-init_ids = pd.data(name="init_ids", shape=[1], dtype="int64", lod_level=2)

-init_scores = pd.data(

-name="init_scores", shape=[1], dtype="float32", lod_level=2)

-

-pd.array_write(init_ids, array=ids_array, i=counter)

-pd.array_write(init_scores, array=scores_array, i=counter)

-

-cond = pd.less_than(x=counter, y=array_len)

-

-while_op = pd.While(cond=cond)

-with while_op.block():

-pre_ids = pd.array_read(array=ids_array, i=counter)

-pre_state = pd.array_read(array=state_array, i=counter)

-pre_score = pd.array_read(array=scores_array, i=counter)

-

-# expand the lod of pre_state to be the same with pre_score

-pre_state_expanded = pd.sequence_expand(pre_state, pre_score)

-

-pre_ids_emb = pd.embedding(

-input=pre_ids,

-size=[dict_size, word_dim],

-dtype='float32',

-is_sparse=is_sparse)

-

-# use rnn unit to update rnn

-current_state = pd.fc(input=[pre_state_expanded, pre_ids_emb],

-size=decoder_size,

-act='tanh')

-current_state_with_lod = pd.lod_reset(x=current_state, y=pre_score)

-# use score to do beam search

-current_score = pd.fc(input=current_state_with_lod,

-size=target_dict_dim,

-act='softmax')

-topk_scores, topk_indices = pd.topk(current_score, k=topk_size)

-selected_ids, selected_scores = pd.beam_search(

-pre_ids, topk_indices, topk_scores, beam_size, end_id=10, level=0)

-

-pd.increment(x=counter, value=1, in_place=True)

-

-# update the memories

-pd.array_write(current_state, array=state_array, i=counter)

-pd.array_write(selected_ids, array=ids_array, i=counter)

-pd.array_write(selected_scores, array=scores_array, i=counter)

-

-pd.less_than(x=counter, y=array_len, cond=cond)

-

-translation_ids, translation_scores = pd.beam_search_decode(

-ids=ids_array, scores=scores_array)

-

-return translation_ids, translation_scores

+ init_state = context

+ array_len = pd.fill_constant(shape=[1], dtype='int64', value=max_length)

+ counter = pd.zeros(shape=[1], dtype='int64', force_cpu=True)

+

+ # fill the first element with init_state

+ state_array = pd.create_array('float32')

+ pd.array_write(init_state, array=state_array, i=counter)

+

+ # ids, scores as memory

+ ids_array = pd.create_array('int64')

+ scores_array = pd.create_array('float32')

+

+ init_ids = pd.data(name="init_ids", shape=[1], dtype="int64", lod_level=2)

+ init_scores = pd.data(

+ name="init_scores", shape=[1], dtype="float32", lod_level=2)

+

+ pd.array_write(init_ids, array=ids_array, i=counter)

+ pd.array_write(init_scores, array=scores_array, i=counter)

+

+ cond = pd.less_than(x=counter, y=array_len)

+

+ while_op = pd.While(cond=cond)

+ with while_op.block():

+ pre_ids = pd.array_read(array=ids_array, i=counter)

+ pre_state = pd.array_read(array=state_array, i=counter)

+ pre_score = pd.array_read(array=scores_array, i=counter)

+

+ # expand the lod of pre_state to be the same with pre_score

+ pre_state_expanded = pd.sequence_expand(pre_state, pre_score)

+

+ pre_ids_emb = pd.embedding(

+ input=pre_ids,

+ size=[dict_size, word_dim],

+ dtype='float32',

+ is_sparse=is_sparse)

+

+ # use rnn unit to update rnn

+ current_state = pd.fc(input=[pre_state_expanded, pre_ids_emb],

+ size=decoder_size,

+ act='tanh')

+ current_state_with_lod = pd.lod_reset(x=current_state, y=pre_score)

+ # use score to do beam search

+ current_score = pd.fc(input=current_state_with_lod,

+ size=target_dict_dim,

+ act='softmax')

+ topk_scores, topk_indices = pd.topk(current_score, k=beam_size)

+ # calculate accumulated scores after topk to reduce computation cost

+ accu_scores = pd.elementwise_add(

+ x=pd.log(topk_scores), y=pd.reshape(pre_score, shape=[-1]), axis=0)

+ selected_ids, selected_scores = pd.beam_search(

+ pre_ids,

+ pre_score,

+ topk_indices,

+ accu_scores,

+ beam_size,

+ end_id=10,

+ level=0)

+

+ pd.increment(x=counter, value=1, in_place=True)

+

+ # update the memories

+ pd.array_write(current_state, array=state_array, i=counter)

+ pd.array_write(selected_ids, array=ids_array, i=counter)

+ pd.array_write(selected_scores, array=scores_array, i=counter)

+

+ # update the break condition: up to the max length or all candidates of

+ # source sentences have ended.

+ length_cond = pd.less_than(x=counter, y=array_len)

+ finish_cond = pd.logical_not(pd.is_empty(x=selected_ids))

+ pd.logical_and(x=length_cond, y=finish_cond, out=cond)

+

+ translation_ids, translation_scores = pd.beam_search_decode(

+ ids=ids_array, scores=scores_array, beam_size=beam_size, end_id=10)

+

+ return translation_ids, translation_scores

```

进而,我们定义一个`train_program`来使用`inference_program`计算出的结果,在标记数据的帮助下来计算误差。我们还定义了一个`optimizer_func`来定义优化器。

```python

def train_program(is_sparse):

-context = encoder(is_sparse)

-rnn_out = train_decoder(context, is_sparse)

-label = pd.data(

-name="target_language_next_word", shape=[1], dtype='int64', lod_level=1)

-cost = pd.cross_entropy(input=rnn_out, label=label)

-avg_cost = pd.mean(cost)

-return avg_cost

+ context = encoder(is_sparse)

+ rnn_out = train_decoder(context, is_sparse)

+ label = pd.data(

+ name="target_language_next_word", shape=[1], dtype='int64', lod_level=1)

+ cost = pd.cross_entropy(input=rnn_out, label=label)

+ avg_cost = pd.mean(cost)

+ return avg_cost

def optimizer_func():

-return fluid.optimizer.Adagrad(

-learning_rate=1e-4,

-regularization=fluid.regularizer.L2DecayRegularizer(

-regularization_coeff=0.1))

+ return fluid.optimizer.Adagrad(

+ learning_rate=1e-4,

+ regularization=fluid.regularizer.L2DecayRegularizer(

+ regularization_coeff=0.1))

```

## 训练模型

@@ -307,9 +326,9 @@ place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

```python

train_reader = paddle.batch(

-paddle.reader.shuffle(

-paddle.dataset.wmt14.train(dict_size), buf_size=1000),

-batch_size=batch_size)

+ paddle.reader.shuffle(

+ paddle.dataset.wmt14.train(dict_size), buf_size=1000),

+ batch_size=batch_size)

```

### 构造训练器(trainer)

@@ -318,9 +337,9 @@ batch_size=batch_size)

```python

is_sparse = False

trainer = fluid.Trainer(

-train_func=partial(train_program, is_sparse),

-place=place,

-optimizer_func=optimizer_func)

+ train_func=partial(train_program, is_sparse),

+ place=place,

+ optimizer_func=optimizer_func)

```

### 提供数据

@@ -329,8 +348,8 @@ optimizer_func=optimizer_func)

```python

feed_order = [

-'src_word_id', 'target_language_word', 'target_language_next_word'

-]

+ 'src_word_id', 'target_language_word', 'target_language_next_word'

+ ]

```

### 事件处理器

@@ -338,12 +357,12 @@ feed_order = [

```python

def event_handler(event):

-if isinstance(event, fluid.EndStepEvent):

-if event.step % 10 == 0:

-print('pass_id=' + str(event.epoch) + ' batch=' + str(event.step))

+ if isinstance(event, fluid.EndStepEvent):

+ if event.step % 10 == 0:

+ print('pass_id=' + str(event.epoch) + ' batch=' + str(event.step))

-if event.step == 20:

-trainer.stop()

+ if event.step == 20:

+ trainer.stop()

```

### 开始训练

@@ -353,10 +372,10 @@ trainer.stop()

EPOCH_NUM = 1

trainer.train(

-reader=train_reader,

-num_epochs=EPOCH_NUM,

-event_handler=event_handler,

-feed_order=feed_order)

+ reader=train_reader,

+ num_epochs=EPOCH_NUM,

+ event_handler=event_handler,

+ feed_order=feed_order)

```

## 应用模型

@@ -377,7 +396,7 @@ translation_ids, translation_scores = decode(context, is_sparse)

```python

init_ids_data = np.array([1 for _ in range(batch_size)], dtype='int64')

init_scores_data = np.array(

-[1. for _ in range(batch_size)], dtype='float32')

+ [1. for _ in range(batch_size)], dtype='float32')

init_ids_data = init_ids_data.reshape((batch_size, 1))

init_scores_data = init_scores_data.reshape((batch_size, 1))

init_lod = [1] * batch_size

@@ -387,14 +406,14 @@ init_ids = fluid.create_lod_tensor(init_ids_data, init_lod, place)

init_scores = fluid.create_lod_tensor(init_scores_data, init_lod, place)

test_data = paddle.batch(

-paddle.reader.shuffle(

-paddle.dataset.wmt14.test(dict_size), buf_size=1000),

-batch_size=batch_size)

+ paddle.reader.shuffle(

+ paddle.dataset.wmt14.test(dict_size), buf_size=1000),

+ batch_size=batch_size)

feed_order = ['src_word_id']

feed_list = [

-framework.default_main_program().global_block().var(var_name)

-for var_name in feed_order

+ framework.default_main_program().global_block().var(var_name)

+ for var_name in feed_order

]

feeder = fluid.DataFeeder(feed_list, place)

@@ -409,27 +428,30 @@ exe = Executor(place)

exe.run(framework.default_startup_program())

for data in test_data():

-feed_data = map(lambda x: [x[0]], data)

-feed_dict = feeder.feed(feed_data)

-feed_dict['init_ids'] = init_ids

-feed_dict['init_scores'] = init_scores

-

-results = exe.run(

-framework.default_main_program(),

-feed=feed_dict,

-fetch_list=[translation_ids, translation_scores],

-return_numpy=False)

-

-result_ids = np.array(results[0])

-result_scores = np.array(results[1])

-

-print("Original sentence:")

-print(" ".join([src_dict[w] for w in feed_data[0][0]]))

-print("Translated sentence:")

-print(" ".join([trg_dict[w] for w in result_ids]))

-print("Corresponding score: ", result_scores)

-

-break

+ feed_data = map(lambda x: [x[0]], data)

+ feed_dict = feeder.feed(feed_data)

+ feed_dict['init_ids'] = init_ids

+ feed_dict['init_scores'] = init_scores

+

+ results = exe.run(

+ framework.default_main_program(),

+ feed=feed_dict,

+ fetch_list=[translation_ids, translation_scores],

+ return_numpy=False)

+

+ result_ids = np.array(results[0])

+ result_scores = np.array(results[1])

+

+ print("Original sentence:")

+ print(" ".join([src_dict[w] for w in feed_data[0][0][1:-1]]))

+ print("Translated score and sentence:")

+ for i in xrange(beam_size):

+ start_pos = result_ids_lod[1][i] + 1

+ end_pos = result_ids_lod[1][i+1]

+ print("%d\t%.4f\t%s\n" % (i+1, result_scores[end_pos-1],

+ " ".join([trg_dict[w] for w in result_ids[start_pos:end_pos]])))

+

+ break

```

## 总结

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/bi_rnn.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/bi_rnn.png

deleted file mode 100644

index 9d8efd50a49d0305586f550344472ab94c93bed3..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/bi_rnn.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/bi_rnn_en.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/bi_rnn_en.png

deleted file mode 100755

index 4b35c88fc8ea2c503473c0c15711744e784d6af6..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/bi_rnn_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/decoder_attention.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/decoder_attention.png

deleted file mode 100644

index 1b355e7786d25487a3f564af758c2c52c43b4690..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/decoder_attention.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/decoder_attention_en.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/decoder_attention_en.png

deleted file mode 100755

index 3728f782ee09d9308d02b42305027b2735467ead..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/decoder_attention_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_attention.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_attention.png

deleted file mode 100644

index 28d7a15a3bd65262bde22a3f41b5aa78b46b368a..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_attention.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_attention_en.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_attention_en.png

deleted file mode 100755

index ea8585565da1ecaf241654c278c6f9b15e283286..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_attention_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_decoder.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_decoder.png

deleted file mode 100755

index 60aee0017de73f462e35708b1055aff8992c03e1..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_decoder.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_decoder_en.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_decoder_en.png

deleted file mode 100755

index 6b73798fe632e0873b35c117b86f347c8cf3116a..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/encoder_decoder_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/gru.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/gru.png

deleted file mode 100644

index 0cde685b84106650a4df18ce335a23e6338d3d11..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/gru.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/gru_en.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/gru_en.png

deleted file mode 100755

index a6af429f23f0f7e82650139bbd8dcbef27a34abe..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/gru_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/nmt.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/nmt.png

deleted file mode 100644

index bf56d73ebf297fadf522389c7b6836dd379aa097..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/nmt.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/nmt_en.png b/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/nmt_en.png

deleted file mode 100755

index 557310e044b2b6687e5ea6895417ed946ac7bc11..0000000000000000000000000000000000000000

Binary files a/doc/fluid/new_docs/beginners_guide/basics/machine_translation/image/nmt_en.png and /dev/null differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/recommender_system/index.md b/doc/fluid/new_docs/beginners_guide/basics/recommender_system/README.cn.md

similarity index 67%

rename from doc/fluid/new_docs/beginners_guide/basics/recommender_system/index.md

rename to doc/fluid/new_docs/beginners_guide/basics/recommender_system/README.cn.md

index 09a07f3dc30abc57ab3731af054dd83491acc9a6..3174a8c6d70166619306c784db9126f20a85f4c8 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/recommender_system/index.md

+++ b/doc/fluid/new_docs/beginners_guide/basics/recommender_system/README.cn.md

@@ -1,6 +1,6 @@

# 个性化推荐

-本教程源代码目录在[book/recommender_system](https://github.com/PaddlePaddle/book/tree/develop/05.recommender_system), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书)。

+本教程源代码目录在[book/recommender_system](https://github.com/PaddlePaddle/book/tree/develop/05.recommender_system), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书),更多内容请参考本教程的[视频课堂](http://bit.baidu.com/course/detail/id/176.html)。

## 背景介绍

@@ -36,8 +36,8 @@ Prediction Score is 4.25

YouTube是世界上最大的视频上传、分享和发现网站,YouTube推荐系统为超过10亿用户从不断增长的视频库中推荐个性化的内容。整个系统由两个神经网络组成:候选生成网络和排序网络。候选生成网络从百万量级的视频库中生成上百个候选,排序网络对候选进行打分排序,输出排名最高的数十个结果。系统结构如图1所示:

-

+

图1. YouTube 推荐系统结构

@@ -45,20 +45,20 @@ YouTube是世界上最大的视频上传、分享和发现网站,YouTube推荐

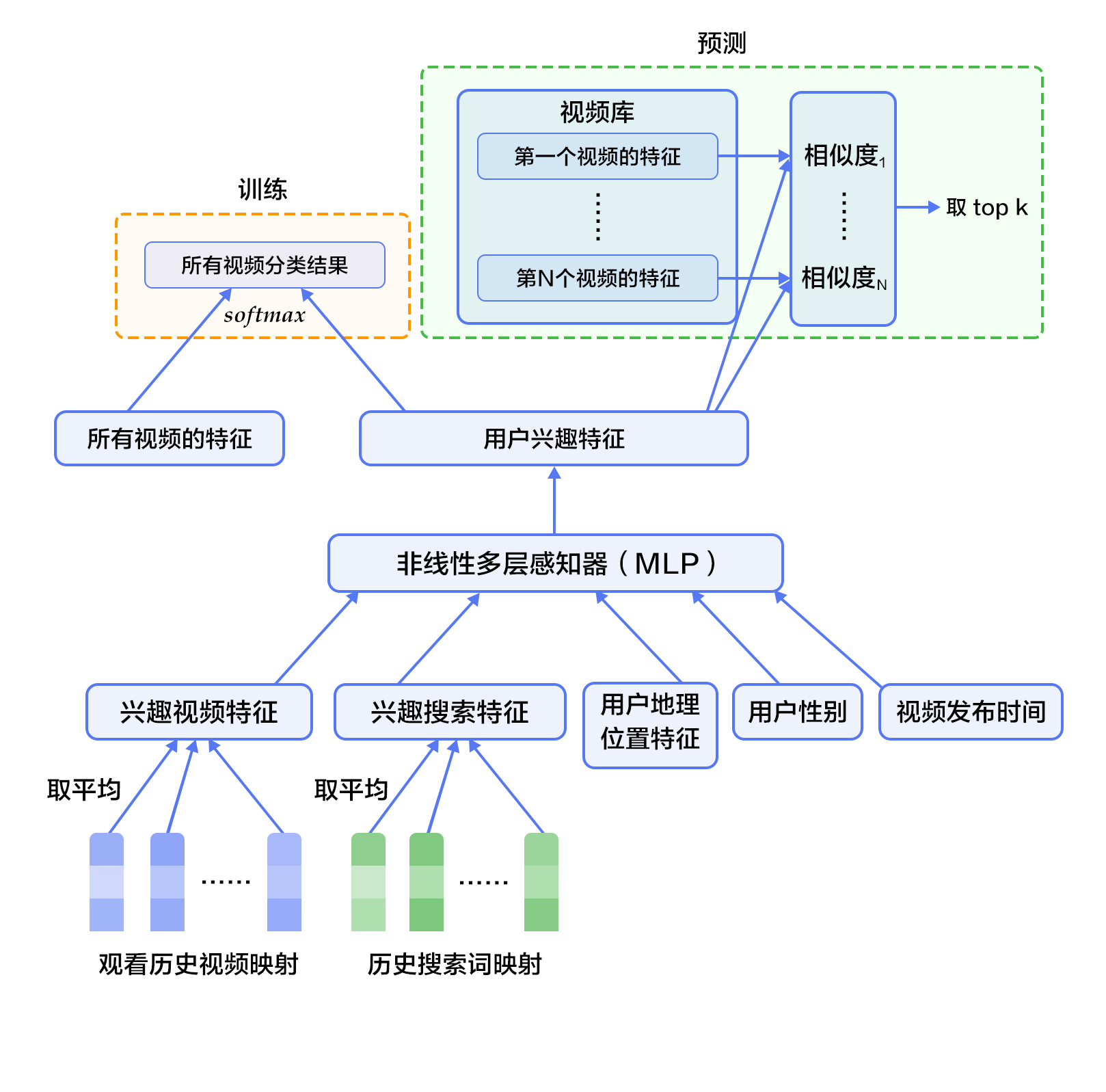

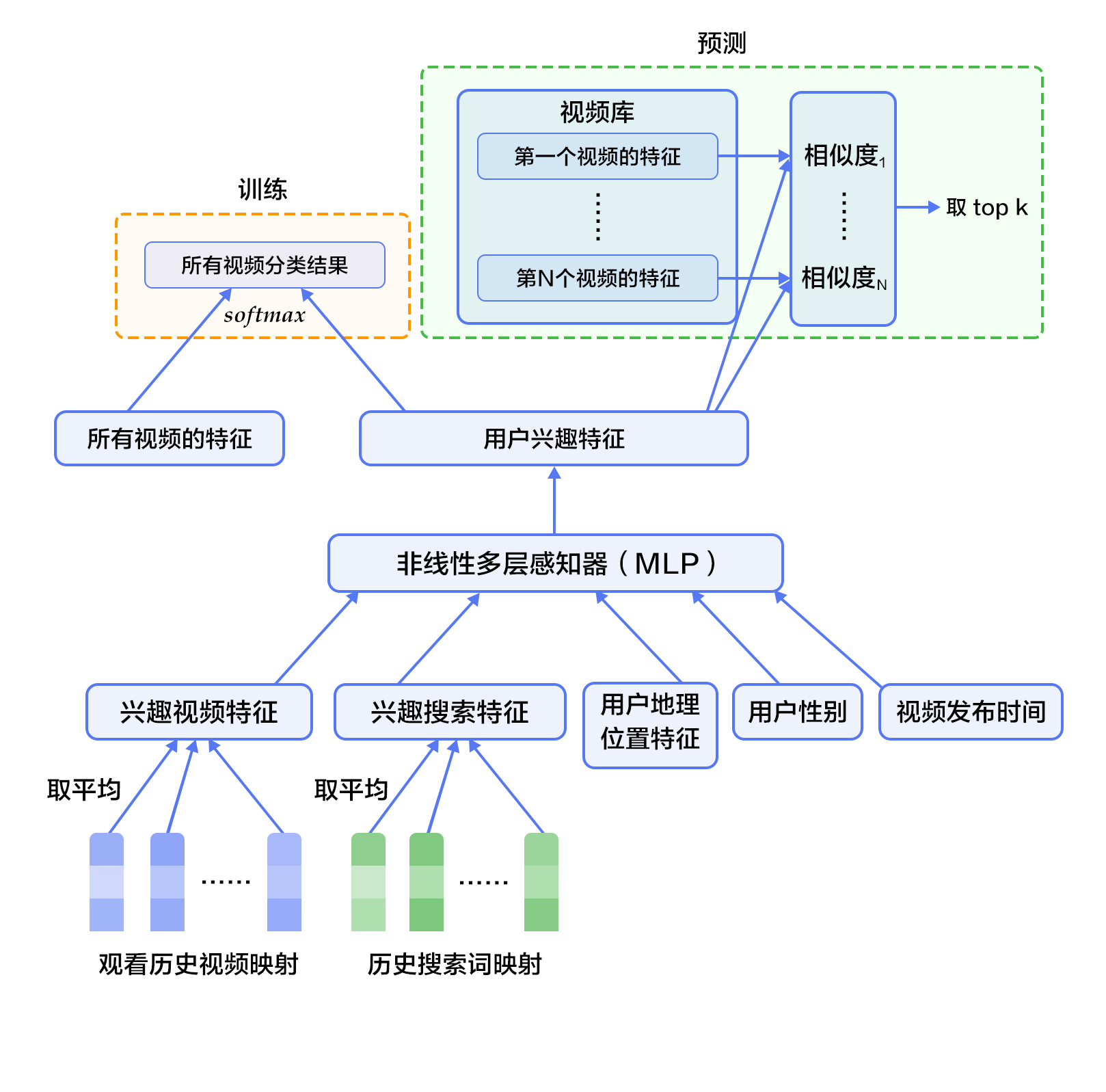

候选生成网络将推荐问题建模为一个类别数极大的多类分类问题:对于一个Youtube用户,使用其观看历史(视频ID)、搜索词记录(search tokens)、人口学信息(如地理位置、用户登录设备)、二值特征(如性别,是否登录)和连续特征(如用户年龄)等,对视频库中所有视频进行多分类,得到每一类别的分类结果(即每一个视频的推荐概率),最终输出概率较高的几百个视频。

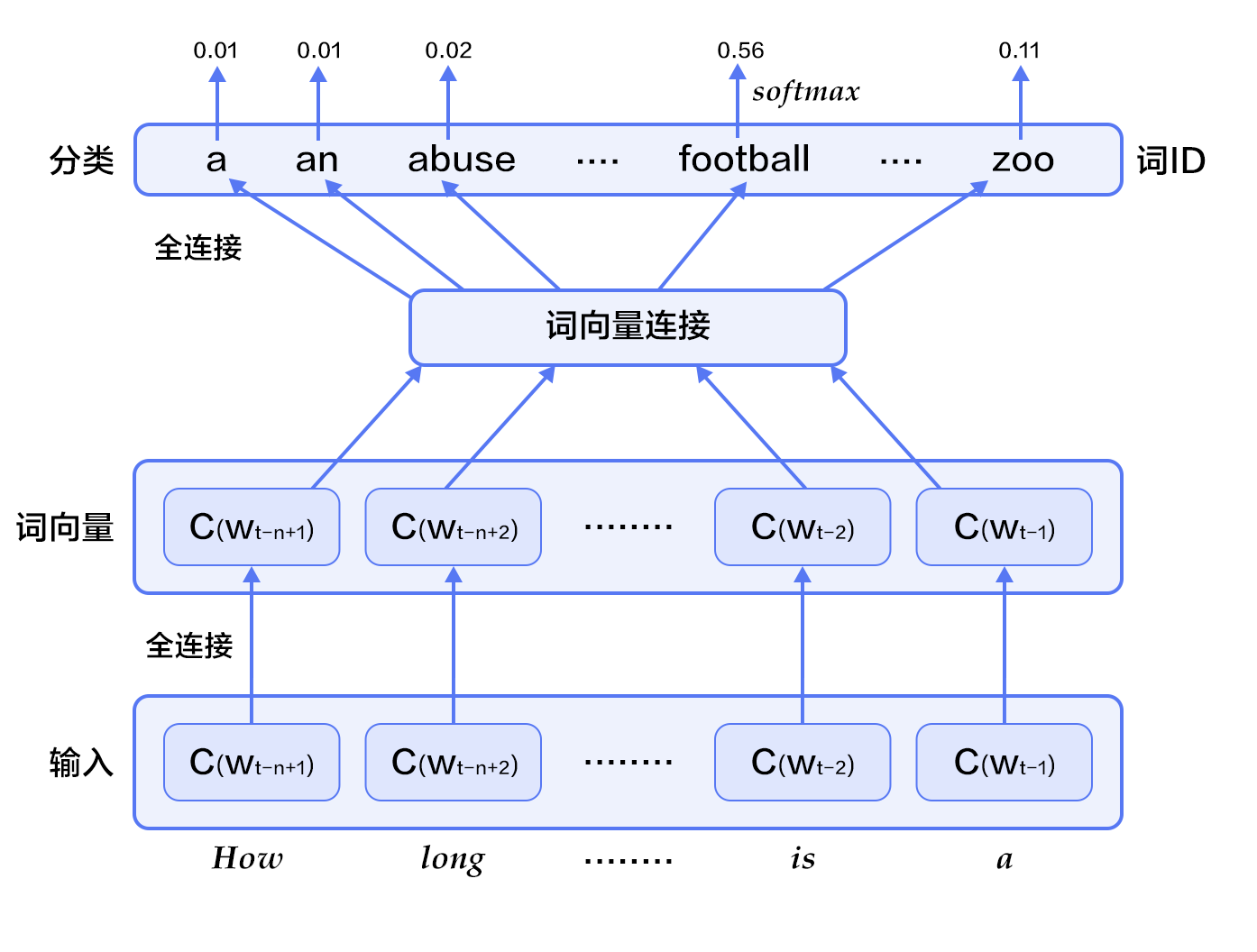

-首先,将观看历史及搜索词记录这类历史信息,映射为向量后取平均值得到定长表示;同时,输入人口学特征以优化新用户的推荐效果,并将二值特征和连续特征归一化处理到[0, 1]范围。接下来,将所有特征表示拼接为一个向量,并输入给非线形多层感知器(MLP,详见[识别数字](https://github.com/PaddlePaddle/book/blob/develop/02.recognize_digits/README.cn.md)教程)处理。最后,训练时将MLP的输出给softmax做分类,预测时计算用户的综合特征(MLP的输出)与所有视频的相似度,取得分最高的`$k$`个作为候选生成网络的筛选结果。图2显示了候选生成网络结构。

+首先,将观看历史及搜索词记录这类历史信息,映射为向量后取平均值得到定长表示;同时,输入人口学特征以优化新用户的推荐效果,并将二值特征和连续特征归一化处理到[0, 1]范围。接下来,将所有特征表示拼接为一个向量,并输入给非线形多层感知器(MLP,详见[识别数字](https://github.com/PaddlePaddle/book/blob/develop/02.recognize_digits/README.cn.md)教程)处理。最后,训练时将MLP的输出给softmax做分类,预测时计算用户的综合特征(MLP的输出)与所有视频的相似度,取得分最高的$k$个作为候选生成网络的筛选结果。图2显示了候选生成网络结构。

-

+

图2. 候选生成网络结构

-对于一个用户`$U$`,预测此刻用户要观看的视频`$\omega$`为视频`$i$`的概率公式为:

+对于一个用户$U$,预测此刻用户要观看的视频$\omega$为视频$i$的概率公式为:

$$P(\omega=i|u)=\frac{e^{v_{i}u}}{\sum_{j \in V}e^{v_{j}u}}$$

-其中`$u$`为用户`$U$`的特征表示,`$V$`为视频库集合,`$v_i$`为视频库中第`$i$`个视频的特征表示。`$u$`和`$v_i$`为长度相等的向量,两者点积可以通过全连接层实现。

+其中$u$为用户$U$的特征表示,$V$为视频库集合,$v_i$为视频库中第$i$个视频的特征表示。$u$和$v_i$为长度相等的向量,两者点积可以通过全连接层实现。

-考虑到softmax分类的类别数非常多,为了保证一定的计算效率:1)训练阶段,使用负样本类别采样将实际计算的类别数缩小至数千;2)推荐(预测)阶段,忽略softmax的归一化计算(不影响结果),将类别打分问题简化为点积(dot product)空间中的最近邻(nearest neighbor)搜索问题,取与`$u$`最近的`$k$`个视频作为生成的候选。

+考虑到softmax分类的类别数非常多,为了保证一定的计算效率:1)训练阶段,使用负样本类别采样将实际计算的类别数缩小至数千;2)推荐(预测)阶段,忽略softmax的归一化计算(不影响结果),将类别打分问题简化为点积(dot product)空间中的最近邻(nearest neighbor)搜索问题,取与$u$最近的$k$个视频作为生成的候选。

#### 排序网络(Ranking Network)

排序网络的结构类似于候选生成网络,但是它的目标是对候选进行更细致的打分排序。和传统广告排序中的特征抽取方法类似,这里也构造了大量的用于视频排序的相关特征(如视频 ID、上次观看时间等)。这些特征的处理方式和候选生成网络类似,不同之处是排序网络的顶部是一个加权逻辑回归(weighted logistic regression),它对所有候选视频进行打分,从高到底排序后将分数较高的一些视频返回给用户。

@@ -72,20 +72,20 @@ $$P(\omega=i|u)=\frac{e^{v_{i}u}}{\sum_{j \in V}e^{v_{j}u}}$$

卷积神经网络主要由卷积(convolution)和池化(pooling)操作构成,其应用及组合方式灵活多变,种类繁多。本小结我们以如图3所示的网络进行讲解:

-

+

图3. 卷积神经网络文本分类模型

-假设待处理句子的长度为`$n$`,其中第`$i$`个词的词向量(word embedding)为`$x_i\in\mathbb{R}^k$`,`$k$`为维度大小。

+假设待处理句子的长度为$n$,其中第$i$个词的词向量(word embedding)为$x_i\in\mathbb{R}^k$,$k$为维度大小。

-首先,进行词向量的拼接操作:将每`$h$`个词拼接起来形成一个大小为`$h$`的词窗口,记为`$x_{i:i+h-1}$`,它表示词序列`$x_{i},x_{i+1},\ldots,x_{i+h-1}$`的拼接,其中,`$i$`表示词窗口中第一个词在整个句子中的位置,取值范围从`$1$`到`$n-h+1$`,`$x_{i:i+h-1}\in\mathbb{R}^{hk}$`。

+首先,进行词向量的拼接操作:将每$h$个词拼接起来形成一个大小为$h$的词窗口,记为$x_{i:i+h-1}$,它表示词序列$x_{i},x_{i+1},\ldots,x_{i+h-1}$的拼接,其中,$i$表示词窗口中第一个词在整个句子中的位置,取值范围从$1$到$n-h+1$,$x_{i:i+h-1}\in\mathbb{R}^{hk}$。

-其次,进行卷积操作:把卷积核(kernel)`$w\in\mathbb{R}^{hk}$`应用于包含`$h$`个词的窗口`$x_{i:i+h-1}$`,得到特征`$c_i=f(w\cdot x_{i:i+h-1}+b)$`,其中`$b\in\mathbb{R}$`为偏置项(bias),`$f$`为非线性激活函数,如`$sigmoid$`。将卷积核应用于句子中所有的词窗口`${x_{1:h},x_{2:h+1},\ldots,x_{n-h+1:n}}$`,产生一个特征图(feature map):

+其次,进行卷积操作:把卷积核(kernel)$w\in\mathbb{R}^{hk}$应用于包含$h$个词的窗口$x_{i:i+h-1}$,得到特征$c_i=f(w\cdot x_{i:i+h-1}+b)$,其中$b\in\mathbb{R}$为偏置项(bias),$f$为非线性激活函数,如$sigmoid$。将卷积核应用于句子中所有的词窗口${x_{1:h},x_{2:h+1},\ldots,x_{n-h+1:n}}$,产生一个特征图(feature map):

$$c=[c_1,c_2,\ldots,c_{n-h+1}], c \in \mathbb{R}^{n-h+1}$$

-接下来,对特征图采用时间维度上的最大池化(max pooling over time)操作得到此卷积核对应的整句话的特征`$\hat c$`,它是特征图中所有元素的最大值:

+接下来,对特征图采用时间维度上的最大池化(max pooling over time)操作得到此卷积核对应的整句话的特征$\hat c$,它是特征图中所有元素的最大值:

$$\hat c=max(c)$$

@@ -95,9 +95,9 @@ $$\hat c=max(c)$$

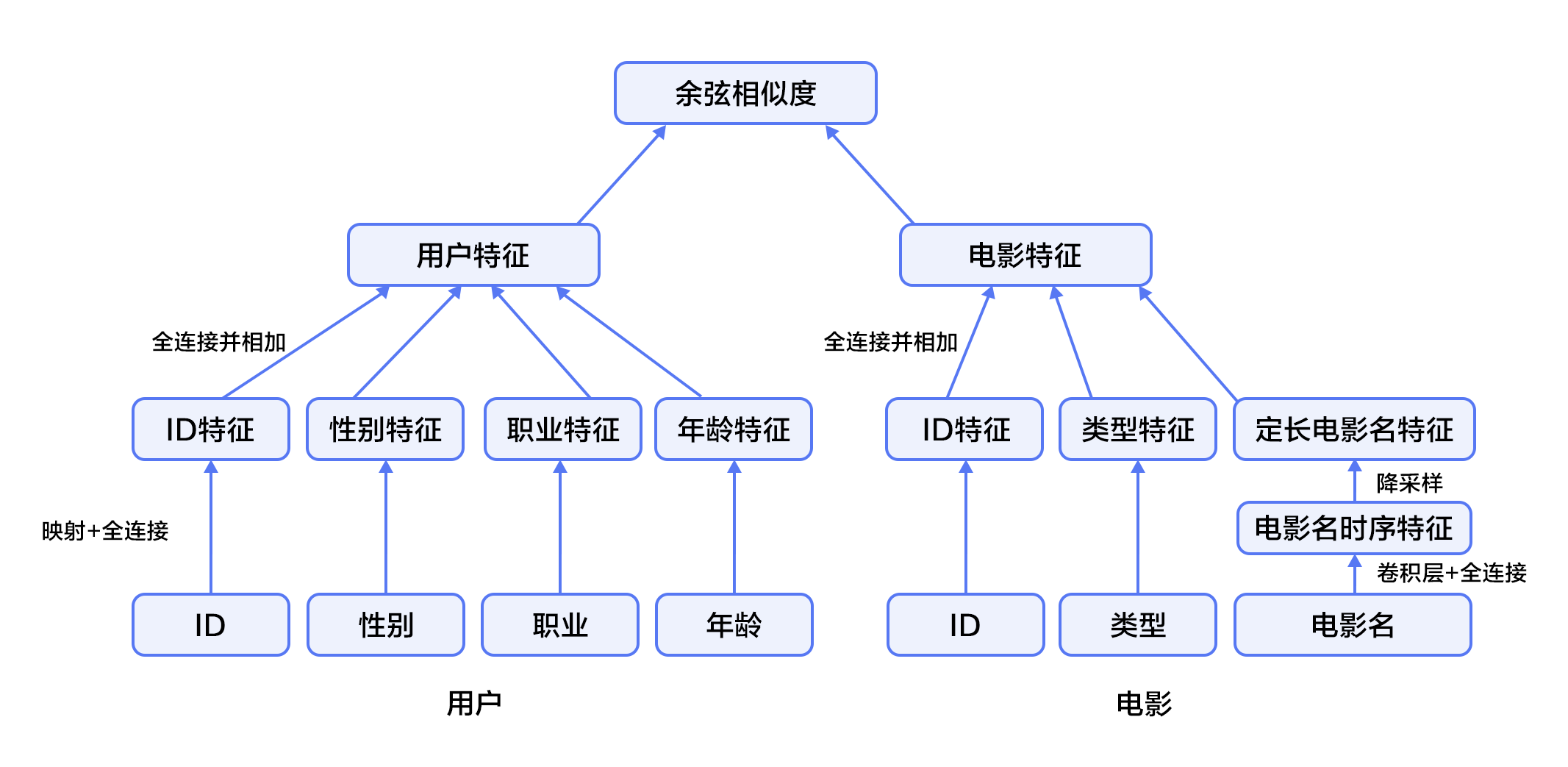

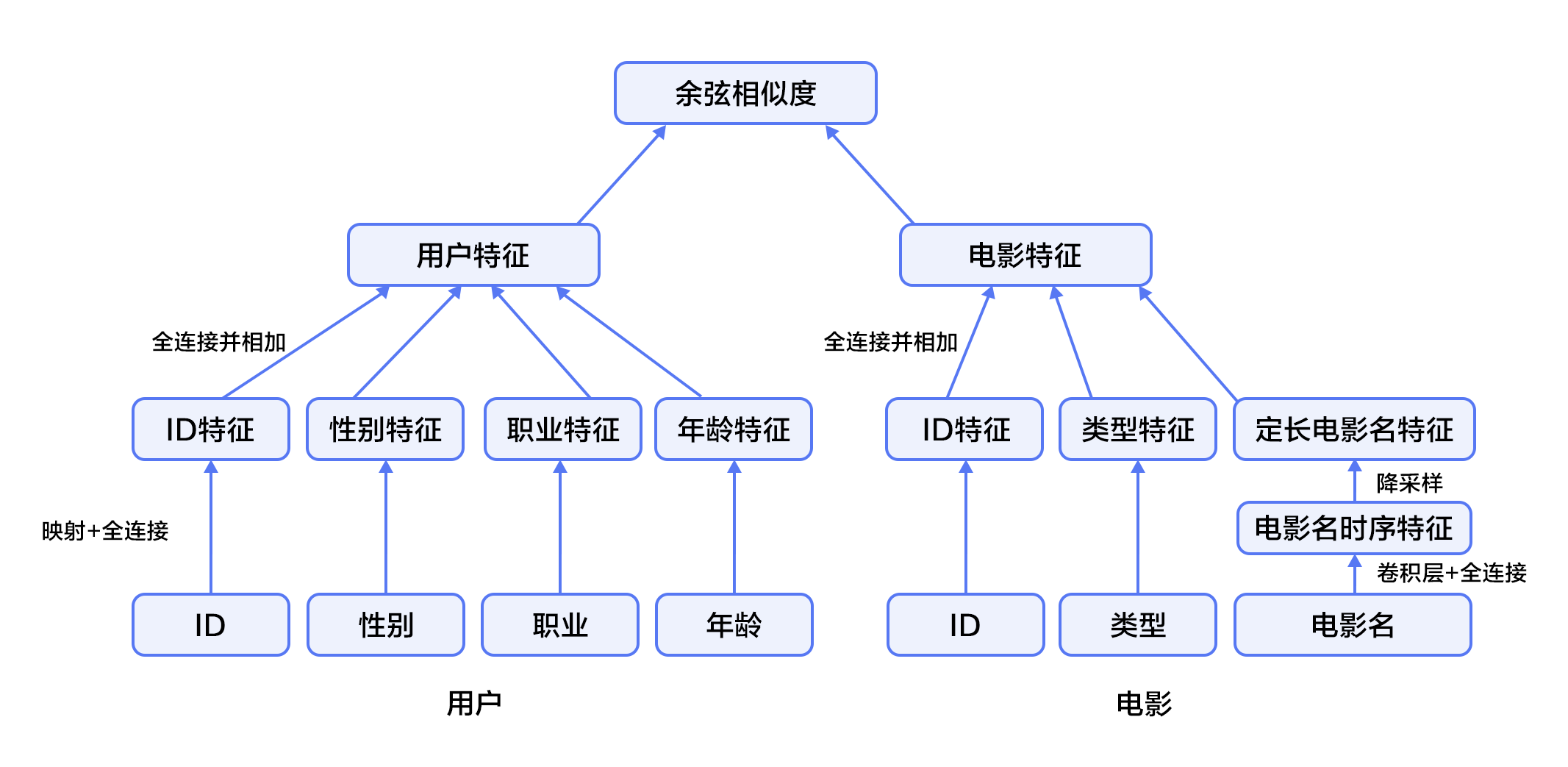

1. 首先,使用用户特征和电影特征作为神经网络的输入,其中:

-- 用户特征融合了四个属性信息,分别是用户ID、性别、职业和年龄。

+ - 用户特征融合了四个属性信息,分别是用户ID、性别、职业和年龄。

-- 电影特征融合了三个属性信息,分别是电影ID、电影类型ID和电影名称。

+ - 电影特征融合了三个属性信息,分别是电影ID、电影类型ID和电影名称。

2. 对用户特征,将用户ID映射为维度大小为256的向量表示,输入全连接层,并对其他三个属性也做类似的处理。然后将四个属性的特征表示分别全连接并相加。

@@ -105,8 +105,9 @@ $$\hat c=max(c)$$

4. 得到用户和电影的向量表示后,计算二者的余弦相似度作为推荐系统的打分。最后,用该相似度打分和用户真实打分的差异的平方作为该回归模型的损失函数。

-

+

+

图4. 融合推荐模型

@@ -141,7 +142,7 @@ movie_info = paddle.dataset.movielens.movie_info()

print movie_info.values()[0]

```

-

+

这表示,电影的id是1,标题是《Toy Story》,该电影被分为到三个类别中。这三个类别是动画,儿童,喜剧。

@@ -152,13 +153,14 @@ user_info = paddle.dataset.movielens.user_info()

print user_info.values()[0]

```

-

+

这表示,该用户ID是1,女性,年龄比18岁还年轻。职业ID是10。

其中,年龄使用下列分布

+

* 1: "Under 18"

* 18: "18-24"

* 25: "25-34"

@@ -168,6 +170,7 @@ print user_info.values()[0]

* 56: "56+"

职业是从下面几种选项里面选则得出:

+

* 0: "other" or not specified

* 1: "academic/educator"

* 2: "artist"

@@ -203,7 +206,7 @@ mov_id = train_sample[len(user_info[uid].value())]

print "User %s rates Movie %s with Score %s"%(user_info[uid], movie_info[mov_id], train_sample[-1])

```

-User rates Movie with Score [5.0]

+ User rates Movie with Score [5.0]

即用户1对电影1193的评价为5分。

@@ -214,6 +217,7 @@ User rates Movie 表格 1 电影评论情感分析

-在自然语言处理中,情感分析属于典型的**文本分类**问题,即把需要进行情感分析的文本划分为其所属类别。文本分类涉及文本表示和分类方法两个问题。在深度学习的方法出现之前,主流的文本表示方法为词袋模型BOW(bag of words),话题模型等等;分类方法有SVM(support vector machine), LR(logistic regression)等等。

+在自然语言处理中,情感分析属于典型的**文本分类**问题,即把需要进行情感分析的文本划分为其所属类别。文本分类涉及文本表示和分类方法两个问题。在深度学习的方法出现之前,主流的文本表示方法为词袋模型BOW(bag of words),话题模型等等;分类方法有SVM(support vector machine), LR(logistic regression)等等。

-对于一段文本,BOW表示会忽略其词顺序、语法和句法,将这段文本仅仅看做是一个词集合,因此BOW方法并不能充分表示文本的语义信息。例如,句子“这部电影糟糕透了”和“一个乏味,空洞,没有内涵的作品”在情感分析中具有很高的语义相似度,但是它们的BOW表示的相似度为0。又如,句子“一个空洞,没有内涵的作品”和“一个不空洞而且有内涵的作品”的BOW相似度很高,但实际上它们的意思很不一样。

+对于一段文本,BOW表示会忽略其词顺序、语法和句法,将这段文本仅仅看做是一个词集合,因此BOW方法并不能充分表示文本的语义信息。例如,句子“这部电影糟糕透了”和“一个乏味,空洞,没有内涵的作品”在情感分析中具有很高的语义相似度,但是它们的BOW表示的相似度为0。又如,句子“一个空洞,没有内涵的作品”和“一个不空洞而且有内涵的作品”的BOW相似度很高,但实际上它们的意思很不一样。

本章我们所要介绍的深度学习模型克服了BOW表示的上述缺陷,它在考虑词顺序的基础上把文本映射到低维度的语义空间,并且以端对端(end to end)的方式进行文本表示及分类,其性能相对于传统方法有显著的提升\[[1](#参考文献)\]。

@@ -36,54 +36,54 @@

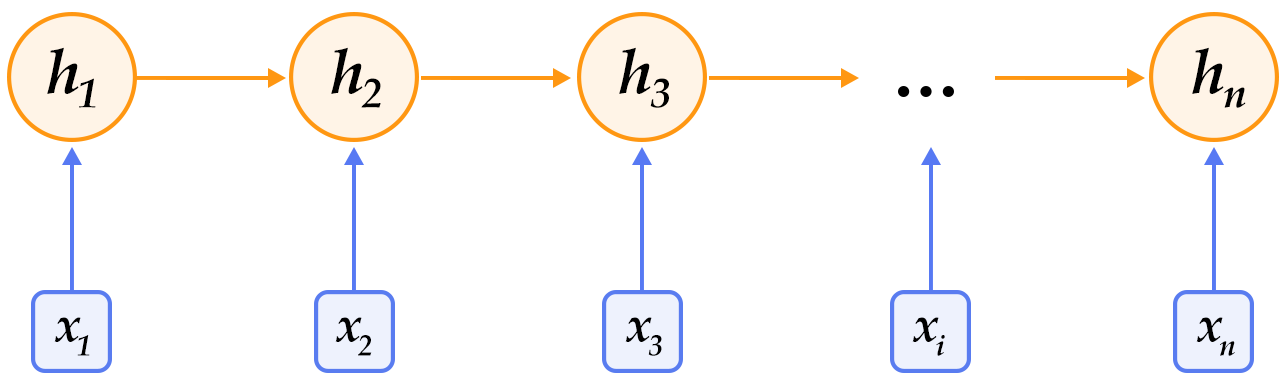

循环神经网络是一种能对序列数据进行精确建模的有力工具。实际上,循环神经网络的理论计算能力是图灵完备的\[[4](#参考文献)\]。自然语言是一种典型的序列数据(词序列),近年来,循环神经网络及其变体(如long short term memory\[[5](#参考文献)\]等)在自然语言处理的多个领域,如语言模型、句法解析、语义角色标注(或一般的序列标注)、语义表示、图文生成、对话、机器翻译等任务上均表现优异甚至成为目前效果最好的方法。

-

+

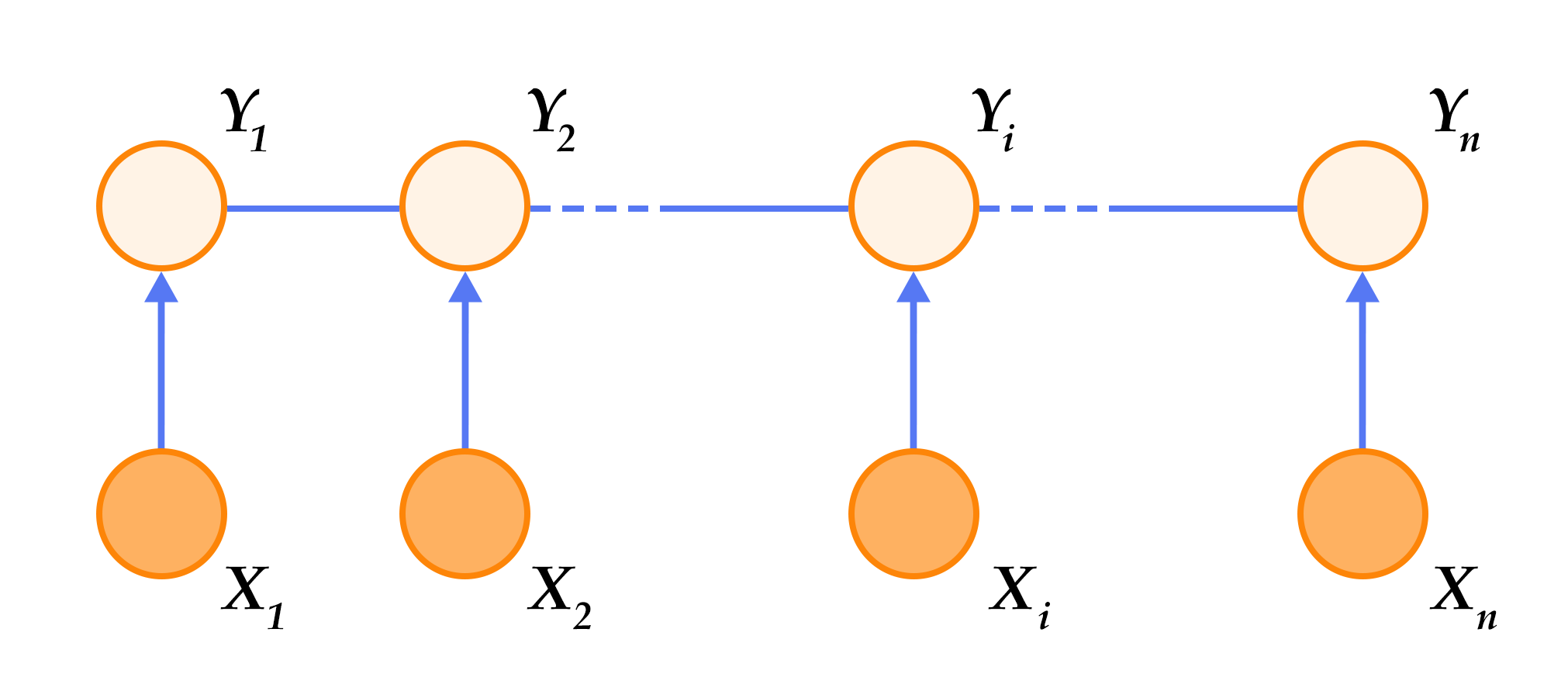

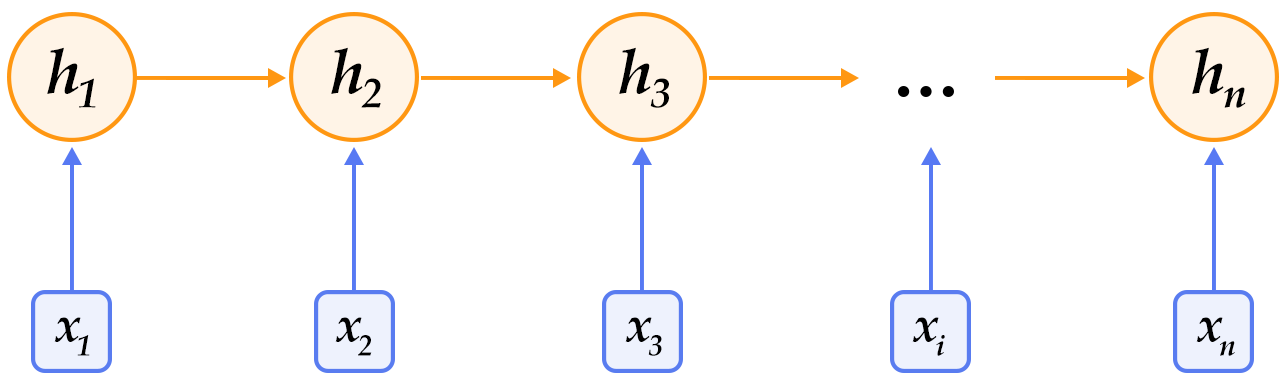

图1. 循环神经网络按时间展开的示意图

-循环神经网络按时间展开后如图1所示:在第`$t$`时刻,网络读入第`$t$`个输入`$x_t$`(向量表示)及前一时刻隐层的状态值`$h_{t-1}$`(向量表示,`$h_0$`一般初始化为`$0$`向量),计算得出本时刻隐层的状态值`$h_t$`,重复这一步骤直至读完所有输入。如果将循环神经网络所表示的函数记为`$f$`,则其公式可表示为:

+循环神经网络按时间展开后如图1所示:在第$t$时刻,网络读入第$t$个输入$x_t$(向量表示)及前一时刻隐层的状态值$h_{t-1}$(向量表示,$h_0$一般初始化为$0$向量),计算得出本时刻隐层的状态值$h_t$,重复这一步骤直至读完所有输入。如果将循环神经网络所表示的函数记为$f$,则其公式可表示为:

$$h_t=f(x_t,h_{t-1})=\sigma(W_{xh}x_t+W_{hh}h_{t-1}+b_h)$$

-其中`$W_{xh}$`是输入到隐层的矩阵参数,`$W_{hh}$`是隐层到隐层的矩阵参数,`$b_h$`为隐层的偏置向量(bias)参数,`$\sigma$`为`$sigmoid$`函数。

+其中$W_{xh}$是输入到隐层的矩阵参数,$W_{hh}$是隐层到隐层的矩阵参数,$b_h$为隐层的偏置向量(bias)参数,$\sigma$为$sigmoid$函数。

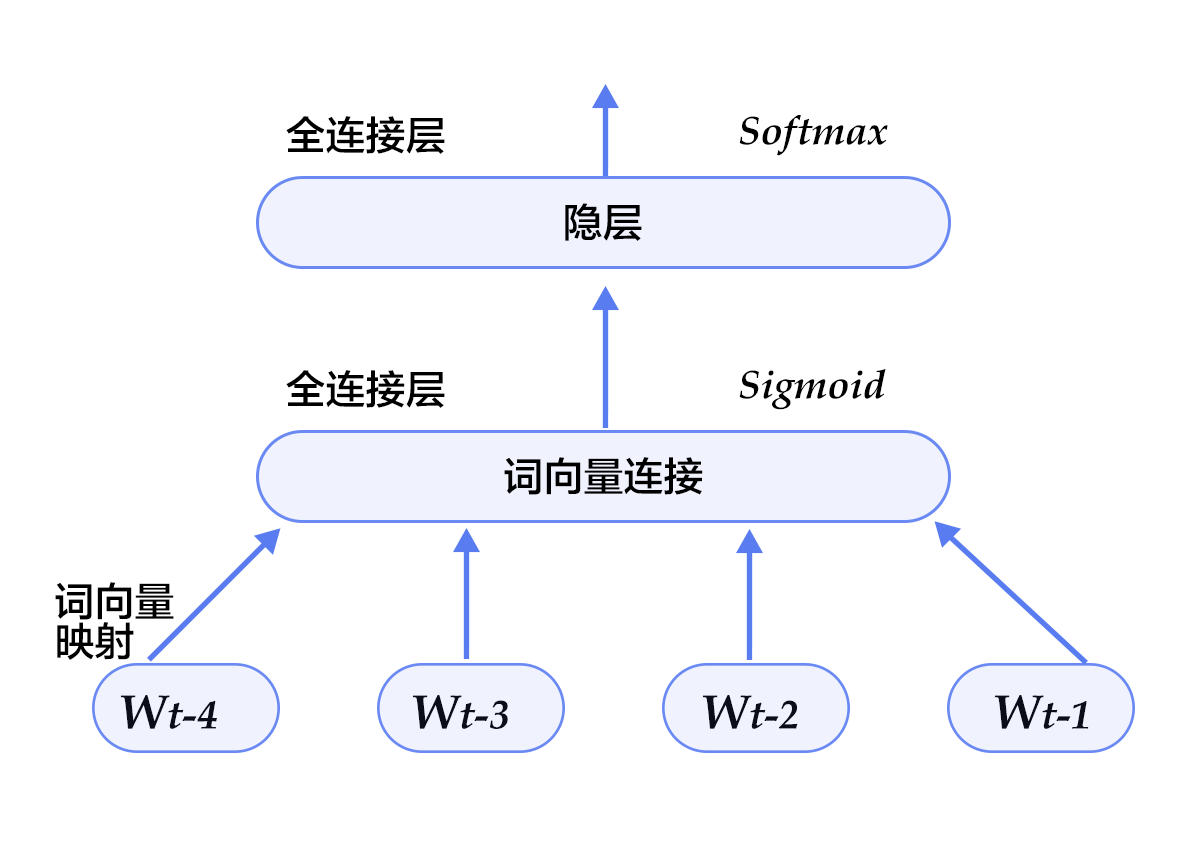

-在处理自然语言时,一般会先将词(one-hot表示)映射为其词向量(word embedding)表示,然后再作为循环神经网络每一时刻的输入`$x_t$`。此外,可以根据实际需要的不同在循环神经网络的隐层上连接其它层。如,可以把一个循环神经网络的隐层输出连接至下一个循环神经网络的输入构建深层(deep or stacked)循环神经网络,或者提取最后一个时刻的隐层状态作为句子表示进而使用分类模型等等。

+在处理自然语言时,一般会先将词(one-hot表示)映射为其词向量(word embedding)表示,然后再作为循环神经网络每一时刻的输入$x_t$。此外,可以根据实际需要的不同在循环神经网络的隐层上连接其它层。如,可以把一个循环神经网络的隐层输出连接至下一个循环神经网络的输入构建深层(deep or stacked)循环神经网络,或者提取最后一个时刻的隐层状态作为句子表示进而使用分类模型等等。

### 长短期记忆网络(LSTM)

-对于较长的序列数据,循环神经网络的训练过程中容易出现梯度消失或爆炸现象\[[6](#参考文献)\]。为了解决这一问题,Hochreiter S, Schmidhuber J. (1997)提出了LSTM(long short term memory\[[5](#参考文献)\])。

+对于较长的序列数据,循环神经网络的训练过程中容易出现梯度消失或爆炸现象\[[6](#参考文献)\]。为了解决这一问题,Hochreiter S, Schmidhuber J. (1997)提出了LSTM(long short term memory\[[5](#参考文献)\])。

-相比于简单的循环神经网络,LSTM增加了记忆单元`$c$`、输入门`$i$`、遗忘门`$f$`及输出门`$o$`。这些门及记忆单元组合起来大大提升了循环神经网络处理长序列数据的能力。若将基于LSTM的循环神经网络表示的函数记为`$F$`,则其公式为:

+相比于简单的循环神经网络,LSTM增加了记忆单元$c$、输入门$i$、遗忘门$f$及输出门$o$。这些门及记忆单元组合起来大大提升了循环神经网络处理长序列数据的能力。若将基于LSTM的循环神经网络表示的函数记为$F$,则其公式为:

$$ h_t=F(x_t,h_{t-1})$$

-`$F$`由下列公式组合而成\[[7](#参考文献)\]:

+$F$由下列公式组合而成\[[7](#参考文献)\]:

$$ i_t = \sigma{(W_{xi}x_t+W_{hi}h_{t-1}+W_{ci}c_{t-1}+b_i)} $$

$$ f_t = \sigma(W_{xf}x_t+W_{hf}h_{t-1}+W_{cf}c_{t-1}+b_f) $$

$$ c_t = f_t\odot c_{t-1}+i_t\odot tanh(W_{xc}x_t+W_{hc}h_{t-1}+b_c) $$

$$ o_t = \sigma(W_{xo}x_t+W_{ho}h_{t-1}+W_{co}c_{t}+b_o) $$

$$ h_t = o_t\odot tanh(c_t) $$

-其中,`$i_t, f_t, c_t, o_t$`分别表示输入门,遗忘门,记忆单元及输出门的向量值,带角标的`$W$`及`$b$`为模型参数,`$tanh$`为双曲正切函数,`$\odot$`表示逐元素(elementwise)的乘法操作。输入门控制着新输入进入记忆单元`$c$`的强度,遗忘门控制着记忆单元维持上一时刻值的强度,输出门控制着输出记忆单元的强度。三种门的计算方式类似,但有着完全不同的参数,它们各自以不同的方式控制着记忆单元`$c$`,如图2所示:

+其中,$i_t, f_t, c_t, o_t$分别表示输入门,遗忘门,记忆单元及输出门的向量值,带角标的$W$及$b$为模型参数,$tanh$为双曲正切函数,$\odot$表示逐元素(elementwise)的乘法操作。输入门控制着新输入进入记忆单元$c$的强度,遗忘门控制着记忆单元维持上一时刻值的强度,输出门控制着输出记忆单元的强度。三种门的计算方式类似,但有着完全不同的参数,它们各自以不同的方式控制着记忆单元$c$,如图2所示:

-

-图2. 时刻`$t$`的LSTM [7]

+

+图2. 时刻$t$的LSTM [7]

LSTM通过给简单的循环神经网络增加记忆及控制门的方式,增强了其处理远距离依赖问题的能力。类似原理的改进还有Gated Recurrent Unit (GRU)\[[8](#参考文献)\],其设计更为简洁一些。**这些改进虽然各有不同,但是它们的宏观描述却与简单的循环神经网络一样(如图2所示),即隐状态依据当前输入及前一时刻的隐状态来改变,不断地循环这一过程直至输入处理完毕:**

$$ h_t=Recrurent(x_t,h_{t-1})$$

-其中,`$Recrurent$`可以表示简单的循环神经网络、GRU或LSTM。

+其中,$Recrurent$可以表示简单的循环神经网络、GRU或LSTM。

### 栈式双向LSTM(Stacked Bidirectional LSTM)

-对于正常顺序的循环神经网络,`$h_t$`包含了`$t$`时刻之前的输入信息,也就是上文信息。同样,为了得到下文信息,我们可以使用反方向(将输入逆序处理)的循环神经网络。结合构建深层循环神经网络的方法(深层神经网络往往能得到更抽象和高级的特征表示),我们可以通过构建更加强有力的基于LSTM的栈式双向循环神经网络\[[9](#参考文献)\],来对时序数据进行建模。

+对于正常顺序的循环神经网络,$h_t$包含了$t$时刻之前的输入信息,也就是上文信息。同样,为了得到下文信息,我们可以使用反方向(将输入逆序处理)的循环神经网络。结合构建深层循环神经网络的方法(深层神经网络往往能得到更抽象和高级的特征表示),我们可以通过构建更加强有力的基于LSTM的栈式双向循环神经网络\[[9](#参考文献)\],来对时序数据进行建模。

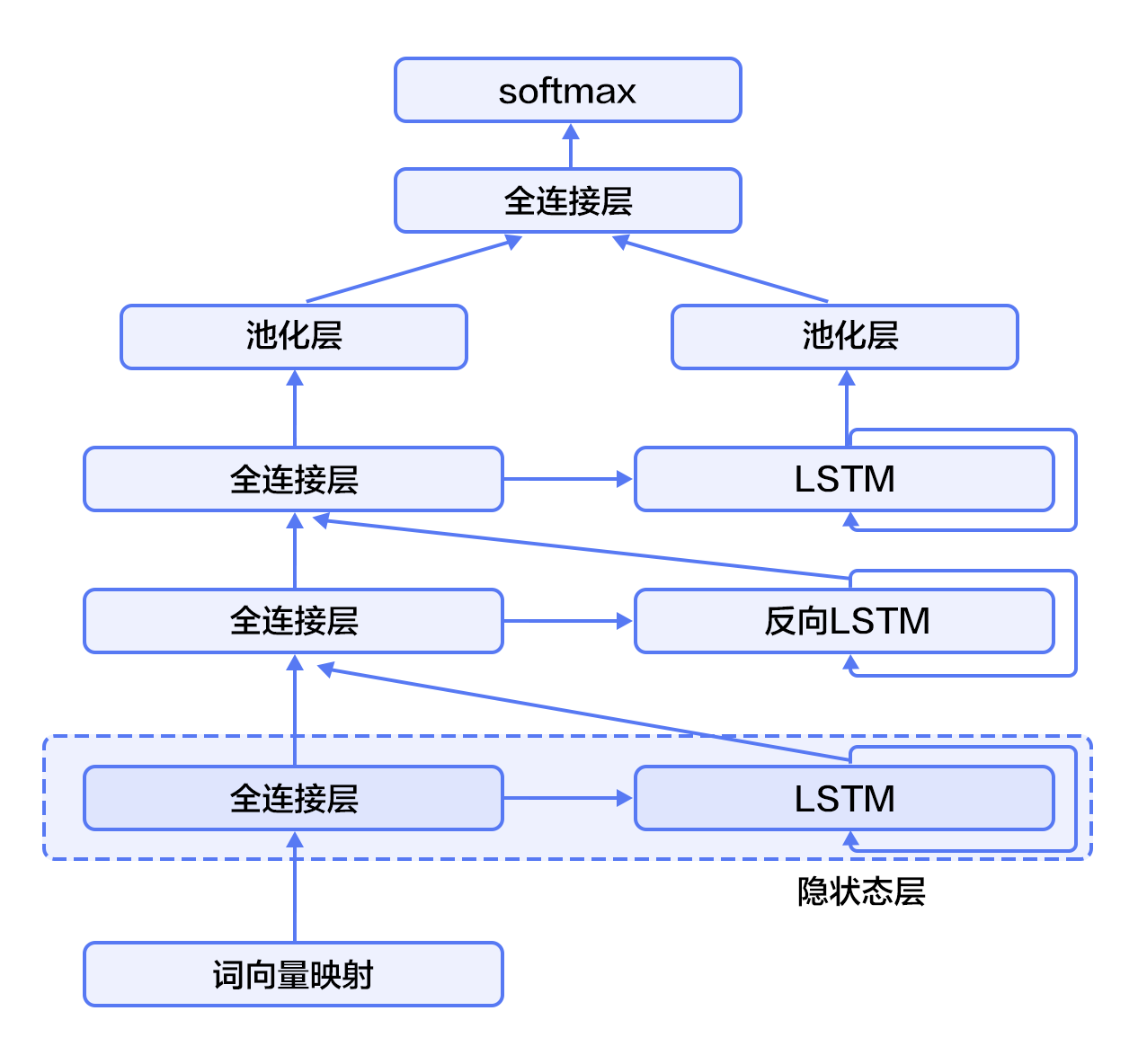

如图3所示(以三层为例),奇数层LSTM正向,偶数层LSTM反向,高一层的LSTM使用低一层LSTM及之前所有层的信息作为输入,对最高层LSTM序列使用时间维度上的最大池化即可得到文本的定长向量表示(这一表示充分融合了文本的上下文信息,并且对文本进行了深层次抽象),最后我们将文本表示连接至softmax构建分类模型。

-

+

图3. 栈式双向LSTM用于文本分类

@@ -94,11 +94,11 @@ $$ h_t=Recrurent(x_t,h_{t-1})$$

```text

aclImdb

|- test

-|-- neg

-|-- pos

+ |-- neg

+ |-- pos

|- train

-|-- neg

-|-- pos

+ |-- neg

+ |-- pos

```

Paddle在`dataset/imdb.py`中提实现了imdb数据集的自动下载和读取,并提供了读取字典、训练数据、测试数据等API。

@@ -107,6 +107,7 @@ Paddle在`dataset/imdb.py`中提实现了imdb数据集的自动下载和读取

在该示例中,我们实现了两种文本分类算法,分别基于[推荐系统](https://github.com/PaddlePaddle/book/tree/develop/05.recommender_system)一节介绍过的文本卷积神经网络,以及[栈式双向LSTM](#栈式双向LSTM(Stacked Bidirectional LSTM))。我们首先引入要用到的库和定义全局变量:

```python

+from __future__ import print_function

import paddle

import paddle.fluid as fluid

from functools import partial

@@ -115,6 +116,7 @@ import numpy as np

CLASS_DIM = 2

EMB_DIM = 128

HID_DIM = 512

+STACKED_NUM = 3

BATCH_SIZE = 128

USE_GPU = False

```

@@ -126,23 +128,23 @@ USE_GPU = False

```python

def convolution_net(data, input_dim, class_dim, emb_dim, hid_dim):

-emb = fluid.layers.embedding(

-input=data, size=[input_dim, emb_dim], is_sparse=True)

-conv_3 = fluid.nets.sequence_conv_pool(

-input=emb,

-num_filters=hid_dim,

-filter_size=3,

-act="tanh",

-pool_type="sqrt")

-conv_4 = fluid.nets.sequence_conv_pool(

-input=emb,

-num_filters=hid_dim,

-filter_size=4,

-act="tanh",

-pool_type="sqrt")

-prediction = fluid.layers.fc(

-input=[conv_3, conv_4], size=class_dim, act="softmax")

-return prediction

+ emb = fluid.layers.embedding(

+ input=data, size=[input_dim, emb_dim], is_sparse=True)

+ conv_3 = fluid.nets.sequence_conv_pool(

+ input=emb,

+ num_filters=hid_dim,

+ filter_size=3,

+ act="tanh",

+ pool_type="sqrt")

+ conv_4 = fluid.nets.sequence_conv_pool(

+ input=emb,

+ num_filters=hid_dim,

+ filter_size=4,

+ act="tanh",

+ pool_type="sqrt")

+ prediction = fluid.layers.fc(

+ input=[conv_3, conv_4], size=class_dim, act="softmax")

+ return prediction

```

网络的输入`input_dim`表示的是词典的大小,`class_dim`表示类别数。这里,我们使用[`sequence_conv_pool`](https://github.com/PaddlePaddle/Paddle/blob/develop/python/paddle/trainer_config_helpers/networks.py) API实现了卷积和池化操作。

@@ -154,27 +156,26 @@ return prediction

```python

def stacked_lstm_net(data, input_dim, class_dim, emb_dim, hid_dim, stacked_num):

-emb = fluid.layers.embedding(

-input=data, size=[input_dim, emb_dim], is_sparse=True)

+ emb = fluid.layers.embedding(

+ input=data, size=[input_dim, emb_dim], is_sparse=True)

-fc1 = fluid.layers.fc(input=emb, size=hid_dim)

-lstm1, cell1 = fluid.layers.dynamic_lstm(input=fc1, size=hid_dim)

+ fc1 = fluid.layers.fc(input=emb, size=hid_dim)

+ lstm1, cell1 = fluid.layers.dynamic_lstm(input=fc1, size=hid_dim)

-inputs = [fc1, lstm1]

+ inputs = [fc1, lstm1]

-for i in range(2, stacked_num + 1):

-fc = fluid.layers.fc(input=inputs, size=hid_dim)

-lstm, cell = fluid.layers.dynamic_lstm(

-input=fc, size=hid_dim, is_reverse=(i % 2) == 0)

-inputs = [fc, lstm]

+ for i in range(2, stacked_num + 1):

+ fc = fluid.layers.fc(input=inputs, size=hid_dim)

+ lstm, cell = fluid.layers.dynamic_lstm(

+ input=fc, size=hid_dim, is_reverse=(i % 2) == 0)

+ inputs = [fc, lstm]

-fc_last = fluid.layers.sequence_pool(input=inputs[0], pool_type='max')

-lstm_last = fluid.layers.sequence_pool(input=inputs[1], pool_type='max')

+ fc_last = fluid.layers.sequence_pool(input=inputs[0], pool_type='max')