Commit 81825474, authored May 06, 2020 by hong19860320, committed by GitHub on May 06, 2020

[Doc] Add baidu XPU doc and refine MTK and RK doc (#3551) (#3553)

Parent: 2c1c8093

Showing 4 changed files with 254 additions and 10 deletions (+254, -10)

- docs/demo_guides/baidu_xpu.md (+243, -0)
- docs/demo_guides/mediatek_apu.md (+2, -2)
- docs/demo_guides/rockchip_npu.md (+8, -8)
- docs/index.rst (+1, -0)

docs/demo_guides/baidu_xpu.md (new file, mode 0 → 100644)

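# Deploying inference with PaddleLite on Baidu XPU

Paddle Lite supports inference deployment with Baidu XPU on x86 and ARM servers (for example, Phytium FT-2000+/64).

Two integration modes are currently supported: kernel and subgraph. The subgraph mode works like the earlier Huawei NPU integration: Paddle Lite loads and analyzes the Paddle model, converts the Paddle operators into XTCL network-building API calls to construct the network, and then generates and executes the model online.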

## 支持现状

### 已支持的芯片

-

昆仑818-100(推理芯片)

-

昆仑818-300(训练芯片)

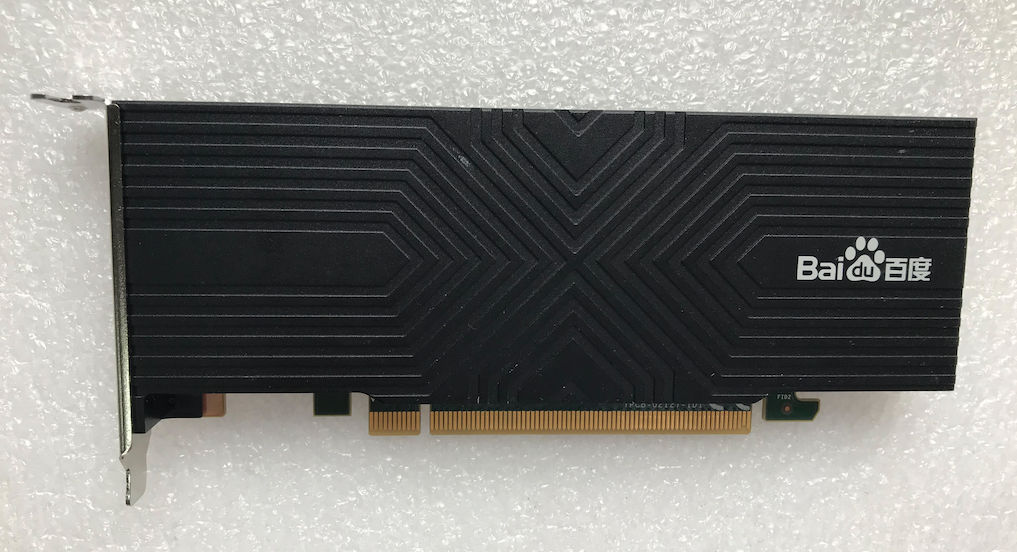

### 已支持的设备

-

K100/K200昆仑AI加速卡

### Supported Paddle models

- [ResNet50](https://paddlelite-demo.bj.bcebos.com/models/resnet50_fp32_224_fluid.tar.gz)
- [BERT](https://paddlelite-demo.bj.bcebos.com/models/bert_fp32_fluid.tar.gz)
- [ERNIE](https://paddlelite-demo.bj.bcebos.com/models/ernie_fp32_fluid.tar.gz)
- YOLOv3
- Mask R-CNN
- Faster R-CNN
- UNet
- SENet
- SSD
- Baidu internal business models (confidential, so the details cannot be disclosed)
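
Only three of the models above come with a public download link. As a minimal sketch, the helper below maps a short name to the corresponding URL from the list and unpacks the archive. The short names `resnet50`, `bert`, and `ernie` and the function names are hypothetical labels chosen for illustration; only the URLs come from this guide.

```shell
#!/usr/bin/env bash
# Base URL taken from the model links in the list above.
MODEL_BASE_URL="https://paddlelite-demo.bj.bcebos.com/models"

# model_url NAME: print the download URL for one of the three linked models.
# The short names are hypothetical labels used only in this sketch.
model_url() {
  case "$1" in
    resnet50) echo "${MODEL_BASE_URL}/resnet50_fp32_224_fluid.tar.gz" ;;
    bert)     echo "${MODEL_BASE_URL}/bert_fp32_fluid.tar.gz" ;;
    ernie)    echo "${MODEL_BASE_URL}/ernie_fp32_fluid.tar.gz" ;;
    *)        echo "no public download link for: $1" >&2; return 1 ;;
  esac
}

# fetch_model NAME: download the archive and unpack it in the current directory.
fetch_model() {
  local url
  url="$(model_url "$1")" || return 1
  wget "$url" && tar -zxvf "$(basename "$url")"
}
```

For example, `fetch_model resnet50` would download and extract the ResNet50 archive, while `fetch_model yolov3` fails because the list gives no link for that model.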

### Supported (or partially supported) Paddle operators (kernel mode)

- scale
- relu
- tanh
- sigmoid
- stack
- matmul
- pool2d
- slice
- lookup_table
- elementwise_add
- elementwise_sub
- cast
- batch_norm
- mul
- layer_norm
- softmax
- conv2d
- io_copy
- io_copy_once
- __xpu__fc
- __xpu__multi_encoder
- __xpu__resnet50
- __xpu__embedding_with_eltwise_add

### Supported (or partially supported) Paddle operators (subgraph/XTCL mode)

- relu
- tanh
- conv2d
- depthwise_conv2d
- elementwise_add
- pool2d
- softmax
- mul
- batch_norm
- stack
- gather
- scale
- lookup_table
- slice
- transpose
- transpose2
- reshape
- reshape2
- layer_norm
- gelu
- dropout
- matmul
- cast
- yolo_box
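
Before trying the subgraph mode on a new model, it can help to compare the model's operator types against the list above. The sketch below hard-codes that list and prints any operator name that is missing from it. The function and variable names are hypothetical, and because the list covers "supported or partially supported" operators, a clean result does not guarantee that every attribute combination works.

```shell
#!/usr/bin/env bash
# Operators listed above for the subgraph (XTCL) mode.
XTCL_OPS="relu tanh conv2d depthwise_conv2d elementwise_add pool2d softmax mul
batch_norm stack gather scale lookup_table slice transpose transpose2 reshape
reshape2 layer_norm gelu dropout matmul cast yolo_box"

# unsupported_xtcl_ops OP...: print each argument that is not in XTCL_OPS.
unsupported_xtcl_ops() {
  local op known found
  for op in "$@"; do
    found=0
    # Unquoted on purpose: split the list on whitespace.
    for known in $XTCL_OPS; do
      if [ "$op" = "$known" ]; then
        found=1
        break
      fi
    done
    [ "$found" -eq 1 ] || echo "$op"
  done
}
```

Calling `unsupported_xtcl_ops conv2d sigmoid gelu` prints `sigmoid`, which appears in the kernel-mode list but not in the subgraph list.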

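## Reference demo

### Test device (K100 Kunlun AI accelerator card)

### Preparing the device environment

- K100/K200 Kunlun AI accelerator card [specification sheet](https://paddlelite-demo.bj.bcebos.com/devices/baidu/K100_K200_spec.pdf). For a more detailed specification or to purchase the product, contact Ouyang Jian at ouyangjian@baidu.com;
- The K100 is a full-length, half-height PCI-E card and the K200 is a full-length, full-height PCI-E card. Both require a PCI-E x16 slot and a separate 8-pin power cable;
- Install the K100/K200 driver. Ubuntu and CentOS are currently supported. Because the driver depends on the Linux kernel version, install the driver package that matches your kernel.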

### Preparing the local build environment

- To keep the build environment consistent, configure it following the Linux development environment described in [Source compilation](../user_guides/source_compile);
- Building the demo depends on OpenCV and CMake 3.10.3. Install them with the following commands:

```shell
$ sudo apt-get update
$ sudo apt-get install gcc g++ make wget unzip libopencv-dev pkg-config
$ wget https://www.cmake.org/files/v3.10/cmake-3.10.3.tar.gz
$ tar -zxvf cmake-3.10.3.tar.gz
$ cd cmake-3.10.3
$ ./configure
$ make
$ sudo make install
```
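
After installation it is worth confirming which CMake ends up on `PATH`. The sketch below compares the reported version with 3.10.3. Treating 3.10.3 as a minimum rather than an exact requirement is an assumption made here, the function names are hypothetical, and the comparison relies on GNU `sort -V`.

```shell
#!/usr/bin/env bash
# version_ge A B: succeed when version A >= version B (uses GNU `sort -V`).
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}

# check_cmake: report whether the cmake on PATH is at least 3.10.3.
# Assumption: a newer CMake is acceptable; the guide only names 3.10.3.
check_cmake() {
  local have
  # The first line of `cmake --version` looks like "cmake version 3.10.3".
  have="$(cmake --version 2>/dev/null | awk 'NR==1 {print $3}')"
  if [ -n "$have" ] && version_ge "$have" 3.10.3; then
    echo "cmake $have is new enough"
  else
    echo "cmake missing or older than 3.10.3" >&2
    return 1
  fi
}
```

Running `check_cmake` after `sudo make install` gives a quick signal that the new binary, not an older distribution package, is the one being picked up.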

### Running the image classification demo

- Download the demo from [https://paddlelite-demo.bj.bcebos.com/devices/baidu/PaddleLite-linux-demo.tar.gz](https://paddlelite-demo.bj.bcebos.com/devices/baidu/PaddleLite-linux-demo.tar.gz). After extraction, the contents are as follows:

```shell
- PaddleLite-linux-demo
  - image_classification_demo
    - assets
      - images
        - tabby_cat.jpg # Test image
      - labels
        - synset_words.txt # Label file for the 1000 classes
      - models
        - resnet50_fp32_224_fluid # ResNet50 float32 model in Paddle fluid non-combined format
          - __model__ # Paddle fluid model topology file; drag it into https://lutzroeder.github.io/netron/ to visualize the network structure
          - bn2a_branch1_mean # Paddle fluid model parameter file
          - bn2a_branch1_scale
          ...
    - shell
      - CMakeLists.txt # CMake script for the demo
      - build
        - image_classification_demo # Prebuilt demo binary for amd64
      - image_classification_demo.cc # Demo source code
      - build.sh # Demo build script
      - run.sh # Demo run script
  - libs
    - PaddleLite
      - amd64
        - include # PaddleLite header files
        - lib
          - libiomp5.so # Intel OpenMP library
          - libmklml_intel.so # Intel MKL library
          - libxpuapi.so # XPU API library, providing device management and operator implementations
          - libxpurt.so # XPU runtime library
          - libpaddle_full_api_shared.so # Prebuilt PaddleLite full API library
      - arm64
        - include # PaddleLite header files
        - lib
          - libxpuapi.so # XPU API library, providing device management and operator implementations
          - libxpurt.so # XPU runtime library
          - libpaddle_full_api_shared.so # Prebuilt PaddleLite full API library
```
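
A quick way to confirm that the archive extracted completely is to check for the library files named in the listing. This is a minimal sketch: the function name is hypothetical, and it assumes the XPU runtime library file is named `libxpurt.so`.

```shell
#!/usr/bin/env bash
# missing_demo_files DEMO_ROOT ARCH: print every expected path that is absent.
# ARCH is amd64 or arm64, matching the directory names in the listing.
missing_demo_files() {
  local root="$1" arch="$2" f
  local base="$root/libs/PaddleLite/$arch"
  # Assumption: the XPU runtime library is named libxpurt.so.
  local files="libxpuapi.so libxpurt.so libpaddle_full_api_shared.so"
  # The Intel OpenMP and MKL libraries are listed for amd64 only.
  if [ "$arch" = "amd64" ]; then
    files="$files libiomp5.so libmklml_intel.so"
  fi
  for f in $files; do
    [ -e "$base/lib/$f" ] || echo "$base/lib/$f"
  done
  [ -d "$base/include" ] || echo "$base/include"
}
```

An empty result from `missing_demo_files PaddleLite-linux-demo amd64` means every listed library and the header directory were found.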

- Go to PaddleLite-linux-demo/image_classification_demo/shell and run ./run.sh amd64 directly:

```shell
$ cd PaddleLite-linux-demo/image_classification_demo/shell
$ ./run.sh amd64 # The amd64 build/image_classification_demo is prebuilt, so it runs without recompiling the demo.
$ ./run.sh arm64 # Run ./build.sh arm64 on the arm64 (FT-2000+/64) server before using this command.
...
AUTOTUNE:(12758016, 16, 1, 2048, 7, 7, 512, 1, 1, 1, 1, 0, 0, 0) = 1by1_bsp(1, 32, 128, 128)
Find Best Result in 150 choices, avg-conv-op-time = 40 us
[INFO][XPUAPI][/home/qa_work/xpu_workspace/xpu_build_dailyjob/api_root/baidu/xpu/api/src/wrapper/conv.cpp:274] Start Tuning: (12758016, 16, 1, 512, 7, 7, 512, 3, 3, 1, 1, 1, 1, 0)
AUTOTUNE:(12758016, 16, 1, 512, 7, 7, 512, 3, 3, 1, 1, 1, 1, 0) = wpinned_bsp(1, 171, 16, 128)
Find Best Result in 144 choices, avg-conv-op-time = 79 us
I0502 22:34:18.176113 15876 io_copy_compute.cc:75] xpu to host, copy size 4000
I0502 22:34:18.176406 15876 io_copy_compute.cc:36] host to xpu, copy size 602112
I0502 22:34:18.176697 15876 io_copy_compute.cc:75] xpu to host, copy size 4000
iter 0 cost: 2.116000 ms
I0502 22:34:18.178530 15876 io_copy_compute.cc:36] host to xpu, copy size 602112
I0502 22:34:18.178792 15876 io_copy_compute.cc:75] xpu to host, copy size 4000
iter 1 cost: 2.101000 ms
I0502 22:34:18.180634 15876 io_copy_compute.cc:36] host to xpu, copy size 602112
I0502 22:34:18.180881 15876 io_copy_compute.cc:75] xpu to host, copy size 4000
iter 2 cost: 2.089000 ms
I0502 22:34:18.182726 15876 io_copy_compute.cc:36] host to xpu, copy size 602112
I0502 22:34:18.182976 15876 io_copy_compute.cc:75] xpu to host, copy size 4000
iter 3 cost: 2.085000 ms
I0502 22:34:18.184814 15876 io_copy_compute.cc:36] host to xpu, copy size 602112
I0502 22:34:18.185068 15876 io_copy_compute.cc:75] xpu to host, copy size 4000
iter 4 cost: 2.101000 ms
warmup: 1 repeat: 5, average: 2.098400 ms, max: 2.116000 ms, min: 2.085000 ms
results: 3
Top0 tabby, tabby cat - 0.689418
Top1 tiger cat - 0.190557
Top2 Egyptian cat - 0.112354
Preprocess time: 1.553000 ms
Prediction time: 2.098400 ms
Postprocess time: 0.081000 ms
```
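
The summary line in the log can be reproduced from the individual `iter N cost: X ms` lines, which is handy when comparing several saved runs. The following is a sketch only: the function name is hypothetical, and it assumes the per-iteration lines keep the format shown in the log.

```shell
#!/usr/bin/env bash
# summarize_iters: read demo output on stdin and summarize the
# "iter N cost: X ms" lines; all other log lines are ignored.
summarize_iters() {
  awk '
    $1 == "iter" && $3 == "cost:" {
      t = $4 + 0              # force numeric interpretation
      sum += t
      n++
      if (n == 1 || t > max) max = t
      if (n == 1 || t < min) min = t
    }
    END {
      if (n == 0) { print "no iter lines found"; exit 1 }
      printf "repeat: %d, average: %.6f ms, max: %.6f ms, min: %.6f ms\n", n, sum / n, max, min
    }'
}
```

For instance, `./run.sh amd64 2>&1 | summarize_iters` would print one summary line for the run. The copy sizes in the log are also consistent with the model's shapes: 1 × 3 × 224 × 224 × 4 = 602112 bytes and 1000 × 4 = 4000 bytes, assuming a single 3-channel float32 input image and 1000 float32 class scores.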

- To use a different test image, copy it into PaddleLite-linux-demo/image_classification_demo/assets/images and set IMAGE_NAME in run.sh to that file name;
- To rebuild the demo, run ./build.sh amd64 or ./build.sh arm64.

```shell
$ cd PaddleLite-linux-demo/image_classification_demo/shell
$ ./build.sh amd64 # For amd64
$ ./build.sh arm64 # For arm64 (FT-2000+/64)
```
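
Switching the test image can be scripted rather than edited by hand. The sketch below assumes that run.sh contains a line of the form `IMAGE_NAME=...`; the guide names the variable but does not show the script, so that line format and the function name are assumptions.

```shell
#!/usr/bin/env bash
# set_image_name RUN_SH FILE: point run.sh at another image in assets/images.
# Assumption: run.sh holds a line starting with IMAGE_NAME= (not shown in the guide).
# FILE should be a plain file name without "|" or "&" characters.
set_image_name() {
  local script="$1" image="$2" tmp
  if ! grep -q '^IMAGE_NAME=' "$script"; then
    echo "no IMAGE_NAME= line in $script" >&2
    return 1
  fi
  tmp="$(mktemp)" || return 1
  # Rewrite through a temporary file, then copy back so the script keeps its mode bits.
  if sed "s|^IMAGE_NAME=.*|IMAGE_NAME=${image}|" "$script" > "$tmp"; then
    cat "$tmp" > "$script"
  fi
  rm -f "$tmp"
}
```

A typical sequence would be to copy the new picture into assets/images, call `set_image_name run.sh my_photo.jpg`, and then rerun `./run.sh amd64`.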

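### Updating the model

- Obtain the ResNet50 float32 model [resnet50_fp32_224_fluid](https://paddlelite-demo.bj.bcebos.com/models/resnet50_fp32_224_fluid.tar.gz) by training with Paddle Fluid or by converting with X2Paddle;
- Because XPU is usually deployed on the server side, the PaddleLite full API loads the original Paddle Fluid model for inference; the relevant parameters are set through CXXConfig.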

### Updating the Paddle Lite library with Baidu XPU support

- Download the PaddleLite source code;

```shell
$ git clone https://github.com/PaddlePaddle/Paddle-Lite.git
$ cd Paddle-Lite
$ git checkout <release-version-tag>
```

- Download xpu_toolchain for amd64 or arm64 (FT-2000+/64);

```shell
$ wget <URL_to_download_xpu_toolchain>
$ tar -xvf output.tar.gz
$ mv output xpu_toolchain
```

- Build full_publish for amd64 or arm64 (FT-2000+/64);

```shell
# For amd64: if the build fails because cxx11:: symbols cannot be found, switch gcc to version 4.8.
$ ./lite/tools/build.sh --build_xpu=ON --xpu_sdk_root=./xpu_toolchain x86

# For arm64 (FT-2000+/64)
$ ./lite/tools/build.sh --arm_os=armlinux --arm_abi=armv8 --arm_lang=gcc --build_extra=ON --build_xpu=ON --xpu_sdk_root=./xpu_toolchain --with_log=ON full_publish
```
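
The two build invocations differ only in their arguments, so a small wrapper can select them by architecture. This is a sketch under the assumption that it runs from the Paddle-Lite source root with `xpu_toolchain` unpacked there; the function names are hypothetical, and the flags are copied from the commands in this guide.

```shell
#!/usr/bin/env bash
# xpu_build_args ARCH: print the build.sh arguments this guide gives for ARCH.
xpu_build_args() {
  case "$1" in
    amd64)
      echo "--build_xpu=ON --xpu_sdk_root=./xpu_toolchain x86" ;;
    arm64)
      echo "--arm_os=armlinux --arm_abi=armv8 --arm_lang=gcc --build_extra=ON --build_xpu=ON --xpu_sdk_root=./xpu_toolchain --with_log=ON full_publish" ;;
    *)
      echo "unknown arch: $1 (expected amd64 or arm64)" >&2
      return 1 ;;
  esac
}

# build_xpu_lib ARCH: run the build from the Paddle-Lite source root.
build_xpu_lib() {
  local args
  args="$(xpu_build_args "$1")" || return 1
  # Word splitting of $args is intentional: each flag becomes one argument.
  ./lite/tools/build.sh $args
}
```

`build_xpu_lib amd64` then expands to the x86 command, and `build_xpu_lib arm64` to the armv8 one.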

- Replace the PaddleLite-linux-demo/libs/PaddleLite/amd64/include directory with the generated build.lite.x86/inference_lite_lib/cxx/include;
- Replace PaddleLite-linux-demo/libs/PaddleLite/amd64/lib/libpaddle_full_api_shared.so with the generated build.lite.x86/inference_lite_lib/cxx/lib/libpaddle_full_api_shared.so;
- Replace the PaddleLite-linux-demo/libs/PaddleLite/arm64/include directory with the generated build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.xpu/cxx/include;
- Replace PaddleLite-linux-demo/libs/PaddleLite/arm64/lib/libpaddle_full_api_shared.so with the generated build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.xpu/cxx/lib/libpaddle_full_api_shared.so.
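
The four replacement steps can be collected into one helper. The sketch below takes the build output directories from the steps above and assumes that, for both architectures, the shared library sits under `cxx/lib`. The function names and argument order are hypothetical.

```shell
#!/usr/bin/env bash
# built_cxx_dir LITE_ROOT ARCH: print the build output directory for ARCH.
built_cxx_dir() {
  case "$2" in
    amd64) echo "$1/build.lite.x86/inference_lite_lib/cxx" ;;
    arm64) echo "$1/build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.xpu/cxx" ;;
    *)     echo "unknown arch: $2" >&2; return 1 ;;
  esac
}

# install_xpu_libs LITE_ROOT DEMO_ROOT ARCH: replace the demo headers and
# the full API library with the freshly built ones.
install_xpu_libs() {
  local src dst
  src="$(built_cxx_dir "$1" "$3")" || return 1
  dst="$2/libs/PaddleLite/$3"
  # Assumption: the library is in cxx/lib for both architectures.
  if [ ! -d "$src/include" ] || [ ! -f "$src/lib/libpaddle_full_api_shared.so" ]; then
    echo "build output not found under $src" >&2
    return 1
  fi
  rm -rf "$dst/include"
  mkdir -p "$dst/lib"
  cp -r "$src/include" "$dst/include"
  cp "$src/lib/libpaddle_full_api_shared.so" "$dst/lib/"
}
```

For example, `install_xpu_libs ./Paddle-Lite ./PaddleLite-linux-demo amd64` refreshes the amd64 headers and library, after which `./build.sh amd64` rebuilds the demo against them.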

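## Other notes

- For more information about the related products, contact Ouyang Jian at ouyangjian@baidu.com;
- The Baidu Kunlun engineering team is continuing to adapt more Paddle operators in order to support more Paddle models.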

docs/demo_guides/mediatek_apu.md

...
@@ -7,7 +7,7 @@ Paddle Lite supports inference deployment on MTK APU.
 ### Supported chips
-- [MT8168](https://www.mediatek.cn/products/tablets/mt8168)/[MT8175](https://www.mediatek.cn/products/tablets/mt8175).
+- [MT8168](https://www.mediatek.cn/products/tablets/mt8168)/[MT8175](https://www.mediatek.cn/products/tablets/mt8175) and other smart chips.
 ### Supported devices
...
@@ -148,7 +148,7 @@ $ ./opt --model_dir=mobilenet_v1_int8_224_fluid \
 ```
 - Note: the model generated by opt only marks the Paddle operators that MTK APU supports; it does not actually produce an MTK APU model. Only at execution time are the marked Paddle operators converted into MTK Neuron adapter API calls to build the network, and the model is then generated and executed.
-### Updating the Paddle Lite library with RK NPU support
+### Updating the Paddle Lite library with MTK APU support
 - Download the PaddleLite source code and the APU DDK;
 ```shell
...

docs/demo_guides/rockchip_npu.md

...
@@ -52,10 +52,10 @@ Paddle Lite supports inference deployment on RK NPU.
 ### Running the image classification demo
-- Download the demo from [https://paddlelite-demo.bj.bcebos.com/devices/rockchip/PaddleLite-armlinux-demo.tar.gz](https://paddlelite-demo.bj.bcebos.com/devices/rockchip/PaddleLite-armlinux-demo.tar.gz). After extraction, the contents are as follows:
+- Download the demo from [https://paddlelite-demo.bj.bcebos.com/devices/rockchip/PaddleLite-linux-demo.tar.gz](https://paddlelite-demo.bj.bcebos.com/devices/rockchip/PaddleLite-linux-demo.tar.gz). After extraction, the contents are as follows:
 ```shell
-- PaddleLite-armlinux-demo
+- PaddleLite-linux-demo
   - image_classification_demo
     - assets
       - images
...
@@ -96,9 +96,9 @@ Paddle Lite supports inference deployment on RK NPU.
           - libpaddle_light_api_shared.so
 ```
-- Go to PaddleLite-armlinux-demo/image_classification_demo/shell and run ./run.sh arm64 directly. Note: run.sh cannot be executed inside a docker environment, otherwise the device cannot be found;
+- Go to PaddleLite-linux-demo/image_classification_demo/shell and run ./run.sh arm64 directly. Note: run.sh cannot be executed inside a docker environment, otherwise the device cannot be found;
 ```shell
-$ cd PaddleLite-armlinux-demo/image_classification_demo/shell
+$ cd PaddleLite-linux-demo/image_classification_demo/shell
 $ ./run.sh arm64 # For RK1808 EVB
 $ ./run.sh armhf # For RK1806 EVB
 ...
...
@@ -147,10 +147,10 @@ For RK1806 EVB
 $ ./lite/tools/build.sh --arm_os=armlinux --arm_abi=armv7 --arm_lang=gcc --build_extra=ON --with_log=ON --build_rknpu=ON --rknpu_ddk_root=./rknpu_ddk full_publish
 $ ./lite/tools/build.sh --arm_os=armlinux --arm_abi=armv7 --arm_lang=gcc --build_extra=ON --with_log=ON --build_rknpu=ON --rknpu_ddk_root=./rknpu_ddk tiny_publish
 ```
-- Replace the PaddleLite-armlinux-demo/libs/PaddleLite/arm64/include directory with the generated build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.rknpu/cxx/include;
-- Replace PaddleLite-armlinux-demo/libs/PaddleLite/arm64/lib/libpaddle_light_api_shared.so with the generated build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.rknpu/cxx/lib/libpaddle_light_api_shared.so;
-- Replace the PaddleLite-armlinux-demo/libs/PaddleLite/armhf/include directory with the generated build.lite.armlinux.armv7.gcc/inference_lite_lib.armlinux.armv7.rknpu/cxx/include;
-- Replace PaddleLite-armlinux-demo/libs/PaddleLite/armhf/lib/libpaddle_light_api_shared.so with the generated build.lite.armlinux.armv7.gcc/inference_lite_lib.armlinux.armv7.rknpu/cxx/lib/libpaddle_light_api_shared.so.
+- Replace the PaddleLite-linux-demo/libs/PaddleLite/arm64/include directory with the generated build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.rknpu/cxx/include;
+- Replace PaddleLite-linux-demo/libs/PaddleLite/arm64/lib/libpaddle_light_api_shared.so with the generated build.lite.armlinux.armv8.gcc/inference_lite_lib.armlinux.armv8.rknpu/cxx/lib/libpaddle_light_api_shared.so;
+- Replace the PaddleLite-linux-demo/libs/PaddleLite/armhf/include directory with the generated build.lite.armlinux.armv7.gcc/inference_lite_lib.armlinux.armv7.rknpu/cxx/include;
+- Replace PaddleLite-linux-demo/libs/PaddleLite/armhf/lib/libpaddle_light_api_shared.so with the generated build.lite.armlinux.armv7.gcc/inference_lite_lib.armlinux.armv7.rknpu/cxx/lib/libpaddle_light_api_shared.so.
 ## Other notes
...

docs/index.rst

...
@@ -54,6 +54,7 @@ Welcome to Paddle-Lite's documentation!
    demo_guides/opencl
    demo_guides/fpga
    demo_guides/npu
+   demo_guides/baidu_xpu
    demo_guides/rockchip_npu
    demo_guides/mediatek_apu
...
