Revert "add table eval and predict script" (#3062)


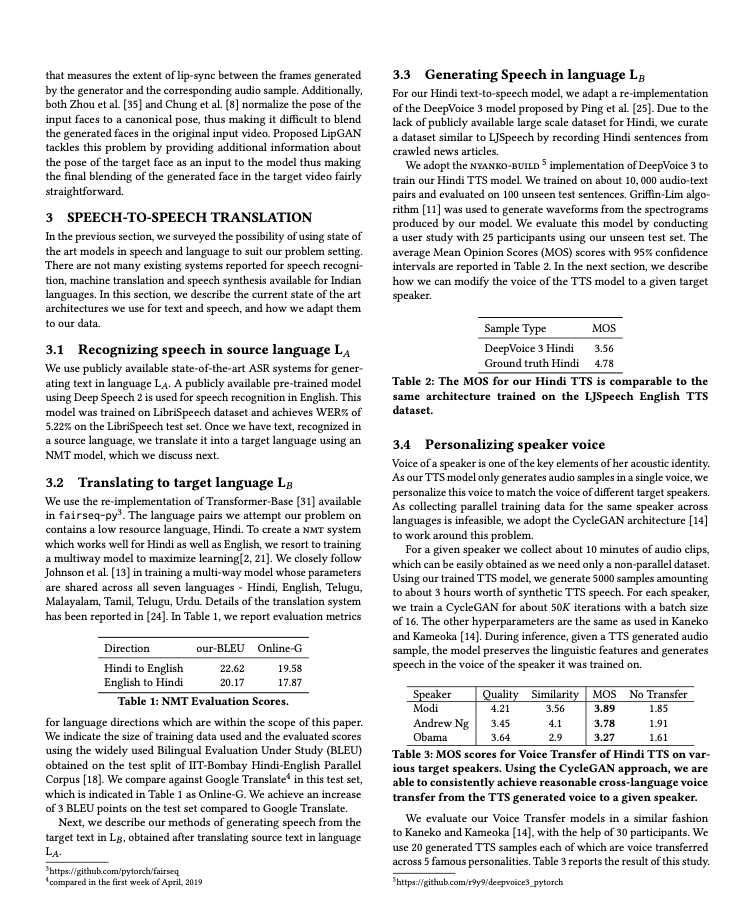

doc/table/1.png

Deleted

100644 → 0

262.8 KB

This diff is collapsed.

ppocr/utils/network.py

Deleted

100644 → 0

ppstructure/MANIFEST.in

Deleted

100644 → 0

ppstructure/__init__.py

Deleted

100644 → 0

ppstructure/layout/README.md

0 → 100644

ppstructure/layout/README_ch.md

0 → 100644

ppstructure/setup.py

Deleted

100644 → 0

ppstructure/table/__init__.py

Deleted

100644 → 0

ppstructure/table/matcher.py

Deleted

100755 → 0

This diff is collapsed.

ppstructure/utility.py

Deleted

100644 → 0

This diff is collapsed.