Merge branch 'develop' into enable_drop_in_average_and_max_layer

Showing

demo/mnist/api_train.py

0 → 100644

demo/mnist/mnist_util.py

0 → 100644

demo/quick_start/cluster/env.sh

0 → 100644

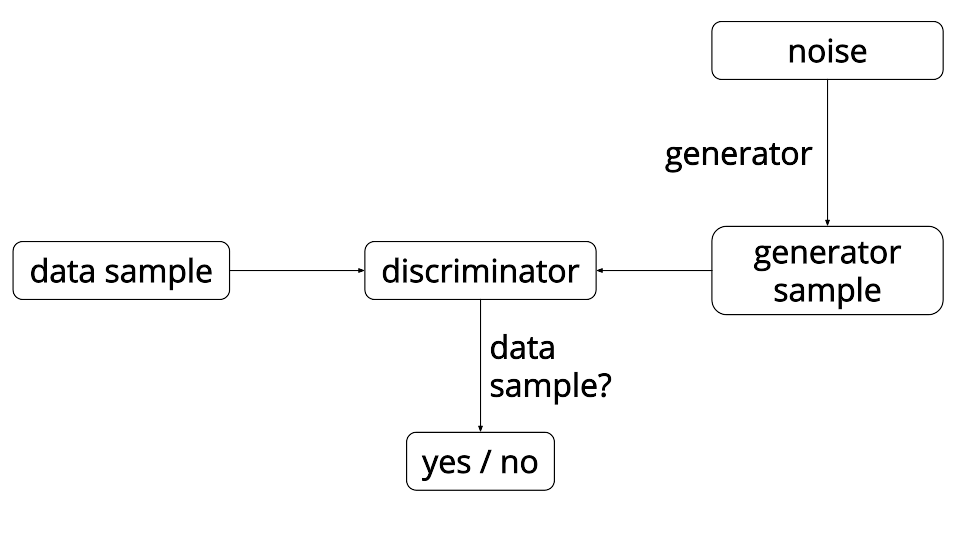

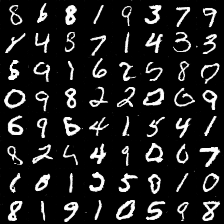

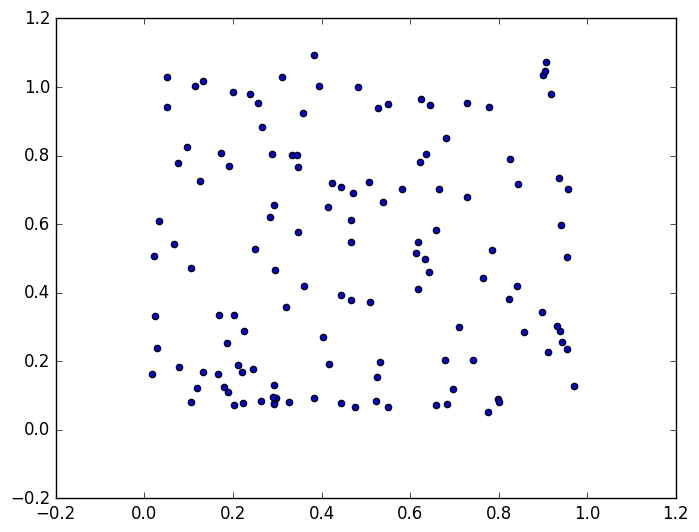

doc/tutorials/gan/gan.png

0 → 100644

32.5 KB

doc/tutorials/gan/index_en.md

0 → 100644

28.0 KB

20.1 KB

paddle/api/ParameterUpdater.cpp

0 → 100644