Merge branch 'develop' of https://github.com/PaddlePaddle/Paddle into feature/rewrite_vector

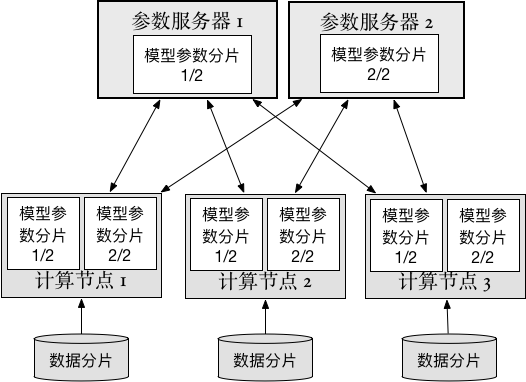

doc/howto/cluster/src/ps_cn.png

0 → 100644

33.1 KB

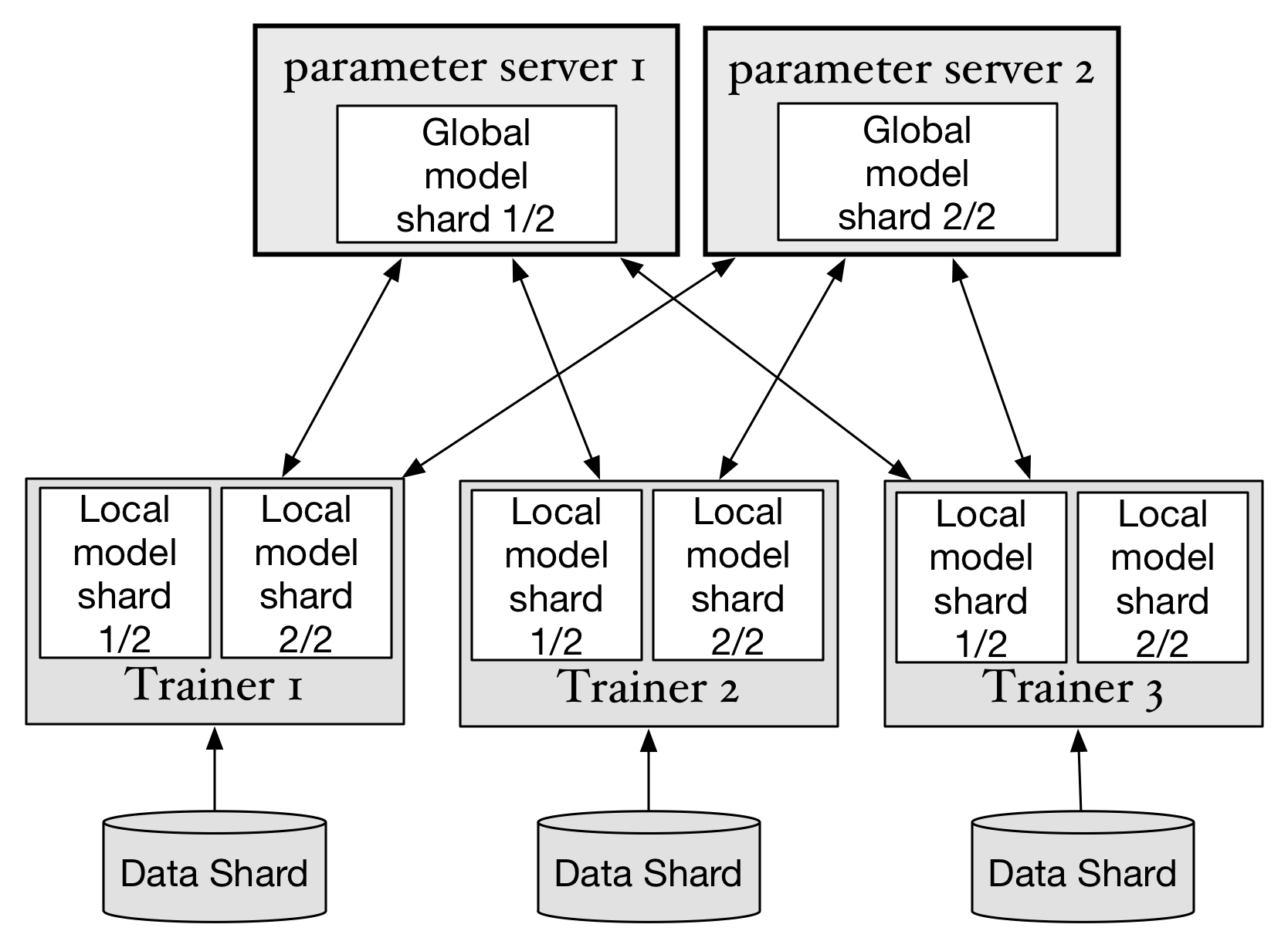

doc/howto/cluster/src/ps_en.png

0 → 100644

141.7 KB

paddle/operators/layer_norm_op.cu

0 → 100644