Merge branch 'develop' of https://github.com/PaddlePaddle/Paddle into feature/simplify_delay_logic

doc/mobile/CMakeLists.txt

0 → 100644

doc/mobile/index_cn.rst

0 → 100644

doc/mobile/index_en.rst

0 → 100644

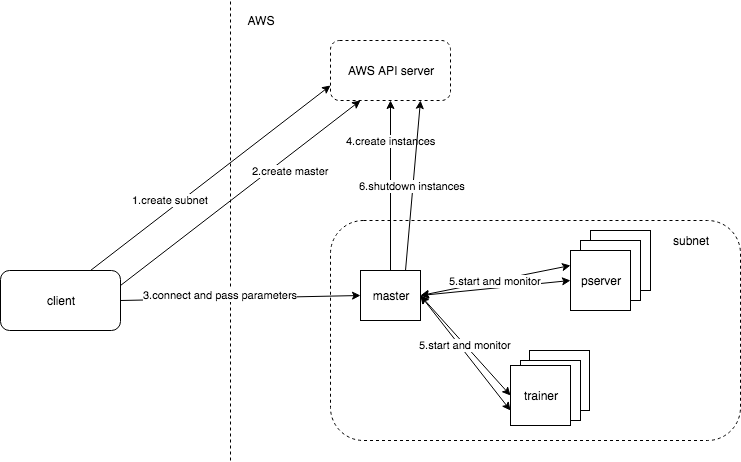

tools/aws_benchmarking/README.md

0 → 100644

39.8 KB