diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/.gitignore b/doc/fluid/new_docs/beginners_guide/basics/image_classification/.gitignore

deleted file mode 100644

index dc7c62b06287ad333dd41082e566b0553d3a5341..0000000000000000000000000000000000000000

--- a/doc/fluid/new_docs/beginners_guide/basics/image_classification/.gitignore

+++ /dev/null

@@ -1,8 +0,0 @@

-*.pyc

-train.log

-output

-data/cifar-10-batches-py/

-data/cifar-10-python.tar.gz

-data/*.txt

-data/*.list

-data/mean.meta

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/index.md b/doc/fluid/new_docs/beginners_guide/basics/image_classification/README.cn.md

similarity index 70%

rename from doc/fluid/new_docs/beginners_guide/basics/image_classification/index.md

rename to doc/fluid/new_docs/beginners_guide/basics/image_classification/README.cn.md

index ce0d2bb1dc0cf73151ee9aceea7e4d7b24af1926..8d645718e12e4d976a8e71de105e11f495191fbf 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/image_classification/index.md

+++ b/doc/fluid/new_docs/beginners_guide/basics/image_classification/README.cn.md

@@ -1,559 +1,576 @@

-

-# 图像分类

-

-本教程源代码目录在[book/image_classification](https://github.com/PaddlePaddle/book/tree/develop/03.image_classification), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书)。

-

-## 背景介绍

-

-图像相比文字能够提供更加生动、容易理解及更具艺术感的信息,是人们转递与交换信息的重要来源。在本教程中,我们专注于图像识别领域的一个重要问题,即图像分类。

-

-图像分类是根据图像的语义信息将不同类别图像区分开来,是计算机视觉中重要的基本问题,也是图像检测、图像分割、物体跟踪、行为分析等其他高层视觉任务的基础。图像分类在很多领域有广泛应用,包括安防领域的人脸识别和智能视频分析等,交通领域的交通场景识别,互联网领域基于内容的图像检索和相册自动归类,医学领域的图像识别等。

-

-

-一般来说,图像分类通过手工特征或特征学习方法对整个图像进行全部描述,然后使用分类器判别物体类别,因此如何提取图像的特征至关重要。在深度学习算法之前使用较多的是基于词袋(Bag of Words)模型的物体分类方法。词袋方法从自然语言处理中引入,即一句话可以用一个装了词的袋子表示其特征,袋子中的词为句子中的单词、短语或字。对于图像而言,词袋方法需要构建字典。最简单的词袋模型框架可以设计为**底层特征抽取**、**特征编码**、**分类器设计**三个过程。

-

-而基于深度学习的图像分类方法,可以通过有监督或无监督的方式**学习**层次化的特征描述,从而取代了手工设计或选择图像特征的工作。深度学习模型中的卷积神经网络(Convolution Neural Network, CNN)近年来在图像领域取得了惊人的成绩,CNN直接利用图像像素信息作为输入,最大程度上保留了输入图像的所有信息,通过卷积操作进行特征的提取和高层抽象,模型输出直接是图像识别的结果。这种基于"输入-输出"直接端到端的学习方法取得了非常好的效果,得到了广泛的应用。

-

-本教程主要介绍图像分类的深度学习模型,以及如何使用PaddlePaddle训练CNN模型。

-

-## 效果展示

-

-图像分类包括通用图像分类、细粒度图像分类等。图1展示了通用图像分类效果,即模型可以正确识别图像上的主要物体。

-

-

-

-图1. 通用图像分类展示

-

-

-

-图2展示了细粒度图像分类-花卉识别的效果,要求模型可以正确识别花的类别。

-

-

-

-图2. 细粒度图像分类展示

-

-

-

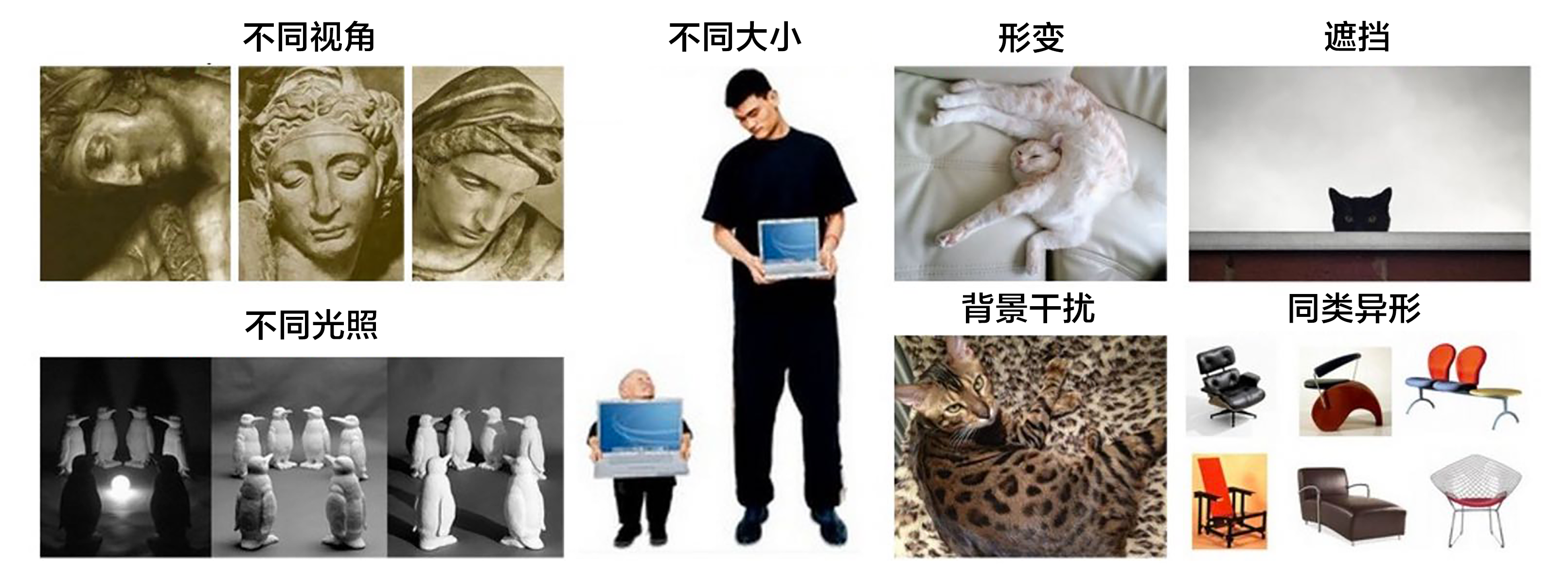

-一个好的模型既要对不同类别识别正确,同时也应该能够对不同视角、光照、背景、变形或部分遮挡的图像正确识别(这里我们统一称作图像扰动)。图3展示了一些图像的扰动,较好的模型会像聪明的人类一样能够正确识别。

-

-

-

-图3. 扰动图片展示[22]

-

-

-## 模型概览

-

-图像识别领域大量的研究成果都是建立在[PASCAL VOC](http://host.robots.ox.ac.uk/pascal/VOC/)、[ImageNet](http://image-net.org/)等公开的数据集上,很多图像识别算法通常在这些数据集上进行测试和比较。PASCAL VOC是2005年发起的一个视觉挑战赛,ImageNet是2010年发起的大规模视觉识别竞赛(ILSVRC)的数据集,在本章中我们基于这些竞赛的一些论文介绍图像分类模型。

-

-在2012年之前的传统图像分类方法可以用背景描述中提到的三步完成,但通常完整建立图像识别模型一般包括底层特征学习、特征编码、空间约束、分类器设计、模型融合等几个阶段。

-1). **底层特征提取**: 通常从图像中按照固定步长、尺度提取大量局部特征描述。常用的局部特征包括SIFT(Scale-Invariant Feature Transform, 尺度不变特征转换) \[[1](#参考文献)\]、HOG(Histogram of Oriented Gradient, 方向梯度直方图) \[[2](#参考文献)\]、LBP(Local Bianray Pattern, 局部二值模式) \[[3](#参考文献)\] 等,一般也采用多种特征描述子,防止丢失过多的有用信息。

-2). **特征编码**: 底层特征中包含了大量冗余与噪声,为了提高特征表达的鲁棒性,需要使用一种特征变换算法对底层特征进行编码,称作特征编码。常用的特征编码包括向量量化编码 \[[4](#参考文献)\]、稀疏编码 \[[5](#参考文献)\]、局部线性约束编码 \[[6](#参考文献)\]、Fisher向量编码 \[[7](#参考文献)\] 等。

-3). **空间特征约束**: 特征编码之后一般会经过空间特征约束,也称作**特征汇聚**。特征汇聚是指在一个空间范围内,对每一维特征取最大值或者平均值,可以获得一定特征不变形的特征表达。金字塔特征匹配是一种常用的特征聚会方法,这种方法提出将图像均匀分块,在分块内做特征汇聚。

-4). **通过分类器分类**: 经过前面步骤之后一张图像可以用一个固定维度的向量进行描述,接下来就是经过分类器对图像进行分类。通常使用的分类器包括SVM(Support Vector Machine, 支持向量机)、随机森林等。而使用核方法的SVM是最为广泛的分类器,在传统图像分类任务上性能很好。

-

-这种方法在PASCAL VOC竞赛中的图像分类算法中被广泛使用 \[[18](#参考文献)\]。[NEC实验室](http://www.nec-labs.com/)在ILSVRC2010中采用SIFT和LBP特征,两个非线性编码器以及SVM分类器获得图像分类的冠军 \[[8](#参考文献)\]。

-

-Alex Krizhevsky在2012年ILSVRC提出的CNN模型 \[[9](#参考文献)\] 取得了历史性的突破,效果大幅度超越传统方法,获得了ILSVRC2012冠军,该模型被称作AlexNet。这也是首次将深度学习用于大规模图像分类中。从AlexNet之后,涌现了一系列CNN模型,不断地在ImageNet上刷新成绩,如图4展示。随着模型变得越来越深以及精妙的结构设计,Top-5的错误率也越来越低,降到了3.5%附近。而在同样的ImageNet数据集上,人眼的辨识错误率大概在5.1%,也就是目前的深度学习模型的识别能力已经超过了人眼。

-

-

-

-图4. ILSVRC图像分类Top-5错误率

-

-

-### CNN

-

-传统CNN包含卷积层、全连接层等组件,并采用softmax多类别分类器和多类交叉熵损失函数,一个典型的卷积神经网络如图5所示,我们先介绍用来构造CNN的常见组件。

-

-

-

-图5. CNN网络示例[20]

-

-

-- 卷积层(convolution layer): 执行卷积操作提取底层到高层的特征,发掘出图片局部关联性质和空间不变性质。

-- 池化层(pooling layer): 执行降采样操作。通过取卷积输出特征图中局部区块的最大值(max-pooling)或者均值(avg-pooling)。降采样也是图像处理中常见的一种操作,可以过滤掉一些不重要的高频信息。

-- 全连接层(fully-connected layer,或者fc layer): 输入层到隐藏层的神经元是全部连接的。

-- 非线性变化: 卷积层、全连接层后面一般都会接非线性变化层,例如Sigmoid、Tanh、ReLu等来增强网络的表达能力,在CNN里最常使用的为ReLu激活函数。

-- Dropout \[[10](#参考文献)\] : 在模型训练阶段随机让一些隐层节点权重不工作,提高网络的泛化能力,一定程度上防止过拟合。

-

-另外,在训练过程中由于每层参数不断更新,会导致下一次输入分布发生变化,这样导致训练过程需要精心设计超参数。如2015年Sergey Ioffe和Christian Szegedy提出了Batch Normalization (BN)算法 \[[14](#参考文献)\] 中,每个batch对网络中的每一层特征都做归一化,使得每层分布相对稳定。BN算法不仅起到一定的正则作用,而且弱化了一些超参数的设计。经过实验证明,BN算法加速了模型收敛过程,在后来较深的模型中被广泛使用。

-

-接下来我们主要介绍VGG,GoogleNet和ResNet网络结构。

-

-### VGG

-

-牛津大学VGG(Visual Geometry Group)组在2014年ILSVRC提出的模型被称作VGG模型 \[[11](#参考文献)\] 。该模型相比以往模型进一步加宽和加深了网络结构,它的核心是五组卷积操作,每两组之间做Max-Pooling空间降维。同一组内采用多次连续的3X3卷积,卷积核的数目由较浅组的64增多到最深组的512,同一组内的卷积核数目是一样的。卷积之后接两层全连接层,之后是分类层。由于每组内卷积层的不同,有11、13、16、19层这几种模型,下图展示一个16层的网络结构。VGG模型结构相对简洁,提出之后也有很多文章基于此模型进行研究,如在ImageNet上首次公开超过人眼识别的模型\[[19](#参考文献)\]就是借鉴VGG模型的结构。

-

-

-

-图6. 基于ImageNet的VGG16模型

-

-

-### GoogleNet

-

-GoogleNet \[[12](#参考文献)\] 在2014年ILSVRC的获得了冠军,在介绍该模型之前我们先来了解NIN(Network in Network)模型 \[[13](#参考文献)\] 和Inception模块,因为GoogleNet模型由多组Inception模块组成,模型设计借鉴了NIN的一些思想。

-

-NIN模型主要有两个特点:1) 引入了多层感知卷积网络(Multi-Layer Perceptron Convolution, MLPconv)代替一层线性卷积网络。MLPconv是一个微小的多层卷积网络,即在线性卷积后面增加若干层1x1的卷积,这样可以提取出高度非线性特征。2) 传统的CNN最后几层一般都是全连接层,参数较多。而NIN模型设计最后一层卷积层包含类别维度大小的特征图,然后采用全局均值池化(Avg-Pooling)替代全连接层,得到类别维度大小的向量,再进行分类。这种替代全连接层的方式有利于减少参数。

-

-Inception模块如下图7所示,图(a)是最简单的设计,输出是3个卷积层和一个池化层的特征拼接。这种设计的缺点是池化层不会改变特征通道数,拼接后会导致特征的通道数较大,经过几层这样的模块堆积后,通道数会越来越大,导致参数和计算量也随之增大。为了改善这个缺点,图(b)引入3个1x1卷积层进行降维,所谓的降维就是减少通道数,同时如NIN模型中提到的1x1卷积也可以修正线性特征。

-

-

-

-图7. Inception模块

-

-

-GoogleNet由多组Inception模块堆积而成。另外,在网络最后也没有采用传统的多层全连接层,而是像NIN网络一样采用了均值池化层;但与NIN不同的是,池化层后面接了一层到类别数映射的全连接层。除了这两个特点之外,由于网络中间层特征也很有判别性,GoogleNet在中间层添加了两个辅助分类器,在后向传播中增强梯度并且增强正则化,而整个网络的损失函数是这个三个分类器的损失加权求和。

-

-GoogleNet整体网络结构如图8所示,总共22层网络:开始由3层普通的卷积组成;接下来由三组子网络组成,第一组子网络包含2个Inception模块,第二组包含5个Inception模块,第三组包含2个Inception模块;然后接均值池化层、全连接层。

-

-

-

-图8. GoogleNet[12]

-

-

-

-上面介绍的是GoogleNet第一版模型(称作GoogleNet-v1)。GoogleNet-v2 \[[14](#参考文献)\] 引入BN层;GoogleNet-v3 \[[16](#参考文献)\] 对一些卷积层做了分解,进一步提高网络非线性能力和加深网络;GoogleNet-v4 \[[17](#参考文献)\] 引入下面要讲的ResNet设计思路。从v1到v4每一版的改进都会带来准确度的提升,介于篇幅,这里不再详细介绍v2到v4的结构。

-

-

-### ResNet

-

-ResNet(Residual Network) \[[15](#参考文献)\] 是2015年ImageNet图像分类、图像物体定位和图像物体检测比赛的冠军。针对训练卷积神经网络时加深网络导致准确度下降的问题,ResNet提出了采用残差学习。在已有设计思路(BN, 小卷积核,全卷积网络)的基础上,引入了残差模块。每个残差模块包含两条路径,其中一条路径是输入特征的直连通路,另一条路径对该特征做两到三次卷积操作得到该特征的残差,最后再将两条路径上的特征相加。

-

-残差模块如图9所示,左边是基本模块连接方式,由两个输出通道数相同的3x3卷积组成。右边是瓶颈模块(Bottleneck)连接方式,之所以称为瓶颈,是因为上面的1x1卷积用来降维(图示例即256->64),下面的1x1卷积用来升维(图示例即64->256),这样中间3x3卷积的输入和输出通道数都较小(图示例即64->64)。

-

-

-

-图9. 残差模块

-

-

-图10展示了50、101、152层网络连接示意图,使用的是瓶颈模块。这三个模型的区别在于每组中残差模块的重复次数不同(见图右上角)。ResNet训练收敛较快,成功的训练了上百乃至近千层的卷积神经网络。

-

-

-

-图10. 基于ImageNet的ResNet模型

-

-

-

-## 数据准备

-

-通用图像分类公开的标准数据集常用的有[CIFAR](https://www.cs.toronto.edu/~kriz/cifar.html)、[ImageNet](http://image-net.org/)、[COCO](http://mscoco.org/)等,常用的细粒度图像分类数据集包括[CUB-200-2011](http://www.vision.caltech.edu/visipedia/CUB-200-2011.html)、[Stanford Dog](http://vision.stanford.edu/aditya86/ImageNetDogs/)、[Oxford-flowers](http://www.robots.ox.ac.uk/~vgg/data/flowers/)等。其中ImageNet数据集规模相对较大,如[模型概览](#模型概览)一章所讲,大量研究成果基于ImageNet。ImageNet数据从2010年来稍有变化,常用的是ImageNet-2012数据集,该数据集包含1000个类别:训练集包含1,281,167张图片,每个类别数据732至1300张不等,验证集包含50,000张图片,平均每个类别50张图片。

-

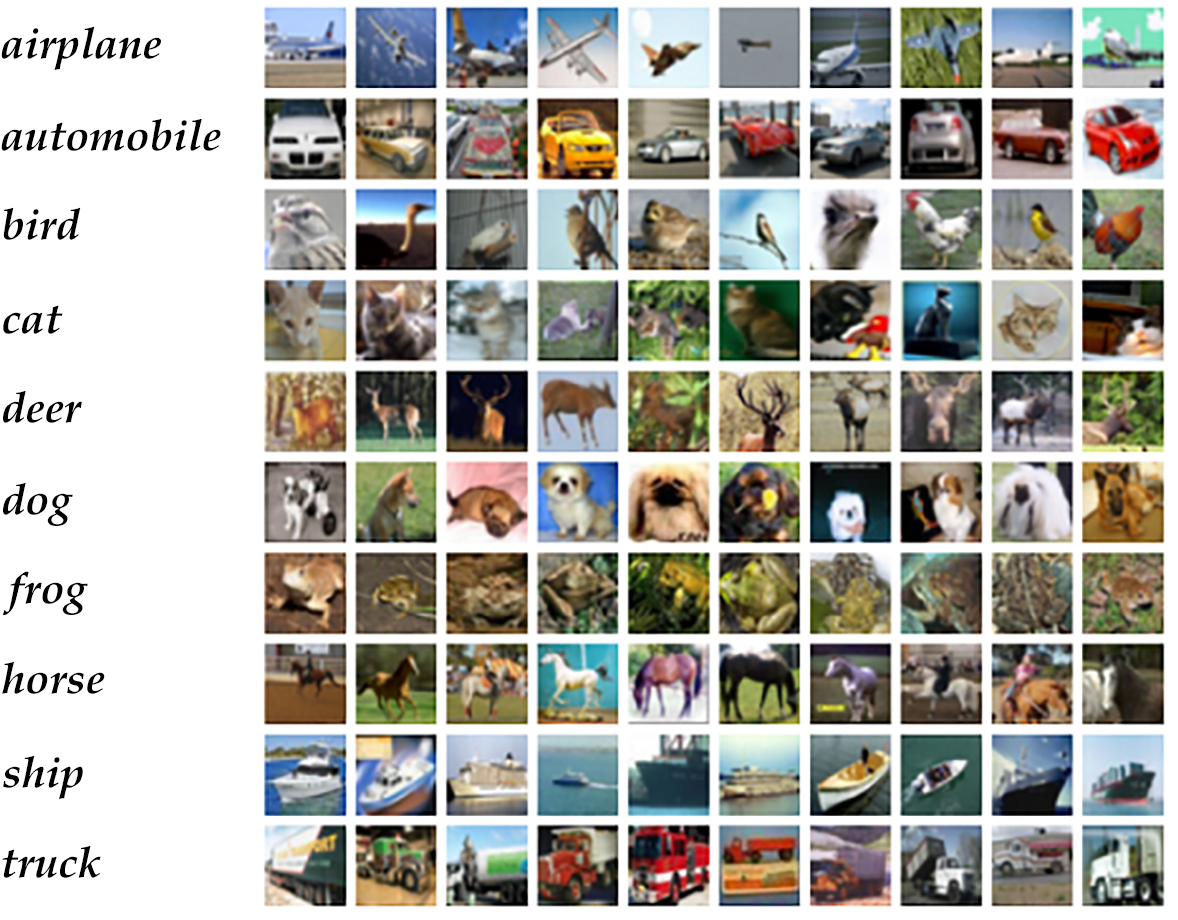

-由于ImageNet数据集较大,下载和训练较慢,为了方便大家学习,我们使用[CIFAR10]()数据集。CIFAR10数据集包含60,000张32x32的彩色图片,10个类别,每个类包含6,000张。其中50,000张图片作为训练集,10000张作为测试集。图11从每个类别中随机抽取了10张图片,展示了所有的类别。

-

-

-

-图11. CIFAR10数据集[21]

-

-

-Paddle API提供了自动加载cifar数据集模块 `paddle.dataset.cifar`。

-

-通过输入`python train.py`,就可以开始训练模型了,以下小节将详细介绍`train.py`的相关内容。

-

-### 模型结构

-

-#### Paddle 初始化

-

-让我们从导入 Paddle Fluid API 和辅助模块开始。

-

-```python

-import paddle

-import paddle.fluid as fluid

-import numpy

-import sys

-```

-

-本教程中我们提供了VGG和ResNet两个模型的配置。

-

-#### VGG

-

-首先介绍VGG模型结构,由于CIFAR10图片大小和数量相比ImageNet数据小很多,因此这里的模型针对CIFAR10数据做了一定的适配。卷积部分引入了BN和Dropout操作。

-VGG核心模块的输入是数据层,`vgg_bn_drop` 定义了16层VGG结构,每层卷积后面引入BN层和Dropout层,详细的定义如下:

-

-```python

-def vgg_bn_drop(input):

-def conv_block(ipt, num_filter, groups, dropouts):

-return fluid.nets.img_conv_group(

-input=ipt,

-pool_size=2,

-pool_stride=2,

-conv_num_filter=[num_filter] * groups,

-conv_filter_size=3,

-conv_act='relu',

-conv_with_batchnorm=True,

-conv_batchnorm_drop_rate=dropouts,

-pool_type='max')

-

-conv1 = conv_block(input, 64, 2, [0.3, 0])

-conv2 = conv_block(conv1, 128, 2, [0.4, 0])

-conv3 = conv_block(conv2, 256, 3, [0.4, 0.4, 0])

-conv4 = conv_block(conv3, 512, 3, [0.4, 0.4, 0])

-conv5 = conv_block(conv4, 512, 3, [0.4, 0.4, 0])

-

-drop = fluid.layers.dropout(x=conv5, dropout_prob=0.5)

-fc1 = fluid.layers.fc(input=drop, size=512, act=None)

-bn = fluid.layers.batch_norm(input=fc1, act='relu')

-drop2 = fluid.layers.dropout(x=bn, dropout_prob=0.5)

-fc2 = fluid.layers.fc(input=drop2, size=512, act=None)

-predict = fluid.layers.fc(input=fc2, size=10, act='softmax')

-return predict

-```

-

-1. 首先定义了一组卷积网络,即conv_block。卷积核大小为3x3,池化窗口大小为2x2,窗口滑动大小为2,groups决定每组VGG模块是几次连续的卷积操作,dropouts指定Dropout操作的概率。所使用的`img_conv_group`是在`paddle.networks`中预定义的模块,由若干组 Conv->BN->ReLu->Dropout 和 一组 Pooling 组成。

-

-2. 五组卷积操作,即 5个conv_block。 第一、二组采用两次连续的卷积操作。第三、四、五组采用三次连续的卷积操作。每组最后一个卷积后面Dropout概率为0,即不使用Dropout操作。

-

-3. 最后接两层512维的全连接。

-

-4. 通过上面VGG网络提取高层特征,然后经过全连接层映射到类别维度大小的向量,再通过Softmax归一化得到每个类别的概率,也可称作分类器。

-

-### ResNet

-

-ResNet模型的第1、3、4步和VGG模型相同,这里不再介绍。主要介绍第2步即CIFAR10数据集上ResNet核心模块。

-

-先介绍`resnet_cifar10`中的一些基本函数,再介绍网络连接过程。

-

-- `conv_bn_layer` : 带BN的卷积层。

-- `shortcut` : 残差模块的"直连"路径,"直连"实际分两种形式:残差模块输入和输出特征通道数不等时,采用1x1卷积的升维操作;残差模块输入和输出通道相等时,采用直连操作。

-- `basicblock` : 一个基础残差模块,即图9左边所示,由两组3x3卷积组成的路径和一条"直连"路径组成。

-- `bottleneck` : 一个瓶颈残差模块,即图9右边所示,由上下1x1卷积和中间3x3卷积组成的路径和一条"直连"路径组成。

-- `layer_warp` : 一组残差模块,由若干个残差模块堆积而成。每组中第一个残差模块滑动窗口大小与其他可以不同,以用来减少特征图在垂直和水平方向的大小。

-

-```python

-def conv_bn_layer(input,

-ch_out,

-filter_size,

-stride,

-padding,

-act='relu',

-bias_attr=False):

-tmp = fluid.layers.conv2d(

-input=input,

-filter_size=filter_size,

-num_filters=ch_out,

-stride=stride,

-padding=padding,

-act=None,

-bias_attr=bias_attr)

-return fluid.layers.batch_norm(input=tmp, act=act)

-

-

-def shortcut(input, ch_in, ch_out, stride):

-if ch_in != ch_out:

-return conv_bn_layer(input, ch_out, 1, stride, 0, None)

-else:

-return input

-

-

-def basicblock(input, ch_in, ch_out, stride):

-tmp = conv_bn_layer(input, ch_out, 3, stride, 1)

-tmp = conv_bn_layer(tmp, ch_out, 3, 1, 1, act=None, bias_attr=True)

-short = shortcut(input, ch_in, ch_out, stride)

-return fluid.layers.elementwise_add(x=tmp, y=short, act='relu')

-

-

-def layer_warp(block_func, input, ch_in, ch_out, count, stride):

-tmp = block_func(input, ch_in, ch_out, stride)

-for i in range(1, count):

-tmp = block_func(tmp, ch_out, ch_out, 1)

-return tmp

-```

-

-`resnet_cifar10` 的连接结构主要有以下几个过程。

-

-1. 底层输入连接一层 `conv_bn_layer`,即带BN的卷积层。

-2. 然后连接3组残差模块即下面配置3组 `layer_warp` ,每组采用图 10 左边残差模块组成。

-3. 最后对网络做均值池化并返回该层。

-

-注意:除过第一层卷积层和最后一层全连接层之外,要求三组 `layer_warp` 总的含参层数能够被6整除,即 `resnet_cifar10` 的 depth 要满足 `$(depth - 2) % 6 == 0$` 。

-

-```python

-def resnet_cifar10(ipt, depth=32):

-# depth should be one of 20, 32, 44, 56, 110, 1202

-assert (depth - 2) % 6 == 0

-n = (depth - 2) / 6

-nStages = {16, 64, 128}

-conv1 = conv_bn_layer(ipt, ch_out=16, filter_size=3, stride=1, padding=1)

-res1 = layer_warp(basicblock, conv1, 16, 16, n, 1)

-res2 = layer_warp(basicblock, res1, 16, 32, n, 2)

-res3 = layer_warp(basicblock, res2, 32, 64, n, 2)

-pool = fluid.layers.pool2d(

-input=res3, pool_size=8, pool_type='avg', pool_stride=1)

-predict = fluid.layers.fc(input=pool, size=10, act='softmax')

-return predict

-```

-

-## Infererence Program 配置

-

-网络输入定义为 `data_layer` (数据层),在图像分类中即为图像像素信息。CIFRAR10是RGB 3通道32x32大小的彩色图,因此输入数据大小为3072(3x32x32)。

-

-```python

-def inference_program():

-# The image is 32 * 32 with RGB representation.

-data_shape = [3, 32, 32]

-images = fluid.layers.data(name='pixel', shape=data_shape, dtype='float32')

-

-predict = resnet_cifar10(images, 32)

-# predict = vgg_bn_drop(images) # un-comment to use vgg net

-return predict

-```

-

-## Train Program 配置

-

-然后我们需要设置训练程序 `train_program`。它首先从推理程序中进行预测。

-在训练期间,它将从预测中计算 `avg_cost`。

-在有监督训练中需要输入图像对应的类别信息,同样通过`fluid.layers.data`来定义。训练中采用多类交叉熵作为损失函数,并作为网络的输出,预测阶段定义网络的输出为分类器得到的概率信息。

-

-**注意:** 训练程序应该返回一个数组,第一个返回参数必须是 `avg_cost`。训练器使用它来计算梯度。

-

-```python

-def train_program():

-predict = inference_program()

-

-label = fluid.layers.data(name='label', shape=[1], dtype='int64')

-cost = fluid.layers.cross_entropy(input=predict, label=label)

-avg_cost = fluid.layers.mean(cost)

-accuracy = fluid.layers.accuracy(input=predict, label=label)

-return [avg_cost, accuracy]

-```

-

-## Optimizer Function 配置

-

-在下面的 `Adam optimizer`,`learning_rate` 是训练的速度,与网络的训练收敛速度有关系。

-

-```python

-def optimizer_program():

-return fluid.optimizer.Adam(learning_rate=0.001)

-```

-

-## 训练模型

-

-### Trainer 配置

-

-现在,我们需要配置 `Trainer`。`Trainer` 需要接受训练程序 `train_program`, `place` 和优化器 `optimizer_func`。

-

-```python

-use_cuda = False

-place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

-trainer = fluid.Trainer(

-train_func=train_program,

-optimizer_func=optimizer_program,

-place=place)

-```

-

-### Data Feeders 配置

-

-`cifar.train10()` 每次产生一条样本,在完成shuffle和batch之后,作为训练的输入。

-

-```python

-# Each batch will yield 128 images

-BATCH_SIZE = 128

-

-# Reader for training

-train_reader = paddle.batch(

-paddle.reader.shuffle(paddle.dataset.cifar.train10(), buf_size=50000),

-batch_size=BATCH_SIZE)

-

-# Reader for testing. A separated data set for testing.

-test_reader = paddle.batch(

-paddle.dataset.cifar.test10(), batch_size=BATCH_SIZE)

-```

-

-### Event Handler

-

-可以使用`event_handler`回调函数来观察训练过程,或进行测试等, 该回调函数是`trainer.train`函数里设定。

-

-`event_handler_plot`可以用来利用回调数据来打点画图:

-

-

-

-```python

-params_dirname = "image_classification_resnet.inference.model"

-

-from paddle.v2.plot import Ploter

-

-train_title = "Train cost"

-test_title = "Test cost"

-cost_ploter = Ploter(train_title, test_title)

-

-step = 0

-def event_handler_plot(event):

-global step

-if isinstance(event, fluid.EndStepEvent):

-if step % 1 == 0:

-cost_ploter.append(train_title, step, event.metrics[0])

-cost_ploter.plot()

-step += 1

-if isinstance(event, fluid.EndEpochEvent):

-avg_cost, accuracy = trainer.test(

-reader=test_reader,

-feed_order=['pixel', 'label'])

-cost_ploter.append(test_title, step, avg_cost)

-

-# save parameters

-if params_dirname is not None:

-trainer.save_params(params_dirname)

-```

-

-`event_handler` 用来在训练过程中输出文本日志

-

-```python

-params_dirname = "image_classification_resnet.inference.model"

-

-# event handler to track training and testing process

-def event_handler(event):

-if isinstance(event, fluid.EndStepEvent):

-if event.step % 100 == 0:

-print("\nPass %d, Batch %d, Cost %f, Acc %f" %

-(event.step, event.epoch, event.metrics[0],

-event.metrics[1]))

-else:

-sys.stdout.write('.')

-sys.stdout.flush()

-

-if isinstance(event, fluid.EndEpochEvent):

-# Test against with the test dataset to get accuracy.

-avg_cost, accuracy = trainer.test(

-reader=test_reader, feed_order=['pixel', 'label'])

-

-print('\nTest with Pass {0}, Loss {1:2.2}, Acc {2:2.2}'.format(event.epoch, avg_cost, accuracy))

-

-# save parameters

-if params_dirname is not None:

-trainer.save_params(params_dirname)

-```

-

-### 训练

-

-通过`trainer.train`函数训练:

-

-**注意:** CPU,每个 Epoch 将花费大约15~20分钟。这部分可能需要一段时间。请随意修改代码,在GPU上运行测试,以提高培训速度。

-

-```python

-trainer.train(

-reader=train_reader,

-num_epochs=2,

-event_handler=event_handler,

-feed_order=['pixel', 'label'])

-```

-

-一轮训练log示例如下所示,经过1个pass, 训练集上平均 Accuracy 为0.59 ,测试集上平均 Accuracy 为0.6 。

-

-```text

-Pass 0, Batch 0, Cost 3.869598, Acc 0.164062

-...................................................................................................

-Pass 100, Batch 0, Cost 1.481038, Acc 0.460938

-...................................................................................................

-Pass 200, Batch 0, Cost 1.340323, Acc 0.523438

-...................................................................................................

-Pass 300, Batch 0, Cost 1.223424, Acc 0.593750

-..........................................................................................

-Test with Pass 0, Loss 1.1, Acc 0.6

-```

-

-图12是训练的分类错误率曲线图,运行到第200个pass后基本收敛,最终得到测试集上分类错误率为8.54%。

-

-

-

-图12. CIFAR10数据集上VGG模型的分类错误率

-

-

-## 应用模型

-

-可以使用训练好的模型对图片进行分类,下面程序展示了如何使用 `fluid.Inferencer` 接口进行推断,可以打开注释,更改加载的模型。

-

-### 生成预测输入数据

-

-`dog.png` is an example image of a dog. Turn it into an numpy array to match the data feeder format.

-

-```python

-# Prepare testing data.

-from PIL import Image

-import numpy as np

-import os

-

-def load_image(file):

-im = Image.open(file)

-im = im.resize((32, 32), Image.ANTIALIAS)

-

-im = np.array(im).astype(np.float32)

-# The storage order of the loaded image is W(width),

-# H(height), C(channel). PaddlePaddle requires

-# the CHW order, so transpose them.

-im = im.transpose((2, 0, 1)) # CHW

-im = im / 255.0

-

-# Add one dimension to mimic the list format.

-im = numpy.expand_dims(im, axis=0)

-return im

-

-cur_dir = os.getcwd()

-img = load_image(cur_dir + '/image/dog.png')

-```

-

-### Inferencer 配置和预测

-

-`Inferencer` 需要一个 `infer_func` 和 `param_path` 来设置网络和经过训练的参数。

-我们可以简单地插入前面定义的推理程序。

-现在我们准备做预测。

-

-```python

-inferencer = fluid.Inferencer(

-infer_func=inference_program, param_path=params_dirname, place=place)

-

-# inference

-results = inferencer.infer({'pixel': img})

-print("infer results: ", results)

-```

-

-## 总结

-

-传统图像分类方法由多个阶段构成,框架较为复杂,而端到端的CNN模型结构可一步到位,而且大幅度提升了分类准确率。本文我们首先介绍VGG、GoogleNet、ResNet三个经典的模型;然后基于CIFAR10数据集,介绍如何使用PaddlePaddle配置和训练CNN模型,尤其是VGG和ResNet模型;最后介绍如何使用PaddlePaddle的API接口对图片进行预测和特征提取。对于其他数据集比如ImageNet,配置和训练流程是同样的,大家可以自行进行实验。

-

-

-## 参考文献

-

-[1] D. G. Lowe, [Distinctive image features from scale-invariant keypoints](http://www.cs.ubc.ca/~lowe/papers/ijcv04.pdf). IJCV, 60(2):91-110, 2004.

-

-[2] N. Dalal, B. Triggs, [Histograms of Oriented Gradients for Human Detection](http://vision.stanford.edu/teaching/cs231b_spring1213/papers/CVPR05_DalalTriggs.pdf), Proc. IEEE Conf. Computer Vision and Pattern Recognition, 2005.

-

-[3] Ahonen, T., Hadid, A., and Pietikinen, M. (2006). [Face description with local binary patterns: Application to face recognition](http://ieeexplore.ieee.org/document/1717463/). PAMI, 28.

-

-[4] J. Sivic, A. Zisserman, [Video Google: A Text Retrieval Approach to Object Matching in Videos](http://www.robots.ox.ac.uk/~vgg/publications/papers/sivic03.pdf), Proc. Ninth Int'l Conf. Computer Vision, pp. 1470-1478, 2003.

-

-[5] B. Olshausen, D. Field, [Sparse Coding with an Overcomplete Basis Set: A Strategy Employed by V1?](http://redwood.psych.cornell.edu/papers/olshausen_field_1997.pdf), Vision Research, vol. 37, pp. 3311-3325, 1997.

-

-[6] Wang, J., Yang, J., Yu, K., Lv, F., Huang, T., and Gong, Y. (2010). [Locality-constrained Linear Coding for image classification](http://ieeexplore.ieee.org/abstract/document/5540018/). In CVPR.

-

-[7] Perronnin, F., Sánchez, J., & Mensink, T. (2010). [Improving the fisher kernel for large-scale image classification](http://dl.acm.org/citation.cfm?id=1888101). In ECCV (4).

-

-[8] Lin, Y., Lv, F., Cao, L., Zhu, S., Yang, M., Cour, T., Yu, K., and Huang, T. (2011). [Large-scale image clas- sification: Fast feature extraction and SVM training](http://ieeexplore.ieee.org/document/5995477/). In CVPR.

-

-[9] Krizhevsky, A., Sutskever, I., and Hinton, G. (2012). [ImageNet classification with deep convolutional neu- ral networks](http://www.cs.toronto.edu/~kriz/imagenet_classification_with_deep_convolutional.pdf). In NIPS.

-

-[10] G.E. Hinton, N. Srivastava, A. Krizhevsky, I. Sutskever, and R.R. Salakhutdinov. [Improving neural networks by preventing co-adaptation of feature detectors](https://arxiv.org/abs/1207.0580). arXiv preprint arXiv:1207.0580, 2012.

-

-[11] K. Chatfield, K. Simonyan, A. Vedaldi, A. Zisserman. [Return of the Devil in the Details: Delving Deep into Convolutional Nets](https://arxiv.org/abs/1405.3531). BMVC, 2014。

-

-[12] Szegedy, C., Liu, W., Jia, Y., Sermanet, P., Reed, S., Anguelov, D., Erhan, D., Vanhoucke, V., Rabinovich, A., [Going deeper with convolutions](https://arxiv.org/abs/1409.4842). In: CVPR. (2015)

-

-[13] Lin, M., Chen, Q., and Yan, S. [Network in network](https://arxiv.org/abs/1312.4400). In Proc. ICLR, 2014.

-

-[14] S. Ioffe and C. Szegedy. [Batch normalization: Accelerating deep network training by reducing internal covariate shift](https://arxiv.org/abs/1502.03167). In ICML, 2015.

-

-[15] K. He, X. Zhang, S. Ren, J. Sun. [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385). CVPR 2016.

-

-[16] Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z. [Rethinking the incep-tion architecture for computer vision](https://arxiv.org/abs/1512.00567). In: CVPR. (2016).

-

-[17] Szegedy, C., Ioffe, S., Vanhoucke, V. [Inception-v4, inception-resnet and the impact of residual connections on learning](https://arxiv.org/abs/1602.07261). arXiv:1602.07261 (2016).

-

-[18] Everingham, M., Eslami, S. M. A., Van Gool, L., Williams, C. K. I., Winn, J. and Zisserman, A. [The Pascal Visual Object Classes Challenge: A Retrospective]((http://link.springer.com/article/10.1007/s11263-014-0733-5)). International Journal of Computer Vision, 111(1), 98-136, 2015.

-

-[19] He, K., Zhang, X., Ren, S., and Sun, J. [Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification](https://arxiv.org/abs/1502.01852). ArXiv e-prints, February 2015.

-

-[20] http://deeplearning.net/tutorial/lenet.html

-

-[21] https://www.cs.toronto.edu/~kriz/cifar.html

-

-[22] http://cs231n.github.io/classification/

-

-

-

本教程 由 PaddlePaddle 创作,采用 知识共享 署名-相同方式共享 4.0 国际 许可协议进行许可。

+

+# 图像分类

+

+本教程源代码目录在[book/image_classification](https://github.com/PaddlePaddle/book/tree/develop/03.image_classification), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书),更多内容请参考本教程的[视频课堂](http://bit.baidu.com/course/detail/id/168.html)。

+

+## 背景介绍

+

+图像相比文字能够提供更加生动、容易理解及更具艺术感的信息,是人们转递与交换信息的重要来源。在本教程中,我们专注于图像识别领域的一个重要问题,即图像分类。

+

+图像分类是根据图像的语义信息将不同类别图像区分开来,是计算机视觉中重要的基本问题,也是图像检测、图像分割、物体跟踪、行为分析等其他高层视觉任务的基础。图像分类在很多领域有广泛应用,包括安防领域的人脸识别和智能视频分析等,交通领域的交通场景识别,互联网领域基于内容的图像检索和相册自动归类,医学领域的图像识别等。

+

+

+一般来说,图像分类通过手工特征或特征学习方法对整个图像进行全部描述,然后使用分类器判别物体类别,因此如何提取图像的特征至关重要。在深度学习算法之前使用较多的是基于词袋(Bag of Words)模型的物体分类方法。词袋方法从自然语言处理中引入,即一句话可以用一个装了词的袋子表示其特征,袋子中的词为句子中的单词、短语或字。对于图像而言,词袋方法需要构建字典。最简单的词袋模型框架可以设计为**底层特征抽取**、**特征编码**、**分类器设计**三个过程。

+

+而基于深度学习的图像分类方法,可以通过有监督或无监督的方式**学习**层次化的特征描述,从而取代了手工设计或选择图像特征的工作。深度学习模型中的卷积神经网络(Convolution Neural Network, CNN)近年来在图像领域取得了惊人的成绩,CNN直接利用图像像素信息作为输入,最大程度上保留了输入图像的所有信息,通过卷积操作进行特征的提取和高层抽象,模型输出直接是图像识别的结果。这种基于"输入-输出"直接端到端的学习方法取得了非常好的效果,得到了广泛的应用。

+

+本教程主要介绍图像分类的深度学习模型,以及如何使用PaddlePaddle训练CNN模型。

+

+## 效果展示

+

+图像分类包括通用图像分类、细粒度图像分类等。图1展示了通用图像分类效果,即模型可以正确识别图像上的主要物体。

+

+

+

+图1. 通用图像分类展示

+

+

+

+图2展示了细粒度图像分类-花卉识别的效果,要求模型可以正确识别花的类别。

+

+

+

+

+图2. 细粒度图像分类展示

+

+

+

+一个好的模型既要对不同类别识别正确,同时也应该能够对不同视角、光照、背景、变形或部分遮挡的图像正确识别(这里我们统一称作图像扰动)。图3展示了一些图像的扰动,较好的模型会像聪明的人类一样能够正确识别。

+

+

+

+图3. 扰动图片展示[22]

+

+

+## 模型概览

+

+图像识别领域大量的研究成果都是建立在[PASCAL VOC](http://host.robots.ox.ac.uk/pascal/VOC/)、[ImageNet](http://image-net.org/)等公开的数据集上,很多图像识别算法通常在这些数据集上进行测试和比较。PASCAL VOC是2005年发起的一个视觉挑战赛,ImageNet是2010年发起的大规模视觉识别竞赛(ILSVRC)的数据集,在本章中我们基于这些竞赛的一些论文介绍图像分类模型。

+

+在2012年之前的传统图像分类方法可以用背景描述中提到的三步完成,但通常完整建立图像识别模型一般包括底层特征学习、特征编码、空间约束、分类器设计、模型融合等几个阶段。

+

+ 1). **底层特征提取**: 通常从图像中按照固定步长、尺度提取大量局部特征描述。常用的局部特征包括SIFT(Scale-Invariant Feature Transform, 尺度不变特征转换) \[[1](#参考文献)\]、HOG(Histogram of Oriented Gradient, 方向梯度直方图) \[[2](#参考文献)\]、LBP(Local Bianray Pattern, 局部二值模式) \[[3](#参考文献)\] 等,一般也采用多种特征描述子,防止丢失过多的有用信息。

+

+ 2). **特征编码**: 底层特征中包含了大量冗余与噪声,为了提高特征表达的鲁棒性,需要使用一种特征变换算法对底层特征进行编码,称作特征编码。常用的特征编码包括向量量化编码 \[[4](#参考文献)\]、稀疏编码 \[[5](#参考文献)\]、局部线性约束编码 \[[6](#参考文献)\]、Fisher向量编码 \[[7](#参考文献)\] 等。

+

+ 3). **空间特征约束**: 特征编码之后一般会经过空间特征约束,也称作**特征汇聚**。特征汇聚是指在一个空间范围内,对每一维特征取最大值或者平均值,可以获得一定特征不变形的特征表达。金字塔特征匹配是一种常用的特征聚会方法,这种方法提出将图像均匀分块,在分块内做特征汇聚。

+

+ 4). **通过分类器分类**: 经过前面步骤之后一张图像可以用一个固定维度的向量进行描述,接下来就是经过分类器对图像进行分类。通常使用的分类器包括SVM(Support Vector Machine, 支持向量机)、随机森林等。而使用核方法的SVM是最为广泛的分类器,在传统图像分类任务上性能很好。

+

+这种方法在PASCAL VOC竞赛中的图像分类算法中被广泛使用 \[[18](#参考文献)\]。[NEC实验室](http://www.nec-labs.com/)在ILSVRC2010中采用SIFT和LBP特征,两个非线性编码器以及SVM分类器获得图像分类的冠军 \[[8](#参考文献)\]。

+

+Alex Krizhevsky在2012年ILSVRC提出的CNN模型 \[[9](#参考文献)\] 取得了历史性的突破,效果大幅度超越传统方法,获得了ILSVRC2012冠军,该模型被称作AlexNet。这也是首次将深度学习用于大规模图像分类中。从AlexNet之后,涌现了一系列CNN模型,不断地在ImageNet上刷新成绩,如图4展示。随着模型变得越来越深以及精妙的结构设计,Top-5的错误率也越来越低,降到了3.5%附近。而在同样的ImageNet数据集上,人眼的辨识错误率大概在5.1%,也就是目前的深度学习模型的识别能力已经超过了人眼。

+

+

+

+图4. ILSVRC图像分类Top-5错误率

+

+

+### CNN

+

+传统CNN包含卷积层、全连接层等组件,并采用softmax多类别分类器和多类交叉熵损失函数,一个典型的卷积神经网络如图5所示,我们先介绍用来构造CNN的常见组件。

+

+

+

+图5. CNN网络示例[20]

+

+

+- 卷积层(convolution layer): 执行卷积操作提取底层到高层的特征,发掘出图片局部关联性质和空间不变性质。

+- 池化层(pooling layer): 执行降采样操作。通过取卷积输出特征图中局部区块的最大值(max-pooling)或者均值(avg-pooling)。降采样也是图像处理中常见的一种操作,可以过滤掉一些不重要的高频信息。

+- 全连接层(fully-connected layer,或者fc layer): 输入层到隐藏层的神经元是全部连接的。

+- 非线性变化: 卷积层、全连接层后面一般都会接非线性变化层,例如Sigmoid、Tanh、ReLu等来增强网络的表达能力,在CNN里最常使用的为ReLu激活函数。

+- Dropout \[[10](#参考文献)\] : 在模型训练阶段随机让一些隐层节点权重不工作,提高网络的泛化能力,一定程度上防止过拟合。

+

+另外,在训练过程中由于每层参数不断更新,会导致下一次输入分布发生变化,这样导致训练过程需要精心设计超参数。如2015年Sergey Ioffe和Christian Szegedy提出了Batch Normalization (BN)算法 \[[14](#参考文献)\] 中,每个batch对网络中的每一层特征都做归一化,使得每层分布相对稳定。BN算法不仅起到一定的正则作用,而且弱化了一些超参数的设计。经过实验证明,BN算法加速了模型收敛过程,在后来较深的模型中被广泛使用。

+

+接下来我们主要介绍VGG,GoogleNet和ResNet网络结构。

+

+### VGG

+

+牛津大学VGG(Visual Geometry Group)组在2014年ILSVRC提出的模型被称作VGG模型 \[[11](#参考文献)\] 。该模型相比以往模型进一步加宽和加深了网络结构,它的核心是五组卷积操作,每两组之间做Max-Pooling空间降维。同一组内采用多次连续的3X3卷积,卷积核的数目由较浅组的64增多到最深组的512,同一组内的卷积核数目是一样的。卷积之后接两层全连接层,之后是分类层。由于每组内卷积层的不同,有11、13、16、19层这几种模型,下图展示一个16层的网络结构。VGG模型结构相对简洁,提出之后也有很多文章基于此模型进行研究,如在ImageNet上首次公开超过人眼识别的模型\[[19](#参考文献)\]就是借鉴VGG模型的结构。

+

+

+

+图6. 基于ImageNet的VGG16模型

+

+

+### GoogleNet

+

+GoogleNet \[[12](#参考文献)\] 在2014年ILSVRC的获得了冠军,在介绍该模型之前我们先来了解NIN(Network in Network)模型 \[[13](#参考文献)\] 和Inception模块,因为GoogleNet模型由多组Inception模块组成,模型设计借鉴了NIN的一些思想。

+

+NIN模型主要有两个特点:

+

+1) 引入了多层感知卷积网络(Multi-Layer Perceptron Convolution, MLPconv)代替一层线性卷积网络。MLPconv是一个微小的多层卷积网络,即在线性卷积后面增加若干层1x1的卷积,这样可以提取出高度非线性特征。

+

+2) 传统的CNN最后几层一般都是全连接层,参数较多。而NIN模型设计最后一层卷积层包含类别维度大小的特征图,然后采用全局均值池化(Avg-Pooling)替代全连接层,得到类别维度大小的向量,再进行分类。这种替代全连接层的方式有利于减少参数。

+

+Inception模块如下图7所示,图(a)是最简单的设计,输出是3个卷积层和一个池化层的特征拼接。这种设计的缺点是池化层不会改变特征通道数,拼接后会导致特征的通道数较大,经过几层这样的模块堆积后,通道数会越来越大,导致参数和计算量也随之增大。为了改善这个缺点,图(b)引入3个1x1卷积层进行降维,所谓的降维就是减少通道数,同时如NIN模型中提到的1x1卷积也可以修正线性特征。

+

+

+

+图7. Inception模块

+

+

+GoogleNet由多组Inception模块堆积而成。另外,在网络最后也没有采用传统的多层全连接层,而是像NIN网络一样采用了均值池化层;但与NIN不同的是,池化层后面接了一层到类别数映射的全连接层。除了这两个特点之外,由于网络中间层特征也很有判别性,GoogleNet在中间层添加了两个辅助分类器,在后向传播中增强梯度并且增强正则化,而整个网络的损失函数是这个三个分类器的损失加权求和。

+

+GoogleNet整体网络结构如图8所示,总共22层网络:开始由3层普通的卷积组成;接下来由三组子网络组成,第一组子网络包含2个Inception模块,第二组包含5个Inception模块,第三组包含2个Inception模块;然后接均值池化层、全连接层。

+

+

+

+图8. GoogleNet[12]

+

+

+

+上面介绍的是GoogleNet第一版模型(称作GoogleNet-v1)。GoogleNet-v2 \[[14](#参考文献)\] 引入BN层;GoogleNet-v3 \[[16](#参考文献)\] 对一些卷积层做了分解,进一步提高网络非线性能力和加深网络;GoogleNet-v4 \[[17](#参考文献)\] 引入下面要讲的ResNet设计思路。从v1到v4每一版的改进都会带来准确度的提升,介于篇幅,这里不再详细介绍v2到v4的结构。

+

+

+### ResNet

+

+ResNet(Residual Network) \[[15](#参考文献)\] 是2015年ImageNet图像分类、图像物体定位和图像物体检测比赛的冠军。针对训练卷积神经网络时加深网络导致准确度下降的问题,ResNet提出了采用残差学习。在已有设计思路(BN, 小卷积核,全卷积网络)的基础上,引入了残差模块。每个残差模块包含两条路径,其中一条路径是输入特征的直连通路,另一条路径对该特征做两到三次卷积操作得到该特征的残差,最后再将两条路径上的特征相加。

+

+残差模块如图9所示,左边是基本模块连接方式,由两个输出通道数相同的3x3卷积组成。右边是瓶颈模块(Bottleneck)连接方式,之所以称为瓶颈,是因为上面的1x1卷积用来降维(图示例即256->64),下面的1x1卷积用来升维(图示例即64->256),这样中间3x3卷积的输入和输出通道数都较小(图示例即64->64)。

+

+

+

+图9. 残差模块

+

+

+图10展示了50、101、152层网络连接示意图,使用的是瓶颈模块。这三个模型的区别在于每组中残差模块的重复次数不同(见图右上角)。ResNet训练收敛较快,成功的训练了上百乃至近千层的卷积神经网络。

+

+

+

+图10. 基于ImageNet的ResNet模型

+

+

+

+## 数据准备

+

+通用图像分类公开的标准数据集常用的有[CIFAR](https://www.cs.toronto.edu/~kriz/cifar.html)、[ImageNet](http://image-net.org/)、[COCO](http://mscoco.org/)等,常用的细粒度图像分类数据集包括[CUB-200-2011](http://www.vision.caltech.edu/visipedia/CUB-200-2011.html)、[Stanford Dog](http://vision.stanford.edu/aditya86/ImageNetDogs/)、[Oxford-flowers](http://www.robots.ox.ac.uk/~vgg/data/flowers/)等。其中ImageNet数据集规模相对较大,如[模型概览](#模型概览)一章所讲,大量研究成果基于ImageNet。ImageNet数据从2010年来稍有变化,常用的是ImageNet-2012数据集,该数据集包含1000个类别:训练集包含1,281,167张图片,每个类别数据732至1300张不等,验证集包含50,000张图片,平均每个类别50张图片。

+

+由于ImageNet数据集较大,下载和训练较慢,为了方便大家学习,我们使用[CIFAR10]()数据集。CIFAR10数据集包含60,000张32x32的彩色图片,10个类别,每个类包含6,000张。其中50,000张图片作为训练集,10000张作为测试集。图11从每个类别中随机抽取了10张图片,展示了所有的类别。

+

+

+

+图11. CIFAR10数据集[21]

+

+

+Paddle API提供了自动加载cifar数据集模块 `paddle.dataset.cifar`。

+

+通过输入`python train.py`,就可以开始训练模型了,以下小节将详细介绍`train.py`的相关内容。

+

+### 模型结构

+

+#### Paddle 初始化

+

+让我们从导入 Paddle Fluid API 和辅助模块开始。

+

+```python

+import paddle

+import paddle.fluid as fluid

+import numpy

+import sys

+from __future__ import print_function

+```

+

+本教程中我们提供了VGG和ResNet两个模型的配置。

+

+#### VGG

+

+首先介绍VGG模型结构,由于CIFAR10图片大小和数量相比ImageNet数据小很多,因此这里的模型针对CIFAR10数据做了一定的适配。卷积部分引入了BN和Dropout操作。

+VGG核心模块的输入是数据层,`vgg_bn_drop` 定义了16层VGG结构,每层卷积后面引入BN层和Dropout层,详细的定义如下:

+

+```python

+def vgg_bn_drop(input):

+ def conv_block(ipt, num_filter, groups, dropouts):

+ return fluid.nets.img_conv_group(

+ input=ipt,

+ pool_size=2,

+ pool_stride=2,

+ conv_num_filter=[num_filter] * groups,

+ conv_filter_size=3,

+ conv_act='relu',

+ conv_with_batchnorm=True,

+ conv_batchnorm_drop_rate=dropouts,

+ pool_type='max')

+

+ conv1 = conv_block(input, 64, 2, [0.3, 0])

+ conv2 = conv_block(conv1, 128, 2, [0.4, 0])

+ conv3 = conv_block(conv2, 256, 3, [0.4, 0.4, 0])

+ conv4 = conv_block(conv3, 512, 3, [0.4, 0.4, 0])

+ conv5 = conv_block(conv4, 512, 3, [0.4, 0.4, 0])

+

+ drop = fluid.layers.dropout(x=conv5, dropout_prob=0.5)

+ fc1 = fluid.layers.fc(input=drop, size=512, act=None)

+ bn = fluid.layers.batch_norm(input=fc1, act='relu')

+ drop2 = fluid.layers.dropout(x=bn, dropout_prob=0.5)

+ fc2 = fluid.layers.fc(input=drop2, size=512, act=None)

+ predict = fluid.layers.fc(input=fc2, size=10, act='softmax')

+ return predict

+```

+

+

+1. 首先定义了一组卷积网络,即conv_block。卷积核大小为3x3,池化窗口大小为2x2,窗口滑动大小为2,groups决定每组VGG模块是几次连续的卷积操作,dropouts指定Dropout操作的概率。所使用的`img_conv_group`是在`paddle.networks`中预定义的模块,由若干组 Conv->BN->ReLu->Dropout 和 一组 Pooling 组成。

+

+2. 五组卷积操作,即 5个conv_block。 第一、二组采用两次连续的卷积操作。第三、四、五组采用三次连续的卷积操作。每组最后一个卷积后面Dropout概率为0,即不使用Dropout操作。

+

+3. 最后接两层512维的全连接。

+

+4. 通过上面VGG网络提取高层特征,然后经过全连接层映射到类别维度大小的向量,再通过Softmax归一化得到每个类别的概率,也可称作分类器。

+

+### ResNet

+

+ResNet模型的第1、3、4步和VGG模型相同,这里不再介绍。主要介绍第2步即CIFAR10数据集上ResNet核心模块。

+

+先介绍`resnet_cifar10`中的一些基本函数,再介绍网络连接过程。

+

+ - `conv_bn_layer` : 带BN的卷积层。

+ - `shortcut` : 残差模块的"直连"路径,"直连"实际分两种形式:残差模块输入和输出特征通道数不等时,采用1x1卷积的升维操作;残差模块输入和输出通道相等时,采用直连操作。

+ - `basicblock` : 一个基础残差模块,即图9左边所示,由两组3x3卷积组成的路径和一条"直连"路径组成。

+ - `bottleneck` : 一个瓶颈残差模块,即图9右边所示,由上下1x1卷积和中间3x3卷积组成的路径和一条"直连"路径组成。

+ - `layer_warp` : 一组残差模块,由若干个残差模块堆积而成。每组中第一个残差模块滑动窗口大小与其他可以不同,以用来减少特征图在垂直和水平方向的大小。

+

+```python

+def conv_bn_layer(input,

+ ch_out,

+ filter_size,

+ stride,

+ padding,

+ act='relu',

+ bias_attr=False):

+ tmp = fluid.layers.conv2d(

+ input=input,

+ filter_size=filter_size,

+ num_filters=ch_out,

+ stride=stride,

+ padding=padding,

+ act=None,

+ bias_attr=bias_attr)

+ return fluid.layers.batch_norm(input=tmp, act=act)

+

+

+def shortcut(input, ch_in, ch_out, stride):

+ if ch_in != ch_out:

+ return conv_bn_layer(input, ch_out, 1, stride, 0, None)

+ else:

+ return input

+

+

+def basicblock(input, ch_in, ch_out, stride):

+ tmp = conv_bn_layer(input, ch_out, 3, stride, 1)

+ tmp = conv_bn_layer(tmp, ch_out, 3, 1, 1, act=None, bias_attr=True)

+ short = shortcut(input, ch_in, ch_out, stride)

+ return fluid.layers.elementwise_add(x=tmp, y=short, act='relu')

+

+

+def layer_warp(block_func, input, ch_in, ch_out, count, stride):

+ tmp = block_func(input, ch_in, ch_out, stride)

+ for i in range(1, count):

+ tmp = block_func(tmp, ch_out, ch_out, 1)

+ return tmp

+```

+

+`resnet_cifar10` 的连接结构主要有以下几个过程。

+

+1. 底层输入连接一层 `conv_bn_layer`,即带BN的卷积层。

+

+2. 然后连接3组残差模块即下面配置3组 `layer_warp` ,每组采用图 10 左边残差模块组成。

+

+3. 最后对网络做均值池化并返回该层。

+

+注意:除过第一层卷积层和最后一层全连接层之外,要求三组 `layer_warp` 总的含参层数能够被6整除,即 `resnet_cifar10` 的 depth 要满足 $(depth - 2) % 6 == 0$ 。

+

+```python

+def resnet_cifar10(ipt, depth=32):

+ # depth should be one of 20, 32, 44, 56, 110, 1202

+ assert (depth - 2) % 6 == 0

+ n = (depth - 2) / 6

+ nStages = {16, 64, 128}

+ conv1 = conv_bn_layer(ipt, ch_out=16, filter_size=3, stride=1, padding=1)

+ res1 = layer_warp(basicblock, conv1, 16, 16, n, 1)

+ res2 = layer_warp(basicblock, res1, 16, 32, n, 2)

+ res3 = layer_warp(basicblock, res2, 32, 64, n, 2)

+ pool = fluid.layers.pool2d(

+ input=res3, pool_size=8, pool_type='avg', pool_stride=1)

+ predict = fluid.layers.fc(input=pool, size=10, act='softmax')

+ return predict

+```

+

+## Infererence Program 配置

+

+网络输入定义为 `data_layer` (数据层),在图像分类中即为图像像素信息。CIFRAR10是RGB 3通道32x32大小的彩色图,因此输入数据大小为3072(3x32x32)。

+

+```python

+def inference_program():

+ # The image is 32 * 32 with RGB representation.

+ data_shape = [3, 32, 32]

+ images = fluid.layers.data(name='pixel', shape=data_shape, dtype='float32')

+

+ predict = resnet_cifar10(images, 32)

+ # predict = vgg_bn_drop(images) # un-comment to use vgg net

+ return predict

+```

+

+## Train Program 配置

+

+然后我们需要设置训练程序 `train_program`。它首先从推理程序中进行预测。

+在训练期间,它将从预测中计算 `avg_cost`。

+在有监督训练中需要输入图像对应的类别信息,同样通过`fluid.layers.data`来定义。训练中采用多类交叉熵作为损失函数,并作为网络的输出,预测阶段定义网络的输出为分类器得到的概率信息。

+

+**注意:** 训练程序应该返回一个数组,第一个返回参数必须是 `avg_cost`。训练器使用它来计算梯度。

+

+```python

+def train_program():

+ predict = inference_program()

+

+ label = fluid.layers.data(name='label', shape=[1], dtype='int64')

+ cost = fluid.layers.cross_entropy(input=predict, label=label)

+ avg_cost = fluid.layers.mean(cost)

+ accuracy = fluid.layers.accuracy(input=predict, label=label)

+ return [avg_cost, accuracy]

+```

+

+## Optimizer Function 配置

+

+在下面的 `Adam optimizer`,`learning_rate` 是训练的速度,与网络的训练收敛速度有关系。

+

+```python

+def optimizer_program():

+ return fluid.optimizer.Adam(learning_rate=0.001)

+```

+

+## 训练模型

+

+### Trainer 配置

+

+现在,我们需要配置 `Trainer`。`Trainer` 需要接受训练程序 `train_program`, `place` 和优化器 `optimizer_func`。

+

+```python

+use_cuda = False

+place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

+trainer = fluid.Trainer(

+ train_func=train_program,

+ optimizer_func=optimizer_program,

+ place=place)

+```

+

+### Data Feeders 配置

+

+`cifar.train10()` 每次产生一条样本,在完成shuffle和batch之后,作为训练的输入。

+

+```python

+# Each batch will yield 128 images

+BATCH_SIZE = 128

+

+# Reader for training

+train_reader = paddle.batch(

+ paddle.reader.shuffle(paddle.dataset.cifar.train10(), buf_size=50000),

+ batch_size=BATCH_SIZE)

+

+# Reader for testing. A separated data set for testing.

+test_reader = paddle.batch(

+ paddle.dataset.cifar.test10(), batch_size=BATCH_SIZE)

+```

+

+### Event Handler

+

+可以使用`event_handler`回调函数来观察训练过程,或进行测试等, 该回调函数是`trainer.train`函数里设定。

+

+`event_handler_plot`可以用来利用回调数据来打点画图:

+

+

+

+图12. 训练结果

+

+

+

+```python

+params_dirname = "image_classification_resnet.inference.model"

+

+from paddle.v2.plot import Ploter

+

+train_title = "Train cost"

+test_title = "Test cost"

+cost_ploter = Ploter(train_title, test_title)

+

+step = 0

+def event_handler_plot(event):

+ global step

+ if isinstance(event, fluid.EndStepEvent):

+ if step % 1 == 0:

+ cost_ploter.append(train_title, step, event.metrics[0])

+ cost_ploter.plot()

+ step += 1

+ if isinstance(event, fluid.EndEpochEvent):

+ avg_cost, accuracy = trainer.test(

+ reader=test_reader,

+ feed_order=['pixel', 'label'])

+ cost_ploter.append(test_title, step, avg_cost)

+

+ # save parameters

+ if params_dirname is not None:

+ trainer.save_params(params_dirname)

+```

+

+`event_handler` 用来在训练过程中输出文本日志

+

+```python

+params_dirname = "image_classification_resnet.inference.model"

+

+# event handler to track training and testing process

+def event_handler(event):

+ if isinstance(event, fluid.EndStepEvent):

+ if event.step % 100 == 0:

+ print("\nPass %d, Batch %d, Cost %f, Acc %f" %

+ (event.step, event.epoch, event.metrics[0],

+ event.metrics[1]))

+ else:

+ sys.stdout.write('.')

+ sys.stdout.flush()

+

+ if isinstance(event, fluid.EndEpochEvent):

+ # Test against with the test dataset to get accuracy.

+ avg_cost, accuracy = trainer.test(

+ reader=test_reader, feed_order=['pixel', 'label'])

+

+ print('\nTest with Pass {0}, Loss {1:2.2}, Acc {2:2.2}'.format(event.epoch, avg_cost, accuracy))

+

+ # save parameters

+ if params_dirname is not None:

+ trainer.save_params(params_dirname)

+```

+

+### 训练

+

+通过`trainer.train`函数训练:

+

+**注意:** CPU,每个 Epoch 将花费大约15~20分钟。这部分可能需要一段时间。请随意修改代码,在GPU上运行测试,以提高训练速度。

+

+```python

+trainer.train(

+ reader=train_reader,

+ num_epochs=2,

+ event_handler=event_handler,

+ feed_order=['pixel', 'label'])

+```

+

+一轮训练log示例如下所示,经过1个pass, 训练集上平均 Accuracy 为0.59 ,测试集上平均 Accuracy 为0.6 。

+

+```text

+Pass 0, Batch 0, Cost 3.869598, Acc 0.164062

+...................................................................................................

+Pass 100, Batch 0, Cost 1.481038, Acc 0.460938

+...................................................................................................

+Pass 200, Batch 0, Cost 1.340323, Acc 0.523438

+...................................................................................................

+Pass 300, Batch 0, Cost 1.223424, Acc 0.593750

+..........................................................................................

+Test with Pass 0, Loss 1.1, Acc 0.6

+```

+

+图13是训练的分类错误率曲线图,运行到第200个pass后基本收敛,最终得到测试集上分类错误率为8.54%。

+

+

+

+图13. CIFAR10数据集上VGG模型的分类错误率

+

+

+## 应用模型

+

+可以使用训练好的模型对图片进行分类,下面程序展示了如何使用 `fluid.Inferencer` 接口进行推断,可以打开注释,更改加载的模型。

+

+### 生成预测输入数据

+

+`dog.png` is an example image of a dog. Turn it into an numpy array to match the data feeder format.

+

+```python

+# Prepare testing data.

+from PIL import Image

+import numpy as np

+import os

+

+def load_image(file):

+ im = Image.open(file)

+ im = im.resize((32, 32), Image.ANTIALIAS)

+

+ im = np.array(im).astype(np.float32)

+ # The storage order of the loaded image is W(width),

+ # H(height), C(channel). PaddlePaddle requires

+ # the CHW order, so transpose them.

+ im = im.transpose((2, 0, 1)) # CHW

+ im = im / 255.0

+

+ # Add one dimension to mimic the list format.

+ im = numpy.expand_dims(im, axis=0)

+ return im

+

+cur_dir = os.getcwd()

+img = load_image(cur_dir + '/image/dog.png')

+```

+

+### Inferencer 配置和预测

+

+`Inferencer` 需要一个 `infer_func` 和 `param_path` 来设置网络和经过训练的参数。

+我们可以简单地插入前面定义的推理程序。

+现在我们准备做预测。

+

+```python

+inferencer = fluid.Inferencer(

+ infer_func=inference_program, param_path=params_dirname, place=place)

+label_list = ["airplane", "automobile", "bird", "cat", "deer", "dog", "frog", "horse", "ship", "truck"]

+# inference

+results = inferencer.infer({'pixel': img})

+print("infer results: %s" % label_list[np.argmax(results[0])])

+```

+

+## 总结

+

+传统图像分类方法由多个阶段构成,框架较为复杂,而端到端的CNN模型结构可一步到位,而且大幅度提升了分类准确率。本文我们首先介绍VGG、GoogleNet、ResNet三个经典的模型;然后基于CIFAR10数据集,介绍如何使用PaddlePaddle配置和训练CNN模型,尤其是VGG和ResNet模型;最后介绍如何使用PaddlePaddle的API接口对图片进行预测和特征提取。对于其他数据集比如ImageNet,配置和训练流程是同样的,大家可以自行进行实验。

+

+

+## 参考文献

+

+[1] D. G. Lowe, [Distinctive image features from scale-invariant keypoints](http://www.cs.ubc.ca/~lowe/papers/ijcv04.pdf). IJCV, 60(2):91-110, 2004.

+

+[2] N. Dalal, B. Triggs, [Histograms of Oriented Gradients for Human Detection](http://vision.stanford.edu/teaching/cs231b_spring1213/papers/CVPR05_DalalTriggs.pdf), Proc. IEEE Conf. Computer Vision and Pattern Recognition, 2005.

+

+[3] Ahonen, T., Hadid, A., and Pietikinen, M. (2006). [Face description with local binary patterns: Application to face recognition](http://ieeexplore.ieee.org/document/1717463/). PAMI, 28.

+

+[4] J. Sivic, A. Zisserman, [Video Google: A Text Retrieval Approach to Object Matching in Videos](http://www.robots.ox.ac.uk/~vgg/publications/papers/sivic03.pdf), Proc. Ninth Int'l Conf. Computer Vision, pp. 1470-1478, 2003.

+

+[5] B. Olshausen, D. Field, [Sparse Coding with an Overcomplete Basis Set: A Strategy Employed by V1?](http://redwood.psych.cornell.edu/papers/olshausen_field_1997.pdf), Vision Research, vol. 37, pp. 3311-3325, 1997.

+

+[6] Wang, J., Yang, J., Yu, K., Lv, F., Huang, T., and Gong, Y. (2010). [Locality-constrained Linear Coding for image classification](http://ieeexplore.ieee.org/abstract/document/5540018/). In CVPR.

+

+[7] Perronnin, F., Sánchez, J., & Mensink, T. (2010). [Improving the fisher kernel for large-scale image classification](http://dl.acm.org/citation.cfm?id=1888101). In ECCV (4).

+

+[8] Lin, Y., Lv, F., Cao, L., Zhu, S., Yang, M., Cour, T., Yu, K., and Huang, T. (2011). [Large-scale image clas- sification: Fast feature extraction and SVM training](http://ieeexplore.ieee.org/document/5995477/). In CVPR.

+

+[9] Krizhevsky, A., Sutskever, I., and Hinton, G. (2012). [ImageNet classification with deep convolutional neu- ral networks](http://www.cs.toronto.edu/~kriz/imagenet_classification_with_deep_convolutional.pdf). In NIPS.

+

+[10] G.E. Hinton, N. Srivastava, A. Krizhevsky, I. Sutskever, and R.R. Salakhutdinov. [Improving neural networks by preventing co-adaptation of feature detectors](https://arxiv.org/abs/1207.0580). arXiv preprint arXiv:1207.0580, 2012.

+

+[11] K. Chatfield, K. Simonyan, A. Vedaldi, A. Zisserman. [Return of the Devil in the Details: Delving Deep into Convolutional Nets](https://arxiv.org/abs/1405.3531). BMVC, 2014。

+

+[12] Szegedy, C., Liu, W., Jia, Y., Sermanet, P., Reed, S., Anguelov, D., Erhan, D., Vanhoucke, V., Rabinovich, A., [Going deeper with convolutions](https://arxiv.org/abs/1409.4842). In: CVPR. (2015)

+

+[13] Lin, M., Chen, Q., and Yan, S. [Network in network](https://arxiv.org/abs/1312.4400). In Proc. ICLR, 2014.

+

+[14] S. Ioffe and C. Szegedy. [Batch normalization: Accelerating deep network training by reducing internal covariate shift](https://arxiv.org/abs/1502.03167). In ICML, 2015.

+

+[15] K. He, X. Zhang, S. Ren, J. Sun. [Deep Residual Learning for Image Recognition](https://arxiv.org/abs/1512.03385). CVPR 2016.

+

+[16] Szegedy, C., Vanhoucke, V., Ioffe, S., Shlens, J., Wojna, Z. [Rethinking the incep-tion architecture for computer vision](https://arxiv.org/abs/1512.00567). In: CVPR. (2016).

+

+[17] Szegedy, C., Ioffe, S., Vanhoucke, V. [Inception-v4, inception-resnet and the impact of residual connections on learning](https://arxiv.org/abs/1602.07261). arXiv:1602.07261 (2016).

+

+[18] Everingham, M., Eslami, S. M. A., Van Gool, L., Williams, C. K. I., Winn, J. and Zisserman, A. [The Pascal Visual Object Classes Challenge: A Retrospective]((http://link.springer.com/article/10.1007/s11263-014-0733-5)). International Journal of Computer Vision, 111(1), 98-136, 2015.

+

+[19] He, K., Zhang, X., Ren, S., and Sun, J. [Delving Deep into Rectifiers: Surpassing Human-Level Performance on ImageNet Classification](https://arxiv.org/abs/1502.01852). ArXiv e-prints, February 2015.

+

+[20] http://deeplearning.net/tutorial/lenet.html

+

+[21] https://www.cs.toronto.edu/~kriz/cifar.html

+

+[22] http://cs231n.github.io/classification/

+

+

+

本教程 由 PaddlePaddle 创作,采用 知识共享 署名-相同方式共享 4.0 国际 许可协议进行许可。

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/cifar.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/cifar.png

new file mode 100644

index 0000000000000000000000000000000000000000..f3c5f2f7b0c84f83382b70124dcd439586ed4eb0

Binary files /dev/null and b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/cifar.png differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/inception_en.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/inception_en.png

new file mode 100644

index 0000000000000000000000000000000000000000..39580c20b583f2a15d17fd124a572c84e6e2db1d

Binary files /dev/null and b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/inception_en.png differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/lenet_en.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/lenet_en.png

new file mode 100644

index 0000000000000000000000000000000000000000..97a1e3eee45c0db95e6a943ca3b8c0cf6c34d4b6

Binary files /dev/null and b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/lenet_en.png differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/plot_en.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/plot_en.png

new file mode 100644

index 0000000000000000000000000000000000000000..147e575bf49086811c43420d5a9c8f749e2da405

Binary files /dev/null and b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/plot_en.png differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/variations.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/variations.png

new file mode 100644

index 0000000000000000000000000000000000000000..b4ebbbe6a50f5fd7cd0cccb52cdac5653e34654c

Binary files /dev/null and b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/variations.png differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/variations_en.png b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/variations_en.png

new file mode 100644

index 0000000000000000000000000000000000000000..88c60fe87f802c5ce560bb15bbdbd229aeafc4e4

Binary files /dev/null and b/doc/fluid/new_docs/beginners_guide/basics/image_classification/image/variations_en.png differ

diff --git a/doc/fluid/new_docs/beginners_guide/basics/index.rst b/doc/fluid/new_docs/beginners_guide/basics/index.rst

index d16f8b947253a535567ddc8d7b227dd153d9b154..e1fd226116d88fbf137741242b304b367e598ba5 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/index.rst

+++ b/doc/fluid/new_docs/beginners_guide/basics/index.rst

@@ -6,13 +6,13 @@

.. todo::

概述

-

+

.. toctree::

:maxdepth: 2

- image_classification/index.md

- word2vec/index.md

- recommender_system/index.md

- understand_sentiment/index.md

- label_semantic_roles/index.md

- machine_translation/index.md

+ image_classification/README.cn.md

+ word2vec/README.cn.md

+ recommender_system/README.cn.md

+ understand_sentiment/README.cn.md

+ label_semantic_roles/README.cn.md

+ machine_translation/README.cn.md

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/.gitignore b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/.gitignore

deleted file mode 100644

index 29b5622a53a1b0847e9f53febf1cc50dcf4f044a..0000000000000000000000000000000000000000

--- a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/.gitignore

+++ /dev/null

@@ -1,12 +0,0 @@

-data/train.list

-data/test.*

-data/conll05st-release.tar.gz

-data/conll05st-release

-data/predicate_dict

-data/label_dict

-data/word_dict

-data/emb

-data/feature

-output

-predict.res

-train.log

diff --git a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/index.md b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/README.cn.md

similarity index 54%

rename from doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/index.md

rename to doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/README.cn.md

index 828ca738317992270487647e66b08b6d2f80e209..47e948bd1ffc0ca692dc9899193e94831ce4234b 100644

--- a/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/index.md

+++ b/doc/fluid/new_docs/beginners_guide/basics/label_semantic_roles/README.cn.md

@@ -1,568 +1,562 @@

-# 语义角色标注

-

-本教程源代码目录在[book/label_semantic_roles](https://github.com/PaddlePaddle/book/tree/develop/07.label_semantic_roles), 初次使用请参考PaddlePaddle[安装教程](https://github.com/PaddlePaddle/book/blob/develop/README.cn.md#运行这本书)。

-

-## 背景介绍

-

-自然语言分析技术大致分为三个层面:词法分析、句法分析和语义分析。语义角色标注是实现浅层语义分析的一种方式。在一个句子中,谓词是对主语的陈述或说明,指出“做什么”、“是什么”或“怎么样,代表了一个事件的核心,跟谓词搭配的名词称为论元。语义角色是指论元在动词所指事件中担任的角色。主要有:施事者(Agent)、受事者(Patient)、客体(Theme)、经验者(Experiencer)、受益者(Beneficiary)、工具(Instrument)、处所(Location)、目标(Goal)和来源(Source)等。

-

-请看下面的例子,“遇到” 是谓词(Predicate,通常简写为“Pred”),“小明”是施事者(Agent),“小红”是受事者(Patient),“昨天” 是事件发生的时间(Time),“公园”是事情发生的地点(Location)。

-

-$$\mbox{[小明]}_{\mbox{Agent}}\mbox{[昨天]}_{\mbox{Time}}\mbox{[晚上]}_{\mbox{Time}}\mbox{在[公园]}_{\mbox{Location}}\mbox{[遇到]}_{\mbox{Predicate}}\mbox{了[小红]}_{\mbox{Patient}}\mbox{。}$$

-

-语义角色标注(Semantic Role Labeling,SRL)以句子的谓词为中心,不对句子所包含的语义信息进行深入分析,只分析句子中各成分与谓词之间的关系,即句子的谓词(Predicate)- 论元(Argument)结构,并用语义角色来描述这些结构关系,是许多自然语言理解任务(如信息抽取,篇章分析,深度问答等)的一个重要中间步骤。在研究中一般都假定谓词是给定的,所要做的就是找出给定谓词的各个论元和它们的语义角色。

-

-传统的SRL系统大多建立在句法分析基础之上,通常包括5个流程:

-

-1. 构建一棵句法分析树,例如,图1是对上面例子进行依存句法分析得到的一棵句法树。

-2. 从句法树上识别出给定谓词的候选论元。

-3. 候选论元剪除;一个句子中的候选论元可能很多,候选论元剪除就是从大量的候选项中剪除那些最不可能成为论元的候选项。

-4. 论元识别:这个过程是从上一步剪除之后的候选中判断哪些是真正的论元,通常当做一个二分类问题来解决。

-5. 对第4步的结果,通过多分类得到论元的语义角色标签。可以看到,句法分析是基础,并且后续步骤常常会构造的一些人工特征,这些特征往往也来自句法分析。

-

-

-

-图1. 依存句法分析句法树示例

-

-

-然而,完全句法分析需要确定句子所包含的全部句法信息,并确定句子各成分之间的关系,是一个非常困难的任务,目前技术下的句法分析准确率并不高,句法分析的细微错误都会导致SRL的错误。为了降低问题的复杂度,同时获得一定的句法结构信息,“浅层句法分析”的思想应运而生。浅层句法分析也称为部分句法分析(partial parsing)或语块划分(chunking)。和完全句法分析得到一颗完整的句法树不同,浅层句法分析只需要识别句子中某些结构相对简单的独立成分,例如:动词短语,这些被识别出来的结构称为语块。为了回避 “无法获得准确率较高的句法树” 所带来的困难,一些研究\[[1](#参考文献)\]也提出了基于语块(chunk)的SRL方法。基于语块的SRL方法将SRL作为一个序列标注问题来解决。序列标注任务一般都会采用BIO表示方式来定义序列标注的标签集,我们先来介绍这种表示方法。在BIO表示法中,B代表语块的开始,I代表语块的中间,O代表语块结束。通过B、I、O 三种标记将不同的语块赋予不同的标签,例如:对于一个角色为A的论元,将它所包含的第一个语块赋予标签B-A,将它所包含的其它语块赋予标签I-A,不属于任何论元的语块赋予标签O。

-

-我们继续以上面的这句话为例,图1展示了BIO表示方法。

-

-

-

-图2. BIO标注方法示例

-

-

-从上面的例子可以看到,根据序列标注结果可以直接得到论元的语义角色标注结果,是一个相对简单的过程。这种简单性体现在:(1)依赖浅层句法分析,降低了句法分析的要求和难度;(2)没有了候选论元剪除这一步骤;(3)论元的识别和论元标注是同时实现的。这种一体化处理论元识别和论元标注的方法,简化了流程,降低了错误累积的风险,往往能够取得更好的结果。

-

-与基于语块的SRL方法类似,在本教程中我们也将SRL看作一个序列标注问题,不同的是,我们只依赖输入文本序列,不依赖任何额外的语法解析结果或是复杂的人造特征,利用深度神经网络构建一个端到端学习的SRL系统。我们以[CoNLL-2004 and CoNLL-2005 Shared Tasks](http://www.cs.upc.edu/~srlconll/)任务中SRL任务的公开数据集为例,实践下面的任务:给定一句话和这句话里的一个谓词,通过序列标注的方式,从句子中找到谓词对应的论元,同时标注它们的语义角色。

-

-## 模型概览

-

-循环神经网络(Recurrent Neural Network)是一种对序列建模的重要模型,在自然语言处理任务中有着广泛地应用。不同于前馈神经网络(Feed-forward Neural Network),RNN能够处理输入之间前后关联的问题。LSTM是RNN的一种重要变种,常用来学习长序列中蕴含的长程依赖关系,我们在[情感分析](https://github.com/PaddlePaddle/book/tree/develop/05.understand_sentiment)一篇中已经介绍过,这一篇中我们依然利用LSTM来解决SRL问题。

-

-### 栈式循环神经网络(Stacked Recurrent Neural Network)

-

-深层网络有助于形成层次化特征,网络上层在下层已经学习到的初级特征基础上,形成更复杂的高级特征。尽管LSTM沿时间轴展开后等价于一个非常“深”的前馈网络,但由于LSTM各个时间步参数共享,`$t-1$`时刻状态到`$t$`时刻的映射,始终只经过了一次非线性映射,也就是说单层LSTM对状态转移的建模是 “浅” 的。堆叠多个LSTM单元,令前一个LSTM`$t$`时刻的输出,成为下一个LSTM单元`$t$`时刻的输入,帮助我们构建起一个深层网络,我们把它称为第一个版本的栈式循环神经网络。深层网络提高了模型拟合复杂模式的能力,能够更好地建模跨不同时间步的模式\[[2](#参考文献)\]。

-

-然而,训练一个深层LSTM网络并非易事。纵向堆叠多个LSTM单元可能遇到梯度在纵向深度上传播受阻的问题。通常,堆叠4层LSTM单元可以正常训练,当层数达到4~8层时,会出现性能衰减,这时必须考虑一些新的结构以保证梯度纵向顺畅传播,这是训练深层LSTM网络必须解决的问题。我们可以借鉴LSTM解决 “梯度消失梯度爆炸” 问题的智慧之一:在记忆单元(Memory Cell)这条信息传播的路线上没有非线性映射,当梯度反向传播时既不会衰减、也不会爆炸。因此,深层LSTM模型也可以在纵向上添加一条保证梯度顺畅传播的路径。

-

-一个LSTM单元完成的运算可以被分为三部分:(1)输入到隐层的映射(input-to-hidden) :每个时间步输入信息`$x$`会首先经过一个矩阵映射,再作为遗忘门,输入门,记忆单元,输出门的输入,注意,这一次映射没有引入非线性激活;(2)隐层到隐层的映射(hidden-to-hidden):这一步是LSTM计算的主体,包括遗忘门,输入门,记忆单元更新,输出门的计算;(3)隐层到输出的映射(hidden-to-output):通常是简单的对隐层向量进行激活。我们在第一个版本的栈式网络的基础上,加入一条新的路径:除上一层LSTM输出之外,将前层LSTM的输入到隐层的映射作为的一个新的输入,同时加入一个线性映射去学习一个新的变换。

-

-图3是最终得到的栈式循环神经网络结构示意图。

-

-

-

-图3. 基于LSTM的栈式循环神经网络结构示意图

-

-

-### 双向循环神经网络(Bidirectional Recurrent Neural Network)

-

-在LSTM中,`$t$`时刻的隐藏层向量编码了到`$t$`时刻为止所有输入的信息,但`$t$`时刻的LSTM可以看到历史,却无法看到未来。在绝大多数自然语言处理任务中,我们几乎总是能拿到整个句子。这种情况下,如果能够像获取历史信息一样,得到未来的信息,对序列学习任务会有很大的帮助。

-

-为了克服这一缺陷,我们可以设计一种双向循环网络单元,它的思想简单且直接:对上一节的栈式循环神经网络进行一个小小的修改,堆叠多个LSTM单元,让每一层LSTM单元分别以:正向、反向、正向 …… 的顺序学习上一层的输出序列。于是,从第2层开始,`$t$`时刻我们的LSTM单元便总是可以看到历史和未来的信息。图4是基于LSTM的双向循环神经网络结构示意图。

-

-

-

-图4. 基于LSTM的双向循环神经网络结构示意图

-

-

-需要说明的是,这种双向RNN结构和Bengio等人在机器翻译任务中使用的双向RNN结构\[[3](#参考文献), [4](#参考文献)\] 并不相同,我们会在后续[机器翻译](https://github.com/PaddlePaddle/book/blob/develop/08.machine_translation/README.cn.md)任务中,介绍另一种双向循环神经网络。

-

-### 条件随机场 (Conditional Random Field)

-

-使用神经网络模型解决问题的思路通常是:前层网络学习输入的特征表示,网络的最后一层在特征基础上完成最终的任务。在SRL任务中,深层LSTM网络学习输入的特征表示,条件随机场(Conditional Random Filed, CRF)在特征的基础上完成序列标注,处于整个网络的末端。

-

-CRF是一种概率化结构模型,可以看作是一个概率无向图模型,结点表示随机变量,边表示随机变量之间的概率依赖关系。简单来讲,CRF学习条件概率`$P(X|Y)$`,其中 `$X = (x_1, x_2, ... , x_n)$` 是输入序列,`$Y = (y_1, y_2, ... , y_n)$` 是标记序列;解码过程是给定 `$X$`序列求解令`$P(Y|X)$`最大的`$Y$`序列,即`$Y^* = \mbox{arg max}_{Y} P(Y | X)$`。

-

-序列标注任务只需要考虑输入和输出都是一个线性序列,并且由于我们只是将输入序列作为条件,不做任何条件独立假设,因此输入序列的元素之间并不存在图结构。综上,在序列标注任务中使用的是如图5所示的定义在链式图上的CRF,称之为线性链条件随机场(Linear Chain Conditional Random Field)。

-

-

-

-图5. 序列标注任务中使用的线性链条件随机场

-

-

-根据线性链条件随机场上的因子分解定理\[[5](#参考文献)\],在给定观测序列`$X$`时,一个特定标记序列`$Y$`的概率可以定义为:

-

-$$p(Y | X) = \frac{1}{Z(X)} \text{exp}\left(\sum_{i=1}^{n}\left(\sum_{j}\lambda_{j}t_{j} (y_{i - 1}, y_{i}, X, i) + \sum_{k} \mu_k s_k (y_i, X, i)\right)\right)$$

-

-其中`$Z(X)$`是归一化因子,`$t_j$` 是定义在边上的特征函数,依赖于当前和前一个位置,称为转移特征,表示对于输入序列`$X$`及其标注序列在 `$i$`及`$i - 1$`位置上标记的转移概率。`$s_k$`是定义在结点上的特征函数,称为状态特征,依赖于当前位置,表示对于观察序列`$X$`及其`$i$`位置的标记概率。`$\lambda_j$` 和 `$\mu_k$` 分别是转移特征函数和状态特征函数对应的权值。实际上,`$t$`和`$s$`可以用相同的数学形式表示,再对转移特征和状态特在各个位置`$i$`求和有:`$f_{k}(Y, X) = \sum_{i=1}^{n}f_k({y_{i - 1}, y_i, X, i})$`,把`$f$`统称为特征函数,于是`$P(Y|X)$`可表示为:

-

-$$p(Y|X, W) = \frac{1}{Z(X)}\text{exp}\sum_{k}\omega_{k}f_{k}(Y, X)$$

-

-`$\omega$`是特征函数对应的权值,是CRF模型要学习的参数。训练时,对于给定的输入序列和对应的标记序列集合`$D = \left[(X_1, Y_1), (X_2 , Y_2) , ... , (X_N, Y_N)\right]$` ,通过正则化的极大似然估计,求解如下优化目标:

-

-$$\DeclareMathOperator*{\argmax}{arg\,max} L(\lambda, D) = - \text{log}\left(\prod_{m=1}^{N}p(Y_m|X_m, W)\right) + C \frac{1}{2}\lVert W\rVert^{2}$$

-

-这个优化目标可以通过反向传播算法和整个神经网络一起求解。解码时,对于给定的输入序列`$X$`,通过解码算法(通常有:维特比算法、Beam Search)求令出条件概率`$\bar{P}(Y|X)$`最大的输出序列 `$\bar{Y}$`。

-

-### 深度双向LSTM(DB-LSTM)SRL模型

-

-在SRL任务中,输入是 “谓词” 和 “一句话”,目标是从这句话中找到谓词的论元,并标注论元的语义角色。如果一个句子含有`$n$`个谓词,这个句子会被处理`$n$`次。一个最为直接的模型是下面这样:

-

-1. 构造输入;

-- 输入1是谓词,输入2是句子

-- 将输入1扩展成和输入2一样长的序列,用one-hot方式表示;

-2. one-hot方式的谓词序列和句子序列通过词表,转换为实向量表示的词向量序列;

-3. 将步骤2中的2个词向量序列作为双向LSTM的输入,学习输入序列的特征表示;

-4. CRF以步骤3中模型学习到的特征为输入,以标记序列为监督信号,实现序列标注;

-

-大家可以尝试上面这种方法。这里,我们提出一些改进,引入两个简单但对提高系统性能非常有效的特征:

-

-- 谓词上下文:上面的方法中,只用到了谓词的词向量表达谓词相关的所有信息,这种方法始终是非常弱的,特别是如果谓词在句子中出现多次,有可能引起一定的歧义。从经验出发,谓词前后若干个词的一个小片段,能够提供更丰富的信息,帮助消解歧义。于是,我们把这样的经验也添加到模型中,为每个谓词同时抽取一个“谓词上下文” 片段,也就是从这个谓词前后各取`$n$`个词构成的一个窗口片段;

-- 谓词上下文区域标记:为句子中的每一个词引入一个0-1二值变量,表示它们是否在“谓词上下文”片段中;

-

-修改后的模型如下(图6是一个深度为4的模型结构示意图):

-

-1. 构造输入

-- 输入1是句子序列,输入2是谓词序列,输入3是谓词上下文,从句子中抽取这个谓词前后各`$n$`个词,构成谓词上下文,用one-hot方式表示,输入4是谓词上下文区域标记,标记了句子中每一个词是否在谓词上下文中;

-- 将输入2~3均扩展为和输入1一样长的序列;

-2. 输入1~4均通过词表取词向量转换为实向量表示的词向量序列;其中输入1、3共享同一个词表,输入2和4各自独有词表;

-3. 第2步的4个词向量序列作为双向LSTM模型的输入;LSTM模型学习输入序列的特征表示,得到新的特性表示序列;

-4. CRF以第3步中LSTM学习到的特征为输入,以标记序列为监督信号,完成序列标注;

-

-

-

-图6. SRL任务上的深层双向LSTM模型

-

-

-

-## 数据介绍

-

-在此教程中,我们选用[CoNLL 2005](http://www.cs.upc.edu/~srlconll/)SRL任务开放出的数据集作为示例。需要特别说明的是,CoNLL 2005 SRL任务的训练数集和开发集在比赛之后并非免费进行公开,目前,能够获取到的只有测试集,包括Wall Street Journal的23节和Brown语料集中的3节。在本教程中,我们以测试集中的WSJ数据为训练集来讲解模型。但是,由于测试集中样本的数量远远不够,如果希望训练一个可用的神经网络SRL系统,请考虑付费获取全量数据。

-

-原始数据中同时包括了词性标注、命名实体识别、语法解析树等多种信息。本教程中,我们使用test.wsj文件夹中的数据进行训练和测试,并只会用到words文件夹(文本序列)和props文件夹(标注结果)下的数据。本教程使用的数据目录如下:

-

-```text

-conll05st-release/

-└── test.wsj

-├── props # 标注结果

-└── words # 输入文本序列

-```

-

-标注信息源自Penn TreeBank\[[7](#参考文献)\]和PropBank\[[8](#参考文献)\]的标注结果。PropBank标注结果的标签和我们在文章一开始示例中使用的标注结果标签不同,但原理是相同的,关于标注结果标签含义的说明,请参考论文\[[9](#参考文献)\]。

-

-原始数据需要进行数据预处理才能被PaddlePaddle处理,预处理包括下面几个步骤:

-

-1. 将文本序列和标记序列其合并到一条记录中;

-2. 一个句子如果含有`$n$`个谓词,这个句子会被处理`$n$`次,变成`$n$`条独立的训练样本,每个样本一个不同的谓词;

-3. 抽取谓词上下文和构造谓词上下文区域标记;

-4. 构造以BIO法表示的标记;

-5. 依据词典获取词对应的整数索引。

-

-

-```python

-# import paddle.v2.dataset.conll05 as conll05

-# conll05.corpus_reader函数完成上面第1步和第2步.

-# conll05.reader_creator函数完成上面第3步到第5步.

-# conll05.test函数可以获取处理之后的每条样本来供PaddlePaddle训练.

-```

-

-预处理完成之后一条训练样本包含9个特征,分别是:句子序列、谓词、谓词上下文(占 5 列)、谓词上下区域标志、标注序列。下表是一条训练样本的示例。

-

-| 句子序列 | 谓词 | 谓词上下文(窗口 = 5) | 谓词上下文区域标记 | 标注序列 |

-|---|---|---|---|---|

-| A | set | n't been set . × | 0 | B-A1 |

-| record | set | n't been set . × | 0 | I-A1 |

-| date | set | n't been set . × | 0 | I-A1 |

-| has | set | n't been set . × | 0 | O |

-| n't | set | n't been set . × | 1 | B-AM-NEG |

-| been | set | n't been set . × | 1 | O |

-| set | set | n't been set . × | 1 | B-V |

-| . | set | n't been set . × | 1 | O |

-

-

-除数据之外,我们同时提供了以下资源:

-

-| 文件名称 | 说明 |

-|---|---|

-| word_dict | 输入句子的词典,共计44068个词 |

-| label_dict | 标记的词典,共计106个标记 |

-| predicate_dict | 谓词的词典,共计3162个词 |

-| emb | 一个训练好的词表,32维 |

-

-我们在英文维基百科上训练语言模型得到了一份词向量用来初始化SRL模型。在SRL模型训练过程中,词向量不再被更新。关于语言模型和词向量可以参考[词向量](https://github.com/PaddlePaddle/book/blob/develop/04.word2vec/README.cn.md) 这篇教程。我们训练语言模型的语料共有995,000,000个token,词典大小控制为4900,000词。CoNLL 2005训练语料中有5%的词不在这4900,000个词中,我们将它们全部看作未登录词,用``表示。

-

-获取词典,打印词典大小:

-

-```python

-import math, os

-import numpy as np

-import paddle

-import paddle.v2.dataset.conll05 as conll05

-import paddle.fluid as fluid

-import time

-

-with_gpu = os.getenv('WITH_GPU', '0') != '0'

-

-word_dict, verb_dict, label_dict = conll05.get_dict()

-word_dict_len = len(word_dict)

-label_dict_len = len(label_dict)

-pred_dict_len = len(verb_dict)

-

-print word_dict_len

-print label_dict_len

-print pred_dict_len

-```

-

-## 模型配置说明

-

-- 定义输入数据维度及模型超参数。

-

-```python

-mark_dict_len = 2 # 谓上下文区域标志的维度,是一个0-1 2值特征,因此维度为2

-word_dim = 32 # 词向量维度

-mark_dim = 5 # 谓词上下文区域通过词表被映射为一个实向量,这个是相邻的维度

-hidden_dim = 512 # LSTM隐层向量的维度 : 512 / 4

-depth = 8 # 栈式LSTM的深度

-mix_hidden_lr = 1e-3

-

-IS_SPARSE = True

-PASS_NUM = 10

-BATCH_SIZE = 10

-

-embedding_name = 'emb'

-```

-

-这里需要特别说明的是hidden_dim = 512指定了LSTM隐层向量的维度为128维,关于这一点请参考PaddlePaddle官方文档中[lstmemory](http://www.paddlepaddle.org/doc/ui/api/trainer_config_helpers/layers.html#lstmemory)的说明。

-

-- 如上文提到,我们用基于英文维基百科训练好的词向量来初始化序列输入、谓词上下文总共6个特征的embedding层参数,在训练中不更新。

-

-```python

-# 这里加载PaddlePaddle上版保存的二进制模型

-def load_parameter(file_name, h, w):

-with open(file_name, 'rb') as f:

-f.read(16) # skip header.

-return np.fromfile(f, dtype=np.float32).reshape(h, w)

-```

-

-- 8个LSTM单元以“正向/反向”的顺序对所有输入序列进行学习。

-

-```python

-def db_lstm(word, predicate, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2, mark,

-**ignored):

-# 8 features

-predicate_embedding = fluid.layers.embedding(

-input=predicate,

-size=[pred_dict_len, word_dim],

-dtype='float32',

-is_sparse=IS_SPARSE,

-param_attr='vemb')

-

-mark_embedding = fluid.layers.embedding(

-input=mark,

-size=[mark_dict_len, mark_dim],

-dtype='float32',

-is_sparse=IS_SPARSE)

-

-word_input = [word, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2]

-# Since word vector lookup table is pre-trained, we won't update it this time.

-# trainable being False prevents updating the lookup table during training.

-emb_layers = [

-fluid.layers.embedding(

-size=[word_dict_len, word_dim],

-input=x,

-param_attr=fluid.ParamAttr(

-name=embedding_name, trainable=False)) for x in word_input

-]

-emb_layers.append(predicate_embedding)

-emb_layers.append(mark_embedding)

-

-# 8 LSTM units are trained through alternating left-to-right / right-to-left order

-# denoted by the variable `reverse`.

-hidden_0_layers = [

-fluid.layers.fc(input=emb, size=hidden_dim, act='tanh')

-for emb in emb_layers

-]

-

-hidden_0 = fluid.layers.sums(input=hidden_0_layers)

-

-lstm_0 = fluid.layers.dynamic_lstm(

-input=hidden_0,

-size=hidden_dim,

-candidate_activation='relu',

-gate_activation='sigmoid',

-cell_activation='sigmoid')

-

-# stack L-LSTM and R-LSTM with direct edges

-input_tmp = [hidden_0, lstm_0]

-

-# In PaddlePaddle, state features and transition features of a CRF are implemented

-# by a fully connected layer and a CRF layer seperately. The fully connected layer

-# with linear activation learns the state features, here we use fluid.layers.sums

-# (fluid.layers.fc can be uesed as well), and the CRF layer in PaddlePaddle:

-# fluid.layers.linear_chain_crf only

-# learns the transition features, which is a cost layer and is the last layer of the network.

-# fluid.layers.linear_chain_crf outputs the log probability of true tag sequence

-# as the cost by given the input sequence and it requires the true tag sequence

-# as target in the learning process.

-

-for i in range(1, depth):

-mix_hidden = fluid.layers.sums(input=[

-fluid.layers.fc(input=input_tmp[0], size=hidden_dim, act='tanh'),

-fluid.layers.fc(input=input_tmp[1], size=hidden_dim, act='tanh')

-])

-

-lstm = fluid.layers.dynamic_lstm(

-input=mix_hidden,

-size=hidden_dim,

-candidate_activation='relu',

-gate_activation='sigmoid',

-cell_activation='sigmoid',

-is_reverse=((i % 2) == 1))

-

-input_tmp = [mix_hidden, lstm]

-

-# 取最后一个栈式LSTM的输出和这个LSTM单元的输入到隐层映射,

-# 经过一个全连接层映射到标记字典的维度,来学习 CRF 的状态特征

-feature_out = fluid.layers.sums(input=[

-fluid.layers.fc(input=input_tmp[0], size=label_dict_len, act='tanh'),

-fluid.layers.fc(input=input_tmp[1], size=label_dict_len, act='tanh')

-])

-

-return feature_out

-```

-

-## 训练模型

-

-- 我们根据网络拓扑结构和模型参数来构造出trainer用来训练,在构造时还需指定优化方法,这里使用最基本的SGD方法(momentum设置为0),同时设定了学习率、正则等。

-

-- 数据介绍部分提到CoNLL 2005训练集付费,这里我们使用测试集训练供大家学习。conll05.test()每次产生一条样本,包含9个特征,shuffle和组完batch后作为训练的输入。

-

-- 通过feeding来指定每一个数据和data_layer的对应关系。 例如 下面feeding表示: conll05.test()产生数据的第0列对应word_data层的特征。

-

-- 可以使用event_handler回调函数来观察训练过程,或进行测试等。这里我们打印了训练过程的cost,该回调函数是trainer.train函数里设定。

-

-- 通过trainer.train函数训练

-

-```python

-def train(use_cuda, save_dirname=None, is_local=True):

-# define network topology

-

-# 句子序列

-word = fluid.layers.data(

-name='word_data', shape=[1], dtype='int64', lod_level=1)

-

-# 谓词

-predicate = fluid.layers.data(

-name='verb_data', shape=[1], dtype='int64', lod_level=1)

-

-# 谓词上下文5个特征

-ctx_n2 = fluid.layers.data(

-name='ctx_n2_data', shape=[1], dtype='int64', lod_level=1)

-ctx_n1 = fluid.layers.data(

-name='ctx_n1_data', shape=[1], dtype='int64', lod_level=1)

-ctx_0 = fluid.layers.data(

-name='ctx_0_data', shape=[1], dtype='int64', lod_level=1)

-ctx_p1 = fluid.layers.data(

-name='ctx_p1_data', shape=[1], dtype='int64', lod_level=1)

-ctx_p2 = fluid.layers.data(

-name='ctx_p2_data', shape=[1], dtype='int64', lod_level=1)

-

-# 谓词上下区域标志

-mark = fluid.layers.data(

-name='mark_data', shape=[1], dtype='int64', lod_level=1)

-

-# define network topology

-feature_out = db_lstm(**locals())

-

-# 标注序列

-target = fluid.layers.data(

-name='target', shape=[1], dtype='int64', lod_level=1)

-

-# 学习 CRF 的转移特征

-crf_cost = fluid.layers.linear_chain_crf(

-input=feature_out,

-label=target,

-param_attr=fluid.ParamAttr(

-name='crfw', learning_rate=mix_hidden_lr))

-

-avg_cost = fluid.layers.mean(crf_cost)

-

-sgd_optimizer = fluid.optimizer.SGD(

-learning_rate=fluid.layers.exponential_decay(

-learning_rate=0.01,

-decay_steps=100000,

-decay_rate=0.5,

-staircase=True))

-

-sgd_optimizer.minimize(avg_cost)

-

-# The CRF decoding layer is used for evaluation and inference.

-# It shares weights with CRF layer. The sharing of parameters among multiple layers

-# is specified by using the same parameter name in these layers. If true tag sequence

-# is provided in training process, `fluid.layers.crf_decoding` calculates labelling error

-# for each input token and sums the error over the entire sequence.

-# Otherwise, `fluid.layers.crf_decoding` generates the labelling tags.

-crf_decode = fluid.layers.crf_decoding(

-input=feature_out, param_attr=fluid.ParamAttr(name='crfw'))

-

-train_data = paddle.batch(

-paddle.reader.shuffle(

-paddle.dataset.conll05.test(), buf_size=8192),

-batch_size=BATCH_SIZE)

-

-place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

-

-

-feeder = fluid.DataFeeder(

-feed_list=[

-word, ctx_n2, ctx_n1, ctx_0, ctx_p1, ctx_p2, predicate, mark, target

-],

-place=place)

-exe = fluid.Executor(place)

-

-def train_loop(main_program):

-exe.run(fluid.default_startup_program())

-embedding_param = fluid.global_scope().find_var(

-embedding_name).get_tensor()

-embedding_param.set(

-load_parameter(conll05.get_embedding(), word_dict_len, word_dim),

-place)

-

-start_time = time.time()

-batch_id = 0

-for pass_id in xrange(PASS_NUM):

-for data in train_data():

-cost = exe.run(main_program,

-feed=feeder.feed(data),

-fetch_list=[avg_cost])

-cost = cost[0]

-

-if batch_id % 10 == 0:

-print("avg_cost:" + str(cost))

-if batch_id != 0:

-print("second per batch: " + str((time.time(

-) - start_time) / batch_id))

-# Set the threshold low to speed up the CI test

-if float(cost) < 60.0:

-if save_dirname is not None:

-fluid.io.save_inference_model(save_dirname, [

-'word_data', 'verb_data', 'ctx_n2_data',

-'ctx_n1_data', 'ctx_0_data', 'ctx_p1_data',

-'ctx_p2_data', 'mark_data'

-], [feature_out], exe)

-return

-

-batch_id = batch_id + 1

-

-train_loop(fluid.default_main_program())

-```

-

-

-## 应用模型

-

-训练完成之后,需要依据某个我们关心的性能指标选择最优的模型进行预测,可以简单的选择测试集上标记错误最少的那个模型。以下我们给出一个使用训练后的模型进行预测的示例。

-

-```python

-def infer(use_cuda, save_dirname=None):

-if save_dirname is None:

-return

-

-place = fluid.CUDAPlace(0) if use_cuda else fluid.CPUPlace()

-exe = fluid.Executor(place)

-

-inference_scope = fluid.core.Scope()

-with fluid.scope_guard(inference_scope):

-# Use fluid.io.load_inference_model to obtain the inference program desc,

-# the feed_target_names (the names of variables that will be fed

-# data using feed operators), and the fetch_targets (variables that

-# we want to obtain data from using fetch operators).

-[inference_program, feed_target_names,

-fetch_targets] = fluid.io.load_inference_model(save_dirname, exe)

-

-# Setup inputs by creating LoDTensors to represent sequences of words.

-# Here each word is the basic element of these LoDTensors and the shape of

-# each word (base_shape) should be [1] since it is simply an index to

-# look up for the corresponding word vector.

-# Suppose the length_based level of detail (lod) info is set to [[3, 4, 2]],

-# which has only one lod level. Then the created LoDTensors will have only

-# one higher level structure (sequence of words, or sentence) than the basic

-# element (word). Hence the LoDTensor will hold data for three sentences of

-# length 3, 4 and 2, respectively.

-# Note that lod info should be a list of lists.

-lod = [[3, 4, 2]]

-base_shape = [1]

-# The range of random integers is [low, high]

-word = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-pred = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=pred_dict_len - 1)

-ctx_n2 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_n1 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_0 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_p1 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-ctx_p2 = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=word_dict_len - 1)

-mark = fluid.create_random_int_lodtensor(

-lod, base_shape, place, low=0, high=mark_dict_len - 1)

-

-# Construct feed as a dictionary of {feed_target_name: feed_target_data}

-# and results will contain a list of data corresponding to fetch_targets.

-assert feed_target_names[0] == 'word_data'

-assert feed_target_names[1] == 'verb_data'

-assert feed_target_names[2] == 'ctx_n2_data'

-assert feed_target_names[3] == 'ctx_n1_data'

-assert feed_target_names[4] == 'ctx_0_data'

-assert feed_target_names[5] == 'ctx_p1_data'

-assert feed_target_names[6] == 'ctx_p2_data'

-assert feed_target_names[7] == 'mark_data'

-

-results = exe.run(inference_program,

-feed={

-feed_target_names[0]: word,

-feed_target_names[1]: pred,

-feed_target_names[2]: ctx_n2,

-feed_target_names[3]: ctx_n1,

-feed_target_names[4]: ctx_0,

-feed_target_names[5]: ctx_p1,

-feed_target_names[6]: ctx_p2,

-feed_target_names[7]: mark

-},

-fetch_list=fetch_targets,

-return_numpy=False)

-print(results[0].lod())

-np_data = np.array(results[0])

-print("Inference Shape: ", np_data.shape)

-```

-

-整个程序的入口如下:

-

-```python

-def main(use_cuda, is_local=True):

-if use_cuda and not fluid.core.is_compiled_with_cuda():

-return

-

-# Directory for saving the trained model

-save_dirname = "label_semantic_roles.inference.model"

-

-train(use_cuda, save_dirname, is_local)

-infer(use_cuda, save_dirname)

-

-

-main(use_cuda=False)

-```

-

-## 总结

-

-语义角色标注是许多自然语言理解任务的重要中间步骤。这篇教程中我们以语义角色标注任务为例,介绍如何利用PaddlePaddle进行序列标注任务。教程中所介绍的模型来自我们发表的论文\[[10](#参考文献)\]。由于 CoNLL 2005 SRL任务的训练数据目前并非完全开放,教程中只使用测试数据作为示例。在这个过程中,我们希望减少对其它自然语言处理工具的依赖,利用神经网络数据驱动、端到端学习的能力,得到一个和传统方法可比、甚至更好的模型。在论文中我们证实了这种可能性。关于模型更多的信息和讨论可以在论文中找到。

-

-## 参考文献

-1. Sun W, Sui Z, Wang M, et al. [Chinese semantic role labeling with shallow parsing](http://www.aclweb.org/anthology/D09-1#page=1513)[C]//Proceedings of the 2009 Conference on Empirical Methods in Natural Language Processing: Volume 3-Volume 3. Association for Computational Linguistics, 2009: 1475-1483.

-2. Pascanu R, Gulcehre C, Cho K, et al. [How to construct deep recurrent neural networks](https://arxiv.org/abs/1312.6026)[J]. arXiv preprint arXiv:1312.6026, 2013.

-3. Cho K, Van Merriënboer B, Gulcehre C, et al. [Learning phrase representations using RNN encoder-decoder for statistical machine translation](https://arxiv.org/abs/1406.1078)[J]. arXiv preprint arXiv:1406.1078, 2014.